AI News for 5/21/2024-5/22/2024. We checked 7 subreddits, 384 Twitters and 29 Discords (380 channels, and 7699 messages) for you. Estimated reading time saved (at 200wpm): 805 minutes.

Lots of nontechnical news - the California Senate passed SB 1047, more explosive news on OpenAI employee contracts from Vox and safetyist resignations, and though Mistral v0.3 was released there's no evals or blogpost to discuss yet.

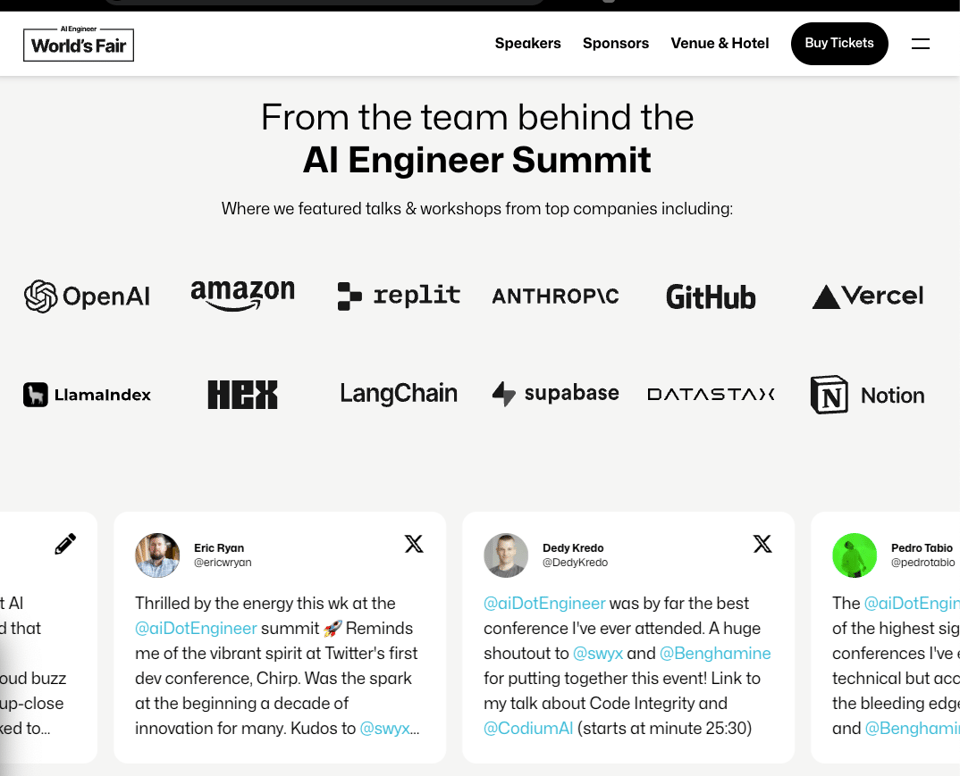

Given it's a technically quiet day, we take the opportunity to share our announcements of the initial wave of AI Engineer World's Fair speakers!

TLDR we're giving a onetime discount to AI News readers: CLICK HERE and enter `AINEWS` before EOD Friday :)

The AI Engineer World's Fair (Jun 25-27 in SF)

The first Summit was well reviewed and now the new format is 4x bigger, with booths and talks and workshops from:

- Top model labs (OpenAI, DeepMind, Anthropic, Mistral, Cohere, HuggingFace, Adept, Midjourney, Character.ai etc)

- All 3 Big Clouds (Microsoft Azure, Amazon AWS, Google Vertex)

- BigCos putting AI in production (Nvidia, Salesforce, Mastercard, Palo Alto Networks, AXA, Novartis, Discord, Twilio, Tinder, Khan Academy, Sourcegraph, MongoDB, Neo4j, Hasura etc)

- Disruptive startups setting the agenda (Modular aka Chris Lattner, Cognition aka Devin, Anysphere aka Cursor, Perplexity, Groq, Mozilla, Nous Research, Galileo, Unsloth etc)

- The top tools in the AI Engineer landscape (LangChain, LlamaIndex, Instructor, Weights & Biases, Lambda Labs, Neptune, Datastax, Crusoe, Covalent, Qdrant, Baseten, E2B, Octo AI, Gradient AI, LanceDB, Log10, Deepgram, Outlines, Unsloth, Crew AI, Factory AI and many many more)

across 9 tracks of talks: RAG, Multimodality, Evals/Ops (new!), Open Models (new!), CodeGen, GPUs (new!), Agents, AI in the Fortune 500 (new!), and for the first time a dedicated AI Leadership track for VPs of AI, and 50+ workshops and expo sessions covering every AI engineering topic under the sun. Of course, the most important track is the unlisted one: the hallway track, which we are giving lots of love to but can't describe before it happens.

To celebrate the launch of the World's Fair, we're giving a onetime discount to AI News readers: CLICK HERE and enter AINEWS before EOD Friday :)

If the curation here/on Latent Space has the most cosine similarity with your interests, this conference was made for you. See you in SF June 25-27!

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Discord Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

Anthropic's Interpretability Research on Claude 3 Sonnet

- Extracting Interpretable Features: @AnthropicAI used dictionary learning to extract millions of interpretable "features" from Claude 3 Sonnet's activations, corresponding to abstract concepts the model has learned. Many features are multilingual and multimodal.

- Feature Steering to Modify Behavior: @AnthropicAI found that intervening on these features during a forward pass ("feature steering") could reliably modify the model's behavior and outputs in interpretable ways related to the meaning of the feature.

- Safety-Relevant Features: @AnthropicAI identified many "safety-relevant" features corresponding to concerning capabilities or behaviors, like unsafe code, bias, dishonesty, power-seeking, and dangerous/criminal content. Activating these features could induce the model to exhibit those behaviors.

- Preliminary Work, More Research Needed: @AnthropicAI notes this work is preliminary, and while the features seem plausibly relevant to safety applications, much more work is needed to establish practical utility.

- Hiring for Interpretability Team: @AnthropicAI is hiring managers, research scientists, and research engineers for their interpretability team to further this work.
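The feature-steering idea in the bullets above can be illustrated with a toy sketch. This is not Anthropic's code: the vectors, the `steer` and `feature_activation` helpers, and the single-direction "feature" are hypothetical stand-ins, assuming a feature corresponds to a direction in activation space that can be read off with a ReLU projection and pushed on by adding a scaled copy of that direction.

```python
def feature_activation(activation, encoder_direction, bias=0.0):
    """Toy encoder: ReLU of the projection onto one feature direction."""
    score = sum(a * e for a, e in zip(activation, encoder_direction)) + bias
    return max(0.0, score)


def steer(activation, feature_direction, strength):
    """Add `strength` times the feature direction to the activation vector."""
    return [a + strength * d for a, d in zip(activation, feature_direction)]


# Hypothetical 4-dimensional activation and a unit-norm feature direction.
activation = [0.5, -1.0, 0.25, 0.0]
direction = [0.0, 1.0, 0.0, 0.0]

before = feature_activation(activation, direction)
steered = steer(activation, direction, strength=3.0)
after = feature_activation(steered, direction)
```

In this toy setup the feature is inactive before the intervention and active afterwards, which is the basic mechanism the recap describes; a real model would apply the change at a chosen layer during the forward pass.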

Microsoft's Phi-3 Models

- Phi-3 Small and Medium Released: @_philschmid announced Microsoft has released Phi-3 small (7B) and medium (14B) models under the MIT license, with instruct versions up to 128k context.

- Outperforming Mistral, Llama, GPT-3.5: @_philschmid claims Phi-3 small outperforms Mistral 7B and Llama 3 8B on benchmarks, while Phi-3 medium outperforms GPT-3.5 and Cohere Command R+.

- Training Details: @_philschmid notes the models were trained on 4.8 trillion tokens including synthetic and filtered public datasets with multilingual support, fine-tuned with SFT and DPO. No base models were released.

- Phi-3-Vision Model: Microsoft also released Phi-3-vision with 4.2B parameters, which @rohanpaul_ai notes outperforms larger models like Claude-3 Haiku and Gemini 1.0 Pro V on visual reasoning tasks.

- Benchmarks and Fine-Tuning: Many are eager to benchmark the Phi-3 models and potentially fine-tune them for applications, though @abacaj notes fine-tuning over a chat model can sometimes result in worse performance than the base model.

Perplexity AI Partners with TakoViz for Knowledge Search

- Advanced Knowledge Search with TakoViz: @perplexity_ai announced a partnership with TakoViz to bring advanced knowledge search and visualization to Perplexity users, allowing them to search, juxtapose and share authoritative knowledge cards.

- Authoritative Data Providers: @perplexity_ai notes TakoViz sources knowledge from authoritative data providers with a growing index spanning financial, economic and geopolitical data.

- Interactive Knowledge Cards: @AravSrinivas explains users can now prompt Perplexity to compare data like stock prices or lending over specific time periods, returning interactive knowledge cards.

- Expanding Beyond Summaries: @AravSrinivas says this allows Perplexity to go beyond just summaries and enable granular data queries across timelines, which is now possible from a single search bar.

- Passion for the Partnership: @AravSrinivas expresses his love for working with the TakoViz team and participating in their pre-seed round, noting their customer obsession and the value this integration will bring to Perplexity users.

Miscellaneous

- Karina Nguyen Joins OpenAI: @karinanguyen_ announced she has left Anthropic after 2 years to join OpenAI as a researcher, sharing lessons learned about AI progress, culture, and personal growth.

- Suno Raises $125M for AI Music: @suno_ai_ announced raising $125M to build AI that amplifies human creativity in music production, and is hiring music makers, music lovers and technologists.

- Yann LeCun on LLMs vs Next-Gen AI: @ylecun advises students interested in building next-gen AI systems to not work on LLMs, implying he is working on alternative approaches himself.

- Mistral AI Releases New Base and Instruct Models: @_philschmid shared that Mistral AI released new 7B base and instruct models with extended 32k vocab, function calling support, and Apache 2.0 license.

- Cerebras and Neural Magic Enable Sparse LLMs: @slashML shared a paper from Cerebras and Neural Magic on enabling sparse, foundational LLMs for faster and more efficient pretraining and inference.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

AI Model Releases and Benchmarks

- Microsoft releases Phi-3 models under MIT license: In /r/LocalLLaMA, Microsoft has released their Phi-3 small (7B) and medium (14B) models under the MIT license on Huggingface, including 128k and 4-8k context versions along with a vision model.

- Phi-3 models integrated into llama.cpp and Ollama: The Phi-3 models have been added to the llama.cpp and Ollama frameworks, with benchmarks showing they outperform other models in the 7-14B parameter range.

- Meta may not open source 400B model: According to a leaker on /r/LocalLLaMA, Meta may go back on previous indications and not open source their 400B model, which would disappoint many.

- Benchmark compares 17 LLMs on NL to SQL: A comprehensive benchmark posted on /r/LocalLLaMA compared 17 LLMs including GPT-4 on natural language to SQL tasks, with GPT-4 leading in accuracy and cost but significant performance variation by hosting platform.

AI Hardware and Compute

- Microsoft introduces NPUs for mainstream PCs: Microsoft announced that neural processing units (NPUs) will become mainstream in PCs for AI workloads, with new Surface laptops having an exclusive 64GB RAM option to support large models.

- Overview of M.2 and PCIe NPU accelerators: An overview on /r/LocalLLaMA looked at the current landscape of M.2 and PCIe NPU accelerator cards, noting most are still limited in memory bandwidth compared to GPUs but the space is evolving rapidly.

AI Concerns and Regulation

- Europe passes AI Act regulating AI development and use: The EU has passed the comprehensive AI Act which will regulate the development and use of AI systems starting in 2026 and likely influence regulation globally.

- California Senate passes AI safety and innovation bill: The California Senate has passed SB1047 to promote AI safety and innovation, with mixed reactions and some concerns it will limit AI progress in the state.

- TED head calls Meta "reckless" for open sourcing AI: Chris Anderson, head of TED, called Meta "reckless" for open sourcing AI models, a concerning stance to AI progress advocates from an influential figure.

AI Assistants and Agents

- Microsoft introduces Copilot AI agent capabilities: Microsoft announced new agent capabilities for Copilot that can act as virtual employees, with early previews showing ability to automate complex workflows.

- Demo showcases real-time multimodal AI game agents: A demo posted on /r/singularity showcased real-time multimodal AI agents assisting in video games by perceiving game state visually and providing strategic guidance.

- Questions raised about Amazon's lack of AI assistant progress: /r/singularity discussed Amazon's apparent lack of progress in AI assistants compared to other tech giants, given their broad consumer reach with Alexa.

Memes and Humor

- Memes highlight rapid AI progress: Memes and jokes circulated about the rapid pace of AI progress, companies making dramatic claims, and concerns about advanced AI systems.

AI Discord Recap

A summary of Summaries of Summaries

-

LLM Benchmarking and Performance Optimization:

- Microsoft's Phi-3 Models offer high context lengths and robust performance, stirring discussions on benchmarks and memory usage but uncovering compatibility issues in tools like llama.cpp.

- Various techniques like torch.compile and specific GPU setups were debated for optimizing computation efficiency, shared via insights like those in tensor reshaping examples.

-

Open-Source AI Tools and Frameworks:

- The Axolotl framework emerged as a go-to for fine-tuning models like Llama and Mistral, with Docker setups facilitating ease of use (quickstart guide).

- LlamaIndex introduced techniques for document parsing and batch inference, integrating GPT-4o's capabilities to enhance complex document manipulation and query accuracy.

-

AI Legislation and Community Responses:

- California's SB 1047 bill prompted heated debates on the impact of new regulations on open-source models, with concerns over stifling innovation and favoritism towards major incumbents.

- Discussions on ethical and legal questions arose around AI voice replication, highlighted by OpenAI's controversial mimicking of Scarlett Johansson's voice, leading to its subsequent removal after public backlash.

-

Novel AI Model Releases and Analysis:

- Community excitement surrounded new releases such as Mistral-7B v0.3 with extended vocabularies and function calling (details), while Moondream2 updates improved resolution and accuracy in visual question-answering.

- Anthropic's work on interpretable machine learning and the release of Phi-3 Vision spurred deep dives into scaling monosemanticity (research) and practical AI applications.

-

Practical AI Implementations and Challenges:

- Members shared practical AI implementations, from PDF extraction with Surya OCR transforming documents into markdown (GitHub repo), to building secure code execution environments on Azure (dynamic sessions).

- The LangChain community highlighted issues with deployment and endpoint consistency, with detailed troubleshooting on the GitHub repo helping streamline deployment processes and enhance chatbot functionalities.

{% if medium == 'web' %}

PART 1: High level Discord summaries

Unsloth AI (Daniel Han) Discord

Phi-3 Comes into Play, Skepticism and Excitement Ensue: The introduction of Phi-3 models by Microsoft, such as Phi-3-Medium-128K-Instruct, sparked discussions, with excitement tinged by skepticism due to potential benchmarking issues, highlighted by a user's single-word remark: "literally."

New Legal Frontiers in AI: California's SB 1047 sparked discussions concerning AI laws and open-source model implications, accentuated by Meta's decision to not open the weights for its 400B model, provoking a community debate on the wide-reaching effects of such restricted access.

Unsloth Woes with Model Saving and Flash Attention: Trouble reported with Unsloth's model.save_pretrained_gguf() function and Flash Attention compatibility, with suggestions from the community advising an Unsloth reinstall or removing Flash Attention and specific workarounds for T4 GPU issues on PyTorch version 2.3.

Guided Decoding and YAML Finesse: A spirited discussion on using guided decoding for generating structured YAML outputs revealed potential vLLM support with advanced syntaxes, emphasizing the integration of grammars into the prompting process.

Cutting-Edge Model Discussions Mix with Sci-Fi: Users shared advancements and tested methods like MoRA, alongside spirited talks about the Dune series' philosophical undertones and defenses of novel reading over movie watching, underscoring a preference for depth in sci-fi storytelling.

LLM Finetuning (Hamel + Dan) Discord

-

PDF Extraction Wins with Surya OCR: Marker PDF effectively converts PDFs into markdown, surpassing other models with Surya OCR, and the solution has been open-sourced on GitHub.

-

Self-Translation Outshines Native Prompts: Native language prompts were compared to translated English instructions; a member shared research on self-translation, recommending task-specific prompt strategies, and provided a relevant paper.

-

Singapore Member Shares LLM Workshop Notes: Cedric from Singapore summarized key points on LLM mastery in his workshop notes, which were well-received by the community.

-

Convergence on `axolotl` for Model Training and Tuning: Multiple channels discussed using Axolotl for fine-tuning models with a reference to Axolotl's main branch. Users are directed to the Axolotl Docker image and shared a setup guide.

-

Gradio Maintainer Jumps In: Freddy, a Gradio maintainer, supports the community with Gradio resources for quickstarts and developing chatbots, while another member indicates they'll have questions about a Gradio extension they've written.

Perplexity AI Discord

-

Microsoft's Bold Move with Copilot+: Discussions erupted over Microsoft's announcement of their "Copilot+ PCs", whose features members observed to be remarkably similar to those of OpenAI. These PCs boast 40+ TOPS performance, all-day battery life, AI image generation capabilities, and live captions in over 40 languages.

-

Dissecting the GPT-4o Context Window: Amid debates regarding the context window of GPT-4o, the guild anchored on the understanding that a 32k default size is the status quo, though the boundary of its capabilities remained a subject of intrigue.

-

Perplexity's Haiku Hurdles (Avoiding "Unleash"): The guild uncovered a significant shift in Perplexity's default model usage, pivoting from GPT-3.5 to Haiku for regular users while Sonar remains exclusive for pro users, sparking discussions on model availability and strategy.

-

API Anomalies Afoot: Concerns surfaced as Perplexity's API was found to lag behind its web counterpart, generating outdated headlines and unsatisfactory search outputs; further compounded by its beta status and limited endpoint support.

-

Community Collaboration Callout: Members of the guild were nudging each other to make shared threads properly shareable and provided visual aids to help understand the process, while also sharing specific Perplexity AI search links for topics of collective interest.

Stability.ai (Stable Diffusion) Discord

-

Mixing Models - Not a Perfect Blend: Discussions about integrating Lightning and Hyper models with base stable models revealed that while this approach could reduce image generation steps, incompatible architectures often lead to low-quality results.

-

EU AI Act Concerns Rise: Users criticized the newly approved EU AI Act, particularly the watermarking requirements, which could pose difficulties for AI-generated content creators.

-

Local AI Setup Woes: The community shared struggles with implementing Stable Diffusion locally, especially on AMD GPUs. The consensus hinted at a preference for Nvidia GPUs due to setup simplicity and performance advantages.

-

Quality Control for AI-Generated Content: There was palpable discontent with the flood of low-effort, generic, and often sexualized AI-generated images in various online spaces, suggesting a need for better content curation and value assessment in the AI art space.

-

GPUs Debate - Nvidia Wins Favor: A lively debate confirmed Nvidia GPUs as the preferred choice for running Stable Diffusion, with recommendations favoring versions with at least 12GB of VRAM for optimal AI performance.

Eleuther Discord

-

JAX Implementations Face TFLOPS Discrepancies: Engineers shared difficulties in benchmarking JAX implementations of pallas, naive, and flash v2. Shared memory errors and TFLOPS discrepancies on GPUs were reported, highlighting the necessity for precise performance measurements.

-

Mixed Opinions on Preprints and Academic Publishing: The guild debated the usage of preprints on platforms like ArXiv. The consensus appears to be shifting, with major journals increasingly accepting preprints, signaling a change in how academic dissemination is being approached.

-

GPT-3's Randomness at Zero Temperature: Conversations revolved around GPT-3's non-deterministic outputs at temperature 0, with insights into potential hardware level factors such as CUDA kernel non-determinism. Mentioned resources include an arxiv paper and an OpenAI forum discussion.

-

Small Data Set Dilemmas: In a brief interjection, members talked about the challenges of training AI on small datasets, pointing out that performance often lags behind models trained on much larger corpora, like the entirety of the internet.

-

Interpretable Machine Learning Gains Traction: Excitement brewed over Anthropic's work on interpretable machine learning features, which can be further explored here.

-

MCQ Randomization Query in lm-evaluation Harness: Guild members raised concerns regarding the lack of answer choice randomization for MCQs within lm-eval-harness, especially for datasets like SciQ and MMLU, suggesting the potential for benchmark biases.
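The answer-order concern in the last item can be made concrete with a small sketch. It does not use the lm-eval-harness API: `shuffle_choices` and the sample question are hypothetical, and the only assumption is that each multiple-choice item is a list of options plus the index of the correct one.

```python
import random


def shuffle_choices(choices, answer_index, rng):
    """Shuffle answer options and return them with the new index of the gold answer."""
    order = list(range(len(choices)))
    rng.shuffle(order)
    shuffled = [choices[i] for i in order]
    # The gold answer moved to wherever its original index landed in `order`.
    new_answer_index = order.index(answer_index)
    return shuffled, new_answer_index


# Seeded generator so the evaluation order is reproducible across runs.
rng = random.Random(0)
choices = ["mitochondria", "ribosome", "nucleus", "golgi body"]
shuffled, gold = shuffle_choices(choices, answer_index=0, rng=rng)
```

Seeding the generator keeps runs comparable while still removing any fixed position for the correct answer.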

HuggingFace Discord

-

Microsoft's Phi-3 Integration into Transformers: Microsoft announced the release of Phi-3 models with up to 128k context and a vision-language (VLM) version, accessible on HuggingFace. These releases offer new possibilities for instruction-based and vision-language AI tasks.

-

ZeroGPU Bolstering Open-Source AI: With a $10M ZeroGPU initiative, Hugging Face is supporting independent and academic creators by providing free GPU resources, reaching over 1,300 spaces since May 1, 2024. See the official tweet for more details.

-

Struggles in Fine-Tuning Large Models: The community engaged in discussions concerning the challenges of fine-tuning models like Falcon-180B, noting the need for hardware beyond an 8xH100 configuration. There are ongoing efforts to adapt embedding quantization in models like Llama-8B for more efficient memory usage.

-

Legislative Watch on AI: Conversations indicate apprehension towards California's AI regulatory law and its implications for startups versus large companies. NousResearch's Discord server was suggested for deeper discourse on the topic.

-

Tooling and Contributions in AI: Developers contributed several tools and datasets, such as a markdown note-taking app named Notie, a Docker-friendly static wiki with Hexo.js, and various new models like the multilingual NorskGPT-Llama3-70b. There's also mention of a tool called SDXL Flash purportedly generating high-quality images in seconds, showcasing the dynamism in AI tool development.
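A back-of-the-envelope calculation suggests why an 8xH100 node can fall short for Falcon-180B fine-tuning, as noted in the list above. The figures are assumptions, not measurements: 80 GB per H100, 2 bytes per parameter for 16-bit weights, and the common rule of thumb of roughly 16 bytes per parameter for full fine-tuning with mixed-precision Adam (16-bit weights and gradients plus 32-bit master weights and two optimizer moments). Activation memory is ignored.

```python
GB = 1e9  # decimal gigabytes


def state_gb(n_params, bytes_per_param):
    """Memory for per-parameter state, in GB, ignoring activations."""
    return n_params * bytes_per_param / GB


N_PARAMS = 180e9          # Falcon-180B parameter count
CLUSTER_GB = 8 * 80       # 8x H100, assuming the 80 GB variant

weights_16bit = state_gb(N_PARAMS, 2)    # weights only, 16-bit
full_finetune = state_gb(N_PARAMS, 16)   # weights + grads + Adam state (rule of thumb)

fits_for_inference = weights_16bit < CLUSTER_GB
fits_for_full_finetune = full_finetune < CLUSTER_GB
```

Under these assumptions the 16-bit weights alone take 360 GB of the 640 GB available, while full fine-tuning state needs about 2,880 GB, which is why parameter-efficient methods or quantization come up in such discussions.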

LM Studio Discord

Dual GPU Dynamics in LM Studio: LM Studio can handle dual GPUs, but they must be of the same type, and users should align VRAM capacities for optimal performance. Configuration for multiple GPUs involves creating and modifying a preset file in the system.

Prompt Precision and Levity: Users suggest quoting text directly in prompts for clarity, while the light-hearted term "prompt engineering" was used to describe meticulous prompt crafting strategies.

Phi-3 Models in the Spotlight: Integrating the Phi-3 models into llama.cpp is a work in progress, with users eagerly waiting for a beta release and an LM Studio update to support the new models. Meanwhile, quantization advice for running Phi-3 Medium suggests staying at Q4 or below.

ROCm Realm for Linux: Linux users expressed interest in ROCm test builds, while acknowledging challenges running Phi-3-medium-128k models due to tensor mismatch errors on ROCm platforms.

Intriguing New Model Releases: Mistral v0.3 Instruct, featuring an improved tokenizer and function calling support, is now available for use, offering advancements in language model functionality. Access it on the lmstudio community Huggingface page.

Nous Research AI Discord

-

Apple ID Unlock with a Twist: Engineers revealed a new website to bypass Vision Pro app restrictions for non-US Apple IDs, possibly interesting for those looking to access geo-restricted AI tools.

-

Enhanced Moondream Release Pushes Limits: The latest update to Moondream has increased image resolution up to 756x756 and improved TextVQA scores from 53.1 to 57.2 (a 4.1-point gain), alongside a ~0.5% improvement on various other benchmarks, as detailed in this tweet.

-

Phi-3 Small on the Horizon?: Speculation is rife on Microsoft's release strategy for Phi models as engineers shared insights into the availability of Phi 3, 7, and 14. Yann LeCun debunked rumors about the upcoming LLaMa 3 400B+ model being closed-weight, pointing to its continued open status on Twitter.

-

SB 1047 Stirs the Pot: California's SB 1047 has engineers worried over its implications for open-source software (OSS), highlighted by shared bill text and Meta being criticized for alleged regulatory manipulation.

-

Anthropic's Cognitive Cartography: Anthropic's efforts to trace the cognitive map of language models captured engineers' attention, providing a potentially valuable resource to those focused on AI interpretation. Conversations around home setups for LLM inference, with personal infrastructure using 2x 4090s, and platforms like Runpod and Replicate were up for discussion due to convenience, despite some platforms being harder to navigate.

-

Phi-3 Vision Drops with Depth and Access: Launched with a comprehensive educational package, engineers discussed the 128K context multi-modal model, Phi-3 Vision, providing links to Microsoft resources like the Tech Report and the model on Hugging Face.

-

Grand Designs of Digital Knowledge: A conversation emerged around Obsidian's knowledge graph visualization, likened to "synthetic brains", and expanded to cover its plugin integrations and data philosophy, complemented with a knowledge graph time-lapse video and explanatory videos for users new to Obsidian.

CUDA MODE Discord

-

SASS Crash Course Wanted: Guild members are seeking guidance on how to learn SASS. In a CUDA context this presumably refers to NVIDIA's native GPU assembly language rather than Syntactically Awesome Style Sheets, the CSS extension of the same name.

-

CUDA Curiosity on Function Modifiers: There's an ongoing discussion about function qualifiers in CUDA, including why a function can be both `__device__` and `__host__` but not `__global__` and `__host__`.

-

Optimizations and Pitfalls in Torch & Numpy: Members are comparing the performance of `torch.empty_like` with `torch.empty` and discussing memory leaks caused by `numpy`'s `np.zeros_like`. There are also shared insights on compilation issues with ResNet blocks, leveraging user-defined Triton kernels for optimization, and an informative PyTorch tutorial.

-

Legislative Buzz for AI Safety: There's a vibrant conversation about the passing of SB 1047, a safety and innovation bill that sets the stage for more regulated AI development, alongside the mention of an ultra-compact ray-casting engine described here.

-

Technical Dive into GitHub Pull Requests: There are deep dives into GitHub pull requests focusing on determinism in encoder backward passes, DataLoader refactoring for large datasets, HellaSwag evaluation in C, and determinism in kernel operations, reflecting the community’s emphasis on efficiency and precision. Links such as this PR for deterministic encoder backward kernels and this one for a DataLoader refactor are part of this roundup.

OpenAI Discord

-

Artificial Voicing Controversy: An AI-generated voice similar to that of Scarlett Johansson led to concerns over ethical practices in AI after OpenAI's model was noted to create a voice "eerily similar" to hers. OpenAI's later decision to remove the voice came after a request for transparency by Johansson's legal team.

-

Chatbots Galore: For coding assistance, users recommended alternatives to GPT-3.5, singling out Meta AI’s Llama 3 and Mistral Large as effective, free options. In contrast, there was dissatisfaction with Microsoft's Copilot owing to its perceived intrusiveness and telemetry issues.

-

Tools and Tricks for Tighter Tokens: In managing token usage and response verbosity, AI Engineers advised setting max tokens and using output templates to create succinct responses. Regarding custom tools, some developers cited stronger results with their own prompts as compared to using aids like CodePilot.

-

Platform and Model Tweaks Needed: Participants pointed out formatting issues, such as unwanted line breaks in OpenAI Playground's output and inconsistent newline handling. Additionally, service outages prompted the sharing of the OpenAI Status Page for service monitoring.

-

Microsoft Expanding Multimodal AI: Microsoft introduced the Phi-3-vision, which combines language and vision, remarking on its potential for various applications. For further reading, members referred to a blog post detailing new models added to the Phi-3 family on Azure.

Modular (Mojo 🔥) Discord

-

Mojo Community Meeting Recap: Mojo enthusiasts can catch up on the latest community meeting by watching the recording which covered topics on Basalt and Compact Dict. The meeting signaled the deprecation of Tensors in Mojo, opening a dialogue on developing new libraries for numerical and AI applications.

-

Python IPC vs. Threading: For long-running queries in a Tkinter app, solutions ranged from threading, message queues, to IPC modules to prevent UI lag. A link to RabbitMQ's Pika Python client tutorial, although promising, led to implementation difficulties.

-

Mojo's Technical Evolution and Practices: Discussion on Mojo revealed no official package manager but `.mojopkg` files are in play, particularly with lightbug_http. Optimizations in Mojo are MLIR-backed, with ongoing curiosity about their impact on custom data types. `math.bit` has now been aptly renamed to `bit`, with adjustments to several function names like `bswap` to `byte_reverse`.

-

Nightly Build and Dev Challenges: Nightly build discussions included a PR issue with a commit by the wrong author, leading to a DCO test suite failure, addressed on GitHub. Delays in the nightly release were traced to GitHub Actions, confirmed via GitHub Status. The `math.bit` module was also renamed to `bit`, amending function names for clarity.

-

Performance Optimization Suggestions: When sorting small data sets, sorting an array of pointers can be more efficient. Regarding DTypePointer memset, a vectorized version performed 20% faster for 100,000 bytes but didn't scale up as effectively with larger data, due to potential issues with "using clobber memory".
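The threading-plus-queue approach mentioned for the Tkinter app earlier in this list can be sketched without any GUI code. The names `slow_query`, `start_query`, and `poll` are hypothetical; the assumption is that the UI thread calls `poll` periodically (in Tkinter, typically by rescheduling it with `root.after`) instead of blocking on the query.

```python
import queue
import threading
import time


def slow_query(text):
    """Stand-in for a long-running query; sleeps briefly, then returns a result."""
    time.sleep(0.05)
    return text.upper()


def start_query(text, results):
    """Run the query on a background thread and push its result onto `results`."""
    def worker():
        results.put(slow_query(text))

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    return thread


def poll(results):
    """Non-blocking check for a finished result; returns None if nothing is ready."""
    try:
        return results.get_nowait()
    except queue.Empty:
        return None


results = queue.Queue()
thread = start_query("hello", results)
thread.join()            # a real UI would keep polling instead of joining
answer = poll(results)
```

Because only the worker touches the slow call and only the UI thread touches widgets, the interface stays responsive while `queue.Queue` handles the hand-off safely.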

LAION Discord

-

Voice AI's Legal Labyrinth: Utilizing a voice actor mimicking Scarlett Johansson raised legal and ethical debates about 'passing off' rights, with members reflecting on the Midler v. Ford Motor Co. case as a potential precedent.

-

Investigating Dataset Disappearances: The sudden removal of the Sakuga-42M dataset, involved in cartoon animation frame research, has left members puzzled about potential legal triggers, stirring up discussions about the broader implications of sharing datasets within legal confines.

-

Microsoft's Multimodal Model Causes a Stir: Discussion on Microsoft's Phi-3 Vision model delved deep into its mechanics, showcased by Hugging Face, sparking conversations about its functionality, particularly when compared with GPT-4's color-sorted chart outputs.

-

Anthropic paper perplexes engineers: The recent Anthropic scaling paper has been marked as heavy yet unread, suggesting that despite its potential significance in the field, it may need clearer distillation to be fully appreciated by practitioners.

-

Old School Synthetic Voices Charm the Community: Members took a stroll down memory lane, reminiscing about the DECtalk voice synthesis technology and shared nostalgia through a Speak & Spell video, which was one of the earliest introductions to personal computing for many.

LlamaIndex Discord

-

GPT-4o Paves the Path for Document Parsing: GPT-4o has been leveraged to parse complex documents like PDFs and slide decks into structured markdown, despite challenges with background images and irregular layouts, using LlamaParse. Details are available here.

-

Secure Containerized Code Execution on Azure: Azure Container Apps are enabling the secure execution of LLM-generated code in dynamic sessions. Further insights are provided in these Azure-related links: Container Apps and Code Security.

-

Introduction to OpenDevin AI Engineers: A newly released webinar on OpenDevin, a platform designed for creating autonomous AI engineers, features a tutorial by Robert Brennan. Interested viewers can find it here.

-

Batch Inference Bolsters GenAI Capabilities: The latest on batch inference processing for GenAI applications suggests major benefits for data analysis and querying capabilities. Delve into the details via these links: Batch Inference Integration and GenAI Techniques.

-

Navigating LlamaIndex Challenges and Solutions: AI engineers have wrestled with LlamaIndex challenges, from setting up document previews in chat frontends to errors like `ModuleNotFoundError` and `pydantic.v1.error_wrappers.ValidationError`. Solutions to these issues involve import path corrections and prompt removal, while indexing strategies, such as retrievers using cosine similarity and HNSW, are under discussion for scaling efficiency.
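For readers unfamiliar with cosine-similarity retrieval, here is a minimal brute-force sketch. It does not use the LlamaIndex API: the `top_k` helper and the two-dimensional embeddings are hypothetical, and an HNSW index would approximate this exhaustive scan to scale to large collections.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, docs, k):
    """Return the ids of the k documents most similar to the query embedding."""
    ranked = sorted(
        docs.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:k]]


# Hypothetical 2-d embeddings keyed by document id.
docs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
hits = top_k([1.0, 0.1], docs, k=2)
```

The exhaustive scan costs one similarity computation per document, which is the cost that approximate indexes are meant to avoid.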

OpenRouter (Alex Atallah) Discord

Typing Quirks Spark Role-playing Debate: Members humorously identified two main types of OpenRouter users: those seeking AI companionship and those delving into fantasy narratives. The conversation took a light-hearted dive into the role-playing tendencies of some users.

Eyes on Phi-3: The Phi-3 Vision Model, praised for high-quality reasoning, was introduced on the server. The model's attributes can be explored through HuggingFace.

Verbose Wizard Needs a Trim: Wizard8x22 model's verbosity issues are recognized, with an adjustment to the repetition penalty proposed as a solution. The dialogue extended to compare other models' performance, highlighting that model behavior is not consistent across the board.
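As a rough illustration of what a repetition penalty does, the sketch below applies one common formulation to a list of logits: tokens that have already been generated get their positive logits divided by the penalty and their negative logits multiplied by it. This is an assumption about the general technique, not a description of how OpenRouter or the Wizard model's provider implements the setting, and the function name and values are hypothetical.

```python
def apply_repetition_penalty(logits, generated_ids, penalty=1.2):
    """Return a copy of logits with already-generated tokens made less likely.

    A penalty of 1.0 leaves logits unchanged; larger values discourage repeats.
    """
    adjusted = list(logits)
    for token_id in set(generated_ids):
        if adjusted[token_id] > 0:
            adjusted[token_id] /= penalty   # shrink positive logits
        else:
            adjusted[token_id] *= penalty   # push negative logits further down
    return adjusted

# Toy vocabulary of four tokens; tokens 0 and 1 were already generated.
logits = [2.0, -1.0, 0.5, 3.0]
penalized = apply_repetition_penalty(logits, generated_ids=[0, 1, 0], penalty=2.0)
```

Raising the penalty reduces repetition, but it does not directly cap output length, so it is only one of several knobs for a verbose model.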

Billing Blues and Nonprofit Woes: A user's billing error on a student platform spurred discussion, leading to a temporary fix involving re-entering billing info. Hopes for nonprofit discounts in the future were also expressed.

Experimenting with LLM Action Commands: Innovative use of LLMs was shared through a Twitter thread, exploring action commands as a fresh way to enhance interactions with language models. Feedback from fellow engineers was solicited to push the boundaries of current LLM interaction paradigms.

Interconnects (Nathan Lambert) Discord

Phi Models Join the Fray: The launch of Phi-small and Phi-medium prompted discussions about the characteristics of Phi-3 Vision, with confirmations that it represents a new and slightly larger variant.

Meta's Model Decisions Cause Stir: A tweet suggested Meta might keep its 400B model closed due to legislative fears, but this was refuted by another source stating the model will remain open-weight. The confusion underscores the delicacy of sharing large-scale model weights in the current regulatory landscape.

OpenAI Under Fire for Unkept Promises: OpenAI has disbanded its Superalignment team due to the unfulfilled promise of 20% compute resource allocation, sparking resignations. This, coupled with a scandal involving NDAs and vested equity issues for ex-employees, casts a cloud over OpenAI's leadership and transparency.

AI Performance Takes a Drawback: Microsoft's Surface drawing AI faces criticism due to latency issues resulting from cloud-based safety checks — reflecting the compromises between local processing power and safety protocols in AI applications.

The Trope of Researcher Titles: Amazement was expressed at Anthropic now boasting over 500 'researchers', igniting a conversation about the dilution of the 'researcher' title and its implications for perception in the tech industry.

OpenAccess AI Collective (axolotl) Discord

-

Cohere Integration and Tokenizer Troubles: Engineers are working on integrating Cohere (commandr) into the Axolotl system, while resolving tokenization issues with references to the `CohereTokenizerFast` in the documentation.

-

Discovering Tiny Mistral and Distillation Pipeline Updates: A Tiny Mistral model for testing custom functions was shared, as the community discussed ongoing work on a distillation pipeline for Mistral models, reported to be functioning decently.

-

Full Finetuning Versus LoRA Discussion: There was a constructive back-and-forth over full finetuning versus LoRA with insights on performance differences, particularly around style retention for model adjustments, also suggesting direct referencing of the Axolotl GitHub README for tokenization issues.

-

Axolotl's Next Major Release and GPU Finetuning Woes: Users expressed curiosity about the next stable major release of Axolotl and discussed challenges with GPU memory requirements when finetuning using `examples/mistral/lora.yml`, seeking advice on managing `CUDA out of memory` errors.

-

Guidance on LoRA merges and State Dictionary Offloading: Clarification was given on setting `offload_dir` for LoRA merges, pointing out the importance of using the `offload_state_dict` function post-merge to handle large model state dictionaries (referring to the code search in Phorm AI).

Latent Space Discord

-

Langchain JS Awaits Refinements: Engineers discussed the utility of Langchain JS for quick prototyping, despite lagging behind its Python counterpart in refinement. Plans for rearchitecture promise enhancements in future versions.

-

Scale AI Hits the Billion-Dollar Jackpot: Scale AI has raised $1 billion in a funding round, lifting its valuation to $13.8 billion, with profitability forecast by the end of 2024.

-

Phi Packs a Punch: Microsoft's Phi 3 models, available in 4K and 128K context lengths, have debuted and are being praised for their capacity to run on hardware as light as a MacBook Pro M1 Pro. The community is scrutinizing them for competitive performance against leading models like Mixtral, Llama, and GPT.

-

Anthropic Defines Features with Dictionary Learning: Anthropic has made significant strides with dictionary learning in their frontier model, allowing millions of features to be extracted. This is viewed as a leap forward in AI safety and effectiveness, transforming the handling of model activations.

-

Humane Eyes a Ripe Acquisition after AI Pin Stumbles: Humane is seeking acquisition after their AI Pin device's market obstacles, with talks indicating a valuation aspiration between $750 million and $1 billion. Conversations revolve around the difficulties of hardware innovation in a market dominated by giants like Apple.

-

Survey Paper Club: Condensing AI Research: Members are invited to join the Survey Paper Club for efficient exposure to multiple research papers within an hour, with email notifications facilitated upon signing up.

LangChain AI Discord

-

LangChain Community Specs vs LangChain: Discussions articulated distinctions between LangChain and LangChain Community versions; the former's architecture is elaborated in the official documentation.

-

LangServe 'invoke' Woes: Users across several channels reported that LangServe's 'invoke' endpoint fails to return complete outputs. Specific problems included missing retrieved documents and empty outputs, as documented in LangServe discussion #461 and related GitHub issues.

-

Operational Issues with RemoteRunnable: Inconsistency was noted when RemoteRunnable did not perform as expected, unlike the RunnableWithMessageHistory, leading to missing document sources and affecting the operational reliability (see GitHub issue).

-

PDF Powered by Upstage AI Solar and LangChain: A blog post was shared guiding on harnessing the new Upstage AI Solar LLM with LangChain to build a PDF query assistant.

-

LangServe AWS Deployment Made Easier: Members were directed to a Medium article that simplifies deploying LangServe on AWS, eschewing the complexities of cloud technologies such as Terraform, Pulumi, or AWS CDK.

OpenInterpreter Discord

Tech Talk: OpenInterpreter's Device Dialogues: Engineers are exploring how Open Interpreter can create links between apps and devices, utilizing tools like Boox E Ink tablets, OneNote, and VSCode. There's particular interest in using Open Interpreter for querying code or papers without browser intervention.

Speedy GPT-4o Troubleshot: While integrating GPT-4o with Open Interpreter, users note a minimum 5x speed increase but face challenges with error messages pertaining to API keys.

Newline Nuisance in Gemini: Code execution is being hindered in models such as Gemini 1.5 and Gemini Flash due to unnecessary newline characters; the absence of "python" declarations in code blocks also came under scrutiny.

Legislative Lore and AI: California’s controversial AI bill and subsequent discussions have ignited the community, with an open letter from Senator Scott Wiener being circulated and debated for its emphasis on responsible AI development.

Bill Gates Foresees Friendlier AI: Gates recently penned thoughts on the future of AI in software, anticipating interfaces that can handle tasks through simple language directives, akin to a friend's assistance; his article is gaining traction among tech enthusiasts. An unofficial ChatGPT macOS app waitlist workaround made rounds on Twitter, demonstrating interest in quicker access to AI software tools.

tinygrad (George Hotz) Discord

-

Trigonometric Redefinition a No-Go: Community members debated the efficacy of attempting to redefine trigonometric functions such as sine using Taylor series, with the consensus being that it's an unnecessary reinvention. IBM's practical approach to partitioning intervals for functions like sine was cited, showing that achieving perfect accuracy in functions is possible with established methods.

-

IBM's Code Holds the Answers: Participants shared IBM’s implementation of the sine function, highlighting the intricacies of achieving perfect accuracy. Further, they referenced IBM’s range reduction solution for large numbers which is complex but doesn’t generally impact performance.

-

Training Mode Tips and Tricks: In tinygrad, the use of `Tensor.train()` and `Tensor.no_grad` was explained for toggling gradient tracking. Helpful code examples, such as this cartpole example, illustrate the usage and benefits of these mechanisms.

-

Under the Hood of `Tensor.train`: It was made clear that `Tensor.train` is effectively managing the `Tensor.training` status. For those preferring direct control, manually setting `Tensor.training` is an option, supported by tinygrad’s backend implementation.

-

Nailing Views with Movement Ops: A discussion unfolded around the behavior of chained movement operations and their potential to create multiple views. An example using `ShapeTracker` demonstrated how specific op combinations could produce such scenarios.
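For readers following the sine discussion in the tinygrad items above, here is a minimal sketch of the two ingredients that were debated: range reduction followed by a truncated Taylor series. It is neither IBM's implementation nor tinygrad's; the function name `taylor_sin` and the term count are hypothetical choices for illustration.

```python
import math

def taylor_sin(x, terms=10):
    """Approximate sin(x): reduce x to [-pi, pi], then sum a Taylor series.

    math.remainder returns the IEEE 754 remainder, so the reduced argument
    lies in [-pi, pi], where the series converges quickly.
    """
    x = math.remainder(x, 2 * math.pi)
    term = x      # first term of the series: x
    total = x
    for n in range(1, terms):
        # Each term is the previous one times -x^2 / ((2n) * (2n + 1)).
        term *= -x * x / ((2 * n) * (2 * n + 1))
        total += term
    return total

approx = taylor_sin(100.0)
exact = math.sin(100.0)
```

Because `2 * math.pi` is itself a rounded value, this naive reduction loses accuracy for very large inputs, which is the kind of problem a careful range-reduction scheme is meant to address.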

DiscoResearch Discord

SFT vs Preference Optimization Debate: In a discussion on model training strategies, a member distinguished Supervised Fine-Tuning (SFT) as enhancing the model's probability distribution for target data points, whereas Preference Optimization adjusts both desired and undesired outcomes. They questioned the prevalent use of SFT over Preference Optimization, which may offer a more rounded approach to model behavior.
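The contrast can be sketched with two per-example losses. The discussion did not name a specific preference method, so Direct Preference Optimization (DPO) is used here as one concrete, swapped-in example; the function names and the log-probability values are hypothetical.

```python
import math

def sft_loss(logp_target):
    """SFT: negative log-likelihood of the target response only."""
    return -logp_target

def dpo_loss(logp_chosen, logp_rejected,
             ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO-style loss: rewards raising the chosen response and lowering
    the rejected one, both measured relative to a frozen reference model."""
    margin = beta * ((logp_chosen - ref_logp_chosen)
                     - (logp_rejected - ref_logp_rejected))
    # -log(sigmoid(margin)) written as log(1 + exp(-margin))
    return math.log1p(math.exp(-margin))

# Policy equal to the reference: the loss is log(2).
baseline = dpo_loss(-1.0, -1.0, -1.0, -1.0)
# Raising the chosen log-prob OR lowering the rejected one reduces the loss.
better_chosen = dpo_loss(-0.5, -1.0, -1.0, -1.0)
worse_rejected = dpo_loss(-1.0, -2.0, -1.0, -1.0)
```

The SFT loss has no term for undesired outputs at all, whereas the preference loss moves in response to both sides of the pair, which is the distinction the member drew.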

Excitement Over Phi3 Vision's Low-Parameter Efficiency: One engineer highlighted the development of Phi3 Vision with only 4.2 billion parameters as a significant advancement for low-latency inference in image processing tasks. Asserting that this could have groundbreaking implications for robotics, the model was praised for potential throughput improvements, as links to the announcement were shared (source).

Comparing Image Models Between Moondream2 and Phi3 Vision: The community weighed in on the performance of Moondream2 compared to Phi3 Vision for image-related tasks. While Moondream2 has had issues with hallucinations, a member mentioned efforts to mitigate this, showcasing the ongoing pursuit of fidelity in image models (Moondream2).

Mixed Reactions to Microsoft's Model Drops: The release of Microsoft's 7b and 14b Instruct models sparked diverse opinions, from concerns about their limitations in certain languages to optimism about their utility in complex reasoning and extraction tasks. The discussion reflects the community's critical analysis of newly released models and their capabilities.

Skepticism Towards Meta's 400b Model: With concerns circulating in the community about Meta potentially not releasing a 400b model as open source, one member highlighted skepticism by pointing to the uncertain credibility of the source, nicknamed Jimmy. This indicates a critical attitude toward rumor validation within the community.

Cohere Discord

-

Cohere is hiring: An enthusiastic member shared a career opportunity at Cohere, highlighting the chance to tackle real-world problems with advanced ML/AI.

-

VRAM Calculator Intrigues: Engineers are discussing the findings of the LLM Model VRAM Calculator, questioning the higher VRAM use of the Phi 3 Mini compared to Phi 3 Medium for identical context lengths.

-

Bilingual Bot Integration Quest: Multiple posts indicate a member searching for a guide to incorporate Command-R into BotPress, requesting help in both English and Spanish.

-

Link Confusion Alert: There is confusion over accessing the Cohere careers page, with at least one member unable to find the correct page through the provided link.
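On the VRAM calculator question above, the source does not say what explains the result. One factor that can make a smaller model need more memory at the same context length is the KV cache, whose size depends on layer count, number of key/value heads, and head dimension rather than on parameter count. The sketch below uses illustrative configurations that are assumptions, not confirmed Phi 3 specifications, and the function name is hypothetical.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_len,
                   bytes_per_value=2):
    """Estimate KV-cache size for one sequence.

    The leading factor of 2 accounts for storing both keys and values;
    bytes_per_value=2 assumes 16-bit storage.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_len * bytes_per_value

# Hypothetical smaller model with full multi-head attention (many KV heads).
small_no_gqa = kv_cache_bytes(num_layers=32, num_kv_heads=32,
                              head_dim=96, context_len=4096)
# Hypothetical larger model using grouped-query attention (few KV heads).
larger_gqa = kv_cache_bytes(num_layers=40, num_kv_heads=10,
                            head_dim=128, context_len=4096)
```

Under these assumed settings the smaller model's cache is roughly twice the larger one's, so total VRAM at long contexts need not track parameter count.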

AI Stack Devs (Yoko Li) Discord

- Banter About AI Companions: Discussion sparked by the phrase "AI waifus save lives!" led to a conversation about potentially emotional AI, alluding to the relevance of sentiment analysis for chatbots.

- Emotional Intelligence in Chatbots on the Rise: Shared VentureBeat article prompts engineers to consider the implications for business bots when AI begins to 'understand' emotions, which could be significant for user experience and interface design. VentureBeat article on Emotional AI.

- 3D Chatbots Gaining Traction: A member from 4Wall AI highlighted their ongoing work on 3D character chatbots, suggesting new opportunities for human-computer interaction within the field.

- Pop Culture Meets AI: A reference to "Just Monika" prompted sharing of a Doki Doki Literature Club GIF, showcasing how pop culture can influence dialogues around AI personas. Ddlc Doki Doki Literature Club GIF.

Datasette - LLM (@SimonW) Discord

Snapdragon Dev Kit Sparks Debate: Qualcomm's new Snapdragon Dev Kit priced at $899.99, featuring Snapdragon X Elite and boasting 32GB of LPDDR5x RAM and 512GB NVMe storage, has sparked discussions on cost-effectiveness compared to the previous $600 model, as detailed on The Verge and Microsoft Store.

Mac Mini Server Gets Thumbs Up: An AI engineer shared their success in using a Mac Mini as a reliable Llamafile server with Tailscale, praising its zero-cold start feature and seamless 'llm' CLI integration, suggesting a practical use case for developers needing stable server solutions.

Affordable Dev Kits in Demand: Discussion among users indicates a strong desire for more affordable development kits, with aesthetic preferences also being voiced, such as a wish for a translucent case design, yet no specific products were mentioned.

Smalltalk AI Shows Promise: A member introduced Claude's ability to engage in Smalltalk, using "What are frogs?" as an example question, which the AI answered with a basic reply about amphibians, indicating advances in AI's conversational capabilities.

LLM Perf Enthusiasts AI Discord

Brevity Blunder in Llama3/Phi3: An inquiry was made regarding how to stop llama3/phi3 from truncating responses with "additional items omitted for brevity," but no solutions or further discussion ensued.

Mozilla AI Discord

-

Community Events for Engineering Minds: Mozilla AI announced the initiation of member-organized events to inspire idea-sharing and community interaction, featuring talks, AMAs, and demos, starting with an AMA hosted by LLM360.

-

AMA on Open-Source LLMs: LLM360 hosted an AMA session, diving into the specifics of their work with open-source LLMs and attracting a tech-savvy crowd.

-

Embeddings with Llamafiles: Kate Silverstein, a Staff Machine Learning Engineer, will demonstrate the use of llamafiles for generating embeddings and elaborate on her latest blog post.

-

Events Calendar a Click Away: Mozilla AI encourages members to frequent the events calendar for a robust lineup of community-led discussions and technical activities.

-

Query on Model Spec in LLaMA CPP: A member sought clarity on using a tinyllama model via terminal, questioning whether the `model="LLaMA_CPP"` specification is necessary and which model is actually in play when the code snippet runs successfully.

The MLOps @Chipro Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The YAIG (a16z Infra) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

Unsloth AI (Daniel Han) ▷ #general (1309 messages🔥🔥🔥):

- OpenAI and Dataset Challenges: Members discussed various dataset challenges including converting formats, using ShareGPT, and optimizing training parameters such as batch sizes. One user shared that they "spent 5 hours scraping site into alpaca format" only to find it unhelpful, indicating how persnickety these processes can be.

- Phi-3 out!; Users skeptical but excited: Phi-3 models from Microsoft generated excitement with members mentioning Phi-3-Medium-128K-Instruct, yet some noted skepticism about the validity of its benchmarks. One user said, "literally".

- Latest Legal Constraints: Conversations about AI regulations like California's SB 1047 law sparked discussions on the implications for open-source models. "Meta plans to not open the weights for its 400B model," catalyzed a debate, with users expressing concerns about its global effects.

- Technical Issues and Workarounds for Colab/Kaggle: Common technical glitches were noted, especially around updates breaking compatibility. User `theyruinedelise` pointed out necessary workarounds like restarting Colab sessions due to the "Pytorch not detecting T4's properly" issue.

- Unsloth Platform Developments: Users discussed new model support on the Unsloth platform such as Mistral v3, expressing excitement over improved fine-tuning features. The news that "Unsloth now supports Mistral v3" was welcomed as easing adoption of new models in the community.

Unsloth AI (Daniel Han) ▷ #random (233 messages🔥🔥):

-

MoRA sparks curiosity: Members inquired about a new method called MoRA and shared plans to test its vanilla implementation. One noted that it appears to be a "scaled up" version of LoRA, optimized for measuring the intrinsic dimension of objective landscapes.

-

Dune series and philosophy discussions dominate: Users engaged in a detailed discussion about the philosophical depth of the Dune series beyond its initial hero's journey. They noted that subsequent books become progressively more philosophical, moving away from simple narratives.

-

Sci-fi novels and recommendations flood the chat: The conversation shifted to various sci-fi novels and recommendations, including Peter Watts' "Blindsight," which features unique takes on alien intelligence and vampires, described as "the hardest sci-fi that ever sci-fied."

-

Fondness for intricate sci-fi plots: Users expressed enthusiasm for complex and intriguing sci-fi plots, comparing elements of hard sci-fi novels to modern AI's behavior. They discussed the appeal of realistic and imaginative alien life forms in literature over cliché humanoid representations.

-

Debate on movies versus reading novels: Members compared the experience of watching sci-fi movies to reading novels, with some expressing a preference for the latter due to the more profound and imaginative storytelling. The conversation highlighted dissatisfaction with recent movie adaptations of popular sci-fi stories, noting a decline in quality compared to the depth found in books.

Link mentioned: MoRA: High-Rank Updating for Parameter-Efficient Fine-Tuning: Low-rank adaptation is a popular parameter-efficient fine-tuning method for large language models. In this paper, we analyze the impact of low-rank updating, as implemented in LoRA. Our findings sugge...

Unsloth AI (Daniel Han) ▷ #help (192 messages🔥🔥):

- Unsloth models face saving issues: Users report problems with the `model.save_pretrained_gguf()` function documented in GitHub Issue #485, which breaks due to an `UnboundLocalError`.

- Flash Attention causes CUDA issues: Several users experienced errors with Flash Attention versions misconfigured for their setups and discussed switching to `xformers` instead. Consequently, starsupernova recommended uninstalling and reinstalling Unsloth without Flash Attention.

- Pytorch ≥ 2.3 breaks T4 GPUs: Multiple users reported compatibility issues with Pytorch version 2.3 on Tesla T4 GPUs, leading to recommendations to downgrade and disable bf16 support. A community workaround involved specifying `dtype` explicitly.

- Guided decoding for YAML: There was an in-depth discussion on leveraging guided decoding for structured output in YAML, with insights on using grammars and constraining output effectively while prompting models. This includes potential support in vLLM using different syntaxes like JSON Schema or BNF.

- Installation and training discrepancies: Discrepancies in installation instructions and training behavior were analyzed, particularly focusing on the `trl` library and its versions affecting model training. Adjustments to ensure consistent setups and installations were advised, especially considering instability in recent library versions.

Links mentioned:

-

offload

-

Update llama.py

-

continued pret...

-

pytorch/torch/cuda/init.py at b40fb2de5934afea63231eb6d18cc999e228100f · pytorch/pytorch: Tensors and Dynamic neural networks in Python with strong GPU acceleration - pytorch/pytorch

Unsloth AI (Daniel Han) ▷ #showcase (7 messages):

- Superfantastic results ignite excitement: One member expressed amazement with their results, describing them as "super fantastic." Another commented on their own struggles, stating they couldn’t get results below 52k and expressed anticipation for an upcoming article.

- Recipe for success forthcoming: A member mentioned they will "release the recipe this/next week," despite noting that it won't fully reproduce earlier results due to the use of proprietary data. They added that it might perform a bit better for English datasets.

- Knowledge Graph Embeddings: A member shared their past experience with Knowledge Graph Embeddings, mentioning difficulty in transitioning from a Neo4j graph to a PyTorch Geometric Dataset due to complex `cypher` queries. Another member implied that such a task should be easier with current tools.

LLM Finetuning (Hamel + Dan) ▷ #general (242 messages🔥🔥):

-

Modal Learning Opens New Doors: One member shared that their company uses Marker PDF, leveraging Surya OCR to convert PDFs into markdown format. They noted that the tool's results surpass other open models, and they have open-sourced the solution on GitHub.

-

Native Prompts vs. Translations?: Members discussed the efficacy of native language prompts versus English prompts with instructions to translate. One member shared a paper focusing on self-translation models, adding various experiences and suggesting task-specific strategies.

-

PDF Parsing and Multimodal LLMs: Challenges in PDF parsing were highlighted with multiple tools such as LlamaParse, Unstructured, and table transformers mentioned, but none provided perfect results. There was interest in strategies involving multimodal LLMs and fine-tuning on target data.

-

Anthropic’s Sonnet Paper Sparks Interest: A member shared a link to a paper on interpretability by Anthropic, sparking discussions about safety and steering model behavior. Another member added further insights with a related Twitter thread.

-

Community Engagement with Modal and Tools: Discussions included preference for tools like pyenv, mamba (through miniforge), and the ease of using GUI for language model fine-tuning. Members shared installation guides and discussed various workflows and their experiences with different packages and environments.

LLM Finetuning (Hamel + Dan) ▷ #workshop-1 (83 messages🔥🔥):

-

Extracting Villa Attributes from User Prompts: One member discussed extracting structured attributes like bedrooms and swimming pools from user-provided prompts about their villa wishes. They highlighted the importance of maintaining low latency and high performance, and expressed interest in using synthetic data for evaluation.

-

Workflows and Synthetic Data: Another member shared their use case of predicting workflows and generating them using GPT-4 for various domains. They focus on using synthetic data to fine-tune Mistral models for providing workflow recommendations.

-

User Testing with LLM Agents: A use case was presented for using LLM agents to conduct user tests for web applications, tuning prompts to capture user personalities and desired feedback. The focus lies in prompt tuning to effectively simulate user interactions.

-

Model for Grant Application Assistance: One user proposed fine-tuning models to help UK farmers and organizations navigate and complete grant applications. They plan to combine natural language understanding with domain-specific knowledge from the UK government website.

-

In-Store Book Recommendation System: An idea was put forward for creating a recommendation system that uses user queries to provide book suggestions from a bookstore's database. The system would rely initially on prompt engineering and RAG, with potential fine-tuning to reduce costs as the model scales up.

LLM Finetuning (Hamel + Dan) ▷ #asia-tz (26 messages🔥):

-

Cedric shares extensive notes from LLM workshop: A member from Singapore, Cedric, shared his notes from a workshop, summarizing key points about "Mastering LLMs". The notes were met with positive feedback with members expressing gratitude.

-

Pune Meetup Proposal Gains Interest: A member from Pune suggested a local meetup and received enthusiastic responses. The intent to set up the event was emphasized with a follow-up message on logistics: "[Possible Pune meetup ?]".

-

Growth in Singapore and Malaysia Community: Several members from Singapore, Malaysia, and other parts of Asia introduced themselves. Collaborative enthusiasm was high with many members taking interest in discussing topics and meeting locally.

-

General Members' Greetings: Multiple members from various parts of India and Asia introduced themselves, expressing interest in connecting with others. These introductions highlighted the geographical diversity and the active participation from different regions in Asia.

LLM Finetuning (Hamel + Dan) ▷ #🟩-modal (77 messages🔥🔥):

-

Satish's Surya OCR and Modal Issues: Satish reported, "I have created the PDF extractor using Surya OCR," but faced issues with Modal running every time the model loads. They were advised to join Modal's Slack for quicker support, as outlined here.

-

Axolotl Running Issue: Nisargvp had trouble with the `axolotl.git` URL not being recognized in Modal and was advised to refer to Modal's LLM Finetuning sample repo.

-

Inference Configuration Confusion: Intheclouddan ran into issues while setting up inference using a specific prompt format and was advised to use the full llama 3 chat template; a related example repo was shared.

-

Modal Credits Inquiry: Numerous participants mentioned filling out forms and awaiting Modal credits. Charles shared the claim form link and mentioned the credits process details are in a specific Discord channel.

-

Training and Inference Execution Errors: Troubleshooting related to execution errors showed that repeated attempts sometimes resolve the issues. Ripes suggested checking related discussions on Modal's Slack community.

LLM Finetuning (Hamel + Dan) ▷ #learning-resources (10 messages🔥):

-

Reverse Engineering Transformers benefits from interactive articles: A member shared a comprehensive resource on reverse engineering transformer language models into human-understandable programs, inspired by the Distill Circuits Thread and other interactive articles like Activation Atlases. They also noted Distill's hiatus and mentioned that new content may be added in collaboration with other institutions.

-

Fine-Tuning Benchmarks Showcase Open-Source LLM Performance: The Predibase fine-tuning index offers performance benchmarks from fine-tuning over 700 open-source LLMs, highlighting that smaller models can deliver GPT-like performance through fine-tuning. Performance metrics are presented in interactive charts to help AI teams select the best open-source models for their applications.

-

Dedicated GitHub Repo for LLM Resource Collaboration: A member created a GitHub repo for better collaboration on LLM resources for a workshop by Dan Becker and Hamel Husain. They asked users not to directly edit the README.md file as it's auto-generated through GitHub actions and encouraged pull requests for contributions.

-

ML Engineering Book Added to LLM Resource Repo: A member plans to add Stas' ML Engineering book to the resource repo, highlighting its in-depth insights on training LLMs at scale, covering various aspects such as orchestration, good training loss, and planning. The book is praised as an invaluable resource despite its chunkiness due to the detailed coverage.

-

AI Model Comparison Website as a Favorite Resource: A member recommended artificialanalysis.ai for comparing and analyzing AI models across metrics like quality, price, performance, and speed. They noted the site's detailed metrics and FAQs for further details and highlighted the trade-offs between model quality and throughput.

LLM Finetuning (Hamel + Dan) ▷ #jarvis-labs (36 messages🔥):

-

Members plan to run Axolotl on Jarvis: Multiple users expressed interest in experimenting with Axolotl and discussed the sign-up and credit allocation process for Jarvislabs. User `vishnu9158` shared the steps to start using Axolotl locally via a Docker image.

-

Credits for JarvisLabs: Users inquired about getting credits after signing up on Jarvislabs. It was clarified that if the Jarvislabs account email differs from the course email, it might cause delays.

-

Creating and Running Axolotl Instances: The community discussed running Axolotl instances using a Docker image and JupyterLab for fine-tuning. Vishnu9158 mentioned that documentation and a video tutorial are coming soon.

-

Blog posts for better understanding: Several users, inspired by a previous suggestion, shared or planned to share their blog posts about their learning experiences on platforms like Medium.

-

Hugging Face model issues resolved: Some members faced issues accessing the llama-3 model on Hugging Face, despite having access. Dhar007 provided steps to resolve this by creating and using an access token, but then ran into CUDA out of memory errors, suggesting adjustments in batch sizes.

Link mentioned: Jarvislabs: Making AI affordable and simple for everyone: Jarvislabs is a platform that allows you to run and explore multiple AI frameworks on powerful GPUs with zero setup

LLM Finetuning (Hamel + Dan) ▷ #hugging-face (11 messages🔥):

-

Hugging Face model filter issue: Users discussed the issue of filtering for `axolotl` models on Hugging Face without getting results. A link was shared to Hugging Face models, and solutions involving the `HfApi` library were proposed.

-

Pre-defined tags for filtering: A Hugging Face team member clarified that the `Other` tab uses a set of pre-defined tags to avoid overwhelming users, making the user experience more consistent. They mentioned a potential improvement: showing "+N other tags" to make it clearer.

-

Energy over Hybrid Sharding with FSDP and DS: A user expressed enthusiasm for hybrid sharding strategies when sharding models using FSDP and DS.

-

Uploading fine-tuned models: A user had issues with uploading a large fine-tuned `gpt2-medium` model to Hugging Face, noting that it resulted in multiple `.pth` files instead of one. They were advised to seek help in a more relevant channel for detailed guidance.

Link mentioned: Models - Hugging Face: no description found

LLM Finetuning (Hamel + Dan) ▷ #replicate (10 messages🔥):

-

Clarification on Replicate's Use Case: A member questioned the primary use case for Replicate, asking if it's mainly to offer API endpoints for downstream tasks and for firms/individuals. They also noted the availability of "defined tasks, fine-tuning, and customized datasets."

-

Conference Registration Email Issues: Several members, including hughdbrown and project_disaster, reported issues with conference registration where the emails used for GitHub registration differ from those used for the conference.

-

Credits and Email Address Workaround: harpreetsahota mentioned that users can set a different email address after signing up on Replicate if their GitHub email differs. However, filippob82 indicated that emails containing a `+` sign are currently not being accepted.

-

Credit Allocation Enquiries: Users like digitalbeacon are awaiting credits post-sign-up. 0xai queried whether entering the maven registered address in the notifications section would automatically add these credits.

LLM Finetuning (Hamel + Dan) ▷ #langsmith (4 messages):

- Credit dispatch in progress: A member asked if credits had already been dispatched. Another member responded, directing them to a pinned message and clarifying that announcements would be made on Discord and by email.

LLM Finetuning (Hamel + Dan) ▷ #whitaker_napkin_math (4 messages):

- Hamel gets his own fan channel: A member humorously acknowledged that Hamel has his own fan channel. The sentiment was light and playful, stating, "Not sure what to do with such power."

- Session preparation hints: Another member hints that they'll fill the channel with relevant content before conducting a scheduled session. They plan to ensure engaging discussion leading up to the event.

Link mentioned: Minion Hello GIF - Minion Hello Minions - Discover & Share GIFs: Click to view the GIF

LLM Finetuning (Hamel + Dan) ▷ #workshop-2 (525 messages🔥🔥🔥):

- NVLink woes and creative solutions: Members discussed issues with NVLink, including mismatched card heights and lack of NVLink compatibility on certain setups. Suggested solutions included using riser cables with support brackets.

- Hamel's evaluation steps clarification: A user asked about the significance of the evaluation step that Hamel discussed, leading to an understanding that breaking down tasks and iterating are key to completing projects efficiently. "80% of the time is spent getting to 80% quality, and 500% of the time to reach 100%."

- Using Modal and Jarvis for running Axolotl: Users discussed using Modal, RunPod, and Jarvis Labs for running Axolotl, with suggestions to initially try straightforward setups like RunPod or Jarvis before attempting more automated or complex configurations such as Modal. "You can run it on modal if you have the credits" and "try Jarvis which offers credits as part of the course."

- Axolotl dataset formats and model usage: The community explored various dataset formats for Axolotl, including JSONL and conversation-based formats like ShareGPT. There was a preference for JSONL due to its flexibility and ease of use, with an emphasis on using the 'input_output' format for cases without strict templates.

- Recording workshops and resource links: The community shared feedback on the need for more practical examples and clear steps to run fine-tuning workshops. Helpful links to resources and blog posts, such as Loom video and Medium post, were highlighted, and recordings were made available promptly.
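To make the JSONL and 'input_output' discussion above concrete, here is a minimal sketch of writing such a file. The row schema (a `segments` list whose items have a boolean `label` and a `text` field, with `label` marking the text that is trained on) reflects our reading of Axolotl's template-free format and should be checked against the current Axolotl docs. The helper names `make_row` and `write_jsonl` are hypothetical.

```python
import json
from pathlib import Path


def make_row(prompt: str, completion: str) -> dict:
    """Build one example: the prompt is masked out, the completion is trained on."""
    return {
        "segments": [
            {"label": False, "text": prompt},      # assumed: not included in the loss
            {"label": True, "text": completion},   # assumed: included in the loss
        ]
    }


def write_jsonl(rows, path) -> None:
    """Write one JSON object per line, which is all the JSONL format requires."""
    with Path(path).open("w", encoding="utf-8") as handle:
        for row in rows:
            handle.write(json.dumps(row, ensure_ascii=False) + "\n")


# Example call (writes train.jsonl in the working directory):
#   write_jsonl([make_row("Question: What is 2 + 2?\nAnswer: ", "4")], "train.jsonl")
```

Because each row carries its own literal text, no chat template is applied, which matches the "cases without strict templates" use mentioned above. The dataset entry in the Axolotl config would then point at this file with the 'input_output' type.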


LLM Finetuning (Hamel + Dan) ▷ #jason_improving_rag (3 messages):

- Excitement for Jason's W&B course: Filippob82 expressed enthusiasm for Jason's session and mentioned they are halfway through his W&B course. They used an emoji to convey their excitement.

- Curiosity about prompt engineering: Nehil8946 showed interest in Jason's work on optimizing prompts and asked if there is a systematic approach to prompt engineering that Jason follows. They are looking forward to learning about it in his workshop.

LLM Finetuning (Hamel + Dan) ▷ #jeremy_python_llms (1 messages):

nirant: Woohoo! Looking forward to <@660097403046723594>

LLM Finetuning (Hamel + Dan) ▷ #gradio (2 messages):

- Meet Freddy, your Gradio expert: Freddy introduced himself as one of the maintainers of Gradio, a Python library for developing user interfaces for AI models. He shared helpful resources for getting started and creating chatbots with Gradio, including a quickstart guide and a tutorial on building a chatbot.

- Mnemic1 prepares for questions: A member expressed thanks for the resources and mentioned they would have questions about an A1111-extension they wrote, which had some unresolved issues.


LLM Finetuning (Hamel + Dan) ▷ #axolotl (85 messages🔥🔥):

- **Members address Axolotl issue #1436**: Discussion about `bitsandbytes==0.43.0` not installing on macOS from [GitHub Issue #1436](https://github.com/OpenAccess-AI-Collective/axolotl/issues/1436). Recommendations include using Linux GPU servers on RunPod.

- **Axolotl and MLX integration not yet supported**: Members discuss the lack of MLX support on Axolotl as detailed in [GitHub Issue #1119](https://github.com/OpenAccess-AI-Collective/axolotl/issues/1119). Users are advised to stay updated.

- **Best setup practices explored**: Members share various methods to set up Axolotl. The Axolotl [Readme](https://github.com/OpenAccess-AI-Collective/axolotl/tree/main?tab=readme-ov-file#quickstart-) and Docker method are mentioned as the most reliable.

- **Fine-tuning and integration concerns**: Members inquire about using Axolotl on local machines and fine-tuning models like LLaMA3. Issues related to configuration and compatibility with Modal environments are discussed.

- **Tips for troubleshooting installation**: For users facing installation difficulties, such as receiving a `CUDA` error, several members recommend steps including installing specific CUDA/PyTorch versions and using the docker container. Links to [Docker](https://hub.docker.com/layers/winglian/axolotl/main-20240522-py3.11-cu121-2.2.2/images/sha256-47e0feb612caf261764631a0c516868910fb017786a17e4dd40d3e0afb48e018?context=explore) and a [setup guide](https://latent-space-xi.vercel.app/til/create-a-conda-env-for-axolotl) are provided.


LLM Finetuning (Hamel + Dan) ▷ #zach-accelerate (49 messages🔥):

- Hugging Face Presentation and Accelerate Resources: A member shared various resources including a presentation on Hugging Face and documentation for Accelerate. Links included tutorials on FSDP vs. DeepSpeed and examples on GitHub.

- Creating Slides with Quarto Saves Time: Members discussed how using Quarto made creating presentations easier and faster. One user mentioned they now only use Quarto for slides due to the streamlined workflow.

- Using Accelerate in Python Scripts: There was a conversation on how to utilize Accelerate within Python scripts, suggesting code snippets for launching processes and saving models with Accelerate. One user provided a detailed answer to streamline implementation.

- Interest in Different Demo Videos for Accelerate: Members expressed interest in seeing recorded demos of Accelerate's usage in various scenarios, including local vs. cloud training, hybrid modes, and focusing on techniques like LoRA without quantization. Specific requests included comparing setups and configurations for different environments.

- Upcoming GPU Optimization Workshop: An event was shared featuring a workshop on GPU optimization with speakers from OpenAI, NVIDIA, Meta, and Voltron Data, with details on event registration, YouTube livestream, and relevant reading materials.


LLM Finetuning (Hamel + Dan) ▷ #wing-axolotl (30 messages🔥):

- Caching Precautions for Multiple Model Training: A user asked about the necessary precautions for separating cached samples when training multiple models simultaneously. They inquired whether sequence length, datasets, tokenizers, and other settings are relevant factors.

- Custom Callbacks for Evaluations: A user sought guidance on using custom callbacks to run evaluations on custom datasets during training and transferring checkpoints between devices while displaying outputs in wandb/mlflow.

- Dataset Types: Pretrain vs. Completion: A user asked for the difference between "pretrain" and "completion" dataset types and the appropriate use cases for each.

- Solving Command Errors: Several users discussed unresolved issues with running the command `accelerate launch -m axolotl.cli.train hc.yml`. Troubleshooting suggestions included ensuring dependencies like `torch` and `gcc` are correctly installed, and using a docker image for a more straightforward setup.

- Helpful GCC Installation Resource: A user provided a link to a tutorial for installing the GCC compiler on Ubuntu to help resolve installation issues.
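The dependency checks suggested above can be run as a quick preflight before launching training. The sketch below uses only the Python standard library. The function names and the default lists of modules and binaries are our own assumptions for illustration, not part of Axolotl or Accelerate.

```python
import importlib.util
import shutil
from typing import Dict, Iterable, List


def check_prerequisites(
    modules: Iterable[str] = ("torch", "axolotl"),
    binaries: Iterable[str] = ("gcc", "accelerate"),
) -> Dict[str, bool]:
    """Report which top-level Python modules and executables can be found."""
    report = {}
    for name in modules:
        # find_spec returns None when a top-level module is not importable.
        report[f"module:{name}"] = importlib.util.find_spec(name) is not None
    for name in binaries:
        # shutil.which returns None when the executable is not on PATH.
        report[f"binary:{name}"] = shutil.which(name) is not None
    return report


def missing(report: Dict[str, bool]) -> List[str]:
    """Return the sorted names of every prerequisite that was not found."""
    return sorted(name for name, found in report.items() if not found)


# Example call:
#   print(missing(check_prerequisites()))
```

An empty list only means the pieces are present, not that their versions are compatible, so the docker image remains the simpler route when version mismatches are suspected.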


Perplexity AI ▷ #announcements (1 messages):

- Perplexity integrates Tako for enhanced knowledge search: Perplexity teams up with Tako to provide advanced knowledge search and visualization. Users can now search for comparative data like “Gamestop vs. AMC stock since 5/3/24” with interactive knowledge cards, initially available in the U.S. and in English, with mobile access coming soon.

Link mentioned: Tako: no description found

Perplexity AI ▷ #general (835 messages🔥🔥🔥):

- **Microsoft Stole OpenAI's Ideas**: A member shared a [blog post](https://blogs.microsoft.com/blog/2024/05/20/introducing-copilot-pcs/) stating that Microsoft has copied features from OpenAI and introduced "Copilot+ PCs," the fastest and most intelligent Windows PCs ever built. They noted features like an impressive 40+ TOPS, all-day battery life, AI image generation, and live captions for 40+ languages.

- **GPT-4o Context Concerns**: There were discussions about the context window of GPT-4o as **perceived on Perplexity**. A consensus formed that the **context window defaults to 32k**, with uncertainties about higher capacities.

- **Perplexity's Default Model Surprise**: Members expressed surprise that the default model for Perplexity might be **Haiku** instead of an in-house model, **Sonar**, which is available only for pro users. One member noted that free users previously used GPT-3.5, but this has changed recently.

- **Perplexity's API Queries**: Discussion revolved around how Perplexity configures and charges for API usage. Members speculated about using in-house models and the potential financial implications of their pricing structure.

- **Service Downtime Creates Community Stir**: Perplexity experiencing downtime led to widespread frustration and speculation among users about the cause. Users shared alternative resources and a member posted a supportive message to help calm the community during the outage.


Perplexity AI ▷ #sharing (9 messages🔥):

- Members share Perplexity AI links: Multiple members shared specific search-related links from Perplexity AI, indicating queries and interests such as "Layer", "indoor discussions", and "creating SFW content". One particularly notable search was about "Ether is" with a specific focus link.

- Reminder to make threads shareable: A gentle reminder was issued to ensure that shared threads are marked as "Shareable". The comment included a screenshot from Discord.

- User interest in Taiwan Semiconductor: A member showed interest in Taiwan Semiconductor, sharing a specific Perplexity AI search link.

Perplexity AI ▷ #pplx-api (11 messages🔥):

- Headlines API delivers outdated news: A member reported getting headlines from a year ago when using the same prompt for the API as on the web. They asked if anyone else had similar issues generating relevant daily headlines.

- Attempted to refine search queries: Another member suggested adding a date filter (`after:12-02-2024`). They further clarified that this should be added directly to the query.

- API underperforms compared to the web version: The original member reported that the suggested fixes did not work, as they continued to get poor results through the API compared to the web. They mentioned they were getting good results on the web but terrible ones through the API.

- API limitations highlighted: It was noted that the API is still in beta and only supports one endpoint. This limitation may be contributing to the inconsistent results between the web and API outputs.

Stability.ai (Stable Diffusion) ▷ #general-chat (497 messages🔥🔥🔥):

- Lightning and Hyper models debate: A member discussed the efficiency of mixing Lightning and Hyper models with base stable models, proposing it could reduce the number of steps required for image generation. However, another member advised against mixing checkpoints from different architectures, warning it often results in poor-quality images.

- EU AI Act sparks outrage: Following the approval of the EU AI Act, several members expressed frustration and confusion about its implications. One shared a link to the official press release, highlighting the potential difficulties related to watermarking requirements for AI-generated content.

- Frustrations with Local AI Setup: Members frequently discussed the challenges of setting up Stable Diffusion locally, particularly with AMD GPUs, while suggesting Nvidia GPUs as a better alternative. One member humorously noted that the "best wizard" would help them acquire an Nvidia GPU to solve their issues.

- Discontent with AI content quality: The rampant creation of low-quality AI-generated images, particularly generic and heavily sexualized content, was criticized. Members pointed out the prevalence of such content on platforms like CivitAI and the AI art subreddit, questioning the value it adds to the community.

-