AI News for 5/30/2024-5/31/2024. We checked 7 subreddits, 384 Twitters and 29 Discords (393 channels, and 2911 messages) for you. Estimated reading time saved (at 200wpm): 337 minutes.

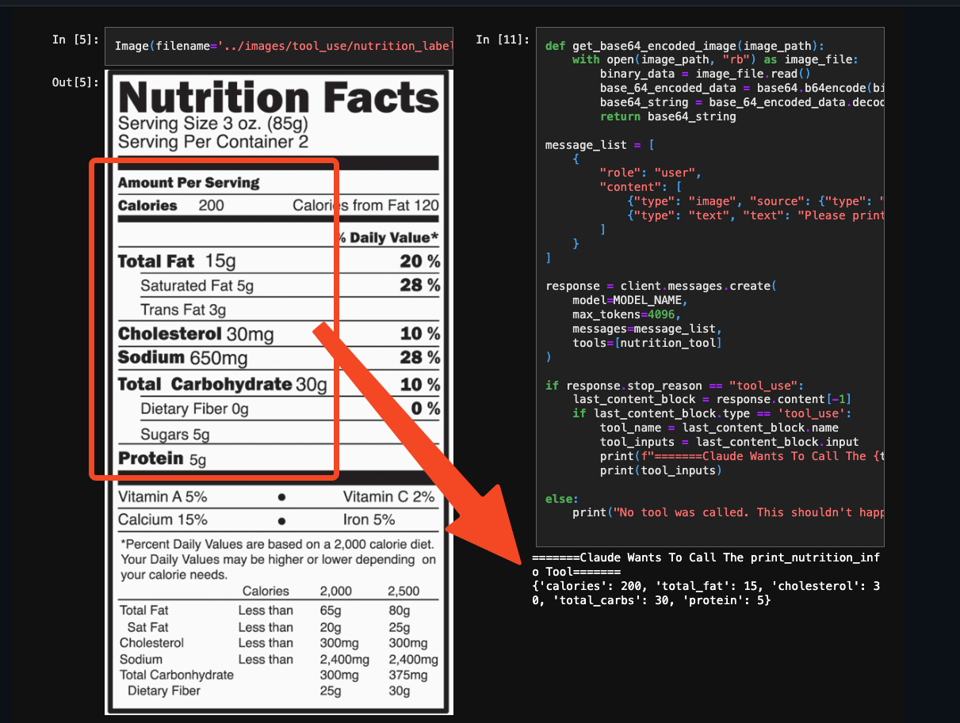

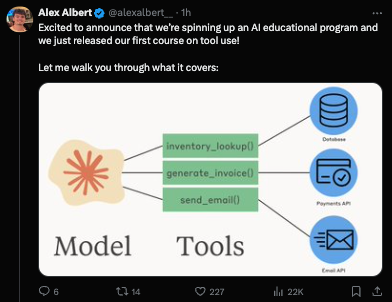

Together with Anthropic's GA of tool use/function calling today on Anthropic/Amazon/Google, with support for streaming, forced use, and vision...

Alex Albert shared 5 architectures for using them in an agentic context:

- Delegation: Use cheaper, faster models for cost and speed gains.

  - For example, Opus can delegate to Haiku to read a book and return relevant passages. This works well if the task description & result are more compact than the full context.

- Parallelization: Cut latency (but not cost) by running agents in parallel.

  - e.g. 100 sub-agents each read a different chapter of a book, then return key passages.

- Debate: Multiple agents with different roles engage in discussion to reach better decisions.

  - For example, a software engineer proposes code, a security engineer reviews it, a product manager gives a user's view, then a final agent synthesizes and decides.

- Specialization: A generalist agent orchestrates, while specialists execute tasks.

  - For example, the main agent uses a specifically prompted (or fine-tuned) medical model for health queries or a legal model for legal questions.

- Tool Suite Experts: When using 100s or 1000s of tools, specialize agents in tool subsets.

  - Each specialist (the same model, but with different tools) handles a specific toolset. The orchestrator then maps tasks to the right specialist (keeps the orchestrator prompt short).
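To make the Delegation and Parallelization patterns concrete, here is a minimal sketch. `cheap_model` and `strong_model` are hypothetical stand-ins for real model calls (they only do keyword matching), so this shows the fan-out/fan-in shape rather than any particular API.

```python
from concurrent.futures import ThreadPoolExecutor


def cheap_model(task: str, chapter: str) -> str:
    """Hypothetical stand-in for a fast, inexpensive sub-agent call.

    It returns the sentences of the chapter that mention the task keyword.
    """
    sentences = [s.strip() for s in chapter.split(".") if s.strip()]
    return " ".join(s + "." for s in sentences if task.lower() in s.lower())


def strong_model(task: str, passages: list[str]) -> str:
    """Hypothetical stand-in for the orchestrator that synthesizes sub-agent results."""
    relevant = [p for p in passages if p]
    return f"{len(relevant)} relevant passage(s) about '{task}': " + " | ".join(relevant)


def delegate_in_parallel(task: str, chapters: list[str], max_workers: int = 8) -> str:
    # Fan out: one sub-agent per chapter, run concurrently to cut latency (not cost).
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        passages = list(pool.map(lambda ch: cheap_model(task, ch), chapters))
    # Fan in: the orchestrator only sees the compact results, not the full chapters.
    return strong_model(task, passages)


chapters = [
    "The whale surfaced at dawn. The crew slept.",
    "A storm gathered in the east. Nobody spoke.",
    "They sighted the whale again. The chase began.",
]
summary = delegate_in_parallel("whale", chapters)
print(summary)
```

The orchestrator receives only the short passages, which is the condition noted above for delegation to pay off: the task description and result are more compact than the full context.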

Nothing particularly groundbreaking here, but a very handy list of patterns to think about. Anthropic also launched a self-guided course on tool use.

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

AI Research and Development

- Open Science and Research Funding: @ylecun expressed a clear ethical rule for research: "Do not get research funding from entities that restrict your ability to publish." He emphasized that making new knowledge available to the world is intrinsically good, regardless of the funding source. @ylecun noted that this ethical rule has made him a strong advocate of open science and open source.

- Emergence of Superintelligence: @ylecun believes the emergence of superintelligence will be a gradual process, not a sudden event. He envisions starting with an architecture at the intelligence level of a rat or squirrel and progressively ramping up its intelligence while designing proper guardrails and safety mechanisms. The goal is to design an objective-driven AI that fulfills goals specified by humans.

- Convolutional Networks for Image and Video Processing: @ylecun recommends using convolutions with stride or pooling at low levels and self-attention circuits at higher levels for real-time image and video processing. He believes @sainingxie's work on ConvNext has shown that convolutional networks can be just as good as vision transformers if done right. @ylecun argues that self-attention is equivariant to permutations, which is nonsensical for low-level image/video processing, and that global attention is not scalable since correlations are highly local in images and video.

- AI Researchers in Industry vs. Academia: @ylecun noted that if a graph showed absolute numbers instead of percentages, it would reveal that the numbers of AI researchers in industry, academia, and government have all grown, with industry growing earlier and faster than the rest.
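The permutation-equivariance point in the convolution item can be checked numerically. The sketch below is a toy single-head self-attention in pure Python, with identity Q/K/V projections and no positional encoding (both simplifying assumptions). Shuffling the input tokens just shuffles the output rows, which is why attention alone carries no notion of pixel adjacency.

```python
import math


def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Toy single-head self-attention: identity projections, no positional encoding."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        # Scaled dot-product scores of this query against every token.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        # Weighted sum of the value vectors (here the tokens themselves).
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


tokens = [[1.0, 0.0], [0.0, 2.0], [1.5, -0.5], [0.3, 0.7]]
perm = [2, 0, 3, 1]

attended_then_permuted = [self_attention(tokens)[i] for i in perm]
permuted_then_attended = self_attention([tokens[i] for i in perm])

max_diff = max(
    abs(a - b)
    for row_a, row_b in zip(attended_then_permuted, permuted_then_attended)
    for a, b in zip(row_a, row_b)
)
print(f"max difference between the two orders: {max_diff:.2e}")
```

The two results agree up to floating-point rounding. Learned per-token projections would not change this, since they are applied to each token independently.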

AI Tools and Applications

- Suno AI: @suno_ai_ announced the release of Suno v3.5, which allows users to make 4-minute songs in a single generation, create 2-minute song extensions, and experience improved song structure and vocal flow. They are also paying $1 million to the top Suno creators in 2024. @karpathy expressed his love for Suno and shared some of his favorite songs created using the tool.

- Claude by Anthropic: @AnthropicAI announced that tool use for Claude is now generally available in their API, Amazon Bedrock, and Google Vertex AI. With tool use, Claude can intelligently select and orchestrate tools to solve complex tasks end-to-end. Early customers use Claude with tool use to build bespoke experiences, such as @StudyFetch using Claude to power Spark.E, a personalized AI tutor. @HebbiaAI uses Claude to power complex, multi-step customer workflows for their AI knowledge worker.

- Perplexity AI: @AravSrinivas introduced Perplexity Pages, described as "AI Wikipedia," which allows users to analyze sources and synthesize a readable page with a simple "one-click convert." Pages are available for all Pro users and rolling out more widely to everyone. Users can create a page as a separate entity or convert their Perplexity chat sessions into the page format. @perplexity_ai noted that Pages lets users share in-depth knowledge on any topic with formatted images and sections.

- Gemini by DeepMind: @GoogleDeepMind announced that developers can now start building with Gemini 1.5 Flash and Pro models using their API pay-as-you-go service. Flash is designed to be fast and efficient to serve, with an increased rate limit of 1000 requests per minute.
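For the Claude tool use item, here is a rough sketch of what a tool definition and the tool-call round trip can look like. The field names (`tools`, `input_schema`, `tool_choice`, `tool_use`, `tool_result`) follow our understanding of Anthropic's Messages API and should be checked against the official docs. The `get_weather` tool, its implementation, and the example response content are hypothetical, and no network call is made.

```python
import json

# Tool definition: a name, a description, and a JSON Schema for the inputs.
get_weather_tool = {
    "name": "get_weather",  # hypothetical tool for illustration
    "description": "Get the current weather for a city.",
    "input_schema": {
        "type": "object",
        "properties": {"city": {"type": "string", "description": "City name"}},
        "required": ["city"],
    },
}

# Request body one might send to the Messages API (not sent here).
request_body = {
    "model": "claude-3-opus-20240229",
    "max_tokens": 1024,
    "tools": [get_weather_tool],
    # "Forced use": require the model to call this specific tool.
    "tool_choice": {"type": "tool", "name": "get_weather"},
    "messages": [{"role": "user", "content": "What's the weather in Paris?"}],
}


def get_weather(city: str) -> str:
    """Hypothetical local implementation; a real one would query a weather service."""
    return f"Sunny in {city}"


LOCAL_TOOLS = {"get_weather": get_weather}


def run_tool_calls(assistant_content: list[dict]) -> list[dict]:
    """Turn "tool_use" blocks into "tool_result" blocks for the next user turn."""
    results = []
    for block in assistant_content:
        if block.get("type") == "tool_use":
            output = LOCAL_TOOLS[block["name"]](**block["input"])
            results.append(
                {"type": "tool_result", "tool_use_id": block["id"], "content": output}
            )
    return results


# Hand-written example of assistant content, not real API output.
example_content = [
    {"type": "tool_use", "id": "toolu_example", "name": "get_weather", "input": {"city": "Paris"}}
]
tool_results = run_tool_calls(example_content)
print(json.dumps(tool_results, indent=2))
```

In a real loop, `tool_results` would be sent back as the content of the next user message so the model can continue with the tool output in context.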

Memes and Humor

- @huybery introduced im-a-good-qwen2, a chatbot that interacts in the comments.

- @karpathy shared his opinion on 1-on-1 meetings, stating that he had around 30 direct reports at Tesla and didn't do 1-on-1s, which he believes was great. He finds 4-8 person meetings and large meetings for broadcast more useful.

- @ReamBraden shared a meme about the challenges of being a startup founder.

- @cto_junior shared a meme about Tencent AI developers working to replace underpaid anime artists.

- @nearcyan made a humorous comment about people who believe we should not talk to animals, build houses, or power plants, and instead "rot in caves and fight over scraps as god intended."

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

AI Image & Video Generation

- Photorealistic avatars: In /r/singularity, impressive photorealistic avatars were showcased from the Neural Parametric Gaussian Avatars (NPGA) research at the Technical University of Munich, Germany (example 1, example 2). These high-quality avatars demonstrate the rapid advancements in AI-generated human-like representations.

- Cartoon generation and interpolation: The ToonCrafter model was introduced for generating and interpolating cartoon-style images, with experiments showcasing its capabilities. This highlights the expanding range of AI-generated content beyond photorealistic imagery.

- AI-powered game development: An open-source game engine was presented that leverages AI models like Stable Diffusion, AnimateDiff, and ControlNet to generate game assets and animations. The engine's source code and techniques for rendering animated sprites are fully available.

- AI animation APIs: The Animate Anyone API, with code available on GitHub, enables the animation of people in images. However, comments suggest that alternatives like MusePose may offer better results.

AI Ethics & Societal Impact

- AI partnerships and competition: Microsoft CEO Satya Nadella expressed concerns over a potential OpenAI-Apple deal, highlighting the strategic importance of AI partnerships and the competitive landscape.

- Deepfake concerns: The growing potential for misuse of deepfake technology was emphasized, underscoring the need for safeguards and responsible AI practices.

- AI in the film industry: Sony's plans to use AI for reducing film production costs raised questions about the impact on the creative industry and potential job displacement.

- AI-generated content and realism: An AI-generated image titled "All Eyes On Rafah" faced criticism for lacking realism and potentially misrepresenting a sensitive situation, highlighting the challenges of AI-generated content.

- AI and influence campaigns: OpenAI reported that Russia and China used its AI tools for covert influence campaigns, emphasizing the need for proactive measures against AI misuse, as detailed in their efforts to combat deceptive AI use.

AI Capabilities & Advancements

- Bioprocessors and brain organoids: A groundbreaking bioprocessor utilizing human brain organoids was developed, offering highly efficient computation compared to digital chips.

- AI in healthcare: New AI technology was shown to predict cardiac events related to coronary inflammation up to 10 years in advance, based on a landmark study published in The Lancet.

- Quantum computing breakthrough: Chinese researchers, led by a US-returned physicist, claimed to have built the world's most powerful ion-based quantum computer.

OpenAI News & Developments

- Leadership clarification: Paul Graham clarified that Y Combinator did not fire Sam Altman, contrary to circulating rumors.

- Robotics research revival: OpenAI is rebooting its robotics team, signaling a renewed focus on the intersection of AI and robotics.

- Addressing concerns: OpenAI board members responded to warnings raised by former members regarding the company's direction and practices.

- AI for nonprofits: OpenAI launched an initiative to make its tools more accessible to nonprofit organizations, promoting beneficial AI applications.

- Partnership with Reddit: The announcement of a partnership between OpenAI and Reddit raised questions about the potential implications for both platforms.

AI Humor & Memes

- Robots then and now: A humorous comparison of the "I, Robot" movie's portrayal of robots in the past versus the present day was shared.

AI Discord Recap

A summary of Summaries of Summaries

1. Model Performance Optimization and Benchmarking

- K2 Triumphs Over Llama 2: The K2 model from LLM360 surpasses Llama 2 70B in performance while using 35% less compute, fully open-sourced under Apache 2.0 license.

- NeurIPS Hosts Model Merging Competition: A competition with an $8,000 prize invites contenders to blend optimal AI models, details available on the NeurIPS Model Merging Website.

- Tailored Positional Embeddings Boost Transformer Arithmetic: Researchers achieved 99% accuracy on 100-digit sums using specific embeddings, detailed in their paper.

2. Fine-Tuning and Prompt Engineering

- Tackling Dataset Merging and Training Tips: Axolotl users discussed how to merge datasets effectively during fine-tuning to avoid issues like catastrophic forgetting. Recommended tools include Hugging Face Accelerate.

- Fine-Tuning Techniques for Legal Draft Systems and Chatbots: Users fine-tuning LLMs for applications like legal drafts and financial document summarization swapped strategies, with resources like Fine-Tune PaliGemma.

- Resolving Training Issues for Text Classification Models: Members troubleshooting the training of Spanish entity categorization models traded fine-tuning recommendations and explored models like RoBERTa.

3. Open-Source AI Developments and Collaborations

- Milvus Lite for Efficient Vector Storage: Introducing Milvus Lite, a lightweight solution for Python-focused vector storage, detailed in the Milvus documentation.

- MixMyAI Integrates Multiple AI Models on a Single Platform: The mixmyai.com platform consolidates open and closed-source models, emphasizing privacy and avoiding server storage of chat data.

- LlamaIndex Offers Flexible Retrieval Systems: New Django-based web app templates facilitate Retrieval Augmented Generation (RAG) applications, utilizing data management and user access controls, as detailed here.

4. AI Community Innovations and Knowledge Sharing

- Using Axolotl for Consistent Prompt Formats: Adjustments in Axolotl were made to ensure prompt format consistency, guiding users to settings like Axolotl prompters.py.

- Challenges with Language Support in Ghost XB Beta: Unsloth AI discussed multilingual support in models like Ghost XB Beta aiming for 9+ languages during training phases, highlighting Ghost XB details.

- Incorporating OpenAI and LangChain Tools: Resources like LangChain Intro, and real-time features announced for GPT-4 Alpha, were discussed for creating advanced AI applications.

5. Hardware Advancements and Compatibility Challenges

- NVIDIA's New 4nm Research Chip Impresses: Achieving 96 int4 TOPs/Watt efficiency, significantly outpacing Blackwell's capabilities, with discussion on impacts shared here.

- ROCm Support Challenges on AMD GPUs: Frustrations over ROCm's lack of support for GPUs like RX 6700 and RX580 led to discussions on potential alternatives and performance impacts.

- Implementing Efficient Data Handling in CUDA: Discussions on optimizing CUDA operations, using techniques like fusing element-wise operations for better performance, with source code insights available here.

PART 1: High level Discord summaries

LLM Finetuning (Hamel + Dan) Discord

- Transformer Trainings and Troubleshooting: Interactive sessions on Transformer architecture were requested for better understanding of complex topics like RoPE and RMS Normalization. In the meantime, Google Gemini Flash will allow free fine-tuning come June 17th, while careful calculation of costs for production using RAG LLMs remains imperative, prioritizing GPU time and third-party services considerations. The GGUF format is being advocated to maintain compatibility with ecosystem tools, easing the fine-tuning process. (Fine-tune PaliGemma for image to JSON use cases)

- Braving BM25 and Retrieval Woes: A hunt for a reliable BM25 implementation was launched, with the Python package `rank_bm25` in the crosshairs due to its limited functionality. The conversation focused on enhancing vector retrieval; meanwhile, Modal users are directed to documentation for next steps after deploying v1 finetuned models, and a Modal credits initiative clarified expiration concerns.

- Data Dominates Dialogue: The parsing of structured information from document AI required techniques like OCR and LiLT models. In parallel, data processing for 5K scraped LinkedIn profiles was considered with OpenPipe and GPT-4, while multi-modal approaches and document understanding stayed hot topics. Emphasis on precise matching of training data file formats to prevent `KeyError: 'Input'` surfaced as a troubleshooting tip.

- Learning LLM Legwork and LangChain Link-Up: Resources from Humble Bundle and Sebastian Raschka offered insights into prompt engineering and LLM finetuning, though skepticism was raised about the quality of some materials. Reflecting the community's thirst for knowledge, O'Reilly released Part II of their series on building with LLMs, targeting operational challenges in LLM applications.

- Curating Conversational Context: The distinction between instruct-LLM and chat-LLM models was dissected with the former following clear instructions, and the latter mastering conversational context. Projects discussed ranged from an Alexa-like music player to a legal draft system and a chatbot for financial document summaries, indicating the range of possible implementations for fine-tuned LLMs.

- Modal Moves and Market Reach: Mediums like blogs played a vital role in spreading knowledge, with John Whitaker's blog becoming a go-to place for learning about things like Basement Hydroponics and LLM performance. Practitioners also shared gradient optimization tricks such as gradient checkpointing and agreed that sometimes the simplest explanations, like those from Johno's sessions, resonate best.

- Space for Spaces: Queries on how to change prompt styles for the alpaca format in Axolotl and Qwen tokenizer usage issues were discussed, with references pointing to specific GitHub configs. Meanwhile, deploying a Gradio-based RAG app sparked interest in using HF Spaces due to its ease of use.

- Credit Craze and Communal Connects: Moments of panic and clarification underscored the urgency of filling out credit forms, as emphasized in urgent announcements. Social gatherings and discussions ranged from SF eval meetups to Modal Labs hosting office hours in NYC, indicating robust community connections and knowledge-sharing events.

- Europe Engagement and Predibase Prospects: Check-ins from across Europe, such as Nuremberg, Germany and Milan, Italy, manifested the group's geographical span. Elsewhere, the mention of a 30-day free trial of Predibase offering $25 in credits reflected ongoing efforts to provide accessible finetuning and deployment platforms.

- Career Crossroads: From academia to industry, members shared experiences and encouraged one another in career transitions. The discussion showcased contracting as a viable pathway, with mentorship and perseverance identified as crucial for navigating the tech landscape where GitHub portfolios can serve as vital stepping stones.
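For readers following the BM25 thread above, here is a minimal sketch of Okapi BM25 scoring in pure Python. This is not the `rank_bm25` API. The class name, whitespace tokenizer, and defaults (`k1=1.5`, `b=0.75`) are assumptions for illustration, and the IDF uses the common non-negative variant `ln((N - n + 0.5) / (n + 0.5) + 1)`.

```python
import math
from collections import Counter


class BM25:
    """Toy Okapi BM25 index over a small in-memory corpus (illustrative only)."""

    def __init__(self, corpus, k1=1.5, b=0.75):
        self.k1, self.b = k1, b
        self.docs = [doc.lower().split() for doc in corpus]  # naive whitespace tokenizer
        self.doc_freqs = [Counter(d) for d in self.docs]
        self.avgdl = sum(len(d) for d in self.docs) / len(self.docs)
        n_docs = len(self.docs)
        # Document frequency: in how many documents each term appears.
        df = Counter(term for d in self.docs for term in set(d))
        self.idf = {t: math.log((n_docs - n + 0.5) / (n + 0.5) + 1.0) for t, n in df.items()}

    def score(self, query, index):
        freqs, length = self.doc_freqs[index], len(self.docs[index])
        # Length normalization: longer-than-average documents are penalized.
        norm = self.k1 * (1 - self.b + self.b * length / self.avgdl)
        total = 0.0
        for term in query.lower().split():
            f = freqs.get(term, 0)
            if f:
                total += self.idf[term] * f * (self.k1 + 1) / (f + norm)
        return total

    def rank(self, query):
        """Return document indices sorted from best to worst match."""
        scores = [(self.score(query, i), i) for i in range(len(self.docs))]
        return [i for _, i in sorted(scores, key=lambda p: -p[0])]


corpus = [
    "fine tuning llama with axolotl",
    "bm25 is a ranking function for keyword retrieval",
    "vector retrieval complements bm25 keyword search",
]
index = BM25(corpus)
ranking = index.rank("bm25 ranking")
print(ranking)
```

A production setup would want a real tokenizer and an inverted index. The point here is only that the scoring itself is a few lines, which makes it easy to pair with vector retrieval.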

These summaries encapsulate the detailed, often granular discussions among AI Engineers in the Discord guild, highlighting the collective endeavor to optimize LLM fine-tuning and deployment amidst pursuit of career growth and community building.

HuggingFace Discord

K2 Triumphs Over Llama 2: LLM360's K2 model outpaces Llama 2 70B, achieving better performance with 35% less computational effort; it's touted as fully-reproducible and is accessible under the Apache 2.0 license.

Numbers Are No Match for Positional Embeddings: Researchers cracked the nut on transformers' arithmetic abilities; with tailored positional embeddings, transformers reach a 99% accuracy on 100-digit sums, a monumental feat outlined in their paper.
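As a rough illustration of what "tailored positional embeddings" for arithmetic can mean, the sketch below assigns each digit a position index relative to the start of its own number, so digits of equal significance share an index across operands. This is our reading of the idea, not something the summary confirms. The function name and the least-significant-digit-first formatting are assumptions.

```python
def digit_position_ids(tokens):
    """Give each digit its 1-based offset within its number; non-digits get 0."""
    ids, run = [], 0
    for tok in tokens:
        if tok.isdigit():
            run += 1
            ids.append(run)
        else:
            run = 0  # an operator or '=' ends the current number
            ids.append(0)
    return ids


# 123 + 456 = 579, written least significant digit first (an assumed convention).
tokens = list("321+654=975")
position_ids = digit_position_ids(tokens)
print(list(zip(tokens, position_ids)))
```

These ids would index a learned embedding table in place of (or alongside) absolute positions, so the model can line up the ones digit of one operand with the ones digit of the other regardless of total length.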

NeurIPS Throws Down the Merging Gauntlet: With an $8,000 purse, the NeurIPS Model Merging Competition invites contenders to blend optimal AI models. Hugging Face, among others, sponsors this competition, more info in the announcement and competition website.

Data Dive: From 150K Datasets to Clothing Sales: A treasure trove of 150k+ datasets is now at engineers' fingertips for exploration with DuckDB, explained in a blog post, while a novel clothing sales dataset propelled the development of an image regression model which was then detailed in this article.

Learning Resources and Courses Amplify Skills: In the perpetually advancing field of AI, engineers can bolster their expertise through Hugging Face courses in Reinforcement Learning and Computer Vision, with more information accessible at Hugging Face - Learn.

Unsloth AI (Daniel Han) Discord

Quantization Quandaries and High-Efficiency Hardware: Unsloth AI guild members highlight challenges with the quantized Phi3 finetune results, noting performance issues without quantization tricks. NVIDIA's new 4nm research chip is generating buzz with its 96 int4 tera operations per second per watt (TOPs/Watt) efficiency, overshadowing Blackwell's 20T/W and reflecting industry-wide advancements in power efficiency, numerical representation, Tensor Cores' efficiency, and sparsity techniques.

Model Fine-Tuning and Upscaling Discussions: AI engineers share insights on fine-tuning strategies, including dataset merging, with one member unveiling an 11.5B upscale model of Llama-3 using upscaling techniques. An emerging fine-tuning method, MoRA, suggests a promising avenue for parameter-efficient updates.

Troubleshooting Tools and Techniques: Engineers confront various hurdles, from GPU selection in Unsloth (os.environ["CUDA_VISIBLE_DEVICES"]="0") and troubleshooting fine-tuning errors to handling dual-model dependencies and addressing VRAM spikes during training. Workarounds for issues like Kaggle installation challenges underscore the need for meticulous problem-solving.
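A small sketch of the GPU selection tip above. The one detail worth spelling out is ordering: the variable generally needs to be set before the first import of any CUDA-backed library, since device enumeration happens at initialization. The commented-out import marks where such a library would go.

```python
import os

# Set this before importing torch, unsloth, or anything else that initializes CUDA;
# changing it afterwards typically has no effect on the running process.
os.environ["CUDA_VISIBLE_DEVICES"] = "0"  # expose only physical GPU 0

# import torch  # CUDA-backed imports belong after the line above

visible = os.environ["CUDA_VISIBLE_DEVICES"].split(",")
print(f"Process will see {len(visible)} GPU(s), physical index {visible}")
```

Inside the process the selected device is renumbered from zero, so code that refers to device 0 keeps working whichever physical GPU was chosen.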

AI in Multiple Tongues: Ghost XB Beta garners attention for its capability to support 9+ languages fluently and is currently navigating through its training stages. This progress reaffirms the guild’s commitment to developing accessible, cost-efficient AI tools for the community, especially emphasizing startup support.

Communal Cooperative Efforts and Enhancements: Guild discussions reveal a collective push for self-deployment and community backing, with members sharing updates and seeking assistance across a spectrum of AI-related endeavors such as the Open Empathic project and Unsloth AI model improvements.

Perplexity AI Discord

- Tako Widgets Limited Geographic Scope?: Discussion around the Tako finance data widget raised questions about its geographic limitations, with some users unsure if it's exclusive to the United States.

- Perplexity Pro Trials End: Users talked about the discontinuation of Perplexity Pro trials, including the yearly 7-day option, spurring conversations around potential referral strategies and self-funded trials.

- Perplexity's Page-Section Editing Quirks: Some confusion arose around editing sections on Perplexity pages, where users can alter section details but not the text itself, a limitation confirmed by multiple members.

- Search Performance Trade-offs Noted: Users observed a slowdown in Perplexity Pro search, attributed to a new strategy that breaks queries down sequentially and, despite lower speeds, offers more detailed responses.

- Exploring Perplexity's New Features: Excitement was apparent as users shared links to newly introduced Perplexity Pages and discussions about Codestral de Mistral, hinting at enhancements or services within the Perplexity AI platform.

CUDA MODE Discord

- NVIDIA and Meta's Chip Innovations Generate Buzz: The community was abuzz with NVIDIA's revelation of a 4nm inference chip achieving 96 int4 TOPs/Watt, outperforming the previous 20T/W benchmark, and Meta unveiling a next-generation AI accelerator clocking in at 354 TFLOPS/s (INT8) with only 90W power consumption, signaling a leap forward in AI acceleration capabilities.

- Deep Dive into CUDA and GPU Programming: Enthusiasm surrounded the announcement of a FreeCodeCamp CUDA C/C++ course aimed at simplifying the steep learning curve of GPU programming. Course content requests emphasized the importance of covering GEMM for rectangular matrices and broadcasting rules pertinent to image-based convolution applications.

- Making Sense of Scan Algorithms and Parallel Computing: The community engaged in eager anticipation of the second part of a scan algorithm series. At the same time, questions were raised regarding practical challenges with parallel scan algorithms highlighted in the Single-pass Parallel Prefix Scan with Decoupled Look-back paper, as well as requests for clarification of CUDA kernel naming in Triton for improved traceability in kernel profiling.

- Strategies for Model Training and Data Optimization Shared: The conversation included a sharing of strategies on efficient homogeneous model parameter sharing to avoid inefficient replication during batching in PyTorch, and issues like loss spikes during model training which could potentially be diagnosed through gradient norm plotting. The idea of hosting datasets on Hugging Face was floated to facilitate access, with compression methods suggested to expedite downloads.

- Cross-Platform Compatibility and Community Wins Celebrated: Progress and challenges in extending CUDA and machine learning library compatibility to Windows were discussed, with acknowledgment of Triton's intricacies. Meanwhile, the community celebrated reaching 20,000 stars for a repository and shared updates on structuring and merging directories to enhance organization, strengthening the ongoing collaboration within the community.
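For context on the scan discussion above, here is a sequential Python sketch of a blocked inclusive prefix sum. It is the simpler multi-pass scheme (local scans, a scan over block totals, then an offset add), not the single-pass decoupled look-back algorithm from the paper. The function name and block size are illustrative assumptions.

```python
from itertools import accumulate


def blocked_inclusive_scan(values, block_size=4):
    """Inclusive prefix sum computed block by block, mirroring a multi-pass GPU scan."""
    blocks = [values[i:i + block_size] for i in range(0, len(values), block_size)]
    # Phase 1: independent local scans (on a GPU, one thread block each).
    local = [list(accumulate(b)) for b in blocks]
    # Phase 2: an exclusive scan over block totals yields each block's starting offset.
    totals = [b[-1] for b in local]
    offsets = [0] + list(accumulate(totals))[:-1]
    # Phase 3: add each block's offset to its local results.
    return [x + off for b, off in zip(local, offsets) for x in b]


data = [3, 1, 7, 0, 4, 1, 6, 3, 2, 5]
result = blocked_inclusive_scan(data)
print(result)
```

Phases 2 and 3 are the extra passes over memory that a single-pass design tries to avoid by having each block look back at its predecessors' published partial results instead.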

Stability.ai (Stable Diffusion) Discord

- Online Content Privacy Calls for Education: A participant emphasized the importance of not publishing content as a privacy measure and stressed the need to educate people about the risks of providing content to companies.

- Striving for Consistent AI Tool Results: Users noted inconsistencies when using ComfyUI compared to Forge, suggesting that different settings and features such as XFormers might influence results, despite identical initial settings.

- Strategies for Merging AI Models Discussed: Conversations revolved around the potential of combining models like SDXL and SD15 to enhance output quality, though ensuring consistent control nets across model phases remains crucial.

- Custom AI Model Training Insights Shared: Enthusiasts exchanged tips on training bespoke models, mentioning resources like OneTrainer and kohya_ss for Lora model training, and sharing helpful YouTube tutorials.

- Beginner Resources for AI Exploration Recommended: For AI newbies, starting with simple tools like Craiyon was recommended to get a feel for image generation AI, before progressing to more sophisticated platforms.

LM Studio Discord

GPU Blues with ROCm? Not Music to Our Ears: Engineers discussed GPU performance with ROCm, lamenting the lack of support for RX 6700 and old AMD GPUs like RX580, influencing token generation speeds and overall performance. Users seeking performance benchmarks on multi-GPU systems with models such as LLAMA 3 8B Q8 reported a 91% efficiency with two GPUs compared to one.

VRAM Envy: The release of LM Studio models ignited debates on VRAM adequacy, where the 4070's 12GB was compared favorably to the 1070's 8GB, especially concerning suitability for large models like "codestral."

CPU Constraints Cramp Styles: CPU requirements for running LM Studio became a focal point, where AVX2 instructions proved mandatory, leading users with older CPUs to use a prior version (0.2.10) for AVX instead.

Routing to the Right Template: AI engineers shared solutions and suggestions for model templates, such as using Deepseek coder prompt template for certain models, and advised checking tokenizer configurations for optimal formatting with models like TheBloke/llama2_7b_chat_uncensored-GGUF.

New Kids on the Block - InternLM Models: Several InternLM models designed for Math and Coding, ranging from 7B to a mixtral 8x22B, were announced. Models such as AlchemistCoder-DS-6.7B-GGUF and internlm2-math-plus-mixtral8x22b-GGUF were highlighted among the latest tools available for AI engineers.

OpenRouter (Alex Atallah) Discord

- Speed Boost to Global API Requests: OpenRouter has achieved a global reduction in latency, lowering request times by ~200ms, especially benefiting users from Africa, Asia, Australia, and South America by optimizing edge data delivery.

- MixMyAI Launches Unified Pay-as-You-Go AI Platform: A new service called mixmyai.com has been launched, consolidating open and closed-source models in a user-friendly interface that emphasizes user privacy and avoiding the storage of chats on servers.

- MPT-7B Redefines AI Context Length: The Latent Space podcast showcased MosaicML's MPT-7B model and its breakthrough in exceeding the context length limitations of GPT-3 as detailed in an in-depth interview.

- Ruby Developers Rejoice with New AI Library: A new OpenRouter Ruby client library has been released, along with updates to Ruby AI eXtensions for Rails, essential tools for Ruby developers integrating AI into their applications.

- Server Stability and Health Checks Called Into Question: OpenRouter users confronted sporadic 504 errors across global regions, with interim solutions provided and discussions leaning towards the need for a dedicated health check API for more reliable status monitoring.
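The summary does not say what the interim solutions for the 504 errors were. One common client-side mitigation is retrying with exponential backoff and jitter, sketched below. `GatewayTimeout`, `call_with_retries`, and `flaky_request` are hypothetical names, and the flaky function only simulates two failures before succeeding.

```python
import random
import time


class GatewayTimeout(Exception):
    """Hypothetical exception standing in for an HTTP 504 response."""


def call_with_retries(request_fn, max_attempts=4, base_delay=0.01, sleep=time.sleep):
    """Call request_fn, retrying on GatewayTimeout with exponential backoff plus jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return request_fn()
        except GatewayTimeout:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * 2 ** (attempt - 1)
            # Jitter spreads out retries so many clients do not hammer the API in lockstep.
            sleep(delay + random.uniform(0, delay))


attempts = {"count": 0}


def flaky_request():
    """Simulated request that times out twice, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise GatewayTimeout("504 Gateway Timeout")
    return {"status": 200}


response = call_with_retries(flaky_request)
print(response, "after", attempts["count"], "attempts")
```

Retries hide transient failures but do not tell you whether the service is healthy, which is presumably why a dedicated health check endpoint came up in the discussion.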

OpenAI Discord

Pro Privileges Propel Chat Productivity: Pro users of OpenAI now enjoy enhanced capabilities such as higher rate limits, and exclusive GPT creation, along with access to DALL-E and real-time communication features. The alluring proposition maintains its charm despite the $20 monthly cost, marking a clear divide from the limited toolkit available to non-paying users.

AI Framework Favorites Facilitate Functional Flexibility: The Chat API is recommended over the Assistant API for those developing AI personas with idiosyncratic traits, as it offers superior command execution without surplus functionalities such as file searching.

Bias Brouhaha Besieges ChatGPT: A suspension due to calling out perceived racism in ChatGPT's outputs opened up a forum of contention around inherent model biases, spotlighting the relentless pursuit of attenuating such biases amidst the ingrained nuances of training data.

Virtual Video Ventures Verified: Sora and Veo stand as subjects of a speculative spree as the guild contemplates the curated claims and practical potency of the pioneering video generation models, juxtaposed against the realities of AI-assisted video crafting.

API Agitations and Advancements Announced: Persistent problems presented by memory leaks causing lag and browser breakdowns mar the ChatGPT experience, triggering talks on tactical chat session limits and total recall of past interactions to dodge the dreariness of repetition. Meanwhile, the anticipated arrival of real-time voice and visual features in GPT-4 has been slated to debut in an Alpha state for a select circle, broadening over subsequent months as per OpenAI's update.

Nous Research AI Discord

NeurIPS Competition: Merge Models for Glory and Cash: NeurIPS will host a Model Merging competition with an $8K prize, sponsored by Hugging Face and Sakana AI Labs—seeking innovations in model selection and merging. Registration and more info can be found at llm-merging.github.io as announced on Twitter.

AI's Quest to Converse with Critters: A striking $500K Coller Prize is up for grabs for those who can demystify communication with animals using AI, sparking excitement for potential breakthroughs (info). This initiative echoes Aza Raskin's Earth Species Project, aiming to untangle interspecies dialogue (YouTube video).

Puzzling Over Preference Learning Paradox: The community is abuzz after a tweet highlighted unexpected limitations in RLHF/DPO methods—preference learning algorithms are not consistently yielding better ranking of preferred responses, challenging conventional wisdom and suggesting a potential for overfitting.
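To pin down what "ranking of preferred responses" measures, here is a minimal sketch of ranking accuracy: the fraction of preference pairs where the model assigns a higher log-probability to the chosen response than to the rejected one. The log-prob values are made up for illustration, and the function name is an assumption.

```python
def ranking_accuracy(pairs):
    """Fraction of (chosen_logp, rejected_logp) pairs ranked correctly by the model."""
    if not pairs:
        raise ValueError("need at least one preference pair")
    correct = sum(1 for chosen, rejected in pairs if chosen > rejected)
    return correct / len(pairs)


# Hypothetical sequence log-probabilities under a fine-tuned model.
pairs = [(-12.0, -15.5), (-20.1, -18.3), (-9.7, -9.9), (-30.0, -25.0)]
accuracy = ranking_accuracy(pairs)
print(f"ranking accuracy: {accuracy:.2f}")
```

A model can lower its preference loss while this number stays low, which is the kind of gap the tweet was pointing at.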

LLMs Reigning Over Real-Time Web Content: A revelation for web users: LLMs are often churning out web pages in real-time, rendering what you see as it loads. This routine faces hiccups with lengthy or substantial pages due to context constraints, an area ripe for strategic improvements.

Google Enhances AI-Driven Search: Google has upgraded its AI Overviews for US search users, improving both satisfaction and webpage click quality. Despite some glitches, they're iterating with a feedback loop, detailed in their blog post – AI Overviews: About last week.

LlamaIndex Discord

- Milvus Lite Elevates Python Vector Databases: The introduction of Milvus Lite offers a lightweight, efficient vector storage solution for Python, compatible with AI development stacks like LangChain and LlamaIndex. Users are encouraged to integrate Milvus Lite into their AI applications for resource-constrained environments, with instructions available here.

- Crafting Web Apps with Omakase: A new Django-based web app template facilitates the building of scalable Retrieval Augmented Generation (RAG) applications, complete with RAG API, data source management, and user access control. The step-by-step guide can be found here.

- Navigating Data Transfer for Retrieval Systems: For those prototyping retrieval systems, the community suggests creating an "IngestionPipeline" to efficiently handle data upserts and transfers between SimpleStore classes and RedisStores.

- Complexities in Vector Store Queries Assessed: The functionalities of different vector store query types like `DEFAULT`, `SPARSE`, `HYBRID`, and `TEXT_SEARCH` in PostgreSQL were clarified, with the consensus that both `text` and `sparse` queries utilize `tsvector`.

- Troubleshooting OpenAI Certificate Woes: Addressing SSL certificate verification issues in a Dockerized OpenAI setup, it was recommended to explore alternative base Docker images to potentially resolve the problem.

Eleuther Discord

-

Luxia Language Model Contamination Alert: Luxia 21.4b v1.2 has been reported to show a 29% increase in contamination on GSM8k tests over v1.0, as detailed in a discussion on Hugging Face, raising concerns about benchmark reliability.

-

Ready, Set, Merge!: NeurIPS Model Merging Showdown: A prize of $8K is up for grabs in the NeurIPS 2024 Model Merging Competition, enticing AI engineers to carve new paths in model selection and merging.

-

Cutting-Edge CLIP Text Encoder and PDE Solver Paradigm Shifts: Recognition is given for advancements in the CLIP text encoder methodology through pretraining, as well as the deployment of Poseidon, a new model for PDEs with sample-efficient and accurate results, highlighting papers on Jina CLIP and Poseidon.

-

Softmax Attention's Tenure in Transformers: A debate has crystallized around the necessity of softmax weighted routing in transformers, with some engineers suggesting longstanding use trumps the advent of nascent mechanisms like "function attention" that retain similarities to existing methodologies.

-

A Reproducibility Conundrum with Gemma-2b-it: Discrepancies emerged in attempts to replicate Gemma-2b-it's 17.7% success rate, with engineers turning to a Hugging Face forum and a Colab notebook for potential solutions, while results for Phi3-mini via lm_eval have proven more aligned with expected outcomes.

Modular (Mojo 🔥) Discord

-

Mojo Rising: Package Management and Compiler Conundrums: The Mojo community is awaiting updates on a proposed package manager, as per Discussion #413 and Discussion #1785. The recent nightly Mojo compiler version `2024.5.3112` brought fixes and feature changes, outlined in the raw diff and current changelog.

-

Ready for Takeoff: Community Meetings and Growth: The Mojo community looks forward to the next meeting featuring interesting talks on various topics with details available in the community doc and participation through the Zoom link.

-

The Proof is in the Pudding: Mojo Speeds and Fixes: A YouTube video demonstrates a significant speedup by porting K-Means clustering to Mojo, detailed here. The discovery of a bug in `reversed(Dict.items())` which caused flaky tests was rectified with a PR found here.

-

STEMming the Learning Curve: Educational Resources for Compilers: For learning about compilers, an extensive syllabus has been recommended, available here.

-

Stringing Performance Together: A more efficient string builder is proposed to avoid memory overhead, and the conversation leaned toward zero-copy optimizations along with using `iovec` and `writev` for better memory management.
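To make the `writev` idea concrete, here is a minimal sketch in Python rather than Mojo (an assumption made for illustration; it is not the proposed Mojo string builder). It uses the standard library's `os.writev`, which hands a list of buffers to the kernel in one gather-write call, so user code never builds a joined copy. The helper name `write_parts` and the pipe used as a destination are hypothetical, and `os.writev` is only available on Unix-like systems.

```python
import os


def write_parts(fd: int, parts: list[bytes]) -> int:
    """Write every buffer in `parts` with one gather-write call.

    No concatenated copy of the parts is built in user code.
    Returns the number of bytes the kernel reports as written.
    """
    return os.writev(fd, parts)


# Use a pipe as a stand-in destination so the sketch is self-contained.
read_fd, write_fd = os.pipe()
parts = [b"Hello", b", ", b"Mojo", b"!"]
written = write_parts(write_fd, parts)
os.close(write_fd)

received = os.read(read_fd, 1024)
os.close(read_fd)
```

A string builder built on this idea would keep its fragments in a list and defer any copying until the final write.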

LangChain AI Discord

-

Public Access for LangChainAPI: A request was made for a method to expose LangChainAPI endpoints publicly for LangGraph use cases, with an interest in utilizing LangServe but awaiting an invite.

-

LangGraph Performance Tuning: Discussions around optimizing LangGraph configurations focused on reducing load times and increasing the start-up speed of agents, indicating a preference for more efficient processes.

-

Memory and Prompt Engineering in Chat Applications: Participants sought advice on integrating summaries from "memory" into `ChatPromptTemplate` and combining `ConversationSummaryMemory` with `RunnableWithMessageHistory`. They shared tactics for summarizing chat history to manage the token count effectively, alongside relevant GitHub resources and LangChain documentation.

-

LangServe Website Glitch Reported: An error on the LangServe website was reported, together with sharing a link to the site for further details.

-

Prompt Crafting with Multiple Variables: Queries were made on how to structure prompts with several variables from the LangGraph state, providing a formulated prompt example and inquiries about variable insertion timing.

-

Community Projects and Tool Showcases: In the community work sphere, two projects were highlighted: a YouTube tutorial on creating custom tools for agents (Crew AI Custom Tools Basics), and an AI tool named AIQuire for document insights, which is available for feedback at aiquire.app.

LAION Discord

-

Fineweb Fires Up Image-Text Grounding: A promising approach called Fineweb utilizes Puppeteer to scrape web content, extracting images with text for VLM input—offering novel means for grounding visual language models. See the Fineweb discussion here.

-

StabilityAI's Selective Release Stirs Debate: StabilityAI's decision to only release a 512px model, leaving the full suite of SD3 checkpoints unpublished, incites discussion among members on how this could influence future model improvements and resource allocation.

-

Positional Precision: There's technical chatter regarding how positional embeddings in DiT models may lead to mode collapse when tackling higher resolution images, despite their current standard uses.

-

Open-Source Jubilation: The open-source project tooncrafter excites the community with its potential, although minor issues are being addressed, showcasing a communal drive towards incremental advancement.

-

Yudkowsky's Strategy Stirs Controversy: Eliezer Yudkowsky's institute published a "2024 Communication Strategy" advocating for a halt in AI development, sparking diverse reactions amongst tech aficionados. Delve into the strategy.

-

Merging Models at NeurIPS: NeurIPS is hosting a Model Merging competition with an $8K prize to spur innovation in LLMs. Interested participants should visit the official Discord and registration page.

-

RB-Modulation for Aesthetic AI Artistry: The RB-Modulation method presents a novel way to stylize and compose images without additional training, and members can access the project page, paper, and soon-to-be-released code.

OpenAccess AI Collective (axolotl) Discord

-

Yuan2 Model Sparks Community Interest: Members shared insights on the Yuan2 model on Huggingface, highlighting a keen interest in examining its training aspects.

-

Training Techniques Face-Off: Detailed discussions compared various preference training methodologies, spotlighting the ORPO method, which was suggested to supersede SFT followed by DPO, due to its "stronger effect". Supporting literature was referenced through an ORPO paper.

-

Challenging Model Fine-Tuning: Concerns emerged over struggles in fine-tuning models like llama3 and mistral for Spanish entity categorization. One instance detailed issues with model inference after successful training.

-

Members Seek and Offer Tech Aid: From installation queries about Axolotl and CUDA to configuring an early stopping mechanism using Hugging Face Accelerate library for overfitting issues, the guild members actively sought and rendered technical assistance. Shared resources included the axolotl documentation and the early stopping configuration guide.

-

Axolotl Configuration Clarifications: There was an advisory exchange regarding the proper configuration of the `chat_template` in Axolotl, recommending automatic handling by Axolotl to manage Alpaca formatting with Llama 3.

DiscoResearch Discord

-

DiscoLeo Caught in Infinite Loop: The incorporation of ChatML into DiscoLeo has resulted in an End Of Sentence (EOS) token issue, causing a 20% chance of the model entering an endless loop. Retraining DiscoLeo with ChatML data is proposed for resolution.

-

ChatML Favored for Llama-3 Template: German finetuning has shown a preference for using ChatML over the Llama-3 instruct template, especially when directed towards models like Hermes Theta that are already ChatML-based.

-

IBM’s “Granite” Models Spark Curiosity: Engineers are exploring IBM's Granite models, including Lab version, Starcode-based variants, and Medusa speculative decoding, with resources listed on IBM’s documentation and InstructLab Medium article.

-

Merlinite 7B Pit Against Granite: The Merlinite 7B model has garnered attention for its proficiency in German, vying for comparison with the IBM Granite models tracked under the Lab method.

-

Quality Concerns Over AI-Generated Data: The community indicated dissatisfaction with the quality of AI-generated data, illustrated by sub-par results in benchmarks like EQ Bench on q4km gguf quants, and showed interest in new strategies to enhance models without catastrophic forgetting.

Interconnects (Nathan Lambert) Discord

-

Google's Expansion Raises Eyebrows: A tweet has hinted at Google bolstering its compute resources, sparking speculation over its implications for AI model training capacities.

-

OpenAI Puts Robotics Back on Table: OpenAI reboots its robotics efforts, now hiring research engineers, as reported via Twitter and in a Forbes article, marking a significant re-entry into robotics since 2020.

-

Confusion Clouds GPT-3.5's API: Community members expressed frustration with the confusing documentation and availability narrative around GPT-3.5; some pointed out discrepancies in timelines and the inconvenience caused by deleted technical documentation.

-

Sergey's Call to Arms for Physical Intelligence: Nathan Lambert relayed Sergey's recruitment for a project in physical intelligence, signaling opportunities for those with interest in Reinforcement Learning (RL) to contribute to practical robot utilization.

-

'Murky Waters in AI Policy' Session Served Hot: The latest episode of the Murky Waters in AI Policy podcast dishes out discussions on California's controversial 1047 bill and a rapid-fire roundup of recent OpenAI and Google mishaps. Nathan Lambert missed the open house for the bill; no reasons were given.

Cohere Discord

- Cohere Pioneering AI Sustainability: Cohere was noted for prioritizing long-term sustainability over immediate grand challenges, with a focus on concrete tasks like information extraction from invoices.

- AGI Still Room to Grow: Within the community, it's agreed that the journey to AGI is just starting, and there's a continuous effort to understand what lies beyond the current "CPU" stage of AI development.

- Enhanced Server Experience with Cohere: The server is undergoing a makeover to simplify channels, add new roles and rewards, replace server levels with "cohere regulars," and introduce Coral the AI chatbot to enhance interactions.

- Express Yourself with Custom Emojis: For a touch of fun and to improve interactions, the server will incorporate new emojis, with customization options available through moderator contact.

- Feedback Wanted on No-Code AI Workflows: A startup is looking for insights on their no-code workflow builder for AI models, offering a $10 survey incentive—they're curious why users might not return after the first use.

Latent Space Discord

Adapter Layers Bridge the Gap: Engineers are exploring embedding adapters as a means to improve retrieval performance in AI models, with evidence showcased in a Chroma research report. The approach can be likened to frozen embeddings, which the Vespa team employs to avoid frequent embedding updates in dynamic systems (Vespa's blog insights).

ChatGPT Goes Corporate with PwC: The acquisition of ChatGPT Enterprise licenses by PwC for roughly 100,000 employees sparked debates around the estimated value of $30M/year, with member guesses on the cost per user ranging from $8 to $65 per month.
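As a quick check on the PwC numbers above, the sketch below works out the per-seat price implied by the estimated $30M/year across roughly 100,000 employees, and the annual totals implied by the low and high member guesses. All inputs are the rough figures quoted in the discussion, not confirmed contract terms.

```python
# Rough figures from the discussion; none of these are confirmed contract terms.
ANNUAL_CONTRACT_USD = 30_000_000
SEATS = 100_000

# Implied price per seat per month from the $30M/year estimate.
per_seat_per_month = ANNUAL_CONTRACT_USD / SEATS / 12

# Annual totals implied by the lowest and highest per-user guesses.
low_total = 8 * SEATS * 12
high_total = 65 * SEATS * 12

print(f"Implied price: ${per_seat_per_month:.2f} per seat per month")
print(f"Guess range implies ${low_total:,} to ${high_total:,} per year")
```

The implied $25 per seat per month falls inside the $8 to $65 range that members guessed.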

Google's Twin Stars: Gemini 1.5 Flash & Pro: Release updates for Google Gemini 1.5 Flash and Pro have been pushed to general availability, introducing enhancements such as increased RPM limits and JSON Schema mode (Google developers blog post).

TLBrowse Joins the Open Source Universe: TLBrowse, melding Websim with TLDraw, was open-sourced, allowing users to conjure up infinite imagined websites on @tldraw canvas, with access to a free hosted version.

AI Stack Devs (Yoko Li) Discord

-

Literary Worlds in Your Browser: Rosebud AI gears up for their "Book to Game" game jam, inviting participants to build games from literary works with Phaser JS. The event offers a $500 prize and runs until July 1st, details available through their Twitter and Discord.

-

Navigating the Digital Terrain: A new guild member expressed difficulties in using the platform on Android, describing the experience as "glitchy and buggy". They also sought help with changing their username to feel more at home within the virtual space.

OpenInterpreter Discord

- Check the Pins for Manufacturing Updates: It's crucial to stay on top of the manufacturing updates in the OpenInterpreter community; make sure to check the pinned messages in the #general channel for the latest information.

- Codestral Model Sparks Curiosity: Engineers have shown interest in the Codestral model with queries about its efficiency appearing in multiple channels; however, user experiences have yet to be shared. Additionally, it's noted that Codestral is restricted to non-commercial use.

- Combating Integration Challenges: There's a shared challenge in integrating HuggingFace models with OpenInterpreter, with limited success using the `interpreter -y` command. Engineers facing these issues are advised to seek advice in the technical support channel.

- Scam Alert Issued: Vigilance is essential as a "red alert" was issued about a potential scam within the community. No further details about the scam were provided.

- Android Functionality Discussions Ongoing: Members are engaged in discussions regarding O1 Android capability, specifically around installation in Termux, although no conclusive responses have been observed yet.

Mozilla AI Discord

- Llamafile Joins Forces with AutoGPT: AutoGPT member announced a collaboration to weave Llamafile into their system, expanding the tool's reach and capabilities.

- Inquiry into Content Blocks: Queries were made about whether Llamafile can handle content blocks within messages, seeking parity with an OpenAI-like feature; similar clarity was sought for llama.cpp's capabilities in this domain.

MLOps @Chipro Discord

- Netflix PRS Event Gathering AI Enthusiasts: AI professionals are buzzing about the PRS event at Netflix, with multiple members of the community confirming attendance for networking and discussions.

Datasette - LLM (@SimonW) Discord

-

Mistral 45GB Model Composition Speculations: Interest is brewing around the Mistral 45GB model's language distribution, with a hypothesis suggesting a strong bias towards English and a smaller presence of programming languages.

-

Codestral Compliance Conundrum: The community is engaging with the intricacies of the Mistral AI Non-Production License (MNPL), finding its restrictions on sharing derivative or hosted works underwhelming and limiting for Codestral development.

tinygrad (George Hotz) Discord

- TensorFlow vs. PyTorch Debate Continues: A user named helplesness asked why TensorFlow might be considered better than PyTorch, sparking a comparison within the community. The discussion did not provide an answer but is indicative of the ongoing preference debates among frameworks in the AI engineering world.

The LLM Perf Enthusiasts AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The YAIG (a16z Infra) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

LLM Finetuning (Hamel + Dan) ▷ #general (86 messages🔥🔥):

-

Guest Session on Transformer's Internals Requested: A member requested a session discussing Transformer architecture topics like vanilla transformer, RoPE, RMS Normalization, and more. Video resources on these topics were shared, but the member emphasized the need for interactive sessions for Q&A.

-

Google's Gemini Flash to Support Fine-Tuning: Starting June 17th, Google will allow free fine-tuning on Gemini Flash, with inference costs matching the base model rates. This was highlighted as a cost-effective opportunity for fine-tuning.

-

Cost Management for Production-Level Systems: There was an exchange about calculating the costs for production systems using RAG LLMs, focusing on GPU time utilization and third-party service trade-offs. The discussion emphasized the importance of experimenting with compute platforms and managing expectations based on usage scenarios.

-

GGUF Format for Fine-Tuning Models: GGUF format was recommended for fine-tuning LLMs to ensure compatibility with various tools in the ecosystem. A link to a detailed blog post on fine-tuning and inference steps was shared along with an update that Hugging Face is working on easier HF to GGUF conversions.

-

Document AI Developments: Multiple users discussed their experiences and challenges with document processing, such as invoices and utility bills. Techniques like OCR, LiLT models, segmentation, and using multimodal/multimodal-less approaches for extracting structured information were shared, along with links to resources and related papers.

LLM Finetuning (Hamel + Dan) ▷ #workshop-1 (10 messages🔥):

- User offers help with legal draft system: A member expressed willingness to assist another user with their "legal draft system". No further details were provided on the system or assistance needed.

- Alexa-like music player proposal: A member queried if fine-tuning would be suitable for a product resembling Alexa but not locked to Amazon Music. They suggested using LangGraph + function calling to interact with various music service APIs like YouTube and Spotify.

- Chatbot for financial document summaries: A member outlined a project to develop a chatbot capable of answering complex financial questions by summarizing financial documents. They indicated the necessity of RAG and a form of PO for generating user-preferenced summaries.

- Fine-tuning LLM for Cypher/GQL translation: One user intends to fine-tune an LLM to translate natural language questions into Cypher/GQL. They noted that this could greatly enhance interaction with graph data.

- Discussion on instruct-LLM vs. chat-LLM: An extensive discussion debated the models' distinctions, focusing on the training and evaluation differences. Users noted that while newer models blur these lines, instruct models follow clear instructions and chat models handle conversational context.

LLM Finetuning (Hamel + Dan) ▷ #asia-tz (1 messages):

blaine.wishart: Hi everyone...I'm on Hainan for the next 3 months.

LLM Finetuning (Hamel + Dan) ▷ #🟩-modal (18 messages🔥):

- Deployed model next steps: After deploying the v1 finetuned model, a member sought guidance on using it further. This documentation was suggested to understand usage and invoking deployed functions on the Modal platform.

- Modal credits hiccups resolved: Several questions about Modal credits were answered, including one user reporting lost credits and another asking about expiration. Modal credits expire after one year, but active users can contact support to potentially roll over credits, and users doing academic research or involved in startups can get additional credits.

- Troubleshooting dataset issues: A member faced a "KeyError: 'Input'" while working with a specific training data file. It was recommended to check the dataset's format consistency and ensure the correct field keys match what's defined in the config.
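The format-consistency check suggested for the `KeyError: 'Input'` case can be sketched as a small scan over a JSONL file. This is a hedged illustration, not the member's actual setup: the helper `find_missing_keys`, the `Output` field, and the sample records are hypothetical, and only the `Input` key comes from the reported error.

```python
import json


def find_missing_keys(jsonl_text: str, required: set[str]) -> list[tuple[int, set[str]]]:
    """Return (line_number, missing_keys) for every record lacking a required key."""
    problems = []
    for line_no, line in enumerate(jsonl_text.splitlines(), start=1):
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        missing = required - set(record)
        if missing:
            problems.append((line_no, missing))
    return problems


# Hypothetical sample: the second record uses a lowercase "input" key.
sample = "\n".join([
    '{"Input": "2+2", "Output": "4"}',
    '{"input": "3+3", "Output": "6"}',
])
problems = find_missing_keys(sample, {"Input", "Output"})
for line_no, missing in problems:
    print(f"line {line_no}: missing {sorted(missing)}")
```

Key names are case-sensitive, so a single record with `input` instead of `Input` is enough to trigger the error when the config expects `Input`.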

LLM Finetuning (Hamel + Dan) ▷ #learning-resources (9 messages🔥):

-

AI-Coding Humble Bundle Alert: A member informed the channel about an AI-coding humble bundle, but expressed skepticism about the content quality, noting "First skim does not look great." Another member added that generating such materials independently could be cheaper.

-

Sebastian Raschka’s Chapter on LLM Fine-Tuning: A link to Sebastian Raschka’s chapter on finetuning LLMs for classification in his upcoming book was shared, outlining topics like different finetuning approaches, dataset preparation, and accuracy evaluation for spam classification.

-

O'Reilly Releases Part II on LLM Building: Following positive feedback on Part I, O'Reilly fast-tracked the release of Part II of their series on building with LLMs, shifting focus from tactical to operational aspects of building LLM applications, and noting challenges worth addressing.

LLM Finetuning (Hamel + Dan) ▷ #langsmith (4 messages):

-

Langsmith-HIPAA Compatibility Inquiry: A member inquired whether Langsmith offers paid plans supporting HIPAA environments, mentioning the need for handling PII/PHI securely and the necessity of a Business Associate Agreement (BAA) in place.

-

Langsmith Compatibility with OpenAI Models: Another user asked if Langsmith can be used with OpenAI-compatible models like Mixtral, or any models following the same API standards, such as Anthropic.

-

Langsmith Connecting to various Models: Lucas shared insights on using Langchain and Langsmith with Meta's Llama-3:8b via Ollama and highlighted Langchain’s integration with Together AI. The detailed steps and code snippets for using Together AI can be found in Lucas's blog post.

LLM Finetuning (Hamel + Dan) ▷ #kylecorbitt_prompt_to_model (1 messages):

- Seeking advice on processing LinkedIn profile data: A user asked for the best approach to processing data from 5K scraped LinkedIn profiles with 20+ columns. They aim to build a fine-tuned model to generate personalized introduction lines using OpenPipe with GPT-4, and later fine-tune a llama-3-8b model.

LLM Finetuning (Hamel + Dan) ▷ #berryman_prompt_workshop (3 messages):

-

Deck from the talk now accessible: A member asked if the deck from a recent talk was available. Another provided a Discord link that also includes a link to the slides.

-

Insightful prompt crafting resource shared: A member highlighted ExplainPrompt as a valuable resource. The site is maintained by a GitHub colleague who posts summaries and visual guides about prompt crafting techniques based on the latest papers.

Link mentioned: ExplainPrompt

LLM Finetuning (Hamel + Dan) ▷ #whitaker_napkin_math (268 messages🔥🔥):

-

Begin your LLM adventure on Johno's blog: Members were excited to share John Whitaker's valuable content. Check out his blog featuring insightful articles like More=Better?, mini-hardware projects on Basement Hydroponics, and tips on high-surface-area problems.

-

Quadcopter crash avoided by profile optimization: GitHub links such as fsdp_qlora benchmarks and does LoRA cause memory leaks were posted. These references enhance knowledge about LLM training, memory leak issues, and their practical resolutions.

-

Johno shares LoRA wisdom: Discussions included practical tips around LoRA's functionality, such as approximating large matrices with smaller ones, and considerations for LoRA ranks (N x r). Useful for those fine-tuning models while optimizing resource efficiency.

-

The napkin maestro redefines simplicity: Johno's clear and effective teaching style captivated attendees, leading to calls for more in-depth sessions. "He knows how to teach and explain things really well," one member noted, urging further opportunities to learn from him.

-

Unlock the power of gradient tricks: Members shared advanced techniques like gradient checkpointing and splitting the gradient calculation to optimize memory and speed. Hyperlinks to Twitter, GitHub, and Google Docs were passed around for further reading and exploration.
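In the napkin-math spirit of the session, the LoRA point about approximating a large matrix with two smaller ones can be put in numbers. The sketch below counts trainable parameters for a full weight update versus a rank-r pair of factors. The 4096 x 4096 layer size and rank 8 are illustrative assumptions, not values from the talk, and `lora_param_counts` is a hypothetical helper.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Compare trainable parameters: full update vs. low-rank factors.

    A full update to a (d_out x d_in) weight trains d_out * d_in values.
    LoRA instead trains B (d_out x rank) and A (rank x d_in), whose product
    B @ A has the same shape as the full update.
    """
    full = d_out * d_in
    lora = d_out * rank + rank * d_in
    return full, lora


# Illustrative sizes only (assumed, not from the session).
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
ratio = lora / full

print(f"full update: {full:,} params")
print(f"LoRA rank 8: {lora:,} params ({ratio:.2%} of full)")
```

Doubling the rank doubles the trainable parameter count, which is the trade-off behind the rank considerations mentioned above.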

LLM Finetuning (Hamel + Dan) ▷ #workshop-2 (7 messages):

-

Building Internal Tool for Compliance: A member is developing an internal tool that converts inputs like "CloudTrail should have encryption at-rest enabled" into multiple files, including rego files compliant with a specific company schema. They are assessing whether the system's 66% accuracy is due to the retrieval method and considering fine-tuning the model for schema and code logic improvements.

-

Challenges in Rule Retrieval and Accuracy: The tool currently retrieves entire documents for context, potentially overwhelming the model. Issues include code compilation errors, incomplete code, hallucinations, and incorrect logic, with considerations on whether fine-tuning could improve its adherence to schema.

-

Text Classification in Spanish Entities: A member is refining a model to categorize Spanish text entities into persons, companies, or unions but faces poor performance during inference. They outline the multi-step instructions used for classification and seek advice on improving model accuracy.

-

Maintaining Template Alignment in Fine-Tuning: For multi-turn chat applications, there's a discussion on whether adhering to the official chat template is crucial when fine-tuning models to retain general utility without starting from scratch. A member assumes alignment is beneficial but looks for community confirmation.

LLM Finetuning (Hamel + Dan) ▷ #abhishek_autotrain_llms (57 messages🔥🔥):

-

AutoTrain Simplifies AI Model Creation: A member shared links to AutoTrain, emphasizing its user-friendly approach to creating powerful AI models without code. AutoTrain handles a variety of tasks including LLM Finetuning, text and image classification, and is integrated with the Hugging Face Hub for easy deployment.

-

Clarification on ORPO and SimPO: Discussion surrounded ORPO, described as "odds ratio preference optimization" akin to DPO but without a reference model, and SimPO, with participants noting its promising aspects despite being very new and possibly subject to "buzz".

-

Challenges Without Nvidia GPUs: Members discussed the impracticality of training AI models without Nvidia GPUs, lamenting the slow performance of CPUs and the lack of support for other GPU brands in AI libraries.

-

Dataset and Optimizer Queries: Participants requested more details on setting up datasets for RAG and customizing optimizer functions for AutoTrain, suggesting these questions be raised in a Zoom Q&A for detailed responses.

-

Gratitude and Additional Resources: The session ended with multiple users expressing thanks to Abhishek for the presentation on AutoTrain and sharing additional resources, including a GitHub repo for AutoTrain Advanced and various configuration guides.

LLM Finetuning (Hamel + Dan) ▷ #clavie_beyond_ragbasics (3 messages):

-

Love/Hate Relationship with Single Vector Embeddings: Although single-vector embeddings are useful for prototyping, they fall short of a full retrieval pipeline. ColBERT surpasses single-vector methods in out-of-domain tasks due to its token-level encodings, which provide richer information for OOD scenarios (Vespa blog on ColBERT).

-

Sparse Embeddings and M3 Mentioned Briefly: The discussion will briefly touch on sparse embeddings and M3, focusing primarily on the advantages and limitations of single-vector embeddings for retrieval pipelines.

-

ColBERT's Detailed Output: Unlike single-vector methods that pool everything into a 1024-dimensional vector per document, ColBERT produces numerous 128-dimensional vectors, one per token, resulting in a higher dimensional output for more detailed information processing. For instance, 500 documents with 300 tokens each yield an output of shape `500,300,128`.
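The storage implication of those shapes can be worked out directly. The sketch below compares the number of stored values for the single-vector case and the ColBERT case using the figures above; the float32 storage size is an assumption added for illustration, and real deployments often compress these vectors.

```python
from math import prod

# Shapes taken from the example above.
single_vector_shape = (500, 1024)   # one 1024-d vector per document
colbert_shape = (500, 300, 128)     # one 128-d vector per token

single_values = prod(single_vector_shape)
colbert_values = prod(colbert_shape)

# Assumption: uncompressed float32 storage (4 bytes per value).
BYTES_PER_VALUE = 4
single_mb = single_values * BYTES_PER_VALUE / 1e6
colbert_mb = colbert_values * BYTES_PER_VALUE / 1e6

print(f"single-vector: {single_values:,} values, {single_mb:.1f} MB")
print(f"ColBERT:       {colbert_values:,} values, {colbert_mb:.1f} MB")
print(f"ratio: {colbert_values / single_values:.1f}x")
```

Under these assumptions the token-level representation stores 37.5 times as many values, which is the cost of the richer per-token information.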

Link mentioned: Announcing the Vespa ColBERT embedder: Announcing the native Vespa ColBERT embedder in Vespa, enabling explainable semantic search using token-level vector representations

LLM Finetuning (Hamel + Dan) ▷ #jason_improving_rag (1 messages):

- Search for a reliable BM25 implementation: A user is looking for a BM25 ranking method to mix with vector retrieval and mentions the Python package `rank_bm25`. They express surprise that it doesn't use sklearn tokenizers/vectorizers or handle n-grams, stop words, or stemming in creating the vocabulary, asking what others are using.
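To show where tokenization choices enter a BM25 ranker, here is a minimal standard-library sketch of Okapi BM25 with a caller-controlled tokenizer. It is not the `rank_bm25` implementation: the stop-word list, the `tokenize` and `bm25_scores` helpers, the sample documents, the defaults `k1=1.5` and `b=0.75`, and the non-negative IDF variant `log(1 + (N - n + 0.5) / (n + 0.5))` are all assumptions chosen for illustration.

```python
import math
import re
from collections import Counter

# Illustrative stop-word list; a real pipeline would use a fuller one.
STOP_WORDS = {"the", "a", "an", "of", "and", "is", "to", "in", "for", "with"}


def tokenize(text: str) -> list[str]:
    """Lowercase, split on non-alphanumerics, and drop stop words."""
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOP_WORDS]


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(toks) for toks in tokenized) / n_docs
    # Number of documents containing each term.
    doc_freq = Counter(term for toks in tokenized for term in set(toks))

    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores


docs = [
    "The cat sat in the hat",
    "Vector retrieval with dense embeddings",
    "BM25 is a sparse ranking function for retrieval",
]
scores = bm25_scores("sparse retrieval ranking", docs)
best = max(range(len(docs)), key=scores.__getitem__)
```

Stemming or n-grams would slot into `tokenize` in the same way, and the resulting scores could then be combined with vector-retrieval scores for hybrid ranking.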

LLM Finetuning (Hamel + Dan) ▷ #gradio (6 messages):

-

Mitch dives into Gradio fine-tuning: A user shared their Gradio fine-tuning project on GitHub, aiming to generate a quality dataset to leverage Gradio’s latest features. They mentioned following advice from an earlier course to learn by contributing to open-source projects.

-

Question realism concerns: Another member pointed out that the LLM-generated questions in the dataset might not reflect realistic user queries. They suggested referencing more concrete questions, providing a specific example, and adding few-shot examples to the prompt.

-

Experimenting with RAG: A user admitted to not trying Retrieval-Augmented Generation (RAG) before diving into fine-tuning, acknowledging that a strong prompt sometimes surpasses fine-tuning efforts. They are considering integrating RAG into their workflow to enhance question-answer generation.

-

Value of data generation: Members exchanged views on the importance of data generation and collection in fine-tuning projects. One noted the process as the "secret sauce," showing excitement about the progress and potential of this Gradio fine-tuning venture.

LLM Finetuning (Hamel + Dan) ▷ #axolotl (7 messages):

-

Axolotl not logging to WandB during training: A user reported that while running locally, initial training metrics are logged to WandB, but nothing updates as training progresses. They suspect it might be related to changes in their configuration file, mentioning, "the step number restarts after the 2nd step... the metrics reported after the first step 0 are the only metrics I ever see logged."

-

Override datasets in axolotl CLI: A user inquired whether it's possible to override the datasets (path and type) when calling `axolotl.cli.train` from the command line. No solutions were provided in the message thread.

-

Installation issue on Apple M3 Max with axolotl: A user on an Apple M3 Max reported an error when running `pip3 install -e '.[flash-attn,deepspeed]'`. They also posted a screenshot of the error but had not received any responses yet.

-

Creating instruction-style prompt templates: A user asked for help setting up a prompt template in axolotl so that it uses a system message from their dataset instead of the preamble. They mentioned struggling with this issue for a couple of hours and sought advice.

LLM Finetuning (Hamel + Dan) ▷ #zach-accelerate (9 messages🔥):

-

Loading Large Shards With Accelerate Is Painful: One member inquired about speeding up "loading shards with accelerate" as it "takes quite some time for 70b". Another jokingly suggested getting "a faster hard drive" and warned about the upcoming Llama 400B with weights "near 1TB in size".

-

Unsloth vs Accelerate Shard Loading: Members discussed how unsloth can save 4bit models and load them in 6 shards, suggesting a similar approach for accelerate. However, one noted that the delay in loading times is likely related to quantization rather than hard drive speed or mere disk read times.
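The "near 1TB" remark about a 400B-parameter model, and the appeal of saving 4-bit weights, both follow from simple size arithmetic. The sketch below is a rough estimate under stated assumptions: round parameter counts of 70B and 400B, decimal gigabytes, and no allowance for file-format overhead or quantization metadata. The helper `weight_size_gb` is hypothetical.

```python
def weight_size_gb(n_params: int, bytes_per_param: float) -> float:
    """Approximate on-disk weight size in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9


# Bytes per parameter for common storage precisions.
PRECISIONS = {"fp16/bf16": 2, "int8": 1, "4-bit": 0.5}

# Assumed round parameter counts for illustration.
MODELS = {"70B": 70_000_000_000, "400B": 400_000_000_000}

sizes = {
    (model, precision): weight_size_gb(n_params, nbytes)
    for model, n_params in MODELS.items()
    for precision, nbytes in PRECISIONS.items()
}

for (model, precision), gb in sizes.items():
    print(f"{model} @ {precision}: ~{gb:,.0f} GB")
```

At 16-bit precision the 400B estimate lands around 800 GB, consistent with the "near 1TB" warning, while 4-bit storage cuts each figure to a quarter.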

LLM Finetuning (Hamel + Dan) ▷ #wing-axolotl (6 messages):

-

Qwen tokenizer debugging insights shared: After extensive debugging and communication with the Qwen team, it was determined that PreTrainedTokenizer is correct and using Qwen2Tokenizer might cause issues. This issue stems from differences in how LLamaFactory and Axolotl handle `get_train_dataloader` calls (Huggingface transformers trainer; Axolotl trainer builder).

-

Adjusting prompt styles in Axolotl: A member inquired about setting different prompt styles for the alpaca format in Axolotl. Another member suggested using the `chat_template: chatml` configuration to change the prompt format as per the dataset requirements (Axolotl prompters).

LLM Finetuning (Hamel + Dan) ▷ #freddy-gradio (2 messages):

- Deploy Gradio RAG app easily: A user asked for the easiest way to deploy a simple Python script for a Gradio-based RAG app so that a small group of users can test it out. Another user recommended using HF Spaces for the deployment.

- Concerns about "share=true" functionality: The same user expressed curiosity about whether using "share=true" in the launch method sends their code to be stored on a Gradio server. There were no additional responses to this query in the messages provided.

LLM Finetuning (Hamel + Dan) ▷ #charles-modal (12 messages🔥):

- Charles Frye praises community support: Charles thanked the members who posted event links, highlighting specific users. "Thanks so much to the folks who posted the links i mentioned in this chat! y'all are the best."

- Anticipation and OLAP orientation: Charles expressed excitement about future discussions and noted their system is "much more oriented to read-heavy OLAP than write-heavy OLTP."

- Recording of Office Hours: Modal with Charles Frye missing: A user asked about the recording of the event, noting that the course page still had the "join event" link. Other members confirmed the issue, and Dan fixed it, stating, "Should be fixed now."

LLM Finetuning (Hamel + Dan) ▷ #langchain-langsmith (70 messages🔥🔥):

- LangChain Tools Demystified: A discussion on the differences between LangChain, LangSmith, LangGraph, LangFlow, and LangServe clarified that LangChain is a framework for developing applications using LLMs, LangSmith is for inspecting and optimizing chains, and LangServe turns any chain into an API. How LangFlow and LangGraph relate to the framework was left less clear (LangChain Intro).

- LangServe Praised, LangFlow Not Directly Related: LangServe was highlighted as a favorite tool for turning chains into APIs. Several users clarified that LangFlow is not part of the LangChain suite, although it is built on the LangChain framework.

- Infrastructure and Deployment Talks: Members wanted more granular controls within LangServe and expressed frustration with its API documentation. The discussion also touched on using OpenAI's batch API for synthetic data generation and on how much there is to learn about GPU optimization and fine-tuning algorithms.

- Generative UI Hype: Members discussed new developments like GenUI for improving consumer understanding of AI, with a notable focus on generative UI examples from CVP at W&B and a Generative UI GitHub template.

- Blog Post on LangChain & LangSmith: A user shared a blog post detailing their experience using LangChain and LangSmith with LLama3:8b on Ollama and Jarvislabs, prompting others to share it on social media for broader visibility.
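For the batch-API idea mentioned above, the sketch below builds the JSONL input file that OpenAI's batch endpoint expects, with one request object per line. The field layout reflects the documented batch input format as best understood here and should be checked against the current docs; the helper name, the `custom_id` scheme, the prompts, and the model name are all placeholders. Uploading the file and creating the batch are left out.

```python
import json


def build_batch_lines(prompts, model="gpt-3.5-turbo"):
    """Return one JSON string per prompt, ready to be written as a .jsonl file."""
    lines = []
    for i, prompt in enumerate(prompts):
        request = {
            "custom_id": f"synthetic-{i}",  # hypothetical ID scheme for matching results
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,  # placeholder model name
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        lines.append(json.dumps(request))
    return lines


prompts = [
    "Write a short customer question about a late delivery.",
    "Write a short customer question about a refund.",
]
print("\n".join(build_batch_lines(prompts)))
```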

LLM Finetuning (Hamel + Dan) ▷ #allaire_inspect_ai (4 messages):

LLM Finetuning (Hamel + Dan) ▷ #credits-questions (12 messages🔥):

- Confusion over Registration Deadlines: There was some confusion regarding the deadline for submitting forms for $3,500 in compute credits, with Hamel Husain's tweet indicating May 29, while email communications suggested May 30.

- Last-Minute Form Submission: Members were concerned about submitting forms by the deadline, but it was clarified that the deadline was midnight to provide 24hrs of leeway for those who signed up the previous night.

- Credit Allocation Delays: Users worried about delays in credit grants from the Modal forms, and Charles explained that the slight delay was due to human review taking time.

- Predibase Registration Issue Solved: Michael Ortega informed users that Predibase had removed the restriction on Gmail addresses for account creation and encouraged users facing issues to contact support.

- Notification of Credits: It was clarified that credits would be reflected directly in users' accounts on the respective platforms and that the allocation might take until the middle of next week due to varying vendor responsiveness.

Link mentioned: Tweet from Hamel Husain (@HamelHusain): The $3,500 in compute credits end TODAY. We won't be able to give them out after 11:59 PM PST 5/29/2024 Quoting Eugene Yan (@eugeneyan) PSA: Signups for LLM-conf + finetuning workshop close to...

LLM Finetuning (Hamel + Dan) ▷ #west-coast-usa (1 messages):

- Evals Gathering in SF: A member announced a gathering with 50 or so folks at their co-op in the Mission, SF, to discuss evaluations this Sunday. They asked interested parties to DM them for an invite and provide a social account for verification.

LLM Finetuning (Hamel + Dan) ▷ #east-coast-usa (4 messages):

- Hopeful Registration for Private Event: One member expressed excitement about registering for an upcoming event and is hopeful for acceptance.

- Call for DC Meetup: Another member suggested organizing a meetup event in Washington, DC.

- Chicago's Geographical Dilemma: A member highlighted that Chicago feels closer to the East Coast and inquired about the possibility of creating a midwest-usa channel.

- Modal Labs Hosting Office Hours in NYC: Modal Labs is hosting an office hours session at their HQ in SoHo, NYC. Details for registration, location, and the event schedule are listed in the event link, open to those verifying token ownership with their wallet.

Link mentioned: [NYC] Modal Office Hours · Luma: Have questions about your Modal deployment or just want to learn more? Come by our first office hours in NY! Even if you don't have a particular question in…

LLM Finetuning (Hamel + Dan) ▷ #europe-tz (4 messages):

- Users share their locations across Europe: Members are checking in from different parts of Europe. One user mentioned being in Nuremberg, Germany, another from Milan, Italy, a third from Munich, Germany, and a final one from Linz, Austria.

LLM Finetuning (Hamel + Dan) ▷ #announcements (1 messages):

- Last Reminder for Credit Forms: "THIS IS YOUR LAST REMINDER FOR CREDITS! If you not fill out the forms within the next EIGHT HOURS you will NOT BE GRANTED ACCESS TO ANY OF THE CREDITS YOU HAVE AVAILABLE TO YOU! YOU CAN FILL THEM AGAIN JUST IN CASE." Members are urged to fill out credit forms immediately to ensure access.

LLM Finetuning (Hamel + Dan) ▷ #predibase (2 messages):

- Predibase offers a free trial: Users were encouraged to sign up for a 30-day free trial of Predibase, which includes $25 of credits. Predibase allows for fine-tuning and deploying open-source models on a scalable cloud infrastructure.

- Inquiry about selecting checkpoints in Predibase: A user asked if it's possible to try different checkpoints after fine-tuning a model, referencing Predibase's documentation that "The checkpoint used for inference will be the checkpoint with the best performance (lowest loss) on the evaluation set." The user used Predibase to fine-tune a L3 70B model on a small ~200 record dataset.
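The selection rule quoted from the documentation (lowest loss on the evaluation set) can be sketched in a few lines. This is not Predibase's API; the `Checkpoint` class, the helper, and the loss values are made up purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    step: int
    eval_loss: float


def best_checkpoint(checkpoints):
    """Pick the checkpoint with the lowest evaluation loss."""
    return min(checkpoints, key=lambda c: c.eval_loss)


# Made-up values: the loss improves, then gets slightly worse at the last step.
history = [Checkpoint(50, 1.92), Checkpoint(100, 1.41), Checkpoint(150, 1.47)]
print(best_checkpoint(history))
```

With a dataset of only about 200 records the evaluation split is small, so the lowest-loss checkpoint may not be the one that behaves best in practice, which is presumably why the user wanted to try others.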

Link mentioned: Request Free Trial: Try Predibase for free today - Sign up for your trial

LLM Finetuning (Hamel + Dan) ▷ #career-questions-and-stories (8 messages🔥):

- Academic to Industry Transition Troubles: A user with a Ph.D. in philosophy and cognitive science shared their experience of shifting from academia to data science and software engineering. They expressed the challenge of finding new learning opportunities outside a small academic lab and the difficulty of choosing a path in AI given their broad interests and family obligations.

- Contracting as a Lucrative Option: Another member shared their positive experiences with contracting, explaining that it offers exposure to diverse problems and cultures. They highlighted the benefits of having the flexibility to choose projects and the possibility of getting long-term offers from organizations if performance is good.

- Industry Job Rejections Part of the Process: A user advised that breaking into the industry may involve many rejections and possibly taking an unappealing first job. They emphasized the importance of building a resume and GitHub portfolio to make future job applications easier and more successful.

- Transition from Academia to Tech Roles: One member recounted their move from academia to industry, starting with an internship at a tech startup and eventually holding various roles like sales engineer and product manager. They stressed the difficulty of finding opportunities that don't require a decrease in quality of life, especially with current economic challenges.

- Encouragement and Offer to Help: Several users encouraged the original poster and others in similar situations, offering support and emphasizing the importance of perseverance. "If there's anything I can do to help, hit me up. I'm all about people lifting each other up."

LLM Finetuning (Hamel + Dan) ▷ #openai (1 messages):

rubenamtz: 👀 , credits are still cooking?

HuggingFace ▷ #announcements (10 messages🔥):

- GPT-powered PDF Chats using llama.cpp and Qdrant: Check out the everything-ai project, which now supports llama.cpp and Qdrant, enabling users to chat with their PDFs. It was called the "coolest community news" and was well received by community members.

- Codestral-22B Quantization and Nvidia's New Model Demo: The quantized version of Mistral's model, Codestral-22B-v0.1-GGUF, has been highlighted. Nvidia's embedding model demo adds to the AI applications shared this month.

- SD.Next and BLIP Dataset Innovations: The SD.Next release is praised for its new UI and high-res generation capabilities. Additionally, the BLIP dataset was developed using Clotho, adding to the growing dataset collection.

- New Tools and Plugins Galore: From the OSS voice-controlled robotic arm YouTube video to the Falcon VLM demo, multiple utilities and demos are shared. These include free-to-use calligraphy datasets, better transcription apps, and visual 3D protein analysis tools.

- Community Events and Engagement: Community events, such as coding sessions and discussions about AI projects and community-led news, have been noted for their value. Several community members appreciate these highlights for keeping them up to date with the latest advancements.

HuggingFace ▷ #general (415 messages🔥🔥🔥):

- Confusion Over Data Formatting and Tokens: There was an ongoing discussion about the correct format of chatbot training data. Examples like `"<|user|>Do you enjoy cooking?</s><|assistant|>I can bake, but I'm not much of a cook.</s></s>"` led to confusion, prompting questions about whether two `</s>` tokens are necessary.

- Billing Issues Cause Uproar: A user named Tensorrist expressed urgent distress over a $100 charge for Hugging Face services, claiming they never used the service. Attempts to direct them to contact support at [email protected] seemed to escalate into a heated back-and-forth.

- Model Merging Competition at NeurIPS: An announcement was shared about a model merging competition at NeurIPS, offering a prize pool of $8K. The community was encouraged to participate and revolutionize model selection and merging (link).

- Blogpost Discussions: Multiple users discussed creating tutorial blog posts, particularly focusing on fine-tuning specific models like TinyLlama and Mistral. One user requested help in avoiding overwriting their README every time they push to the hub.

- Questions About Fine-Tuning with Specific Data: Questions were asked about fine-tuning large language models on unique datasets, such as using RDF dumps from Wikipedia or multi-modal models with only text data. Responses suggested technical methods and directed users to the proper channels for more engagement.
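On the data-format question in the first item above, the sketch below shows one way to build such training strings with exactly one end-of-sequence token per turn. The thread did not settle whether a second `</s>` is needed, so the one-per-turn convention is an assumption here, and the helper name is hypothetical; the right answer depends on the chat template the target model was trained with.

```python
EOS = "</s>"


def format_turns(turns):
    """Join (role, text) pairs, closing each turn with a single EOS token."""
    return "".join(f"<|{role}|>{text}{EOS}" for role, text in turns)


sample = format_turns([
    ("user", "Do you enjoy cooking?"),
    ("assistant", "I can bake, but I'm not much of a cook."),
])
print(sample)
print("EOS tokens:", sample.count(EOS))
```

Counting EOS occurrences like this is a quick way to spot a doubled token, which can also appear when a tokenizer or training framework is configured to append its own EOS on top of one already present in the text.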

HuggingFace ▷ #today-im-learning (3 messages):

- Queries about Unit 1's identity: Members discussed "Unit 1", prompting a question about whether it refers to a course on Hugging Face. This was clarified with information about the available courses, including Reinforcement Learning and Computer Vision.

- Reinforcement Learning Course: It was specified that one of the courses offered is a Reinforcement Learning course where participants train Huggy the dog. A link to the course was shared, pointing to Hugging Face's learning resources.

- Community Computer Vision Course Shared: Another course, focused on computer vision ML, was mentioned. The course objectives include teaching ML concepts using libraries and models from the Hugging Face ecosystem, with a shared link to Community Computer Vision Course.

Link mentioned: Hugging Face - Learn: no description found

HuggingFace ▷ #cool-finds (6 messages):

- NeurIPS hosts model merging contest: A member shared an announcement about a model merging competition at NeurIPS with a link to the announcement tweet. The competition offers an $8K prize and is sponsored by Hugging Face, Sakana AI Labs, and arcee ai, with more details available on the official site.

- Transformers tackle arithmetic with new embeddings: A new paper titled Transformers Can Do Arithmetic with the Right Embeddings reveals that adding specific positional embeddings to each digit helps transformers solve arithmetic problems more efficiently. The study achieved up to 99% accuracy on 100-digit addition problems by training on 20-digit numbers for just one day. Read the paper here.
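To illustrate the idea of giving each digit its own positional signal, the sketch below assigns every digit token an index within its number and gives non-digit tokens index 0. These indices could then be used to look up a learned embedding. This is a simplified illustration under assumed conventions (one digit per token, counting from the first digit of each number), not the paper's implementation, and the paper's exact scheme may differ.

```python
def digit_positions(tokens):
    """Return, for each token, its 1-based position within a run of digits (0 for non-digits)."""
    positions = []
    run = 0
    for tok in tokens:
        if tok.isdigit():  # assumes each digit is its own token
            run += 1
            positions.append(run)
        else:
            run = 0  # any non-digit token ends the current number
            positions.append(0)
    return positions


tokens = list("123+45=")
print(digit_positions(tokens))  # [1, 2, 3, 0, 1, 2, 0]
```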

HuggingFace ▷ #i-made-this (10 messages🔥):