AI News for 6/5/2024-6/6/2024. We checked 7 subreddits, 384 Twitters and 30 Discords (408 channels, and 2450 messages) for you. Estimated reading time saved (at 200wpm): 304 minutes.

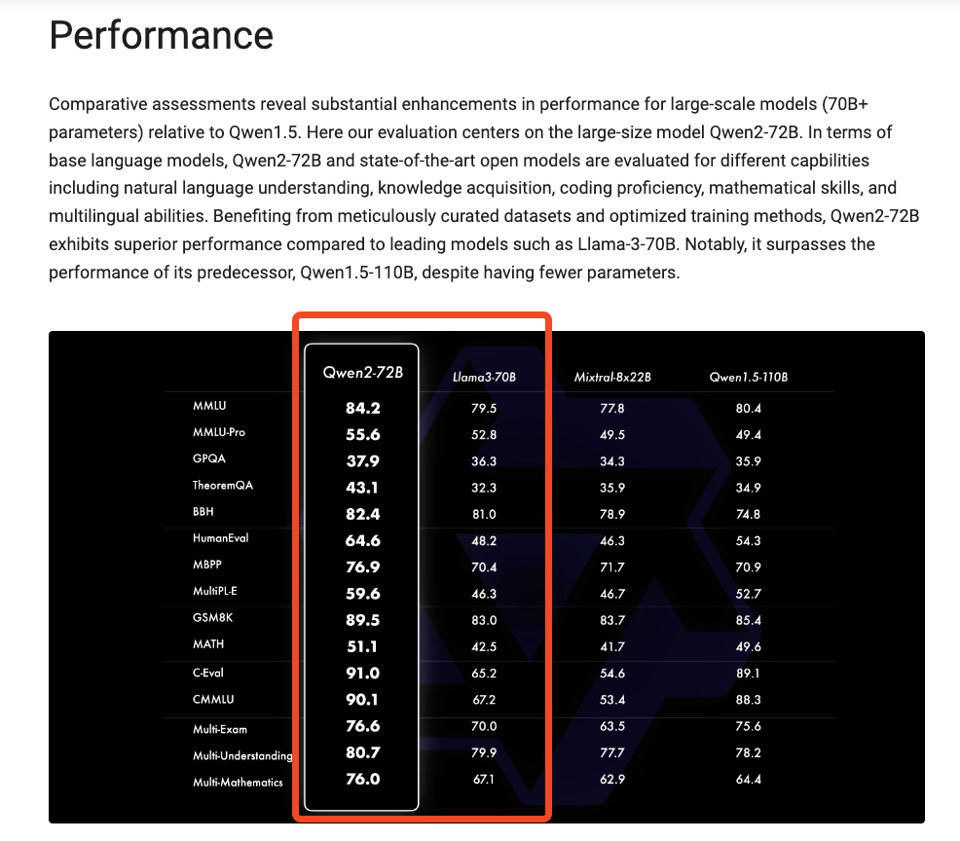

With Qwen 2 being Apache 2.0, Alibaba is now claiming to universally beat Llama 3 for the open models crown:

There are zero details on dataset so it's hard to get any idea of how they pulled this off, but they do drop some hints on post-training:

Our post-training phase is designed with the principle of scalable training with minimal human annotation.

Specifically, we investigate how to obtain high-quality, reliable, diverse and creative demonstration data and preference data with various automated alignment strategies, such as

- rejection sampling for math,

- execution feedback for coding and

- instruction-following, back-translation for creative writing,

- scalable oversight for role-play, etc.

These collective efforts have significantly boosted the capabilities and intelligence of our models, as illustrated in the following table.

They also published a post on Generalizing an LLM from 8k to 1M Context using Qwen-Agent.

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

Qwen2 Open-Source LLM Release

- Qwen2 models released: @huybery announced the release of Qwen2 models in 5 sizes (0.5B, 1.5B, 7B, 57B-14B MoE, 72B) as Base & Instruct versions. Models are multilingual in 29 languages and achieve SOTA performance on academic and chat benchmarks. Released under Apache 2.0 except 72B.

- Performance highlights: @_philschmid noted Qwen2-72B achieved MMLU 82.3, IFEval 77.6, MT-Bench 9.12, HumanEval 86.0. Qwen2-7B achieved MMLU 70.5, MT-Bench 8.41, HumanEval 79.9. On MMLU-PRO, Qwen2 scored 64.4, outperforming Llama 3's 56.2.

- Multilingual capabilities: @huybery highlighted Qwen2-7B-Instruct's strong multilingual performance. The models are trained in 29 languages including European, Middle East and Asian languages according to @_philschmid.

Groq's Inference Speed on Large LLMs

- Llama-3 70B Tokens/s: @JonathanRoss321 reported Groq achieved 40,792 tokens/s input rate on Llama-3 70B using FP16 multiply and FP32 accumulate over the full 7989 token context length.

- Llama 70B in 200ms: @awnihannun put the achievement in perspective, noting Groq ran Llama 70B on ~4 Wikipedia articles in 200 milliseconds, which is about the blink of an eye, with 16-bit precision and 32-bit accumulation (lossless).

Sparse Autoencoder Training Methods for GPT-4 Interpretability

- Improved SAE training: @gdb shared a paper on improved methods for training sparse autoencoders (SAEs) at scale to interpret GPT-4's neural activity.

- New training stack and metrics: @nabla_theta introduced a SOTA training stack for SAEs and trained a 16M latent SAE on GPT-4 to demonstrate scaling. They also proposed new SAE metrics beyond MSE/L0 loss.

- Scaling laws and metrics: @nabla_theta found clean scaling laws with autoencoder latent count, sparsity, and compute. Larger subject models have shallower scaling law exponents. Metrics like downstream loss, probe loss, ablation sparsity and explainability were explored.

Meta's No Language Left Behind (NLLB) Model

- NLLB model details: @AIatMeta announced the NLLB model, published in Nature, which can deliver high-quality translations directly between 200 languages, including low-resource ones.

- Significance of the work: @ylecun noted NLLB's ability to provide high-quality translation between 200 languages in any direction, with sparse training data, and for many low-resource languages.

Pika AI's Series B Funding

- $80M Series B: @demi_guo_ announced Pika AI's $80M Series B led by Spark Capital. Guo expressed gratitude to investors and team members.

- Hiring and future plans: @demi_guo_ reflected on the past year's progress and teased updates coming later in the year. Pika AI is looking for talent across research, engineering, product, design and ops (link).

Other Noteworthy Developments

- Anthropic's elections integrity efforts: @AnthropicAI published details on their processes for testing and mitigating elections-related risks. They also shared samples of the evaluations used to test their models.

- Cohere's startup program launch: @cohere launched a startup program to support early-stage companies solving real-world business challenges with AI. Participants get discounted access, technical support and marketing exposure. Cohere also released a library of cookbooks for enterprise-grade frontier models for applications like agents, RAG and semantic search (link).

- Prometheus-2 for RAG evaluation: @llama_index introduced Prometheus-2, an open-source LLM for evaluating RAG applications as an alternative to GPT-4. It can process direct assessment, pairwise ranking and custom criteria.

- LangChain x Groq integration: @LangChainAI announced an upcoming webinar on building LLM agent apps with LangChain and Groq's integration.

- Databutton AI engineer platform: @svpino shared that Databutton launched an AI software engineer platform to help build applications with React frontends and Python backends based on a business idea.

- Microsoft's Copilot+ PCs: @DeepLearningAI reported on Microsoft's launch of Copilot+ PCs with AI-first specs featuring generative models and search capabilities, with the first machines using Qualcomm Snapdragon chips.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

LLM Developments and Applications

-

Open-source RAG application: In /r/LocalLLaMA, user open-sourced LARS, a RAG-centric LLM application that generates responses with detailed citations from documents. It supports various file formats, has conversation memory, and allows customization of LLMs and embeddings.

-

Favorite open-source LLMs: In /r/LocalLLaMA, users shared their go-to open-source LLMs for different use cases, including Command-R for RAG on small-medium document sets, LLAVA 34b v1.6 for vision tasks, Llama3-gradient-70b for complex questions on large corpora, and more.

-

AI business models: In /r/LocalLLaMA, a user questioned how much original work vs leveraging existing LLM APIs new AI businesses are actually doing, as many seem to just wrap OpenAI's API for specific domains.

-

Querying many small files with LLMs: In /r/LocalLLaMA, a user sought advice on feeding 200+ small wiki files into an LLM while preserving relationships between them, as embeddings and RAG have been hit-or-miss. Proper LLM training or LoRA are being considered.

-

Desktop app for local LLM API: In /r/LocalLLaMA, a user created a desktop app to interact with LMStudio's API server on their local machine and is gauging interest in releasing it for free.

-

Educational platform for LLMs: In /r/LocalLLaMA, Open Engineer was announced, a free educational resource covering topics like LLM fine-tuning, quantization, embeddings and more to make LLMs accessible to software engineers.

-

LLM assistant for database operations: In /r/LocalLLaMA, a user shared their experience integrating an LLM assistant into software for database CRUD and order validation, considering using the surrounding system to supplement the LLM with product lookups for more robust order processing.

AI Developments and Concerns

-

Speculation on superintelligence development: In /r/singularity, a user speculated that major AI labs, chip companies and government agencies are likely already coordinating on superintelligence development behind the scenes, similar to the Manhattan Project, and that the US government is probably forecasting and preparing for the implications.

-

Questions around UBI implementation: In /r/singularity, a user expressed frustration at the lack of concrete plans or frameworks for implementing UBI despite it being seen as a solution to AI-driven job displacement, raising questions about funding, population growth impacts, and more that need to be addressed.

-

Risks of open-source AGI misuse: In /r/singularity, a user asked how the open-source community will prevent powerful open-source AGI from being misused by bad actors to cause harm, given the lack of safeguards, arguing the "it's the same as googling it" counterargument is oversimplified.

-

Controlling ASI: In /r/singularity, a user questioned the belief that ASI could be controlled by humans or militaries given its superior intelligence, with commenters agreeing it's unlikely and attempts at domination are misguided.

AI Assistants and Interfaces

-

Chat mode as voice data harvesting: In /r/singularity, a user speculated OpenAI's focus on chat mode is a strategic move to collect high-quality, natural voice data to overcome the limitations of text data for AI training, as voice represents a continuous stream of consciousness closer to human thought processes.

-

Unusual ChatGPT use cases: In /r/OpenAI, users shared unusual things they use ChatGPT for, including generating overly dramatic plant watering reminders, converting cooking instructions from oven to air fryer, and mapping shopping list items to store aisles.

-

Need for an "AI shell" protocol: In /r/OpenAI, a user envisioned the need for a standardized "AI shell" protocol to allow AI agents to easily interface with and control various devices, similar to SSH or RDP, as existing protocols may not be sufficient.

AI Content Generation

-

Evolving views on AI music: In /r/singularity, a user shared their evolving perspective on AI music, seeing it as well-suited for generic YouTube background music due to aggressive restrictions on mainstream music, and asked for others' thoughts.

-

AI-generated post-rock playlist: In /r/singularity, a user shared a 30-minute AI-generated post-rock playlist on YouTube featuring immersive tracks made with UDIO, receiving positive feedback about forgetting it's AI music.

-

AI in VFX project: In /r/singularity, a user shared a VFX project incorporating AI to create urban aesthetics inspired by ad displays, using procedural systems, image compositing, and layered AnimateDiffs, with CG elements processed individually and integrated.

AI Discord Recap

A summary of Summaries of Summaries

-

LLM and Model Performance Innovations:

-

Qwen2 Attracts Significant Attention with models ranging from 0.5B to 7B parameters, appreciated for their ease of use and rapid iteration capabilities, supporting innovative applications with 128K token contexts.

-

Stable Audio Open 1.0 Generates Interest leveraging components like autoencoders and diffusion models, as detailed on Hugging Face, raising community engagement in custom audio generation workflows.

-

ESPNet Competitive Benchmarks Shared for Efficient Transformer Inference: Discussions around the newly released ESPNet showed promising transformer efficiency, pointing towards enhanced throughput on high-end GPUs (H100), as documented in the ESPNet Paper.

-

Seq1F1B Promotes Efficient Long-Sequence Training: The pipeline scheduling method introduces significant memory savings and performance gains for LLMs, as per the arxiv publication.

-

-

Fine-tuning and Prompt Engineering Challenges:

-

Model Fine-tuning Innovations: Fine-tuning discussions highlight the use of gradient accumulation to manage memory constraints, and custom pipelines such as using

FastLanguageModel.for_inferencefor Alpaca-style prompts, as demonstrated in a Google Colab notebook. -

Chatbot Query Generation Issues: Debugging Cypher queries using Mistral 7B emphasized the importance of systematic evaluation and iterative tuning methods in successful model training.

-

Adapter Integration Pitfalls: Critical challenges with integrating trained adapters pointed to a need for more efficient adapter loading techniques to maintain performance, supported by practical coding experiences.

-

-

Open-Source AI Developments and Collaborations:

-

Prometheus-2 Evaluates RAG Apps: Prometheus-2 offers an open-source alternative to GPT-4 for evaluating RAG applications, valued for its affordability and transparency, detailed on LlamaIndex.

-

Launch of OpenDevin sparks collaboration interest, featuring a robust AI system for autonomous engineering developed by Cognition, with documentation available via webinar and GitHub.

-

Gradient Accumulation Strategies Improve Training: Discussions on Unsloth AI emphasized using gradient accumulation to handle memory constraints effectively, reducing training times highlighted by shared YouTube tutorials.

-

Mojo Rising as a Backend Framework: Developers shared positive experiences using Mojo for HTTP server development, depicting its advantages in static typing and compile-time computation features on GitHub.

-

-

Deployment, Inference, and API Integrations:

-

Perplexity Pro Enhances Search Abilities: The recent update added step-by-step search processes via an intent system, enabling more agentic execution, as discussed within the community around Perplexity Labs.

-

Discussion on Modal's Deployment and Privacy: Queries about using Modal for LLM deployments included concerns about its fine-tuning stack and privacy policies, with additional support provided through Modal Labs documentation.

-

OpenRouter Technical Insights and Limits: Users explored technical specifications and capabilities, including assistant message prefill support and handling function calls through Instructor tool.

-

-

AI Community Discussions and Events:

-

Stable Diffusion 3 Speculation: Community buzz surrounds the anticipated release, with speculation about features and timelines, as detailed in various Reddit threads.

-

Human Feedback Foundation Event on June 11: Upcoming discussions on integrating human feedback into AI, featuring speakers from Stanford and OpenAI with recordings available on their YouTube channel.

-

Qwen2 Model Launches with Overwhelming Support: Garnering excitement for its multilingual capabilities and enhanced benchmarks, the release on platforms like Hugging Face highlights its practical evaluations.

-

Call for JSON Schema Support in Mozilla AI: Requests for JSON schema inclusion in the next version to ease application development were prominently noted in community channels.

-

Keynote on Robotics AI and Foundation Models: Investment interests in "ChatGPT for Robotics" amid foundation model companies underscore the strategic alignment detailed in Newcomer's article.

-

PART 1: High level Discord summaries

LLM Finetuning (Hamel + Dan) Discord

-

Web Scraping Tool Talk: Engineers swapped notes on OSS scraping platforms like Beautiful Soup and Scrapy, with a nod to Trafilatura for its proficiency in extracting dynamic content from tricky sources like job postings and SEC filings, citing its documentation.

-

New UI Kid on the Block: Google's new Mesop is stirring conversations for its potential in crafting UIs for internal tools, stepping into the domain of Gradio and Streamlit, despite lacking advanced authentication - curiosity piqued with a glance at Mesop's homepage.

-

Query Crafting Challenges: Engineers debugged generating Cypher queries with Mistral 7B, emphasizing the importance of systematic evals, test-driven development, and the iterative process—a testament to the nitty-gritty of model fine-tuning.

-

Diving into Deployment: Questions swirled about Modal's usage, including its fine-tuning stack complexity and privacy policy—a reference to Modal Labs query for policy seekers, and a nod to their Dreambooth example for practical enlightenment.

-

CUDA Compatibility Quirks: The compatibility quirks between CUDA versions took the spotlight as engineers faced issues installing the

xformersmodule—a pointer to Jarvis Labs documentation on updating CUDA came to the rescue.

OpenAI Discord

- GPT-3's Programming Puzzle: While GPT models like GPT-3 are adept at assisting with programming, limitations become apparent with highly specific and complex questions, signaling a push against their current capabilities.

- Logical Loophes with Math Equations: Even simple logical tasks, such as correcting a math equation, can stumble GPT models, revealing gaps in basic logical reasoning.

- Eagerly Awaiting GPT-4o's Special Features: Discussions anticipate GPT-4o updates, with voice and real-time vision features to be initially available to ChatGPT Plus users in weeks, and wider access later, as suggested by OpenAI's official update.

- DALL-E's Text Troubles: Users are sharing workarounds for DALL-E's struggles with generating logos that contain precise text, including prompts to iteratively refine text accuracy and a useful custom GPT prompt.

- 7b and Vision Model Synergy: An integration success story where the 7b model harmonizes well with the llava-v1.6-mistral-7b vision model, expanding possibilities for model collaboration.

Unsloth AI (Daniel Han) Discord

Gradient Accumulation to the Rescue: Engineers agreed that gradient accumulation can alleviate memory constraints and improve training times, but warned of potential pitfalls with larger batch sizes due to unexpected memory allocation behaviors.

Tackling Inferential Velocity with Alpacas: An engineer shared a code snippet leveraging FastLanguageModel.for_inference to utilize Alpaca-style prompts for sequence generation in LLMs, which sparked interest alongside discussions about a shared Google Colab notebook.

Adapter Merging Mayday: Challenges with integrating trained adapters causing significant dips in performance led to calls for more efficient adapter loading techniques to maintain training efficiency.

Qwen2 Models Catch Engineers' Eyes: Excitement bubbles over the release of Qwen2 models, with engineers keen on the smaller-sized models ranging from 0.5B to 7B for their ease of use and faster iteration capabilities.

Quest for Solutions in the Help Depot: Conversations in the help channel emphasized a need for a VRAM-saving lora-adapter file conversion process, quick intel on a bug potentially slowing down inference, strategies for mitigating GPU memory overloads, and clarifications on running gguf models and implementing a RAG system, referenced to Mistral documentation.

Stability.ai (Stable Diffusion) Discord

-

Stable Audio Open 1.0 Hits the Stage: Stable Audio Open 1.0 garners community interest for its innovative components, including the autoencoder and diffusion model, with members experimenting with tacotron2, musicgen, and audiogen within custom audio generation workflows.

-

Size Matters in Stable Diffusion: Users recommend generating larger resolution images in Stable Diffusion before downsizing as an effective workaround for the random color issue plaguing small resolution (160x90) image outputs.

-

ControlNet Sketches Success: ControlNet emerges as a preferred solution among members for converting sketches to realistic images without retaining unwanted colors, providing better control over the final composition and image details.

-

CivitAI Content Quality Control Called Into Question: A surge in non-relevant content on CivitAI has led to calls for enhanced filtering capabilities to better curate quality models and maintain user experience.

-

Stable Diffusion 3 Awaits Clarification: Despite rampant speculation within the community, the release date and details surrounding Stable Diffusion 3 remain nebulous, with some members referencing a Reddit post for tentative answers.

LM Studio Discord

-

Taming the RAM Beast in LM Studio: Users discussed strategies to limit RAM usage in LM Studio, suggesting approaches like loading and unloading models during use; a detailed method can be found in the llamacpp documentation. While not a built-in feature in LM Studio, such tactics are employed to enable models to utilize RAM only when active despite efficiency losses.

-

Nomic Embed Models Step into the Limelight: The discussion elevated Nomic Embed Text models to multimodal status, thanks to nomic-embed-vision-v1.5, setting them apart with impressive benchmark performances. The AI community within the server also noted Q6 quant models of Llama-3 as a balance of quality and performance.

-

Jostling for the Perfect Configuration: A user raised an issue about model settings resetting when initiating new chats, and discovered that retaining old chats could be a simple fix. The conversation also touched on

use_mlockand--no-mmapsettings in LM Studio, affecting stability during 8B model operations, emphasizing operating system-dependent subtleties. -

Unlocking the Potential of Hardware Synergy for AI: Engineers entered into a hearty debate on Nvidia’s proprietary drivers versus AMD’s open-source approach, highlighting implications for systems administration and security. Additionally, there was excitement about the promises of new Qualcomm chips and caution against judging ARM CPUs solely by synthetic benchmarks.

-

Updates and Upgrades Stir Excitement: The Higgs LLAMA model garnered praise for its intelligence at a 70B scale, with anticipation building around an upcoming LMStudio update to incorporate relevant llamacpp adjustments. Another user is considering a massive RAM upgrade in anticipation of the much-discussed LLAMA 3 405B model, reflecting the intertwining interests between hardware capabilities and AI model evolution.

HuggingFace Discord

Moderation is Key: The community debated moderation strategies in response to reports of inappropriate behavior. Professionalism in handling such issues is crucial.

Gradio API Challenges: Integrating Gradio with React Native and Node.js raised questions within the community. It's built with Svelte, so users were directed to investigate Gradio's API compatibility.

Text with Stability: Discussion around Stable Diffusion models for text generation pointed members towards solutions like AnyText and TextDiffuser-2 from Microsoft for robust output.

When Compute Goes Peer-to-Peer: The conversation turned to peer-to-peer compute for distributed machine learning, with tools like Petals and experiences with privacy-conscious local swarms offering promising avenues.

Human Feedback in AI: The Human Feedback Foundation is making strides in incorporating human feedback into AI, with an event on June 11th and a trove of educational sessions on their YouTube channel.

Small Datasets, Big Challenges: In computer vision discussions, dealing with small datasets and unrepresentative validation sets was a pressing concern. Solutions include using diverse training data and maybe even transformers despite their longer training times.

Swin Transformer Tests: There was a query about applying the Swin Transformer to CIFar datasets, highlighting the community's interest in experimenting with contemporary models in various scenarios.

Deterministic Models Turn Down the Heat: A single message highlighted lowering temperature settings to 0.1 to achieve more deterministic model behavior, prompting reflection on model tuning approaches.

Sample Input Snafus: Confusion over text embeddings and proper structuring of sample inputs for models like text-enc 1 and text-enc 2 surfaced, along with a discussion on the challenges posed by added kwargs in a dictionary format.

Re-parameterising with Results: A member successfully re-parameterised Segmind's ssd-1b into a v-prediction/zsnr refiner model and lauded it as a new favorite, hinting at a possible trend toward 1B mixture of experts models.

A Helping Hand for Projects: In a stretch of community aid, members offered personal assistance through DMs for addressing dataset questions, adding to the guild's collaborative environment.

Eleuther Discord

KAN Skepticism Expressed: Kolmogorov-Arnold Networks (KANs) were deemed less efficient than traditional neural networks by guild members, with concerns about their scalability and interpretability. However, there's interest in more efficient implementations of KANs, such as those using ReLU, evidenced by a shared ReLU-KAN architecture paper.

Expanding the Data Curation Toolbox: Participants debated the utility of influence functions in data quality evaluation, with the LESS algorithm (LESS algorithm) being mentioned as a potentially more scalable alternative for selecting high-quality training data.

Breakthroughs in Efficient Model Training: Innovations in model training were widely shared, including Nvidia's new open weights available on GitHub, the exploration of MatMul-free models (arXiv) for increased efficiency, and Seq1F1B's promise for more memory-efficient long-sequence training (arXiv).

Quantization Technique May Boost LLM Performance: The novel QJL method presents a promising avenue for large language models by compressing KV cache requirements through a quantization process (arXiv).

Brain-Data Speech Decoding Adventure: A guild member reported experimenting with Whisper tiny.en embeddings and brain implant data to decode speech, requesting peer suggestions to optimize the model by adjusting layers and loss functions while facing the constraint of a single GPU for training.

Perplexity AI Discord

-

Perplexity Pro Gets Smarter: Perplexity Pro has upgraded to show its search process step-by-step, employing an intent system for a more agentic-like execution, approximately a week ago.

-

File Format Frustrations: Users are experiencing difficulties with Perplexity's ability to read PDFs, with success varying based on the PDF's content layout ranging from heavily styled to plain text.

-

Sticker Shock at Dev Costs: The community reacted with humor and disbelief to a member's request for building a text-to-video MVP on a shoestring budget of $100, highlighting the disconnect between expectations and market rates for developers.

-

Haiku Feature Haunts No More: The removal of Haiku and select features from Perplexity labs sparked discussions, leading members to speculate on cost-saving measures and express their discontent due to the impact on their workflow.

-

Curious about OpenChat Expansion: An inquiry was raised regarding the potential addition of an "openchat/openchat-3.6-8b-20240522" model to Perplexity, alongside current models like Mistral and Llama 3.

CUDA MODE Discord

-

Discovering Past Technical Sessions: Recordings of past CUDA MODE technical events can be accessed on the CUDA MODE YouTube channel.

-

Debugging Tensor Memory in PyTorch: A code snippet using

storage().data_ptr()was shared to test if two PyTorch tensors share the same memory, stirring discussion on checking memory overlap. A member requested assistance in locating the source code for a PyTorch C++ function, specifically at::_weight_int4pack_mm. -

Extension on AI Models and Techniques: Conversations hinge on methodologies like MoRA improving upon LoRA, and DPO versus PPO with respect to RLHF. Separate mentions went to CoPE for positional encodings and S3D accelerating inference, all found detailed in AI Unplugged.

-

Torch Innovation Sparks: Discussions ignited around torch.compile boosting KAN performance to rival MLPs, with insights shared from Thomas Ahle's tweet, practical experiences, and the GitHub repository for KANs and MLPs.

-

MLIR Sets Sights on ARM: An MLIR meeting covered creating an ARM SME Dialect, offering insights into ARM's Scalable Matrix Extension via a YouTube video. Hints pointing to potential Triton ARM support are discussed, with references to 'arm_neon' dialect for NEON operations in the MLIR documentation.

Interconnects (Nathan Lambert) Discord

-

The Quest for the ChatGPT of Robotics: Investors are on the lookout for startups that can be the "ChatGPT for robotics," prioritizing AI over hardware. Excitement is building around niche foundation model companies as detailed in this article.

-

Qwen2 Attracts Attention: Qwen2's launch has generated interest with models supporting up to 128K tokens in multilingual tasks, but users report gaps in recent event knowledge and general accuracy.

-

Dragonfly Unfurls Its Wings in Multi-modal AI: Together.ai's Dragonfly brings advances in visual reasoning, particularly in medical imaging, demonstrating integration of text and visual inputs in model development.

-

AI Community Takes a Critical View: From discussions around influential lab employees criticizing smaller players to a shared tweet highlighting the risk of AI labs inadvertently sharing advances with the CCP not the American research community, and The Verge article on Humane AI's safety issue, the community remains vigilant.

-

Reinforcement Learning Paper Stirring Interest: Sharing of the “Self-Improving Robust Preference Optimization” (SRPO) paper as announced in this tweet indicates a focus on training LLMs using robust and self-improving RLHF methods. Nathan Lambert plans to dedicate time to discuss such cutting-edge papers.

Modular (Mojo 🔥) Discord

-

Mojo Rising: Discussions in various channels have centered on the advantages of using Mojo for backend server development, with examples like lightbug_http demonstrating its use in crafting HTTP servers. The Mojo roadmap was shared, indicating future core programming features, as active comparison to Python sparked debates on performance merits due to Mojo's static typing and compile-time computation.

-

Keeping Python Safe: In teaching Python to newcomers, it's essential to avoid potential pitfalls ("footguns") to help learners transition to more complex languages, like C++.

-

Model Performance Frontiers Explored: Discussions indicated that extending models like Mistral beyond certain limits requires ongoing pretraining, with suggestions to apply the difference between UltraChat and base Mistral to Mistral-Yarn as a merging strategy, though practicality and skepticism were voiced.

-

Community Coding Contributions Cloaked with Humor: Expressions like "licking the cookie" were employed humorously to discuss encouraging open-source contributions, and members playfully reflected on the complexities of technical talks and coding challenges, likening a simple request for quicksort implementation to a noble quest.

-

Nightly Builds Yield Illumination and Frustration: Nightly builds were examined with a spotlight on the usage of immutable auto-deref for list iterators and the introduction of features like

String.formatin the latest compiler release2024.6.616. However, network hiccups and the unpredictable nature ofalgorithm.parallelizefor aparallel_sortfunction were sources of frustration, as seen from GitHub discussions and shared troubleshooting on workflow timing and network issues.

Latent Space Discord

- Cohere Cashes In Big Time: Cohere has secured a jaw-dropping $450 million in funding with significant contributions from tech titans like NVidia and Salesforce, despite not boasting a high revenue last year, as per a Reuters report.

- IBM's Granite Gains Ground: IBM's Granite models are being hailed for their transparency, particularly on data usage for training—prompting debates on whether they surpass OpenAI in the enterprise domain with insights from Talha Khan.

- Databricks Dominates Forrester’s AI Rankings: In the latest report by Forrester on AI foundation models, Databricks has been recognized as a leader, emphasizing the tailored needs of enterprises and suggesting benchmark scores aren't everything. The report is highlighted in Databricks' announcement blog and is accessible for free here.

- Qwen 2 Trumps Llama 3: The new Qwen 2 model, with impressive 128K context window capabilities, shows superior performance to Llama 3 in code and math tasks, announced in a recent tweet.

- New Avenues for AI Web Interaction and Assistance: Browserbase celebrates a $6.5 million seed fund aimed at enabling AI applications to navigate the web, shared by founders Nat & Dan, while Nox introduces an AI assistant designed to make the user experience feel invincible, with early sign-ups here.

LlamaIndex Discord

Prometheus-2 Pitches for RAG App Judging: Prometheus-2 is presented as an open-source alternative to GPT-4 for evaluating RAG applications, sparking interest due to concerns about transparency and affordability.

LlamaParse Pioneers Knowledge Graph Construction: A posted notebook demonstrates how LlamaParse can execute first-class parsing to develop knowledge graphs, paired with a RAG pipeline for node retrieval.

Configuration Overload in LlamaIndex: AI engineers are expressing difficulty with the complexity of configuring LlamaIndex for querying JSON data and are seeking guidance, as well as discussing issues with Text2SQL queries not balancing structured and unstructured data retrieval.

Exploring LLM Options for Resource-Limited Scenarios: Discussions on alternative setups for those with hardware limitations veer towards smaller models like Microsoft Phi-3 and experimenting with platforms like Google Colab for heavier models.

Scoring Filters Gain Customizable Edges: Engineers are discussing the capability of LlamaIndex to filter results by customizable thresholds and performance score, indicating a need for fine-tuned precision in search results.

Cohere Discord

-

Startup Perks for Early Adopters: Cohere introduces a startup program aiming to equip Series B or earlier stage startups with discounts on AI models, expert support, and marketing clout to spur innovation in AI tech applications.

-

Refining the Chatbot Experience: Upcoming changes to Cohere's Chat API on June 10th will bring a new default multi-step tool behavior and a

force_single_stepoption for simplicity's sake, all documented for ease of adoption in the API spec enhancements. -

Hot Temperatures for AI Samplers: OpenRouter stands out by allowing the temperature setting to exceed 1 for AI response samplers, contrasting with Cohere trial's 1.0 upper limit, opening discussions on flexibility in response variation and quality.

-

Developing Smart Group Chatbots: Suggestions arose regarding the deployment of AI chatbots in group scenarios like business meetings, analyzing Rhea's advantageous multi-user context handling and potential precision concerns for personalized responses among numerous users.

-

Cohere Networking & Demos: Community members welcomed new participant Toby Morning, exchanging LinkedIn profiles (LinkedIn Profile) for broader connections and showcasing enthusiasm for upcoming demonstrations of the Coral AGI system's prowess in multi-user settings.

Nous Research AI Discord

Qwen2 Leaps Ahead: The launch of the Qwen2 models marks a significant evolution from Qwen1.5, now featuring support for 128K token context lengths, 27 additional languages, and pretrained as well as instruction-tuned models in various sizes. They are available on platforms like GitHub, Hugging Face, and ModelScope, along with a dedicated Discord server.

Map Event Prediction Discussion: A user inquired about predicting true versus false event points on a map with temporal data, leading to a conversation about relevant commands and techniques, although specific methods were not provided.

Update on Mistral API and Model Storage: Mistral's introduction of a fine-tuning API and associated costs sparked discussion, with a focus on practical implications for development and experimentation. The API, including pricing details, is explained in their fine-tuning documentation.

Mobile Text Input Gets a Makeover: WorldSim Console updated their mobile platform, resolving bugs related to text input, improving text input reliability, and offering new features such as enhanced copy/paste and cosmetic customization options.

Music Exploration in Off-Topic: One member shared links to explore "Wakanda music", though this might have limited technical relevance for the engineer audience. Among the shared links were music videos like DG812 - In Your Eyes and MitiS & Ray Volpe - Don't Look Down.

OpenRouter (Alex Atallah) Discord

Server Management Made Easy with Pilot: The Pilot bot is revolutionizing how Discord servers are managed by offering features such as "Ask Pilot" for intelligent server insights, "Catch Me Up" for message summarization, and weekly "Health Check" reports on server activity. It's free to use and improves community growth and engagement, accessible through their website.

AI Competitors in Role-Playing Realm: The WizardLM 8x22b model is currently gaining popularity in the role-playing community, nevertheless Dolphin 8x22 emerges as a potential rival, awaiting user tests to compare their effectiveness.

Gemini Flash Sparks Image Output Curiosity: Inquiries about whether Gemini Flash can render images spurred clarification that while no Large Language Model (LLM) presently offers direct image outputs, they can theoretically use base64 or call external services like Stable Diffusion for image generation.

Tool Tips for Handling Function Calls: For handling specific function calls and formatting, Instructor is recommended as a powerful tool, facilitating automated command execution and improving user workflows.

Technical Discussions Amidst Model Enthusiasm: A member's query regarding prefill support in OpenRouter led to a confirmation that it's possible, particularly with the usage of reverse proxies; meanwhile, excitement is building around GLM-4 due to its support for the Korean language, hinting at the model's potential in multilingual applications.

MLOps @Chipro Discord

-

Human Feedback Spurs AI Improvement: The upcoming Human Feedback Foundation event on June 11th is set to address the role of human feedback in enhancing AI applications across healthcare and civic domains; interested parties can register via Eventbrite. Additionally, recordings of past events with speakers from UofT, Stanford, and OpenAI are available at the Human Feedback Foundation YouTube Channel.

-

Azure Event Attracts AI Enthusiasts: "Unleash the Power of RAG in Azure" is a highly subscribed Microsoft event happening in Toronto, as mentioned by an attendee seeking fellow participants; more details can be found on the Microsoft Reactor page.

-

Tackling Messy Data: Engineers discussed strategies for dealing with high-cardinality categorical columns, including the use of aggregate/grouping, manual feature engineering, string matching, and edit distance techniques, all with the goal of refining inputs for better regression outcomes.

-

Merging Data and Clustering Techniques: There's a shared perspective that combining spell correction with feature clustering might streamline the challenges posed by high-cardinality categorical data, with an emphasis on treating such issues as core data modeling problems.

-

Practical Approaches to Feature Engineering: Conversations pivoted towards pragmatic approaches like breaking down complex problems (e.g., isolating brand and item elements) and incorporating moving averages as part of the simplification technique for price prediction. Appreciation was expressed for the multifaceted solutions discussed, including regex for feature extraction.

OpenAccess AI Collective (axolotl) Discord

Data Feast for AI Enthusiasts: Engineers lauded the accessibility of 15T datasets, humorously noting the conundrum of abundance in data but scarcity in computing resources and funding.

GPU Banter Amidst Hardware Discussions: The suitability of 4090s for pretraining massive datasets sparked a facetious exchange, jesting about the limitations of consumer GPUs for such demanding tasks.

Finetuning Fun with GLM and Qwen2: The community shared tips and configurations for finetuning GLM 4 9b and Qwen2 models, noting that Qwen2's similarity to Mistral simplifies the process.

Quest for Reliable Checkpointing: The use of Hugging Face's TrainingArguments and EarlyStoppingCallback featured in talks about checkpoint strategies, specifically for capturing both the most recent and best performing states based on eval_loss.

Error Hunting in AI Code: Troubleshooting the "returned non-zero exit status 1" error prompted members to suggest pinpointing the failing command, scrutinizing stdout and stderr, and checking for permission or environment variable issues.

LAION Discord

-

Catchy Naming Conundrum: In the guild, clarity was sought on the 1B parameter zsnr/vpred refiner; it's critiqued that the model is actually a 1.3B, not a 1B model, sparking a light-hearted jab at the need for more accurate catchy names.

-

Vega's Parameter Predicament: Discussions on the Vega model highlighted its swift processing prowess but raised concerns about its insufficient parameter size potentially limiting coherent output generation.

-

Elrich Logos Dataset Remains a Mystery: A member queried about the availability of the Elrich logos dataset but did not receive any conclusive information or response regarding access.

-

The Dawn of Qwen2: The launch of Qwen2 has been announced, introducing substantial improvements over Qwen1.5 across multiple fronts including language support, context length, and benchmark performance. Qwen2 is now available in different sizes and supports up to 128K tokens with resources spread across GitHub, Hugging Face, ModelScope, and demo.

LangChain AI Discord

-

Knowledge Graph Construction Security Measures: A tutorial on constructing knowledge graphs from text was shared, emphasizing the importance of data validation for security before inclusion in a graph database.

-

LangChain Tech Nuggets: Confusion about the necessity of tools decorator prompted discussions for clarity, while a request for understanding token consumption tracking methods was observed among users. Additionally, a question arose about the creation of RAG diagrams as seen in a LangChain's FreeCodeCamp tutorial video.

-

Flow Control and Search Automation Resources: A LangGraph conditional edges YouTube video was highlighted for its utility in flow control in flow engineering, and a new project termed search-result-scraper-markdown was shared for fetching search results and converting them to Markdown.

-

Cross-Framework Agent Collaboration: Users expressed interest in frameworks that enable collaboration among agents built with different tools, incorporating LangChain, MetaGPT, AutoGPT, and even agents from platforms such as coze.com, highlighting the potential of interoperability in the AI space.

-

Calls for GUI and Course File Guidance: There was a user query about finding a specific "helper.py" file from the AI Agents LangGraph course, pointing towards a need for better resource discovery methods within technical courses such as those offered on the DLAI course page.

tinygrad (George Hotz) Discord

- Tinygrad Preps for Prime Time: George Hotz highlighted the need for updates in tinygrad before the 1.0 release, with pending PRs expected to resolve the current gaps.

- Unraveling Tensor Puzzles with UOps.CONST: AI engineers examined the role of UOps.CONST in tinygrad, which serves as address offsets in computational processes for determining index values during tensor addition.

- Decoding Complex Code: In response to confusion over a snippet of code, it was clarified that intricate conditions are often needed to efficiently manage tensor shapes and strides within the row-major data layout constraints.

- Indexing Woes Solved by Dynamic Kernel: A discussion on tensor indexing in tinygrad revealed that kernel generation is essential due to the architecture's reliance on static memory access, with the kernel in question enabling operations like Tensor[Tensor].

- Masking with Arange for Getitem Operations: The similarity between the kernel used for indexing operations and an arange kernel was noted, which facilitates the creation of a mask during getitem functions for dynamic tensor indexing.

OpenInterpreter Discord

Need for Speed with Graphics: Members are seeking advice on executing graphics output with interpreter.computer.run, specifically for visualizations like those produced by matplotlib without success thus far.

OS Mode Mayhem: Conversations highlighted troubles in getting --os mode to operate correctly with local models from LM Studio, including issues with local LLAVA models not starting screen recording.

Vision Quest on M1 Mac: Engineers expressed frustration about hardware constraints on vision models for M1 Mac, indicating a strong interest in free and accessible AI solutions, given the high costs associated with OpenAI's offerings.

Integration Anticipation for Rabbit R1: Excitement is brewing over integrating Rabbit R1 with OpenInterpreter, particularly the upcoming webhook feature, to enable practical actions.

Bash Model Request Open: A call for suggestions for an open model suitable for handling bash commands has yet to be answered, leaving an open gap for potential recommendations.

AI Stack Devs (Yoko Li) Discord

Curiosity for AI Town's Development Status: Members in AI Stack Devs sought an update on the project, with one expressing interest in progress, while another apologized for not contributing yet due to a lack of time.

Tileset Troubles in AI Town: An engineering challenge surfaced around parsing spritesheets for AI Town, with a proposal to use the provided level editor or Tiled, supported by conversion scripts from the community.

Learning to Un-Censor LLMs: A member shared insights from a Hugging Face blog post on abliteration, which uncensors LLMs, featuring instruct versions of the third generation of Llama models. They followed up by inquiring about applying this technique to OpenAI models.

Unanswered OpenAI Implementation Query: Despite sharing the study on abliteration, a call for knowledge on how to implement the technique with OpenAI models went unanswered in the thread.

For a deeper dive:

- Parsing challenges and methods: (not provided)

- Blog post on abliteration: Uncensor any LLM with abliteration.

Datasette - LLM (@SimonW) Discord

-

The Truncated Text Mystery: When working with embeddings using

llm, a member discovered that input text exceeding the model's context length doesn't necessarily trigger an error and might result in truncated input. The actual behavior varies by model and underscores a need for clearer documentation on how each model handles excessive input length. -

Embedding Jobs: To Resume or Not to Resume: A query was raised regarding the functionality of

embed-multiin resuming large embedding jobs without reprocessing completed parts. This highlights the need for features that can manage partial completions within embedding processes. -

Documentation Desire for Embedding Behaviors: The response from @SimonW pointing to a lack of clarity in model behavior documentation directed at whether inputs are truncated or produce errors, indicates a larger call from users for comprehensive and accessible documentation on these AI systems.

-

Guesswork in Model Truncation: In absence of error messages, it was posited by @SimonW that the large text inputs leading to unexpected embedding results are likely being truncated, a behavior that should be explicitly verified within specific model documentation.

-

Efficiency in Large Embedding Tasks: The discussion around whether the

embed-multican identify and skip previously processed data in rerunning large jobs showcases a concern for efficiency and the need for intelligent job handling in long-running AI processes.

Torchtune Discord

Megatron's Checkpoint Conundrum: Engineers enquired about Megatron's compatibility with fine-tuning libraries, noting its unique checkpoint format. It was agreed that converting Megatron checkpoints to Hugging Face format and utilizing Torchtune for fine-tuning was the best course of action.

Mozilla AI Discord

- Call for JSON Schema Integration Heats Up: An AI Engineer proposed the inclusion of a JSON schema in the upcoming software version to streamline application development, acknowledging some bugs but underscoring the ease it brings to building applications. No details on a timeline or potential implementation challenges were provided.

YAIG (a16z Infra) Discord

- Weekend Audio Learning on AI Infrastructure: A link to a weekend listening session was shared by a member, featuring a discussion on AI infrastructure, which could be of interest to AI engineers looking to stay abreigned of the latest trends and challenges in the field. The content is accessible on YouTube.

The LLM Perf Enthusiasts AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The DiscoResearch Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

LLM Finetuning (Hamel + Dan) ▷ #general (66 messages🔥🔥):

- OSS Scraping Platform Recommendations: A member asked for an OSS scraping platform and received suggestions including Beautiful Soup and Scrapy. Another member recommended Trafilatura for scraping dynamic content from job postings and SEC filings, providing a link to Trafilatura's documentation.

- Mesop compared with Gradio and Streamlit: Google released Mesop, a Python-based framework for building UIs, which members compared favorably to Gradio and Streamlit for internal low-traffic apps. More details are provided on the Mesop homepage, piquing interest despite questions about advanced authentication features.

- Finetuning Cypher Query Generation with Mistral 7B: A member struggling with generating correct Cypher queries using Mistral 7B discussed systematic debugging steps with HamelH. The conversation emphasized writing evals, testing failure modes, and iteratively improving prompts.

- Interest in Workshops on Finetuning Techniques: Multiple members expressed interest in workshops covering topics like SFT, DPO, and ORPO using the TRL library, recommending Leandro von Werra as a potential instructor. However, space constraints and scheduling issues were mentioned as limiting factors.

- Braintrust and OpenPipe Platforms: A question was raised about Braintrust and OpenPipe, with responses noting previous office hours and talks covering these platforms. Members shared that these events answered many common questions about the platforms' purposes and utilities.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #workshop-1 (2 messages):

-

Regulatory and Research Aid through LLMs: The LLM can serve as a Research Assistant by answering technical and regulatory questions using sources like scientific papers and regulatory documents. For pre-existing repositories like Arxiv, employing RAG and prompt engineering is recommended, while paywalled sources would need finetuning.

-

Discovery Helper for Research: A secondary use case is a Research Assistant that points to promising papers by analyzing abstracts, titles, and metadata, which is valuable even if full access is restricted. Tools such as SciHub and DTIC can support this initiative by focusing on potential papers for the user.

-

LLMs for Legal Document Analysis: The aforementioned research assistant LLMs can be adapted to handle legal documents for efficient research and discovery. The poster showed interest in seeing this implemented.

-

Document Distiller for Large Organizations: This LLM is tailored for organizations with extensive document corpora (e.g., financial, government) to assist in regulatory compliance by summarizing and returning relevant documents based on inferred user intent. This idea is backed by mentions at the DataScience Salon conference by the NY Federal Reserve AI Chairman.

LLM Finetuning (Hamel + Dan) ▷ #asia-tz (5 messages):

- **Jeremyhoward loves Hainan**: *"I love hainan! 😄"*. Later, Blaine shared his love for Shenzhou Peninsula mentioning nearby beaches and passion fruits.

- **Anmol from India seeks chatbot pricing advice**: Anmol asked for advice on pricing an enterprise customer service chatbot. He expressed hope that someone with experience could assist him.

- **Hanoi to Germany transition**: Hehehe0803 introduced themselves from Hanoi, Vietnam, currently living in Germany. They mentioned joining late and expressed hope to connect with others.

LLM Finetuning (Hamel + Dan) ▷ #🟩-modal (8 messages🔥):

- **Modal Privacy Policy Sought**: A user inquired about the privacy policy of Modal. Another user provided a link to a Google search for further information: [Privacy Policy Modal Labs](https://www.google.com/search?q=privacy+policy+modal+labs).

- **Confusion on LLM Inference Setup**: A user asked about setting up a server to run an LLM and expose an endpoint, referencing a [Modal example script on GitHub](https://github.com/modal-labs/modal-examples/blob/main/06_gpu_and_ml/llm-serving/text_generation_inference.py#L240C20-L240C30). They were unsure about how to get the base URL for calling the endpoint from REST clients like Postman.

- **Praise for Modal from a GPU Enthusiast**: A user who typically trains locally with multiple GPUs tried Modal and found it "super cool". They expressed their appreciation with emojis: 👍👏.

- **Dataset Handling Issue with Axolotl Configs**: A user experienced issues with Modal's insistence on passing a dataset, which overrode their existing axolotl configuration. They mentioned hacking the `train.py` to remove the dataset code, which resolved the issue for them.

Link mentioned: modal-examples/06_gpu_and_ml/llm-serving/text_generation_inference.py at main · modal-labs/modal-examples: Examples of programs built using Modal. Contribute to modal-labs/modal-examples development by creating an account on GitHub.

LLM Finetuning (Hamel + Dan) ▷ #learning-resources (1 messages):

- Loving the Discovery of Old News: A member shared their excitement about a discovery they found, attaching this paper from arXiv. They acknowledged it might be "old news" but expressed that they "love it".

LLM Finetuning (Hamel + Dan) ▷ #jarvis-labs (2 messages):

- CUDA Version Mismatch Error: A member encountered a mismatch error when installing the

xformersPIP module due to differing CUDA versions, 11.8 and 12.1. They asked for the recommended way to upgrade the CUDA library in the Jarvis Labs container. - Solution for Updating CUDA: Another member provided a documentation link to Jarvis Labs for guidance on updating the CUDA version on the Jarvis Labs instance.

Link mentioned: Debugging and Troubleshooting | Jarvislabs: Some common troubleshooting tips for updating Cuda, Freeing up the disk space and many more.

LLM Finetuning (Hamel + Dan) ▷ #hugging-face (3 messages):

-

Members still awaiting credits: Multiple users reported filling out a web form but haven't received any credits as expected. "filled out the web form, but haven't received any as far as I can tell."

-

New form on the way: An update on the situation mentions that a new form will be available soon, with a status check currently taking place. "We’ll have a new form out soon, let me check on the status of it."

LLM Finetuning (Hamel + Dan) ▷ #replicate (9 messages🔥):

- Redeem credits through email works: One member had to accept credits via email and shared that "this worked" for them.

- Unreceived credits inquiry: "Hi @zeke6585 haven't received the email" stated a member, prompting another to check records and found the form was not filled out for replicate credits.

- Duplicate form submissions still unresolved: A member expressed confusion, having filled out the form twice and received credits from other services like OAI, HF, and Modal but not from Replicate.

- Comment clarification on credit status: A member clarified they were not spamming every channel but only the ones where credits were still pending, and noted an immediate resolution from Ankur on BrainTrust credits.

LLM Finetuning (Hamel + Dan) ▷ #langsmith (29 messages🔥):

-

Langsmith Input Missing Issue Solved: A member struggled with the

@traceabledecorator in Langsmith capturing outputs but not inputs in LLM calls. They resolved it by adding an argument to their function, realizing that inputs were essentially the arguments to the function without which nothing gets captured. -

LangSmith Credit Confusion: Multiple members expressed confusion and frustration over not receiving compute credits or understanding credit types. One highlighted that "Beta credits are only available for people who were LangSmith beta users."

-

Billing and Credits Follow-Up: Several users who set up billing complained about not receiving their credits. They were directed to reach out to a contact at LangSmith to address the issue by sending their organization IDs for resolution.

-

HIPAA Compliance and Enterprise Options: LangSmith aims to be HIPAA compliant by July 1st and offers self-hosted options for enterprise customers. Details about plans and features are available, and members were guided to contact for more information.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #workshop-3 (2 messages):

- Workshop videos receive high praise: A member expressed gratitude for the speakers and topics in the LLM Evals videos, stating the course has significantly helped them structure their approach more effectively than a year of self-research. They thanked <@525830737627185170> and <@916924724003627018> for putting it together.

- Facilitators appreciate positive feedback: One of the organizers responded to the praise with thanks, sharing that such encouragement is highly motivating. "It makes my day, and is super motivating!"

LLM Finetuning (Hamel + Dan) ▷ #workshop-4 (4 messages):

- Conference talk initially lacks sound: A member noted that the recording for "Conference Talk: Best Practices For Fine Tuning Mistral w/ Sophia Yang" appeared to lack audio. They later clarified, stating, "never mind. it has voice."

- Replicate vs. Modal deployment clarified: A member sought confirmation on the differing deployment processes between Replicate and Modal, particularly regarding where the Docker build process occurs. Another member confirmed, "the Modal build process runs remotely."

LLM Finetuning (Hamel + Dan) ▷ #clavie_beyond_ragbasics (2 messages):

- Ben's talk rescheduled due to illness: The team has rescheduled Ben's talk to next week because he isn't feeling well. Wishing that Ben gets well soon ❤️🔥.

LLM Finetuning (Hamel + Dan) ▷ #jason_improving_rag (150 messages🔥🔥):

- Frustration with Infographics and Tables in RAG: Users discussed the difficulties of extracting data from PDFs, especially tables and infographics, in RAG systems. Tools like PyMuPDF, AWS Textract, and converting PDFs to Markdown were mentioned as potential solutions, but issues persist as noted: "The markdown tables are malformed most of the time for my use case."

- Debate on Chunking Strategies: There was a lively debate on the best practices for chunking text data for RAG, with recommendations ranging from 500 to 800 tokens with a 50% overlap. A consensus formed around the complexity and necessity of chunking correctly to ensure accurate context and retrieval.

- Optimizing RAG with Fine-Tuning and Embeddings: The importance of fine-tuning embedding models for better RAG performance was highlighted, with a suggestion to use generated synthetic data. One member noted, "I think any company that is making money from RAG is leaving money on the table from not fine-tuning embedding models."

- Discussion on LanceDB for Multimodal AI: LanceDB was discussed as an alternative to databases like Pinecone and SQL for managing embeddings on large-scale, multimodal data. This database promises to be "easy-to-use, scalable, and cost-effective" with support for hybrid search solutions.

- Links Shared for Further Reading and Tools: Multiple links were shared covering resources like fine-tuning pipelines, embedding quantization, and tools for PDF handling and RAG implementations. Key links include Langchain Multi-modal RAG, LlamaParse, and Creating Synthetic Data for Embeddings.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #jeremy_python_llms (6 messages):

- Exclusive sneak peek discussed: Jeremy Howard shared a special sneak peek with members, instructing them, "so keep it to yourself, folks!" Despite the exclusivity, he mentioned it's fine to discuss it within this Discord.

- Anticipation builds for demo: A member expressed intent to wait for Jeremy Howard's demo after sneaking a peek at the codebase.

- Talk scheduled at an inconvenient time: Ashpun noted that the talk timings inconveniently fell at 3:30am IST for them.

- Setting multiple alarms: To not miss the talk, one member humorously mentioned setting "10 alarms" while another member appreciated having company for the early hour.

LLM Finetuning (Hamel + Dan) ▷ #yang_mistral_finetuning (2 messages):

-

Miscommunication cleared up with empathy: Aaah, okay, I understand now. That makes sense, and something I would expect as well. Sorry for misunderstanding you earlier.

-

Excitement about Mistral API: One member expressed enthusiasm about an upcoming workshop and stated, I will try the official Mistral API.

LLM Finetuning (Hamel + Dan) ▷ #axolotl (13 messages🔥):

-

Dependency Conflicts During Axolotl Installation: A user encountered errors while installing Axolotl with CUDA 12.1, Python 3.11, and PyTorch 2.3.1 due to dependency conflicts between multiple packages like Axolotl, Accelerate, Bitsandbytes, and Xformers. They sought resolutions for these conflicts.

-

Recommendation to Install Axolotl Without Xformers: One member suggested that the issue was specifically with Xformers, not Axolotl, and recommended first installing Axolotl without Xformers. They also mentioned the alternative of using the Docker image for Axolotl.

-

Switching to Python 3.10 Resolves Partial Issues: The user resolved some issues by switching to Python 3.10 and PyTorch 2.1.2, which allowed them to run the preprocess step but encountered a new error related to Flash Attention.

-

Flash Attention Requires Recompilation for CUDA 12.1: The user faced an ImportError related to

libcudart.so.11.0with Flash Attention, indicating a mismatch with their installed CUDA version (12.1). The suggested solution was to rebuild/recompile Flash Attention, which resolved the issue.

Link mentioned: Dependency Resolution - pip documentation v24.1.dev1: no description found

LLM Finetuning (Hamel + Dan) ▷ #zach-accelerate (12 messages🔥):

-

Safe tensors vary in merged adapters: A member questioned why merging an adapter to a base model sometimes results in 3 safe tensors and other times 6, while the base model only has 3 to start with, indicating variability in outcomes.

-

Enhance TPU efficiency with Keras's

steps_per_execution: A member shared a TensorFlow blog about usingsteps_per_executionto reduce Python overhead and maximize TPU performance. An alternate approach in PyTorch XLA involves adjusting calls toxm.mark_step()for similar benefits. -

Use

xm.mark_step()judiciously: A detailed explanation was given on how to manage TPU performance in PyTorch XLA usingxm.mark_step()by adjusting the frequency of its calls within the training loop to balance performance and reliability, suggesting a potential feature request for the accelerate library. -

FSDP tutorial by Less Wright: For those interested in FSDP, an excellent ten-part series on YouTube by Less Wright (former fastai alumn) was recommended as a comprehensive introduction.

-

Quantization process clarified: It was confirmed that quantization happens during model load and is handled by the CPU before passing it to the GPU. Relevant documentation and resources were provided, including Hugging Face Accelerate's quantization usage guide and the Accelerate GitHub repository.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #freddy-gradio (2 messages):

-

Watch the YouTube Link: A YouTube video was shared which can be accessed here.

-

Python Handles Server-Side Code: Discussion around the server-side code indicates that it’s still managed in Python. Points include scaling in Spaces and handling concurrency with Python.

LLM Finetuning (Hamel + Dan) ▷ #charles-modal (12 messages🔥):

- Fine-tuning with Modal can be complex but is worth exploring: Charles mentioned the complexity of the fine-tuning stack with Modal but suggested users check out their simpler Dreambooth example. This example demonstrates the core Modal concepts through finetuning the Stable Diffusion XL model using textual inversion.

- Adapt existing datasets for fine-tuning on Modal: Users can point to a Hugging Face dataset directly, as suggested by Charles. Adjustments should be made to avoid using Volumes for storing this data.

- Use batch processing for validation: Charles advised writing a custom

batch_generatemethod and using.mapfor processing and generating validation examples. He referenced the "embedding Wikipedia" example for further guidance. - Exploring Modal for cost-efficiency in app hosting: Alan was advised by Charles on potentially moving the retrieval part of his Streamlit app to Modal or considering moving the entire app there. Concerns about cold starts and cost with a 24/7 deployment were discussed.

- Quick support for script errors: Chaos highlighted an issue with the

vllm_inference.pyscript on GitHub, and Charles quickly responded, hinting at potential problems during the build step or GPU availability. Charles emphasized Modal’s culture of speed in support and communication.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #langchain-langsmith (1 messages):

- Langsmith tracing obstacles with streaming non-OpenAI models: A user discussed their challenge of capturing traces with Langsmith while using Groq/Llama3 in a streaming fashion. They noted that using the

@traceabledecorator doesn't work with streaming outputs, as the result stays ageneratorobject.

LLM Finetuning (Hamel + Dan) ▷ #credits-questions (22 messages🔥):

-

Last-minute enrollees can still claim credits: A user who enrolled on May 30th asked how to claim credits, and Dan clarified that while some platforms like OAI won't offer credits, others like Modal might still be available. He shared a link to claim OAI credits: OAI credits.

-

Modal credits now redeemable: For users who enrolled after May 30th, Dan directed them to claim their Modal credits via a provided form Modal form. He also provided a step-by-step guide for using Modal's platform and shared multiple example projects.

-

Credit claims still being processed: Dan explained that credits are being processed in batches and reassured users who haven't yet received theirs to check if they have filled out the necessary forms. He urged those without credits to use the Modal platform to receive additional credits before the Tuesday deadline.

-

Replicate account issues resolved: A user who hadn't received credits verified their Replicate account setup. After confirming the correct email and noticing the account creation date discrepancy, they found the credits in their email.

-

Replicate billing setup questions redirected: Dan asked a user who received a Replicate invite to direct their billing-related questions to a more appropriate channel to get faster responses from the Replicate team.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #strien_handlingdata (1 messages):

- Praise for Data-Talk Presentation: One member expressed strong appreciation for a particular presentation on data, emphasizing its foundational importance for tasks like evaluations and fine-tuning. They felt it would have been beneficial as the opening talk of the conference.

LLM Finetuning (Hamel + Dan) ▷ #fireworks (28 messages🔥):

-

Account ID mix-ups lead to credit problems: Multiple users reported issues with not receiving credits, often due to mistakenly filling out wrong account details like emails instead of account IDs in forms. Examples include user IDs such as biggafish8-37cf1d and jain-nehil-ab4ee8.

-

Credits now visible after corrections: A user confirmed that their credits became visible after providing the correct account ID, which was szilvia-beky-38bda3. This suggests successful resolution for some users once the correct details were processed.

-

Expired credits notice: When asked about the validity of credits, it was clarified that they expire after a year. This was confirmed by aravindputrevu stating “Yes, they expire after a year.”

-

Frequent guidance on form filling issues: It was highlighted by hamelh that incorrect form filling, such as entering email addresses instead of account IDs, was a frequent issue. project_disaster acknowledged this with an apology for the mistake.

-

Continued calls for assistance: Users continued to seek help with their account credits, providing their account IDs like raul-brebenaru-2d3d45 and roger-6803a6, indicating ongoing issues even after initial error rectification efforts.

LLM Finetuning (Hamel + Dan) ▷ #emmanuel_finetuning_dead (98 messages🔥🔥):

- Fine-tuning is not dead but niche: A member humorously suggested the title "Fine-tuning is not dead, it's niche" for clarity, emphasizing that fine-tuning has a specialized, expensive application. They stated, “fine-tuning can add hallucinations for Q&A,” highlighting its complexity and cost.

- Anthropic and Speculative Thoughts: Members discussed Anthropic's view on significant future changes, humor followed with mentions of 'Cylons'. The talk by an Anthropic representative was highlighted: “Anthropic betting 8 years from now humans might not be around in present form.”

- Resource Sharing for Fine-Tuning and RAG: Multiple resources were shared regarding fine-tuning and RAG, like Simon Willison's blog and Anthropic's research. Emmanuel’s book and tools like LLM CLI were mentioned as valuable for understanding fine-tuning's applied aspects.

- Prompting over Fine-Tuning: Members preferred focusing on prompt engineering over fine-tuning for many applications, suggesting reading materials like Emmanuel's spreadsheets on prompt engineering and HuyenChip's blog. The point was made with humor: "Do the boring thing!" as advice over complex fine-tuning.

- Dynamic Few-Shot and RAG Discussions: The talk concluded with insights into using dynamic few-shot prompting and RAG as viable alternatives or complements to fine-tuning. Links like dynamic few-shot prompting article were shared to emphasize practical approaches in evolving applications.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #braintrust (29 messages🔥):

- Braintrust Inputs Not Captured: A user noted that the Braintrust decorator wasn't capturing the inputs to their LLM function but was capturing the outputs. Another pointed out that the function had no arguments and suggested wrapping the OpenAI client with

wrap_openai. - Exploring Braintrust Tracing Methods: There was a discussion on three methods to trace in Braintrust:

wrap_openai, the@traceddecorator, and spans, with spans offering the most flexibility. One user considered using spans for a project integrating Braintrust with ZenML. - Credits Issue Resolved with UI Clarification: A user named "project_disaster" mentioned not seeing their credits, with "ankrgyl" clarifying that the absence of an "Upgrade" button indicates applied credits. The user suggested having a visible gauge for tracking credit consumption over time.

- Interest in TypeScript and Tracing Example: A user expressed an off-topic appreciation for the use of TypeScript. Another inquired about starting with a tracing example for an LLM project, eventually planning to move towards DPO finetuning, with "ankrgyl" suggesting they begin with the logging guide on Braintrust's documentation.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #europe-tz (2 messages):

- **Local Roots Shout-Out**: One user mentioned living in London but originally being from Portugal. Another user opted to keep their origin a secret with a *"🤐"* emoji.

LLM Finetuning (Hamel + Dan) ▷ #announcements (2 messages):

-

Fill out the OpenAI credits form: If you missed filling out the form the first time and want your OpenAI credits, please put your OAI org id in the form at this link.

-

Find your Organization ID for OpenAI credits: To see your Org ID, please go to this website after you are logged in under

Organization ID(this is available even if you are not part of an org with someone*).*

Link mentioned: no title found: no description found

LLM Finetuning (Hamel + Dan) ▷ #predibase (3 messages):

- Questions on starting model format: A user asked if they should start with awq or gptq format before training LoRAs for best performance. No specific guidance was provided in the conversation.

- Excitement for Predibase example: A member expressed excitement about using Predibase's example shared in the documentation. Predibase claims to offer the fastest way to fine-tune and serve open-source LLMs.

- Inquiry about credit expiry: A user asked about the expiration of credits and mentioned the requirement to add a credit card to be upgraded to the Developer Tier on the site. No further details about the credit expiry were discussed.

Link mentioned: Quickstart | Predibase: Predibase provides the fastest way to fine-tune and serve open-source LLMs. It's built on top of open-source LoRAX.

LLM Finetuning (Hamel + Dan) ▷ #openpipe (4 messages):

- Members still await their credits: Several members reported they have not yet received their credits. One provided an email for follow-up, urging a quick resolution.

LLM Finetuning (Hamel + Dan) ▷ #openai (69 messages🔥🔥):

<!-- Summary -->

- **OpenAI credits applied retroactively**: Several users noted that their credits were applied to their existing API balance, making it similar to adding funds via a credit card. [Members discussed](https://platform.openai.com/settings/organization/billing/overview) potential improvements for those new to the API.

- **Finalizing Tier 2 API status for students**: OpenAI granted Tier 2 API status to those who filled out the form in time, allowing them to utilize the additional credits. Users should stay tuned for updates if they missed the initial registration.

- **Late submission form for credits**: To rectify earlier submission errors, [a new form for additional credit requests](https://maven.com/parlance-labs/fine-tuning/1/forms/f2d68f) has been shared and needs to be correctly filled out.

- **Internal thoughts during fine-tuning**: There was an in-depth discussion regarding how to handle "internal thoughts" in long multi-turn conversations during OpenAI model fine-tuning. Delimiters and separate examples were proposed as potential solutions.

- **Public acknowledgment and kudos**: The group appreciated the efforts of OpenAI team members for their swift and effective support, highlighted in a [Twitter post](https://x.com/TheZachMueller/status/1798674326633247143) expressing gratitude.

Links mentioned:

OpenAI ▷ #ai-discussions (266 messages🔥🔥):

- **GPTs at their limits with advanced programming questions**: A user noted that their programming questions have become more specific and complex as their project advanced, leading to struggles with GPT models. They expressed concern that these models may be "pushing their limits for programming assistance 😁".

- **GPTs sometimes fail at simple corrections**: Another user pointed out a problem where the GPT could not correct an incorrect math equation despite being prompted, showcasing issues with basic logical consistency in the model.

- **Continuous Learning and Real-time Adjustments**: Discussion involved the idea that making models agentic and capable of continuous learning could be costly and pose regulatory challenges. Continuous learning could also lead to issues with personality drift and potential security risks.

- **Generative AI's current and future impact**: There was debate about the immediate usefulness and future potential of generative AI, with some users highlighting its potential to assist or significantly change job structures, while others were skeptical of its broader economic impacts.

- **Community discussions on AI advances and resource requirements**: Users conversed about the computational power required for training AI models, referencing specific hardware like A100 and H100 GPUs, and speculating on developments with upcoming models like GPT-5.

OpenAI ▷ #gpt-4-discussions (20 messages🔥):

- Voice chat removal concerns: Users discussed why voice chat was removed and speculated on its return with a new model. One user mentioned a new voice model based on GPT-4o is expected soon.

- Issues with generating Chinese text: A member reported that generating responses in Chinese using the GPT model sometimes results in special characters like \ufffd appearing. This issue occurs around 15% of the time and significantly impacts the text quality.