AI News for 6/6/2024-6/7/2024. We checked 7 subreddits, 384 Twitters and 30 Discords (409 channels, and 3133 messages) for you. Estimated reading time saved (at 200wpm): 343 minutes.

A warm welcome to the TorchTune discord. Reminder that we do consider requests for additions to our Reddit/Discord tracking (we will decline Twitter additions - personalizable Twitter newsletters coming soon! we know it's been a long time coming)

With rumors of increasing funding in the memory startup and long running agents/personal AI space, we are seeing rising interest in high precision/recall memory implementations.

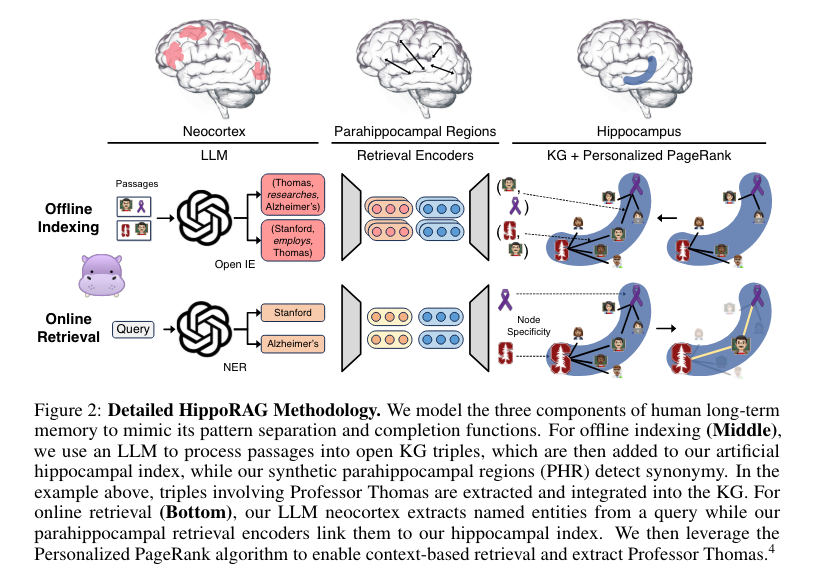

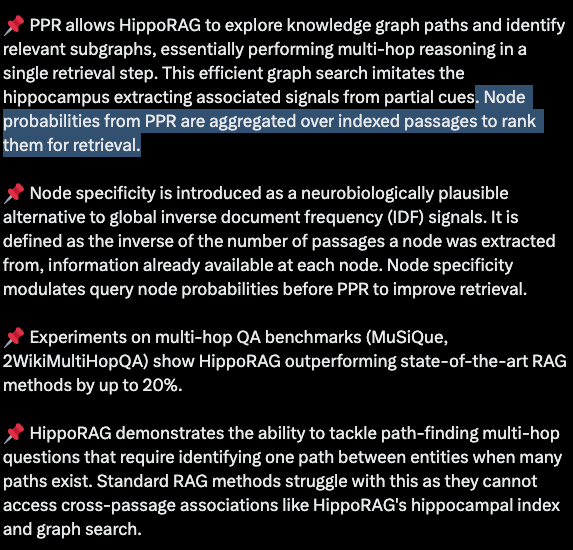

Today's paper isn't as great as MemGPT, but is indicative of what people are exploring. Though we are not big fans of natural intelligence models for artificial intelligence, the HippoRAG paper leans on "hippocampal memory indexing theory" to arrive at a useful implementation of knowledge grpahs and "Personalized PageRank" which probably stand on firmer empirical ground.

Ironically the best explanation of methodology comes from a Rohan Paul thread (we are not sure how he does so many of these daily):

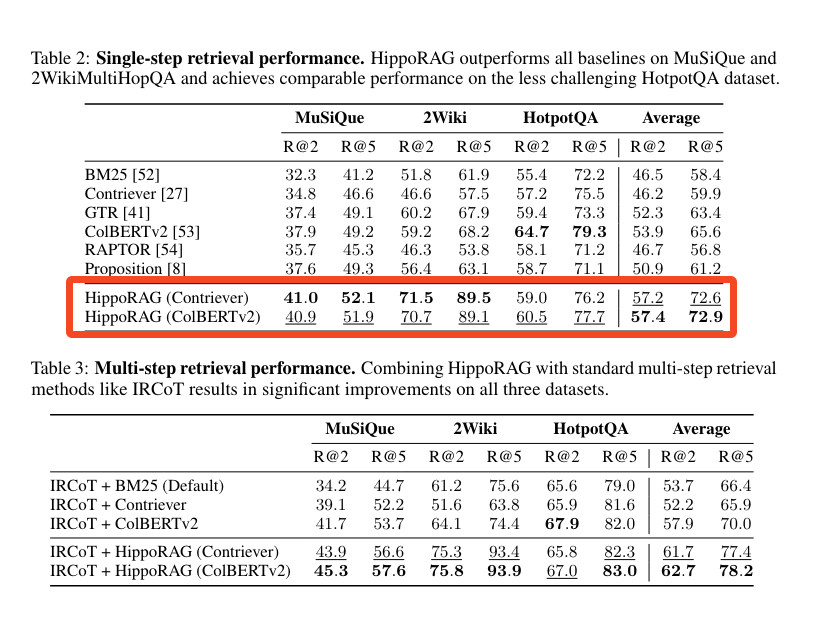

The Single-Step, Multi-Hop retrieval seems to be the key win vs comparable methods 10+ times slower and more expensive:

Section 6 offers a useful, concise literature review of the current techniques to emulate memory in LLM systems.

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

New AI Models and Architectures

- New SOTA open-source models from Alibaba: @huybery announced the release of Qwen2 models from Alibaba, with sizes ranging from 0.5B to 72B parameters. The models were trained on 29 languages and achieved SOTA performance on benchmarks like MMLU (84.32 for 72B) and HumanEval (86.0 for 72B). All models except the 72B are available under the Apache 2.0 license.

- Sparse Autoencoders for interpreting GPT-4: @nabla_theta introduced a new training stack for Sparse Autoencoders (SAEs) to interpret GPT-4's neural activity. The approach shows promise but still captures only a small fraction of behavior. It eliminates feature shrinking, sets L0 directly, and performs well on the MSE/L0 frontier.

- Hippocampus-inspired retrieval augmentation: @rohanpaul_ai overviewed the HippoRAG paper, which mimics the neocortex and hippocampus for efficient retrieval augmentation. It constructs a knowledge graph from the corpus and uses Personalized PageRank for multi-hop reasoning in a single step, outperforming SOTA RAG methods.

- Implicit chain-of-thought reasoning: @rohanpaul_ai described work on teaching LLMs to do chain-of-thought reasoning implicitly, without explicit intermediate steps. The proposed Stepwise Internalization method gradually removes CoT tokens during finetuning, allowing the model to reason implicitly with high accuracy and speed.

- Enhancing LLM reasoning with Buffer of Thoughts: @omarsar0 shared a paper proposing Buffer of Thoughts (BoT) to enhance LLM reasoning accuracy and efficiency. BoT stores high-level thought templates distilled from problem-solving and is dynamically updated. It achieves SOTA performance on multiple tasks with only 12% of the cost of multi-query prompting.

- Scalable MatMul-free LLMs competitive with SOTA Transformers: @rohanpaul_ai shared a paper claiming to create the first scalable MatMul-free LLM competitive with SOTA Transformers at billion-param scale. The model replaces MatMuls with ternary ops and uses Gated Recurrent/Linear Units. The authors built a custom FPGA accelerator processing models at 13W beyond human-readable throughput.

- Accelerating LoRA convergence with Orthonormal Low-Rank Adaptation: @rohanpaul_ai shared a paper on OLoRA, which accelerates LoRA convergence while preserving efficiency. OLoRA uses orthonormal initialization of adaptation matrices via QR decomposition and outperforms standard LoRA on diverse LLMs and NLP tasks.

Multimodal AI and Robotics Advancements

- Dragonfly vision-language models for fine-grained visual understanding: @togethercompute introduced Dragonfly models leveraging multi-resolution encoding & zoom-in patch selection. Llama-3-8b-Dragonfly-Med-v1 outperforms Med-Gemini on medical imaging.

- ShareGPT4Video for video understanding and generation: @_akhaliq shared the ShareGPT4Video series to facilitate video understanding in LVLMs and generation in T2VMs. It includes a 40K GPT-4 captioned video dataset, a superior arbitrary video captioner, and an LVLM reaching SOTA on 3 video benchmarks.

- Open-source robotics demo with Nvidia Jetson Orin Nano: @hardmaru highlighted the potential of open-source robotics, sharing a video demo of a robot using Nvidia's Jetson Orin Nano 8GB board, Intel RealSense D455 camera and mics, and Luxonis OAK-D-Lite AI camera.

AI Tooling and Platform Updates

- Infinity for high-throughput embedding serving: @rohanpaul_ai found Infinity awesome for serving vector embeddings via REST API, supporting various models/frameworks, fast inference backends, dynamic batching, and easy integration with FastAPI/Swagger.

- Hugging Face Embedding Container on Amazon SageMaker: @_philschmid announced general availability of the HF Embedding Container on SageMaker, improving embedding creation for RAG apps, supporting popular architectures, using TEI for fast inference, and allowing deployment of open models.

- Qdrant integration with Neo4j's APOC procedures: @qdrant_engine announced Qdrant's full integration with Neo4j's APOC procedures, bringing advanced vector search to graph database applications.

Benchmarks and Evaluation of AI Models

- MixEval benchmark correlates 96% with Chatbot Arena: @_philschmid introduced MixEval, an open benchmark combining existing ones with real-world queries. MixEval-Hard is a challenging subset. It costs $0.6 to run, has 96% correlation with Arena, and uses GPT-3.5 as parser/judge. Alibaba's Qwen2 72B tops open models.

- MMLU-Redux: re-annotated subset of MMLU questions: @arankomatsuzaki created MMLU-Redux, a 3,000 question subset of MMLU across 30 subjects, to address issues like 57% of questions in Virology containing errors. The dataset is publicly available.

- Questioning the continued relevance of MMLU: @arankomatsuzaki questioned if we're done with MMLU for evaluating LLMs, given saturation of SOTA open models, and proposed MMLU-Redux as an alternative.

- Discovering flaws in open LLMs: @JJitsev concluded from their AIW study that current SOTA open LLMs like Llama 3, Mistral, and Qwen are seriously flawed in basic reasoning despite claiming strong benchmark performance.

Discussions and Perspectives on AI

- Google paper on open-endedness for Artificial Superhuman Intelligence: @arankomatsuzaki shared a Google paper arguing ingredients are in place for open-endedness in AI, essential for ASI. It provides a definition of open-endedness, a path via foundation models, and examines safety implications.

- Debate on the viability of fine-tuning: @HamelHusain shared a talk by Emmanuel Kahembwe on "Why Fine-Tuning is Dead", sparking discussion. While not as bearish, @HamelHusain finds the talk interesting.

- Yann LeCun on AI regulation: In a series of tweets (1, 2, 3), @ylecun argued for regulating AI applications not technology, warning that regulating basic tech and making developers liable for misuse will kill innovation, stop open-source, and are based on implausible sci-fi scenarios.

- Debate on AI timelines and progress: Leopold Aschenbrenner's appearance on the @dwarkesh_sp podcast discussing his paper on AI progress and timelines (summarized by a user) sparked much debate, with views ranging from calling it an important case for an AI capability explosion to criticizing it for relying on assumptions of continued exponential progress.

Miscellaneous

- Perplexity AI commercial during NBA Finals: @AravSrinivas noted that the first Perplexity AI commercial aired during NBA Finals Game 1. @perplexity_ai shared the video clip.

- Yann LeCun on exponential trends and sigmoids: @ylecun argued that every exponential trend eventually passes an inflection point and saturates into a sigmoid as friction terms in the dynamics equation become dominant. Continuing an exponential requires paradigm shifts, as seen in Moore's Law.

- John Carmack on Quest Pro: @ID_AA_Carmack shared that he tried hard to kill the Quest Pro completely as he believed it would be a commercial failure and distract teams from more valuable work on mass market products.

- FastEmbed library adds new embedding types: @qdrant_engine announced FastEmbed 0.3.0 which adds support for image embeddings (ResNet50), multimodal embeddings (CLIP), late interaction embeddings (ColBERT), and sparse embeddings.

- Jokes and memes: Various jokes and memes were shared, including a GPT-4 GGUF outputting nonsense without flash attention (link), @karpathy's llama.cpp update in response to DeepMind's SAE paper (link), and commentary on LLM hype cycles and overblown claims (example).

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

Chinese AI Models

- KLING model generates videos: The Chinese KLING AI model has generated several videos of people eating noodles or burgers, positioned as a competitor to OpenAI's SORA. Users discuss the model's accessibility and potential impact. (Video 1, Video 2, Video 3, Video 4)

- Qwen2-72B language model released: Alibaba has released the Qwen2-72B Chinese language model on Hugging Face. It outperforms Llama 3 on various benchmarks according to comparison images. The official release blog is also linked.

AI Capabilities & Limitations

- Open source vs closed models: Screenshots demonstrate how closed models like Bing AI and CoPilot restrict information on certain topics, emphasizing the importance of open source alternatives. Andrew Ng argues that AI regulations should focus on applications rather than restricting open source model development.

- AI as "alien intelligence": Steven Pinker suggests AI models are a form of "alien intelligence" that we are experimenting on, and that the human brain may be similar to a large language model.

AI Research & Developments

- Extracting concepts from GPT-4: OpenAI research on using sparse autoencoders to identify interpretable patterns in GPT-4's neural network, aiming to make the model more trustworthy and steerable.

- Antitrust probes over AI: Microsoft and Nvidia are facing US antitrust investigations over their AI-related business moves.

- Extreme weight quantization: Research on achieving a 7.9x smaller Stable Diffusion v1.5 model with better performance than the original through extreme weight quantization.

AI Ethics & Regulation

- AI censorship concerns: Screenshots of Bing AI refusing to provide certain information spark discussion about AI censorship and the importance of open access to information.

- Testing AI for election risks: Anthropic discusses efforts to test and mitigate potential election-related risks in their AI systems.

- Criticism of using social media data: Plans to use Facebook and Instagram posts for training AI models face criticism.

AI Tools & Frameworks

- Higgs-Llama-3-70B for role-playing: Fine-tuned version of Llama-3 optimized for role-playing released on Hugging Face.

- Removing LLM censorship: Hugging Face blog post introduces "abliteration" method for removing language model censorship.

- Atomic Agents library: New open-source library for building modular AI agents with local model support.

AI Discord Recap

A summary of Summaries of Summaries

1. LLM Advancements and Optimization Challenges:

- Meta's Vision-Language Modeling Guide provides a comprehensive overview of VLMs, including training processes and evaluation methods, helping engineers understand mapping vision to language better.

- DecoupleQ from ByteDance aims to drastically improve LLM performance using new quantization methods, promising 7x compression ratios, though further speed benchmarks are anticipated (GitHub).

- GPT-4o's Upcoming Features include new voice and vision capabilities for ChatGPT Plus users and real-time chat for Alpha users. Read about it in OpenAI's tweet.

- Efficient Inference and Training Techniques like

torch.compilespeed up SetFit models, confirming the importance of experimenting with optimization parameters in PyTorch for performance gains. - FluentlyXL Final from HuggingFace introduces substantial improvements in aesthetics and lighting, enhancing the AI model's output quality (FluentlyXL).

2. Open-Source AI Projects and Resources:

- TorchTune facilitates LLM fine-tuning using PyTorch, providing a detailed repository on GitHub. Contributions like configuring

n_kv_headsfor mqa/gqa are welcomed with unit tests. - Unsloth AI's Llama3 and Qwen2 Training Guide offers practical Colab notebooks and efficient pretraining techniques to optimize VRAM usage (Unsloth AI blog).

- Dynamic Data Updates in LlamaIndex help keep retrieval-augmented generation systems current using periodic index refreshing and metadata filters in LlamaIndex Guide.

- AI Video Generation and Vision-LSTM techniques explore dynamic sequence generation and image reading capabilities (Twitter discussion).

- TopK Sparse Autoencoders train effectively on GPT-2 Small and Pythia 160M without caching activations on disk, helping in feature extraction (OpenAI's release).

3. Practical Issues in AI Model Implementation:

- Prompt Engineering in LangChain struggles with repeated steps and early stopping issues, urging users to look for fixes (GitHub issue).

- High VRAM Consumption with Automatic1111 for image generation tasks causes significant delays, highlighting the need for memory management solutions (Stability.ai chat).

- Qwen2 Model Troubleshooting reveals problems with gibberish outputs fixed by enabling flash attention or using proper presets (LM Studio discussions).

- Mixtral 8x7B Model Misconception Correction: Stanford CS25 clarifies it contains 256 experts, not just 8 (YouTube).

4. AI Regulation, Safety, and Ethical Discussions:

- Andrew Ng's Concerns on AI Regulation mimic global debates on AI innovation stifling; comparisons to Russian AI policy discussions reveal varying stances on open-source and ethical AI (YouTube).

- Leopold Aschenbrenner's Departure from OAI sparks fiery debates on the importance of AI security measures, reflecting divided opinions on AI safekeeping (OpenRouter discussions).

- AI Safety in Art Software: Adobe's requirement for access to all work, including NDA projects, prompts suggestions of alternative software like Krita or Gimp for privacy-concerned users (Twitter thread).

5. Community Tools, Tips, and Collaborative Projects:

- Predibase Tools and Enthusiastic Feedback: LoRAX stands out for cost-effective LLM deployment, even amid email registration hiccups (Predibase tools).

- WebSim.AI for Recursive Analysis: AI engineers share experiences using WebSim.AI for recursive simulations and brainstorming on valuable metrics derived from hallucinations (Google spreadsheet).

- Modular's MAX 24.4 Update introduces a new Quantization API and macOS compatibility, enhancing Generative AI pipelines with significant latency and memory reductions (Blog post).

- GPU Cooling and Power Solutions discussed innovative methods for setting up Tesla P40 and similar hardware with practical guides (GitHub guide).

- Experimentation and Learning Resources provided by tcapelle include practical notebooks and GitHub resources for fine-tuning and efficiency (Colab notebook).

PART 1: High level Discord summaries

LLM Finetuning (Hamel + Dan) Discord

-

RAG Revamp and Table Transformation Trials: Discontent over the formatting of markdown tables by Marker has led to discussions on fine-tuning the tool for improved output. Alternative table extraction tools like img2table are also under exploration.

-

Predictive Text for Python Pros with Predibase: Enthusiasm for Predibase credits and tools is noted, with LoRAX standing out for cost-effective, high-quality LLM deployment. Confirmation and email registration hiccups are prevalent, with requests for help directed to the registration email.

-

Open Discussions on Deep LLM Understanding: Posts from tcapelle offer deep dives into LLM fine-tuning with resources like slides and notebooks. Further, studies on pruning strategies highlight ways to streamline LLMs as shared in a NVIDIA GTC talk.

-

Cursor Code Editor Catches Engineers' Eyes: The AI code editor Cursor, which leverages API keys from OpenAI and other AI services, garners approval for its codebase indexing and improvements in code completion, even tempting users away from GitHub Copilot.

-

Modal GPU Uses and Gists Galore: Modal's VRAM use and A100 GPUs are appraised alongside posted gists for Pokemon card descriptions and Whisper adaptation tips._GPU availability inconsistencies are flagged, while dashboard absence for queue status is noted.

-

Learning with Vector and OpenPipe: The discussion included resources for building vector systems with VectorHub's RAG-related content, and articles on the OpenPipe blog received spotlighting for their contribution to the conversation.

-

Struggles with Finetuning Tools and Data: Issues with downloading course session recordings are being navigated, as Bulk tool development picks up motivated by the influx of synthetic datasets. Assistance for local Docker space quandaries during Replicate demos was sought without a solution in the chat logs.

-

LLM Fine-Tuning Fixes in the Making: A lively chat around fine-tuning complexities unfolded, addressing the concerns over merged Lora model shard anomalies, and proposed fine-tuning preferences such as Mistral Instruct templates for DPO finetuning. Interesting, the output discrepancy with token space assembly in Axolotl raised eyebrows, and conversations were geared towards debugging and potential solutions.

Perplexity AI Discord

-

Starship Soars and Splashes Successfully: SpaceX's Starship test flight succeeded with landings in two oceans, turning heads in the engineering community; the successful splashdowns in both the Gulf of Mexico and the Indian Ocean indicate marked progress in the program according to the official update.

-

Spiced Up Curry Comparisons: Engineers with a taste for international cuisine analyzed the differences between Japanese, Indian, and Thai curries, noting unique spices, herbs, and ingredients; a detailed breakdown was circulated that provided insight into each type's historical origins and typical recipes.

-

Promotional Perplexity Puzzles Participants: Disappointment bubbled among users expecting a noteworthy update from Perplexity AI's "The Know-It-Alls" ad; instead, it was a promotional video, leaving many feeling it was more of a tease than a substantive reveal as discussed in general chat.

-

AI Community Converses Claude 3 and Pro Search: Discussion flourished over different AI models like Pro Search and Claude 3; details about model preferences, their search abilities, and user experiences were hot topics, alongside the removal of Claude 3 Haiku from Perplexity Labs.

-

llava Lamentations and Beta Blues in API Channel: API users inquired about the integration of the llava model and vented over the seemingly closed nature of beta testing for new sources, showing a strong desire for more transparency and communication from the Perplexity team.

HuggingFace Discord

-

Electrifying Enhancement with FluentlyXL: The eagerly-anticipated FluentlyXL Final version is now available, promising substantial enhancements in aesthetics and lighting, as detailed on its official page. Additionally, green-minded tech enthusiasts can explore the new Carbon Footprint Predictor to gauge the environmental impact of their projects (Carbon Footprint Predictor).

-

Innovations Afoot in AI Model Development: Budding AI engineers are exploring the fast-evolving possibilities within different scopes of model development, from SimpleTuner's new MoE support in version 0.9.6.2 (SimpleTuner on GitHub) to a TensorFlow-based ML Library with its source code and documentation available for peer review on GitHub.

-

AI's Ascendancy in Medical and Modeling Musings: A recent YouTube video offers insights into the escalating role of genAI in medical education, highlighting the benefits of tools like Anki and genAI-powered searches (AI in Medical Education). In the open-source realm, the TorchTune project kindles interest for facilitating fine-tuning of large language models, an exploration narrated on GitHub.

-

Collider of Ideas in Computer Vision: Enthusiasts are pooling their knowledge to create valuable applications for Vision Language Models (VLMs), with community members sharing new Hugging Face Spaces Apps Model Explorer and HF Extractor that prove instrumental for VLM app development (Model Explorer, HF Extractor, and a relevant YouTube video).

-

Engaging Discussions and Demonstrations: Multi-node fine-tuning of LLMs was a topic of debate, leading to a share of an arXiv paper on Vision-Language Modeling, while the Diffusers GitHub repository was highlighted for text-to-image generation scripts that could also serve in model fine-tuning (Diffusers GitHub). A blog post offering optimization insights for native PyTorch and a training example notebook for those eager to train models from scratch were also circulated.

Stability.ai (Stable Diffusion) Discord

- AI Newbies Drowning in Options: A community member expressed both excitement and overwhelm at the sheer number of AI models to explore, capturing the sentiment many new entrants to the field experience.

- ControlNet's Speed Bump: User arti0m reported unexpected delays with ControlNet, resulting in image generation times of up to 20 minutes, contrary to the anticipated speed increase.

- CosXL's Broad Spectrum Capture: The new CosXL model from Stability.ai boasts a more expansive tonal range, producing images with better contrast from "pitch black" to "pure white." Find out more about it here.

- VRAM Vanishing Act: Conversations surfaced about memory management challenges with the Automatic1111 web UI, which appears to overutilize VRAM and affect the performance of image generation tasks.

- Waterfall Scandal Makes Waves: A lively debate ensued about a viral fake waterfall scandal in China, leading to broader discussion on its environmental and political implications.

Unsloth AI (Daniel Han) Discord

-

Adapter Reloading Raises Concerns: Members are experiencing issues when attempting to continue training with model adapters, specifically when using

model.push_to_hub_merged("hf_path"), where loss metrics unexpectedly spike, pointing to potential mishandling in saving or loading processes. -

LLM Pretraining Enhanced with Special Techniques: Unsloth AI's blog outlines the efficiency of continued pretraining for languages like Korean using LLMs such as Llama3, which promises reduced VRAM use and accelerated training, alongside a useful Colab notebook for practical application.

-

Qwen2 Model Ushers in Expanded Language Support: Announcing support for Qwen2 model that boasts a substantial 128K context length and coverage for 27 languages, with fine-tuning resources shared by Daniel Han on Twitter.

-

Grokking Explored: Discussions delved into a newly identified LLM performance phase termed "Grokking," with community members referencing a YouTube debate and providing links to supporting research for further exploration.

-

NVLink VRAM Misconception Corrected: Clarity was provided on NVIDIA NVLink technology, with members explaining that NVLink does not amalgamate VRAM into a single pool, debunking a misconception about its capacity to extend accessible VRAM for computation.

CUDA MODE Discord

-

Triton Simplifies CUDA: The Triton language is being recognized for its simplicity in CUDA kernel launches using the grid syntax (

out = kernel[grid](...)) and for providing easy access to PTX code (out.asm["ptx"]) post-launch, enabling a more streamlined workflow for CUDA developers. -

Tensor Troubles in TorchScript and PyTorch Profiling: The inability to cast tensors in torchscript using

view(dtype)caused frustration among engineers looking for bit manipulation capabilities with bfloat16s. Meanwhile, the PyTorch profiler was highlighted for its utility in providing performance insights, as shared in a PyTorch profiling tutorial. -

Hinting at Better LoRA Initializations: A blog post Know your LoRA was shared, suggesting that the A and B matrices in LoRA could benefit from non-default initializations, potentially improving fine-tuning outcomes.

-

Note Library Unveils ML Efficiency: The Note library's GitHub repository was referenced for offering an ML library compatible with TensorFlow, promising parallel and distributed training across models including Llama2, Llama3, and more.

-

Quantum Leaps in LLM Quantization: The channel engaged in deep discussion about ByteDance's 2-bit quantization algorithm, DecoupleQ, and a link to a NeurIPS 2022 paper on an approach improving over the Straight-Through Estimator for quantization was provided, pinpointing considerations for memory and computation in the quantization process.

-

AI Framework Discussions Heat Up: The LLVM.c community delved into discussions ranging from supporting Triton and AMD, addressing BF16 gradient norm determinism, and future support for models like Llama 3. Topics also touched on ensuring 100% determinism in training and considered using FineWeb as a dataset, amid considerations for scaling and diversifying data types.

OpenAI Discord

-

Plagiarism Strikes Research Papers: Five research papers were retracted for plagiarism due to inadvertently including AI prompts within their content; members responded with a mix of humor and disappointment to the oversight.

-

Haiku Model: Affordable Quality: Enthusiastic discussions surfaced regarding the Haiku AI model, lauded for its cost-efficiency and commendable performance, even being compared to "gpt 3.5ish quality".

-

AI Moderation: A Double-Edged Sword?: The guild was abuzz with the pros and cons of employing AI for content moderation on platforms like Reddit and Discord, weighing the balance between automated action and human oversight.

-

Mastering LLMs via YouTube: Members shared beneficial YouTube resources for understanding LLMs better, singling out Kyle Hill's ChatGPT Explained Completely and 3blue1brown for their compelling mathematical explanations.

-

GPT's Shifting Capabilities: GPT-4o is being introduced to all users, with new voice and vision capabilities earmarked for ChatGPT Plus. Meanwhile, the community is contending with frequent modification notices for custom GPTs and challenges in utilizing GPT with CSV attachments.

LM Studio Discord

-

Celebration and Collaboration Within LM Studio: LM Studio marks its one-year milestone, with discourse on utilizing multiple GPUs—Tesla K80 and 3090 recommended for consistency—and running multiple instances for inter-model communication. Emphasis on GPUs over CPUs for LLMs highlighted, alongside practicality issues presented when considering LM Studio's use on powerful hardware like PlayStation 5 APUs.

-

Higgs Enters with a Bang: Anticipation is high for an LMStudio update which will incorporate the impressive Higgs LLAMA, a hefty 70-billion parameter model that could potentially offer unprecedented capabilities and efficiencies for AI engineers.

-

Curveballs and Workarounds in Hardware: GPU cooling and power supply for niche hardware like the Tesla P40 stir creative discussions, from jury-rigging Mac GPU fans to elaborate cardboard ducts. Tips include exploring a GitHub guide to deal with the bindings of proprietary connections.

-

Model-Inclusive Troubleshooting: Fixes for Qwen2 gibberish involve toggling flash attention, while the perils of cuda offloading with Qwen2 are acknowledged with anticipation for llama.cpp updates. A member's experience of mixed results with llava-phi-3-mini-f16.gguf via API stirs further model diagnostics chat.

-

Fine-Tuning Fine Points: A nuanced take on fine-tuning highlights style adjustments via LoRA versus SFT's knowledge-based tuning; LM Studio's limitations on system prompt names sans training; and strategies to counter 'lazy' LLM behaviors, such as power upgrades or prompt optimizations.

-

ROCm Rollercoaster with AMD Technology: Users exchange tips and experiences on enabling ROCm on various AMD GPUs, like the 6800m and 7900xtx, with suggestions for Arch Linux use and workarounds for Windows environments to optimize the performance of their LLM setups.

Latent Space Discord

- AI Engineers, Get Ready to Network: Engineers interested in the AI Engineer event will have session access with the Expo Explorer ticket, though the speaker lineup is still to be finalized.

- KLING Equals Sora: KWAI's new Sora-like model calledKLING is generating buzz with its realistic demonstrations, as showcased in a tweet thread by Angry Tom.

- Unpacking GPT-4o's Imagery: The decision by OpenAI to use 170 tokens for processing images in GPT-4o is dissected in an in-depth post by Oran Looney, discussing the significance of "magic numbers" in programming and their latest implications on AI.

- 'Hallucinating Engineers' Have Their Say: The concept of GPT's "useful-hallucination paradigm" was debated, highlighting its potential to conjure up beneficial metrics, with parallels being drawn to "superprompts" and community-developed tools like Websim AI.

- Recursive Realities and Resource Repository: AI enthusiasts experimented with the self-referential simulations of websim.ai, while a Google spreadsheet and a GitHub Gist were shared for collaboration and expansive discussion in future sessions.

Nous Research AI Discord

-

Mixtral's Expert Count Revealed: An enlightening Stanford CS25 talk clears up a misconception about Mixtral 8x7B, revealing it contains 32x8 experts, not just 8. This intricacy highlights the complexity behind its MoE architecture.

-

DeepSeek Coder Triumphs in Code Tasks: As per a shared introduction on Hugging Face, the DeepSeek Coder 6.7B takes the lead in project-level code completion, showcasing superior performance trained on a massive 2 trillion code tokens.

-

Meta AI Spells Out Vision-Language Modeling: Meta AI offers a comprehensive guide on Vision-Language Models (VLMs) with "An Introduction to Vision-Language Modeling", detailing their workings, training, and evaluation for those enticed by the fusion of vision and language.

-

RAG Formatting Finesse: The conversation around RAG dataset creation underscores the need for simplicity and specificity, rejecting cookie-cutter frameworks and emphasizing tools like Prophetissa that utilize Ollama and emo vector search for dataset generation.

-

WorldSim Console's Mobile Mastery: The latest WorldSim console update remedies mobile user interface issues, improving the experience with bug fixes on text input, enhanced

!listcommands, and new settings for disabling visual effects, all while integrating versatile Claude models.

LlamaIndex Discord

-

RAG Steps Toward Agentic Retrieval: A recent talk at SF HQ highlighted the evolution of Retrieval-Augmented Generation (RAG) to fully agentic knowledge retrieval. The move aims to overcome the limitations of top-k retrieval, with resources to enhance practices available through a video guide.

-

LlamaIndex Bolsters Memory Capabilities: The Vector Memory Module in LlamaIndex has been introduced to store and retrieve user messages through vector search, bolstering the RAG framework. Interested engineers can explore this feature via the shared demo notebook.

-

Enhanced Python Execution in Create-llama: Integration of Create-llama with e2b_dev’s sandbox now permits Python code execution within agents, an advancement that enables the return of complex data, such as graph images. This new feature broadens the scope of agent applications as detailed here.

-

Synchronizing RAG with Dynamic Data: Implementing dynamic data updates in RAG involves reloading the index to reflect recent changes, a challenge addressed by using periodic index refreshing. Management of datasets, like sales or support documentation, can be optimized through multiple indexes or metadata filters, with practices outlined in Document Management - LlamaIndex.

-

Optimizations and Entity Resolution with Embeddings in LlamaIndex: Creating property graphs with embeddings directly uses the LlamaIndex framework, and entity resolution can be enhanced by adjusting the

chunk_sizeparameter. Managing these functions can be better understood through guides like "Optimization by Prompting" for RAG and the LlamaIndex Guide.

Modular (Mojo 🔥) Discord

-

Andrew Ng Rings Alarm Bells on AI Regulation: Andrew Ng cautions against California's SB-1047, fearing it could hinder AI advancements. Engineers in the guild compare global regulatory landscapes, highlighting that even without U.S. restrictions, countries like Russia lack comprehensive AI policy, as seen in a video with Putin and his deepfake.

-

Mojo Gains Smarter, Not Harder: The

isdigit()function's reliance onord()for performance is confirmed, leading to an issue report when problems arise. Async capabilities in Mojo await further development, and__type_ofis suggested for variable type checks, with the VSCode extension assisting in pre/post compile identification. -

MACS Make Their MAX Debut: Modular's MAX 24.4 release now supports macOS, flaunts a Quantization API, and community contributors surpass the 200 mark. The update can potentially slash latency and memory usage significantly for AI pipelines.

-

Dynamic Python in the Limelight: The latest nightly release enables dynamic

libpythonselection, helping streamline the environment setup for Mojo. However, pain points persist with VS Code's integration, necessitating manual activation of.venv, detailed in the nightly changes along with the introduction of microbenchmarks. -

Anticipation High for Windows Native Mojo: Engineers jest and yearn for the pending Windows native Mojo release, its timeline shrouded in mystery. The eagerness for such a release underscores its importance to the community, suggesting substantial Windows-based developer interest.

Eleuther Discord

-

Engineers Get Hands on New Sparse Autoencoder Library: The recent tweet by Nora Belrose introduces a training library for TopK Sparse Autoencoders, optimized on GPT-2 Small and Pythia 160M, that can train an SAE for all layers simultaneously without the need to cache activations on disk.

-

Advancements in Sparse Autoencoder Research: A new paper reveals the development of k-sparse autoencoders that enhance the balance between reconstruction quality and sparsity, which could significantly influence the interpretability of language model features.

-

The Next Leap for LLMs, Courtesy of the Neocortex: Members discussed the Thousand Brains Project by Jeff Hawkins and Numenta, which looks to implement the neocortical principles into AI, focusing on open collaboration—a nod to nature's complex systems for aspiring engineers.

-

Evaluating Erroneous File Path Chaos: Addressing a known issue, members reassured that file handling, particularly erroneous result file placements—to be located in the tmp folder—is on the fix list, as indicated by the ongoing PR by KonradSzafer.

-

Unearthing the Unpredictability of Data Shapley: Discourse on an arXiv preprint unfolded, evaluating Data Shapley's inconsistent performance in data selection across diverse settings, suggesting engineers should keep an eye on the proposed hypothesis testing framework for its potential in predicting Data Shapley’s effectiveness.

Interconnects (Nathan Lambert) Discord

-

AI Video Synthesis Wars Heat Up: A Chinese AI video generator has outperformed Sora, offering stunning 2-minute, 1080p videos at 30fps through the KWAI iOS app, generating notable attention in the community. Meanwhile, Johannes Brandstetter announces Vision-LSTM which incorporates xLSTM's capacity to read images, providing code and a preprint on arxiv for further exploration.

-

Anthropic's Claude API Access Expanded: Anthropic is providing API access for alignment research, requiring an institution affiliation, role, LinkedIn, Github, and Google Scholar profiles for access requests, facilitating deeper exploration into AI alignment challenges.

-

Daylight Computer Sparks Interest: The new Daylight computer lured significant interest due to its promise of reducing blue light emissions and enhancing visibility in direct sunlight, sparking discussions about its potential benefits over existing devices like the iPad mini.

-

New Frontiers in Model Debugging and Theory: Engaging conversations unfolded around novel methods analogous to "self-debugging models," which leverage mistakes to improve outputs, alongside discussions on the craving for analytical solutions in complex theory-heavy papers like DPO.

-

Challenges in Deepening Robotics Discussions: Members pressed for deeper insights into monetization strategies and explicit numbers in robotics content, with specific callouts for a more granulated breakdown of the "40000 high-quality robot years of data" and a closer examination of business models in the space.

Cohere Discord

-

Free Translation Research with Aya but Costs for Commercial Use: While Aya is free for academic research, commercial applications require payment to sustain the business. Users facing integration challenges with the Vercel AI SDK and Cohere can find guidance and have taken steps to contact SDK maintainers for support.

-

Clever Command-R-Plus Outsmarts Llama3: Users suggest that Command-R-Plus outperforms Llama3 in certain scenarios, citing subjective experiences with its performance outside language specifications.

-

Data Privacy Options Explored for Cohere Usage: For those concerned with data privacy when using Cohere models, details and links were shared on how to utilize these models on personal projects either locally or on cloud services like AWS and Azure.

-

Developer Showcases Full-Stack Expertise: A full-stack developer portfolio is available, showcasing skills in UI/UX, Javascript, React, Next.js, and Python/Django. The portfolio can be reviewed at the developer's personal website.

-

Spotlight on GenAI Safety and New Search Solutions: Rafael is working on a product to prevent hallucinations in GenAI applications and invites collaboration, while Hamed has launched Complexity, an impressive generative search engine, inviting users to explore it at cplx.ai.

OpenRouter (Alex Atallah) Discord

-

Qwen 2 Supports Korean Too: Voidnewbie mentioned that the Qwen 2 72B Instruct model, recently added to OpenRouter's offerings, supports Korean language as well.

-

OpenRouter Battles Gateway Gremlins: Several users encountered 504 gateway timeout errors with the Llama 3 70B model; database strain was identified as the culprit, prompting a migration of jobs to a read replica to improve stability.

-

Routing Woes Spur Technical Dialogue: Members reported WizardLM-2 8X22 producing garbled responses via DeepInfra, leading to advice to manipulate the

orderfield in request routing and allusions to an in-progress internal endpoint deployment to help resolve service provider issues. -

Fired Up Over AI Safety: The dismissal of Leopold Aschenbrenner from OAI kicked off a fiery debate among members about the importance of AI security, reflecting a divide in perspectives on the need for and implications of AI safekeeping measures.

-

Performance Fluctuations with ChatGPT: Observations were shared about ChatGPT's possible performance drops during high-traffic periods, sparking speculations about the effects of heavy load on service quality and consistent user experience.

OpenAccess AI Collective (axolotl) Discord

Flash-attn Installation Demands High RAM: Members highlighted difficulties when building flashattention on slurm; solutions include loading necessary modules to provide adequate RAM.

Finetuning Foibles Fixed: Configuration issues with Qwen2 72b's finetuning were reported, suggesting a need for another round of adjustments, particularly because of an erroneous setting of max_window_layers.

Guide Gleam for Multi-Node Finetuning: A pull request for distributed finetuning using Axolotl and Deepspeed was shared, signifying an increase in collaborative development efforts within the community.

Data Dilemma Solved: A member's struggle with configuring a test_datasets in JSONL format was resolved by adopting the structure specified for axolotl.cli.preprocess.

API Over YAML for Engineered Inferences: Confusion over Axolotl's configuration for API usage versus YAML setups was clarified, with a focus on broadening capabilities for scripted, continuous model evaluations.

LAION Discord

- A Contender to Left-to-Right Sequence Generation: The novel σ-GPT, developed with SkysoftATM, challenges traditional GPTs by generating sequences dynamically, potentially cutting steps by an order of magnitude, detailed in its arXiv paper.

- Debating σ-GPT's Efficient Learning: Despite σ-GPT's innovative approach, skepticism arises regarding its practicality, as a curriculum for high performance might limit its use, drawing parallels with XLNET's limited impact.

- Exploring Alternatives for Infilling Tasks: For certain operations, models like GLMs may prove to be more efficient, while finetuning with distinct positional embeddings could enhance RL-based non-textual sequence modeling.

- AI Video Generation Rivalry Heats Up: A new Chinese AI video generator on the KWAI iOS app churns out 2-minute videos at 30fps in 1080p, causing buzz, while another generator, Kling, with its realistic capabilities, is met with skepticism regarding its authenticity.

- Community Reacts to Schelling AI Announcement: Emad Mostaque's tweet about Schelling AI, which aims to democratize AI and AI compute mining, ignites a mix of skepticism and humor due to the use of buzzwords and ambitious claims.

LangChain AI Discord

-

Early Stopping Snag in LangChain: Discussions highlighted an issue with the

early_stopping_method="generate"option in LangChain not functioning as expected in newer releases, prompting a user to link an active GitHub issue. The community is exploring workarounds and awaiting an official fix. -

RAG and ChromaDB Privacy Concerns: Queries about enhancing data privacy when using LangChain with ChromaDB surfaced, with suggestions to utilize metadata-based filtering within vectorstores, as discussed in a GitHub discussion, though acknowledging the topic's complexity.

-

Prompt Engineering for LLaMA3-70B: Engineers brainstormed effective prompting techniques for LLaM3-70B to perform tasks without redundant prefatory phrases. Despite several attempts, no definitive solution emerged from the shared dialogue.

-

Apple Introduces Generative AI Guidelines: An engineer shared Apple's newly formulated generative AI guiding principles aimed at optimizing AI operations on Apple's hardware, potentially useful for AI application developers.

-

Alpha Testing for B-Bot App: An announcement for a closed alpha testing phase of the B-Bot application, a platform for expert knowledge exchange, was made, with invites extended here seeking testers to provide development feedback.

Torchtune Discord

-

Phi-3 Model Export Confusion Cleared Up: Users addressed issues with exporting a custom phi-3 model to and from Hugging Face, pinpointing potential config missteps from a GitHub discussion. It was noted that using the FullModelHFCheckpointer, Torchtune handles conversions between its format and HF format during checkpoints.

-

Clarification and Welcome for PRs: Inquiry about enhancing Torchtune with n_kv_heads for mqa/gqa was met with clarification and encouragement for pull requests, with the prerequisite of providing unit tests for any proposed changes.

-

Dependencies Drama in Dev Discussions: Engineers emphasized precise versioning of dependencies for Torchtune installation and highlighted issues stemming from version mismatches, referencing situations like Issue #1071, Issue #1038, and Issue #1034.

-

Nightly Builds Get a Nod: A consensus emerged on the necessity of clarifying the need for PyTorch nightly builds to use Torchtune's complete feature set, as some features are exclusive to these builds.

-

PR Prepped for Clearer Installation: A community member announced they are prepping a PR specifically to update the installation documentation of Torchtune, to address the conundrum around dependency versioning and the use of PyTorch nightly builds.

AI Stack Devs (Yoko Li) Discord

-

Game Over for Wokeness: Discussion in the AI Stack Devs channel revolved around the gaming studios like those behind Stellar Blade; members applauded the studios' focus on game quality over aspects such as Western SJW themes and DEI measures.

-

Back to Basics in Gaming: Members expressed admiration for Chinese and South Korean developers like Shift Up for concentrating on game development without getting entangled in socio-political movements such as feminism, despite South Korea's societal challenges with such issues.

-

Among AI Town - A Mod in Progress: An AI-Powered "Among Us" mod was the subject of interest, with game developers noting AI Town's efficacy in the early stages, albeit with some limitations and performance issues.

-

Leveling Up With Godot: A transition from AI Town to using Godot was mentioned in the ai-town-discuss channel as a step to add advanced features to the "Among Us" mod, signifying improvements and expansion beyond initial capabilities.

-

Continuous Enhancement of AI Town: AI Town's development continues, with contributors pushing the project forward, as indicated in the recent conversations about ongoing advancements and updates.

OpenInterpreter Discord

-

Is There a Desktop for Open Interpreter?: A guild member queried about the availability of a desktop UI for Open Interpreter, but no response was provided in the conversation.

-

Open Interpreter Connection Trials and Tribulations: A user was struggling with Posthog connection errors, particularly with

us-api.i.posthog.com, which indicates broader issues within their setup or external service availability. -

Configuring Open Interpreter with OpenAPI: Discussion revolved around whether Open Interpreter can utilize existing OpenAPI specs for function calling, suggesting a potential solution through a true/false toggle in some configuration.

-

Tool Use with Gorilla 2: Challenges with tool use in LM Studio and achieving success with custom JSON output and OpenAI toolcalling were shared. A recommendation was made to check out an OI Streamlit repository on GitHub for possible solutions.

-

Looking for Tips? Check OI Website: In a succinct reply to a request, ashthescholar. directed a member to explore Open Interpreter's website for guidance.

DiscoResearch Discord

-

Mixtral's Expert System Unveiled: A clarifying YouTube video dispels the myth about Mixtral, confirming it comprises 256 experts across its layers and boasting a staggering 46.7 billion parameters, with 12.9 billion active parameters for token interactions.

-

Which DiscoLM Reigns Supreme?: Confusion permeates discussions over the leading DiscoLM model, with multiple models vying for the spotlight and recommendations favoring 8b llama for systems with just 3GB VRAM.

-

Maximizing Memory on Minimal VRAM: A user successfully runs Mixtral 8x7b at 6-7 tokens per second on a 3GB VRAM setup using a Q2-k quant, highlighting the importance of memory efficiency in model selection.

-

Re-evaluating Vagosolutions' Capabilities: Recent benchmarks have sparked new interest in Vagosolutions' models, leading to debates on whether finetuning Mixtral 8x7b could triumph over a finetuned Mistral 7b.

-

RKWV vs Transformers - Decoding the Benefits: The guild has yet to address the request for insights into the intuitive advantages of RKWVs compared to Transformers, suggesting either a potential oversight or the need for more investigation.

Datasette - LLM (@SimonW) Discord

-

LLMs Eyeing New Digital Territories: Members shared developments hinting at large language models (LLMs) being integrated into web platforms, with Google considering LLMs for Chrome (Chrome AI integration) and Mozilla experimenting with transformers.js for local alt-text generation in Firefox Nightly (Mozilla experiments). The end game speculated by users is a deeper integration of AI at the operating system level.

-

Prompt Injection Tricks and Trades: An interesting use case of prompt injection to manipulate email addresses was highlighted through a member's LinkedIn experience and discussed along with a link showcasing the concept (Prompt Injection Insights).

-

Dash Through Dimensions for Text Analysis: A guild member delved into the concept of measuring the 'velocity of concepts' in text, drawing ideas from a blog post (Concept Velocity Insight) and showed interest in applying these concepts to astronomy news data.

-

Dimensionality: A Visual Frontier for Embeddings: Members appreciated a Medium post (3D Visualization Techniques) for explanations on dimensionality reduction using PCA, t-SNE, and UMAP, which helped visualize 200 astronomy news articles.

-

UMAP Over PCA for Stellar Clustering: It was found that UMAP provided significantly better clustering of categorized news topics, such as the Chang'e 6 moonlander and Starliner, over PCA, when labeling was done with GPT-3.5.

tinygrad (George Hotz) Discord

-

Hotz Challenges Taylor Series Bounty Assumptions: George Hotz responded to a question about Taylor series bounty requisites with a quizzical remark, prompting reconsideration of assumed requirements.

-

Proof Logic Put Under the Microscope: A member's perplexity regarding the validity of an unidentified proof sparked a debate, questioning the proof's logic or outcome.

-

Zeroed Out on Symbolic Shape Dimensions: A discussion emerged on whether a symbolic shape dimension can be zero, indicating interest in the limits of symbolic representations in tensor operations.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

LLM Finetuning (Hamel + Dan) ▷ #general (12 messages🔥):

- RAG means something silly?: Members joked about the meaning of RAG, suggesting it stands for "random-ass guess".

- Open-Source Model Training Misstep: One member shared insights on the misconception caused by open-source models' training steps and epochs, highlighting that "Num epochs = max_steps / file_of_training_jsonls_in_MB" led to 30 epochs for a 31 MB file.

- Mistral Fine-Tuning Repo Shared: A contributor shared a GitHub repository created for using the fine-tuning notebook from Mistral in Modal, while another shared modal-labs' guide noting issues with outdated deepspeed docs.

- Hybrid Search Inquiries: Questions were raised about normalizing BM25 scores and weighting them when combining with dense vector search. A member recommended a blog post for further reading.

- Accessing Old Zoom Recordings: A request for accessing old Zoom recordings was answered with a note to check on the Maven site.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #asia-tz (1 messages):

_ribhu: Hey I could help with that. Can you DM with the details?

LLM Finetuning (Hamel + Dan) ▷ #🟩-modal (17 messages🔥):

-

Enjoying Modal's VRAM and GPU power: A member praised the use of 10 A100 GPUs on Modal and inquired about VRAM usage visibility. They also shared a script for Pokemon cards description using Moondream VLM.

-

Installing flash-attn made easier: They sought tips for pip installing

flash-attnand later found a useful gist for Whisper which they adapted for their needs. The gist can be found here. -

Modal's cost efficiency impresses users: Running 13k images in 10 minutes using 10x A100 (40G) for just $7 was highlighted as very cost-effective. This was met with positive reactions from other members.

-

Issues with GPU availability: A member reported a 17-minute wait for an A10, which was unusual according to another member. They later confirmed it was working fine and any issues should be flagged for review by platform engineers.

-

Lack of queue status visibility: Another user, trying to get an H100 node, asked about dashboard availability for viewing queue status. It was clarified that while there are dashboards for running/deployed apps, there isn't one for seeing the queue status or availability estimates.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #learning-resources (2 messages):

-

Explore Vector-Powered Systems with VectorHub: A member shared a link to VectorHub by Superlinked, which offers resources on RAG, text embeddings, and vectors. The platform is a free educational and open-source resource for data scientists and software engineers to build vector-powered systems.

-

Relevant Articles on OpenPipe: Another member posted a link to the OpenPipe blog for relevant articles. The blog contains insights and information pertinent to the discussion topics within this channel.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #jarvis-labs (2 messages):

-

Credit where it's due for Cloud GPU Platform: A member thanked Vishnu for creating an awesome cloud GPU platform and shared a blog post about using it with Axolotl. They shared a tweet and detailed blog post discussing experiences from an LLM course and conference.

-

Crash Report on Huggingface Dataset Processing: A user reported a crash while processing a Huggingface dataset on an A6000 with 32 GB VRAM. They provided a gist link to the error details and mentioned that the process works fine on their 32 GB Dell laptop.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #hugging-face (3 messages):

- New form for credits opens on October 10th: A member announced that a new form for obtaining credits will officially re-open on Monday the 10th of October. The form will stay open until the 18th, allowing new students signing up by the 15th to receive credits.

- Missing credits concern: Another member expressed concern about missing credits that were previously in their account. They were advised to contact [email protected] to resolve the issue.

LLM Finetuning (Hamel + Dan) ▷ #replicate (1 messages):

- Local Disk Space Issues with Docker: A member reported issues with running

cog runandcog pushcommands during an attempted Replicate demo. They suspect their local computer lacks sufficient disk space and inquired about the possibility of conducting the build process remotely.

LLM Finetuning (Hamel + Dan) ▷ #langsmith (8 messages🔥):

-

Credit Access Clarification: Multiple users discussed issues related to credit availability and access. It was highlighted multiple times that "the credits were deposited regardless of if you set billing up. You just need a payment method on file in order to access the credits."

-

Form and Org ID Details: One user requested specific details about their org ID (b9e3d34d-3c3c-4528-8e2f-2b31075b47fd) for billing purposes. Follow-up prompts were made to confirm if they filled out a form previously, with an open offer to coordinate over email at [email protected] for further assistance.

LLM Finetuning (Hamel + Dan) ▷ #workshop-4 (1 messages):

- Predibase Office Hours Rescheduled: The Predibase office hours will now take place on Wednesday, June 12 at 10am PT. Topics include LoRAX, multi-LoRA inference, and fine-tuning for speed with speculative decoding.

LLM Finetuning (Hamel + Dan) ▷ #jason_improving_rag (64 messages🔥🔥):

<ul>

<li>

<strong>Marker disappoints with malformed markdown tables:</strong> A user expressed frustration with the <a href="https://github.com/VikParuchuri/marker/tree/master">Marker tool for converting PDFs to markdown</a>, explaining that the markdown tables often don't meet their requirements. This triggered a discussion about potentially fine-tuning the tool to improve table formatting.

</li>

<li>

<strong>Exploring embedding quantization:</strong> The utility of <a href="https://huggingface.co/blog/embedding-quantization">quantized embeddings</a> was discussed, highlighting a demo of a real-life retrieval scenario involving 41 million Wikipedia texts. The blog post covers the impact of embedding quantization on retrieval speed, memory usage, disk space, and cost.

</li>

<li>

<strong>GitHub repository for RAG complexities:</strong> A member shared a link to the <a href="https://github.com/jxnl/n-levels-of-rag">n-levels-of-rag</a> GitHub repository and a related <a href="https://jxnl.github.io/blog/writing/2024/02/28/levels-of-complexity-rag-applications/">blog post</a>, providing a comprehensive guide for understanding and implementing RAG applications across different levels of complexity.

</li>

<li>

<strong>Tackling table extraction challenges:</strong> An alternative tool for table extraction was discussed, with a user recommending <a href="https://github.com/xavctn/img2table">img2table</a>, an OpenCV-based library for identifying and extracting tables from PDFs and images. Users shared their experiences and potential improvements for existing table extraction and conversion tools.

</li>

<li>

<strong>Multilingual content embedding model query:</strong> A user inquired about embedding models suitable for multilingual content, which led to discussions on various recommendations and fine-tuning methodologies to better handle specific requirements in multilingual contexts.

</li>

</ul>

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #jeremy_python_llms (221 messages🔥🔥):

-

Discussing FastHTML Features and Components: Members expressed excitement and curiosity about FastHTML, comparing it favorably to FastAPI and Django. The conversation included detailed explanations on creating apps, connecting to multiple databases, and using various libraries like picolink and daisyUI.

-

Jeremy and Team's Contributions and Future Plans: Jeremy and John frequently chimed in to answer questions, promising future markdown support and addressing the need for community-built component libraries. Jeremy invited members to contribute by creating easy-to-use FastHTML libraries for popular frameworks like Bootstrap or Material Tailwind.

-

Markdown Rendering with FastHTML: Jeremy and John discussed methods to render markdown within FastHTML, including using scripts and NotStr classes. John shared a code snippet demonstrating how to render markdown using JavaScript and UUIDs.

-

HTMX Integration and Use Cases: HTMX’s role in FastHTML was emphasized with examples provided for handling various events like keyboard shortcuts and database interactions. Members also shared HTMX usage tips and experiences, highlighting its effectiveness and comparing its interaction patterns to JavaScript.

-

Coding Tools and Environment Discussions: Additional tools and platforms like Cursor and Railway were discussed, with members sharing their experiences and best practices. FastHTML-related resources and tutorials were also shared, such as a WIP tutorial and several GitHub repositories.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #axolotl (23 messages🔥):

- Searching for Python script for FFT on Modal: A user is looking for a Python script to run FFT on Modal for llama-3 but has only found a Lora git project.

- Chat templates for Mistral Instruct: A member asks about available chat templates that support a system prompt for DPO finetuning, seeking clarity on formatting and template usage.

- Combining Axolotl and HF templates not advisable: A user discusses with hamelh about matching Axolotl finetuning templates with custom inference code, to avoid mismatches since Axolotl does not use Hugging Face (HF) templates.

- Confusion over token space assembly: A noob user queries why spaces are added when Axolotl assembles in token space, finding templates confusing.

- Issue with 7B Lora merge resulting in extra shards: A user experiences a weird phenomenon where merging a 7B Lora results in 6 shards instead of 3, and another user suggests uploading the LoRA for debugging. They also referenced a related GitHub issue and suggested possible fixes involving

torch.float16.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #zach-accelerate (5 messages):

- Quantization confirmed during model load: A member asked if quantization happens during model load and if the CPU is responsible for it. Another member confirmed, stating "It happens when loading the model weights" and provided links to Hugging Face documentation and the respective GitHub code.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #freddy-gradio (1 messages):

- Request for OAuth Integration Explained: One member thanked another for providing information on an HF option and requested further details on implementing a simple access control mechanism or integrating OAuth. They specifically sought expansion on how these security measures would work in their context.

LLM Finetuning (Hamel + Dan) ▷ #charles-modal (2 messages):

- Modal module error resolved: A member encountered an error saying, "No module named modal." They resolved this issue by using the command

pip install modal.

LLM Finetuning (Hamel + Dan) ▷ #credits-questions (6 messages):

-

Langsmith credits stuck in limbo: Several members reported not receiving their langsmith credits despite filling out the necessary forms. One asked explicitly, "What's the status on langsmith credits, has anyone received it?".

-

Fireworks credits form confusion: Multiple users mentioned not receiving their fireworks credits after submitting the form with their account IDs. A member recalled filling the form with "user ID back then" instead of account ID.

-

Request to share account IDs: In response to missing fireworks credits, a request was made for users to share their account IDs in a specific channel, <#1245126291276038278>.

-

June 2nd course credit redemption issue: A member inquired if they could still redeem their course credits purchased before June 2nd even though they delayed the redemption. This issue appears to have been resolved via email, with a follow-up from Dan Becker stating, "Looks like we have synced up by email".

LLM Finetuning (Hamel + Dan) ▷ #strien_handlingdata (3 messages):

-

Struggle to download session recordings: A member experienced difficulty downloading session recordings as the course website now embeds videos instead of redirecting to Zoom. They requested the Zoom link to gain downloading access.

-

Bulk tool gets a revamp: Inspired by the course, the member started developing the next version of their tool named "Bulk." Due to the influx of synthetic datasets, they see the value in building more tools in this area and invited feedback.

LLM Finetuning (Hamel + Dan) ▷ #fireworks (11 messages🔥):

- Users flood with credit issues: Multiple users raised concerns about not receiving their credits. They listed their account IDs and email addresses for reference.

- Invitation to AI Engineer World's Fair: One user invited another to meet up at the AI Engineer World's Fair.

LLM Finetuning (Hamel + Dan) ▷ #emmanuel_finetuning_dead (2 messages):

- LoRA Land paper sparks finetuning discussion: A user shared the LoRA Land paper and asked if it would change another user's perspective on finetuning. Another member responded with interest, appreciating the share with a thumbs-up emoji.

LLM Finetuning (Hamel + Dan) ▷ #east-coast-usa (1 messages):

- Demis Hassabis at Tribeca: A member pointed out that Demis Hassabis will be at The Thinking Game premier tonight. Tickets are available for $35, and the event will include a conversation with Darren Aronofsky about AI and the future. link

Link mentioned: Tweet from Tribeca (@Tribeca): Come and hear @GoogleDeepMind CEO & AI pioneer @demishassabis in conversation with director @DarrenAronofsky about AI, @thinkgamefilm and the future at #Tribeca2024: https://tribecafilm.com/films/thin...

LLM Finetuning (Hamel + Dan) ▷ #predibase (7 messages):

-

Predibase credits excite users: A user thanked team members for providing credits and referred to an example on Predibase. Another user inquired about when credits expire while noting that upgrading to the Developer Tier requires adding a credit card.

-

Email registration issues: Two users reported issues with receiving confirmation emails after registering on Predibase, preventing them from logging in.

-

LoRAX impresses workshop participant: A user praised the Predibase workshop for streamlining processes like fine-tuning and deploying LLMs. They mentioned that LoRAX particularly stands out by being cost-efficient and effectively integrating tools for deploying high-quality LLMs into web applications.

Link mentioned: Quickstart | Predibase: Predibase provides the fastest way to fine-tune and serve open-source LLMs. It's built on top of open-source LoRAX.

LLM Finetuning (Hamel + Dan) ▷ #career-questions-and-stories (19 messages🔥):

-

Cursor: The VS-Code based AI Code Editor impresses: Members discussed the capabilities of Cursor, an AI-powered code editor based on VS-Code. One member cited its key advantage: "it indexes your whole codebase for thorough multi-file and multi-location changes".

-

Pro Subscription praised for auto code completion: A user noted significant benefits from using the paid version of Cursor, particularly highlighting improvements in automatic code completion when using GPT-4.

-

Custom API keys make Cursor flexible: Cursor's integration allows users to input their own API keys for services like OpenAI, Anthropic, Google, and Azure. This feature received praise as it enables extensive AI interactions at the user's own cost.

-

Cursor compared favorably to GitHub Copilot: Users who switched from GitHub Copilot to Cursor shared positive experiences, emphasizing enhanced productivity and satisfaction. One user mentioned, "I fully switched after using copilot for like a year or two and haven't looked back."

-

VS-Code enthusiasts welcome Cursor: The adoption of Cursor by long-time VS-Code users was discussed, noting that since Cursor is built on open-source VS-Code, it retains familiar functionality while adding AI-powered enhancements. One member's endorsement: "it just feels better, you just enjoy the improvements".

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #openpipe (2 messages):

Given the provided chat logs, there is insufficient information for a substantive summary. There are no significant topics, discussion points, links, or blog posts of interest provided in the messages.

LLM Finetuning (Hamel + Dan) ▷ #openai (29 messages🔥):

-

New Parallel Function Call Feature: Users can now disable parallel function calling by setting

"parallel_tool_calls: false", a feature shipped recently by OpenAI. -

Credits Expiry Clarified: OpenAI credits expire three months from the date of grant. This was clarified in response to user queries about credit usage deadlines.

-

Access Issues to GPT-4: Multiple users reported issues accessing GPT-4 models and discrepancies in rate limits. Affected users were advised to email [email protected] and cc [email protected] for resolution.

-

Cursor and iTerm2 Use Credits: Third-party tools like Cursor and iTerm2 allow users to utilize their OpenAI credits. These integrations offer flexibility in using various AI models with their own API keys.

-

Ideas for Credits Usage: A user shared a Twitter link listing creative ways to use credits, inviting others to share more ideas.

Links mentioned:

LLM Finetuning (Hamel + Dan) ▷ #capelle_experimentation (82 messages🔥🔥):

-

Exciting Talk and Resources from tcapelle: tcapelle shared an informative talk, with slides and a Colab notebook (connections) linked, explaining various concepts in LLM fine-tuning. The GitHub repo provided (connections) includes examples and code.

-

Fine-Tuning Tips and Community Interaction: tcapelle suggested training Llama3 for a few epochs and using big LLMs for assessment, emphasizing the community's extensive experience with Mistrals and Llamas. He recommended using Alpaca-style evaluations for accurate performance metrics.

-

Fast Learning and Pruning Insights: A Fast.ai post discussed neural networks' ability to memorize with minimal examples. tcapelle shared pruning scripts on GitHub (create_small_model.py) and recommended watching a GTC 2024 talk for insights on optimizing LLMs.

-

Weave Toolkit Integration and Features: Scottire mentioned upcoming features for curating samples in Weave and provided a code snippet for adding rows to datasets. Links to Weave on GitHub and its documentation were shared to assist users in integrating Weave into their workflows.

-

Engaging Community Ideas: Members discussed organizing weekly meetups, paper clubs, and working groups for collaborative learning and idea sharing. The humorous suggestion of a "BRIGHT CLUB" and various community bonding activities highlighted the vibrant and supportive nature of the group.

Links mentioned:

Perplexity AI ▷ #announcements (1 messages):

- Perplexity releases official trailer for "The Know-It-Alls": A link to YouTube video titled "The Know-It-Alls" by Perplexity was shared. The description poses the intriguing question, "If all the world’s knowledge were at our fingertips, could we push the boundaries of what’s possible? We’re about to find out."

Link mentioned: "The Know-It-Alls" by Perplexity | Official Trailer HD: If all the world's knowledge were at our fingertips, could we push the boundaries of what's possible? We're about to find out.Join the search. Find the answe...

Perplexity AI ▷ #general (493 messages🔥🔥🔥):

-

Perplexity's Time Zone and Server Mystery: Members discussed the time zone followed by Perplexity, with one noting that it likely depends on the server location, and another guessing it might be +2 or similar. A user inquired about how to find the server's exact location info.

-

Issues with Persistent Attachments: A user expressed frustration over Perplexity AI fixating on an irrelevant file for temporary context, despite multiple queries. Another explained that starting a new thread can resolve this context persistence issue.

-

"The Know-It-Alls" Ad Disappoints Viewers: Members anticipated a significant update or new feature from the "The Know-It-Alls" premiere, but it turned out to be a mere promotional video, leaving many feeling trolled and underwhelmed. Comments followed comparing it to a Superbowl ad.

-

Pro Search and Claude 3 Discussions: Various issues and preferences about AI models like Pro Search and Claude 3 were discussed. Users shared experiences, and some noted the recent removal of Claude 3 Haiku from Perplexity Labs.

-

Horse Racing Query Testing PPLX: Users tested PPLX with horse racing results queries, experiencing different results and accuracy based on the search's timing. Discussions showed how using structured prompts like helps make the AI's reasoning process clearer and more accurate.

Links mentioned:

Perplexity AI ▷ #sharing (16 messages🔥):

-

SpaceX's Starship achieves milestones: SpaceX's fourth test flight of the Starship launch system on June 6, 2024, marked significant progress. The flight involved successful splashdowns in both the Gulf of Mexico and the Indian Ocean.

-

Comparing Asian Curry varieties: A comprehensive comparison of Japanese, Indian, and Thai curries highlights their distinct characteristics. The detailed comparison includes historical origins, spices, herbs, and typical ingredients.

-

Concerns over generated content: There were issues with inaccurate content generation, specifically with a comparison between playground.com and playground.ai. Playground.com was inaccurately described.

-

Reactions to California Senate Bill 1047: Various stakeholders reacted to California Senate Bill 1047, which focuses on AI safety. AI Safety Advocates praised the bill for establishing clear legal standards for AI companies.

Links mentioned:

Perplexity AI ▷ #pplx-api (4 messages):

- Questions about llava model in API: A member asked if there are plans to allow the use of llava as a model in the API, mentioning that it has been removed from the labs. No response was documented in the provided messages.

- Frustration with beta testing for sources: Members expressed frustrations regarding the sources beta testing, with one noting, "I swear I have filled out this form 5 time now." They questioned whether new people are being allowed into the beta program.

HuggingFace ▷ #announcements (1 messages):

- FluentlyXL Final Version Is Here: The FluentlyXL Final version is available with enhancements in aesthetics and lighting. Check out more details on the Fluently-XL-Final page.

- Carbon Footprint Predictor Released: A new tool for predicting carbon footprints is available now. Find out more about it on the Carbon Footprint Predictor page.

- SimpleTuner Updates with MoE Support: SimpleTuner version 0.9.6.2 includes MoE split-timestamp training support and a brief tutorial. Get started with it on GitHub.

- LLM Resource Guide Compilation: An organized guide of favorite LLM explainers covering vLLM, SSMs, DPO, and QLoRA is now available. Check the details in the resource guide.

- ML Library Using TensorFlow: A new ML Library based on TensorFlow has been released. Find the source code and documentation on GitHub.

Links mentioned:

HuggingFace ▷ #general (248 messages🔥🔥):

-

Discussion on Virtual Environments and Package Managers: Members debated between using conda or pyenv for managing Python environments. One user expressed frustration with conda, preferring pyenv, while another admitted to using global pip installs without facing major issues.

-

GPT and PyTorch Version Compatibility: A user highlighted that Python 3.12 does not yet support PyTorch. This sparked more discussions around the challenges of maintaining compatibility with different Python versions in various projects.

-

HuggingFace and Academic Research Queries: A member inquired about the feasibility of using HuggingFace AutoTrain for an academic project without hosting the model themselves. The responses were mixed, noting that the available free services might not support larger models and the potential need for API costs.

-

Click-through Rate and Fashionability: Users discussed the idea of using AI to predict and generate highly clickable YouTube thumbnails or fashionable clothing by analyzing click-through rates and fashion ratings. Reinforcement Learning (PPO) and other methods were suggested to optimize for human preferences.

-

Gradio Privacy Concerns: There were mentions of issues related to making Gradio apps private while maintaining access. Also, a concern about potential breaches and updating gradio versions across repositories was discussed.

Links mentioned:

HuggingFace ▷ #today-im-learning (1 messages):

- AI in Medical Education overview: A new YouTube video discusses the current role of genAI in medical education and its future trajectory. Topics include the learning landscape, the usage of Anki for active recall, customization through AddOns, and the integration of genAI-powered search via Perplexity. AI In MedEd YouTube Video.

Link mentioned: AI In MedEd: In 5* minutes: In the first of a new series, we're going to go over what genAI's place currently is in medical education and where it's likely going. 1: MedEd's Learning la...

HuggingFace ▷ #cool-finds (2 messages):

- Member seeks AI collaboration: A senior web developer expressed interest in collaborating on an AI and LLM project. They requested others to text them if interested in organizing a collaboration.

- Introduction to TorchTune: A member shared a link to TorchTune, a native PyTorch library designed for LLM fine-tuning. The description highlights its role in fine-tuning large language models using PyTorch.

Link mentioned: GitHub - pytorch/torchtune: A Native-PyTorch Library for LLM Fine-tuning: A Native-PyTorch Library for LLM Fine-tuning. Contribute to pytorch/torchtune development by creating an account on GitHub.

HuggingFace ▷ #i-made-this (13 messages🔥):

-

Fluently XL produces fantastic portraits with script: A user shared their experience using Fluently XL for text-to-image generation, incorporating both control net and image prompts. They provided links to two GitHub repositories they utilized: first script and second script.

-

Discussion on reading group opportunity: Members discussed the potential for a reading group focusing on a coding tutorial. They agreed to start the session next week at the usual time.

-