AI News for 6/10/2024-6/11/2024. We checked 7 subreddits, 384 Twitters and 30 Discords (412 channels, and 2774 messages) for you. Estimated reading time saved (at 200wpm): 313 minutes.

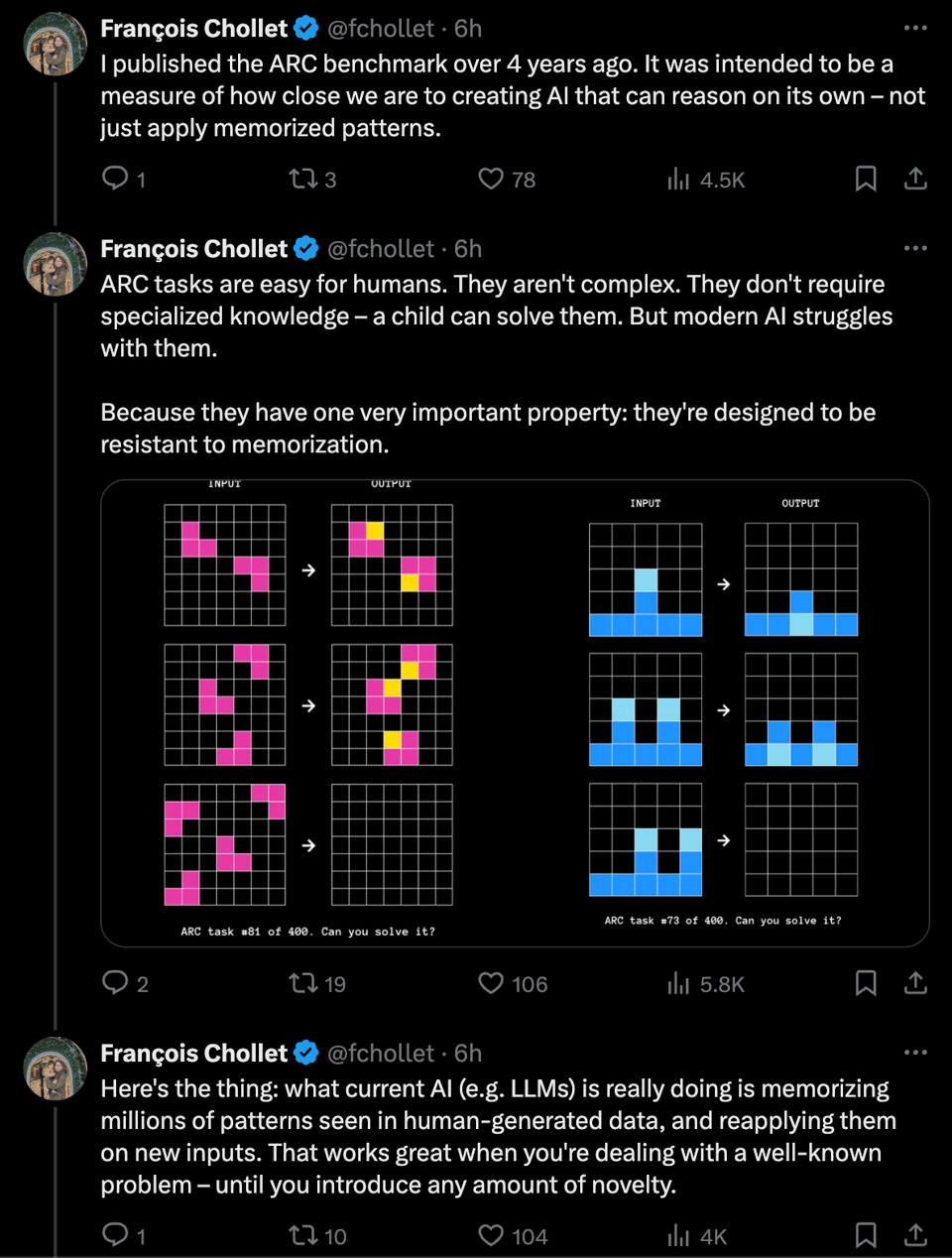

In this weekend's Latent Space pod we talked about test set contamination and the Science of Benchmarking, and today one of the OGs in the field is back with a solution - generate a bunch of pattern-recognition-and-completion benchmarks:

You can play with the ARC-AGI puzzles yourself to get a sense for what "easy for humans hard for AI" puzzles look like:

This all presumes an opinionated definition of AGI, which the team gracefully provides:

DEFINING AGI

Consensus but wrong: AGI is a system that can automate the majority of economically valuable work.

Correct: AGI is a system that can efficiently acquire new skills and solve open-ended problems.

Definitions are important. We turn them into benchmarks to measure progress toward AGI. Without AGI, we will never have systems that can invent and discover alongside humans.

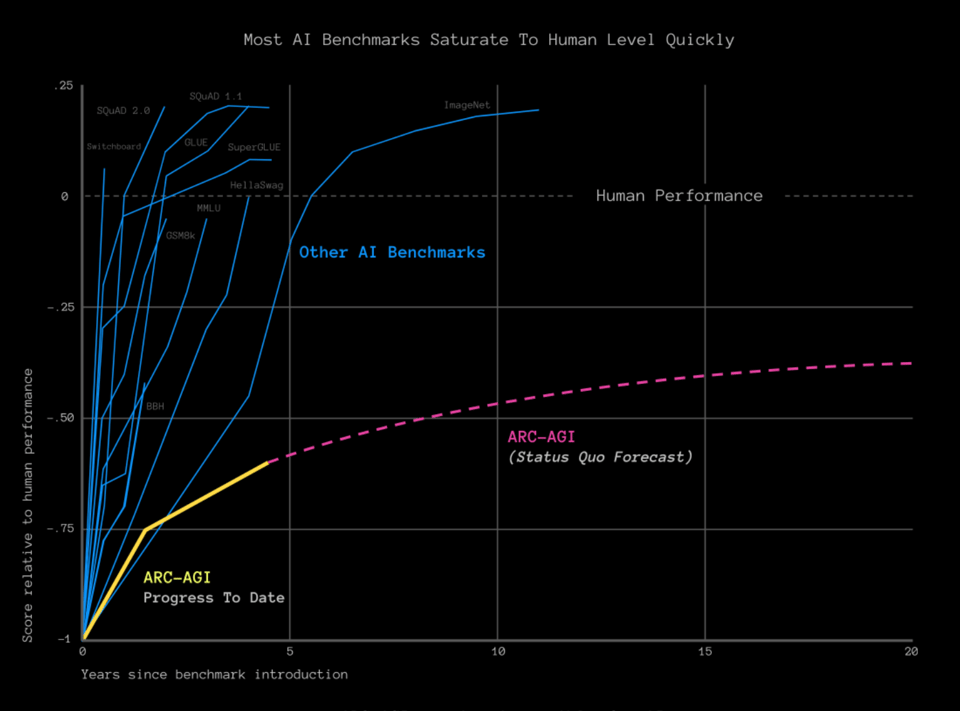

This benchmark is curated to resist the classic 1-2 year saturation cycle that other benchmarks have faced:

The solution guide offers François' thoughts on promising directions, including discrete program search, skill acquisition, and hybrid approaches.
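To make "discrete program search" concrete, here is a minimal sketch on a made-up puzzle laid out in the ARC JSON style (`train` demonstration pairs plus `test` inputs). The four grid primitives, the task itself, and the brute-force enumeration are all assumptions for illustration; this is not the solution guide's method or any ARC Prize tooling.

```python
from itertools import product

# Hypothetical toy DSL of grid transformations (illustrative only).
def identity(g):
    return [row[:] for row in g]

def flip_h(g):
    return [row[::-1] for row in g]

def flip_v(g):
    return [row[:] for row in g[::-1]]

def transpose(g):
    return [list(col) for col in zip(*g)]

PRIMITIVES = {
    "identity": identity,
    "flip_h": flip_h,
    "flip_v": flip_v,
    "transpose": transpose,
}

# A made-up task: every output is the input rotated 90 degrees clockwise.
TASK = {
    "train": [
        {"input": [[1, 2], [3, 4]], "output": [[3, 1], [4, 2]]},
        {"input": [[0, 5, 0], [0, 0, 7]], "output": [[0, 0], [0, 5], [7, 0]]},
    ],
    "test": [{"input": [[9, 0], [0, 8]]}],
}

def run(program, grid):
    """Apply a sequence of primitive names to a grid, left to right."""
    for name in program:
        grid = PRIMITIVES[name](grid)
    return grid

def search(task, max_depth=3):
    """Enumerate programs shortest-first; return the first one that fits all train pairs."""
    for depth in range(1, max_depth + 1):
        for program in product(PRIMITIVES, repeat=depth):
            if all(run(program, pair["input"]) == pair["output"] for pair in task["train"]):
                return program
    return None

program = search(TASK)
prediction = run(program, TASK["test"][0]["input"])
print(program, prediction)
```

The search never sees the word "rotate"; it only finds a composition of primitives consistent with the demonstrations, which is the core idea. Real ARC tasks need a far richer DSL and smarter search than exhaustive enumeration.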

Last week the Dwarkesh pod was making waves predicting AGI in 2027, and today it's back with François Chollet asserting that the path we're on won't lead to AGI. Which way, AGI observoor?

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

Apple Integrates ChatGPT into iOS, iPadOS, and macOS

- OpenAI partnership: @sama and @gdb announced Apple is partnering with OpenAI to integrate ChatGPT into Apple devices later this year. @miramurati and @sama welcomed Kevin Weil and Sarah Friar to the OpenAI team to support this effort.

- AI features: Apple Intelligence will allow AI-powered features across apps, like summarizing documents, analyzing photos, and interacting with on-screen content. @karpathy noted the step-by-step AI integration into the OS, from multimodal I/O to agentic capabilities.

- Privacy concerns: Some expressed skepticism about Apple sharing user data with OpenAI, despite Apple's "Private Cloud Compute" guarantees. @svpino detailed the security measures Apple is taking, such as on-device processing and differential privacy.

Reactions to Apple's WWDC AI Announcements

- Mixed reactions: While some were impressed by Apple's AI integration, others felt Apple was behind or relying too much on OpenAI. @karpathy and @far__el questioned if Apple can ship capable AI on its own.

- Comparison to other models: Apple's on-device models seem to outperform other small models, but their server-side models are still behind GPT-4. @_philschmid noted Apple is using adapters and mixed-precision quantization to optimize performance.

- Integration focus: Many noted Apple's focus on deep, frictionless AI integration rather than model size. @ClementDelangue praised the push for on-device AI to improve user experience and privacy.

Advances in AI Research and Applications

- Mixture of Agents (MoA): @togethercompute introduced MoA, which leverages multiple open-source LLMs to achieve a score of 65.1% on AlpacaEval 2.0, outperforming GPT-4.

- Abstraction and Reasoning Corpus (ARC): @fchollet and @mikeknoop launched the $1M ARC Prize to create an AI that can adapt to novelty and solve reasoning problems, steering the field back towards AGI.

- Advances in speech and vision: @GoogleDeepMind showcased Imagen 3's ability to generate rich images with complex textures. @arankomatsuzaki shared Microsoft's VALL-E 2, which achieves human parity in zero-shot text-to-speech.

- AI applications: Examples included @adcock_brett's updates on Figure's robot manufacturing, @vagabondjack's $6M seed round for AI-powered financial analysis at @brightwaveio, and @AravSrinivas's note on Perplexity being a top referral source for publishers.
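For the Mixture of Agents item above, the general shape of the idea can be sketched as follows: several "proposer" models answer, and an "aggregator" model synthesizes their answers. The `Model` type, the prompt wording, and the stub models are hypothetical; this is not Together's implementation.

```python
from typing import Callable, List

# Hypothetical interface: a model is any function from prompt text to completion text.
Model = Callable[[str], str]

def mixture_of_agents(prompt: str, proposers: List[Model], aggregator: Model, layers: int = 1) -> str:
    """Collect proposals from several models, then let one model synthesize them."""
    context = prompt
    for _ in range(layers):
        proposals = [model(context) for model in proposers]
        numbered = "\n".join(f"{i + 1}. {p}" for i, p in enumerate(proposals))
        # The synthesis prompt below is an invented format for illustration.
        context = (
            f"Question: {prompt}\n"
            f"Candidate answers:\n{numbered}\n"
            "Synthesize the best answer."
        )
    return aggregator(context)

# Stub models standing in for real LLM calls.
stub_proposers = [lambda p: "Paris", lambda p: "It is Paris."]
echo_aggregator = lambda ctx: ctx  # returns the prompt it was given, for inspection

answer = mixture_of_agents("Capital of France?", stub_proposers, echo_aggregator)
print(answer)
```

With real models, each callable would wrap an API or local inference call; the control flow stays the same.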

Memes and Humor

- @jxmnop joked about LLMs reaching the boundary of human knowledge with a humorous image.

- @nearcyan poked fun at the repeated claims of "nvidia is done for" with a meme.

- @far__el quipped "Apple Intelligence is going to be the largest deployment of tool using AI and i'd like someone to speak at @aidotengineer on the design considerations!" in response to @swyx's call for Apple engineers to share insights.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

AI Developments

- Apple partners with OpenAI to integrate GPT-4o into iOS, iPadOS, and macOS: In /r/OpenAI, Apple unveils "Apple Intelligence", a personal AI system built into their operating systems. It integrates OpenAI's GPT-4o and runs on-device, enhancing Siri, writing tools, and enabling image generation.

- Details on Apple's on-device LLM architecture revealed: In /r/LocalLLaMA, more specifics are shared about how Apple Intelligence works under the hood, using quantized task-specific LoRAs called "adapters", optimized for inference performance, and leveraging a "semantic index" for personal context.

- AMD releases open-source LLVM compiler for AI processors: AMD launches Peano, an open-source LLVM compiler for their XDNA and XDNA2 Neural Processing Units (NPUs) used in Ryzen AI processors.

- Microsoft introduces AI Toolkit for Visual Studio Code: In /r/LocalLLaMA, Microsoft's new AI Toolkit extension for VS Code is discussed, which provides a playground and fine-tuning capabilities for various models, with the option to run locally or on Azure.

Research and Benchmarks

- Study finds RLHF reduces LLM creativity and output variety: A new research paper posted in /r/LocalLLaMA shows that while alignment techniques like RLHF reduce toxic and biased content, they also limit the creativity of large language models, even in contexts unrelated to safety.

- Benchmarking affordable AWS instances for LLM inference: In /r/LocalLLaMA, benchmarks of various AWS instances for running Dolphin-Llama3 reveal the g4dn.xlarge offers the best cost-performance at $0.58/hr, with GPU speed being the key factor. More memory allows for higher token usage in outputs.
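Cost-performance comparisons like the AWS one above usually reduce to dollars per generated token. A minimal sketch of that arithmetic follows; the $0.58/hr price is from the post, while the throughput figure is a placeholder assumption, not a measured number.

```python
def cost_per_million_tokens(price_per_hour: float, tokens_per_second: float) -> float:
    """Dollars spent to generate one million tokens at a steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return price_per_hour / tokens_per_hour * 1_000_000

HOURLY_PRICE = 0.58               # g4dn.xlarge price quoted in the post
ASSUMED_TOKENS_PER_SECOND = 20.0  # hypothetical throughput, for illustration only

cost = cost_per_million_tokens(HOURLY_PRICE, ASSUMED_TOKENS_PER_SECOND)
print(f"${cost:.2f} per million tokens")
```

Plugging in measured tokens/sec for each instance type gives a directly comparable figure.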

Stable Diffusion 3 and Beyond

- Stable Diffusion 3's importance and standout features highlighted: A post in /r/StableDiffusion breaks down why SD3 is a significant step forward, with its new 16-channel VAE capturing more details, enabling faster training and better low-res results. The multi-modal architecture aligns with LLM research trends and is expected to boost techniques like ControlNets and adapters.

Miscellaneous

- Tip for boosting CPU+RAM inference speed by ~40%: In /r/LocalLLaMA, a user shares a trick to increase tokens/sec by enabling XMP in BIOS to run RAM at spec bandwidth instead of JEDEC defaults. RAM overclocking could provide further gains but risks instability.

- Simplifying observability in RAG with BeyondLLM 0.2.1: A /r/LocalLLaMA post explains how BeyondLLM 0.2.1 makes it easier to add observability to LLM and RAG applications, allowing tracking of metrics like response time, token usage, and API call types.
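The XMP tip above makes sense once you treat CPU inference as memory-bound: theoretical peak bandwidth scales with the RAM transfer rate. The sketch below assumes a dual-channel DDR4 system with a 64-bit bus per channel, and the 2133 and 3000 MT/s figures are example values, not the poster's hardware. The tokens/sec ceiling assumes every weight is read once per generated token, which is a rough rule of thumb.

```python
def peak_bandwidth_gb_s(mega_transfers_per_s: float, channels: int = 2, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    return mega_transfers_per_s * 1e6 * bus_bytes * channels / 1e9

def tokens_per_second_ceiling(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound if each token requires streaming the whole model from RAM."""
    return bandwidth_gb_s / model_size_gb

jedec = peak_bandwidth_gb_s(2133)  # assumed JEDEC default speed
xmp = peak_bandwidth_gb_s(3000)    # assumed XMP profile speed
speedup = xmp / jedec

MODEL_SIZE_GB = 4.0  # hypothetical quantized model size
print(f"{jedec:.1f} GB/s -> {xmp:.1f} GB/s ({(speedup - 1) * 100:.0f}% more bandwidth)")
print(f"ceiling: {tokens_per_second_ceiling(xmp, MODEL_SIZE_GB):.1f} tokens/s")
```

Real-world gains depend on timings, channel population, and how memory-bound the workload actually is.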

Memes and Humor

- AI expectations vs reality meme: An amusing image contrasting the hyped expectations and actual capabilities of AI systems was shared in a subreddit.

AI Discord Recap

Apple Debuts with Major AI Innovations:

- At WWDC 2024, Apple announced Apple Intelligence, a deeply integrated AI system for iPhones, iPads, and Macs. Key features include ChatGPT integration into Siri, AI writing tools, and a new "Private Cloud Compute" for secure offloading of complex tasks. Benchmarks showcase Apple's on-device and server models performing well in instruction following and writing. However, concerns around user privacy and Elon Musk's warning of banning Apple devices at his companies due to OpenAI integration sparked debates.

Model Compression and Optimization Strategies:

- Engineers actively discussed techniques for quantizing, pruning, and optimizing large language models like LLaMA 3 to reduce model size and improve efficiency. Resources like LLM-Pruner and Sconce were shared, along with debates on the stability of lower-precision formats like FP8. Optimizations like LoRA, 8-bit casting, and offloading to CPU were explored to tackle Out-of-Memory (OOM) errors during training.

- Engineers discussed overcoming Out of Memory (OOM) errors using strategies like offloading optimizer state to CPU and bnb 8bit casting (VRAM Calculator), highlighting techniques like Low-Rank Adapters (LoRA).

- Community conversations shared insights on fine-tuning challenges with practical examples and resources like a YouTube tutorial.
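To see why offloading optimizer state to CPU helps with OOM errors, a back-of-the-envelope memory estimate is useful. The byte counts below are assumptions (16-bit weights and gradients, two 32-bit Adam moments per parameter), activations are ignored, and the 8B parameter count is only an example.

```python
def training_memory_gb(n_params: float, weight_bytes: int = 2, grad_bytes: int = 2,
                       optim_bytes: int = 8, offload_optimizer: bool = False):
    """Return (gpu_gb, cpu_gb) for weights, gradients and optimizer state only."""
    gpu = n_params * (weight_bytes + grad_bytes)
    cpu = 0.0
    optimizer_state = n_params * optim_bytes
    if offload_optimizer:
        cpu += optimizer_state   # Adam moments live in host RAM
    else:
        gpu += optimizer_state   # Adam moments live in VRAM
    return gpu / 1e9, cpu / 1e9

N_PARAMS = 8e9  # e.g. an 8B-parameter model (assumed)
on_gpu = training_memory_gb(N_PARAMS)
offloaded = training_memory_gb(N_PARAMS, offload_optimizer=True)
print(f"all on GPU: {on_gpu[0]:.0f} GB VRAM")
print(f"optimizer offloaded: {offloaded[0]:.0f} GB VRAM + {offloaded[1]:.0f} GB host RAM")
```

Under these assumptions the optimizer state dominates, which is why offloading it (or training only small adapters) frees the most VRAM.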

Exciting Open-Source and Benchmark News:

- Stable Diffusion 3 (SD3) excited members, aiming for better voxel art, while comparisons of model platforms like Huggingface and Civitai led to debates on best upscaling methods and availability (SD3 Announcement).

- Hugging Face expanded AutoTrain with Unsloth support (Announcement), easing large model fine-tuning with enhanced memory management.

- Advancements in Language and Multimodal Models: The AI community witnessed exciting breakthroughs, including LlamaGen for autoregressive image generation, VALL-E 2 achieving human parity in zero-shot text-to-speech synthesis, and MARS5 TTS from CAMB AI promising higher realism in voice cloning. Discussions explored quantization techniques like IQ4_xs and HQQ for efficient model deployment, and the potential of federated learning for privacy-preserving training.

Community Collaboration on AI Challenges:

- Discussions around Chain of Thought retrieval in medical applications and techniques for essential model prompt engineering were highlighted in engaging threads (YouTube tutorial).

- OpenAccess AI Collective shared a beginner-friendly RunPod Axolotl tutorial, simplifying model training processes.

Quantization and Model Deployment Insights:

- Exchanges on 4-bit quantization for Llama 3 and suggestions using Tensor Parallelism showcased practical experiences from the AI community (Quantization Blog).

- DeepSeek-LLM-7B model's LLaMA-based structure discussed alongside interpretability (DeepSeek Project).
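As a rough picture of what 4-bit quantization does to a block of weights, here is a minimal symmetric absmax sketch. It is a simplified stand-in, not the scheme used by any specific method discussed in the community (IQ4_xs, HQQ, and others are considerably more sophisticated), and the sample weights are made up.

```python
def quantize_block(weights, bits: int = 4):
    """Map floats to signed integer codes sharing one scale per block."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_block(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]

block = [0.12, -0.5, 0.33, 0.07]  # made-up weights
codes, scale = quantize_block(block)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(codes, round(scale, 4), round(max_error, 4))
```

Each weight is stored as a small integer plus one shared scale per block, and the rounding error per weight is at most half the scale.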

PART 1: High level Discord summaries

LLM Finetuning (Hamel + Dan) Discord

Fine-tuning LLMs, Cutting Problems Down to Size: Engineers share solutions for Out of Memory (OOM) errors and discuss fine-tuning processes. There's a consensus on the benefits of offloading optimizer state to CPU or using CUDA managed memory, with techniques like bnb 8bit casting and Low-Rank Adapters (LoRA) to save memory and enhance performance during training. Valuable resources include a YouTube video on 8-bit Deep Learning and a benchmarking tool, VRAM Calculator.
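The memory savings from LoRA come from training two thin matrices instead of the full weight. A quick sketch of the parameter arithmetic, with the layer shape and rank chosen as illustrative assumptions:

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA trains A (rank x d_in) and B (d_out x rank) instead of the full d_out x d_in weight."""
    return rank * (d_in + d_out)

D_IN = D_OUT = 4096  # assumed projection size
RANK = 16            # assumed adapter rank

full = D_IN * D_OUT
lora = lora_trainable_params(D_IN, D_OUT, RANK)
fraction = lora / full
print(f"{lora:,} trainable vs {full:,} frozen ({fraction:.2%})")
```

Fewer trainable parameters also means proportionally less gradient and optimizer state to hold in memory.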

Empathy for Credits Confusion: Multiple guild members expressed difficulties in receiving promised credits. Missing credits are noted across several platforms, from Modal and OpenAI to Replicate, with appeals for resolution posted in respective channels. Information such as user and org IDs was offered in hopes of expediting support.

Model Training Troubles and Triumphs: Members troubleshoot fine-tuning and inference challenges on various platforms, focusing on practical aspects like dataset preparation, using existing frameworks like TRL or Axolotl, and handling large model training on limited hardware. On the other side of the coin, positive experiences with deploying Mistral on Modal were recounted, endorsing its hot-reload capabilities.

Reeling in Real-World ML Discussions: Conversations delved into practical Machine Learning (ML) applications, such as dynamically swapping LoRAs and Google's Gemini API for audio processing. The use of Chain of Thought reasoning for diagnosis by models like Llama-3 8B was also examined, acknowledging flaws in model conclusions.

Resource Ramp-Up for Rapid Engineering: The community has been actively sharing resources, including Jeremy Howard's "A Hackers' Guide to Language Models" on YouTube and Excalidraw for making diagrams. Tools like Sentence Transformers are recommended for fine-tuning transformers, highlighting the collaborative spirit in constantly elevating the craft.

Stability.ai (Stable Diffusion) Discord

- Waiting for the Holy Grail, SD3: Anticipation was high for the release of Stable Diffusion 3 (SD3), with hopes expressed for improved features especially in voxel art, while one user humorously expressed dread over having to sleep before the release.

- Slow Connections Test Patience: One member faced a grueling twelve-hour marathon to download Lora Maker, due to reaching data cap limits and enduring speeds as sluggish as "50kb/s download from Pytorch.org."

- Model Platform Showdown: Discussion arose on the availability of AI models and checkpoints, with platforms like Huggingface and Civitai under the lens; Civitai takes the lead with a vast selection of LoRAs and checkpoints.

- Up for Debate: Upscaling Techniques: A technical debate was sparked on whether upscaling SD1.5 images to 1024 can rival the results of SDXL directly trained at 1024x1024 resolution, leading to suggestions to test SDXL's upscaling prowess to even higher resolutions.

- AMD, Y U No Work With SD?: Frustration bubbled up from a member struggling to run Stable Diffusion with an AMD GPU, culminating in a community nudge towards revisiting installation guides and seeking further technical support.

Unsloth AI (Daniel Han) Discord

July 2024: Anticipated MultiGPU Support for Unsloth AI

MultiGPU support for Unsloth AI is highly anticipated for early July 2024, with enterprise-focused Unsloth Pro leading the charge; this will potentially enable more efficient fine-tuning and model training.

Llama 3 Dabbles in Versatile Fine-Tuning

Users explored various tokenizer options for the Llama model, with discussions confirming that tokenizers from services like llama.cpp and Hugging Face are interoperable, and referencing fine-tuning guidance on YouTube for those seeking precise instructions.

Hugging Face AutoTrain Expands with Unsloth Support

Hugging Face AutoTrain now includes Unsloth support, paving the way for more efficient large language model (LLM) fine-tuning as the AI community showed excitement for advancements that save time and reduce memory usage.

Innovations in AI Showcased: Therapy AI and MARS5 TTS

Emerging tools such as a therapy AI finetuned on llama 3 8b with Unsloth and the newly open-sourced CAMB AI's MARS5 TTS model, which promises higher realism in voice cloning, are creating buzz in the community.

Apple's Hiring: AI Integration Spurs Debate

Apple's latest initiative in personalized AI dubbed "Apple Intelligence" was a subject of intense discussion, with the community weighing its potential for language support and the integration of larger models, as reported during WWDC.

Eleuther Discord

Deep Learning's Quest for Efficiency: Members debated the benefits and hurdles of 4-bit quantization for Llama 3, with suggestions like Tensor Parallelism providing possible pathways despite their experimental edge. The applicability of various quantization methods including IQ4_xs and HQQ was highlighted, referencing a blog post, "LLMs for your iPhone", that showcases their performance on Apple Silicon.

Seeking Smarter Transformers: A discussion surfaced on improving transformer models, referencing challenges with learning capabilities that are highlighted in papers like "How Far Can Transformers Reason?", which advocates for supervised scratchpads. Additionally, a debate on the usefulness of influence functions in models emerged, citing seminal works like Koh and Liang's influence functions paper.

Tackling Text-to-Speech Synthesis: VALL-E 2 was mentioned for its exceptional zero-shot TTS capabilities, though researchers faced access issues with the project page. Meanwhile, LlamaGen's advances in visual tokenization promise enhanced auto-regressive models and stir discussions about incorporating methods from related works like "Stay on topic with Classifier-Free Guidance".

Interpreting Multimodal Transformations: Integration challenges of the DeepSeek-LLM-7B model were addressed, with its LLaMA-based structure being a focal point. Shared resources include a GitHub repo to assist the community in their interpretative efforts and overcome model integration complexities.

Optimization Strategies for LLM Interaction: Eleuther introduced chat templating capabilities with the --apply_chat_template flag, providing an example of ongoing work to enhance user interaction with language models. There's also a community push to optimize batch API implementations for both local and OpenAI Batch API applications, with high-level implementation steps discussed and plans for a future utility to rerun metrics on batch results.
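For readers unfamiliar with chat templating, the idea is to turn a list of role-tagged messages into the single prompt string a chat-tuned model expects. The sketch below uses an invented tag format purely for illustration; it is not the template of any particular model, nor the harness's implementation behind `--apply_chat_template`.

```python
def apply_chat_template(messages, add_generation_prompt: bool = True) -> str:
    """Render role/content messages into one prompt string (hypothetical tag format)."""
    parts = [f"<|{m['role']}|>\n{m['content']}\n" for m in messages]
    if add_generation_prompt:
        # Cue the model that the assistant's turn comes next.
        parts.append("<|assistant|>\n")
    return "".join(parts)

messages = [
    {"role": "system", "content": "Answer concisely."},
    {"role": "user", "content": "What is 2 + 2?"},
]
prompt = apply_chat_template(messages)
print(prompt)
```

Evaluating a chat model without its expected template can depress scores, which is why an evaluation harness benefits from applying it automatically.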

CUDA MODE Discord

- GPUs Stirring Hot Tub Fantasies: An engaging proposition to repurpose GPU waste heat for heating a hot tub led to a broader discourse on harnessing data center thermal output. The jest shed light on the potential to transform waste heat into communal heating solutions while providing sustainable data center operational models.

- Cutting Through the Triton Jungle: Navigational tips for better performance with Triton were offered, with common struggles including inferior speed compared to cuDNN and complexities with variable printing inside kernels. Preferences were voiced for simpler syntax, avoiding tuples to lessen the development maze.

- Spanning the Spectrum from Torch to C++: In a showcase of technical prowess, participants discussed the merits of full graph compilation with `torch.compile`, while others contemplated writing HIP kernels for PyTorch, both hinting at the imminent optimization tide. This confluence of conversations also pondered whether C++20's concepts could detangle code complexities without back-stepping to C++17.

- Bitnet's Ones and Zeros Steal the Show: A thoughtful exchange surfaced around training 1-bit Large Language Models (LLMs), with a shared resource from Microsoft's Unilm GitHub, outlining the potential efficiency yet acknowledging the stability issues in comparison to FP16.

- Altitudes of LLMs and Compression Techniques: From analyzing ThunderKittens' lackluster TFLOPS performance to exploring model compression strategies with Sconce, the community fused their cerebral powers to navigate these complicated terrains. Added to the repository was a benchmarking pull request in PyTorch's AO repo, promising accurate performance gauging for Llama models.
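For the 1-bit LLM discussion above, the core weight transform can be sketched as sign-based binarization with a single scale. This assumes mean-centering before taking the sign and an absolute-mean scale; it covers weights only and omits everything that makes training workable in practice (straight-through gradients, activation quantization), so treat it as an illustration rather than the shared repository's code.

```python
def binarize(weights):
    """Return +1/-1 signs of mean-centered weights plus one shared scale."""
    n = len(weights)
    mean = sum(weights) / n
    scale = sum(abs(w) for w in weights) / n  # average magnitude
    signs = [1 if (w - mean) > 0 else -1 for w in weights]
    return signs, scale

def reconstruct(signs, scale):
    """Approximate the original weights from their 1-bit codes."""
    return [s * scale for s in signs]

weights = [0.4, -0.2, 0.1, -0.7]  # made-up values
signs, scale = binarize(weights)
approx = reconstruct(signs, scale)
print(signs, scale, approx)
```

Every weight collapses to the same magnitude, which hints at why stability relative to FP16 is a concern.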

Modular (Mojo 🔥) Discord

- Concurrency Conundrums and GPU Woes in Mojo: Engineers debated the adoption of structured concurrency in Mojo's library amidst concerns about its asynchronous capabilities, stressing the importance of heterogeneous hardware like TPU support. A strong sentiment echoed where Mojo succeeds in execution speed, but falls short on hardware acceleration when compared to cost-effective solutions like TPUs.

- Rapid RNG and Math Mastery on Mojo: Work on a ported xoshiro PRNG has led to significant speed gains on both laptops and using SIMD, while efforts are underway to bring numpy-equivalent functionality to Mojo through the NuMojo project. Trends show a community push towards expanding numerical computation capabilities and efficiency in Mojo.

- Addressing Mojo’s Memory Mania: Controversy sparked over memory management practices in Mojo, with discussions on the need for context managers versus reliance on RAII and the intricacies of UnsafePointers. The debate underlined the community’s commitment to refining Mojo’s ownership and lifetimes paradigms.

- TPU Territory Tackled: The MAX engine's potential compatibility with TPUs became a highlight, with community members exploring resources like OpenXLA for guidance on machine learning compilers. Forward-looking discussion touched on MAX engine roadmap updates, including inevitable Nvidia GPU support.

- Nightly Update Notes Nuances for Mojo: A freshly released nightly Mojo compiler version `2024.6.1105` brought to light changes including the removal of `SliceNew` and `SIMD.splat`, plus the arrival of `NamedTemporaryFile`. This continuous integration culture within the community exemplifies the lean towards iterative and fast-paced development cycles.
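For context on the xoshiro port mentioned above, here is a plain-Python sketch of one member of that family, xoshiro256**, seeded via splitmix64. Which variant the Mojo port uses is not stated, so the choice of variant is an assumption; Python is used only for readability, and none of the reported speed gains would show up here.

```python
MASK64 = (1 << 64) - 1

def _rotl(x: int, k: int) -> int:
    """Rotate a 64-bit value left by k bits."""
    return ((x << k) | (x >> (64 - k))) & MASK64

def _splitmix64(seed: int):
    """Yield 64-bit values used only to expand a small seed into a full state."""
    x = seed & MASK64
    while True:
        x = (x + 0x9E3779B97F4A7C15) & MASK64
        z = x
        z = ((z ^ (z >> 30)) * 0xBF58476D1CE4E5B9) & MASK64
        z = ((z ^ (z >> 27)) * 0x94D049BB133111EB) & MASK64
        yield z ^ (z >> 31)

class Xoshiro256StarStar:
    def __init__(self, state):
        state = [v & MASK64 for v in state]
        if len(state) != 4 or not any(state):
            raise ValueError("state must be four 64-bit words, not all zero")
        self.s = state

    @classmethod
    def from_seed(cls, seed: int):
        stream = _splitmix64(seed)
        return cls([next(stream) for _ in range(4)])

    def next_u64(self) -> int:
        s = self.s
        result = (_rotl((s[1] * 5) & MASK64, 7) * 9) & MASK64
        t = (s[1] << 17) & MASK64
        s[2] ^= s[0]
        s[3] ^= s[1]
        s[1] ^= s[2]
        s[0] ^= s[3]
        s[2] ^= t
        s[3] = _rotl(s[3], 45)
        return result

    def random(self) -> float:
        """Uniform float in [0, 1) built from the top 53 bits."""
        return (self.next_u64() >> 11) / (1 << 53)

rng = Xoshiro256StarStar.from_seed(42)
samples = [rng.random() for _ in range(3)]
print(samples)
```

The state update is a handful of shifts, XORs, and rotates with no branches, which is what makes the generator a natural fit for SIMD lanes.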

Perplexity AI Discord

- Apple Dips Toes in Personal AI: Apple announced "Apple Intelligence," incorporating ChatGPT-4o into Siri and writing tools to enhance system-wide user experience while prioritizing privacy, as shared in a Reddit post.

- iOS 18 and WWDC 2024 Embrace AI: With the vision set at WWDC 2024, the new machine learning-powered Photos app in iOS 18 categorizes media more intelligently, coupled with significant AI integrations and software advances across the Apple ecosystem.

- Is Rabbit R1 a Gadget Gone Wrong?: Members exchanged views on the legitimacy of the Rabbit R1 device, mentioning its sketchy crypto ties, and speculated about its capabilities with an Android OS, as discussed in a Coffeezilla video.

- Perplexity AI - Promise Meets Skepticism: Confusion circulates around Perplexity's Pages and Pro features with desktop/web limitations; meanwhile, Perplexity's academic sourcing accuracy faces scrutiny, with users highlighting Google's NotebookLM as potentially superior.

- Integration Headaches and Hidden Keys: Introducing Perplexity AI into custom GPT applications saw roadblocks, prompting discussions on model name updates and safe API practices after an API key was mistakenly exposed, documented at Perplexity's API guidelines.

LM Studio Discord

- PDF Parsing Pursuits: Engineers are exploring local tools for parsing structured forms in PDFs, with Langchain emerging as a suggestion for incorporating local LLMs to extricate fields efficiently.

- WebUI Woes and Workarounds: A gap in official WebUI support for LMStudio has led users to employ the llama.cpp server and text-generation-webui to interact with the tool from remote PCs.

- California AI Bill Brews Controversy: SB 1047 sparks lively debate pertaining to its perceived impact on open-source AI, with fears that it may concentrate AI development among few corporations and encroach on model creators' liabilities indefinitely. Dan Jeffries' tweet provides insights into the discussion.

- GPU Upgrades and ROCm Insights: Engineers discuss upgrading to GPUs with higher VRAM for running large AI models and recommend AMD's ROCm as a speedier alternative to OpenCL for computational tasks. Concerns with multi-GPU performance in LMStudio lead some to alternative solutions like stable.cpp and Zluda for CUDA drop-ins on AMD.

- Model Merging Mastery: The community has been active in merging models (e.g., Boptruth-NeuralMonarch-7B), evaluating new configurations like Llama3-FiditeNemini-70B, and tackling operational issues like token limit bugs in AutogenStudio with fixes tracked on GitHub.

OpenAI Discord

- Apple Makes Splashes in AI Waters: Apple has announced AI integration into its ecosystem and the community is buzzing about the implications for the competition and device performance. Attendees of WWDC 2024 eagerly discussed "Apple Intelligence," a system deeply integrated into Apple's devices, and are examining the available Apple Foundation Models overview.

- Concerns and Debates on AI and Privacy: Privacy worries surge with AI advancements, with users voicing concerns over potential data misuse, advocating for more secure, on-device AI features instead of relying solely on cloud computing. The discourse reflects the dichotomy where tech enthusiasts express skepticism over cloud and on-premises solutions alike.

- GPT-4: High Hopes Meet Practical Hiccups: OpenAI's promise of upcoming ChatGPT updates stirred excitement, yet users report app freezes and confusion over the new voice mode's delayed release. Additionally, developers are frustrated by apparent policy violations in the GPT Store hindering their ability to publish or edit GPTs.

- Time Management Across Time Zones: AI engineers are strategizing on how to tackle time zone challenges with the Completions API, weighing options such as using timestamp conversions via external libraries or synthetic data to mitigate risks and enhance precision. Consensus veers towards UTC as the baseline for consistent model output, with user-specific timezone adjustments conducted post-output.

- Meet Hana AI: Your New Google Chat Teammate: Hana AI is presented as an AI bot for Google Chat poised to boost team efficiency by handling various productivity tasks and is currently available for free. Engineers can trial and give feedback on the bot, which promises to aid managers and executives, accessible through the Hana AI website.
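The UTC-baseline approach from the time zone discussion above can be sketched as follows: have the model emit timestamps in UTC only, then convert for each user in ordinary code after the fact. The function name and the sample timestamp are hypothetical, and a fixed UTC-4 offset is used so the example has no external dependencies.

```python
from datetime import datetime, timedelta, timezone, tzinfo

def to_user_time(utc_timestamp: str, user_tz: tzinfo) -> datetime:
    """Parse an ISO 8601 timestamp, treat it as UTC if naive, and convert for display."""
    parsed = datetime.fromisoformat(utc_timestamp)
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(user_tz)

# Hypothetical model output, kept in UTC so it is unambiguous.
model_output = "2024-06-11T17:00:00+00:00"

# Fixed offset for illustration; it does not track daylight saving changes.
eastern_daylight = timezone(timedelta(hours=-4))

local = to_user_time(model_output, eastern_daylight)
print(local.isoformat())
```

In practice a named zone such as `zoneinfo.ZoneInfo("America/New_York")` is the safer choice, since it handles daylight saving transitions that a fixed offset cannot.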

HuggingFace Discord

- Quest for Optimal Medical Diagnosis AI Stalled: No consensus was reached on the best large language model (LLM) for medical diagnosis within the discussions.

- Semantic Leap in CVPR Paper Accessibility: A new app indexing CVPR 2024 paper summaries with semantic search capabilities was shared and is accessible here.

- Tech Hiccups with Civitai Files: A member encountered `TypeError: argument of type 'NoneType' is not iterable` when using `diffusers.StableDiffusionPipeline.from_single_file()` with safetensors files from Civitai.

- AI Legislation Looms Large Over Open-Source: A tweet thread criticized the California AI Control Bill for potentially hampering open-source AI development, raising alarm over strict liabilities for model creators.

- Anime Meets Diffusion with Wuerstchen3: A user unveiled an anime-finetuned version of SoteDiffusion Wuerstchen3 and provided a useful link to Fal.AI's documentation for API implementation details.

Nous Research AI Discord

Character Codex Unleashed: Nous Research has unveiled the Character Codex dataset with data on 15,939 characters from diverse sources like anime, historical archives, and pop icons, now available for download.

Technical Discussions Ablaze: Engaging conversations included the potential stifling of creativity by RLHF in LLMs, contrasting with the success of companies like Anthropic. The debate also covered model quantization and pruning methods, with a strategy for LLaMA 3 10b aiming to trim model sizes smartly.

Knowledge in Sync: Members discussed the Chain of Thought (CoT) retrieval technique used by Cohere for multi-step output construction and proposed a hybrid retrieval method that might pair elastic search with bm25 + embedding and web search.
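One common way to combine ranked lists from several retrievers is reciprocal rank fusion (RRF). The members did not specify a fusion method, so RRF is swapped in here as an assumption, and the document IDs and rankings are made up.

```python
def reciprocal_rank_fusion(rankings, k: int = 60):
    """Merge ranked lists of doc IDs; each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical top results from three retrievers.
bm25_hits = ["a", "b", "c"]
embedding_hits = ["b", "c", "d"]
web_hits = ["b", "a"]

fused = reciprocal_rank_fusion([bm25_hits, embedding_hits, web_hits])
print(fused)
```

RRF only needs ranks, not comparable scores, which is convenient when mixing keyword, embedding, and web search results.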

Code Meets Legislation: There was a standout critique of CA SB 1047, arguing it poses a risk to open-source AI, while a member shared Dan Jeffries' insights on the matter. A counter proposal, SB 1048, aimed at safeguarding AI innovation was also mentioned.

New Rust Library Rigs the Game: The release of 'Rig', an open-source library in Rust for creating LLM-powered applications, was greeted with interest; its GitHub repo is a treasure trove of examples and tools for AI developers.

Cohere Discord

- Apple's AI Game-Changer: Apple has launched 'Apple Intelligence' at WWDC 2024, integrating ChatGPT with Siri for an improved user interface across iPhones, iPads, and Macs, sparking security concerns and debates. The announcement details were shared in this article.

- Job Hunt Reality Check: An aspiring Cohere team member shared their frustration over job rejections despite notable hackathon successes and ML experience, sparking discussions on whether personal referrals trump qualifications.

- Cohere's Developer Dialogue: Cohere has introduced Developer Office Hours, a forum for developers to address their concerns and engage directly with the Cohere team. A reminder for an upcoming session was posted with an invitation to participate.

- Feedback Flourishes: Members expressed high satisfaction with the new Developer Office Hours format offered by Cohere, complimenting the team for fostering an engaging and relaxed environment.

- Engage with Expertise: Cohere encourages member engagement and offers an opportunity for developers to expand their knowledge and troubleshoot with the team through the Developer Office Hours. The next session is scheduled for June 11, 1:00 PM ET, and accessible via this Discord Event.

Latent Space Discord

- Apple Goes All-In on AI Integration: Apple announced the integration of AI throughout their OS, focusing on multimodal I/O and user experience while maintaining privacy standards. For AI tasks, they introduced "Private Cloud Compute," a secure system for offloading computation to the cloud without compromising user privacy.

- ChatGPT Finds a New Home: Partnerships were announced between Apple and OpenAI to bring ChatGPT to iOS, iPadOS, and macOS, signaling a significant move towards AI-enabled operating systems. This would bring conversational AI directly into the hands of Apple users later this year.

- Mistral Rides the Funding Wave: AI startup Mistral secured a €600M Series B funding for global expansion, a testament to investors' faith in the future of artificial intelligence. The round follows a surge of investments in the AI space, highlighting the market's growth potential.

- PostgreSQL's AI Performance Edges Out Pinecone: PostgreSQL's new open-source extension, "pgvectorscale," is hailed for outperforming Pinecone in AI applications, promising better performance and cost efficiency. This marks a significant development in the database technologies supporting AI workloads.

- LLMs in the Real World: Mike Conover and Vagabond Jack featured on the Latent Space podcast, sharing their experiences with deploying Large Language Models (LLMs) in production and AI Engineering strategies in the finance sector. Discussions center around practical considerations and strategies for leveraging LLMs effectively in industry contexts.

LlamaIndex Discord

- Advanced Knowledge Graph Bait: A special workshop focusing on "advanced knowledge graph RAG" is scheduled with Tomaz Bratanic from Neo4j, aiming to explore LlamaIndex property graph abstractions. Engineers are encouraged to register for the event taking place on Thursday at 9am PT.

- Parisian AI Rendezvous: @hexapode will showcase a live demo at the Paris Local & Open-Source AI Developer meetup featuring several prominent companies including Koyeb, Giskard, Red Hat, and Docker at Station F in Paris on 20th June at 6:00pm, with opportunities for others to demo their work by applying here.

- LlamaIndex Snafus and Workarounds: Users are seeking help with the integration of various query engines and LLM pipelines, such as combining SQL, Vector Search, and Image Search using LlamaIndex and querying a vector database with potential OpenAI Chat Completion fallbacks. For projects involving SQL db retrieval and analysis with Llama 3, exploring text-to-SQL pipelines and consulting LlamaIndex’s advanced guides is recommended.

- Berkeley Brainstorming: A UC Berkeley research team is exploring the terrain of custom RAG systems, seeking input from experienced engineers to navigate the complexity of building, deploying, and maintaining such systems.

- The Need for Speed in Sparse Vector Generation: Generating and uploading sparse vectors in hybrid mode with Qdrant and LlamaIndex is too slow for some users, with suggestions hinting at leveraging GPUs locally or using an API to hasten the process.

LAION Discord

- LAION Caught in Controversy: The LAION dataset was featured on Brazilian TV receiving criticism; the issue stems from a claim by Human Rights Watch that AI tools are misusing children's online personal photos, as discussed here.

-

Privacy and Internet Literacy Debated: Engineers expressed concerns over widespread misunderstanding of data privacy on the internet, touching on the grave problems caused by billions of users lacking knowledge on the subject.

-

LlamaGen Moves Image Generation Forward: The announced LlamaGen model demonstrates a significant step in image generation, leveraging language model techniques for visual content creation, as detailed in their research paper.

-

CAMB AI's MARS5 Goes Open Source: The TTS model, MARS5, developed by CAMB AI, has been made open source for community use, with a Reddit post inviting feedback and further technical discussion available on this thread.

-

Safety in Visual Data Sets: The LlavaGuard project, detailed here, proposed a model aimed at increasing safety and ethical compliance in visual dataset annotations.

Interconnects (Nathan Lambert) Discord

- Apple Intelligence Divides Opinions: Engineers gave mixed feedback on OpenAI's collaboration with Apple, suggesting the integration into Apple Intelligence may be superficial. The official announcement nonetheless emphasizes user privacy, despite rumor and skepticism (Read more). Comparative benchmarks for Apple's on-device and server models aroused curiosity about their performance against peers.

- Creating Clear Distinctions: Apple's strategic approach to separate Apple Intelligence from Siri has sparked dialogue on potential impacts on user adoption and perceptions of the new system's capabilities.

- Tech Community Anticipates Key Interview: The forthcoming interview of François Chollet by Dwarkesh Patel has engineers eager for a possible shift in the AGI timeline debate, highlighting the importance of informed questioning rooted in Chollet’s research on intelligence measures.

- TRL Implementation Debated: Caution was raised about implementing TRL, citing the technology as "unproven". One member's plan to submit a Pull Request (PR) for TRL received active encouragement and a review offer from another community member.

- Support in Community Contributions: The spirit of collaboration is evident as a member plans to contribute to TRL and receives a pledge for review, showcasing the guild’s culture of mutual support and knowledge sharing.

OpenInterpreter Discord

- Apple Intelligence on the AI Radar: Community showed interest in the potential integration of Open Interpreter with Apple's privacy-centric AI capabilities outlined on the Apple Intelligence page. This could lead to leveraging the developer API to enhance AI functionalities across Apple devices.

- SB 1047 in the Line of Fire: Dan Jeffries criticized the California AI Control and Centralization Bill (SB 1047), introduced by Dan Hendrycks, for its centralized control over AI and the threat it poses to open source AI innovation.

- Arduino IDE Complications on Mac M1 Resolved: An issue with Arduino IDE on Mac M1 chips was addressed through a fix found in a GitHub pull request, but led to additional problems with the Wi-Fi setup on device restarts.

- Linux as an Open Interpreter Haven: Debate among members highlighted consideration of prioritizing Linux for future Open Interpreter developments, aiming to provide AI-assisted tools independent of major operating systems like Apple and Microsoft.

- Personal Assistant that Remembers: Work on enhancing Open Interpreter with a skilled prompting system that can store, search, and retrieve information like a personal assistant was shared, spotlighting innovation in creating memory retention for AI systems.

- Killian's Insights Captured: A noteworthy discussion followed Killian's recent talk, which was instrumental in casting a spotlight on pertinent AI topics among community members. The recording can be found here for further review.

LangChain AI Discord

- Tagging Troubles with LangChain: Engineers noted that prompts are ignored with the `create_tagging_chain()` function in LangChain, causing frustration as no solution has been offered yet.

- Collaborative Call for RAG Development Insights: UC Berkeley team members are actively seeking discussions with engineers experienced in Retrieval-Augmented Generation (RAG) systems to share challenges faced in development and deployment.

- LangGraph vs LangChain: Interest was shown in understanding the advantages of using LangGraph over the classic LangChain setup, particularly regarding the execution of controlled scripts within LangGraph.

- Awaiting ONNX and LangChain Alliance: There was curiosity about potential compatibility between ONNX and LangChain; however, the conversation didn't progress into a detailed discussion.

- Streamlined Large Dataset Processing Via OpenAI: A comprehensive guide for processing large datasets with the OpenAI API was shared, focusing on best practices like setting environment variables, anonymizing data, and efficient data retrieval with Elasticsearch and Milvus. Related documentation and GitHub issue links were provided for reference.

tinygrad (George Hotz) Discord

- Newcomer Encounters Permission Puzzle: A new member eager to participate in tinygrad development found themselves permission-locked from the bounties channel, preventing them from working on the AMX support bounty. George Hotz resolved the confusion, stating that one must "Become a purple" to gain the necessary access for contribution.

- George Plays Gatekeeper: In response to questions about AMX support in tinygrad, George Hotz hinted that deeper engagement with the community's documentation is required before tackling such tasks, referencing the need to read a specific questions document.

- A Classic Mix-Up: A documentation mishap occurred when a new member cited the wrong guide, referring to "How To Ask Questions The Smart Way", leading to a humorous "chicken and egg problem" moment with George Hotz.

- Back to the Drawing Board: After the back-and-forth, the new contributor decided to take a step back and delve deeper into the tinygrad codebase before returning with more precise questions, showcasing the complexity and dedication required for contributing to such a project.

OpenRouter (Alex Atallah) Discord

- Speedy Service with OpenRouter: OpenRouter has tackled latency issues by utilizing Vercel Edge and Cloudflare Edge networks, ensuring that server nodes are strategically positioned close to users for faster response times.

- Provider Preference in the Pipeline: Although the OpenRouter playground currently lacks a feature for users to select their preferred API provider, plans to implement this capability have been confirmed.

- API Provider Choices for the Tech-Savvy: Users can bypass the lack of direct provider selection in the OpenRouter playground by using the API; a guide to this workaround is accessible in the OpenRouter documentation.

OpenAccess AI Collective (axolotl) Discord

- ShareGPT's Training Veil: When training, ShareGPT does not "see" its own converted prompt format, ensuring a clean training process.

- Apple's AI Struts Its Stuff: Benchmarks are in for Apple's new on-device and server models, showcasing their prowess in instruction following and writing, with comparisons to other leading models.

- Rakuten Models Storm the Scene: Rakuten's AI team has released a set of large language models that perform exceptionally in Japanese, based on Mistral-7B and available under a commercial license, sparking an optimistic buzz among community members.

- JSON Joy Ripples Through Conversation: Engineers had a light-hearted moment appreciating a model's ability to respond in JSON, capturing a mix of amusement and technical appreciation for the model's capability.

- Fine-Tuning Made Simpler with Axolotl: AI practitioners are guided by a new tutorial for fine-tuning on RunPod, which outlines a streamlined process for fine-tuning large language models with helpful YAML examples across various model families.

Datasette - LLM (@SimonW) Discord

- Calm Before the Coding Storm: Vincent Warmerdam recommends calmcode.io for training models, with users acknowledging the site for its helpful content on model training strategies and techniques.

- RAGged but Right: A Stack Overflow blog post details chunking strategies for RAG (retrieval-augmented generation) implementations, stressing the role of text embeddings to accurately map source text into the semantic fabric of LLMs, enhancing the grounding in source data.
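As a rough illustration of the simplest chunking strategy for RAG, fixed-size chunks with overlap, here is a minimal Python sketch. The function name `chunk_text` and its default sizes are assumptions made for illustration and are not taken from the blog post; a real pipeline would more likely split on tokens or sentences and then embed each chunk.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap by `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a chunk boundary visible in both neighbouring chunks, at the cost of some duplicated text in the index.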

Torchtune Discord

- Clarity on TRL's KL Plots for DPO: TRL's Direct Preference Optimization (DPO) implementation does not plot Kullback–Leibler (KL) divergence directly, but such KL plots do exist in the Proximal Policy Optimization (PPO) trainer of TRL (Transformer Reinforcement Learning). They can be found in the PPO trainer's code, as pointed out in TRL's GitHub repository.

MLOps @Chipro Discord

AI Community Unites at Mosaic Event: Meet Chip Huyen in person at the Mosaic event at Databricks Summit for networking with AI and ML experts. The gathering is set for June 10, 2024, in San Francisco.

Mozilla AI Discord

Given the lack of substantial discussion points and insufficient context in the provided snippet, there are no significant technical or detailed discussions to summarize for an engineer audience.

The LLM Perf Enthusiasts AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI Stack Devs (Yoko Li) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The DiscoResearch Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The YAIG (a16z Infra) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

LLM Finetuning (Hamel + Dan) ▷ #general (37 messages🔥):

- Heuristics for model size in fine-tuning: A member raised a general question about choosing model size for fine-tuning based on task complexity and mentioned the difficulty of extensive evaluations for rapid prototyping. They inquired if experienced users develop a sense of model capabilities over time.

- Karpathy's impactful videos: Discussion highlights the educational value of Andrej Karpathy’s videos, with one member sharing a full implementation repository and supplementary notes on GPT from Karpathy's earlier tutorials.

- NCCL timeout issue: A user shared an error log indicating a timeout at NCCL work, seeking advice from the community. The log highlights complications in the ProcessGroupNCCL operations.

- Gorilla Project shines in tool use and API generation: The Gorilla project was mentioned as an interesting case for fine-tuning models to improve tool use and API generation. The member highlighted the project’s resources, including the GoEx runtime and leaderboards, and shared a YouTube video outlining the project.

- LLMs vs traditional ML/DL: A discussion on transitioning to LLMs from traditional ML/DL pointed out the importance of leveraging existing models before starting from scratch. Core principles from ML/DL like data prep, EDA, and model pipelines remain largely relevant in the LLM lifecycle.

LLM Finetuning (Hamel + Dan) ▷ #🟩-modal (44 messages🔥):

- Clarification on Bonus Credits: Members inquired about the distribution of bonus credits for using Modal. It was clarified that the second disbursal of credits would happen a bit after midnight UTC on Tuesday.

- "Simple Scalable Serverless Services" Slide Share: A user shared a Google Slides link for Charles' presentation on "Mastering LLMs - Simple Scalable Serverless Services".

- GitHub Projects and Repositories: Multiple GitHub repositories were shared, including charlesfrye/minimodal and awesome-modal, with additional contribution guidance and project links.

- Discussion on Cost Management: Users discussed best practices around preventing cost blowups with serverless services like AWS S3 or Vercel. Recommendations included setting high-ball cost estimates and load balancer limits to prevent unexpected expenses.

- Notebooks Feature in Modal: When asked about the development of the Notebooks feature within Modal, Charles confirmed it works with some limitations and suggested that users raise issues if they encounter problems. An example project link on mistral-finetune-modal was shared to illustrate its use.

LLM Finetuning (Hamel + Dan) ▷ #hugging-face (4 messages):

- Spending Hugging Face Credits: A member inquired about effective ways to use Hugging Face credits, beyond inference endpoints. Other members suggested using credits for Spaces with GPUs and AutoTrain, which enables automatic training and fast deployment of custom machine learning models by simply uploading data, with tasks like LLM finetuning, image classification, and text classification.

- New Form Inquiry: Another member asked if the new form is available yet, but no reply was documented.

Link mentioned: AutoTrain – Hugging Face

LLM Finetuning (Hamel + Dan) ▷ #replicate (10 messages🔥):

- Credits Troubleshooting Inquiries on Replicate: Multiple users reported not receiving their Replicate credits despite following given instructions. A member of the support team responded, asking for direct messages with usernames and emails to resolve the issue.

- Replicate Credits Without Billing Setup: A user inquired if billing setup on Replicate was necessary to receive credits. The response clarified that billing setup is not required to get credits.

- Feedback on Training and Deploying OSS Tool Calling Models: A member shared their experience working with a starter repo for training and deploying OSS tool calling models. They sought feedback on whether their setup was correct and expressed interest in further discussion.

LLM Finetuning (Hamel + Dan) ▷ #langsmith (7 messages):

- Email communication on credits: A user mentioned they had sent an email about their credits. Later, another user confirmed an email response regarding this matter.

- Adding a payment method not initially required: It's explained that "Accessing your credits requires a valid payment method on file," but users don't need to have billing set up when filling out the credits form. Reference link provided.

- Org ID missing from form: A user received assistance with credits even though they had left the org ID blank on their form submission. "I've gone in and added these credits for you."

- Credits issue resolved with email: A user was asked to provide the email they used for the credit form via DM or email to [email protected]. This was part of resolving the credit addition issue.

LLM Finetuning (Hamel + Dan) ▷ #berryman_prompt_workshop (3 messages):

- Chain of Thought leads to flawed conclusions: One member discussed using Chain of Thought (CoT) reasoning for diagnosis steps, but their model (Llama-3 8B) sometimes arrives at incorrect conclusions. An example given was the model incorrectly stating "This is a violation of the rules" when checking whether a patient's age fell within an interval.

LLM Finetuning (Hamel + Dan) ▷ #whitaker_napkin_math (2 messages):

- Estimating VRAM Consumption for DPO Training Is Tricky: A member raises concerns about the fluctuating VRAM consumption during DPO training, seeking estimation methods to avoid out-of-memory (OOM) errors. Another member suggests starting with the longest sequences to prevent unexpected VRAM spikes mid-training.
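One way to read the "longest sequences first" suggestion is to order the data so the most memory-hungry batches run at the very start, which makes an out-of-memory failure show up on step one instead of hours in. The sketch below assumes that sequence length is the main driver of per-batch memory; the helper name `longest_first_batches` is hypothetical, and it uses a single token list per example for simplicity (in DPO each example carries a chosen and a rejected response, so the sort key would be the longer of the two).

```python
def longest_first_batches(examples: list[list[int]], batch_size: int) -> list[list[list[int]]]:
    """Group tokenized examples into batches, longest sequences first."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    # Sort by token count, descending, so the first batch is the worst case.
    ordered = sorted(examples, key=len, reverse=True)
    return [ordered[i:i + batch_size] for i in range(0, len(ordered), batch_size)]
```

If the first batch fits in memory, later batches of shorter sequences should not need more, under the stated assumption.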

LLM Finetuning (Hamel + Dan) ▷ #workshop-4 (9 messages🔥):

- Apple jumps into LoRA swapping game: A member pointed out a new technique from Apple on "dynamically swapping out LoRA's", getting peers excited and curious about its similarity to S-LoRA. Another asked for resources to implement dynamic specialized LoRA adapters based on query type, leading to suggestions like "Lorax" and mentions of related work like CBTM for LoRAs and semantic similarity over prompt and dataset per task.

- Workshop insights predate Apple’s announcement: Another member highlighted how this concept of dynamic LoRA swapping discussed by Apple was covered in a workshop, albeit for cloud applications, making the on-device adaptation exciting and ahead of time. Recounting insights from "Travis," they appreciated the foresight and detailed understanding the workshop provided.

LLM Finetuning (Hamel + Dan) ▷ #clavie_beyond_ragbasics (129 messages🔥🔥):

- Jeremy Howard shares Hacker's Guide to Language Models: Jeremy Howard recommended a YouTube video titled "A Hackers' Guide to Language Models". His video is described as "deeply informative," covering the comprehensive utility of modern language models.

- Ben Clavié's Resources on Reranking and NER: Ben shared multiple valuable resources including a GitHub link for rerankers and detailed explanations on GLiNER, a robust model for zero-shot entity recognition. He highlighted its capability to handle in-house jargon and specific categories.

- Discussion on Cosine Distance vs. L2 Distance: Members discussed the relative merits of cosine distance and normalized Euclidean (L2) distance for vector search in RAG applications. They concluded that "cosine distance is equal to normalized Euclidian distance"; more precisely, on unit-normalized vectors the squared L2 distance is twice the cosine distance, so both produce the same ranking.

- Sharing of Additional Tools and Libraries: Members shared tools like Excalidraw for making block diagrams and various resources for fine-tuning transformers such as Sentence Transformers.

- Challenges with Video Hosting on Maven: There were issues with video playback of Ben Clavié's talk on Maven, apparently due to Zoom's transcription process. Efforts to resolve this were ongoing, aiming to make the materials accessible again.
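The cosine-versus-L2 point above can be checked numerically. For unit-length vectors, the squared L2 distance equals 2 × (1 − cosine similarity), so sorting neighbours by either measure gives the same order. The following is a small standard-library sketch; the function names and example vectors are illustrative only.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine_distance(a, b):
    """1 - cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (na * nb)

def squared_l2(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

a, b = [3.0, 4.0], [1.0, 0.0]
lhs = squared_l2(normalize(a), normalize(b))  # squared L2 on unit vectors
rhs = 2.0 * cosine_distance(a, b)             # twice the cosine distance
print(math.isclose(lhs, rhs))
```

In practice this means that once embeddings are normalized, the choice between the two metrics in a vector store is mostly a matter of what the index supports.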

LLM Finetuning (Hamel + Dan) ▷ #jason_improving_rag (1 messages):

- Exploring faster entity extraction over function calling: One user discussed integrating categories as metadata to potentially improve a reranker. They chose an entity extraction + router model approach instead of function calling due to complexity and speed benefits.

- Seeking insights on model training specifics: Another user sought details on the complexity and training specifics of models using function calling. They asked about the sample size needed, the number of functions prepared, and specifics of product data complexity, such as relationships like "is_accessory_of" or "bought_together."

LLM Finetuning (Hamel + Dan) ▷ #jeremy_python_llms (1 messages):

- Excitement for Fasthtml: A user expressed their excitement for fasthtml, highlighting their struggles with scaling Streamlit apps into more complicated applications. They mentioned that fasthtml might save them from having to learn Typescript.

LLM Finetuning (Hamel + Dan) ▷ #saroufimxu_slaying_ooms (148 messages🔥🔥):

- Offload Optimizer State to Avoid OOM: Users discussed strategies to offload optimizer state to CPU or CUDA managed memory to prevent Out of Memory (OOM) errors in model training. They emphasized the trade-off in performance unpredictability and the importance of fused optimizers to speed up `optimizer.step` operations.

- Shared Insights on Efficient Deep Learning Techniques: Several users exchanged insights on advanced optimization techniques like bnb 8bit casting and LoRA. They explored how these techniques save memory and enhance model performance during training.

- Extensive Resource Sharing: Members shared numerous resources on model training optimization, including links to Perfetto UI, torch profiler, and various GitHub repositories. Specific URLs included a YouTube video on 8-bit Deep Learning and a Google Drive with related slides and traces.

- Enthusiastic Discussion on Model Quantization and FSDP: Users actively discussed the benefits and complexities of quantization, especially with tools like FSDP2, emphasizing efficient memory management. The conversation highlighted the practical implementations and challenges of working with NF4 tensors and large model training.

- Interactive and Appreciative Community Engagement: The chat was filled with supportive interactions, humor, and praise, particularly for the informative talks and materials shared by specific members. The community expressed gratitude for detailed presentations, with one member humorously adding, "Memes are the best method of information dissemination to a crowd like us."

LLM Finetuning (Hamel + Dan) ▷ #paige_when_finetune (166 messages🔥🔥):

- Popcorn classification gets geeky: The channel humorously discusses using synthetic popcorn popping time data for fine-tuning LLMs and performing survival analyses on popcorn kernels. One member quips, "whoever does a case study on popcorn kernels following the ftcourse repo will be legend."

- Inverse Poisson distribution sparks math talk: Detailed discussions emerge around the topic of inverse Poisson distribution, with one user sharing a math stack exchange link to explain formulas and stochasticity.

- Gemini API catches attention: Members chat about the capabilities of Google's Gemini Flash supporting audio input, referencing documentation. Another member asks about the process of ingesting audio into the Gemini API.

- Prompt engineering tips and tricks: A key discussion revolves around using models to create prompts for themselves, including meta-prompt strategies and examples of self-improving prompt techniques. One user shares, "You can ask models to write prompts for themselves (or other models)".

- Thankful endnote and resource sharing: The chat wraps up with gratitude expressed for Paige's talk, and several important resources such as email contact and additional reading materials. Paige's personal website and documentation for context caching are shared as useful follow-up links.

LLM Finetuning (Hamel + Dan) ▷ #wing-axolotl (2 messages):

- LLAMA3 LORA training faces OOM issue on RTX4090: A user reported encountering OOM (Out of Memory) errors while attempting to merge LORA weights with the base model on an RTX4090, despite trying suggested solutions like `lora_on_cpu: true` and `gpu_memory_limit` options. They referenced the Axolotl GitHub README for details.

- Axolotl dataset formats and resources shared: The same user shared various links to help understand Axolotl's supported dataset formats and HuggingFace chat templates. These include Axolotl documentation, HuggingFace chat templating, and a related GitHub repository on Chat Templates for HuggingFace.

LLM Finetuning (Hamel + Dan) ▷ #charles-modal (8 messages🔥):

- Codingwitcher enjoys Modal's unique approach: Codingwitcher shared their excitement about deploying Mistral for inference on Modal. They described the experience as "magical," especially appreciating the hot-reload feature on a remote machine.

- Ed157 seeks help for fine-tuning setup: Ed157 requested guidance on what to input in the datasets and tokens parts of the config YAML file for instruction fine-tuning. They provided a template to illustrate their needs.

- DamonCrockett faces technical challenges: DamonCrockett encountered an error message related to a missing volume ID while running a llm-finetuning example on Modal. Despite successful previous runs, they sought assistance to resolve this issue.

- Charles redirects support queries: Charles_irl suggested reaching out to the Modal team on Slack for DamonCrockett's issue and recommended the <#1242542198008975430> channel for Ed157's question about axolotl.

- Danbecker consolidates presentation discussion: Danbecker instructed to use the <#1241044231829848125> channel for the upcoming discussion about Charles's presentation to keep everything organized.

LLM Finetuning (Hamel + Dan) ▷ #fireworks (7 messages):

- Users request credits to be added: Multiple members requested that credits be added to their accounts. Member account IDs such as `i-00dda2`, `dimitry-611a0a`, `tanmaygupta9-70b723`, `contact-ff3a2c`, `ferdousbd-24e887`, `yorick-van-zweeden-e9b5c2`, and `ashiqur-cd00ce` were shared in hopes of resolving the credit issue.

LLM Finetuning (Hamel + Dan) ▷ #emmanuel_finetuning_dead (4 messages):

- **Request for Fine-Tuning Example**: One user asked for an example that illustrates the **fine-tuning process**, such as a notebook, GitHub repo, or blog post. They inquired whether this process can be done with **existing frameworks like TRL or Axolotl**.

- **Dataset Preparation Standard**: Another member shared a [link](https://platform.openai.com/docs/guides/fine-tuning/preparing-your-dataset) to the OpenAI guidelines for preparing datasets for fine-tuning, establishing it as a standard reference.

- **Two-Step Fine-Tuning Process**: A member clarified a two-step process for fine-tuning, which includes pretraining and alignment during finetuning. The discussion emphasized *"adding a 'head' layer on the pre-trained model's transformer stack for NLP tasks"* and using QLoRA to mitigate OOM errors.

- **Technical Breakdown of Mistral Model**: The member provided an example with detailed code illustrating a **MistralForCausalLM** model. The explanation detailed how the last layer `lm_head` functions and how **QLoRA** replaces linear layers with low-rank matrices to handle out-of-memory errors.
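To make the low-rank idea concrete, here is a minimal sketch of a LoRA-style update for a single linear layer, written with plain Python lists. It is not the member's code. The frozen weight `W` is left untouched and a trainable rank-`r` product `B·A` is added on top; QLoRA additionally stores the frozen `W` in 4-bit precision, which is omitted here. The names `lora_forward`, `trainable_params`, and `scale` are assumptions for illustration.

```python
def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def lora_forward(W, A, B, x, scale=1.0):
    """Compute W x + scale * B (A x); only A and B would be trained."""
    base = matvec(W, x)               # frozen weight, shape d_out x d_in
    update = matvec(B, matvec(A, x))  # rank-r path: A is r x d_in, B is d_out x r
    return [b + scale * u for b, u in zip(base, update)]

def trainable_params(d_in, d_out, r):
    """Return (parameters in the full weight, parameters in the rank-r adapter)."""
    return d_in * d_out, r * (d_in + d_out)
```

For a 4096 × 4096 layer with `r = 8`, `trainable_params(4096, 4096, 8)` gives 16,777,216 weights in the full matrix against 65,536 in the adapter, which is where most of the optimizer-state and gradient memory savings come from.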

LLM Finetuning (Hamel + Dan) ▷ #west-coast-usa (1 messages):

jonbiz: Schedules allowing, we could hang out? See who else is interested?

LLM Finetuning (Hamel + Dan) ▷ #predibase (2 messages):

- User reports receiving $25 in credits: A user named David reported signing up and receiving $25 in credits, sharing his tenant ID (c4697a91). Michael Ortega responded, stating he would look into the matter for David.

LLM Finetuning (Hamel + Dan) ▷ #openpipe (1 messages):

_iw3: Hi I also still saw a credit of $100 instead of $222, who should I follow up to check? thanks

LLM Finetuning (Hamel + Dan) ▷ #openai (16 messages🔥):

- Scoring prompts toolkit suggestions: One user asked for recommendations on tools for scoring a large list of prompts with features like error handling and resuming. Recommendations included Promptlayer and Promptfoo, with one user specifically seeking a CLI solution.

- Using OpenAI credits: Users discussed various ways to utilize OpenAI credits. One user mentioned using them for embedding tasks and trying out techniques from recent RAG talks, and another mentioned generating content based on their book.

- Issues with receiving credits: A user reported completing a form and emailing support multiple times but still not receiving their OpenAI credits. They provided their Org ID and User ID in an attempt to resolve the issue.

- Tier 2 and GPT-4 access: There was a discussion about accessing GPT-4/4o with a Tier 2 usage plan. One user shared that they were only able to access it after adding a payment method and credits.
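For the prompt-scoring request above (error handling plus resuming), a small script is sometimes enough. The sketch below is a generic pattern and not the API of Promptlayer or Promptfoo: `score_prompts`, the JSONL record layout, and the caller-supplied `score_fn` are all hypothetical. Results are appended to a checkpoint file as they arrive, so a rerun skips prompts that were already processed.

```python
import json
import os

def score_prompts(prompts, score_fn, checkpoint_path):
    """Score prompts one by one, appending each result to a JSONL checkpoint.

    Prompts already present in the checkpoint are skipped, so a rerun resumes
    where the previous run stopped. Failures are recorded instead of raised.
    """
    done = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path, encoding="utf-8") as f:
            for line in f:
                if line.strip():
                    record = json.loads(line)
                    done[record["prompt"]] = record
    with open(checkpoint_path, "a", encoding="utf-8") as f:
        for prompt in prompts:
            if prompt in done:
                continue  # already scored (or already failed) in an earlier run
            try:
                record = {"prompt": prompt, "score": score_fn(prompt), "error": None}
            except Exception as exc:  # keep going; the failure is stored in the file
                record = {"prompt": prompt, "score": None, "error": str(exc)}
            f.write(json.dumps(record) + "\n")
            f.flush()  # make progress durable before moving on
            done[prompt] = record
    return [done[p] for p in prompts]
```

Failed prompts are also skipped on resume; to retry them, delete their lines from the checkpoint file before rerunning.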

Stability.ai (Stable Diffusion) ▷ #general-chat (402 messages🔥🔥):

- Member grapples with slow internet: A member reported a twelve-hour download for Lora Maker due to hitting data cap limits, resulting in slower speeds. They mentioned that "YT works" even with slower speeds compared to "50kb/s download from Plytorch.org."

- Tension over SD3 release: Enthusiasm and anticipation build up as members discuss the imminent release of Stable Diffusion 3. One member humorously asked, "You mean I have to go to bed again and sleep before it releases?" while another looked forward to better voxel art generation: "I hope sd3 is also good at voxel art".

- Exploration of AI models and platforms: Users compared platforms like Huggingface and Civitai for model availability. A member mentioned finding most Loras and checkpoints on Civitai but noted "there are tons of legal things available in torrent format".

- SDXL vs. traditional upscaling debate: Discussion ensued on whether upscaling SD1.5 images to 1024 achieves similar results to SDXL trained for 1024x1024. One user proposed a practical solution: "Give it a try when you have SDXL upscale to 2048."

- Challenges with Stable Diffusion setup: A member faced difficulties running Stable Diffusion with an AMD GPU, expressing frustration: "What's the problem? ... its not using my gpu". They were advised to revisit installation guides and seek out technical support channels for troubleshooting.

Links mentioned:

- Instagram post by visualsk2, May 15, 2024: "[Vision III/Part. 4] ✨🤍 SK2• Fast day • #photography #longexposure #explore #trending #explorepage" (33K likes, 265 comments)
- Hard Muscle - SeaArt AI Model
- Samuele “SK2” Poggi on Instagram, June 8, 2024: "[Vision IV/Part.6] Thanks so much for 170.000 Followers ✨🙏🏻 Only a few days left until the tutorial is released. #grainisgood #idea #reels #framebyframe #photography #blurry #explorepage" (16K likes, 130 comments)
- GitHub - lks-ai/ComfyUI-StableAudioSampler: The New Stable Diffusion Audio Sampler 1.0 In a ComfyUI Node. Make some beats!
- Home :: AiTracker
- Hard Muscle - v1.0 | Stable Diffusion Checkpoint

Unsloth AI (Daniel Han) ▷ #general (164 messages🔥🔥):

- Expect Multigpu Support in Early July 2024: Members eagerly anticipate the release of multigpu, with a tentative date set for early July 2024. One member humorously mentioned, "2025 but no seriously, early July, 2024."

- LoRA Inference Interface and vLLM: There's interest in an inference interface that allows enabling or disabling LoRA adapters during inference. A user discovered that vLLM supports this feature and wondered whether it could work with exl2 or TabbyAPI.

- Overfitting Issues in Training: A member is experiencing overfitting in model training, resulting in lower performance on simpler tasks. Suggestions included trying data augmentation, leveraging weight decay, and ensuring diverse and comprehensive training data.

- Fine-Tuning and EOS Token Discussions: Members discussed the importance of EOS tokens while training instruct models on general texts. One suggested using `BOS_token + entire text + EOS_token` for continuous pre-training.

- Hugging Face AutoTrain Adds Unsloth Support: Hugging Face AutoTrain now supports Unsloth, enabling users to fine-tune LLMs more efficiently. The new feature was met with excitement and appreciation.
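The `BOS_token + entire text + EOS_token` suggestion amounts to wrapping each raw document before tokenization. A minimal sketch follows; the helper name and the `<s>` / `</s>` token strings are placeholders for illustration, and in practice the tokens would be read from the tokenizer of the model being trained.

```python
def format_for_continued_pretraining(text: str, bos_token: str, eos_token: str) -> str:
    """Wrap one raw document as BOS_token + entire text + EOS_token."""
    return f"{bos_token}{text}{eos_token}"

# Placeholder token strings for illustration only; real values depend on the model.
example = format_for_continued_pretraining("Some document text.", "<s>", "</s>")
print(example)
```

Some tokenizers already prepend a BOS token when encoding, so it is worth checking the encoded output to avoid ending up with two of them.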

Unsloth AI (Daniel Han) ▷ #random (72 messages🔥🔥):

- Revamping Design Colors: Several users discussed improvements to the chatbot interface, suggesting changes like using white backgrounds instead of red, turning squares into diamonds, and desaturating colors. One user remarked, "There we go a less stupid looking version."

- Llama for Scalable Image Generation: The conversation touched upon LlamaGen, an autoregressive model for scalable image generation. The project was discussed with enthusiasm, and a GitHub link was shared.

- Apple's New AI Integration at WWDC: Apple’s announcement of personalized AI ("Apple Intelligence") sparked discussions regarding its efficiency and potential for integrating large models. Users debated its implementation and potential language support, with comments like "Apple's perfectly integrating things to their app."

- Training on the Fly: The feasibility and benefits of on-the-fly model training were debated. Concerns were raised about training costs and quality, while some saw potential in daily finetuning, likening real-time training to financial applications.

- Online Machine Learning Limitations: Discussions highlighted potential issues with online machine learning, such as catastrophic forgetting and quality of human data inputs. One user mentioned, "There are probably several reasons it hasn't worked out. I can guess catastrophic forgetting is one of them."

Unsloth AI (Daniel Han) ▷ #help (67 messages🔥🔥):

- Tokenizers are not specific to Unsloth models: "Any service tokenizer will do (llama.cpp tokenizer, huggingface tokenizer). Matter of fact, probably other than llama.cpp is using huggingface tokenizer (including unsloth)".

- Unsloth model size confusion resolved: A user questioned why saving the model only resulted in a 100MB file. It was clarified: "Save_pretrained_merged should save the whole thing".

- Sample CSV format for fine-tuning confirmed: A user asked if their CSV format was correct for fine-tuning; the format "question,answer" was discussed. They were directed to a YouTube video for detailed guidance.

- Multi-GPU support coming in July: "Currently unsloth works on single GPU. We will be rolling out multiGPU support in early July", and clarified that Unsloth Pro is mainly for enterprises.

- Can't finetune GGUF models directly: An attempt to fine-tune GGUF models led to an explanation that it is not supported and suggested using new experimental interoperability features in Hugging Face transformers.
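
The "question,answer" CSV layout discussed above can be sketched with Python's standard `csv` module. The sample rows are hypothetical, and the exact columns a given fine-tuning notebook expects may differ, so treat this as an illustration of the file shape only.

```python
import csv
import io

# Hypothetical sample rows using the "question,answer" columns discussed above.
rows = [
    {"question": "What is overfitting?", "answer": "Fitting noise in the training data."},
    {"question": "What does EOS mean?", "answer": "End of sequence."},
]

# Write the rows to an in-memory CSV with a header line.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["question", "answer"])
writer.writeheader()
writer.writerows(rows)
csv_text = buffer.getvalue()

# Read the CSV back to confirm every row carries both fields.
parsed = list(csv.DictReader(io.StringIO(csv_text)))
print(csv_text)
```

Using `csv.DictWriter` rather than manual string joins keeps commas and quotes inside answers correctly escaped.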

Unsloth AI (Daniel Han) ▷ #showcase (3 messages):

- Try xTherapy AI Finetuned on Llama 3 8B: Check out this therapy AI, finetuned on llama 3 8b using unsloth. Feedback on improvements is welcomed.

- CAMB AI Releases MARS5 TTS: CAMB AI has open-sourced their 5th iteration of MARS TTS model on GitHub. They were also featured on VentureBeat and are inviting feedback from the community.

Eleuther ▷ #general (62 messages🔥🔥):

- 4-bit Quantization for Llama 3 Faces Challenges: A member sought advice on 4-bit quantizing Llama 3 8b with negligible performance degradation for training SAEs. Other members suggested trying sharding or Tensor Parallelism, but noted the potential challenges and experimental nature of these methods (see Tensor Parallelism in PyTorch).

- Debate Over Quantization Methods: Members debated which quantization methods are most effective, discussing IQ4_xs, HQQ, AWQ, and EXLv2. A link to a blog post was shared, illustrating various quantization techniques for Apple Silicon with claims of better performance than traditional methods.

- Federated Learning Considerations: A user raised the idea of Apple training on personal data without moving it off-device, sparking a discussion about federated learning. Concerns about privacy and potential misuse of gradient data were discussed, illustrating the complex nature of federated implementations.

- Geographical Time Series Data Prediction: A member asked about experiences with geographical time series data and predicting events at specific locations. Another member shared their experience using Google Earth Engine + LSTM for similar predictions.
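
For readers unfamiliar with what 4-bit quantization does to weights, here is a minimal sketch of plain symmetric round-to-nearest quantization of one block. It is a baseline illustration only and is not IQ4_xs, HQQ, AWQ, or EXLv2, which use more elaborate schemes; the function names and sample weights are hypothetical.

```python
def quantize_block_4bit(values):
    """Symmetric round-to-nearest quantization of one block to integers in [-7, 7]."""
    # One shared scale per block; fall back to 1.0 for an all-zero block.
    scale = max(abs(v) for v in values) / 7 or 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


# Hypothetical weights standing in for one block of a weight matrix.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.28]
codes, scale = quantize_block_4bit(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

The reconstruction error per weight is bounded by half the block scale, which is why a single outlier in a block degrades precision for every other weight in it.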

Eleuther ▷ #research (118 messages🔥🔥):

- Quest for Online RL in Regular-Scale LLMs: A member is seeking research papers on online RL for regular-scale LLMs but finds theoretical assumptions often unsubstantiated in practice.

- VALL-E 2 Advances Zero-Shot TTS: VALL-E 2 achieves human parity in zero-shot TTS, with improvements in Repetition Aware Sampling and Grouped Code Modeling. Yet, the project page has fluctuating availability due to premature leakage.

- LlamaGen Explores Visual Tokenization: LlamaGen's new models apply autoregressive next-token prediction to visual domains, significantly outperforming popular diffusion models. A discussion emerges about its novel implementation of CFG in autoregressive decoding, reminiscent of methods in previous works.

- Challenges with Transformer Learning Capabilities: Papers like this one outline tasks that standard Transformers struggle to learn without implementing supervised scratchpads. The discussion touches on the impracticality of unsupervised scratchpads due to inefficiencies in gradient descent for complex token interactions.

- Efficacy of Influence Functions: The utility and limitations of influence functions are probed, linking to foundational explanations like Koh and Liang's paper, with emphasis on approximations and practical applicability.
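
The idea of classifier-free guidance (CFG) in autoregressive decoding mentioned above can be sketched as a blend of two sets of next-token logits. This uses the commonly cited CFG formula and is not taken from LlamaGen's code; the logit values and the `cfg_logits` helper are hypothetical.

```python
def cfg_logits(cond, uncond, scale):
    """Blend conditional and unconditional logits: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


# Hypothetical next-token logits from a conditioned and an unconditioned forward pass.
cond_logits = [2.0, 0.5, -1.0]
uncond_logits = [1.0, 1.0, 0.0]

guided = cfg_logits(cond_logits, uncond_logits, scale=2.0)

# Greedy pick of the next token from the guided logits.
next_token = max(range(len(guided)), key=guided.__getitem__)
print(guided, next_token)
```

With `scale=1.0` the result equals the conditional logits, and larger scales push the distribution further away from the unconditional prediction.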

Eleuther ▷ #interpretability-general (6 messages):

- Challenges with DeepSeek model integration: A member inquired about interpreting the DeepSeek-LLM-7B model and its addition to Transformerlens. Another member confirmed the difficulty, mentioning they had encountered short-circuit issues during their attempts.

- DeepSeek model is LLaMA-based: A helpful comment noted the DeepSeek-LLM-7B model's architecture is based on LLaMA, suggesting it's straightforward to integrate into Transformerlens with some hacks. They also advised double-checking output probabilities to avoid surprises.

- Repo for Multilingual Transformers: A member shared a GitHub link for a repo accompanying a paper on "Do Llamas Work in English? On the Latent Language of Multilingual Transformers". This resource could potentially offer insights or methods applicable to the DeepSeek model integration.

Link mentioned: llm-latent-language/utils.py at 1054015066a4fa20386765d72601d03aa7ef5887 · Butanium/llm-latent-language: Repo accompanying our paper "Do Llamas Work in English? On the Latent Language of Multilingual Transformers". - Butanium/llm-latent-language

Eleuther ▷ #lm-thunderdome (6 messages):

- Enable chat templating with the --apply_chat_template flag: Eleuther now supports chat templating with HF models using the --apply_chat_template flag. However, this feature is not turned on by default.

- Specify stop sequences to resolve task issues: Some users found that manually specifying stop sequences helps resolve task-specific issues. However, shuffling choices in `doc_to_choices` did not affect model answers as expected.

- Batch API needs improvement and contributions are welcome: The current implementation of batching in API or local server models is not optimal, especially for OpenAI Batch API integration. Contributions to improve this, particularly with better batching methods, are appreciated.

- Steps for implementing batch API discussed: A high-level implementation of the batch API includes creating a JSONL file, uploading it via API, running the chosen model, and returning run and file IDs for status checks. Async evaluation calls are suggested to smooth the process.

- Utility for rerunning metrics on batch API results files planned: The proposal includes adding a utility to convert responses from OpenAI to per-sample outputs. This will facilitate rerunning metrics on saved results files as part of the harness.
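
The first of the batch API steps described above, creating the JSONL file, can be sketched as follows. The request shape follows OpenAI's documented Batch API input format; the helper name, prompts, and model name are placeholders, and the upload, batch creation, and status-polling steps are deliberately left out. This is not the harness's implementation.

```python
import json


def build_batch_lines(prompts, model):
    """Return one JSON-encoded request per prompt, in Batch API input format."""
    lines = []
    for i, prompt in enumerate(prompts):
        request = {
            "custom_id": f"sample-{i}",  # used later to match responses to samples
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        lines.append(json.dumps(request))
    return lines


# Hypothetical prompts and a placeholder model name.
prompts = ["2 + 2 =", "Capital of France?"]
jsonl_text = "\n".join(build_batch_lines(prompts, model="gpt-3.5-turbo")) + "\n"
print(jsonl_text)
```

Keeping a stable `custom_id` per sample is what makes it possible to convert the returned results file back into per-sample outputs and rerun metrics on it later.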

Eleuther ▷ #multimodal-general (1 messages):

- Seeking LTIP Dataset Alternatives: A user inquired about open-source alternatives to the LTIP dataset used by DeepMind for pre-training Flamingo and GATO. They noted that the datasheet for the LTIP dataset can be found in the appendix of the Flamingo paper and mentioned the now redacted LAION datasets as a previous option.

CUDA MODE ▷ #general (128 messages🔥🔥):

- Evaluating 7950x3D and GeForce RTX 4090 for Builds: A member considered using a Ryzen 7950x with 2x GeForce RTX 4090s for a build but expressed concerns about the 4090's size, power draw, and lack of NVLink communication. Another member recommended the 7950x3D due to its larger L3 cache and minor price increase.

- GPUs for Llama-3 Inference: Members discussed optimal GPUs for Llama-3 inference, with considerations between single RTX 4090 and dual 3090 setups. The latter was preferred for applications requiring more VRAM, although the lack of NVLink on certain models like the 4060Ti and potential PCI bottlenecks were highlighted.

- Challenges in Triton Kernel Development: A new user shared issues with their Triton-based Conv2d/Linear layer implementations, finding them slower than cuDNN counterparts and struggling with debugging inside kernels. They sought advice on good resources and methods to print variables within Triton kernels.

- Discussion on CPU/GPU Configurations for HPC Setups: The conversation delved into various configurations including older Threadripper models, modern Epyc CPUs, and their PCIe lane limitations. Power draw considerations and cooling requirements for heavy GPU setups were also discussed.

- Innovative Use of Waste Heat from GPUs: In jest, a member suggested using GPUs to replace a hot tub heater, which sparked a discussion about sustainable data centers utilizing waste heat for community benefits.

CUDA MODE ▷ #triton (2 messages):

- For Loop is Necessary: A user emphasized the need for a for loop in their code, simply stating, "no, you need to do the for loop."

- Preference for Non-Tuple Syntax in Triton: Another user noted their preference for the non-tuple version of the `load_2d` function in Triton. They explained, "Tuples would only add parentheses imo. So I'd leave it at that."

CUDA MODE ▷ #torch (10 messages🔥):

- Custom C++/CUDA with torch.compile: A member asked if custom C++/CUDA operators compatible with torch.compile allow full graph compilation and are AOT compilable with torch.export. Another member responded that it should allow full graph compilation but was not entirely sure about export. They provided an example for reference: Custom CUDA extensions by msaroufim · Pull Request #135 · pytorch/ao.

- HIP kernels in PyTorch: A user inquired if it's possible to write HIP kernels and use them in PyTorch. Another member suggested that using `load_inline` should work fine.

- Inference optimization for AWQ: A member questioned the lack of inference optimized Triton/CUDA kernels for AWQ, speculating if the heterogeneity in how it quantizes weights poses a challenge. Another member responded by referencing PyTorch int4 matmul documentation.

- CUDA libraries warmup: A user mentioned that they've experienced issues with CUDA libraries that require a warmup period for certain algorithms that torch uses internally.
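
The warmup issue mentioned above is usually handled by discarding the first few runs before timing. Here is a minimal, framework-agnostic sketch; the `benchmark` helper and its defaults are hypothetical, and the timed function here is a trivial stand-in for a real GPU workload.

```python
import time


def benchmark(fn, warmup=3, iters=10):
    """Run fn `warmup` times untimed, then return the mean seconds over `iters` runs."""
    for _ in range(warmup):
        fn()  # untimed: lets libraries pick algorithms, fill caches, and so on
    start = time.perf_counter()
    for _ in range(iters):
        fn()
    # Note: for GPU work, synchronize the device before reading the timer
    # (for example with torch.cuda.synchronize()), since kernel launches are asynchronous.
    return (time.perf_counter() - start) / iters


# Stand-in workload that records each call so the run count can be inspected.
calls = []
avg_seconds = benchmark(lambda: calls.append(1), warmup=3, iters=10)
print(len(calls), avg_seconds)
```

Separating warmup from timed iterations keeps one-time setup costs from inflating the reported average.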

CUDA MODE ▷ #algorithms (1 messages):

- Satabios showcases new model compression package: A user introduced their self-built model compression and inferencing package, Sconce. They invited other members to give the project a star if they liked it and welcomed suggestions for improvements.

CUDA MODE ▷ #cool-links (1 messages):

- Iron Bound interview shared: A member posted a link to an interview on The Amp Hour podcast. The podcast episode is titled "Bunnies Bibelot Bonification."

CUDA MODE ▷ #torchao (2 messages):

- Charles's PR Improves Benchmarking: Charles has submitted a pull request to PyTorch's AO repository that adds support for benchmarking Llama models. This aims to provide "stable eval/benchmarking" functionality within the TorchAO codebase.

- Large N May Not Require Changes: A member noted that if N (sample size) is sufficiently large, additional modifications may not be necessary. The implication is that there may be minimal impact on the outcomes with larger sample sizes.

Link mentioned: Adding Llama to TorchAO by HDCharles · Pull Request #276 · pytorch/ao: Summary: This PR adds funcitonality for stable eval/benchmarking of llama models within the torchao codebase. the model stuff is in torchao/_models/llama with eval being moved to _models/_eval.py m...

CUDA MODE ▷ #llmdotc (42 messages🔥):

- **ThunderKitten Performance Disappoints**: Members discussed **ThunderKitten's** performance, noting it achieved ~75 TFLOPS versus ~400 TFLOPS with **cuBLAS** for basic matmul on **A100**. One explanation was that ThunderKitten might be overly focused on **TMA**, making the non-TMA path massively L1/load-store limited.

- **C++20 in ThunderKitten**: Conversations highlighted that **ThunderKitten** requires C++20, which some members found cumbersome despite the language’s advantages in handling concepts. There was debate on whether similar functionality could be achieved with C++17, albeit with more complex and less readable template code.

- **FP8 Training Stability Concerns**: One member mentioned that despite FP8 training being seen as offering performance improvements, many groups still prefer **FP16** due to stability concerns. They noted that **FP8** is not fully understood or stable, making **FP16** a more predictable choice for training currently.

- **Using Thrust for Elementwise Transformations**: A member inquired about optimizing performance with **Thrust** for elementwise transformations on **Hopper/Blackwell** GPUs. They sought advice on leveraging aligned data for more efficient computations and compared the performance of different methodologies, including **manual TMA**.
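
To put the throughput figures from the ThunderKitten discussion above in context, achieved TFLOPS for a matmul is conventionally computed as `2 * M * N * K` floating-point operations divided by the runtime. The matrix size and timing below are hypothetical, chosen only to land near the ~75 TFLOPS figure quoted by members.

```python
def matmul_tflops(m, n, k, seconds):
    """Achieved TFLOPS for an (m x k) @ (k x n) matmul, counting 2*m*n*k FLOPs."""
    return 2 * m * n * k / seconds / 1e12


# Hypothetical square matmul and measured runtime.
tflops = matmul_tflops(4096, 4096, 4096, seconds=0.00183)

# Ratio of the two figures reported in the discussion (~75 vs ~400 TFLOPS).
ratio = 75 / 400
print(round(tflops, 1), ratio)
```

By the reported numbers, the ThunderKitten path reached a bit under one fifth of the cuBLAS throughput on this workload.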

CUDA MODE ▷ #bitnet (1 messages):

- 1-bit LLMs Training Guide Shared: An important resource for training 1-bit LLMs was shared, which includes tips, code, and FAQs. Check out the comprehensive guide at Microsoft's Unilm GitHub.

Link mentioned: unilm/bitnet/The-Era-of-1-bit-LLMs__Training_Tips_Code_FAQ.pdf at master · microsoft/unilm: Large-scale Self-supervised Pre-training Across Tasks, Languages, and Modalities - microsoft/unilm

CUDA MODE ▷ #sparsity (1 messages):

satabios: Model Compression/Inferencing Package: https://github.com/satabios/sconce

Modular (Mojo 🔥) ▷ #general (134 messages🔥🔥):

-