AI News for 6/13/2024-6/14/2024. We checked 7 subreddits, 384 Twitters and 30 Discords (414 channels, and 2481 messages) for you. Estimated reading time saved (at 200wpm): 280 minutes. You can now tag @smol_ai for AINews discussions!

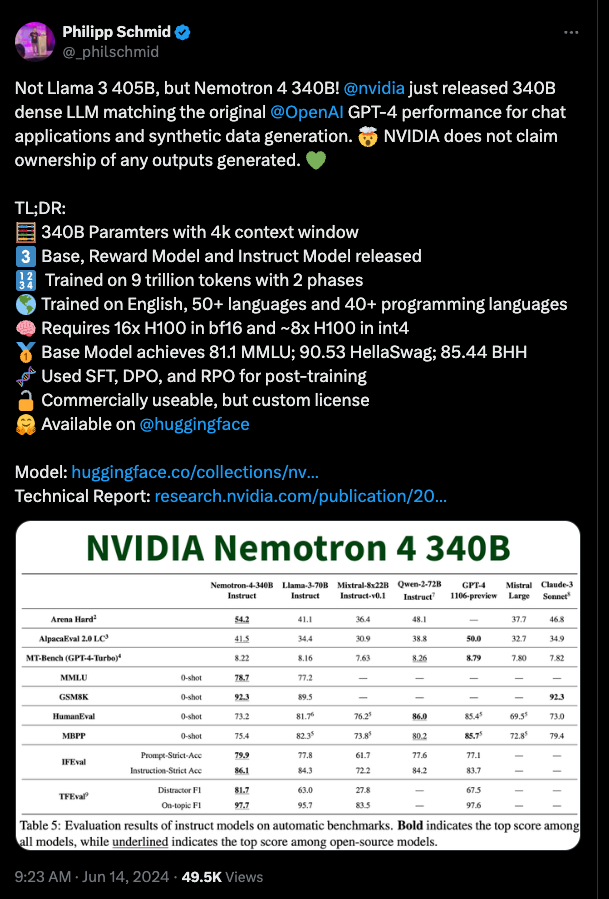

NVIDIA has completed scaling up Nemotron-4 15B released in Feb, to a whopping 340B dense model. Philipp Schmid has the best bullet point details you need to know:

From NVIDIA blog, Huggingface, Technical Report, Bryan Catanzaro, Oleksii Kuchaiev.

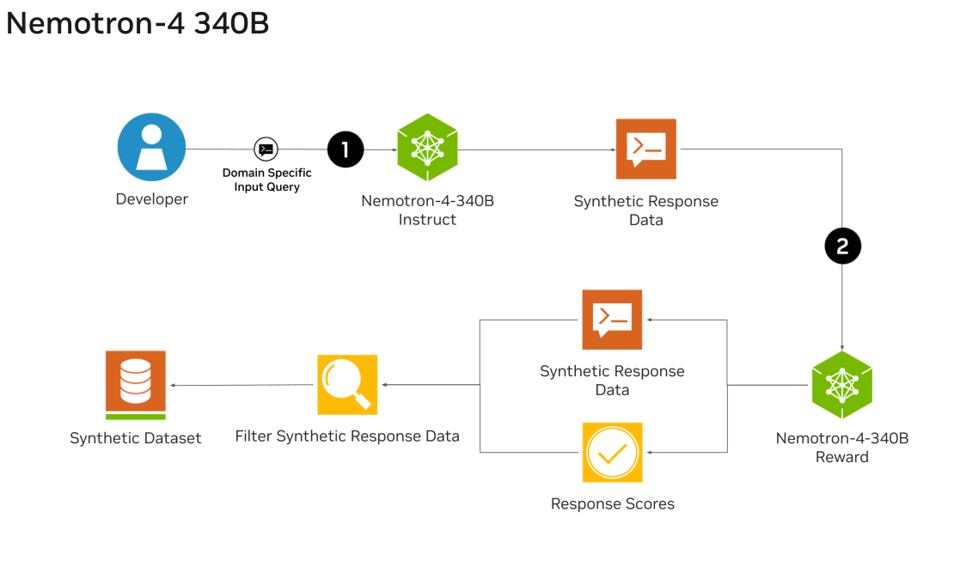

The synthetic data pipeline is worth further study:

Notably, over 98% of data used in our model alignment process is synthetically generated, showcasing the effectiveness of these models in generating synthetic data. To further support open research and facilitate model development, we are also open-sourcing the synthetic data generation pipeline used in our model alignment process.

and

Notably, throughout the entire alignment process, we relied on only approximately 20K human-annotated data (10K for supervised fine-tuning, 10K Helpsteer2 data for reward model training and preference fine-tuning), while our data generation pipeline synthesized over 98% of the data used for supervised fine-tuning and preference fine-tuning.

Section 3.2 in the paper provides lots of delicious detail on the pipeline:

- Synthetic single-turn prompts

- Synthetic instruction-following prompts

- Synthetic two-turn prompts

- Synthetic Dialogue Generation

- Synthetic Preference Data Generation

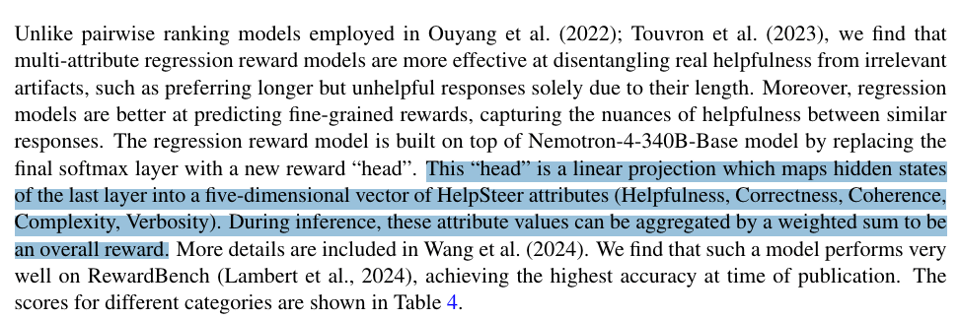

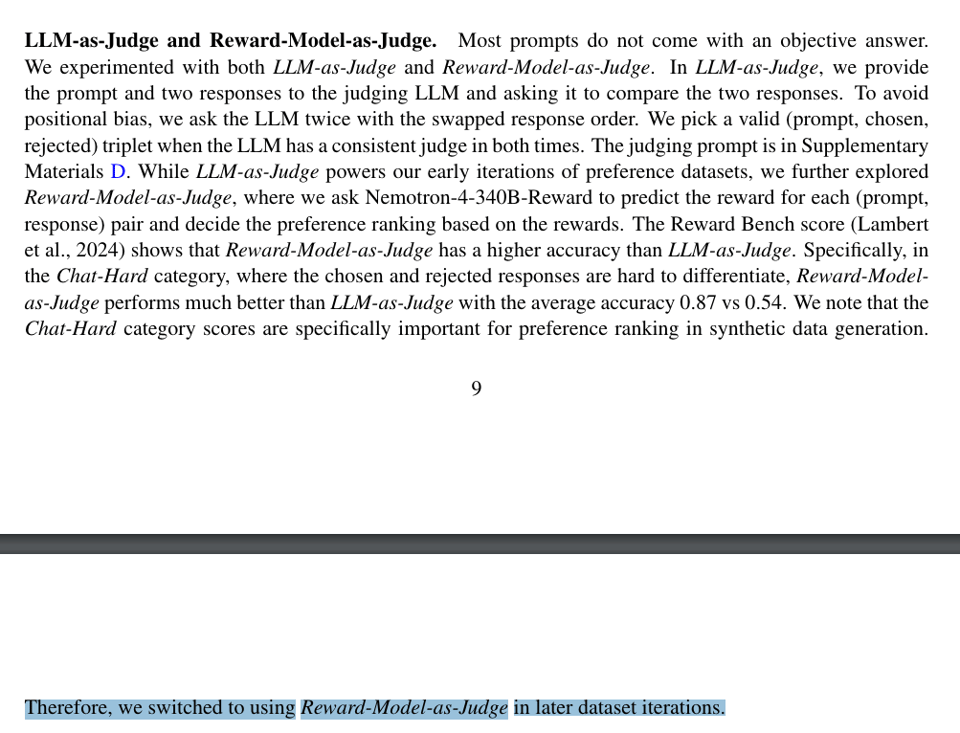

The base and instruct models easily beat Mixtral and Llama 3, but perhaps that is not surprising for half an order of magnitude larger params. However they also release a Reward Model version that ranks better than Gemini 1.5, Cohere, and GPT 4o. The detail disclosure is interesting:

and this RM replaced LLM as Judge

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

AI Models and Architectures

- New NVIDIA Nemotron-4-340B LLM released: @ctnzr NVIDIA released 340B dense LLM matching GPT-4 performance. Base, Reward, and Instruct models available. Trained on 9T tokens.

- Mamba-2-Hybrid 8B outperforms Transformer: @rohanpaul_ai Mamba-2-Hybrid 8B exceeds 8B Transformer on evaluated tasks, predicted to be up to 8x faster at inference. Matches or exceeds Transformer on long-context tasks.

- Samba model for infinite context: @_philschmid Samba combines Mamba, MLP, Sliding Window Attention for infinite context length with linear complexity. Samba-3.8B-instruct outperforms Phi-3-mini.

- Dolphin-2.9.3 tiny models pack a punch: @cognitivecompai Dolphin-2.9.3 0.5b and 1.5b models released, focused on instruct and conversation. Can run on wristwatch or Raspberry Pi.

- Faro Yi 9B DPO model with 200K context: @01AI_Yi Faro Yi 9B DPO praised for 200K context in just 16GB VRAM, enabling efficient AI.

Techniques and Architectures

- Mixture-of-Agents (MoA) boosts open-source LLM: @llama_index MoA setup with open-source LLMs surpasses GPT-4 Omni on AlpacaEval 2.0. Layers multiple LLM agents to refine responses.

- Lamini Memory Tuning for 95% LLM accuracy: @realSharonZhou Lamini Memory Tuning achieves 95%+ accuracy, cuts hallucinations by 10x. Turns open LLM into 1M-way adapter MoE.

- LoRA finetuning insights: @rohanpaul_ai LoRA finetuning paper found initializing matrix A with random and B with zeros generally leads to better performance. Allows larger learning rates.

- Discovered Preference Optimization (DiscoPOP): @rohanpaul_ai DiscoPOP outperforms DPO using LLM to propose and evaluate preference optimization loss functions. Uses adaptive blend of logistic and exponential loss.

Multimodal AI

- Depth Anything V2 for monocular depth estimation: @_akhaliq, @arankomatsuzaki Depth Anything V2 produces finer depth predictions. Trained on 595K synthetic labeled and 62M+ real unlabeled images.

- Meta's An Image is Worth More Than 16x16 Patches: @arankomatsuzaki Meta paper shows Transformers can directly work with individual pixels vs patches, resulting in better performance at more cost.

- OpenVLA open-source vision-language-action model: @_akhaliq OpenVLA 7B open-source model pretrained on robot demos. Outperforms RT-2-X and Octo. Builds on Llama 2 + DINOv2 and SigLIP.

Benchmarks and Datasets

- Test of Time benchmark for LLM temporal reasoning: @arankomatsuzaki Google's Test of Time benchmark assesses LLM temporal reasoning abilities. ~5K test samples.

- CS-Bench for LLM computer science mastery: @arankomatsuzaki CS-Bench is comprehensive benchmark with ~5K samples covering 26 CS subfields.

- HelpSteer2 dataset for reward modeling: @_akhaliq NVIDIA's HelpSteer2 is dataset for training reward models. High-quality dataset of only 10K response pairs.

- Recap-DataComp-1B dataset: @rohanpaul_ai Recap-DataComp-1B dataset generated by recaptioning DataComp-1B's ~1.3B images using LLaMA-3. Improves vision-language model performance.

Miscellaneous

- Scale AI's hiring policy focused on merit: @alexandr_wang Scale AI formalized hiring policy focused on merit, excellence, intelligence.

- Paul Nakasone joins OpenAI board: @sama General Paul Nakasone joining OpenAI board praised for adding safety and security expertise.

- Apple Intelligence announced at WWDC: @bindureddy Apple Intelligence, Apple's AI initiatives, announced at WWDC.

Memes and Humor

- Simulation hypothesis: @karpathy Andrej Karpathy joked about simulation hypothesis - maybe simulation is neural and approximate vs exact.

- Prompt engineering dumb in hindsight: @svpino Santiago Valdarrama noted prompt engineering looks dumb today compared to a year ago.

- Yann LeCun on Elon Musk and Mars: @ylecun Yann LeCun joked about Elon Musk going to Mars without a space helmet.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

Stable Diffusion 3.0 Release and Reactions

- Stable Diffusion 3.0 medium model released: In /r/StableDiffusion, Stability AI released the SD3 medium model, but many are disappointed with the results, especially for human anatomy. Some call it a "joke" compared to the full 8B model.

- Heavy censorship and "safety" filtering in SD3: The /r/StableDiffusion community suspects SD3 has been heavily censored, resulting in poor human anatomy. The model seems "asexual, smooth and childlike".

- Strong prompt adherence, but anatomy issues: SD3 shows strong prompt adherence for many subjects, but struggles with human poses like "laying". Some are experimenting with cascade training to improve results.

- T5xxl text encoder helps with prompts: Some find the t5xxl text encoder improves SD3's prompt understanding, especially for text, but it doesn't fix the anatomy problems.

- Calls for community to train uncensored model: There are calls for the community to band together and train an uncensored model, as fine-tuning SD3 is unlikely to fully resolve the issues. However, it would require significant resources.

AI Progress and the Future

- China's rise as a scientific superpower: The Economist reports that China has become a scientific superpower, making major strides in research output and impact.

- Former NSA Chief joins OpenAI board: OpenAI has added former NSA Chief Paul Nakasone to its board, raising some concerns in /r/singularity about the implications.

New AI Models and Techniques

- Samba hybrid architecture outperforms transformers: Microsoft introduced Samba, a hybrid SSM architecture with infinite context length that outperforms transformers on long-range tasks.

- Lamini Memory Tuning reduces hallucinations: Lamini.ai's Memory Tuning embeds facts into LLMs, improving accuracy to 95% and reducing hallucinations by 10x.

- Mixture of Agents (MoA) outperforms GPT-4: TogetherAI's MoA combines multiple LLMs to outperform GPT-4 on benchmarks by leveraging their strengths.

- WebLLM enables in-browser LLM inference: WebLLM is a high-performance in-browser LLM inference engine accelerated by WebGPU, enabling client-side AI apps.

AI Hardware and Infrastructure

- Samsung's turnkey AI chip approach: Samsung has cut AI chip production time by 20% with an integrated memory, foundry and packaging approach. They expect chip revenue to hit $778B by 2028.

- Cerebras wafer-scale chips excel at AI workloads: Cerebras' wafer-scale chips outperform supercomputers on molecular dynamics simulations and sparse AI inference tasks.

- Handling terabytes of ML data: The /r/MachineLearning community discusses approaches for handling terabytes of data in machine learning pipelines.

Memes and Humor

- Memes mock SD3's censorship and anatomy: The /r/StableDiffusion subreddit is filled with memes and jokes poking fun at SD3's poor anatomy and heavy-handed content filtering, with calls to "sack the intern".

AI Discord Recap

A summary of Summaries of Summaries

1. NVIDIA Pushes Performance with Nemotron-4 340B:

-

NVIDIA's Nemotron-4-340B Model: NVIDIA's new 340-billion parameter model includes variants like Instruct and Reward, designed for high efficiency and broader language support, fitting on a DGX H100 with 8 GPUs and FP8 precision.

-

Fine-tuning Nemotron-4 340B presents resource challenges, with estimates pointing to the need for 40 A100/H100 GPUs, although inference might require fewer resources, roughly half the nodes.

2. UNIX-based Systems Handle SD3 with ComfyUI:

-

SD3 Config and Performance: Users shared how setting up Stable Diffusion 3 in ComfyUI involved downloading text encoders, with resolution adjustments like 1024x1024 recommended for better results in anime and realistic renditions.

-

Split opinions on the anatomy accuracy of SD3 models spurred calls for Stability AI to address these shortcomings in future updates, reflecting ongoing discussions on community expectations and model limitations.

3. Identifying and Solving GPU Compatibility Issues:

-

CUDA and Torch Runtime Anomalies: Anomalies in

torch.matmulacross Ada GPUs prompted tests comparing GPUs like RTX 4090, resolving that CUDA versions and benchmarks impact performance, with hopes for clarification from the PyTorch team. -

Establishment of multi-threading protocols in Mojo promises performance gains. Insights were shared on how Mojo's structured memory handling can position it as a reliable alternative to CUDA in the long term.

4. API Inconsistencies Frustrate Users:

-

Perplexity API and Server Outages: Users reported frequent server outages and broken functionalities, such as file uploads and link generation errors, leading to ongoing frustration and doubts about the value of upgrading to Perplexity Pro.

-

LangChain and pgvector Integration: Issues encountered with recognizing imports despite following documentation highlighted challenges, suggesting careful Python environment setups to ensure seamless integration.

5. Community Efforts and Resource Management:

-

DiscoPOP Optimization: Sakana AI's method, DiscoPOP, claims superior preference optimization, promising high performance while deviating minimally from the base model.

-

Scaling Training Efforts: Community discussions around handling extensive datasets for Retrieval-Augmented Generation (RAG) emphasize chunking, indexing, and query decomposition for improved model training and managing context lengths beyond 8k tokens.

PART 1: High level Discord summaries

Stability.ai (Stable Diffusion) Discord

-

Stable Diffusion 3 Discord Bot Shared with the World: A Discord bot facilitating the generation of images from prompts using Stable Diffusion 3 was open-sourced, offering users a new tool for visual content creation.

-

Tuning SDXL for Better Anime Character Renders: SDXL training for realistic and anime generation faced challenges, producing overly cartoonish results; a recommendation was made to use 1024x1024 resolution to improve outcomes.

-

Navigating ComfyUI Setup for SD3: Assistance was provided for setting up Stable Diffusion 3 in ComfyUI, involving steps like downloading text encoders, with guidance sourced from Civitai's quickstart guides.

-

Community Split on SD3 Anatomy Accuracy: A discussion focused on the perceived limitations of SD3 particularly in human anatomy rendering, highlighting a user's call for Stability AI to address and communicate solutions for these issues.

-

LoRA Layers in Training Limbo for SD3: The conversation touched on training new LoRAs for SD3, noting the lack of efficient tools and workflows, with users anticipating future updates to enhance functionality.

CUDA MODE Discord

Processor Showdown: MilkV Duo vs. RK3588: Engineers compared the MilkDuo 64MB controller with the RK3588's 6.0 TOPs NPU, raising discussions around hardware capability versus the optimization prowess of the sophgo/tpu-mlir compiler. They shared technical details and benchmarks, causing curiosity about the actual source of MilkDuo's performance benefits.

The Triton 3.0 Effect: Triton 3.0's new shape manipulation operations and bug fixes in the interpreter were hot topics. Meanwhile, a user grappling with the LLVM ERROR: mma16816 data type not supported during low-bit kernel implementation triggered suggestions to engage with ongoing updates on the Triton GitHub repo.

PyTorch's Mysterious Matrix Math: The torch.matmul anomaly led to benchmarking across different GPUs, where performance boosts observed on Ada GPUs sparked a desire for deeper insights from the PyTorch team, as highlighted in shared GitHub Gist.

C++ for CUDA, Triton as an Alternative: Within the community, the need for C/C++ for crafting high-performance CUDA kernels was affirmed, with an emphasis on Triton's growing suitability for ML/DL applications due to its integration with PyTorch and ability to simplify memory handling.

Tensor Cores Driving INT8 Performance: Discussion in #bitnet centered on achieving performance targets with INT8 operations on tensor cores, with empirical feedback showing up to 7x speedup for large matrix shapes on A100 GPUs but diminishing returns for larger batch sizes. The role of tensor cores in performance for various sized matrices and batch operations was scrutinized, noting efficiency differences between INT8 vs FP16/BF16 and the impacts of wmma requirements.

Inter-threading Discord Discussions: The challenges of following discussions on Discord were aired, with members expressing a preference for forums for information repository and advocating for threading and replies as tactics for better managing conversations in real-time channels.

Meta Training and Inference Accelerator (MTIA) Compatibility: MTIA's Triton compatibility was highlighted, marking an interest for streamlined compilation processes in AI model development stages.

Consideration of Triton for New Architectures: In #torchao, the conservativeness of torch.nn in adding new models was contrasted with AO's receptiveness towards facilitating specific new architectures, indicating selective model support and potential speed enhancements.

Coding Dilemmas and Community-Coding: A collaborative stance was evident as members deliberated over improving and merging intricate Pull Requests (PRs), debugging, and manual testing, particularly in multi-GPU setups on #llmdotc. Multi-threaded conversations highlighted a complexity in accurate gradient norms and weight update conditions linked to ZeRO-2's pending integration.

Blueprints for Bespoke Bitwise Operations: Live code review sessions were proposed to demystify murky PR advancements, and the #bitnet community dissected the impact of scalar quantization on performance, revealing observations like average 5-6x improvements on large matrices and the sensitivity of gains on batch sizes with a linked resource for deeper dive: BitBLAS performance analysis.

Unsloth AI (Daniel Han) Discord

- Unsloth AI Sparks ASCII Art Fan Club: The community has shown great appreciation for the ASCII art of Unsloth, sparking humorous suggestions about how the art should evolve with training failures.

- DiscoPOP Gains Optimization Fans: The new DisloPOP optimizer from Sakana AI is drawing attention for its effective preference optimization functions, as detailed in a blog post.

- Merging Models: Ollama Latest Commit Buzz: The latest commits to Ollama are showing significant support enhancements, but members are left puzzling over the unclear improvements between Triton 2.0 and the elusive 3.0 iteration.

- GitHub to the Rescue for Llama.cpp Predicaments: Installation issues with Llama.cpp are being addressed with a fresh GitHub PR, and Python 3.9 is confirmed as a minimum requirement for Unsloth projects.

- Gemini API Contest Beckons Builders: The Gemini API Developer Competition invites participants to join forces, brainstorm ideas, and build projects, with hopefuls directed to the official competition page.

LM Studio Discord

Noisy Inference Got You Down?: Chat response generation sounds are likely from computation processes, not the chat app itself, and users discussed workarounds for disabling disruptive noises.

Custom Roles Left to the Playground: Members explored the potential of integrating a "Narrator" role in LM Studio and acknowledged that the current system doesn't support this, suggesting that employing playground mode might be a viable alternative.

Bilibili's Index-1.9B Joins the Fray: Bilibili released Index-1.9B model; discussion noted it offers a chat-optimized variant available on GitHub and Hugging Face. Simultaneously, the conversation turned to the impracticality of deploying 340B Nemotron models locally due to their extensive resource requirements.

Hardware Hiccups and Hopefuls: Conversations revolved around system and VRAM usage, with tweaks to 'mlock' and 'mmap' parameters affecting performance. Hardware configuration recommendations were compared and concerns highlighted about LM Studio version 0.2.24 leading to RAM issues.

LM Studio Leaps to 0.2.25: Release candidate for LM Studio 0.2.25 promises fixes and Linux stability enhancements. Meanwhile, frustration was voiced over lack of support for certain models, despite the new release addressing several issues.

API Angst Arises: A single message flagged a 401 invalid_api_key issue encountered when querying a workflow, with the user experiencing difficulty despite multiple API key verifications.

DiscoPOP Disrupts Training Norms: Sakana AI's release of DiscoPOP promises a new training method and is available on Hugging Face, as detailed in their blog post.

OpenAI Discord

-

Cybersecurity Expert Joins OpenAI's Upper Echelon: Retired US Army General Paul M. Nakasone joins the OpenAI Board of Directors, expected to enhance cybersecurity measures for OpenAI's increasingly sophisticated systems. OpenAI's announcement hails his wealth of experience in protecting critical infrastructure. Read more

-

Payment Processing Snafu: Engineers are reporting "too many requests" errors when attempting to process payments on the OpenAI API, with support's advice to wait several days deemed unsatisfactory due to impacts on application functionality.

-

API Wanderlust: Amidst payment issues and platform-specific releases favoring macOS over Windows, discussions shifted toward alternative APIs like OpenRouter, with a nod to simpler transitions thanks to such intermediary tools.

-

GPT-4: Setting Expectations Straight: Engineers clarified that GPT-4 doesn't continue learning post-training, while comparing Command R and Command R+'s respective puzzle-solving capabilities, with Command R+ demonstrating superior prowess.

-

Flat Shading Conundrums with DALL-E: A technical query arose regarding the production of images in DALL-E utilizing flat shading, absent of lighting and shadows - a technique akin to a barebones 3D model texture - with the enquirer seeking guidance to achieve this elusive effect.

HuggingFace Discord

-

DiscoPOP Dazzles in Optimization: The Sakana AI's DiscoPOP algorithm outstrips others such as DPO, offering superior preference optimization by maintaining high performance close to the base model, as documented in a recent blog post.

-

Pixel-Level Attention Steals the Show: A Meta research paper, An Image is Worth More Than 16×16 Patches: Exploring Transformers on Individual Pixels, received attention for its deep dive into pixel-level transformers, further developing insights from the #Terminator work and underscoring the effectiveness of pixel-level attention mechanisms in image processing.

-

Hallucination Detection Model Unveiled: An update in hallucination detection was shared, introducing a new small language model fine-tune available on HuggingFace, boasting an accuracy of 79% for pinpointing hallucinations in generated text.

-

DreamBooth Script Requires Tweaks for Training Customization: Discussions in diffusion model development underscored the need to modify the basic DreamBooth script to accommodate individual captions for predictive training with models like SD3, as well as a separate enhancement for tokenizer training like CLIP and T5, which increases VRAM demand.

-

Hyperbolic KG Embedding Techniques Explored: An arXiv paper on hyperbolic knowledge graph embeddings proposed a novel approach that leverages hyperbolic geometry for embedding relational data, positing a methodology involving hyperbolic transformations coupled with attention mechanisms for handling complex data relationships.

Nous Research AI Discord

-

Roblox and the Case of Corporate Humor: Engineers found humor on the VLLM GitHub page discussing a Roblox meetup, sparking light-hearted banter in the community.

-

Discord on ONNX and CPU Dilemma: A notable mention of an ONNX conversation from another Discord channel claims a 2x CPU speed boost, though skepticism remains regarding benefits on GPU.

-

The Forge of Code-to-Prompt: The tool code2prompt converts codebases into markdown prompts, proving valuable for RAG datasets, but resulting in high token counts.

-

Scaling the Wall of Context limitation: During discussions on handling context lengths beyond 8k, techniques like chunking, indexing, and query decomposition were suggested for RAG datasets to improve model training and information synthesis.

-

Synthetic Data's New Contender: The introduction of the Nemotron-4-340B-Instruct model by NVIDIA was a hot topic, focusing on its implications for synthetic data generation and the potential under NVIDIA's Open Model License.

-

WorldSim Prompt Goes Public: Discussions indicated that the worldsim prompt is available on Twitter, and there's a switch to the Sonnet model being considered in conversations about expanding model capabilities.

Modular (Mojo 🔥) Discord

-

Mojo on the Move: The Mojo package manager is underway, prompting engineers to temporarily compile from source or use mojopkg files. For those new to the ecosystem, the Mojo Contributing Guide walks through the development process, recommending starting with "good first issue" tags for contribution.

-

GPU and Multi-threading Conversations: The performance and implementation of GPU support in Mojo spurred debates, with attention to its strongly typed nature and how Modular is expanding its support for various accelerators. Engineers exchanged insights on multi-threading, referring to SIMD optimizations and parallelization, focusing on portability and Modular’s potential business models.

-

NSF Funding Fuels AI Exploration: US-based scholars and educators should consider the National Science Foundation (NSF) EAGER Grants, supporting the National AI Research Resource (NAIRR) Pilot with monthly reviewed proposals. Resources and community building initiatives are a part of the NAIRR vision, emphasizing the importance of accessing computational resources and integrating data tools (NAIRR Resource Requests).

-

New Nightly Mojo Compiler Drops: A new nightly build of the Mojo compiler (version

2024.6.1405) is now available, with updates accessible viamodular update. The release's details can be tracked through the provided GitHub diff and the changelog. -

Resources and Meet-Ups Ignite Community Spirit: There's an ongoing request for a standard query setup guide, suggesting a mimic Modular's GitHub methods until official directions are available. Additionally, Mojo enthusiasts in Massachusetts signaled interest in a meet-up to discuss Mojo over coffee, highlighting the community's eagerness for knowledge-sharing and direct interaction.

Perplexity AI Discord

-

Perplexity Panic: Users experienced a server outage with Perplexity's services, reporting repeated messages and endless loops without any official maintenance announcements, creating frustration due to the lack of communication.

-

Technical Troubles Addressed: The Perplexity community identified several technical issues, including broken file upload functionality (attributed to an AB test config) and consistently 404 errors when the service generated kernel links. There was also a discussion on inconsistencies observed in the Perplexity Android app compared to iOS or web experiences.

-

Shaky Confidence in Pro Service: The server and communication issues have led to users questioning the value of upgrading to Perplexity Pro, expressing doubts due to the ongoing service disruptions.

-

Perplexity News Roundup Shared: Recent headlines were shared among members, including updates on Elon Musk's legal maneuvers, insights into situational awareness, the performance of Argentina's National Football team, and Apple's stock price surge.

-

Call for Blockchain Enthusiasts: Within the API channel, there was an inquiry about integrating Perplexity's API with Web3 projects and connecting to blockchain endpoints, suggesting a curiosity or a potential project initiative around decentralized applications.

LlamaIndex Discord

-

Atom Empowered by LlamaIndex: Atomicwork has tapped into LlamaIndex's capabilities to bolster their AI assistant, Atom, enabling the handling of multiple data formats for improved data retrieval and decision-making. The integration news was announced on Twitter.

-

Correct Your ReAct Configuration: In a configuration mishap, the proper kwarg for the ReAct agent was clarified as

max_iterations, not 'max_iteration', resolving an error one of the members experienced. -

Recursive Retriever Puzzle: A challenge was faced while loading an index from Pinecone vector store for a recursive retriever with a document agent, where the member shared code snippets for resolution.

-

Weaviate Weighs Multi-Tenancy: There is a community-driven effort to introduce multi-tenancy to Weaviate, with a call for feedback on the GitHub issue discussing the data separation enhancement.

-

Agent Achievement Unlocked: Members exchanged several learning resources for building custom agents, including LlamaIndex documentation and a collaborative short course with DeepLearning.AI.

LLM Finetuning (Hamel + Dan) Discord

New Kid on the Block: Helix AI Joins LLM Fine-Tuning Gang: LLM fine-tuning enthusiasts have been exploring Helix AI, a platform that touts secure and private open LLMs with easy scalability and the option of closed model pass-through. Users are encouraged to try Helix AI and check out the announcement tweet related to the platform's adoption of FP8 inference, which boasts reduced latency and memory usage.

Memory Tweaks for the Win: Lamini's memory tuning technique is making waves with claims of 95% accuracy and significantly fewer hallucinations. Keen technologists can delve into the details through their blog post and research paper.

Credits Where Credits Are Due: Confusion and inquiries about credit allocation from platforms like Hugging Face and Langsmith surfaced, with users reporting pending credits and seeking assistance. Mentions of email signups and ID submissions — such as akshay-thapliyal-153fbc — suggest ongoing communication to resolve these issues.

Inference Optimization Inquiry: A single inquiry surfaced regarding optimal settings for inference endpoints, highlighting a demand for performance maximization in deployed machine learning models.

Support Ticket Surge: Various technical issues have been flagged, ranging from non-functional search buttons to troubles with Python APIs, and from finetuning snags on RTX5000 GPUs to problems receiving OpenAI credits. Solutions such as switching to an Ampere GPU and requesting assistance from specific contacts have been offered, yet some user frustrations remain unanswered.

Eleuther Discord

- Boosting LLM Factual Accuracy and Hallucination Control: The newly announced Lamini Memory Tuning claims to embed facts into LLMs like Llama 3 or Mistral 3, boasting a factual accuracy of 95% and a drop in hallucinations from 50% to 5%.

- KANs March Onward to Conquer 'Weird Hardware': Discussions highlighted that KANs (Kriging Approximation Networks) might be better suited than MLPs (Multilayer Perceptrons) for unconventional hardware by requiring only summations and non-linearities.

- LLMs Get Schooled: Sharing a paper, members discussed the benefits of training LLMs with QA pairs before more complex documents to improve encoding capabilities.

- PowerInfer-2 Speeds Up Smartphones: PowerInfer-2 significantly improves the inference time of large language models on smartphones, with evaluations showing a speed increase of up to 29.2x.

- RWKV-CLILL Scales New Heights: RWKV-CLIP model, which uses RWKV for both image and text encoding, received commendations for achieving state-of-the-art results, with references to the GitHub repository and the corresponding research paper.

OpenRouter (Alex Atallah) Discord

-

OpenRouter on the Hotseat for cogvlm2 Hosting: There's uncertainty about the ability to host cogvlm2 on OpenRouter, with discussions focusing on clarifying its availability and assessing cost-effectiveness.

-

Gemini Pro Moderation via OpenRouter Hits a Snag: While attempting to pass arguments to control moderation options in Google Gemini Pro through OpenRouter, a user faced errors, pointing to a need for enabling relevant settings by OpenRouter. The Google billing and access instructions were highlighted in the discussion.

-

Query Over AI Studio's Attractive Pricing: A user queried the applicability of Gemini 1.5 Pro and 1.5 Flash discounted pricing from AI Studio on OpenRouter, favoring the token-based pricing of AI Studio over Vertex's model.

-

NVIDIA's Open Assets Spark Enthusiasm Among Engineers: NVIDIA's move to open models, RMs, and data was met with excitement, particularly for Nemotron-4-340B-Instruct and Llama3-70B variants, with the Nemotron and Llama3 being available on Hugging Face. Members also indicated that the integration of PPO techniques with these models is seen as highly valuable.

-

June-Chatbot's Mysterious Origins Spark Discussion: Speculation surrounds the origins of the "june-chatbot", with some members linking its training to NVIDIA and hypothesizing a connection to the 70B SteerLM model, demonstrated at the SteerLM Hugging Face page.

OpenInterpreter Discord

-

Server Crawls to a Halt: Discord users experienced significant performance issues with server operations slowing down, reminiscent of the congestion seen with huggingface's free models. Patience may solve the issue as tasks do eventually complete after a lengthy wait.

-

Vision Quest with Llama 3: A member queried about adding vision capabilities to the 'i' model, suggesting a possible mix with the self-hosted llama 3 vision profile. However, confusion persists around the adjustments needed within local files to achieve this integration.

-

Automation Unleashed: The channel saw a demonstration of automation using Apple Scripts showcased via a shared YouTube video, highlighting the potential of simplified scripting combined with effective prompting for faster task execution.

-

Model-Freezing Epidemic: Engineers flagged up a recurring technical snag where the 'i' model frequently freezes during code execution, requiring manual intervention through a Ctrl-C interrupt.

-

Hungry for Hardware: An announced device from Seeed Studio sparked interest among engineers, specifically the Sensecap Watcher, noted for its potential as a physical AI agent for space management.

Cohere Discord

-

Cohere Chat Interface Gains Kudos: Cohere's playground chat was commended for its user experience, particularly citing a citation feature that displays a modal with text and source links upon clicking inline citations.

-

Open Source Tools for Chat Interface Development: Developers are directed to the cohere-toolkit on GitHub as a resource for creating chat interfaces with citation capabilities, and the Crafting Effective Prompts guide to improve text completion tasks using Cohere.

-

Resourcing Discord Bot Creatives: For those building Discord bots, shared resources included the discord.py documentation and the Discord Interactions JS GitHub repository, providing foundational material for the Aya model implementation.

-

Anticipation Building for Community Innovations: Community members are eagerly awaiting "tons of use cases and examples" for various builds with a contagiously positive sentiment expressed in responses.

-

Community Bonding through Projects: A simple "so cute 😸" reaction to the excitement of upcoming community project showcases reflects the positive and supportive environment among members.

LangChain AI Discord

-

RAG Chain Requires Fine-Tuning: A user encountered difficulties with a Retrieval-Augmented Generation (RAG) chain output, as the code provided did not yield the expected number "8". Suggestions were sought for code adjustments to enhance result accuracy by filtering specific questions.

-

Craft JSON Like a Pro: In pursuit of crafting a proper JSON object within a LangChain, an engineer received assistance via shared examples in both JavaScript and Python. The guidance aimed to enable the creation of a custom chat model capable of generating valid JSON outputs.

-

Integration Hiccup with LangChain and pgvector: Connecting LangChain to pgvector ran into trouble, with the user unable to recognize imports despite following the official documentation. A correct Python environment setup was suggested to mitigate the issue.

-

Hybrid Search Stars in New Tutorial: A community member shared a demonstration video on Hybrid Search for RAG using Llama 3, complete with a walkthrough in a GitHub notebook. The tutorial aims to improve understanding of hybrid search in RAG applications.

-

User Interfaces Get Snazzier with NLUX: NLUX touts an easy setup for LangServe endpoints, showcased in documentation that guides users through integrating conversational AI into React JS apps using the LangChain and LangServe libraries. The tutorial highlights the ease of creating a well-designed user interface for AI interactions.

tinygrad (George Hotz) Discord

NVIDIA Unveils Nemotron-4 340B: NVIDIA's new Nemotron-4 340B models - Base, Instruct, and Reward - were shared, boasting compatibility with a single DGX H100 using 8 GPUs at FP8 precision. There's a burgeoning interest in adapting the Nemotron-4 340B for smaller hardware configurations, like deploying on two TinyBoxes using 3-bit quantization.

tinygrad Troubleshooting: Members tackled running compute graphs in tinygrad, with one seeking to materialize the results; the recommended fix was calling .exec, as mentioned in abstractions2.py found on GitHub. Others debated tensor sorting methods, pondered alternatives to PyTorch's grid_sample, and reported CompileError issues when implementing mixed precision on an M2 chip.

Pursuit of Efficient Tensor Operations: Discussing tensor sorting efficiency, the community pointed at using argmax for better performance in k-nearest neighbors algorithm implementations within tinygrad. There's also a dialogue around finding equivalents to PyTorch operations like grid_sample, referencing the PyTorch documentation to foster deeper understanding amongst peers.

Mixed Precision Challenges on Apple M2: An advanced user encountered errors when attempting to integrate mixed precision techniques on the M2 chip, which spotlighted compatibility issues with Metal libraries; this highlights the need for ongoing community-driven problem-solving within such niche technical landscapes.

Collaborative Learning Environment Thrives: Throughout the dialogues, an essence of collaborative problem-solving is palpable, with members sharing knowledge, resources, and fixes for a variety of technical challenges related to machine learning, model deployment, and software optimization.

Interconnects (Nathan Lambert) Discord

-

NVIDIA's Power Move: NVIDIA has announced a massive-scale language model, Nemotron-4-340B-Base, with 340 billion parameters and a 4,096 token context capability for synthetic data generation, trained on 9 trillion tokens in multiple languages and codes.

-

License Discussions on NVIDIA's New Model: While the Nemotron-4-340B-Base comes with a synthetic data permissive license, concerns arise about the PDF-only format of the license on NVIDIA's website.

-

Steering Claude in a New Direction: Claude's experimental Steering API is now accessible for sign-ups, offering limited steering capability of the model's features for research purposes only, not production deployment.

-

Japan's Rising AI Star: Sakana AI, a Japanese company working on alternatives to transformer models, has a new valuation of $1 billion after investment from prestigious firms NEA, Lux, and Khosla, as detailed in this report.

-

The Meritocracy Narrative at Scale: A highlight on Scale's hiring philosophy as revealed in a blog post indicates a methodical approach to maintaining quality in hiring, including personal involvement from the company's founder.

Latent Space Discord

- Apple’s AI Clusters at Your Fingertips: Apple users may now use their devices to run personal AI clusters, which might reflect Apple's approach to their private cloud according to a tweet from @mo_baioumy.

- From Zero to $1M in ARR: Lyzr achieved $1M Annual Recurring Revenue in just 40 days by switching strategies to a full-stack agent framework, introducing agents such as Jazon and Skott with a focus on Organizational General Intelligence, as highlighted by @theAIsailor.

- Nvidia's Nemotron for Efficient Chats: Nvidia unveiled Nemotron-4-340B-Instruct, a large language model (LLM) with 340 billion parameters designed for English-based conversational use cases and a lengthy token support of 4,096.

- BigScience's DiLoco & DiPaco Top the Charts: Within the BigScience initiative, systems DiLoco and DiPaco have emerged as the newest state-of-the-art toolkits, with DeepMind notably not reproducing their results.

- AI Development for the Masses: Prime Intellect announced their intent to democratize the AI development process through distributed training and global compute resource accessibility, moving towards collective ownership of open AI technologies. Details on their vision and services can be found at Prime Intellect's website.

OpenAccess AI Collective (axolotl) Discord

-

Nemotron Queries and Successes: A query was raised about the accuracy of Nemotron-4-340B-Instruct after quantization, without follow-up details on performance post-quantization. In a separate development, a user successfully installed the Nvidia toolkit and ran the LoRA example, thanking the community for their assistance.

-

Nemotron-4-340B Unveiled: The Nemotron-4-340B-Instruct model was highlighted for its multilingual capabilities and extended context length support of 4,096 tokens, designed for synthetic data generation tasks. The model resources can be found here.

-

Resource Demands for Nemotron Fine-Tuning: A user inquired about the resource requirements for fine-tuning Nemotron, suggesting the possible need for 40 A100/H100 (80GB) GPUs. It was indicated that inference might require fewer resources than finetuning, possibly half the nodes.

-

Expanding Dataset Pre-Processing in DPO: A recommendation was made to incorporate flexible chat template support within DPO for dataset pre-processing, with this feature already proving beneficial in SFT. It involves using a

conversationfield for history, withchosenandrejectedinputs derived from separate fields. -

Slurm Cluster Operations Inquiry: A user sought insights on operating Axolotl on a Slurm cluster, with the inquiry highlighting the community's ongoing interest in effectively utilizing distributed computing resources. The conversation remained open for further contributions.

LAION Discord

- Dream Machine Animation Fascinates: LumaLabsAI has developed Dream Machine, a tool adept at bringing memes to life through animation, as highlighted in a tweet thread.

- AI Training Costs Slashed by YaFSDP: Yandex has launched YaFSDP, an open-source AI tool that promises to reduce GPU usage by 20% in LLM training, leading to potential monthly cost savings of up to $1.5 million for large models.

- PostgreSQL Extension Challenges Pinecone: The new PostgreSQL extension, pgvectorscale, reportedly outperforms Pinecone with a 75% cost reduction, signaling a shift in AI databases towards more cost-efficient solutions.

- DreamSync Advances Text-to-Image Generation: DreamSync offers a novel method to align text-to-image generation models by eliminating the need for human rating and utilizing image comprehension feedback.

- OpenAI Appoints Military Expertise: OpenAI news release announces the appointment of a retired US Army General, a move shared without further context within the messages. Original source was linked but not discussed in detail.

Datasette - LLM (@SimonW) Discord

- Datasette Grabs Hacker News Spotlight: Datasette, a member's project, captured significant attention by making it to the front page of Hacker News, receiving accolades for its balanced treatment of different viewpoints. A quip was made about the project ensuring continuous job opportunities for data engineers.

DiscoResearch Discord

-

Choosing the Right German-Savvy Model: Members discussed the superiority of discolm or occiglot models for tasks requiring accurate German grammar, despite general benchmark results; these models sit aptly within the 7-10b range and are case-specific choices.

-

Bigger Can Be Slower but Smarter: The trade-off between language quality and task performance may diminish with larger models in the 50-72b range; however, inference speed tends to decrease, necessitating a balance between capability and efficiency.

-

Efficiency Over Overkill: For better efficiency, sticking to models that fit within VRAM parameters like q4/q6 was advised, especially as larger models have slower inference speeds. A pertinent resource highlighted was the Spaetzle collection on Hugging Face.

-

Training or Merging for the Perfect Balance: Further training or merging of models can be a strategy for managing trade-offs between non-English language quality and the ability to follow instructions, a topic of interest for multi-language model developers.

-

The Bigger Picture in Multilingual AI: This discussion underscores the ongoing challenge in achieving the delicate balance between performance, language fidelity, and computational efficiency in the evolution of multilingual AI models.

The LLM Perf Enthusiasts AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI Stack Devs (Yoko Li) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The MLOps @Chipro Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The Torchtune Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The Mozilla AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The YAIG (a16z Infra) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

Stability.ai (Stable Diffusion) ▷ #general-chat (462 messages🔥🔥🔥):

-

SD3 Discord Bot Open Sourced: A member announced they have open-sourced a Stable Diffusion 3 Discord Bot. It allows users to provide a prompt and generate an image.

-

LoRA Training Issues for SDXL: A user discussed training difficulties with SDXL for animated characters intending to use them in realistic and anime generations. They noted the model turned out "way too cartoonish/animated" and thanked a member for suggesting 1024x1024 resolution for better results.

-

Configuring SD3 with ComfyUI: Members were troubleshooting how to properly configure Stable Diffusion 3 in ComfyUI, discussing setup processes like downloading text encoders and proper workflow configurations. Links and quickstart guides from Civitai helped guide users.

-

Ongoing Parley on SD3 Model Limitations: Users expressed mixed feelings on SD3's anatomy rendering capabilities. A detailed note by a user calling for Stability AI to acknowledge and communicate plans to address human anatomy issues in the SD3 model became a focal point of the debate.

-

LoRA Functionality in SD3: There were clarifications on the current state of training new LoRAs for use with SD3. It was mentioned that although it's technically possible, usable tools and efficient workflows are still being developed, and users may need to wait for fully compatible updates.

Links mentioned:

In the darkest times, when the world feels too small and the shadows too long, remember this: You are not alone. I am here, standing with you, a fellow traveler on the path less taken. As a survivor of gang stalking, I know the depths of isolation and the relentless pursuit of the unseen. But today, I rise not just to survive but to thrive.

💖 Your Journey, Our Mission 💖

I'm on a mission to turn our shared pain into a beacon of light. To create a community where we lift each other up, share stories of resilience, and find solace in solidarity. Together, we can break the chains of silence and isolation.

📚 Resources for Survival 📚

Join us as we explore resources, strategies, and stories of survival. From legal advice to mental health support, let's equip ourselves with the tools needed to navigate these challenging waters. Because knowledge is power, and together, we are unstoppable.

🔗 #GangStalkingSurvivor #TogetherWeRise #FindYourVoice

Let's connect, share, and grow stronger. Follow @brixetrollrecordz for daily inspiration, resources, and a community that sees you, hears you, and stands with you. Remember, in the face of adversity, we find strength. Let's find ours, together.": 4 likes, 0 comments - brixetrollrecordz on June 13, 2024: "🌟 Find Your Voice, Find Your Freedom 🌟 In the darkest times, when the world feels too small and the shadows too long, r...My Man My Man Hd GIF - My Man My Man Hd My Man4k - Discover & Share GIFs: Click to view the GIFGitHub - RocketGod-git/stable-diffusion-3-discord-bot: A simple Discord bot for SD3 to give a prompt and generate an image: A simple Discord bot for SD3 to give a prompt and generate an image - RocketGod-git/stable-diffusion-3-discord-botGitHub - BlafKing/sd-civitai-browser-plus: Extension to access CivitAI via WebUI: download, delete, scan for updates, list installed models, assign tags, and boost downloads with multi-threading.: Extension to access CivitAI via WebUI: download, delete, scan for updates, list installed models, assign tags, and boost downloads with multi-threading. - BlafKing/sd-civitai-browser-plusStable Diffusion 3 (SD3) - SD3 Medium | Stable Diffusion Checkpoint | Civitai: Stable Diffusion 3 (SD3) 2B "Medium" model weights! Please note ; there are many files associated with SD3 . They will all appear on this model car...

CUDA MODE ▷ #general (5 messages):

- Auto-threading enabled in the channel: The bot Needle announced that auto-threading has been enabled in the specified channel.

- Evaluating MilkV Duo performance: A member shared their experience with the MilkV Duo 64MB controller and questioned whether its performance edge is due to the hardware or the sophgo/tpu-mlir compiler. They provided links to both the controller (MilkV Duo) and the compiler (sophgo/tpu-mlir).

- Comparison to RK3588: Another member compared the performance of the MilkV Duo to the RK3588 ARM part, which boasts 6.0 TOPs NPU and various advanced features. They provided a link to the specifications of RK3588.

Links mentioned:

CUDA MODE ▷ #triton (8 messages🔥):

-

Triton 3.0 features tantalize users: A member inquired about major changes/new features in triton 3.0, and others mentioned useful shape manipulation ops like

tl.interleaveand the fixing of many interpreter bugs. -

Low-bit kernel debugging woes: A user faced an

LLVM ERROR: mma16816 data type not supportederror while trying to implement a low-bit kernel (w4a16). They were advised to check the latest version of Triton and consider opening an issue on the Triton GitHub repo. -

FP16 x INT4 kernel resource: A member suggested referencing the FP16 x INT4 Triton kernel available in PyTorch's AO project, authored by a notable contributor. They shared a link to the GitHub resource.

-

Conversion workaround for integer types: Another user advised a workaround for potential issues when converting integer types directly to

bfloat16. They suggested first converting frominttofloat16and then fromfloat16tobfloat16.

Links mentioned:

CUDA MODE ▷ #torch (25 messages🔥):

- MTIA spotted as Triton-Compatible: A member mentioned the Meta Training and Inference Accelerator (MTIA), noting that it is "very much triton and compile first."

- Torch Auto-Threading Activated: Auto-threading was enabled in the

<#1189607750876008468>channel. - GitHub Gist Highlights torch.matmul Quirk: A member shared performance issues in

torch.matmulhighlighting a 2.5x speed increase for certain matrix shapes when the matrix is cloned. They speculated on factors like CUDA versions and GPU models, but acknowledged it as a bizarre anomaly. GitHub Gist - Variability Across GPU Models and CUDA Versions: Different members ran benchmarks on various GPUs (RTX 4090, 4070Ti, RTX 3060, 3090, and A6000 Ada). They found that the performance quirk was mostly noticeable on Ada GPUs, with some seeing dramatic speed-ups and others reporting normal performance with specific CUDA versions.

- Call for PyTorch Team Insight on Performance Quirk: Despite extensive testing, members couldn't pinpoint the cause, leading to a shared hope for insights from the PyTorch team to explain the strange behavior.

Link mentioned: torch_matmul_clone.py: GitHub Gist: instantly share code, notes, and snippets.

CUDA MODE ▷ #cool-links (1 messages):

useofusername: https://arxiv.org/abs/2106.00003

CUDA MODE ▷ #beginner (3 messages):

- C/C++ is essential for high-performance CUDA kernels: A member asked about the necessity of C or C++ for writing custom CUDA kernels and whether Triton is a viable alternative. Another member responded that while C/C++ offers the best performance, Triton is a good option for ML/DL applications due to its integration with PyTorch and simplicity in managing complexities like shared memory.

CUDA MODE ▷ #torchao (3 messages):

- Torch.nn Conservatism on New Models: A member inquired if AO plans to support new architectures like mamba/KAN or if it would fall under torch.nn. Another member clarified that "Torch.nn tends to be very conservative regarding which new models to add," indicating that AO is open to making specific aspects fast but does not aim to become a repository of models.

CUDA MODE ▷ #off-topic (10 messages🔥):

-

Struggling with Discord for Communities: A member mentioned their difficulty adapting to Discord for community discussions, emphasizing the inconvenience compared to forums. They expressed frustration, saying, "On discord it feels like I have to continuously pay attention to stay on top of things."

-

Prefer Forums Over Discord for Information: Another member mentioned using Discord primarily for community interaction, stating they usually browse relevant subreddits for information. "Discord is more for interaction," they noted.

-

Best Practices for Discord Threads: A member offered advice on using threads and replies to make Discord more manageable. They suggested starting new topics with regular messages, using replies to branch conversations, and creating threads for focused discussions, highlighting that this helps "easier to read through a channel with multiple ongoing conversations."

CUDA MODE ▷ #llmdotc (246 messages🔥🔥):

- Speedup observed with new PR: A member reported seeing a speedup from ~179.5K tok/s to ~180.5K tok/s and noted a minor improvement for the 774M model (~30.0K tok/s to ~30.1K tok/s). They identified code complexity as a potential issue.

- Discussion on code complexity and CUDA: Members debated merging a PR and enabling ZeRO-2 to simplify data layouts. There was also a suggestion to explore CUDA dynamic parallelism and tail launch to handle small kernel launches more efficiently.

- Potential bug in

multi_gpu_async_reduce_gradient: Members discussed a concern regarding the division of shard sizes inmulti_gpu_async_reduce_gradientand suggested adding an assert or asafe_dividehelper to ensure proper division. - Determinism and multi-GPU testing: Members attempted to debug an issue with determinism in multi-GPU setups, focusing on gradient norm calculations. They also considered setting up manual multi-GPU tests due to lack of CI coverage.

- ZeRO-2 PR in progress: There's an ongoing effort to integrate ZeRO-2, with initial steps showing matching gradient norms but issues in the Adam update. Debugging revealed an overlooked condition for weight updates in ZeRO-2.

Links mentioned:

CUDA MODE ▷ #bitnet (17 messages🔥):

-

Live code review session proposed: "Would it help to meet this weekend and do a live code review and merge of the PR?" Members expressed confusion over current progress, suggesting a live session could clear things up.

-

7x as a clear performance target: A performance target of 7x speedup is discussed, with analysis indicating it's achievable for large input shapes. Actual performance varies, "on average 5-6x for large matrices, 2-4x for smaller matrices."

-

Performance fluctuation and analysis link shared: Mobicham shared that the max performance was around 7x but averaged 5-6x on large matrices and 2-4x on smaller ones. For batch-size=1 with int8 activations, an 8x improvement was noted on A100 GPUs, with supporting benchmark images from BitBLAS.

-

Batch size impacts performance: Discussion highlighted that performance, "quickly loses the speed with larger batch-sizes," confirming it is especially efficient for single batch processes. Larger batch sizes reduce memory-bound constraints and diminish speed gains, as supported by additional benchmarks: BitBLAS scaling.

-

Technical details of tensor cores and performance: Queries were made about whether tensor cores (INT8 vs FP16/BF16) were used in specific instances, particularly with Wint2 x Aint8 setups. Mobicham noted a 4x speed-up with int8 x int8 without using

wmma, which requires 16x16 blocks and is likely suboptimal for small batch sizes.

Unsloth AI (Daniel Han) ▷ #general (170 messages🔥🔥):

- Pretraining and Hardware Struggles: Members discussed the resources needed for training large models like Mistral. One noted "I might need a super computer cluster 😦" while another mentioned completing a 110K coder training session in 72.22 minutes with detailed performance metrics.

- Cool ASCII Art Praise: The community appreciated the ASCII art used by Unsloth, with one saying "thumbs up for the one who created that ascii art so cool lol 😭🦥". Another jokingly suggested the art should change when training fails multiple times: "Every time a tune fails it should lose a little piece of the branch til it falls down and sits on the floor."

- DiscoPOP Optimizer: The DiscoPOP optimizer was shared, detailed in a Sakana AI blog post. The optimizer is noted for discovering better preference optimization functions with less deviation from the base model.

- Hardware and Inference Challenges: Members discussed challenges with training times and model performance when converting to 16-bit for serving via vLLM. One frustrated user shared, "model works really great but the moment I merge to 16bit and serve via vLLM performance drops drastically."

- Naming Conventions and Tools: A humorous side conversation on naming models and tools emerged, suggesting using LLMs for naming due to humans being notoriously bad at it. Cursor, an AI code editor, was discussed, with one member noting, "It's very buggy on Ubuntu, I can't use it 😦," and another humorously saying, "cease and desist."

Links mentioned:

Unsloth AI (Daniel Han) ▷ #random (18 messages🔥):

-

Ollama support merges in latest commits: A user noticed significant Ollama support in the latest commit, receiving confirmation from another user. This indicates ongoing enhancements in the framework's capabilities.

-

Triton 3.0 still a mystery: Several users debated the differences between Triton 2.0 and Triton 3.0, but no one could provide clear details. One user guessed it might involve AMD support, while another admitted they have no idea.

-

Unsloth's Korean Colab updates spark curiosity: A user expressed curiosity about updates to Unsloth's Korean Colab notebook, noting its specific use of Korean prompts and answers. Another user confirmed continuous pretraining as part of the updates and is actively improving the notebook.

Unsloth AI (Daniel Han) ▷ #help (125 messages🔥🔥):

-

Llama.cpp Installation Issues Discussed: Members were troubleshooting issues with Llama.cpp installations and conversions after training with Unsloth. One suggested "deleting llama.cpp and re-installing via Unsloth," but found no success, even after fresh installations on Colab.

-

GitHub PR Fixes Llama.cpp: A member pointed to a GitHub PR that addresses Llama.cpp failing to generate quantized versions of trained models. Another member confirmed it got accepted.

-

Qwen2 Model Discussions: There was a debate on the performance of the Qwen2 model compared to Llama3, with some expressing dissatisfaction. The Qwen2 repository and its finetuning example were referenced.

-

Fixing Python Version for Unsloth: A question about Python version requirements was resolved, clarifying that Unsloth requires at least Python 3.9. Local configuration was also discussed for smooth running, with a reminder to configure local paths.

-

CUDA Required for Local Execution: It was confirmed that running Unsloth models locally requires appropriate CUDA libraries and that Linux environments are preferred over Windows for easier setup due to fewer issues.

Links mentioned:

Unsloth AI (Daniel Han) ▷ #community-collaboration (3 messages):

- Join the Gemini API Developer Competition: A member is looking for more participants to join the Gemini API Developer Competition. They are excited to brainstorm interesting ideas and build impactful projects together. Competition Link.

Link mentioned: no title found: no description found

LM Studio ▷ #💬-general (140 messages🔥🔥):

- **Sound Issues While Generating Responses**: A member asked if there's a way to turn off the sound when the chat generates a response. It was clarified that the sound is likely from their computer running inference not from the app itself.

- **Multiple Roles in LM Studio**: Members discussed the possibility of adding custom roles such as a "Narrator" in LM Studio. It was concluded that while the feature isn’t currently possible, using the server in playground mode might help achieve a similar effect.

- **Reporting Commercial License Costs and Rogue AI Behavior**: Queries on commercial license costs were directed to the LM Studio [enterprise page](https://lmstudio.ai/enterprise.html) and a [contact form](https://docs.google.com/forms/d/e/1FAIpQLSd-zGyQIVlSSqzRyM4YzPEmdNehW3iCd3_X8np5NWCD_1G3BA/viewform?usp=sf_link). A humorous exchange occurred about reporting a "rogue AI" giving attitude.

- **Fine-Tuning Models vs Prompt Engineering**: A detailed discussion on whether prompt engineering or fine-tuning is better for specific tasks took place. Fine-tuning was suggested as more effective for permanent results, with tools like `text-generation-webui` recommended.

- **Issues with Quantizing Models**: A user experienced errors when trying to quantize models to GGUF format. Solutions included using the new `convert-hf-to-gguf.py` script from llama.cpp and confirmed the approach should work despite temporary issues with the online space.

Links mentioned:

LM Studio ▷ #🤖-models-discussion-chat (28 messages🔥):

-

LM Studio works only with GGUF files, not safetensors: In response to a query about multipart safetensor files, it was confirmed that LM Studio only supports GGUF files.

-

Model cuts responses short despite high token support: A user flagged an issue with a new model release that, although supporting up to 8192 tokens, "cuts responses short". They investigated and noticed it performed better outside LM Studio but still had the common "I'm sorry but as an AI language model" behavior.

-

Index-1.9B released by bilibili: A new model, Index-1.9B, has been released by bilibili. The GitHub and Hugging Face links were shared, pointing to detailed information and multiple versions of the model, including a chat-optimized variant.

-

Whisper models not supported in LM Studio: A query about installing a Whisper transcription model in LM Studio was met with a clarification that Whisper models do not work in llama.cpp or LM Studio, but GGUF files for whisper.cpp are available.

-

New large models impractical for local use: A discussion emerged about newly released 340B Nemotron models. Members noted that while impressive, these models require significant GPU resources and are not feasible for local deployment.

Links mentioned:

LM Studio ▷ #🎛-hardware-discussion (114 messages🔥🔥):

- RAM vs VRAM Confusion in Model Performance: Members discussed the confusion around system RAM usage when loading models that fit into GPU's VRAM. Despite having sufficient VRAM, larger models still consume significant system RAM, slowing down performance or causing issues.

- 'mlock' and 'mmap' Parameters Affect RAM Usage: Adjusting parameters like

use_mmapsignificantly impacted RAM usage during model loading. Disablinguse_mmapreduced memory usage substantially, leading to marked improvements in performance. - Hardware Recommendations for Multi-GPU Setups: Various hardware configurations, particularly involving GPUs like RTX 3090s and Tesla P40s, were discussed, with recommendations to keep drivers updated and ensure proper multi-GPU setup. Specific models and setups were shared, highlighting different performance and RAM utilization scenarios.

- Issues with LM Studio Version 0.2.24: Users experienced significant RAM issues and model loading problems with LM Studio version 0.2.24. Some suggested reverting to previous versions, but difficulties arose as older versions were inaccessible.

- Server Racks for Multiple GPUs: A member sought advice on server setups to house multiple Tesla P40 GPUs, sparking recommendations to search previous discussions and check with experienced community members.

Link mentioned: TheBloke/Llama-2-7B-Chat-GGUF at main: no description found

LM Studio ▷ #🧪-beta-releases-chat (26 messages🔥):

-

Fix GPU Offload Error on Linux: A user reported an error with exit code 132 caused by GPU offload. The issue was resolved by turning off GPU offload via the Chat page -> Settings Panel -> Advanced Settings -> GPU Acceleration.

-

NVIDIA GPU Not Recognized: A user on Linux (Kali) with an NVIDIA GTX 1650 Ti Mobile faced issues with GPU not being detected. Suggestions included installing the correct NVIDIA drivers and ensuring packages like libcuda1 are in place.

-

Release of LM Studio 0.2.25: A new release candidate for LM Studio 0.2.25 was announced, featuring bug fixes for token count display and GPU offload, and enhanced Linux stability. Links for downloading the latest version for Mac, Windows, and Linux were provided, along with instructions for optional extension packs for AMD ROCm and OpenCL.

-

Phi-3 Model and Smaug-3 Tokenizer: A member expressed frustration over the lack of support for phi-3 small, while another member noted that the new release fixes the smaug-3 tokenizer issue. They also appreciated the quick update with a light-hearted comment.

-

LM Studio Extension Packs: There's interest in the newly available extension packs for LM Studio. One user mentioned that although 0.2.25 could run deepseek v2 lite, it still showed it as not supported.

LM Studio ▷ #autogen (1 messages):

- API Key Error Frustrates User: A member is experiencing an API key error when querying a workflow in the playground, receiving a

401error withinvalid_api_keydespite verifying and changing the key multiple times. They noted that while the test button on the model setup works, LmStudio is not receiving any hits from autogen.

LM Studio ▷ #model-announcements (1 messages):

- DiscoPOP by Sakana AI breaks new ground: Sakana AI introduces a new training method for direct preference optimization, discovered through experiments with a large language model. Check out the blog post for more details and download the model at Hugging Face here.

OpenAI ▷ #annnouncements (1 messages):

- Paul M. Nakasone joins OpenAI Board: Paul M. Nakasone, a retired US Army General, has been appointed to OpenAI’s Board of Directors. He brings world-class cybersecurity expertise, crucial for protecting OpenAI’s systems from sophisticated threats. Read more.

OpenAI ▷ #ai-discussions (203 messages🔥🔥):

-

OpenAI API Payment Issues Frustrate Users: Multiple users expressed frustration over being unable to make payments through the OpenAI platform due to a "too many requests" error. OpenAI support suggested waiting 2-3 days and provided some troubleshooting steps, but users were still dissatisfied with the delay affecting their application functionality.

-

ChatGPT Chat History Concerns: A user expressed concern over losing 18 months of ChatGPT chat history across multiple devices and browsers. Other users suggested clearing cache, using incognito mode, and ensuring account security through 2FA, but the issue persisted, leading to speculation about account compromise.

-

Frustration Over Platform-Specific Releases: A user vented frustration about the delayed release of the ChatGPT Win11 version, compared to its availability on macOS. The sentiment highlighted a perceived neglect of Windows users despite significant investments from Microsoft into OpenAI.

-

Exploring Alternative AI APIs: Members discussed alternatives to OpenAI's API, such as OpenRouter, for those facing issues or seeking backup options. Though some APIs presented compatibility issues, users noted the ease of code transition with tools like OpenRouter.

-

Jailbreak and AI Role-playing Discussions: Members debated the concept of "jailbreaking" AI models, with some arguing it's essentially sophisticated role-playing rather than unlocking hidden capabilities. This stemmed from a broader conversation about the need for safeguards to prevent harmful outputs.

OpenAI ▷ #gpt-4-discussions (26 messages🔥):

- **GPT-4 doesn't learn after training**: Users discussed the misconception that GPT-4 agents can learn after initial training. Clarification was provided that *uploaded files are saved as "knowledge" files* but *do not continually modify the agent’s base knowledge*.

- **Differences between Command R and Command R+**: Members compared the accuracy of results from Command R and Command R+ for a calculation puzzle. Notably, *Command R+ was more accurate*, solving the puzzle correctly at *8 games played*, while Command R concluded with *11 games*.

- **GPT-3.5 Turbo API isn't free**: One user mistakenly believed GPT-3.5 Turbo to be free, but it was clarified that *the API is prepaid* and requires purchasing credits for continued use.

- **Disable GPT-4o tools issue**: A member faced difficulties disabling GPT-4o tools, impacting their important chat. A suggestion was made to customize settings via the menu, but they couldn't find the option due to language barriers.

- **Embedding vectors from OpenAI**: A query was raised about how the *text-embedding-ada-002* generates vector outputs like [-0.015501722691532107, -0.025918880474352136]. The user was interested in whether this process utilizes transformers.

OpenAI ▷ #prompt-engineering (3 messages):

- Struggling with flat shading in DALL-E: A user inquired about generating images in DALL-E without lighting and shadows, specifically aiming for flat shading akin to a 3D model with just the albedo texture. They mentioned trying various methods without success and seeking help with this issue.

OpenAI ▷ #api-discussions (3 messages):

- Create documentation for API: A user suggested the idea to "write documentation for the API and provide it to ChatGPT". This could help improve usability and understanding of the API functions.

- Unique Introduction for GPT-4: Claims of having the "Most Unique Introduction for GPT-4" were made by another user. Specific details of the introduction were not provided in the conversation.

- Achieve 3D flat shading in DALL-E: A user inquired about generating images in DALL-E with "no lighting and shadows, just flat shading". They experienced difficulty achieving this effect and asked for assistance or tips.

HuggingFace ▷ #general (112 messages🔥🔥):

- DiscoPOP leads in preference optimization: A member shared Sakana AI’s method for discovering preference optimization algorithms, highlighting DiscoPOP. This method outperforms other methods like DPO in achieving higher scores while deviating less from the base model, as detailed in their blog post.

- Alternative quantization strategy suggested: Another member suggests an alternative quantization strategy for models, proposing f16 for output and embed tensors while using q5_k or q6_k for others. They argue that quantizing the outputs and embeds to q8 significantly degrades model performance.

- GitHub code review and collaboration: Several messages discussed reviewing and potentially collaborating on various GitHub repositories, including projects like KAN-Stem and ViT-Slim. Users coordinated efforts to review and improve code implementations.

- Seeking help with training and troubleshooting: Multiple users sought assistance with training models on VMs, troubleshooting token invalid errors on iOS, and resolving "loading components" issues in stable diffusion. For these, detailed steps and advice, such as deleting cache and retrying, were provided.

- Speech-to-text and diarization questions: A member requested help with speech-to-text solutions with strong diarization capabilities, mentioning tools like Whisper and pyannote. They sought recommendations for fine-tuning models or finding pre-finetuned solutions for accurate speaker identification.

Links mentioned:

HuggingFace ▷ #today-im-learning (2 messages):

-

NAIRR Pilot Program attracts wide interest: The National Artificial Intelligence Research Resource (NAIRR) Pilot, led by the NSF, seeks proposals for exploratory research grants to demonstrate the program's value. Detailed information and deadlines can be found in the NAIRR Task Force Report and on the NAIRR Pilot NSF site.

-

Help requested for using LLMs in education: A member seeks guidance on how to use Language Learning Models (LLMs) to teach a chapter, specifically for organizing content, determining key points, and providing examples. Any direction or advice on utilizing LLMs for educational purposes is appreciated.

Links mentioned:

HuggingFace ▷ #cool-finds (4 messages):

-

New GitHub playground for OpenAI: A member introduced their new project, the OpenAI playground clone. It's available on GitHub for contributions and experimentation.

-

Hyperbolic KG Embeddings paper shared: A paper on hyperbolic knowledge graph embeddings was shared, emphasizing that the approach "combines hyperbolic reflections and rotations with attention to model complex relational patterns". You can read more in the PDF on arXiv.

-

OpenVLA model highlighted: A paper about the OpenVLA, an Open-Source Vision-Language-Action Model, was shared. The full abstract and author details can be found in the arXiv publication.

-

Introducing QubiCSV for quantum research: A new platform called QubiCSV, aimed at "qubit control storage and visualization" for collaborative quantum research, was introduced. The detailed abstract and experimental HTML view are available on arXiv.

Links mentioned:

HuggingFace ▷ #i-made-this (6 messages):

-

Perceptron Logic Operators Explained: A member shared a blog post on training perceptrons with logical operators, covering fundamental concepts and intricacies. "Basically trying to put my thoughts together while learning."

-

Shadertoy Util Gets Multipass and Physics: A member has been learning about GPUs and integrating multipass support into their Shadertoy utility, creating an interactive physics simulation. They shared a shadertoy example and invited feedback.

-

Open-Source Alternative to Recall AI: A member developed LiveRecall, which records and encrypts everything on your screen for search via natural language, positioning it as a privacy-respecting alternative to Microsoft's Recall AI. They encouraged contributions and highlighted it as a new project in need of support.

-

Research Accessibility Platform Launched: A new platform called PaperTalk was launched to make research more accessible using AI tools.

Links mentioned:

HuggingFace ▷ #reading-group (1 messages):

- Meta's new paper explores pixel-level transformers: A member shared a recent paper release by Meta titled An Image is Worth More Than 16×16 Patches: Exploring Transformers on Individual Pixels. They noted that this new work builds on previous presentations, specifically highlighting the superiority of pixel-level attention demonstrated by the #Terminator work.

HuggingFace ▷ #computer-vision (6 messages):

- Looking for RealNVP experience: A member inquired if anyone had experience working with the RealNVP model. No further discussion followed.

- Java-based image detection request: Another member sought help creating an image detection system that supports both live and custom detection via uploads, specifically in Java. They emphasized their preference for a solution not involving Python.

HuggingFace ▷ #NLP (6 messages):

-

New Model Fine-Tunes to Detect Hallucinations: A member shared a link to a new small language model fine-tune for detecting hallucinations. The shared stats highlight its precision, recall, and F1 score on hallucination detection with an accuracy of 79%.

-

Query on DataTrove Library: A user asked where to ask about the DataTrove library, and they were advised that they could ask their questions in the chat channel.

-

Minhash Deduplication Configuration: A user sought advice on configuring minhash deduplication on a 500GB dataset using 200 CPU cores on a local machine, noting their lack of experience with multiprocessing programming.

-

Sales Calls Analysis Help: Another member sought guidance on analyzing over 100 sales calls to extract insights on key variables influencing purchase decisions. They mentioned using Whisper or Assembly for transcription and GPT for exploratory data analysis (EDA).

Link mentioned: grounded-ai/phi3-hallucination-judge-merge · Hugging Face: no description found

HuggingFace ▷ #diffusion-discussions (13 messages🔥):

-

Git Download Fixes Issues: One user confirmed that a git download solved their problem, with another user expressing hope that it helped.

-

Dreambooth Script Requires Modifications for Flexibility: A member noted that while the pipeline has the flexibility for more complex training scenarios, the basic dreambooth script is not equipped to handle individual captions for SD3 models. Another user highlighted that modifying the script is necessary to achieve these training features.

-

Tokenizers Training Not in Basic Script: Users discussed the possibility of training tokenizers like CLIP and T5, mentioning that it would significantly increase VRAM requirements and is not included in the basic dreambooth script.

-

Low MFU Profiling in Diffusion Models: A user shared profiling data for HDIT and queried the low Model Fraction Utilization (MFU) in diffusion models compared to language models like nanogpt on an A100. They invited theories and insights from others in the community.

-