AI News for 6/25/2024-6/26/2024. We checked 7 subreddits, 384 Twitters and 30 Discords (416 channels, and 3358 messages) for you. Estimated reading time saved (at 200wpm): 327 minutes. You can now tag @smol_ai for AINews discussions!

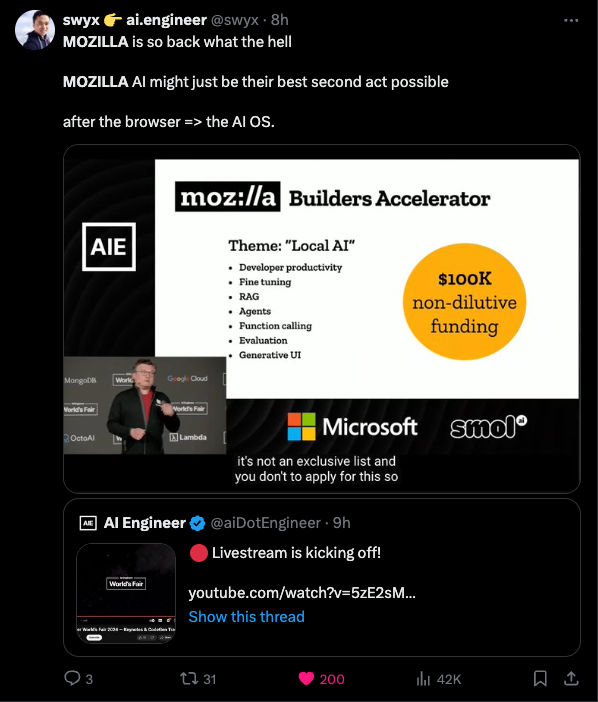

The slow decline of Mozilla's Firefox market share is well known, and after multiple rounds of layoffs its future was very uncertain. However, at the opening keynote of the AIE World's Fair today, they came back swinging:

Very detailed live demos of llamafile with technical explanation from Justine Tunney herself, and Stephen Hood announcing a very welcome second project sqlite-vec that, you guessed it, adds vector search to sqlite.
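
To make "vector search in sqlite" concrete, here is a minimal brute-force sketch using only Python's standard library. It does not use sqlite-vec or its API; the `docs` table, the `l2_distance` SQL function, and the `pack` helper are all hypothetical names chosen for illustration.

```python
import math
import sqlite3
import struct


def pack(vec):
    """Serialize a list of floats into a compact float32 BLOB."""
    return struct.pack(f"{len(vec)}f", *vec)


def l2_distance(blob_a, blob_b):
    """Euclidean distance between two float32 BLOBs of equal length."""
    a = struct.unpack(f"{len(blob_a) // 4}f", blob_a)
    b = struct.unpack(f"{len(blob_b) // 4}f", blob_b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest(db, query, k=2):
    """Return the k document names closest to the query (full table scan)."""
    rows = db.execute(
        "SELECT name FROM docs ORDER BY l2_distance(embedding, ?) LIMIT ?",
        (pack(query), k),
    ).fetchall()
    return [name for (name,) in rows]


db = sqlite3.connect(":memory:")
# Register the Python distance function so SQL queries can call it.
db.create_function("l2_distance", 2, l2_distance)
db.execute("CREATE TABLE docs (name TEXT, embedding BLOB)")
db.executemany(
    "INSERT INTO docs VALUES (?, ?)",
    [
        ("cat", pack([1.0, 0.0, 0.0])),
        ("dog", pack([0.9, 0.1, 0.0])),
        ("car", pack([0.0, 0.0, 1.0])),
    ],
)
print(nearest(db, [1.0, 0.05, 0.0]))
```

A dedicated extension would presumably replace the full scan and the Python callback with native code, which is the gap a project like sqlite-vec aims to fill.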

You can watch the entire talk on the livestream (53mins in):

https://www.youtube.com/watch?v=5zE2sMka620&t=262s

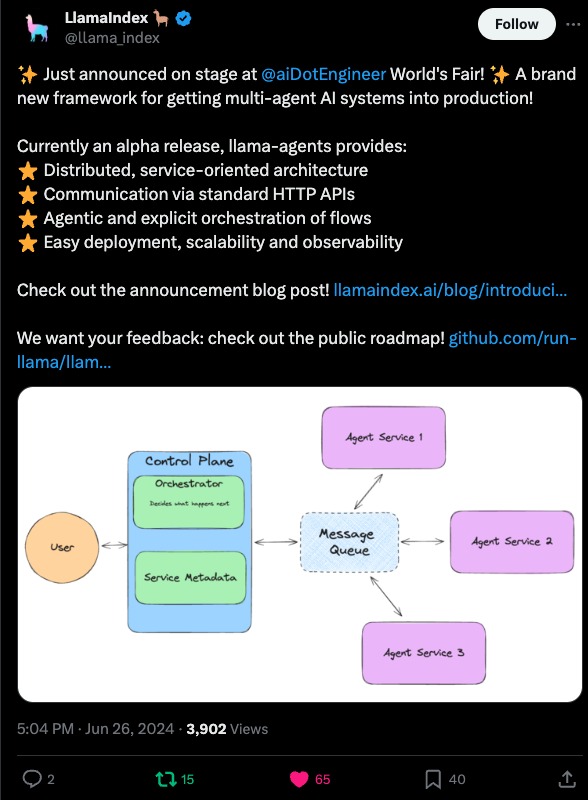

LlamaIndex also closed the day with a notable launch of llama-agents.

Some mea culpas: yesterday we missed calling out Etched's big launch (questioned), and Claude Projects made a splash. The PyTorch documentary launched to crickets (weird?).

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

Anthropic Claude Updates

- New UI features: @alexalbert__ noted new features in the Claude UI, including a sidebar for starring chats, shareable projects with 200K context windows for documents and files, and custom instructions to tailor responses.

- Anthropic announces Projects: @AnthropicAI introduced Projects, which allow organizing chats into shareable knowledge bases with a 200K context window for relevant documents, code, and files. Available for Claude Pro and Team users.

Hardware and Performance Benchmarks

- Etched AI specialized inference chip: @cHHillee shared thoughts on Etched's new inference chip, noting potential misleading marketing claims around silicon efficiency and performance. Benchmarks claim 500k tokens/sec (for multiple users) and replacing 160 H100s with one 8x Sohu server, but may not be normalized for key details. More info needed on benchmark methodology.

- Sohu chip enables 15 agent trajectories/sec: @mathemagic1an highlighted that 500k tokens/sec on Sohu translates to 15 full 30k token agent trajectories per second, emphasizing the importance of building with this compute assumption to avoid being scooped.

- Theoretical GPU inference limits: @Tim_Dettmers shared a model estimating theoretical max of ~300k tokens/sec for 8xB200 NVLink 8-bit inference on 70B Llama, assuming perfect implementations like OpenAI/Anthropic. Suggests Etched benchmarks seem low.
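
The throughput figures in this section can be sanity-checked with simple division. The sketch below only restates the numbers quoted above (500k and ~300k tokens/sec, 30k-token trajectories); the function name is ours, and the result is a ballpark, not a benchmark.

```python
def trajectories_per_second(tokens_per_second, tokens_per_trajectory):
    """How many full agent trajectories fit into one second of throughput."""
    return tokens_per_second / tokens_per_trajectory


# Claimed throughput for an 8x Sohu server, with 30k-token trajectories.
sohu = trajectories_per_second(500_000, 30_000)

# Estimated theoretical maximum for 8xB200 from the item above.
b200 = trajectories_per_second(300_000, 30_000)

print(f"Sohu: {sohu:.1f} trajectories/sec, 8xB200 estimate: {b200:.1f}")
print(f"Ratio: {sohu / b200:.2f}x")
```

The Sohu figure comes out near 16.7, the same ballpark as the "15 trajectories per second" quoted above, and the claimed advantage over the theoretical 8xB200 estimate is well under 2x.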

Open Source Models

- Deepseek Coder v2 beats Gemini: @bindureddy claimed an open-source model beats the latest Gemini on reasoning and code, with more details on open-source progress coming soon. A follow-up provided specifics - Deepseek Coder v2 excels at coding and reasoning, beating GPT-4 variants on math and putting open-source in 3rd behind Anthropic and OpenAI on real-world production use cases.

- Sonnet overpowers GPT-4: @bindureddy shared that Anthropic's Sonnet model continues to overpower GPT-4 variants in testing across workloads, giving a flavor of impressive upcoming models.

Biological AI Breakthroughs

- ESM3 simulates evolution to generate proteins: @ylecun shared news of Evolutionary Scale AI, a startup using a 98B parameter LLM called ESM3 to "program biology". ESM3 simulated 500M years of evolution to generate a novel fluorescent protein. The blog post has more details. ESM3 was developed by former Meta AI researchers.

Emerging AI Trends and Takes

- Data abundance is key to AI progress: @alexandr_wang emphasized that breaking through the "data wall" will require innovation in data abundance. AI models compress their training data, so continuing current progress will depend on new data, not just algorithms.

- Returns on human intelligence post-AGI: @RichardMCNgo predicted that the premium on human genius will increase rather than decrease after AGI, as only the smartest humans will understand what AGIs are doing.

- Terminology for multimodal AI: @RichardMCNgo noted it's becoming weird to call multimodal AI "LLMs" and solicited suggestions for replacement terminology as models expand beyond language.

Memes and Humor

- @Teknium1 joked about OpenAI having trouble removing "waifu features" in GPT-4 voice model updates.

- @arankomatsuzaki made a joke announcement that Noam Shazeer won the Turing Award for pioneering work on AI girlfriends.

- @willdepue joked that "AGI is solved" now that you can search past conversations in chatbots.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

AI Progress

- AI website generation: A new AI system can generate full webpages from just a URL or description input, demonstrating progress in AI content creation capabilities. Video demo.

- OpenAI Voice Mode delay: OpenAI announced a one month delay for the advanced Voice Mode alpha release to improve safety and user experience. Plans for all Plus users to have access in the fall.

- Singularity book release: Ray Kurzweil released a sequel to his 2005 book The Singularity is Near, sparking excitement and discussion about the future of AI.

- AI agents speculation: OpenAI's acquisition of a remote desktop control startup led to speculation about integration with ChatGPT desktop for AI agents.

- AI-generated ads: Toys R Us used OpenAI's Sora to generate a promotional video ad, showcasing AI in marketing.

AI Research

- New optimizer outperforms AdamW: A research paper introduced Adam-mini, a new optimizer that achieves 50% higher throughput than the popular AdamW.

- Matrix multiplication eliminated in LLMs: Researchers demonstrated LLMs that eliminate matrix multiplication, enabling much more efficient models with major implications for running large models on consumer hardware.

- Simulating evolution with AI: EvolutionaryScale announced ESM3, a generative language model that can simulate 500 million years of evolution to generate new functional proteins.
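
For the matrix-multiplication-free item above, one way to see how a dense layer can avoid multiplications is to restrict weights to -1, 0, and +1. The summary does not spell out the researchers' method, so treat this as an illustrative sketch under that assumption, with a hypothetical function name.

```python
def ternary_matvec(weights, x):
    """Matrix-vector product for weights in {-1, 0, +1} using only add/sub."""
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi      # +1 weight: add the input
            elif w == -1:
                acc -= xi      # -1 weight: subtract the input
            # 0 weight: skip the input entirely
        out.append(acc)
    return out


W = [[1, 0, -1], [-1, 1, 1]]
x = [0.5, 2.0, -1.0]
print(ternary_matvec(W, x))
```

Because every weight is a sign or a skip, the inner loop needs no multiplier hardware, which is where the efficiency argument comes from.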

AI Products & Services

- Deepseek Coder V2 math capabilities: Users praised the math capabilities of the Deepseek Coder V2 model, a free model from China that outperforms GPT-4 and Claude.

- AI audiobook narration: An AI-narrated audiobook was well-received, implying audiobook narration is now a solved problem with AI.

- New AI apps and features: Several new AI applications and features were announced, including Tcurtsni, a "reverse-instruct" chat app, Synthesia 2.0, a synthetic media platform, and Projects in Claude for organizing chats and documents.

AI Safety & Ethics

- Rabbit data breach: A security disclosure revealed a data breach in Rabbit where all responses from their R1 model could be downloaded, raising concerns about AI company negligence.

- Hallucination concerns: An opinion post argued the "AI hallucinates" talking point is dangerous as it masks the real risks of rapidly improving AI flooding job markets.

AI Hardware

- AMD MI300X benchmarks: Benchmarks of AMD's new MI300X AI accelerator chip were released and analyzed.

- Sohu AI chip claims: A new Sohu AI chip was announced claiming 500K tokens/sec on a 70B model, with 8 chips equivalent to 160 NVIDIA H100 GPUs.

- MI300X vs H100 comparison: A comparison showed AMD's MI300X is ~5% slower but 46% cheaper with 2.5X the memory of NVIDIA's H100 on the LLaMA-2 70B model.
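
The MI300X comparison above implies a price-performance ratio that is easy to work out. This sketch uses only the quoted figures (about 5% slower, 46% cheaper) and assumes both are relative to the H100 on the same workload.

```python
def relative_perf_per_dollar(relative_speed, relative_price):
    """Performance per dollar relative to a baseline with speed=1, price=1."""
    return relative_speed / relative_price


# ~5% slower -> 0.95x speed; 46% cheaper -> 0.54x price.
ratio = relative_perf_per_dollar(0.95, 0.54)
print(f"MI300X perf per dollar vs H100: {ratio:.2f}x")
```

Under those assumptions the MI300X delivers roughly 1.76x the throughput per dollar, before accounting for software ecosystem differences.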

AI Art

- A8R8 v0.7.0 release: A new version of the A8R8 Stable Diffusion UI was released with Comfy integration for regional prompting and other updates.

- ComfyUI new features: A detailed post reviewed new features in the ComfyUI Stable Diffusion environment like samplers, schedulers, and CFG implementations.

- Magnific AI relighting tool: Results from Magnific AI's new relighting tool were compared to a user's workflow, finding it lacking in quality.

- SD model comparisons: Different Stable Diffusion model sizes were compared on generating specified body positions, with performance noted as "not good."

Other Notable News

- Stability AI leadership changes: Stability AI announced a new CEO, board members, funding round, and commitment to open source while expanding enterprise tools.

- AI traffic analysis: A post proposed ways to quantify bandwidth usage of major AI systems, estimating AI is still a small part of overall internet traffic.

- Politician shares false ChatGPT stats: A news article reported a Canadian politician shared inaccurate statistics generated by ChatGPT, highlighting risks of using unverified AI outputs.

- Open-source AI agent for on-call: Merlinn, an open-source AI Slack bot to assist on-call engineers, was announced.

- Living skin robots: BBC reported on research into covering robots with living human skin to make them more lifelike.

- Gene therapy progress: A tweet discussed gene therapies progressing from rare to common diseases.

- Google AI event: News that Google will reveal new AI tech and Pixel phones at an August event.

- Tempering AI release expectations: A post advised taking AI product release dates with a grain of salt due to R&D uncertainty.

- AI ending amateurism: An opinion piece argued generative AI will allow everyone to produce professional-quality work.

AI Discord Recap

A summary of Summaries of Summaries

Claude 3 Sonnet

1. 🔥 LLM Advancements and Benchmarking

- Llama 3 from Meta tops leaderboards, outperforming GPT-4-Turbo and Claude 3 Opus per ChatbotArena.

- New models: Granite-8B-Code-Instruct for coding, DeepSeek-V2 with 236B parameters.

- Skepticism around certain benchmarks, calls for credible sources to set realistic evaluation standards.

2. 🤖 Optimizing LLM Inference and Training

- ZeRO++ promises 4x reduced communication overhead on GPUs.

- vAttention dynamically manages KV-cache memory for efficient inference.

- QServe uses W4A8KV4 quantization to boost cloud serving on GPUs.

- Consistency LLMs explore parallel token decoding for lower latency.
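
To give a sense of why KV-cache memory management (as in the vAttention item above) matters, here is a rough size estimate. The layer count, KV-head count, and head dimension are assumptions matching a Llama-3-70B-style model with grouped-query attention; they are not taken from the summaries above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2):
    """Approximate KV-cache size: keys plus values for every layer and token."""
    # The leading 2 accounts for one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


# Assumed shape: 80 layers, 8 KV heads, head dimension 128, fp16 values.
per_token = kv_cache_bytes(80, 8, 128, seq_len=1)
full_context = kv_cache_bytes(80, 8, 128, seq_len=8192)

print(f"{per_token / 1024:.0f} KiB per token")
print(f"{full_context / 2**30:.1f} GiB for one 8192-token sequence")
```

At about 320 KiB per token, a single long sequence already takes gigabytes, so how that memory is allocated and reclaimed has a direct effect on how many requests a GPU can serve at once.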

3. 🌐 Open-Source AI Frameworks and Community Efforts

- Axolotl supports diverse formats for instruction tuning and pre-training.

- LlamaIndex powers a course on building agentic RAG systems.

- RefuelLLM-2 claims best for "unsexy data tasks".

- Modular teases Mojo's Python integration and AI extensions.

4. 🖼 Multimodal AI and Generative Modeling Innovations

- Idefics2 8B Chatty for elevated chat interactions.

- CodeGemma 1.1 7B refines coding abilities.

- Phi 3 brings powerful chatbots to browsers via WebGPU.

- Combining Pixart Sigma + SDXL + PAG aims for DALLE-3-level outputs with potential fine-tuning.

- Open-source IC-Light for image relighting techniques.

5. Stable Artisan for AI Media Creation in Discord

- Stability AI launched Stable Artisan, a Discord bot integrating Stable Diffusion 3, Stable Video Diffusion, and Stable Image Core for media generation within Discord.

- Sparked discussions around SD3's open-source status and Artisan's introduction as a paid API service.

Claude 3.5 Sonnet

LLMs Level Up in Performance and Efficiency:

- New models like IBM's Granite-8B-Code-Instruct and RefuelLLM-2 are pushing boundaries in code instruction and data tasks. Communities across Discord channels are discussing these advancements and their implications.

- Optimization techniques such as Adam-mini are gaining traction, promising 45-50% memory reduction compared to AdamW while maintaining performance. This has sparked discussions in the OpenAccess AI Collective and CUDA MODE Discords.

- The vAttention system for efficient KV-cache memory management is being explored as an alternative to PagedAttention, highlighting the ongoing focus on inference optimization across AI communities.

Open-Source AI Flourishes with Community-Driven Tools:

- Axolotl is gaining popularity for its support of diverse dataset formats in LLM training, discussed in both the OpenAccess AI Collective and HuggingFace Discords.

- The LlamaIndex framework is powering new courses on building agentic RAG systems, generating excitement in the LlamaIndex and general AI development communities.

- Mojo's potential for Python integration and AI extensions is a hot topic in the Modular Discord, with discussions on its implications for AI development workflows.

Multimodal AI Pushes Creative Boundaries:

- The combination of Pixart Sigma, SDXL, and PAG is being explored to achieve DALLE-3 level outputs, as discussed in the Stability.ai and general AI communities.

- Stable Artisan, a new Discord bot from Stability AI, is integrating models like Stable Diffusion 3 and Stable Video Diffusion, sparking conversations about AI-powered media creation across multiple Discord channels.

- The open-source IC-Light project for image relighting is gaining attention in computer vision circles, showcasing the ongoing innovation in image manipulation techniques.

AI Hardware Race Heats Up:

- AMD's Radeon Instinct MI300X is challenging Nvidia's dominance in the GPU compute market, despite software ecosystem challenges. This has been a topic of discussion in the CUDA MODE and hardware-focused Discord channels.

- The announcement of Etched's Sohu AI chip has sparked debates across AI hardware communities about its potential to outperform GPUs in running transformer models, with claims of replacing multiple H100 GPUs.

- Discussions about specialized AI chips versus general-purpose GPUs are ongoing, with community members in various Discord servers debating the future direction of AI hardware acceleration.

Claude 3 Opus

1. LLM Performance and Benchmarking:

- Discussions about the performance of various LLMs, such as Llama 3 from Meta outperforming models like GPT-4-Turbo and Claude 3 Opus on leaderboards like ChatbotArena.

- New models like IBM's Granite-8B-Code-Instruct and DeepSeek-V2 showcasing advancements in instruction following and parameter count.

- Concerns about the credibility of certain benchmarks and the need for realistic LLM assessment standards from reputable sources.

2. Hardware Advancements and Optimization Techniques:

- Techniques like ZeRO++ and vAttention being explored to optimize GPU memory usage and reduce communication overhead during LLM training and inference.

- Advancements in quantization, such as QServe introducing W4A8KV4 quantization for improved GPU performance in cloud-based LLM serving.

- Discussions about the potential of specialized AI chips like Etched's Sohu and comparisons with GPU performance for running transformer models.

3. Open-Source Frameworks and Community Efforts:

- Open-source frameworks like Axolotl and LlamaIndex supporting diverse dataset formats and enabling the development of agentic RAG systems.

- The release of open-source models like RefuelLLM-2, claiming to be the best LLM for "unsexy data tasks."

- Community efforts to integrate AI capabilities into platforms like Discord, with bots such as Stable Artisan from Stability AI for media generation and editing.

4. Multimodal AI and Generative Models:

- New models focusing on specific tasks, such as Idefics2 8B Chatty for elevated chat interactions and CodeGemma 1.1 7B for coding abilities.

- Advancements in browser-based AI chatbots, like the Phi 3 model utilizing WebGPU for powerful interactions.

- Efforts to combine techniques like Pixart Sigma, SDXL, and PAG to achieve DALLE-3-level outputs in generative models.

- Open-source projects like IC-Light focusing on specific tasks such as image relighting.

GPT4O (gpt-4o-2024-05-13)

Model Performance and Benchmarks:

- Llama3 70B Models Show Promise: New open LLM leaderboards hosted on 300 H100 GPUs have Qwen 72B leading, though bigger models don't always equate to better performance. Analyses highlighted differences in scope between training vs. inference benchmarks.

- Solving Grade School Arithmetic highlights skepticism where data leakage in large LLMs results in misleadingly high benchmarks despite incomplete learning. Calls for credible assessments were noted.

Training, Optimization and Implementation Issues:

- Push for Better Optimizers: Adam-mini optimizer offers equivalent performance to AdamW but reduces memory use by 45-50%. This optimizer simplifies storage by reducing the number of learning rates per parameter.

- Memory Management in High-Context Models: Efforts to load large models, such as Llama3 70B or Hermes, on consumer-grade GPUs are hindered by significant OOM errors, driving discussions on effective GPU VRAM utilization.
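
The Adam-mini item above describes saving memory by keeping fewer learning rates than parameters. A simplified sketch of that idea: keep the first moment per parameter, but share one second-moment value across each block of parameters. The block partition, hyperparameters, and function name here are assumptions for illustration; this is not the paper's implementation or its partitioning rule.

```python
import math


def adam_mini_step(params, grads, state, blocks, lr=0.05, b1=0.9, b2=0.999, eps=1e-8):
    """One Adam-style update with a single second-moment scalar per block."""
    state["t"] += 1
    t = state["t"]
    for b, idxs in enumerate(blocks):
        # Shared second moment: mean of squared gradients within the block.
        mean_sq = sum(grads[i] ** 2 for i in idxs) / len(idxs)
        state["v"][b] = b2 * state["v"][b] + (1 - b2) * mean_sq
        v_hat = state["v"][b] / (1 - b2 ** t)
        for i in idxs:
            # The first moment is still tracked per parameter.
            state["m"][i] = b1 * state["m"][i] + (1 - b1) * grads[i]
            m_hat = state["m"][i] / (1 - b1 ** t)
            params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params


def loss(p):
    """Toy objective: sum of squares, minimized at zero."""
    return sum(x * x for x in p)


params = [1.0, -2.0, 0.5, 3.0]
blocks = [[0, 1], [2, 3]]  # hypothetical partition into two blocks
state = {"t": 0, "m": [0.0] * len(params), "v": [0.0] * len(blocks)}

loss_before = loss(params)
for _ in range(50):
    grads = [2 * x for x in params]
    adam_mini_step(params, grads, state, blocks)
loss_after = loss(params)
print(loss_before, loss_after)
```

Here the optimizer stores two second-moment values instead of four. With blocks containing many parameters each, second-moment storage becomes negligible, which would be consistent with the reported 45-50% reduction in optimizer memory.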

AI Ethics and Community Debates:

- Ethics of AI Data Use: Debates in LAION Discord stressed the controversial inclusion of NSFW content in datasets, balancing ethical concerns with the motivation for unrestricted data access.

- Model Poisoning Concerns: Discussions in LAION focused on ethical implications and potential model poisoning, where controversial techniques in training and dataset usage are encouraged without broader consideration of long-term impacts.

Specialized AI Hardware Trends:

- Etched's Sohu Chips Boast 10x Performance: Etched’s new transformer ASIC chips claim to outperform Nvidia GPUs significantly, with considerable financial backing. However, practical adaptability and inflexibility concerns were discussed within CUDA MODE.

- AMD's MI300X Challenges Nvidia: AMD's MI300X seeks to dethrone Nvidia in GPU compute markets, despite lagging behind Nvidia's CUDA ecosystem.

AI Application Integration:

- Custom GPT Apps on Hugging Face Flourish: Growing interest in custom GPT-based applications, citing niche tasks like Japanese sentence explanations, remains strong. Collaborative efforts in the community have driven the creation of resources and toolkits for ease of implementation.

- AI-Assisted Tools Expand Academic Reach: The new GPA Saver platform leverages AI for academic assistance, indicating growing integration of AI in streamlined educational tools. Community discussions about improving AI-driven functionalities highlighted potential and current constraints.

PART 1: High level Discord summaries

OpenAI Discord

Quick Access with a Shortcut: The ChatGPT desktop app for macOS is now available, featuring a quick-access Option + Space shortcut for seamless integration with emails and images.

Voice Mode Hiccup: The anticipated advanced Voice Mode for ChatGPT has been postponed by a month to ensure quality before alpha testing; expect more capabilities like emotion detection and non-verbal cues in the fall.

OpenAI vs Anthropic's Heavyweights: Discussions are heating up over GPT agents' inability to learn post-training and over Anthropic's Claude gaining an edge on ChatGPT through technical features such as larger token context windows and a rumored MoE setup.

Customization Craze in AI: Enthusiasts are creating custom GPT applications using resources like Hugging Face, with a particular interest in niche tasks like explaining Japanese sentences, as well as concerns about current limitations in OpenAI's model updates and feature rollout.

GPT-4 Desktop App and Performance Threads: Users noted the limitation of the new macOS desktop app to Apple Silicon chips and shared mixed reviews on GPT-4's performance, expressing desire for Windows app support and improvements in response times.

HuggingFace Discord

- RAG Under the Microscope: A discussion centered on the use of Retrieval-Augmented Generation (RAG) techniques highlighted consideration for managing document length with SSMs like Mamba and using BM25 for keyword-oriented retrieval. A GitHub resource related to BM25 can be found here.

- Interactive Hand Gestures: Two separate contexts highlighted a Python-based "Hand Gesture Media Player Controller," shared via a YouTube demonstration, indicating burgeoning interest in applied computer vision to control interfaces.

- PAG Boosts 'Diffusers' Library: An integration of Perturbed Attention Guidance (PAG) into the `diffusers` library promises enhanced image generation, as announced in HuggingFace's core announcements, thanks to a community contribution.

- Cracking Knowledge Distillation for Specific Languages: Queries around knowledge distillation were prominent, with one member proposing a distilled multilingual model for a single language and another recommending SpeechBrain for tackling the task.

- LLMs and Dataset Quality in Focus: Alongside advances such as the Phi-3-Mini-128K-Instruct model by Microsoft, the community spotlighted the importance of dataset quality. Concurrently, concerns related to data leakage in LLMs were addressed through papers citing the issue here and here.

- Clamor for AI-driven Tools: From a request for a seamless AI API development platform, referenced through a feedback survey, to the challenge of identifying data in handwritten tables, there's a clear demand for AI-powered solutions that streamline tasks and inject efficiency into workflows.
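
The BM25 keyword retrieval mentioned in the RAG discussion above can be sketched in a few lines. This is a minimal version of a common BM25 variant (smoothed IDF, `k1` and `b` at typical defaults) with naive whitespace tokenization; it is not the code from the linked GitHub resource, and the sample documents are made up.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with a basic BM25 formula."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(d) for d in tokenized) / n
    # Document frequency: in how many documents each term appears.
    df = Counter(term for d in tokenized for term in set(d))
    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Term frequency saturates via k1; b normalizes for document length.
            norm = tf[term] + k1 * (1 - b + b * len(d) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "mamba is a state space model",
    "bm25 ranks documents by keyword overlap",
    "keyword search with bm25 is fast",
]
scores = bm25_scores("bm25 keyword", docs)
print(scores)
```

Documents that share no terms with the query score zero, which is why BM25 is often paired with embedding-based retrieval in RAG pipelines rather than used alone.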

LAION Discord

- AI Ethics Take Center Stage: Conversations arose about the ethics of AI training, where a member expressed concerns about active encouragement of model poisoning. Another member argued that the offered solution to the AIW+ problem is incorrect because it overlooks certain familial relationships, which makes the problem ambiguous.

- Music Generation with AI Hits a High Note: Discussions involved using RateYourMusic ID to generate songs and lyrics, with an individual confirming its success and describing the outcomes as "hilarious."

- The Great NSFW Content Debate: A debate surged regarding whether NSFW content should be included in datasets, highlighting the dichotomy between moral concerns and the argument against excessively cautious model safety measures.

- GPU Showdown and Practicality: Members exchanged insights on the trade-offs between A6000s, 3090s, and P40 GPUs, noting differences in VRAM, cooling requirements, and model efficiency when applied to AI training.

- ASIC Chips Enter the Transformer Arena: An emerging topic was Etched's Sohu, a specialized chip for transformer models. Its touted advantages sparked discussions on its practicality and adaptability to various AI models, contrasting with skepticism concerning its potential inflexibility.

Eleuther Discord

- ICML 2024 Papers in the Spotlight: EleutherAI researchers gear up for ICML 2024 with papers addressing classifier-free guidance and open foundation model impacts. Another study delves into memorization in language models, examining issues like privacy and generalization.

- Multimodal Marvels and Gatherings Galore: Huggingface's leaderboard emerges as a handy tool for those seeking top-notch multimodal models; meanwhile, ICML's Vienna meet-up attracts a cluster of enthusiastic plans. The hybrid model Goldfinch also became a part of the exchange, merging Llama with Finch B2 layers for enhanced performance.

- Papers Prompting Peers: Discussion in the #research channel flared around papers ranging from comparative evaluations of Synquid to the application of Hopfield Networks in transformers. Members dissected topics ranging from multimodal learning efficiencies to experimental approaches in generalization and grokking.

- Return of the Hopfields: Members offered insights on self-attention in neural networks by framing it as (hetero)associative memory, bolstered by references to continuous modern Hopfield Networks and their implementation as single-step attention.

- Sparse and Smart: Sparse Autoencoders (SAEs) take the stage for their aptitude in unearthing linear features from overcomplete bases, as touted in LessWrong posts. Additionally, a noteworthy mention was a paper on multilingual LLM safety, demonstrating cross-lingual detoxification via direct preference optimization (DPO).
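
The link between modern Hopfield Networks and attention mentioned above can be shown in a small example. In the continuous modern Hopfield formulation, one update step replaces the query with a softmax-weighted average of the stored patterns, which has the same form as a single attention step. The patterns, the `beta` value, and the function names below are illustrative choices.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_retrieve(patterns, query, beta=8.0):
    """One update step: softmax(beta * similarities) weighted sum of patterns."""
    sims = [beta * sum(p * q for p, q in zip(pat, query)) for pat in patterns]
    weights = softmax(sims)
    dim = len(query)
    return [sum(w * pat[j] for w, pat in zip(weights, patterns)) for j in range(dim)]


# Stored patterns act as both keys and values (auto-associative case).
patterns = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
noisy = [0.9, 0.2, 0.1]
retrieved = hopfield_retrieve(patterns, noisy)
print([round(v, 4) for v in retrieved])
```

A single step pulls the noisy query almost entirely onto the closest stored pattern. Using one set of patterns for the similarities and a different set for the weighted sum gives the hetero-associative case, which matches the key/value split in attention.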

CUDA MODE Discord

AMD's Radeon MI300X Takes on Nvidia:

The new AMD Radeon Instinct MI300X is positioned to challenge Nvidia's dominant status in the GPU compute market despite AMD's software ecosystem ROCm lagging behind Nvidia's CUDA, as detailed in an article on Chips and Cheese.

ASIC Chip Ambitions:

Etched's announcement of the Transformer ASIC chips aims to outpace GPUs in running AI models more efficiently, with significant investment including a $120 million series A funding round supported by Bryan Johnson, raising discussions about the future role of specialized AI chips.

Optimization Tweaks and Triton Queries:

Engineering conversations revolve around a proposed Adam-mini optimizer that operates with 45-50% less memory, with code available on GitHub, and community assistance sought for a pow function addition in python.triton.language.core as shown in this Triton issue.

PyTorch Celebrates with Documentary:

The "PyTorch Documentary Virtual Premiere: Live Stream" has garnered attention for its look at PyTorch’s evolution and its community. Users reposted it repeatedly and expressed their excitement with goat emojis. It is watchable here.

Intel Pursues PyTorch Integration for GPUs:

Building momentum for Intel GPU (XPU) support in stock PyTorch continues with an Intel PyTorch team's RFC on GitHub, signaling Intel’s commitment to becoming an active participant in the deep learning hardware space.

Discussions of AI Infrastructure and Practices:

Community dialogue featured topics like learning rate scaling, update clipping with insights from an AdamW paper, infrastructural choices between AMD and Nvidia builds, and the intrigue around the Sohu ASIC chip's promises, impacting the efficacy of large transformer models.

Perplexity AI Discord

Perplexed by Perplexity API: Engineers discussed intermittent 5xx errors with Perplexity AI's API, highlighting the need for better transparency via a status page. There were also debates on API filters and undocumented features, with some users probing the existence of a search domain filter and citation date filters.

In Search of Better Search: The Perplexity Pro focus search faced criticism for limitations, while comparisons to ChatGPT noted Perplexity's new agentic search capabilities but criticized its tendency to hallucinate in summarizations.

Claude Leverages Context: The guild buzzed about Claude 3.5's 32k token context window for Perplexity Pro users, with Android support confirmed. Users showed a clear preference for the full 200k token window offered by Claude Pro.

Innovation Insight with Denis Yarats: The CTO of Perplexity AI dissected AI's innovation in a YouTube video, discussing how it revolutionizes search quality. In a related conversation, researchers presented a new method that could change the game by removing matrix multiplication from language model computations.

Hot Topics and Searches in Sharing Space: The community shared numerous Perplexity AI searches and pages including evidence of Titan's missing waves, China's lunar endeavors, and a study on how gravity affects perception, encouraging others to explore these curated searches on their platform.

Latent Space Discord

- AI World's Fair Watch Party Launch: Enthusiasm stirred up for hosting a watch party for AI Engineer World’s Fair, livestreamed here, spotlighting cutting-edge keynotes and code tracks.

- Premiere Night for PyTorch Fans: Anticipation builds around the PyTorch Documentary Virtual Premiere, highlighting the evolution and impact of the project with commentary from its founders and key contributors.

- ChatGPT's Voice Update Muted: A delayed release of ChatGPT's Voice Mode, due to technical difficulties with voice features, causes a stir following a tweet by Teknium.

- Bee Computer Buzzes with Intelligence: Attendees at an AI Engineer event buzz over new AI wearable tech from Bee Computer, touted for its in-depth personal data understanding and proactive task lists.

- Neural Visuals Exceed Expectations: A breakthrough in neuroscience captures community interest with the reconstruction of visual experiences from mouse cortex activity, demonstrating incredible neuroimaging strides.

LM Studio Discord

- Tech Troubles and Tips in LM Studio: Engineers reported errors with LM Studio (0.2.25), including an Exit code: -1073740791 when loading models. For Hermes 2 Theta Llama-3 70B, users with RTX 3060ti faced "Out of Memory" issues and considered alternatives like NousResearch's 8b. Issues were also noted when running Llama 3 70B on Apple's M Chip due to different quant types and settings.

- RAG Gets the Spotlight: A detailed discussion on retrieval-augmented generation (RAG) took place, highlighting NVIDIA's blog post on RAG's capability to enhance information generation accuracy with external data.

- Scam Warnings and Security Tips: Users noted the presence of scam links to a Russian site impersonating Steam and reported these for moderator action. There's awareness in the community regarding phishing attacks and the importance of securing personal and project data.

- Hardware Conversations Heat Up: A completed build using 8x P40 GPUs was mentioned, sparking further discussions on server power management involving a 200 amp circuit and VRAM reporting accuracy in LM Studio for multi-GPU setups. The noise produced by home server setups was also humorously likened to a jet engine.

- Innovative Ideas and SDK Expo: Members shared ideas ranging from using an LLM as a game master in a sci-fi role-playing game to solving poor performance with larger context windows in token prediction. There's a guide to building Discord bots with the SDK here and questions regarding extracting data from the LM Studio server using Python.

- Uploading Blocks in Open Interpreter: There's frustration over the inability to upload documents or images directly into the open interpreter terminal, limiting users in interfacing with AI models and use cases.
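
The "Out of Memory" reports earlier in this section follow from a back-of-the-envelope estimate of weight size. The sketch below counts only model weights (no KV cache or activations) and assumes an 8 GB card, which is the VRAM on an RTX 3060 Ti; the quantization levels are illustrative.

```python
def weight_gigabytes(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


VRAM_GB = 8  # assumed card size (RTX 3060 Ti)

for name, size_b, bits in [("70B @ 16-bit", 70, 16), ("70B @ 4-bit", 70, 4), ("8B @ 4-bit", 8, 4)]:
    gb = weight_gigabytes(size_b, bits)
    verdict = "fits" if gb <= VRAM_GB else "does not fit"
    print(f"{name}: {gb:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Even at 4 bits per weight, a 70B model needs about 35 GB for weights alone, so falling back to an 8B model is the realistic option on that card unless most layers are offloaded to system RAM.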

Modular (Mojo 🔥) Discord

- Plotting a Path with Mojo Data Types: Engineers are experimenting with Mojo data types for direct plotting without conversion to Numpy, utilizing libraries like Mojo-SDL for SDL2 bindings. The community is mulling over the desired features for a Mojo charting library, with focus areas ranging from high-level interfaces to interactive charts and integration with data formats like Arrow.

- Vega IR for Versatile Visualization: The need for interactivity in data visualization was underscored, with the Vega specification being proposed as an Intermediate Representation (IR) to bridge web and native rendering. The conversation touched on the unique approaches of libraries like UW's Mosaic and mainstream ones like D3, Altair, and Plotly.

- WSL as a Windows Gateway to Mojo: Mojo has been confirmed to work on Windows via the Windows Subsystem for Linux (WSL), with native support anticipated by year's end. Ease of use with Visual Studio Code and Linux directories was a highlight.

- IQ vs. Intelligence Debate Heats Up: The community engaged in a lively debate about the nature of intelligence, with the ARC test questioned for its human-centric pattern recognition tasks. Some users view AI excelling at IQ tests as not indicative of true intelligence, while the concept of consciousness versus recall sparked further philosophical discussion.

- Compile-Time Quirks and Nightly Builds: Multiple issues with the Mojo compiler were aired, ranging from reported bugs in type checking and handling of boolean expressions to the handling of `List` and `Tensor` at compile time. Encouragement to report issues, even if resolved in nightly builds, was echoed across the threads. Specific commits, nightly build updates, and suggestions for referencing immutable static lifetime variables were also discussed, rallying the community around collaborative debugging and improvement.

Interconnects (Nathan Lambert) Discord

-

LLM Leaderboard Bragging Rights Questioned: Clement Delangue's announcement of a new open LLM leaderboard boasted the use of 300 H100 GPUs to rerun MMLU-pro evaluations, prompting sarcasm and criticism about the necessity of such computing power and the effectiveness of larger models.

-

API Security Gone Awry at RabbitCode: Rabbitude's discovery of hardcoded API keys, including ones for ElevenLabs and others, has left services like Azure and Google Maps vulnerable, causing concerns over unauthorized data access and speculation about the misuse of ElevenLabs credits.

-

Delay in ChatGPT's Advanced Voice Mode: OpenAI has postponed the release of ChatGPT’s advanced Voice Mode for Plus subscribers till fall, aiming to enhance content detection and the user experience, as shared via OpenAI's Twitter.

-

Murmurs of Imbue’s Sudden Success: Imbue's sudden $200M fundraise drew skepticism from members, who explored the company's unclear history and compared its trajectory with the strategies of Scale AI and its subsidiaries for data annotation and PhD recruitment for remote AI projects.

-

Music Industry’s AI Transformation: Udio's statement on AI's potential to revolutionize the music industry clashed with the RIAA's concerns, asserting AI will become essential for music creation despite industry pushback.

Stability.ai (Stable Diffusion) Discord

-

Challenging Stability AI to Step Up: Discussions point to growing concerns about Stability AI’s approach with Stable Diffusion 3 (SD3), stressing the need for uncensored models and updated licenses to retain long-term viability. A more practical real-world application beyond novelty creations is requested by the community.

-

Cost-Effective GPU Strategies Discussed: The comparison of GPU rental costs reveals Vast as a more economical option for running a 3090 compared to Runpod, with prices cited as low as 30 cents an hour.

-

Debate: Community Drive vs. Corporate Backup: There's an active debate on the balance between open-source initiatives and corporate influence, with some members arguing for community support as crucial and others citing Linux's success with enterprise backing as a valid path.

-

Optimizing Builds for Machine Learning: Members are sharing hardware recommendations for effective Stable Diffusion setups, with a consensus forming around the Nvidia 4090 for its performance benefit, potentially favoring dual 4090s over higher VRAM single GPUs for cost savings.

-

Nostalgia Over ICQ and SDXL Hurdles: The shutdown of the legacy messaging service ICQ triggered nostalgic exchanges, while the community also reported challenges in running SDXL, particularly for those experiencing "cuda out of memory" errors due to insufficient VRAM, seeking advice on command-line solutions.

Nous Research AI Discord

-

Introducing the Prompt Engineering Toolkit: An open-source Prompt Engineering Toolkit was shared for use with Sonnet 3.5, designed to assist with creating better prompts for AI applications.

-

Skepticism Breeds Amidst Model Performance: A demonstration of Microsoft's new raw text data augmentation model on Genstruct prompted doubts about its efficacy, showing results that seemed off-topic.

-

AI Chip Performance Heats Up Debate: The new "Sohu" AI chip sparked discussions about its potential for high-performance inference tasks, linking to Gergely Orosz's post which suggests OpenAI doesn't believe AGI is imminent despite advancing hardware.

-

70B Model Toolkit Launched by Imbue AI: Imbue AI released a toolkit for a 70B model with resources including 11 NLP benchmarks, a code-focused reasoning benchmark, and a hyperparameter optimizer, found at Imbue's introductory page.

-

Embracing the Whimsical AI: A post from a user featured AI-generated content in meme format by Claude from Anthropic, reflecting on Claude's explanation of complex topics and its humorous take on not experiencing weather or existential crises.

LangChain AI Discord

- Streamlining AI Conversations: Engineers highlighted the `.stream()` method from `langchain_community.chat_models` for iterating through LangChain responses, while others discussed integrating Zep for long-term memory in AI and contemplated direct `BytesIO` PDF handling in LangChain without temp files.

- Visualization Quest in LangChain: Discussion around live visualizing agents' thoughts in Streamlit touched on using `StreamlitCallback` but also identified a gap in managing streaming responses without callbacks.

- Troubleshooting the Unseen: Inquiries were made about LangSmith's failure to trace execution despite proper environmental setup, with a suggestion to check trace quotas.

-

Extending Containerized Testing: A community member contributed Ollama support to testcontainers-python, facilitating LLM endpoint testing, as indicated in their GitHub issue and pull request.

-

Cognitive Crafts and Publications: A Medium article on few-shot prompting with tool calling in Langchain was shared, alongside a YouTube video exploring the ARC AGI challenges titled "Claude 3.5 struggle too?! The $Million dollar challenge".

LlamaIndex Discord

-

Chatbots Seeking Contextual Clarity: An engineer inquired about how to effectively retrieve context directly from a chat response within the LlamaIndex chatbot framework, sharing implementation details and the challenges encountered.

-

Pull Request Review on the Horizon: A member shared a GitHub PR for review, aimed at adding query filtering functionalities to the Neo4J Database in LlamaIndex, and another member acknowledged the need to address the backlog.

-

Silencing Superfluous Notifications: There was a discussion on how to suppress unnecessary notifications about missing machine learning libraries in the Openailike class, with the clarification that such messages are not errors.

-

Tuning SQL Queries with LLMs: Dialogue among users highlighted the benefits of fine-tuning language models for enhanced precision in SQL queries when using a RAG SQL layer, suggesting better performance with quality training data.

- Balancing Hybrid Searches: Questions about hybrid search implementations in LlamaIndex have been addressed, focusing on adjusting the `alpha` parameter to balance metadata and text relevance in search results.

- Boosting RAG with LlamaIndex: An article was shared highlighting ways to build optimized Retrieval-Augmented Generation systems with LlamaIndex and DSPy, providing insights and practical steps for AI engineers.

-

Open Source Contribution Perks: A call was made for feedback on an open-source project, Emerging-AI/ENOVA, for enhancing AI deployment, monitoring, and auto-scaling, with an incentive of a $50 gift card.
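
The `alpha` weighting mentioned in the hybrid search item above can be illustrated with a small sketch. It assumes the common convention that `alpha = 1.0` means purely vector-based (dense) scoring and `alpha = 0.0` means purely keyword-based (sparse) scoring. The function names, the example scores, and the convex-combination formula are illustrative assumptions, not LlamaIndex's implementation; actual behavior depends on the vector store in use.

```python
def hybrid_score(dense: float, sparse: float, alpha: float) -> float:
    """Blend a dense (vector) score and a sparse (keyword) score.

    Assumed convention: alpha=1.0 -> dense only, alpha=0.0 -> sparse only.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return alpha * dense + (1.0 - alpha) * sparse


# Hypothetical, already-normalized (dense, sparse) scores for two documents.
docs = {"doc_a": (0.9, 0.2), "doc_b": (0.4, 0.8)}


def rank(alpha: float) -> list[str]:
    """Return document ids ordered by blended score, best first."""
    return sorted(docs, key=lambda d: hybrid_score(*docs[d], alpha), reverse=True)


if __name__ == "__main__":
    for alpha in (0.2, 0.5, 0.8):
        print(alpha, rank(alpha))
```

Sweeping `alpha` over a few values on a small labeled query set is one simple way to see where the ranking flips between the two signals.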

OpenInterpreter Discord

- Claude-3.5-Sonnet Steps into the Spotlight: The latest Anthropic model is officially named `claude-3-5-sonnet-20240620`, putting an end to name confusion among members.

- MoonDream's Vision Limitation Acknowledged: While there's interest in a MoonDream-based vision model for OpenInterpreter (OI), current conversation confirms it's not compatible with OI.

- Multiline Input Quirks and Vision Command Errors: Technical issues arose with `-ml` for multiline inputs and the `interpreter --os --vision` command, with one user verifying their API key but facing errors, and another member reported a ban from attempting to directly drop files into the terminal.

- 01: OI's Voice Interface, Not for Sale Everywhere: 01, as the voice interface for OI, can't be bought in Spain; enthusiasts are redirected to an open-source dev kit on GitHub for DIY alternatives.

-

Constructing Your Own 01: Tutorials for DIY assembly of 01 from the open-source kit will be proliferating, including one planned for July, hinting at the community's commitment to ensuring wider access beyond commercial sale limitations.

Cohere Discord

Curiosity About Cohere's Scholars Program: One member inquired about the status of the scholars program for the current year, but no additional information or discussion followed on this topic.

Billable Preamble Tokens in the Spotlight: A user highlighted an experiment involving preamble tokens for API calls, bringing up a cost-cutting loophole that could avoid charges by exploiting non-billable preamble usage.

Designing with Rust for LLMs: An announcement was made about the release of Rig, a Rust library for creating LLM-driven applications, with an invitation to developers to engage in an incentivized feedback program to explore and review the library.

Ethical Considerations Surface in AI Usage: Concerns were brought up regarding SpicyChat AI, an NSFW bot hosting service, potentially violating Cohere's CC-BY-NC license through profit-generating use, coupled with the claim of circumventing this via OpenRouter.

Learning Event on 1Bit LLMs by Hongyu Wang: An online talk titled The Era of 1Bit LLMs hosted by Hongyu Wang was announced with an invitation extended to attend through a provided Google Meet link.

OpenAccess AI Collective (axolotl) Discord

-

Adam Optimizer Slims Down: Engineers discussed an arXiv paper introducing Adam-mini, highlighting a memory footprint 45% to 50% smaller than AdamW's. It achieves this by using fewer learning rates, leveraging parameter block learning inspired by the Hessian structure of Transformers.

- Training Pitfalls and CUDA Quandaries: One engineer sought advice on implementing output text masking during training, akin to `train_on_input`, while another raised an issue with CUDA errors, suggesting enabling `CUDA_LAUNCH_BLOCKING=1` for identifying illegal memory access during model training.

-

Gradient Accumulation—Friend or Foe?: The impact of increasing gradient accumulation was hotly debated; some believe it may shortcut training by running the optimizer less often, others worry it could lead to slower steps and more training time.

-

Cosine Schedules and QDora Quests: Questions arose about creating a cosine learning rate scheduler with a non-zero minimum on the Hugging Face platform, and excitement was evident over a pull request enabling QDora in PEFT.

-

Narrative Engines and Mistral Mysteries: Storiagl, a platform for building stories with custom LLMs, was showcased, while another engineer reported a repetitive text generation issue with Mistral7B despite high temperature settings and asked for solutions.
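
The cosine schedule question above (a cosine decay that bottoms out at a non-zero minimum) comes down to a short formula. The sketch below is framework-agnostic plain Python, not the Hugging Face scheduler API; the function name, the parameter names, and the example learning rates are assumptions chosen for illustration.

```python
import math


def cosine_lr(step: int, total_steps: int, max_lr: float, min_lr: float) -> float:
    """Cosine decay from max_lr at step 0 down to min_lr at total_steps."""
    # Clamp progress so steps past the end stay at min_lr.
    progress = min(max(step / total_steps, 0.0), 1.0)
    # cosine goes from 1.0 (start) to 0.0 (end).
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return min_lr + (max_lr - min_lr) * cosine


if __name__ == "__main__":
    for step in (0, 250, 500, 750, 1000):
        print(step, cosine_lr(step, total_steps=1000, max_lr=1e-3, min_lr=1e-5))
```

One way to use this with PyTorch would be to return `cosine_lr(...) / max_lr` from the function passed to `torch.optim.lr_scheduler.LambdaLR`, since that scheduler multiplies the optimizer's initial learning rate by the returned factor.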

LLM Finetuning (Hamel + Dan) Discord

Prompting Takes the Cake in Language Learning: Researchers, including Eline Visser, have shown that prompting a large language model (LLM) outperforms fine-tuning when learning the Kalamang language from a single grammar book. The findings, indicating that 'prompting wins', are detailed in a tweet by Jack Morris and further elaborated in an academic paper.

Catch the AI Engineer World’s Fair Online: The AI Engineer World's Fair 2024 is being streamed live, focusing on keynotes and the CodeGen Track, with access available on YouTube; more specifics are provided on Twitter.

Claude Contest Calls for Creatives: The June 2024 Build with Claude contest has been announced, inviting engineers to demonstrate their expertise with Claude, as outlined in the official guidelines.

Credit Where Credit is Due: An individual offered assistance with a credit form issue, asking to be directly messaged with the related email address to resolve the matter efficiently.

Model Offloading Techniques Debated: The community has observed that DeepSpeed (DS) seems to have more effective fine-grained offloading strategies compared to FairScale's Fully Sharded Data Parallel (FSDP). Additionally, the utility of these offloading strategies with Llama 70B is under consideration by members seeking to optimize settings.

Mozilla AI Discord

-

Mozilla's Builders Program Ticks Clock: Members are reminded to submit their applications for the Mozilla Builders Program before the July 8th early application deadline. For support and additional information, check the Mozilla Builders Program page.

-

'90s Nostalgia via Firefox and llamafile: Firefox has integrated llamafile as an HTTP proxy, allowing users to venture through LLM weights in a retro web experience; a demonstration video is available on YouTube.

-

Create Your Own Chat Universe: Users can create immersive chat scenarios, fusing llamafile with Haystack and Character Codex, through a shared notebook which is accessible here.

-

Cleansing CUDA Clutter in Notebooks: To keep Jupyter notebooks pristine, it's suggested to address CUDA warnings by using the utility from Haystack.

-

NVIDIA's Stock Sent on a Rollercoaster: Following a talk at AIEWF, NVIDIA's market cap fell dramatically, triggering various analyses from outlets like MarketWatch and Barron's about the catalyst behind the company's financial performance.

tinygrad (George Hotz) Discord

- Tinygrad Explores FPGA Acceleration: There's chatter about tinygrad leveraging FPGAs as a backend, with George Hotz hinting at a potential accelerator design for implementation.

- Groq Alumni Launch Positron for High-Efficiency AI: Ex-Groq engineers introduced Positron, targeting the AI hardware market with devices like Atlas Transformer Inference Server, boasting a 10x performance boost per dollar over competitors like DGX-H100.

- FPGA's Role in Tailored AI with HDL: Discussion centered on the future of FPGAs equipped with DSP blocks and HBM, which could allow for the creation of model-specific HDL, although it was noted that Positron's approach is generic and not tied to a specific FPGA brand.

- PyTorch's Impact on AI Celebrated in Documentary: A documentary on YouTube highlighting PyTorch's development and its influence on AI research and tooling has been shared with the community.

AI Stack Devs (Yoko Li) Discord

-

Angry.penguin Ascends to Mod Throne: User angry.penguin was promoted to moderator to tackle the guild's spam problem, volunteering with a proactive approach and immediately cleaning up the existing spam. Yoko Li entrusted angry.penguin with these new responsibilities and spam control measures.

-

Spam No More: Newly-minted moderator angry.penguin announced the successful implementation of anti-spam measures, ensuring the guild's channels are now fortified against disruptive spam attacks. Members may notice a cleaner and more focused discussion environment moving forward.

DiscoResearch Discord

-

German Encoders Go Live on Hugging Face: AI engineers might be enticed by the newly released German Semantic V3 and V3b encoders, available on Hugging Face. V3 targets knowledge-based applications, while V3b emphasizes high performance with innovative features including Matryoshka Embeddings and 8k token context capability.

-

Finetuning Steps for German Encoders Without GGUF: Despite inquiries, the German V3b encoder does not currently have a gguf format; however, for those interested in finetuning, it is recommended to use UKPLab's sentence-transformers finetuning scripts.

-

Possibility of GGUF for Encoders Empowered by Examples: In the wake of confusion, a member clarified by comparing with Ollama, establishing that encoders like German V3 can indeed be adapted to gguf formats which may involve using dual embedders for enhanced performance.
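
The Matryoshka Embeddings feature mentioned for V3b is usually used by keeping only the first `k` dimensions of an embedding and re-normalizing, trading some accuracy for smaller vectors. The sketch below shows that truncation step in plain Python; the helper name and the toy vector are assumptions for illustration, and which truncation sizes the German V3b model actually supports is not stated here.

```python
import math


def truncate_embedding(vec: list[float], dim: int) -> list[float]:
    """Keep the first `dim` components and re-normalize to unit length."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    # Leave an all-zero prefix untouched to avoid dividing by zero.
    return [x / norm for x in head] if norm > 0 else head


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two unit-length vectors (a plain dot product)."""
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    # Toy 3-dimensional "embedding"; real encoders emit hundreds of dimensions.
    full = [3.0, 4.0, 12.0]
    small = truncate_embedding(full, 2)
    print(small, cosine(small, small))
```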

OpenRouter (Alex Atallah) Discord

-

New AI Player in Town: OpenRouter has introduced the 01-ai/yi-large model, a new language model specialized in knowledge search, data classification, human-like chatbots, and customer service; the model supports multilingual capabilities.

-

Parameter Snafu Resolved: The Recommended Parameters tab for the model pages on OpenRouter had data display issues, which have been fixed, ensuring engineers now see accurate configuration options.

-

AI Meets Academia: The newly launched GPA Saver leverages AI to offer academic assistance and includes tools like a chat assistant, rapid quiz solver, and more; early adopters get a discount using the code BETA.

-

Easing the Integration Experience: Thanks were expressed to OpenRouter for streamlining the process of AI model integration, which was instrumental in the creation of the GPA Saver platform.

The LLM Perf Enthusiasts AI Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The MLOps @Chipro Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The Datasette - LLM (@SimonW) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The Torchtune Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The YAIG (a16z Infra) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

OpenAI ▷ #annnouncements (2 messages):

-

ChatGPT desktop app for macOS releases: The ChatGPT desktop app for macOS is now available to all users. Get quicker access to ChatGPT with the Option + Space shortcut, enabling seamless chats about emails, screenshots, and more.

-

Advanced Voice Mode delayed but incoming: The rollout of the advanced Voice Mode, initially planned for late June, has been delayed by a month to ensure quality. This mode, capable of understanding emotions and non-verbal cues, will start alpha testing with a small group before expanding to all Plus users in the fall, with updates on video and screen sharing capabilities to follow.

OpenAI ▷ #ai-discussions (388 messages🔥🔥):

-

GPTs Agents and OpenAI's Progress: Members expressed frustration over GPTs agents not learning new information after initial training. They pointed out that while OpenAI's models excel in initial training, continuous improvements are held back by excessive regulations.

-

Anthropic's Claude Rises in Popularity: Discussions highlighted how Anthropic's Claude 3.5 Sonnet has gained traction, with claims it offers better performance in coding and larger context windows compared to OpenAI's models. One user speculated on the efficiency of its architecture, possibly employing a MoE setup.

-

Model Performance Comparisons: Users discussed the upper hand Anthropic's Claude has over OpenAI's ChatGPT, especially in token context windows and refusal rates. While some argued Claude is more censored, others noted Claude's technical improvements, like larger token windows and less lag in responses.

-

Open Source and Custom Models: There was interest in custom GPTs and synthetic datasets tailored for niche applications, such as Japanese sentence explanations. Users shared resources like Hugging Face datasets and local inference tools like LM Studio for further customization.

-

Criticism and Future Prospects of OpenAI: Members voiced concerns about the delayed rollout of OpenAI's voice features and the limited benefits of the ChatGPT Plus subscription. They hope for advancements in context windows and other features to match competitors like Google's Gemini and Anthropic's Claude.

OpenAI ▷ #gpt-4-discussions (21 messages🔥):

- Windows Beats Mac in Desktop App Demand: "Wouldn't Windows desktop app get way more used than mac desktop app?" sparked a discussion, with another user stating "yes, thats bs releasing it for mac."

- LaTeX Formatting and GPT-4o Performance Concerns: A member explained they get the best results specifying LaTeX format. They also noted performance issues with GPT-4o, citing failure in logic and historical research tasks.

- Mac Desktop App Limited to Apple Silicon: The discussion around the new macOS desktop app clarified it is only available for Apple Silicon (M1 or better), with no plans to support Intel Macs.

- TTS Model New Voices Inquiry: A user asked if the new voices would be available through the TTS model but did not receive a direct response.

- Slow GPT-4o Responses Frustrate Users: Members inquired and complained about the slowness of GPT-4o, wondering if there was an underlying problem.

OpenAI ▷ #prompt-engineering (1 messages):

- Context Matters for AI Errors: One member noted that understanding AI mistakes depends greatly on the topic, knowledge content, and context. They suggested that reviewing what specific errors the AI is making could be helpful.

OpenAI ▷ #api-discussions (1 messages):

- Dependence on AI's understanding of context and knowledge: The effectiveness of the AI's responses depends heavily on the topic and the context of the knowledge content. One member noted, "It'd be helpful to see what the AI is getting wrong."

HuggingFace ▷ #announcements (1 messages):

- Argilla 2.0 enhances AI dataset creation: The release of Argilla 2.0 introduces a unified framework for feedback collection, a new Python SDK, flexible UI for data annotation, and updated documentation. These features aim to assist AI builders in creating high-quality datasets more efficiently.

- Microsoft's Florence model impresses: Microsoft launched Florence, a vision model capable of multiple tasks like captioning and OCR. The models are MIT licensed and provide high quality despite their smaller sizes compared to much larger models.

- Instruction pre-training by Microsoft: Microsoft's Instruction Pre-Training can enhance LLM pretraining with instruction-response pairs, leading to comparable performance of a Llama 3 8B model to a 70B model. This method is demonstrated in a Gradio space.

- Marlin TGI features boost GPTQ models: The next Hugging Face TGI update will include Marlin features, supporting fast Marlin matrix multiplication for GPTQ-quantized models. This is achieved with the help of Neural Magic's Marlin kernel.

- Ethics and Society newsletter underscores data quality: The Ethics and Society newsletter stresses the importance of data quality. It features collaborative efforts from various members and provides insights into maintaining high-quality data standards.

HuggingFace ▷ #general (245 messages🔥🔥):

- Struggles with VSCode Coding Assistants: A user experienced issues with Codiumate crashing mid-coding task, leading to frustration with coding assistants for VSCode. They expressed a need for a reliable solution that examines files and generates fixes without failing.

- AI API Platform for Testing and Development: A member proposed building an AI-driven platform to automate testing and API code generation, sharing a survey to gather feedback. They seek full-stack developers and prompt engineers to contribute to the project.

- Phi-3-Mini-128K-Instruct Model Highlights: The Phi-3-Mini-128K-Instruct model, a lightweight and state-of-the-art open model by Microsoft, has been showcased on HuggingFace. It supports longer token contexts and undergoes advanced post-training processes to enhance instruction following and safety.

- Mozilla Builders Competition and Collaboration: Members discussed teaming up for the Mozilla Builders competition, which requires creating AI projects that run locally. Relevant resources and guidelines were shared for interested participants.

- Optimizing Stable Diffusion Inference: Users discussed methods to speed up Stable Diffusion inference, with suggestions including using the Accelerate library and the stable-fast framework for significant performance improvements.

HuggingFace ▷ #today-im-learning (3 messages):

-

Naive Bayes Algorithm on Kaggle: A user shared a link to a Kaggle code notebook that explores the Naive Bayes algorithm. The link points to a resource for studying this machine learning algorithm.

-

InfiniAttention Reproduction Progress: A user is working on a 95% reproduction of the InfiniAttention paper. They mentioned needing to fix the vanishing gradient issue and to run one final experiment to complete their work.

Link mentioned: Naive Bayes Algorithm: Explore and run machine learning code with Kaggle Notebooks | Using data from No attached data sources

HuggingFace ▷ #cool-finds (13 messages🔥):

-

Optimized RAG Systems with LlamaIndex and DSPy: An article on Medium provides a deep dive into building optimized Retrieval-Augmented Generation (RAG) systems using LlamaIndex and DSPy. Building Optimized RAG Systems

-

AI Canon: Curated Modern AI Resources: A blog post from a16z shares a curated list of resources dubbed the "AI Canon," useful for both beginners and experts in AI. It includes foundational papers, practical guides, and technical resources. AI Canon

-

Hand Gesture Media Player Controller Demo: A YouTube video demo showcases a Python-based hand gesture media player controller project. "Check out this cool project I've been working on - a Hand Gesture Media Player Controller using Python!" Hand Gesture Media Player Controller Demo

-

Protein Design Advances Noted in Nature: A Nature article discusses advances in protein design, noting challenges with traditional physics-based methods and highlighting the breakthroughs achieved with AlphaFold2. Protein Design

-

Few-Shot Prompting with Tool Calling in Langchain: An article discusses using few-shot prompting with tool calling in Langchain for improved AI model performance. Few-Shot Prompting

HuggingFace ▷ #i-made-this (66 messages🔥🔥):

-

Exploring Custom Byte Encoding in LLMs: In a detailed technical discussion, members explored the use of custom byte encoding for LLMs, predicting sequences in UTF-32. The conversation included potential issues with floating point accuracy and robustness, with one member expressing skepticism about its effectiveness but remaining curious about the results.

-

Hand Gesture Media Player Controller Demo: A member shared a YouTube video demonstrating a hand gesture-based media player controller using Python.

-

Bioinformatics Tools and Projects: Members shared various bioinformatics tools and projects, including PCALipids, a tool for PCA and related analyses of lipid motions, and other GitHub projects such as embedprepro-lib and PixUP-Upscale.

-

New Text Analysis CLI Tool Released: A member announced the release of a new text analysis command-line tool called embedprepro, designed for generating text embeddings, clustering, and visualization, aimed at researchers and developers.

-

Dataset for Optimizing LLMs for RLHF: A member released the Tasksource-DPO-pairs dataset on Hugging Face (Tasksource). This dataset is tailored for optimizing LLMs with Reinforcement Learning from Human Feedback (RLHF) and focuses on fine-grained linguistic reasoning tasks.
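
For readers unfamiliar with the UTF-32 framing in the byte-encoding discussion above, the sketch below shows what a UTF-32 byte sequence looks like: every code point takes exactly four bytes. It only illustrates the encoding itself using Python's standard codecs; how the members fed these bytes to a model was not described, and the helper names are assumptions.

```python
def to_utf32_bytes(text: str) -> list[int]:
    """Encode text as little-endian UTF-32 (no BOM) and return the byte values."""
    return list(text.encode("utf-32-le"))


def from_utf32_bytes(values: list[int]) -> str:
    """Inverse of to_utf32_bytes."""
    return bytes(values).decode("utf-32-le")


if __name__ == "__main__":
    encoded = to_utf32_bytes("Hi")
    print(encoded)  # 4 bytes per character
    print(from_utf32_bytes(encoded))
```

The fixed width is the appeal for sequence prediction (position maps directly to code point), at the cost of many zero bytes for mostly ASCII text.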

HuggingFace ▷ #reading-group (15 messages🔥):

-

Thank Alex for recommending the work: A member expresses gratitude for Alex’s post, which brought attention to a particular work that no one had recommended before.

-

Discussion on data leakage in LLMs: Eleuther AI mentioned papers discussing benchmark dataset leakage in LLMs. They shared links to one paper investigating the phenomenon and another paper addressing detection of benchmark data leakage.

-

Terminator architecture introduced: A new architecture called "Terminator" was shared from a Twitter link and further detailed in a GitHub repository. This architecture notably lacks residuals, dot product attention, and normalization.

-

Saturation in LLM leaderboards: Highlighting a concern, a member shared a HuggingFace link to a blog about the saturation in LLM leaderboards, indicating community attention to the issue. The link to the blog post: HuggingFace LLM Leaderboard Blog.

HuggingFace ▷ #core-announcements (1 messages):

- Perturbed Attention Guidance now in Diffusers: HuggingFace has announced support for Perturbed Attention Guidance (PAG) in their `diffusers` library, enhancing image generation quality without additional training. Check out the update and kudos to the contributor who led the integration.

Link mentioned: PAG is now supported in core 🤗 · Issue #8704 · huggingface/diffusers: Hello folks! #7944 introduced support for Perturbed Attention Guidance (PAG) which enhances image generation quality training-free. Generated Image without PAG Generated Image with PAG Check out th...

HuggingFace ▷ #computer-vision (3 messages):

- Evaluation Error with `detection_util` in Folder: Someone pointed out that the `evaluate` function has issues locating `detection_util` if it is in a folder within a space. This causes problems during evaluation as the function cannot find the required files.

- Hand Gesture Media Player Controller Demo: A user shared a YouTube video demonstrating a "Hand Gesture Media Player Controller" made with Python. They encouraged others to check out their cool project.

- Developing a Handwritten Table Data Pipeline: Someone requested assistance in creating a pipeline for identifying data in handwritten tables. They mentioned trying GPT-Vision, but it did not meet their expectations.

Link mentioned: Hand Gesture Media Player Controller Demo: Hey everyone! 👋 Check out this cool project I've been working on - a Hand Gesture Media Player Controller using Python! 🎮🖐️So , I've built a Python-based ...

HuggingFace ▷ #NLP (5 messages):

-

Seek advice on multilingual model distillation: A member is looking for suggestions on knowledge distillation of a multilingual model for a single language.

-

Named entity recognition using RAG: A member seeks advice on using Retrieval-Augmented Generation (RAG) for recognizing named entities in long documents and is considering an SSM like Mamba to manage document length. Another member suggests BM25 for keyword-oriented search and provides a GitHub link for more information.

-

Developing a pipeline for handwritten tables: A member wants to create a pipeline for identifying data in handwritten tables and finds that GPT-Vision is not meeting expectations. They asked for advice on more effective methods.

-

Experiences with LLM knowledge editing sought: A query about hands-on experiences with LLM knowledge editing and its deployment for simpler tasks like translation was raised.

Link mentioned: semantic-search-with-amazon-opensearch/Module 1 - Difference between BM25 similarity and Semantic similarity.ipynb at main · aws-samples/semantic-search-with-amazon-opensearch: Contribute to aws-samples/semantic-search-with-amazon-opensearch development by creating an account on GitHub.
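
The BM25 suggestion above refers to a keyword-based ranking function. The sketch below is a minimal Okapi BM25 scorer in plain Python using whitespace tokenization and the commonly used defaults `k1=1.5` and `b=0.75`; the function name and the sample documents are assumptions for illustration, and it is not taken from the linked notebook.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with Okapi BM25."""
    if not docs:
        return []
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(d) for d in tokenized) / n_docs
    # Document frequency: number of documents containing each term.
    df = Counter(term for d in tokenized for term in set(d))

    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            # Length normalization: longer-than-average documents are penalized.
            denom = tf[term] + k1 * (1.0 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1.0) / denom
        scores.append(score)
    return scores


if __name__ == "__main__":
    corpus = [
        "Alice Smith joined Acme Corp in Berlin",
        "The weather in Berlin was mild",
        "Quarterly revenue grew at Acme Corp",
    ]
    print(bm25_scores("acme corp", corpus))
```

For entity lookup in long documents, scoring fixed-size chunks this way gives an exact-match signal that can complement embedding-based retrieval.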

HuggingFace ▷ #diffusion-discussions (3 messages):

- Exploring Knowledge Distillation for Multilingual Models: A member inquired about performing knowledge distillation for a multilingual model focusing on a single language. Another member suggested trying SpeechBrain on HuggingFace as a possible solution.

LAION ▷ #general (327 messages🔥🔥):

- Generate Music with AI on RateYourMusic: Members discussed generating songs and lyrics of any musician by using IDs from the RateYourMusic website. One member tried this method and confirmed its effectiveness, calling it "hilarious".

- Open Model Initiative Controversy: There's a significant discussion about LAION's withdrawal from the Open Model Initiative and their involvement in datasets with problematic content. A member speculated that LAION might have been excluded for sharing non-synthetic datasets, but others believed it was a voluntary decision.

- Synthetic vs. Non-Synthetic Data Debate: Several members debated the inclusion of NSFW (Not Safe For Work) content in datasets for training AI models. Concerns included moral and PR implications, with some advocating for excluding NSFW content and others critical of heavy-handed safety measures on models like SD3.

- GPU and Workstation Discussions: Members compared different GPUs, including A6000s, 3090s, and P40s for AI training, discussing the trade-offs in VRAM, cost, and performance. They also talked about practical aspects like on-system cooling, fitting models in single VRAM vs. sharding, and specific models' efficiency and compatibility with certain GPUs.

- ASIC Chips for Transformers: There's an intriguing discussion about Etched's Sohu, a specialized chip for transformer models claimed to be faster and cheaper than GPUs. Some members doubted its practicality due to its apparent inflexibility, which might limit its use to only specific types of AI models.


LAION ▷ #research (7 messages):

- Debate on Poisoning Models: A member expressed concern that someone "actively encouraged poisoning models," indicating controversies in model training ethics.

- AIW+ Problem Harder but Solvable: Another member clarified that the AIW+ problem, although more complex than simple AIW, is still a common-sense problem and solvable. They suggested checking the paper’s supplementary material for the solution.

- Caution Against Manual Evaluation: It was advised against manual evaluation, as it can be highly misleading due to inconsistent results from repeated trials. The recommendation was to use systematic prompt variations and conduct at least 20 trials per prompt variation.

- Disagreement Over AIW+ Solution: A member disputed the provided solution for the AIW+ problem, stating it was incorrect and ambiguous due to unaccounted familial relationships. They also remarked that model agreement with this solution does not eliminate the ambiguity.
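The advice above (systematic prompt variations, at least 20 trials each) can be sketched as a tiny harness. This is only an illustration: `query_model` is a hypothetical stand-in that simulates a noisy model with a seeded random generator, and the prompts and expected answer are made up.

```python
import random


def query_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a real LLM call (assumption, not a real API).

    Simulates a model that answers correctly about 60% of the time.
    """
    return "correct" if rng.random() < 0.6 else "wrong"


def evaluate(prompt_variations, expected, trials=20, seed=0):
    """Return the fraction of correct answers for each prompt variation."""
    rng = random.Random(seed)
    rates = {}
    for prompt in prompt_variations:
        # Repeat the same prompt many times instead of trusting one sample.
        hits = sum(query_model(prompt, rng) == expected for _ in range(trials))
        rates[prompt] = hits / trials
    return rates


if __name__ == "__main__":
    variations = ["variation A", "variation B", "variation C"]
    for prompt, rate in evaluate(variations, expected="correct").items():
        print(f"{prompt}: {rate:.0%} correct over 20 trials")
```

Reporting a rate per variation, rather than a single pass/fail impression, is what makes the inconsistency across repeated trials visible.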

Eleuther ▷ #announcements (2 messages):

- EleutherAI at ICML 2024: EleutherAI members shared their excitement about presenting multiple papers at ICML 2024, covering a range of topics from classifier-free guidance to the societal impacts of open foundation models. Links to their papers, such as Stay on topic with Classifier-Free Guidance and Neural Networks Learn Statistics of Increasing Complexity, were provided to keep the community informed.

- Understanding Memorization in LMs: A member highlighted their work on better understanding memorization in language models, introducing a taxonomy to differentiate between recitation, reconstruction, and recollection. They shared a preprint and a Twitter thread to elaborate on their findings and its implications for copyright, privacy, and generalization.

Link mentioned: Tweet from Naomi Saphra (@nsaphra): Humans don't just "memorize". We recite poetry drilled in school. We reconstruct code snippets from more general knowledge. We recollect episodes from life. Why treat memorization in LMs u...

Eleuther ▷ #general (98 messages🔥🔥):

- Finding the best multimodal models: A member inquired about locating top-performing multimodal models, specifically Image+Text to Text models, and shared a link to Huggingface for reference. This helped others looking for similar resources.

- ICML Social Thread kicks off: A social thread for ICML in Vienna, Austria, was started to coordinate meetups and events. Members discussed logistics and planned gatherings, showing enthusiastic participation.

- Goldfinch model details shared: Information about the hybrid Goldfinch model, featuring an improved Llama-style transformer layer paired with Finch B2, was shared. Members exchanged links, DM'd further details, and discussed technical specifics.

- Documenting OOD input handling in LLMs: A paper concerning how neural network predictions behave with out-of-distribution (OOD) inputs was discussed, specifically this arxiv link. This sparked a discussion on whether LLMs behave similarly and the implications for Bayesian DL.

- Request for vision model recommendations: A member requested suggestions for vision models capable of performing RAG on PDFs with image data. The conversation unfortunately did not yield any specific model recommendations.


Eleuther ▷ #research (114 messages🔥🔥):

- Comparative Evaluation of Synquid: Members debated the merits of the paper Synquid, highlighting well-thought-out experiments but expressing mixed feelings about certain missing baselines like "no activation function." One member noted, "It will also score lower on their complexity measure at random initialization," emphasizing the importance of this baseline in their analysis.

- NRS Framework in Paper Critique: The discussion inspected the hypothesis testing in a paper on neural network initialization and inductive biases. One member stated, "The complexity at initialization correlating with downstream performance on tasks of similar complexity," while others critiqued the reinterpretations of existing work, specifically their stance on random sampling of low-loss solutions.

- Implementation of Multimodal Metrics and Experimental Validation: Members analyzed a paper on JEST, emphasizing joint example selection for data curation in multimodal contrastive learning. They discussed the significant efficiency gains claimed in the paper, noting the approach surpasses state-of-the-art models with much fewer iterations and computational requirements.

- Homomorphic Encryption and LLMs: Members briefly touched on the speculative nature of using homomorphic encryption for large language models, as discussed in a Zama AI blog post. The discussion noted skepticism about the practical advancements in homomorphic encryption for real-time applications.

- Generalization and Grokking in Transformers: Members debated whether grokking and generalization are being confused in a paper, pointing out that "grokking refers specifically to a sudden shift in eval performance after a long flat period of training." They critiqued the paper's methods and the historical context of generalization research.


Eleuther ▷ #scaling-laws (15 messages🔥):

- Self-Attention confirmed as (hetero)associative memory model: One member clarified that self-attention functions as a (hetero)associative memory model, pointing out the connection to associative memory frameworks like Hopfield networks. They referenced the paper Hopfield Networks is All You Need to support this claim.

- LeCun's perspective on Transformers: A discussion referenced Yann LeCun's description of transformers as "associative memories." This is tied to the idea that self-attention mechanisms have memory model characteristics.

- Hopfield Networks paper sparks interest: The paper Hopfield Networks is All You Need generated significant discussions, with mentions of its authors and the ideas it presents about modern continuous Hopfield networks (MCHNs) relating closely to self-attention.

- Criticism of "Is All You Need" papers with exceptions: One member expressed disdain for papers titled "Is All You Need" but acknowledged that some, like Hopfield Networks is All You Need, present exceptional value. The user cited its innovative treatment of grokking and overall contributions to the field.

- Hopfield layers as single-step attention: Clarification on how Hopfield layers work in practice within neural networks was provided, noting that memorization happens during pre-training and retrieval occurs in the forward pass. Each retrieval is treated as a single update step of a Hopfield network, which is how the idea applies in practice to self-attention mechanisms.
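To make the "single-step retrieval" point concrete, here is a minimal sketch of one update of a modern continuous Hopfield network written as softmax attention. The function name, the toy keys/values, and the choice of `beta` are illustrative assumptions; using separate keys and values is what makes the lookup heteroassociative.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_retrieve(query, keys, values, beta):
    """One Hopfield update step: softmax(beta * K q) applied to V.

    With beta = 1/sqrt(d) this has the same form as attention for one query.
    """
    scores = [beta * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]


if __name__ == "__main__":
    # Toy stored associations (hypothetical): key pattern -> value pattern.
    keys = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    noisy_query = [0.9, 0.1, 0.0]  # closest to the first key
    print(hopfield_retrieve(noisy_query, keys, values, beta=20.0))
```

A large `beta` makes the softmax nearly one-hot, so the noisy query retrieves essentially the value stored with its nearest key in a single step; smaller `beta` blends several stored values.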


Eleuther ▷ #interpretability-general (4 messages):

- SAEs identified to recover linear features: A member shared a research report showing that "SAEs recover linear features from an overcomplete basis," and highlighted that using "a single layer autoencoder with an L1 penalty on hidden activations" can identify features beyond minimizing loss. They linked to the LessWrong post and acknowledged feedback from other researchers.

- Interest in toy models for SAE testing: The same member expressed interest in exploring toy models to test SAEs, inspired by another post emphasizing the importance of feature geometry beyond the superposition hypothesis. They shared another LessWrong post on the subject, which discusses structural information in neural networks' feature vectors.

- Excitement for multilinguality and safety work: A member shared a Twitter link about new work on multilinguality, safety, and mechanistic interpretation, highlighting that "DPO training in only English can detoxify LLM in many other languages." They also provided a link to the associated research paper on arXiv.
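For readers unfamiliar with the setup quoted in the SAE item above ("a single layer autoencoder with an L1 penalty on hidden activations"), the objective can be sketched as follows. This is a toy, forward-pass-only illustration with hand-picked weights; the names, shapes, and coefficient are assumptions and not taken from the linked report.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def sae_loss(x, w_enc, b_enc, w_dec, l1_coeff):
    """Reconstruction error plus L1 sparsity penalty on hidden activations.

    Returns (loss, hidden) so the sparsity of `hidden` can be inspected.
    """
    pre = [a + b for a, b in zip(matvec(w_enc, x), b_enc)]
    hidden = [max(0.0, a) for a in pre]          # ReLU encoder
    x_hat = matvec(w_dec, hidden)                # linear decoder
    recon = sum((xi - xh) ** 2 for xi, xh in zip(x, x_hat))
    sparsity = l1_coeff * sum(abs(h) for h in hidden)
    return recon + sparsity, hidden


if __name__ == "__main__":
    # Overcomplete toy example: 2 input dims, 3 hidden features (hypothetical).
    w_enc = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
    b_enc = [0.0, 0.0, 0.0]
    w_dec = [[1.0, 0.0, -1.0], [0.0, 1.0, -1.0]]
    loss, hidden = sae_loss([1.0, 0.0], w_enc, b_enc, w_dec, l1_coeff=0.1)
    print(loss, hidden)
```

The L1 term is what pushes most hidden units to zero for any given input, so each active unit can be read as a candidate feature direction.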


CUDA MODE ▷ #general (16 messages🔥):

- AMD MI300X Challenges Nvidia's GPU Dominance: A post about AMD's Radeon Instinct MI300X highlights its aim to compete with Nvidia's GPU compute market lead. While AMD's software ecosystem ROCm trails Nvidia's CUDA, the MI300X represents an effort to overcome this hardware gap independently. Full post.

- Etched Introduces Transformer ASIC: Etched's new Transformer ASIC chips claim to run AI models significantly faster and cheaper than GPUs by etching transformer architecture directly into silicon. The chip promises applications like real-time voice agents and the capability to run trillion-parameter models.

- Skepticism Around Etched's ASIC Claims: Users expressed doubts about the practical advantages of Etched's ASICs, particularly whether etching just the architecture rather than also including the weights would deliver the promised performance gains. The discussion highlighted the competition and rapid advancement in AI hardware.

- Etched Secures Major Investment: Bryan Johnson announced his excitement to invest in Etched's $120 million series A, citing the company's claim that their Sohu chip can run AI models 10x cheaper and replace 160 Nvidia H100 GPUs with one 8xSohu server. Tweet link.

- Future of AI Chips Debated: Users debated the future role of specialized AI chips like ASICs compared to GPUs, with mentions of the industry's direction towards dedicated hardware accelerators. The potential for rapid changes in model architectures and the flexibility of tensor cores were highlighted.


CUDA MODE ▷ #triton (1 messages):

- New user seeks help on Triton issue: A new member introduced themselves and shared an issue they opened in the Triton repo. They are looking for pointers on how to add a `pow` function in `python.triton.language.core` and provided a link to the issue.

Link mentioned: How to add a pow function in python.triton.language.core? · Issue #4190 · triton-lang/triton: I tried to use pow operation in a triton.jitted function as: output = x + y**3 ^ However got AttributeError("'tensor' object has no attribute 'pow'"). In file python/trit...

CUDA MODE ▷ #torch (6 messages):

- PyTorch Documentary Premieres: Members shared a YouTube link to the "PyTorch Documentary Virtual Premiere: Live Stream" featuring key players from the project's early days to the present. Multiple users posted the link repeatedly to emphasize its importance.

- Goat Emoji Hype: A member reacted to the PyTorch Documentary link with a goat emoji (🐐), symbolizing excitement and hype. The reaction was noted and mirrored by another member to highlight this sentiment.


CUDA MODE ▷ #algorithms (1 messages):

- Adam-mini optimizer reduces memory usage: Adam-mini is proposed as an optimizer that offers equivalent or better performance than AdamW while using 45% to 50% less memory. The GitHub repository contains the code and details of the implementation.

Link mentioned: GitHub - zyushun/Adam-mini: Code for the paper: Adam-mini: Use Fewer Learning Rates To Gain More
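A rough back-of-the-envelope sketch of where a saving of that size could come from. It assumes, following the paper's title, that Adam-mini keeps Adam's per-parameter momentum but replaces the per-parameter second-moment estimate with one shared value per parameter block. The block sizes are hypothetical, only optimizer state is counted, and this is not the repository's implementation.

```python
def adamw_state_count(block_sizes):
    """AdamW keeps two values (momentum m and second moment v) per parameter."""
    return 2 * sum(block_sizes)


def adam_mini_state_count(block_sizes):
    """Assumed Adam-mini layout: m per parameter, one shared v per block."""
    return sum(block_sizes) + len(block_sizes)


def state_saving(block_sizes):
    """Fraction of optimizer state saved relative to AdamW."""
    return 1.0 - adam_mini_state_count(block_sizes) / adamw_state_count(block_sizes)


if __name__ == "__main__":
    # Hypothetical model: four parameter blocks of one million values each.
    blocks = [1_000_000] * 4
    print(f"optimizer-state saving: {state_saving(blocks):.1%}")
```

Under these assumptions the saving approaches but never reaches one half as blocks get larger, which is at least consistent with the 45% to 50% figure quoted above.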

CUDA MODE ▷ #torchao (38 messages🔥):

- Raw Kernel for Linear Algebra in PyTorch: A user shared a link to the raw kernel in the PyTorch repository. This kernel is located in the native linear algebra section of the code.

- Subclass dtype Issue in PyTorch: Members discussed issues with tensor subclasses not reflecting their actual `dtype`, complicating compatibility and usability. Marksaroufim encouraged filing an issue on PyTorch and suggested looking into internal improvements.

- Open Source Contributions' Value: Locknit3 questioned whether open source contributions help in job searches, sparking a debate. Gau.nernst and kashimoo affirmed their value, mentioning instances where recruiters noted their contributions.

- Integrating HQQ with TorchAO: Members discussed the potential integration of HQQ with TorchAO's `quantize()` API, linking to the HQQ optimizer. They highlighted the algorithm's simplicity and suggested it could be a new baseline for INT4 quantization.

- Low-bit Fused GEMV CUDA Kernels: Mobicham shared that they have been developing low-bit fused GEMV CUDA kernels, outlining their flexibility and current limitations. Gau.nernst inquired about support for odd bitwidths, to which Mobicham confirmed feasibility.


CUDA MODE ▷ #hqq (8 messages🔥):

- Axis setting affects HQQModelForCausalLM performance: A user reported issues with the