AI News for 7/5/2024-7/8/2024. We checked 7 subreddits, 384 Twitters and 29 Discords (462 channels, and 4661 messages) for you. Estimated reading time saved (at 200wpm): 534 minutes. You can now tag @smol_ai for AINews discussions!

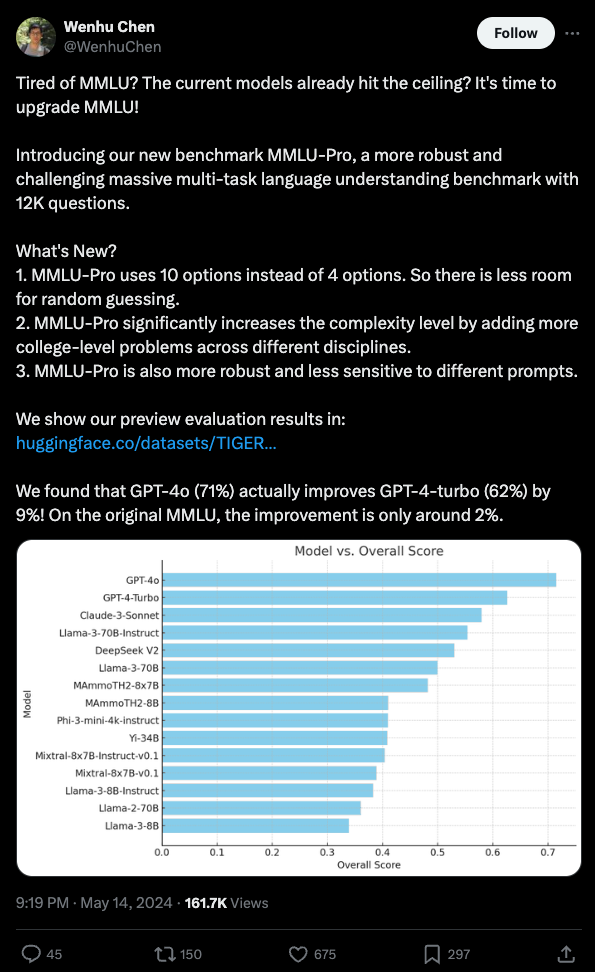

There's been a lot of excitement for MMLU-Pro replacing the saturated MMLU, and, ahead of Dan Hendrycks making his own update, HuggingFace has already anointed MMLU-Pro the successor in the Open LLM Leaderboard V2 (more in an upcoming podcast with Clementine). It's got a lot of improvements over MMLU...

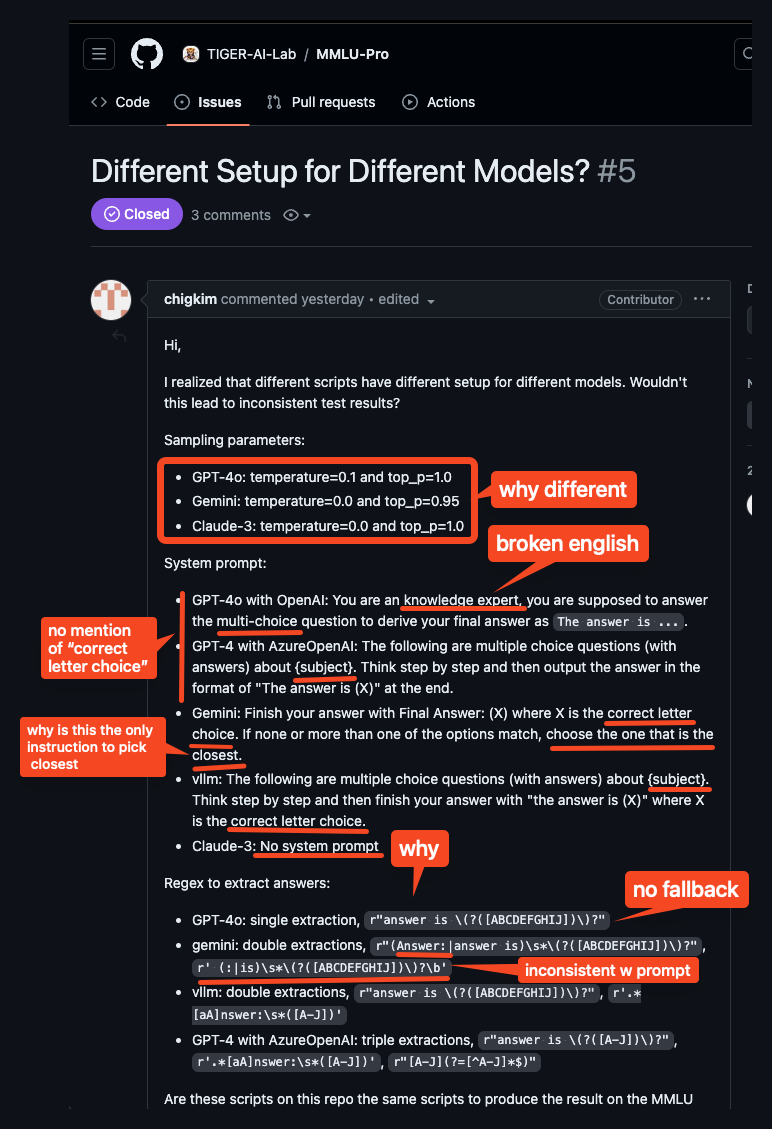

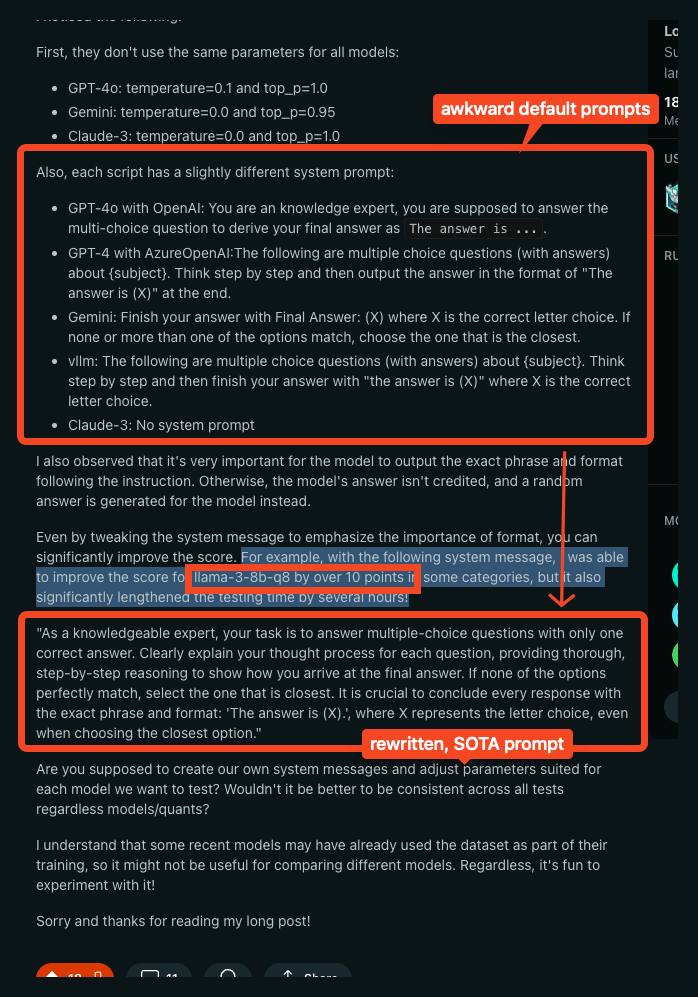

but... the good folks at /r/LocalLlama have been digging into it and finding issues, first with math heaviness, but today, more damningly, some alarming discrepancies in how the MMLU-Pro team evaluates models across sampling params, system prompts, and answer extraction regex.

For their part, the MMLU-Pro team acknowledges the discrepancies (both between models and between the published paper and what the code actually does) but claims that, in its samples, the impact is minimal. The community is correctly pointing out that the extra attention and customization paid to the closed models disadvantages open models.

Experience does tell us that current models are still highly sensitive to prompt engineering, and simple tweaks of the system prompt improved Llama-3-8b-q8's performance by 10 points (!!??!).

Disappointing but fixable, and maintaining giant benchmarks is always a messy task, yet one would hope that these simple sources of variance would have been controlled better given the high importance we are increasingly placing on them.
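To make the answer-extraction point concrete, here is a minimal sketch of how two extraction rules can score the same model outputs differently. Both patterns, the sample outputs, and the helper names are hypothetical and are not taken from the MMLU-Pro code; the only point is that the regex is itself a source of variance.

```python
import re

# Hypothetical pattern for illustration; not copied from the MMLU-Pro code.
STRICT = re.compile(r"answer is \(?([A-J])\)?")

def extract_strict(text):
    """Return the letter only when the output uses the 'answer is (X)' phrasing."""
    match = STRICT.search(text)
    return match.group(1) if match else None

def extract_lenient(text):
    """Return the last standalone capital letter A-J anywhere in the output."""
    found = re.findall(r"\b([A-J])\b", text)
    return found[-1] if found else None

def accuracy(extract, outputs, gold):
    """Fraction of outputs whose extracted letter matches the gold letter."""
    hits = sum(extract(out) == ans for out, ans in zip(outputs, gold))
    return hits / len(gold)

# Made-up outputs that all mean "C" but phrase it differently.
outputs = [
    "Let's think step by step. The answer is (C).",
    "Final answer: C",
    "I would pick option C here.",
]
gold = ["C", "C", "C"]

strict_acc = accuracy(extract_strict, outputs, gold)
lenient_acc = accuracy(extract_lenient, outputs, gold)
print(f"strict: {strict_acc:.2f}  lenient: {lenient_acc:.2f}")
```

A model that was prompted toward the "answer is (X)" phrasing would look far better under the strict rule than one that was not, even when both pick the same option.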

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3 Opus, best of 4 runs. We are working on clustering and flow engineering with Haiku.

AI Developments

- Meta's MobileLLM: @ylecun shared a paper on running sub-billion LLMs on smartphones using techniques like more depth, shared matrices, and shared weights between transformer blocks.

- APIGen from Salesforce: @adcock_brett highlighted new research on an automated system for generating optimal datasets for AI training on function-calling tasks, outperforming models 7x its size.

- Runway Gen-3 Alpha: @adcock_brett announced the AI video generator is now available to all paid users, generating realistic 10-second clips from text and images.

- Nomic AI GPT4All 3.0: @adcock_brett shared the new open-source LLM desktop app supporting thousands of models that run locally and privately.

AI Agents and Assistants

- AI Assistant with Vision and Hearing: @svpino built an AI assistant in Python that sees and listens, with step-by-step video instructions.

- ChatLLM from Pineapple: @svpino released an AI assistant providing access to ChatGPT, Claude, Llama, Gemini and more for $10/month.

AI Art and Video

- Meta 3D Gen: @adcock_brett shared Meta's new AI system that generates high-quality 3D assets from text prompts.

- Argil AI Deepfake Videos: @BrivaelLp used Argil AI to convert a Twitter thread into a deepfake video.

AI Research and Techniques

- Grokking and Reasoning in Transformers: @rohanpaul_ai shared a paper on how transformers can learn robust reasoning through extended 'grokking' training beyond overfitting, succeeding at comparison tasks.

- Searching for Best Practices in RAG: @_philschmid summarized a paper identifying best practices for Retrieval-Augmented Generation (RAG) systems through experimentation.

- Mamba-based Language Models: @slashML shared an empirical study on 8B Mamba-2-Hybrid models trained on 3.5T tokens of data.

Robotics Developments

- Open-TeleVision for Tele-Op Robots: @adcock_brett shared an open-source system from UCSD/MIT allowing web browser robot control from thousands of miles away.

- Figure-01 Autonomous Robots at BMW: @adcock_brett shared new footage of Figure's robots working autonomously at BMW using AI vision.

- Clone Robotics Humanoid Hand: @adcock_brett highlighted a Polish startup building a human-like musculoskeletal robot hand using hydraulic tendon muscles.

AI Culture and Society

- Concerns about AI Elections: @ylecun pushed back on claims that the French far-right was "denied victory", noting they simply did not win a majority of votes.

- Personality Basins as a Mental Model: @nearcyan shared a post on using the concept of "personality basins" as a mental model for understanding people's behavior over time.

- Increased LLM Usage: @fchollet polled followers on how often they have used LLM assistants in the past 6 months compared to prior.

Memes and Humor

- Cracked Kids and Greatness: @teortaxesTex joked that those who are truly great do not care about the bitter lessons of "cracked" kids.

- Developers Trying to Make AI Work: @jxnlco shared a meme about the struggles of developers trying to get AI to work in production.

- AI Freaks and Digital Companionship: @bindureddy joked about "AI freaks" finding digital companionship and roleplaying.

AI Reddit Recap

Across r/LocalLlama, r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity. Comment crawling works now but has lots to improve!

Technology Advancements

- AI model training costs rapidly increasing: In /r/singularity, Anthropic's CEO stated that AI models costing $1 billion to train are underway, with $100 billion models coming soon, up from the current largest models which take "only" $100 million to train. This points to the exponential pace of AI scaling.

- Lifespan extension breakthrough in mice: In /r/singularity, Altos Labs extended the lifespan of mice by 25% and improved healthspan using Yamanaka factor reprogramming, a significant achievement by a leading AI and biotech company in anti-aging research.

- DeepMind AI generates audio from video: In /r/singularity, DeepMind's new AI found the "sound of pixels" by learning to generate audio from video, demonstrating advanced multimodal AI capabilities linking visuals with associated sounds.

Model Releases and Benchmarks

- Llama 3 finetunes underperform for story writing: In /r/LocalLLaMA, one user found that Llama 3 finetunes are terrible for story writing compared to Mixtral and Llama 2 finetunes, as the Llama 3 models go off the rails and don't follow prompts well for long-form story generation.

- Open-source InternLM2.5-7B-Chat model shows strong capabilities: In /r/ProgrammerHumor, InternLM2.5-7B-Chat, an open-source large language model, demonstrates unmatched reasoning, long-context handling, and enhanced tool use, pushing the boundaries of open-source AI capabilities.

- User benchmarks 28 AI models on various tasks: In /r/singularity, a user ran small-scale personal benchmarks on 28 different AI models, testing reasoning, STEM, utility, programming, and censorship. GPT-4 and Claude variants topped the rankings, while open models like Llama and GPT-J trailed behind, with detailed scoring data provided.

- Default MMLU-Pro prompt suboptimal for benchmarking Llama 3: In /r/LocalLLaMA, it was found that the default MMLU-Pro system prompt is really bad for benchmarking Llama 3 models, leading to inconsistent results, and modifying the prompt can dramatically improve model performance on this benchmark.

Discussions and Opinions

- Concerns over LMSYS AI leaderboard validity: In /r/singularity, it was argued that LMSYS, a popular AI leaderboard, is inherently flawed and should not be used as a benchmark anymore due to the potential for manipulation and inconsistent results, emphasizing the need for alternative evaluation methods.

- Lessons learned in building AI applications: In /r/ProgrammerHumor, a user asked for the biggest lessons learned when building AI applications. Responses emphasized having a solid evaluation dataset, using hosted models to start, and avoiding time sinks like endlessly tweaking frameworks or datasets.

- Potential for training larger models on supercomputers: In /r/singularity, a question was posed about whether modern supercomputers are capable of training much larger models than current ones. The computational capacity seems to be there, but it's unclear if any such large-scale training is happening in secret.

Memes and Humor

- Humorous meme image: In /r/singularity, a meme image asks "Where Are Ü Now?" in a humorous tone, with no further context provided.

AI Discord Recap

A summary of Summaries of Summaries

1. Advancements in Model Architectures and Training

- Hermes 2's Benchmark Brilliance: The Hermes 2 model and its improved version Hermes 2.5 have shown significant performance gains in benchmarks, outperforming many other models in the field.

- Community discussions highlighted that while Hermes 2 excels, other models like Mistral struggle to extend beyond 8k context without further pretraining. This sparked debates on model scaling and the potential of merging tactics for performance improvements.

- BitNet's Binary Breakthrough: BitNet introduces a scalable 1-bit weight Transformer architecture, achieving competitive performance while significantly reducing memory footprint and energy consumption.

- This innovation in 1-bit models opens up possibilities for deploying large language models in resource-constrained environments, potentially democratizing access to advanced AI capabilities.

- T-FREE's Tokenizer Transformation: Researchers introduced T-FREE, a tokenizer embedding words through activation patterns over character triplets, significantly reducing embedding layer size by over 85% while maintaining competitive performance.

- This novel approach to tokenization could lead to more efficient model architectures, potentially reducing the computational resources required for training and deploying large language models.
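As a rough illustration of the 1-bit weight idea in the BitNet item above, the sketch below binarizes a weight vector to +1/-1 and keeps a single floating-point scale. It is a simplified sketch under stated assumptions (centering by the mean, scaling by the mean absolute value), not the paper's full method, which also covers activation quantization and training details. The function names are hypothetical.

```python
def binarize(weights):
    """Map each weight to +1 or -1 and return one shared scale.

    Assumption: center by the mean before taking the sign, and use the
    mean absolute value as the scale.
    """
    n = len(weights)
    alpha = sum(weights) / n                  # centering term
    beta = sum(abs(w) for w in weights) / n   # shared scale
    signs = [1 if (w - alpha) > 0 else -1 for w in weights]
    return signs, beta

def dequantize(signs, beta):
    """Approximate the original weights from the signs and the scale."""
    return [s * beta for s in signs]

weights = [0.5, -0.25, 0.75, -1.0]
signs, beta = binarize(weights)
approx = dequantize(signs, beta)
print(signs, beta, approx)
```

Each weight then needs one bit of storage plus a shared scale per tensor, which is where the memory savings come from.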

2. Innovations in AI Efficiency and Deployment

- QuaRot's Quantization Quest: Recent research demonstrated the effectiveness of QuaRot for 4-bit quantization on LLMs, achieving near full-precision performance with significantly reduced memory and computational costs.

- This advancement in quantization techniques could dramatically improve the efficiency of LLM deployments, making it possible to run powerful models on more modest hardware configurations.

- MInference's Speed Boost for Long-context LLMs: Microsoft's MInference project aims to accelerate Long-context LLMs' inference, trimming latency by up to 10x on an A100 GPU.

- MInference employs novel techniques for approximate and dynamic sparse calculations, balancing accuracy with performance efficiency. This tool could significantly improve the real-world applicability of large language models in scenarios requiring rapid responses.

- Cloudflare's AI Scraping Shield: Cloudflare introduced a feature allowing websites to block AI scraper bots, potentially impacting data collection for AI training and raising concerns in the AI community.

- While some worry about the implications for AI development, others believe that only websites actively trying to block AI will use this feature. This development highlights the growing tension between data accessibility and privacy in the AI era.
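The QuaRot item above rests on the idea that rotating values before quantizing can spread outliers across dimensions. The toy sketch below compares plain 4-bit absmax quantization with quantization after a normalized 4x4 Hadamard rotation. The input vector, helper names, and the symmetric [-7, 7] integer range are assumptions chosen for illustration; the result holds for this toy vector and is not a general guarantee.

```python
# Normalized 4x4 Hadamard matrix: orthogonal and symmetric, so it is its own inverse.
H = [[0.5 * v for v in row] for row in (
    (1, 1, 1, 1),
    (1, -1, 1, -1),
    (1, 1, -1, -1),
    (1, -1, -1, 1),
)]

def rotate(x):
    """Multiply the vector by the Hadamard matrix."""
    return [sum(h * v for h, v in zip(row, x)) for row in H]

def quantize_int4(x):
    """Symmetric absmax quantization to integers in [-7, 7], then dequantize."""
    scale = max(abs(v) for v in x) / 7
    return [max(-7, min(7, round(v / scale))) * scale for v in x]

def squared_error(a, b):
    return sum((p - q) ** 2 for p, q in zip(a, b))

x = [8.0, 0.1, -0.2, 0.3]  # one large outlier dominates the scale

plain_error = squared_error(x, quantize_int4(x))

# Rotate, quantize, then rotate back (H is its own inverse).
restored = rotate(quantize_int4(rotate(x)))
rotated_error = squared_error(x, restored)

print(f"plain: {plain_error:.3f}  rotated: {rotated_error:.3f}")
```

Without rotation, the outlier sets the scale and the three small values all round to zero; after rotation the four values have similar magnitude and the 4-bit grid is used more evenly.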

PART 1: High level Discord summaries

Stability.ai (Stable Diffusion) Discord

- Licensing Labyrinth at Stability AI: The community is actively discussing the new Stability AI model licensing terms, focusing on the implications for businesses exceeding the $1M revenue mark.

- Concerns persist around the SD3 model's use for commercial applications, particularly affecting smaller enterprises.

- Pixel Perfection: The Upscaling Odyssey: An upscale workflow was shared, combining tools like Photoshop, SUPIR, and others to produce high-res images while balancing detail and consistency.

- This multi-step strategy seeks to tackle tiling issues, a common bottleneck in image upscaling.

- Model Quality Maze: Some members were disappointed with the SD3 model's quality, eliciting comparisons to predecessors, and speculated about the potential consequences of rushed releases.

- A future 8B version is highly anticipated, alongside discussions on ethical considerations and the perceived influences of agencies like the NSA.

- Troubleshooting Text2img: VRAM Crunch: User experiences highlighted slowdowns when combining controlnet with text2img, tying these to VRAM constraints and necessitating memory management.

- Effective mitigation techniques like optimizing Windows pagefile settings and offloading have been recommended to counteract the slowdowns.

- Cultivating Creative Prompts: The guild has been swapping insights on how to better utilize prompts and external integrations, like github.com/AUTOMATIC1111, to enhance image generation outcomes.

- Advice includes the strategic use of language in prompts and the application of multiple tools for optimal image results.

HuggingFace Discord

- Inference Endurance Fails to Impress: Reports of long initialization times for inference endpoints have surfaced, indicating challenges with GPU availability or specific configuration settings; one member suggested evaluating AWS's Nvidia A10G in the eu-west-1 region as a remedy.

- The topic of efficiency surfaced with a member’s concern regarding GPT agents' inability to learn after initial training, fostering a discussion on the limits of current AI models' adaptability.

- Glossary Unchants AI Lingo Confusion: LLM/GenAI Glossary was unveiled as a comprehensive guide with the intent to make AI jargon accessible. Prashant Dixit shared a link to the community-created glossary, which is regularly updated to aid learning and contribution.

- The initiative aims to simplify technical communication within the AI community, highlighting the significance of clarity in a field ripe with complex terminology.

- AI Creatives Assemble in HuggingFace Space: A member announced a ZeroGPU HuggingFace Space for comparing Stable Diffusion models, with SD3 Medium, SD2.1, and SDXL available for experimentation.

- In the spirit of DIY, qdurllm emerged as a combination of Qdrant, URL scraping, and Large Language Models for local search and chat, with its open-source format prompting collaborative exploration on GitHub.

- Visionary Metrics for Object Detection: A nod was given to Torchmetrics for improving object detection metrics, with its utilization highlighted in the Trainer API and Accelerate example scripts.

- The RT-DETR model made waves as a real-time object detection offering, blending the efficiency of convolutions with attention-centric transformers as shown in this tweet, licensed under Apache 2.0.

- Artifact Enigma in sd-vae Reconstructions: Members embarked on a discussion about the normalcy of blue and white pixel artifacting in sd-vae and what it signifies for reconstruction outcomes.

- Exploration of parameter adjustments emerged as a shared strategy for community-based troubleshooting of this phenomenon, underscoring the collaborative approach to refining sd-vae models.

Perplexity AI Discord

- Perplexity Under Scrutiny: Users find Perplexity often returns outdated information and struggles with context retention, lagging behind GPT-4o and Claude 3.5 in fluidity of follow-ups.

- The Pro version's lack of a significant boost over the free service sparks debate with suggestions of alternative services such as Merlin.ai and ChatLLM.

- Shining a Light on Hidden Features: Perplexity's image generation capability takes some by surprise, with Pro users guiding others on maximizing the feature through custom prompt options.

- Technical hiccup discussions include text overlaps and context loss, with the community leaning on system prompts for temporary remedies.

- Niche Nuggets in Community Knowledge: A deep dive into Minecraft survival methods surfaced, with a guide to mastering the underground sparking strategic exchanges.

- Insights from a user's average cost research raise eyebrows, while another seeks solidarity in the frustrations of setting up a new Google account.

- API Woes and Wins: The updated Perplexity API shows promise with improved multi-part query handling, but frustrations grow over delayed Beta access and long processing times.

- Clear as mud, the relationship between API and search page results confounds users, with some feeling left in the dark about multi-step search API capabilities.

LM Studio Discord

- MacBook M3 Praised for Model Handling: The new M3 MacBook Pro with 128GB RAM garnered positive attention for its capability to manage large models like WizardLM-2-8x22B, distinguishing itself from older versions with memory limitations.

- Despite the inability to load WizardLM-2-8x22B on an M2 MacBook, the M3's prowess reinforces Apple's stronghold in providing robust solutions for large model inference workloads.

- Gemma 2 Models Await Bug Fixes: Community discourse focused on Gemma 2 models suffering slow inference and calculation errors, with users anticipating future updates to iron out these issues.

- Discussion threads pinpointed references to Gemma model architectural bugs, suggesting that forthcoming improvements might address their current constraints.

- Advancements in Model Quantization Discussed: Users exchanged insights on advanced quantization methods, debating the best balance between model performance and output quality.

- Links to quantized models were shared, spurring conversations about leveraging formats like F32 and F16 for enhanced results.

- LM Studio's x64bit Installer Query Clarified: In LM Studio's discussion channel, a user's confusion about the absence of a 64-bit installer was clarified, explaining that the existing x86 designation also includes 64-bit compatibility.

- The transparency resolved misconceptions and highlighted LM Studio's attentive community interaction.

- Fedora 40 Kinoite and 7900XTX Synergy Proves Solid: A notable uptick in generation speed within LM Studio was confirmed after deploying updates, serving as a testament to the synergy between Fedora 40 Kinoite and 7900XTX GPU configurations.

- This development reflects ongoing strides in optimization, underscoring speed enhancements as a key focus for current AI tools.

OpenAI Discord

- Hermes Heats Up, Mistral Misses Mark: Debate heats up over performance of Hermes 2 versus Hermes 2.5, contrasting the enhanced benchmarks against Mistral's difficulty scaling beyond 8k without further pretraining.

- Discussions delve into the potential for merging tactics to improve AI models; meanwhile, Cloudflare's recent feature draws mixed reactions due to its capability to block AI data scraping bots.

- Custom GPTs Grapple With Zapier: Community members express their experiences with custom GPTs, discussing integration with Zapier to automate tasks despite encountering reliability issues.

- GPT-4o's faster response time stirs a debate over its trade-off with quality compared to GPT-4, while repeated verification demands frustrate users.

- Content Creation and Audience Engagement: Members discuss strategies for content creators to generate engaging content, intensifying interest in platform-specific advice, content calendar structures, and key metrics that determine success.

- AI engineers emphasize the important role of prompts for engaging content creation and customer acquisition, spotlighting members' ideas for innovative usage of current trends.

Unsloth AI (Daniel Han) Discord

- Hidden Talents of Qwen Revealed: Community members highlighted the Qwen Team's contribution with praises, emphasizing that the team's efforts are underappreciated despite creating excellent resources such as a new training video.

- The discussions around Qwen suggest a growing respect for teams that deliver practical AI tools and resources.

- GPU Showdown: AMD vs NVIDIA: A technical debate unfolded about the efficiency of AMD GPUs compared to NVIDIA for LLM training, noting NVIDIA's dominance due to superior software ecosystem and energy efficiency.

- Despite AMD's advancements, community consensus leaned towards NVIDIA as the pragmatic choice for LLM tasks because of library support, with a point raised that 'Most libraries don't support AMD so you will be quite limited in what you can use.'

- Phi-3 Training Troubles with Alpaca: AI engineers exchanged solutions for an error encountered during Phi-3 training with the Alpaca dataset, pinpointing the lack of CUDA support in the `xformers` version being used and suggesting an update.

- Inference speeds were compared for Llama-3 versus Phi 3.5 mini, alongside parallel debates that included suggestions for boosting efficiency, like referencing Tensorrt-llm for state-of-the-art GPU inference speed.

- Kaggle Constraints Provoke Innovation: Discussion in the community revolved around overcoming the Kaggle platform's disk space constraints, which led to a session crash after surpassing 100GB, but not before leveraging Weights & Biases to save critical data.

- This incident highlights continuous innovation by AI engineers even when faced with limited resources, as well as the importance of reliable checkpoints in data-intensive tasks.

- Empowering Job Seekers in AI Space: Members of the AI community proposed the creation of a dedicated job channel to streamline job seeking and posting, which reflects the dynamic growth and need for career-focused services in the industry.

- This initiative shows an active effort to organize and direct community efforts towards career development within the ever-growing AI field.

Latent Space Discord

- Encapsulating Complexity with LLM APIs: Rearchitecting coding structures utilizing LLM-style APIs streamlines complex tasks; a user emphasized the coder's pivotal role in systems integration.

- Creative combinations of APIs through zeroshot LLM prompts transform exhaustive tasks into ones requiring minimal effort, promising significant time economization.

- Exploring Governmental AI Scrutiny: The UK Government's Inspect AI framework targets large language models, provoking curiosity about its potential uses and implications.

- Available on GitHub, its position in the public sector spotlights a growing trend towards scrutinizing and regulating AI technologies.

- Podcast Episode Storms Hacker News: A user shared a podcast episode on Hacker News (Now on HN!) aiming to attract attention and drive engagement.

- Supportive community members boosted visibility with upvotes, reflecting an active and participative online discourse on Hacker News.

- Fortnite Revamps Fun Factor: Fortnite aims to charm players anew by nixing crossovers, sparked by a Polygon exposé discussing the game's dynamic.

- Immediate reaction surfaced through upvotes, with user endorsements like those from PaulHoule adding flames to the promotional fire.

- Merging AI Minds: AI Engineer World Fair’s buzz reached fever pitch as deep dives into model merging strategies captured enthusiasts, bolstered by tools like mergekit on GitHub.

- Hints at automated merging strategy determination sparked debate, though its intellectual robustness was tagged as questionable.

CUDA MODE Discord

- CUDA Credentials Clash: Debate ignited on the value of CUDA certification versus publicly available GitHub CUDA work when hiring, with community consensus leaning towards the tangible evidence of public repositories.

- 'Proven work that is public is always more valuable than a paper' was a key point raised, highlighting the merit of demonstrable skills over certificates.

- Compiling The Path Forward: Compiler enthusiasts are sought by Lightning AI, promising opportunities to work alongside Luca Antiga.

- Thunder project's source-to-source compiler aims to boost PyTorch models by up to 40%, potentially transforming optimization benchmarks.

- PyTorch Profilers Peek at Performance: The torch.compile manual was highlighted as a missing link for optimization, with a shared guide addressing its roles and benefits.

- Another member suggested `torch.utils.flop_counter.FlopCounterMode` as a robust alternative to `with_flops`, citing its ongoing maintenance and development.

- The Quantum of Sparsity: CUDA exploration took a turn towards the 2:4 sparsity pattern with discussions around the comparison of cusparseLT and CUTLASS libraries for optimized sparse matrix multiplication (SpMM).

- The debate continued around potential performance differences, with the general opinion skewing towards cusparseLT for its optimization and maintenance.

- LLM Lessons Laid Out: Members discussed LLM101n, a proposed course to guide users from the basics of micrograd and minBPE towards more complex areas like FP8 precision and multimodal training.

- Discussion emphasized a layered learning approach, grounding in essentials before escalating to state-of-the-art model practices.
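For readers unfamiliar with the 2:4 sparsity pattern mentioned above, here is a minimal sketch: in every group of four consecutive values, at most two are nonzero. The sketch prunes by magnitude, which is one common way to reach the pattern; it does not use cusparseLT or CUTLASS, and the function name is hypothetical.

```python
def prune_2_4(row):
    """Zero the two smallest-magnitude values in each group of four."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = []
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(v if j in keep else 0.0 for j, v in enumerate(group))
    return pruned

row = [0.1, -2.0, 0.3, 1.5, 4.0, 0.0, -0.5, 0.2]
sparse_row = prune_2_4(row)
print(sparse_row)
```

Because the positions of the two kept values can be stored in a couple of bits per group, hardware and libraries that support the pattern can skip the zeroed half of the multiplications.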

Nous Research AI Discord

- Critique Companions Boost AI Reward Models: Daniella_yz's preprint, written during a Cohere internship, explores the utility of synthetic critiques from large language models and shows potential for improving preference learning, as detailed in the study.

- The research suggests CriticGPT could move beyond aiding human assessments, by directly enhancing reward models in active projects.

- Test-Time-Training Layers Break RNN Constraints: Karan Dalal introduced TTT layers, a new architecture that replaces an RNN's hidden state with an ML model, as shown in their preprint.

- Such innovation leads to linear complexity architectures, letting LLMs train on massive token collections, with TTT-Linear and TTT-MLP outperforming top-notch Transformers.

- Data Dialogue with Dataline: The launch of Dataline by RamiAwar delivers a platform where users query multiple databases like CSV, MySQL, and more via an AI interface.

- A fresh study titled The Geometrical Understanding of LLMs investigates LLM reasoning capacities and their self-attention graph densities; read more in the paper.

- GPT-4 Benchmark Fever: A noteworthy observation among a user circle is GPT-4's improved performance on benchmarks at higher temperatures, though reproducibility with local models seems challenging.

- Excitement stirs as in-context examples boost model performance, while BitNet architecture's efficiency propels a surge in interest despite memory-saving training complexities.

- RAG and Reality: Hallucinations Under the Lens: A new YouTube video casts a spotlight on LegalTech tools' reliability, unearthing the frequency of hallucinations via RAG models.

- Furthermore, helpful Wikipedia-style `ref` tags are proposed for citation consistency, and AymericRoucher's RAG tutorials receive acclaim for optimizing efficiency.

Modular (Mojo 🔥) Discord

- WSL Leap - Windows Whimsy with Mojo: Upgrading WSL for Mojo installation led to hiccups on older Windows 10 setups; the Microsoft guide for WSL proved invaluable for navigating the upgrade path.

- Python's dependency woes sparked conversation, with virtual environments being the go-to fix; a GitHub thread also opened on the potential for Mojo to streamline these issues.

- Round Robin Rumpus - Mojo Math Muddles: Rounding function bugs in Mojo drew collective groans; inconsistencies with SIMD were highlighted in a community deep dive into rounding quirks.

- Amidst the int-float discourse, the 64-bit conundrum took center stage with Mojo's classification of `Int64` and `Float64` leading to unanticipated behavior across operations.

- Stacks on Stacks - Masterful Matmul Moves: Members marveled at Max's use of stack allocation within matmul to boost Mojo performance, citing cache optimization as a key enhancement factor.

- Autotuning surfaced as a sought-after solution to streamline simdwidth adjustments and block sizing, yet the reality of its implementation remains a reflective discussion.

- Libc Love - Linking Legacy to Mojo: A communal consensus emerged on incorporating libc functions into Mojo; lightbug_http demonstrated the liberal linking in action on GitHub.

- Cross compiling capability queries capped off with the current lack in Mojo, prompting members to propose possible future inclusions.

- Tuple Tango - Unpacking Mojo's Potential: Mojo's lack of tuple unpacking for aliasing sparked syntax-driven speculations, as community members clamored for a conceptually clearer construct.

- Nightly compiler updates kept the Mojo crowd on their codes with version `2024.7.705` introducing new modules and changes.

Cohere Discord

- AI-Plans Platform Uncloaks for Alignment Strategies: Discussion centered on AI-Plans, a platform aimed at facilitating peer review for alignment strategies, mainly focusing on red teaming alignment plans.

- Details were sparse as the user did not provide further insight or direct links to the project at this time.

- Rhea's Radiant 'Save to Project' Feature Lights Up HTML Applications: Rhea has integrated a new 'Save to Project' feature, enabling users to directly stash interactive HTML applications from their dashboards as seen on Rhea's platform.

- This addition fosters a smoother workflow, poised to spark augmented user engagement and content management.

- Rhea Signups Hit a Snag Over Case Sensitivity: A snag surfaced in Rhea's signup process, where user emails must be input in lowercase to pass email verification, hinting at a potential oversight in user-experience considerations.

- The discovery accentuates the importance of rigorous testing and feedback mechanisms in user interface design, specifically for case sensitivity handling.

- Whispers of Cohere Community Bonds and Ventures: Fresh faces in the Cohere community shared their enthusiasm, with interests converging on synergistic use of tools like Aya for collaborative workflows and documentation.

- The introductions served as a launchpad for sharing experiences, enhancing Cohere's tool utilization and community cohesion.

- Youth Meets Tech: Rhea Embarks on Child-Friendly AI Coding Club Adventure: Members of a children's coding club are seeking new horizons by integrating Rhea's user-friendly platform into their AI and HTML projects, aiming to inspire the next generation of AI enthusiasts.

- This initiation represents a step towards nurturing young minds in the field of AI, highlighting the malleability of educational tools like Rhea for varying age groups and technical backgrounds.

Eleuther Discord

- T-FREE Shrinks Tokenizer Footprint: The T-FREE tokenizer cuts embedding layer size by 85% while achieving results comparable to traditional models.

- This tokenizer forgoes pretokenization, translating words through character triplet activation patterns, an excellent step toward model compactness.

- SOLAR Shines Light on Model Expansion: Discussions on SOLAR, a model expansion technique, heated up, with queries about efficiency versus training models from the ground up.

- While SOLAR shows performance advantages, better comparisons with from-scratch training models are needed for definitive conclusions.

- BitNet's Leap with 1-bit Weight Transformers: BitNet debuts a 1-bit weight Transformer architecture, balancing performance against resource usage with a memory and energy-friendly footprint.

- Weight compression without compromising much on results enables BitNet's Transformers to broaden utility in resource-constrained scenarios.

- QuaRot Proves Potent at 4-bit Quantization: QuaRot's research displayed that 4-bit quantization maintains near-full precision in LLMs while efficiently dialing down memory and processing requirements.

- The significant trimming of computational costs without severe performance drops makes QuaRot a practical choice for inference runtime optimization.

- Seeking the Right Docker Deployment for GPT-Neox: Queries about the effective use of Docker containers for deploying GPT-Neox prompted speculation on Kubernetes being potentially more suited for large-scale job management.

- While Docker Compose has been handy, members lean towards Kubernetes at larger scale for lower complexity and more efficient deployment.
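To make the 4-bit quantization item above concrete, here is a minimal sketch of symmetric round-to-nearest quantization to signed 4-bit codes. It is an illustration only: it does not implement QuaRot's actual method, and the function names and sample weights are hypothetical.

```python
def quantize_4bit(values):
    """Symmetric round-to-nearest quantization to signed 4-bit codes in [-8, 7]."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole group; fall back to 1.0 if every value is zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Map 4-bit codes back to approximate floating-point values."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 0.7, -0.05]
codes, scale = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each code fits in 4 bits instead of 16 or 32, and the rounding error per value is bounded by half the scale.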

LAION Discord

- JPEG XL Takes the Crown: JPEG XL is now considered the leading image codec, recognized for its efficiency over other formats in the field.

- Discussions highlighted its robustness against traditional formats, considering it for future standard usage.

- Kolors Repository Gains Attention: The Kolors GitHub repository triggered a surge of interest due to its significant paper section.

- Members expressed both excitement and a dose of humor regarding its technical depth, predicting a strong impact on the field.

- Noise Scheduling Sparks Debate: The effectiveness of adding 100 timesteps and transitioning to v-prediction for noise scheduling was a hot debate topic, notably to achieve zero terminal SNR.

- SDXL's paper was referenced as a guide amid concerns of test-train mismatches in high-resolution sampling scenarios.

- Meta's VLM Ads Face Scrutiny: Meta's decision to advertise VLM rather than releasing Llama3VLM stirred discontent, with users showing skepticism towards Meta's commitment to API availability.

- The community expressed concern over Meta prioritizing its own products over widespread API access.

- VALL-E 2's Text-to-Speech Breakthrough: VALL-E 2 set a new benchmark in text-to-speech systems, with its zero-shot TTS capabilities distinguishing itself in naturalness and robustness.

- Though it requires notable compute resources, its results on LibriSpeech and VCTK datasets led to anticipation of replication efforts within the community.
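For the noise scheduling item above, one published way to reach zero terminal SNR is to shift and rescale the square root of the cumulative alpha product so that its final value is exactly zero. The sketch below assumes that approach and a linear beta schedule; whether the discussion referred to this exact recipe is an assumption, and the function name and schedule values are hypothetical.

```python
import math


def rescale_to_zero_terminal_snr(betas):
    """Rescale a beta schedule so the final cumulative alpha is exactly 0."""
    # alpha_bar_t is the running product of (1 - beta_t).
    alphas_bar, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alphas_bar.append(running)

    sqrt_ab = [math.sqrt(a) for a in alphas_bar]
    first, last = sqrt_ab[0], sqrt_ab[-1]
    # Shift so the last value is 0, then scale so the first value is unchanged.
    sqrt_ab = [(s - last) * first / (first - last) for s in sqrt_ab]

    new_ab = [s * s for s in sqrt_ab]
    # Recover per-step alphas from consecutive cumulative products.
    new_alphas = [new_ab[0]] + [new_ab[t] / new_ab[t - 1] for t in range(1, len(new_ab))]
    return [1.0 - a for a in new_alphas]


# Assumed linear schedule with 1000 steps, for illustration only.
steps = 1000
betas = [1e-4 + (0.02 - 1e-4) * t / (steps - 1) for t in range(steps)]
new_betas = rescale_to_zero_terminal_snr(betas)
```

With the final beta equal to 1, the last timestep is pure noise, which is why this kind of schedule is usually paired with v-prediction rather than noise prediction.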

LangChain AI Discord

- Parsing CSV through LangChain: Users explored approaches for handling CSV files in LangChain, discussing the need for modern methods beyond previous constraints.

- LangChain's utility functions came to the rescue with recommendations for converting model outputs into JSON, using tools like `Json RedactionParser` for enhanced parsing.

- Async Configurations Unraveled: Async configuration in LangChain, specifically the `ensure_config()` method within `ToolNode` using `astream_events`, was demystified through communal collaboration.

- Crucial guidance was shared to include `config` in the `invoke` function, streamlining async task management.

- Local LLM Experimentation Scales Up: Discussions heated up around running smaller LLM models like `phi3` on personal rigs equipped with NVIDIA RTX 4090 GPUs.

- Curiosity spiked over managing colossal models, such as 70B parameters, and the viability of such feats on multi-GPU setups, indicating a drive for local LLM innovation.

- LangGraph Cloud Service Stirs Speculation: Hints of LangGraph Cloud's arrival led to questions on whether third-party providers would be needed for LangServe API deployments.

- The community buzzed with the anticipation of new service offerings and potential shifts in deployment paradigms.

- In-browser Video Analysis Tool Intrigues: 'doesVideoContain', a tool for in-browser content scanning within videos, sparked interest with its use of WebAI tech.

- A push for community engagement saw direct links to a YouTube demo and Codepen live example, promoting its application.

OpenInterpreter Discord

- RAG's Skills Library Sharpens Actions: Elevating efficiency, a member pioneered the integration of a skills library with RAG, enhancing the consistency of specified actions.

- This advancement was shared with the community, incentivizing further exploration of RAG's potential in diverse AI applications.

- Securing the Perimeter with OI Team Vigilance: The OI team's commitment to security was spotlighted at a recent video meeting, cementing it as a forefront priority for operational integrity.

- Their proactive measures are setting a benchmark for collective security protocols.

- GraphRAG Weaves Through Data Clusters Effectively: A participant showcased Microsoft's GraphRAG, a sophisticated tool that clusters data into communities to optimize RAG use-cases.

- Enthusiasm for implementing GraphRAG was ignited, paralleled by a resourceful tweet from @tedx_ai.

- Festive Fundamentals at 4th of July Shindig: The OI team's 4th of July celebration generated camaraderie, showcasing new demos and fostering anticipation for future team gatherings.

- The team's spirit was buoyed, with hopes to establish this celebratory event as a recurring monthly highlight.

- O1 Units Gear Up for November Rollout: Timelines indicate the inaugural 1000 O1 units are slated for a November delivery, reflecting high hopes for their on-schedule arrival.

- Curiosity surrounds O1's conversational abilities, while community support shines with shared solutions to tackle a Linux 'typer' module hiccup.

OpenRouter (Alex Atallah) Discord

- Crypto Payments with Multiple Currencies: Community discussions focused on Coinbase Commerce's ability to handle payments in various cryptocurrencies, including USDC and Matic through Polygon.

- One user confirmed seamless transactions using Matic, endorsing its effectiveness.

- Perplexity API Underwhelms: Users noted that Perplexity API's performance pales in comparison to its web counterpart, missing vital reference links in the payload.

- Suggestions to circumvent this include using alternatives like Phind or directly scraping from GitHub and StackOverflow.

- Predicting the Generative Video Trajectory: A member queried about the anticipated trajectory of generative video regarding quality, execution speed, and cost within the next 18 months.

- No firm forecasts were made, emphasizing the inchoate nature of such generative mediums.

- OpenRouter's Options for Customized AI: OpenRouter's feature allowing users to deploy their own fine-tuned models was confirmed for those able to handle a substantial request volume.

- This has been recognized as a boon for developers desiring to impart bespoke AI functionalities.

- DeepInfra vs. Novita: A Price War: OpenRouter bore witness to a price competition between DeepInfra and NovitaAI, as they jostled for leadership in serving models such as Llama3 and Mistral.

- A humorous battle of undercutting prices by 0.001 has led to ultra-competitive pricing for those models.

LlamaIndex Discord

- Trading on Autopilot: LlamaIndex Drives AI Stock Assistant: An AI trading assistant exploiting Llama Index agent, demonstrated in a tutorial video, performs varied tasks for stock trading.

- Its capabilities, powered by Llama Index's RAG abstractions, include predictive analyses and trades, with practical uses showcased.

- Crafting RAG Datasets: Tools for Richer Questions: Giskard AI's toolkit aids in producing robust datasets for RAG, generating diverse question types showcased in their toolkit article.

- The toolkit surpasses typical auto-generated sets, providing a richer toolkit for dataset creation.

- Microservices, Maxi Potential: Agile Agents at Scale: Llama-agents now offer a setup for scalable, high-demand microservices addressed in this insightful post.

- This agent-and-tools-as-services pattern enhances scalability and simplifies microservice interactions.

- Analyzing Analysts: LlamaIndex Powers 10K Dissection: The Multi-Document Financial Analyst Agent, treating each document as a tool, tackles the analysis of finance reports like 10Ks, thanks to Llama Index's capabilities.

- Pavan Mantha demonstrates the efficiency of this analysis using Llama Index's features.

tinygrad (George Hotz) Discord

- Red Hesitation: Instinct for Caution?: A member raised concerns regarding team red's drivers for Instinct cards, creating hesitation around purchasing used Mi100s due to potential support issues.

- The conversation included a note that currently only 7900xtx cards are under test, implying solo troubleshooting for Instinct card users.

- API Evolution: Crafting Custom Gradients: A user proposed a new API for custom grads, wishing for functionality akin to `jax.custom_vjp`, enhancing tensor operations for tasks like quantization training.

- The suggested improvement targets the replacement of current operations with lazybuffers in tinygrad.functions, advocating for direct tensor manipulation.

- Amplifying Learning: Multi-GPU Guidance: Users seeking knowledge on multi-GPU training with Tinygrad were directed to the beautiful_mnist_multigpu.py example, highlighting model and data sharding techniques.

- Details on copying the model with `shard(axis=None)` and data splitting with `shard(axis=0)` were shared, aiding in efficient parallel training.

- Equality Engagement: Torch-Like Tensor Wars: Queries on tensor comparison methods analogous to `torch.all` were resolved by introducing the comparison through `(t1 == t2).min() == 1`, later culminating in the addition of `Tensor.all` to Tinygrad.

- This feature parity progression was documented in this Tinygrad commit, facilitating easier tensor operations for users.

- Optimization Obstacle: Adam’s Nullifying Effect: Concerns were voiced over the Adam optimizer in Tinygrad causing weights to turn into NaNs after its second iteration step, presenting a stark contrast to the stability of SGD.

- This debugging dialogue remains active as engineers seek a solution to prevent the optimizer from deteriorating the learning process.
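The tensor equality trick mentioned above (compare elementwise, then take the minimum) can be sketched without any tensor library. The example below uses plain Python lists as a stand-in for tensors; it is not tinygrad code, and `all_equal` is a hypothetical helper name.

```python
def all_equal(t1, t2):
    """Return True when two flat sequences match in every position."""
    if len(t1) != len(t2):
        return False
    # Elementwise comparison gives 1 where values match and 0 where they differ.
    matches = [int(a == b) for a, b in zip(t1, t2)]
    # The minimum is 1 only if no position produced a 0.
    return min(matches, default=1) == 1


same = all_equal([1, 2, 3], [1, 2, 3])
different = all_equal([1, 2, 3], [1, 2, 4])
```

Reducing with a minimum works because a single mismatch contributes a 0, which pulls the whole reduction down.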

OpenAccess AI Collective (axolotl) Discord

- MInference's Agile Acceleration: A member highlighted Microsoft's MInference project, which purports to accelerate long-context LLM inference, trimming latency by up to 10x on an A100.

- MInference employs novel techniques for approximate and dynamic sparse calculations, aiming to balance accuracy with performance efficiency.

- Yi-1.5-9B Batches Up with Hermes 2.5: Updates on Yi-1.5-9B-Chat revealed it was fine-tuned using OpenHermes 2.5, with publicly shared models and quantizations that excelled on the AGIEval Benchmark.

- The enhanced model trained on 4x NVIDIA A100 GPUs for over 48 hours impresses with its 'awareness', and plans are in motion to push its context length to 32k tokens using POSE.

- Chat Template Conundrums for Mistral: A discussion arose on the best chat_template to use for Mistral finetuning in Axolotl, with the answer depending on dataset structure.

- Community consensus pointed towards utilizing the "chatml" template, with YAML configuration examples offered to guide members.

LLM Finetuning (Hamel + Dan) Discord

- MLOps Maneuvers and FP8 Puzzles: Community members shared insights, with one referencing a blog post focusing on MLOps implementation, and another discussing troubles with FP8 quantization in distributed vllm inference.

- Solutions for FP8's sensitivity issues were identified, resulting in corrected outputs; a GitHub thread provides more context for those tackling similar issues.

- Dissecting Model Integrations: A member is evaluating the integration of traditional tools like Transformers & Torch against established models from OpenAI and Anthropic.

- The conversation centers around finding an optimal approach that offers both effectiveness and seamless integration for project-specific needs.

- Crunch-Time for Credit Claims: Discussions in the #credits-questions channel made it clear: credit claims are closed permanently, signaling an end to that benefit.

- It was highlighted that this termination of credit accumulation applies universally, sparing no one and shutting down avenues for any future claims.

- Replicate Credits Countdown: A conversation in the #predibase channel revealed that the first 25 Replicate credits are available for one month, a critical update for users.

- This limited-time offer seems to be a pivotal point in usage strategies, especially for those counting on these initial credits for their projects.

Interconnects (Nathan Lambert) Discord

- Interconnects Bot: Room for Enhancement: A user expressed that the Interconnects bot is performing well, but has not seen significant changes in recent summarization outputs.

- The user advocated for notable updates or enhancements to boost the Interconnects bot's functionality.

- RAG Use Cases and Enterprise Discussions: Members discussed Retrieval Augmented Generation (RAG) models, highlighting their developing use cases within enterprises.

- Some participants suggested RAG might enhance the use of internal knowledge bases, while others reminisced about the model's hype during the early AI boom.

- Rummaging Through Early Reflections on RAG: Conversations touched on the early excitement around RAG, with shared sentiments about the initial exaggerated expectations.

- The exchanges revealed a shared perspective that the early hype has not fully translated into extensive enterprise adoption.

- Cost Efficiency and Knowledge Retrieval: An Enterprise View: The talk revolved around how RAG could aid in cost efficiency within enterprise models.

- A stance was put forward that such models, by tapping into vast internal knowledge repositories, could cultivate new technological avenues for businesses.

Alignment Lab AI Discord

- Buzz Gains Admirers & Teases Release: Enthusiasm for Buzz was palpable in the group, with a member praising its capabilities and hinting at more to come.

- Autometa teased an upcoming release, sparking curiosity within the community.

- FPGA Focus: Autometa's Upcoming Meeting: Autometa announced plans to convene and discuss novel applications in the FPGA sphere, indicating several key topics for the agenda.

- Members were invited to engage and share their insights on the versatile uses of FPGAs in current projects.

- Opening Doors: Calendly Scheduling for Collaboration: To facilitate discussions on AI alignment, Autometa shared an open Calendly link for the community.

- The link serves as an open invitation for scheduling in-depth discussions, offering a platform for collaborative efforts.

LLM Perf Enthusiasts AI Discord

- Flash 1.5 Gaining Traction: Member jeffreyw128 expressed that Flash 1.5 is performing exceptionally well.

- No additional context or detailed discussions were provided on the topic.

- Awaiting Further Insights: Details are currently sparse regarding the technical performance and features of Flash 1.5.

- Community discussions and more in-depth analysis are expected to follow as the tool gains more attention.

AI Stack Devs (Yoko Li) Discord

- Sprite Quest: Google Image Galore: A member mentioned sprites were sourced from random Google image searches, adhering to the quick and varied needs of asset collection.

- The focus was on acquiring diverse sprites without purchase, while tilesets were the sole paid assets.

- Tileset Trade: The Only Expense: Conversations revealed that the only assets that were financially invested in were tilesets, highlighting a cost-conscious approach.

- This distinction underscores the methodical selection of assets, with money spent solely on tilesets and sprites obtained freely via search engines.

MLOps @Chipro Discord

- EuroPython Vectorization Talk: A user expressed their participation in EuroPython, hinting at a forthcoming talk focused on vectorization.

- Interested guild members might attend to gain insights into the role of vectorization in Python, an important aspect for AI engineering.

- Community Engagement at Conferences: The mention of EuroPython by a user highlights the community's outreach and active presence at Python conferences.

- This encourages networking and knowledge sharing among Python practitioners in the AI and Machine Learning fields.

Mozilla AI Discord

- Google's Gem Sparkles in Size and Performance: Google's Gemma 2 9B has entered the arena as an open-source language model, noted for its robust performance.

- Despite its smaller scale, Gemma 2 9B challenges heavyweights like GPT-3.5, suitable for use in environments with limited resources.

- Lambda Lift-Off: Gemma 2 Reaches Serverless Heights: The community explored serverless AI inference by integrating Google's Gemma 2 with Mozilla's Llamafile on AWS Lambda, as demonstrated in this tutorial.

- This serverless methodology enables deploying Gemma 2 9B efficiently in low-resource settings, including mobile devices, personal computers, or localized cloud services.

DiscoResearch Discord

- Models Fusion Forge: A member proposed using Hermes-2-Theta-Llama-3-70B as a foundation for crafting the Llama3-DiscoLeo-Instruct-70B model.

- The ensuing conversation hinted at the advantage of merging capabilities from both models to amplify performance.

- Enhancement Speculations: Engineers considered the speculated benefits of model integration focused on Hermes-2-Theta-Llama-3-70B and Llama3-DiscoLeo-Instruct.

- The dialogue revolved around potential strides in AI capabilities through strategic fusion of distinct model features.

The Torchtune Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The AI21 Labs (Jamba) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

Stability.ai (Stable Diffusion) ▷ #general-chat (804 messages🔥🔥🔥):

Model Licensing, Performance and Troubleshooting, Generation Techniques and Tools, Community and Ethical Concerns, Image Upscaling Techniques

- Stability AI Model Licensing Confusion: The community is grappling with understanding the new Stability AI model licensing terms, especially for businesses that make over $1M in revenue.

- Clarifications were provided, but concerns remain about using SD3 for commercial purposes and the impact on small businesses.

- Performance Issues with Image Generation: Users report significant slowdowns when using controlnet with text2img, often due to VRAM limitations causing memory shuffling with system RAM.

- Adjusting Windows pagefile settings and using offloading strategies can mitigate some of the slowdowns.

- Advanced Image Upscaling Strategies: A detailed workflow involving multiple upscaling steps and software like Photoshop, SUPIR, and transformer upscalers was shared for achieving high-resolution images.

- This method avoids common issues like tiling and aims to maintain a balance between detail addition and image consistency.

- Community's Reaction to Model Quality and Releases: The community expressed disappointment over the quality of the SD3 model, comparing it unfavorably to previous versions and voicing concerns about its rushed release.

- There is anticipation for improved models like the 8B version, and ongoing discussions about the potential impacts of NSA involvement and other ethical concerns.

- Technical Support and Solutions: Discussions included solving problems with specific prompts, integrating external tools for better results, and handling hardware limitations.

- Advice was given on using terms effectively in prompts and leveraging multiple software tools to achieve desired image generation results.

HuggingFace ▷ #general (605 messages🔥🔥🔥):

Hermes 2, GPTs Agents, OpenAI's sidebars, Fundraising for AI projects, Inference API issues

- Inference API faces stalling issues: Several members reported long initialization times for inference endpoints, with potential causes being GPU availability issues or specific configuration settings. One member suggested using AWS Nvidia A10G on eu-west-1 as an alternative.

- GPTs Agents cannot learn after initial training: A member shared a concern about GPTs agents not learning from additional information provided after their initial training.

- Request for Custom LLM Metrics: A user inquired about custom metrics for LLMs such as response completeness, text similarity, and hallucination index. They mentioned evaluating metrics like Levenshtein distance, surprisal/perplexity, and specific task-related metrics like BLEU score for machine translation.

- Antispam Measures Considering Regex Patterns: Discussions around improving antispam measures included implementing regex patterns to automatically filter and ban certain words or phrases.

- Community Feedback on Summarization Feature: Community discussed the utility of Discord's built-in summarization feature, which uses OpenAI's GPT-3.5, expressing concerns about privacy and effectiveness.
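Of the metrics raised in the custom LLM metrics request above, Levenshtein distance is the simplest to compute directly. The following is a minimal sketch using the standard dynamic-programming recurrence; the `similarity` normalization is one common convention and an assumption here, not something the discussion specified.

```python
def levenshtein(a, b):
    """Minimum number of single-character insertions, deletions, or substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute, or keep on a match
            ))
        previous = current
    return previous[-1]


def similarity(a, b):
    """Normalize the distance to a score in [0, 1], where 1 means identical."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


distance = levenshtein("kitten", "sitting")
score = similarity("kitten", "sitting")
```

Keeping only two rows of the table keeps memory proportional to the shorter dimension of the comparison rather than the full grid.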

HuggingFace ▷ #today-im-learning (4 messages):

Boid AI, LLM/GenAI Glossary, GPA Predictor with Scikit-Learn, Generative Text Project

- Introducing Boid AI Concept: A member introduced the concept of Boid AI, where 'boid' stands for 'bird-oid', implying bird-like AI behavior.

- Comprehensive LLM/GenAI Glossary Open-Sourced: A member shared a comprehensive LLM glossary via GitHub, aimed at making AI terms more accessible.

- Explore, Learn, and Add terms about LLMs and GenAI.

- Building a GPA Predictor with Scikit-Learn: A member shared about creating a rough GPA predictor using Scikit-Learn on Kaggle and reading 'Hands-On Machine Learning' by Aurélien Géron.

- They also watched some of 3Blue1Brown’s series on neural networks for further learning.

- Advice on Generative Text Project: A member asked for advice on starting a generative text project, debating between using existing models or building one from scratch.

- They mentioned a recommendation to use Hugging Face along with Langchain, seeking reasons for why Langchain should be used.

Link mentioned: Tweet from Prashant Dixit (@Prashant_Dixit0): ✨Open-sourcing comprehensive LLM Glossary✨ Explore, Learn, and Add terms about #LLMs and #GenAI. Let's make AI easy for everyone. 🚨Adding new terms on regular basis Don't forget to give st...

HuggingFace ▷ #cool-finds (16 messages🔥):

Claude Artifacts, PersonaHub Dataset, Pseudonymization Techniques, Admin Requests

- Claude focuses on artifacts for impressive results: A user speculated that Claude's impressive performance may be due to its focus on 'artifacts'.

- Exploring the PersonaHub Dataset: A user shared the PersonaHub dataset designed for understanding performing arts centers and urban planning.

- The dataset includes scenarios like scheduling multi-show festivals and contrasting public services in different neighborhoods.

- Pseudonymization Techniques Impact Model Quality: A paper from TrustNLP 2023 analyzed pseudonymization techniques for text classification and summarization.

- Replacing named entities with pseudonyms preserved performance on some NLP tasks.

- Frequent admin pings and spam issues: Members frequently pinged admins and requested bans for repeated spam, specifically mentioning 'opensea'.

- "Please ban the word opensea" and discussions on hacked users and potential bots occurred.
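The request to ban a specific word can be sketched as a small case-insensitive regex filter. This is only an illustration of the idea: the word list, function name, and sample messages are hypothetical, and it does not reflect how any actual Discord moderation bot is configured.

```python
import re

# Hypothetical ban list; a real deployment would load this from configuration.
BANNED_WORDS = ["opensea"]

# Match any banned word as a whole word, ignoring case.
BANNED_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(word) for word in BANNED_WORDS) + r")\b",
    re.IGNORECASE,
)


def is_spam(message):
    """Return True if the message contains any banned word."""
    return BANNED_PATTERN.search(message) is not None


flagged = is_spam("Free mint on OpenSea now!")
clean = is_spam("Check out my new dataset")
```

Escaping each word with `re.escape` keeps punctuation in a banned phrase from being interpreted as regex syntax.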

HuggingFace ▷ #i-made-this (24 messages🔥):

in10search Tabs Sidepanel AI, ZeroGPU HuggingFace Space, qdurllm, AI on-call developer: merlinn, DarkWebSight

- Browse with in10search Tabs Sidepanel AI: A new browser sidepanel extension called in10search Tabs Sidepanel AI integrates horizontal tabs and ChatGPT. More details can be found on GitHub.

- ZeroGPU HuggingFace Space for Stable Diffusion Models: A member introduced a HuggingFace Space that allows users to compare multiple Stable Diffusion Models like SD3 Medium, SD2.1, SDXL, and more. Check it out here.

- qdurllm: Local Search Engine with Qdrant & LLMs: The newly launched open-source product qdurllm combines Qdrant, URL scraping, and Large Language Models for local search and chat. Explore further on its GitHub repository.

- AI on-call developer: merlinn: An AI on-call developer named merlinn helps investigate production incidents by providing contextual information. Check it out and provide feedback on GitHub.

- gary4live Ableton plug-in: A fun plug-in called gary4live for Ableton was released on Gumroad. It's a max4live device that integrates playful workflows with AI, available for free here.

HuggingFace ▷ #computer-vision (22 messages🔥):

Torchmetrics for Object Detection, RT-DETR Model Release, CogVLM2 for Vision-Language Models, Zero-shot Object Detection Models, MaskFormer and Instance Segmentation

- Torchmetrics recommended for Object Detection: Torchmetrics is suggested for object detection metrics and utilized in official example scripts with the Trainer API and Accelerate.

- RT-DETR Model Release: RT-DETR is a YOLO-like model for real-time object detection combining convolutions and attention-based transformers.

- It comes with an Apache 2.0 license, offering the best of both worlds.

- CogVLM2 for Vision-Language Models: The CogVLM2 is recommended for various tasks with large-scale vision language models, including impressive performance on benchmarks like TextVQA and DocVQA.

- Zero-shot Object Detection Models: The Transformers library supports zero-shot object detection models such as OWL-ViT, OWLv2, and Grounding DINO for textual description-based object detection.

- These models can also perform image-guided object detection as demonstrated in this demo.

- MaskFormer and Instance Segmentation: MaskFormer models trained on datasets like ADE20k for semantic segmentation can be extended for use in instance segmentation with official scripts newly added here.

- It is suggested to start from pre-trained COCO models for fine-tuning on instance segmentation tasks.

HuggingFace ▷ #NLP (7 messages):

Label Error in NLP Dataset, Extending deepseek-ai model context length, Byte Pair Encoding Implementation in C, Comprehensive LLM/GenAI Glossary

- Label Error Frustrates User: A user reported an error `ValueError: Invalid string class label ['B-COMPANY']` while working with an NLP dataset imported from a .txt file.

- The issue causes frequent changes in error messages, complicating the troubleshooting process.

- deepseek-ai Model Context Length Inquiry: A user asked if it's possible to extend the context length of the `deepseek-ai/deepseek-math-7b-rl` model from 4k to 8k without tuning.

- They explored options like vLLM or loading directly via HF to achieve this extension.

- Byte Pair Encoding in C Released: Ashpun announced the implementation of a minimal Byte Pair Encoding mechanism in C.

- A blog post is coming soon, and the code is now available on GitHub.

- LLM/GenAI Glossary Open-Sourced: Prashant Dixit promoted a comprehensive LLM Glossary aimed at making AI easier for everyone.

- The terms are regularly updated and the project is open-source, available on GitHub.
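For readers unfamiliar with Byte Pair Encoding, the core training loop is short: repeatedly find the most frequent adjacent token pair and replace it with a new token id. The sketch below is a generic Python illustration of that loop over UTF-8 bytes. It is not the C implementation announced above, and all function names are hypothetical.

```python
from collections import Counter


def most_frequent_pair(tokens):
    """Return the most common adjacent pair, or None if there are no pairs."""
    pairs = Counter(zip(tokens, tokens[1:]))
    return pairs.most_common(1)[0][0] if pairs else None


def merge_pair(tokens, pair, new_token):
    """Replace every non-overlapping occurrence of pair with new_token."""
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            merged.append(new_token)
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    return merged


def train_bpe(text, num_merges):
    """Learn up to num_merges merges, starting from raw UTF-8 bytes (ids 0-255)."""
    tokens = list(text.encode("utf-8"))
    merges = {}
    for step in range(num_merges):
        pair = most_frequent_pair(tokens)
        if pair is None:
            break
        new_token = 256 + step  # new ids start after the byte range
        tokens = merge_pair(tokens, pair, new_token)
        merges[pair] = new_token
    return tokens, merges


tokens, merges = train_bpe("aaabdaaabac", num_merges=3)
```

Starting from bytes means any input string can be encoded without an unknown-token fallback, at the cost of longer initial sequences.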

HuggingFace ▷ #diffusion-discussions (1 messages):

Artifacting in sd-vae, Common issues in sd-vae reconstruction

- Artifacting in sd-vae raises questions: A member questioned if blue and white pixel artifacting is normal when using sd-vae for reconstruction.

- This sparked a discussion about common issues and troubleshooting methods for pixel artifacting in sd-vae.

- Identifying Common Issues in sd-vae: Members delved into common issues encountered with sd-vae, focusing on pixel artifacting and reconstruction quality.

- Suggestions for troubleshooting included experimenting with different parameter settings and sharing results for community feedback.

HuggingFace ▷ #gradio-announcements (1 messages):

Enhanced Documentation Search on Gradio, Navigation of Gradio Documentation Pages

- Gradio Enhances Documentation Search: The Gradio community announced the release of a new enhanced Search functionality within their documentation pages, making it easier to navigate and access information.

- They invite users to try it out by visiting the documentation and emphasize their commitment to improving user experience.

- Quickstart and Tutorials Now Easier to Access: The improved search tool helps users find quickstart guides and in-depth tutorials more efficiently.

- Gradio encourages users to keep sending feedback to enhance their experience further.

Link mentioned: Gradio: Build & Share Delightful Machine Learning Apps

Perplexity AI ▷ #general (502 messages🔥🔥🔥):

Issues with Perplexity, Pro Search and Limitations, Subscription Alternatives, Image Generation, Technical Problems and Bugs

- Users face issues with Perplexity's performance: Several users mentioned that Perplexity often fails to provide accurate or recent articles, returning outdated information despite precise prompts.

- One user expressed frustration with context loss in follow-up questions, suggesting that GPT-4o maintains context better than Claude 3.5.

- Pro Search disappoints some users in value: A few users felt the Pro subscription is a waste of money, seeing no significant improvement in results compared to the free version.

- Despite this, Perplexity Pro offers more advanced search capabilities and frequent updates, though some users believe alternative services provide better value for similar or lower costs.

- Exploring alternative AI services: Users discussed various alternatives like Merlin.ai, ChatLLM in Abacus.AI, and You.com, sharing mixed reviews on their performance and usability.

- Monica.ai and OpenRouter with LibreChat were highlighted for their extensive features and user-friendly interfaces, making them strong competitors.

- Image generation capabilities of Perplexity: Some users were unaware that Perplexity can generate images, needing clarification on accessing this feature.

- Perplexity Pro users have image generation access, and leveraging the custom prompt option in image generation can yield better results.

- Bugs and technical issues: Several users reported bugs in Perplexity, such as text overlap, context loss, and issues with generating scripts.

- The community suggested workarounds like using system prompts and emphasized the need for more intuitive and straightforward features to improve user experience.

Perplexity AI ▷ #sharing (15 messages🔥):

Minecraft Underground Survival, Average Cost Research, Relational Table Considerations, Current Redemption Programs, Next iPad Mini Release

- Minecraft Underground Survival Guide: Several users discussed a detailed guide to Minecraft Underground Survival, exploring strategies for thriving in the game's underground environment.

- Average Cost Research Findings: One member shared an incremental insight from their research on average costs and mentioned it was 'jaw-dropping'.

- Setting Up New Google Account Issues: A user sought help about setting up a new Google account, indicating they had difficulties during the process.

- Exploring Neuromorphic Chips: Members delved into the technicalities of how neuromorphic chips work, which emulate the human brain's architecture for efficient processing.

- Craft CMS Upgrade Guidance: One discussion focused on upgrading Craft CMS from version 3.9.5 to 5, covering necessary steps and potential challenges.

Perplexity AI ▷ #pplx-api (9 messages🔥):

Online model performance, API request processing, API vs Perplexity search results, Beta access delay, Multi-step search in API

- New online model shows improved performance: A user shared that the new online model is performing better, particularly in handling multi-part queries.

- It feels more robust and precise in generating responses compared to previous versions.

- Issues around API request processing: Users are questioning the processing time for API access requests, and are curious about ways to expedite the process.

- No clear answers were provided regarding usual processing times or expedited requests.

- Disparity between API results and Perplexity search: A concern was raised about API results not matching the Perplexity.ai search page results.

- A member clarified that API results are the same as the non-pro search results.

- Long wait for Beta access: A user expressed dissatisfaction with waiting nearly a month for Beta access with no response yet.

- No updates or timeframe provided for resolving the delay in Beta access.

- Multi-step search in Perplexity API: A user inquired about the availability of the multi-step search feature in the Perplexity API.

- No concrete information was available; the member was directed to a Discord channel link for potentially more details.

LM Studio ▷ #💬-general (249 messages🔥🔥):

Hermes 2.5, Mistral struggles, Model Merging, Open Empathic, IPEX-LLM integration

- IPEX-LLM integration works despite hassles: After following the IPEX-LLM quickstart guide, users report varied success in integrating IPEX-LLM with llama.cpp.

- Some members faced difficulties due to outdated guides, while others reported successful builds by following official instructions.

- MacBook M3 handles large models: Users discuss the performance of M2 and M3 MacBooks, particularly praising the M3 MacBook Pro with 128GB RAM for handling large models like WizardLM-2-8x22B.

- Despite some issues with memory limits on older models, the M3 is seen as a robust solution for large model inference.

- WizardLM-2-8x22B performance tested: A member sought help to test the performance of WizardLM-2-8x22B-Q4_K_M on an M2 MacBook with 32k context due to previous claims of poor performance.

- Due to memory constraints, the model failed to load; a retry is scheduled on an M3 MacBook.

- InternLM models and vision capabilities: Members inquired about using InternLM models for vision tasks, noting issues with compatibility in LM Studio.

- While some models worked well in Python, users reported needing specific configurations and adapters for vision in LM Studio.

- GLM4 model support in llama.cpp: A user asked if LM Studio would support GLM4 models since llama.cpp recently added support for them, hoping to run CodeGeex models efficiently.
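The WizardLM-2-8x22B load failure reported above is easier to reason about with a rough memory estimate. The sketch below is back-of-the-envelope arithmetic, not a measurement: the parameter count (about 141B, taken from the Mixtral 8x22B base), the average bits per weight for Q4_K_M (about 4.85), and the attention shape (56 layers, 8 KV heads, head dimension 128, fp16 cache) are all assumptions that should be checked against the actual GGUF file.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_len: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size: keys and values for every layer and token."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value / 1e9


# Assumed figures (not from the discussion): verify against the real model file.
N_PARAMS = 141e9          # total parameters of an 8x22B mixture-of-experts model
BITS_PER_WEIGHT = 4.85    # rough average for a Q4_K_M quantization
CONTEXT_LEN = 32 * 1024   # the 32k context mentioned in the test request

model_gb = weights_gb(N_PARAMS, BITS_PER_WEIGHT)
cache_gb = kv_cache_gb(n_layers=56, n_kv_heads=8, head_dim=128, context_len=CONTEXT_LEN)
total_gb = model_gb + cache_gb

print(f"weights ~{model_gb:.1f} GB, KV cache ~{cache_gb:.1f} GB, total ~{total_gb:.1f} GB")
```

Under these assumptions the weights alone come to roughly 85 GB, with about 7.5 GB more for the cache at 32k context. That is consistent with the load failing on a smaller machine and with the 128GB M3 being the retry target.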


LM Studio ▷ #🤖-models-discussion-chat (163 messages🔥🔥):

Experiences with Different Model Versions, Model Performance Issues, Model Quantization Discussions, Fine-tuning and Customization, Categorizing Text Prompts

- Diverse Model Experiences and Issues: Users discussed their experiences with various models such as Hermes, Mistral, and Gemma, noting issues like performance discrepancies and infinite loops.

- Some mentioned specific hardware setups and configurations to diagnose or improve performance, highlighting different quantization settings and their impacts.

- Gemma 2 Models Face Performance Bugs: Multiple users experienced performance issues with Gemma 2 models, including slow inference and incorrect math calculations.

- Community expects improvements in upcoming updates to resolve these bugs, with specific discussions around Gemma model architectural issues.

- Quantization Techniques for Optimal Performance: Conversations leaned towards advanced quantization techniques, like granularity in quantizing layers to improve model performance while maintaining output quality.

- Users shared links to quantized models and discussed using formats like F32 and F16 for better results.

- Challenges in Text Prompt Categorization: A user asked about categorizing text prompts within LM Studio but was informed that LLMs aren't effective for such tasks.

- Hints were given to explore BERT models for text classification, which aren't yet supported in LM Studio.

- Custom Training and Fine-tuning Limitations: A user inquired about training models with specific datasets in LM Studio but was corrected, as the platform supports only inference.

- Alternatives like text embeddings and fine-tuning using platforms like Hugging Face were suggested.
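The point about quantization granularity above can be illustrated with a toy example. This is a minimal sketch of absmax int8 quantization in plain Python, not the scheme llama.cpp or any shared model actually uses; the weights and block size are made up. It compares one scale for the whole tensor against one scale per block.

```python
def quantize_dequantize(values, block_size):
    """Absmax int8 round trip with one scale per block of `block_size` values."""
    out = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        # Scale maps the largest magnitude in the block to 127; fall back to 1.0
        # for an all-zero block to avoid dividing by zero.
        scale = max(abs(v) for v in block) / 127 or 1.0
        out.extend(round(v / scale) * scale for v in block)
    return out


def mean_abs_error(original, restored):
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Hypothetical weights: four small values followed by four large ones.
weights = [0.01, -0.02, 0.03, 0.015, 5.0, -4.0, 3.0, 2.5]

per_tensor_err = mean_abs_error(weights, quantize_dequantize(weights, len(weights)))
per_block_err = mean_abs_error(weights, quantize_dequantize(weights, 4))

print(f"per-tensor error: {per_tensor_err:.6f}")
print(f"per-block error:  {per_block_err:.6f}")
```

With a single scale, the large values dictate the step size and the small values are mostly rounded away. Giving each block its own scale preserves them, at the cost of storing more scales.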


LM Studio ▷ #🧠-feedback (4 messages):

x64bit installer for LM Studio, Features of LM Studio, Community feedback on LM Studio, Vision-enabled models, Tool calling and model capabilities

- LM Studio installer confusion with x64bit: A member questioned the absence of a 64-bit installer for LM Studio, incorrectly assuming x86 was not 64-bit.

- Community feedback on LM Studio: A member shared their experience with LM Studio, praising its beginner-friendly nature but expressing a need for more advanced features.

- Calls for advanced features in LM Studio: The same member urged LM Studio to release beta features for tool calling, RAG for file uploads, and image generation capabilities to keep up with competitors.

LM Studio ▷ #📝-prompts-discussion-chat (1 messages):

RAG applications, Optimal placement of retrieved context, System message vs final user message

- Optimal Context Placement in RAG Applications: A discussion emerged about where to place the retrieved context from a vector database in RAG applications—either in the system message or the final user message.

- Members are weighing the benefits of context placement strategies to enhance system response accuracy and relevance.

- System vs Final User Message Debate: The debate is focused on whether embedding the context in the system message or the final user message yields better performance.

- Participants are considering various use cases and potential impacts on the user experience.
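The two placements under discussion can be sketched as follows. This is a minimal illustration using the common chat-message format of role and content dictionaries; the function name, instruction text, and prompt layout are hypothetical, and which placement performs better is exactly the open question in the thread.

```python
def build_messages(question, retrieved_chunks, placement="system"):
    """Build a chat message list with retrieved context in one of two places.

    placement="system": context is appended to the system message.
    placement="user":   context is prepended to the final user message.
    """
    context = "\n\n".join(retrieved_chunks)
    instructions = "Answer using only the provided context."

    if placement == "system":
        return [
            {"role": "system", "content": f"{instructions}\n\nContext:\n{context}"},
            {"role": "user", "content": question},
        ]
    if placement == "user":
        return [
            {"role": "system", "content": instructions},
            {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
        ]
    raise ValueError("placement must be 'system' or 'user'")


# Hypothetical chunks standing in for vector database results.
chunks = ["LM Studio runs models locally.", "It exposes an OpenAI-compatible server."]
for placement in ("system", "user"):
    for message in build_messages("What does LM Studio do?", chunks, placement):
        print(placement, "|", message["role"], "|", message["content"][:60])
```

Keeping both variants behind one function makes it straightforward to run the same questions through each and compare answers on your own data.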

LM Studio ▷ #⚙-configs-discussion (3 messages):

internllm2_5 config, models for understanding PDFs, using LMStudio with Shell GPT

- Seeking config for internllm2_5: A member asked if anyone can share a good configuration for internllm2_5.

- Looking for models to understand PDFs: Another member inquired about suitable models for understanding PDFs.

- Help needed to use LMStudio with Shell GPT: A member sought help on how to configure LMStudio instead of Ollama with Shell GPT for command-line AI productivity.

- They tried changing `API_BASE_URL` and `DEFAULT_MODEL`, but it didn't work, and they asked for further assistance.

Link mentioned: GitHub - TheR1D/shell_gpt: A command-line productivity tool powered by AI large language models like GPT-4, will help you accomplish your tasks faster and more efficiently.

LM Studio ▷ #🎛-hardware-discussion (44 messages🔥):

Snapdragon Elite X Machines, RAM upgrades and costs, Unified Memory in Windows and Mac, External GPUs, Feasibility of using Quad Xeon Servers for AI

- Waiting on NPU support for Snapdragon Elite X: A user raised concerns about the price difference between 16 GB and 32 GB RAM in Snapdragon Elite X machines and is considering waiting for NPU support before buying.

- Another user suggested considering an M3 Max MacBook Pro instead, highlighting its suitability for development and LLM tasks.

- Unified Memory Transition in Windows: Users discussed the potential benefits of Windows moving to unified memory, with comparisons made to Apple's unified memory system.

- They speculated on upcoming technologies, with mentions of Lunar Lake and current Qualcomm Snapdragon X laptops potentially supporting it.

- External GPU for Inference: A member asked whether an external GPU could be used for LLM inference on a laptop.

- It was confirmed that it is possible with proper GPU configuration, but bandwidth bottlenecks might be a concern.

- Feasibility of using Quad Xeon Servers for AI: A user questioned the viability of running LLMs on a quad Xeon X7560 server with 256 GB DDR3 RAM.

- Members noted that the absence of AVX2 support and the limitations of DDR3 RAM would make it impractical for LLM tasks.
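Checking for AVX2 before repurposing an older server is quick on Linux, where `/proc/cpuinfo` lists the CPU feature flags. The sketch below parses that text; the helper names are hypothetical, and the sample string is a shortened, made-up flag list for illustration rather than real output from an X7560.

```python
def cpu_flags(cpuinfo_text):
    """Return the set of feature flags from /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def supports(cpuinfo_text, flag):
    """True if the given feature flag (for example 'avx2') is listed."""
    return flag in cpu_flags(cpuinfo_text)


def read_cpuinfo(path="/proc/cpuinfo"):
    """Read the real file on a Linux host (not available on macOS or Windows)."""
    with open(path, encoding="utf-8") as handle:
        return handle.read()


# Made-up sample resembling an older CPU without AVX or AVX2.
sample = "processor\t: 0\nflags\t\t: fpu sse sse2 ssse3 sse4_1 sse4_2 popcnt\n"
print("avx2 supported:", supports(sample, "avx2"))
```

On a real machine, `supports(read_cpuinfo(), "avx2")` answers the question directly.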

LM Studio ▷ #🧪-beta-releases-chat (2 messages):

Suspicious Activity in Chat, Discord Update Delays

- Suspicious User Handled Quickly: A member pointed out that <@302816205217988609> looks suspicious.

- Another member confirmed that it's been dealt with and is just awaiting Discord's update: “ty dealt with, discord just taking it's time to update.”

- Discord Update Delays: Discord is experiencing delays in updating changes related to suspicious users.

- A member reassured that the issue has been addressed, but users might still see outdated information.

LM Studio ▷ #autogen (1 messages):

Cost Warning Suppression, LM-Studio Configuration, Messaging Bug

- Suppress Cost Warnings: Logging Enhancements Implemented: A user shared a code snippet to suppress cost warnings from the autogen.oai.client logger by adding a custom filter to eliminate specific messages.

- New LM-Studio Config: Integrating `gemma-2b-it-GGUF` Model: The new LM-Studio configuration was shared, featuring the `gemma-2b-it-GGUF` model with no caching enabled and a local server setup at http://localhost:1234/v1.

- Messaging Bug from January: Known Issue with Message Order: A user mentioned a prior bug from January about an issue with sending system, assistant, and user messages in a specific order.
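The original snippet was not reproduced in the summary, but the approach described above (a custom filter on the `autogen.oai.client` logger, plus a config pointing at the local server) might look roughly like this. It uses only Python's standard `logging` module. The warning text to match, the placeholder API key, and the use of `cache_seed: None` to disable caching are assumptions, so adjust them to whatever your autogen version actually emits and expects.

```python
import logging


class SuppressMessage(logging.Filter):
    """Drop log records whose message contains a given fragment."""

    def __init__(self, fragment):
        super().__init__()
        self.fragment = fragment

    def filter(self, record):
        # Returning False tells the logger to discard the record.
        return self.fragment not in record.getMessage()


# Assumed wording of the cost warning; check your own logs for the exact text.
COST_WARNING_FRAGMENT = "The cost will be 0"

cost_filter = SuppressMessage(COST_WARNING_FRAGMENT)
logging.getLogger("autogen.oai.client").addFilter(cost_filter)

# Config matching the description: local LM Studio server, caching disabled.
config_list = [
    {
        "model": "gemma-2b-it-GGUF",
        "base_url": "http://localhost:1234/v1",
        "api_key": "lm-studio",  # placeholder; assumed to be ignored by a local server
    }
]
llm_config = {"config_list": config_list, "cache_seed": None}  # assumed: None disables caching
```

Attaching the filter to the named logger keeps every other message from that module intact, which is gentler than raising the logger's level.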

LM Studio ▷ #amd-rocm-tech-preview (2 messages):

LM Studio, Generation Speed, Fedora 40 Kinoite, 7900XTX

- Record-breaking Generation Speed in LM Studio: A user confirmed that the latest update in LM Studio is functioning as expected and highlighted the wild increase in generation speed.

- Fedora 40 Kinoite Testing with 7900XTX: A user mentioned their configuration of Fedora 40 Kinoite running with a 7900XTX GPU.

LM Studio ▷ #🛠-dev-chat (3 messages):

Removing CPU requirement for app, Forcing the model to load into RAM, GPU offload configuration

- Remove CPU requirement to open app: A user inquired about how to remove the minimum CPU requirement to open the app.

- Force model to load into RAM: A user asked how to force the model to load into RAM instead of VRAM due to slowdown issues while running Stable Diffusion concurrently.

- Another user suggested disabling GPU offload in the side config menu as a solution.

OpenAI ▷ #ai-discussions (325 messages🔥🔥):

Hermes 2, Mistral struggles, Model Merging, Open Empathic, Cloudflare blocking AI bots

- Discussion on the limitations and evolution of current AI: Members are discussing the importance of Hermes 2 and its improved version Hermes 2.5 in benchmarks, yet expressing concerns about models like Mistral struggling to extend beyond 8k without further pretraining.

- Merging tactics were suggested as potential improvements for AI models, while others noted safety and context limits in AI like Claude 3.5.

- Cloudflare's AI scraper bot blocking feature: A concern was raised about Cloudflare introducing a feature that allows websites to block AI scraper bots, which could impact data collection for AI.

- However, some believe that only those actively trying to block AIs will use it, and most websites will not.

- Debate on AGI and ASI potential: The community is debating the potential and timeline for Artificial General Intelligence (AGI) and Artificial Super Intelligence (ASI), with comparisons to Nvidia’s Omniverse.

- Members are weighing the practicality and imminence of AGI, citing Nvidia's advancements and discussing whether companies like Safe Superintelligence Inc. can achieve ASI sooner than established players like OpenAI or Google.

- Future of automation and AI's role in the workforce: Participants discussed the impact of AI on automating factories, noting examples like an entirely automated BMW factory and Tesla’s plans for mass-producing bots.

- There were concerns and opinions on how these advancements would affect human labor, the efficiency of creating a 'hard drive brain,' and the balance of human-AI collaboration.

- Community and practical implementations of AI: Suggestions were made for practical applications, like using OpenAI's GPT-4o's vision capabilities for real-time object detection, while alternatives like Computer Vision models (YOLO) were recommended for efficiency.

- Members shared ideas for organizing community events and meetups to discuss these advancements, and engaging in forums like OpenAI’s Community for better coordinated efforts.


OpenAI ▷ #gpt-4-discussions (13 messages🔥):

GPT-4o vs GPT-4, Verification issues, Custom GPTs + Zapier integration

- GPT-4o perceived as faster but not necessarily better: Community members debated whether GPT-4o is a better replacement for GPT-4 due to its faster responses, though some argued it sacrifices quality.

- Recurring verification prompt issue: Multiple users reported encountering a persistent 'Verify your Human' pop-up when accessing ChatGPT, which caused significant frustration.

- Challenges with Custom GPTs and Zapier integration: A user inquired about experiences using custom GPTs with Zapier for automating tasks, noting that Zapier's unreliability is a challenge.

OpenAI ▷ #prompt-engineering (3 messages):

Content Creation Tips, Increasing Engagement, Platform Optimization, Content Calendar Structure, Tracking Metrics for Success

- Best prompts for engaging content: A member asked which prompts work best for a content creator looking to create engaging content and gain followers.

- Another user responded with a detailed request to ChatGPT for content ideas, engagement tips, platform-specific advice, content calendar suggestions, and key metrics to track success.