AI News for 10/22/2024-10/23/2024. We checked 7 subreddits, 433 Twitters and 31 Discords (229 channels, and 3078 messages) for you. Estimated reading time saved (at 200wpm): 346 minutes. You can now tag @smol_ai for AINews discussions!

People are still very much exploring the implications of Anthropic's new Computer Use demo and use cases. Some are pointing out its failures and picking apart the terminology, others have hooked it up to phone simulators and real phones. Kyle Corbitt somehow wrote a full desktop app for Computer Use in 6 hours so you don't have to spin up the Docker demo Anthropic shipped.

But not a single person in the room has any doubt that this will get a lot better very soon and very quickly.

{% if medium == 'web' %}

Table of Contents

[TOC]

{% else %}

The Table of Contents and Channel Summaries have been moved to the web version of this email: [{{ email.subject }}]({{ email_url }})!

{% endif %}

AI Twitter Recap

all recaps done by Claude 3.5 Sonnet, best of 4 runs.

Anthropic's Claude 3.5 Release and Computer Use Capability

- New Models and Capabilities: @AnthropicAI announced an upgraded Claude 3.5 Sonnet, a new Claude 3.5 Haiku model, and a computer use capability in beta. This allows Claude to interact with computers by looking at screens, moving cursors, clicking, and typing.

- Computer Use Details: @alexalbert__ explained that the computer use API allows Claude to perceive and interact with computer interfaces. Users feed in screenshots, and Claude returns the next action to take (e.g., move mouse, click, type text).

- Performance Improvements: @alexalbert__ noted significant gains in coding performance, with the new 3.5 Sonnet setting a state-of-the-art on SWE-bench Verified with a score of 49%, surpassing all models including OpenAI's o1-preview.

- Haiku Model: @alexalbert__ shared that the new Claude 3.5 Haiku replaces 3.0 Haiku as Anthropic's fastest and least expensive model, outperforming many state-of-the-art models on coding tasks.

- Development Process: @AnthropicAI mentioned they're teaching Claude general computer skills instead of making specific tools for individual tasks, allowing it to use standard software designed for people.

- Limitations and Future Improvements: @AnthropicAI acknowledged that Claude's current ability to use computers is imperfect, with challenges in actions like scrolling, dragging, and zooming. They expect rapid improvements in the coming months.
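The screenshot-in, action-out loop described under Computer Use Details can be pictured roughly as follows. This is only a schematic sketch: `Action`, `run_agent_loop`, and the stub functions are hypothetical names invented for illustration, and the "model" here is a scripted stand-in rather than a call to Anthropic's actual API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Action:
    """Hypothetical action record; not the real API's schema."""
    kind: str                  # e.g. "click", "type", or "done"
    x: Optional[int] = None
    y: Optional[int] = None
    text: Optional[str] = None


def run_agent_loop(
    take_screenshot: Callable[[], bytes],
    choose_action: Callable[[bytes, List[Action]], Action],
    perform: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Feed a screenshot to the model, apply the returned action, repeat."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = take_screenshot()
        action = choose_action(screenshot, history)
        history.append(action)
        if action.kind == "done":
            break
        perform(action)
    return history


# Stubs so the sketch runs without a real screen or a real model.
def fake_screenshot() -> bytes:
    return b"fake-png-bytes"


_script = iter([
    Action("click", x=100, y=200),
    Action("type", text="hello"),
    Action("done"),
])


def scripted_model(screenshot: bytes, history: List[Action]) -> Action:
    return next(_script)


performed: List[Action] = []
history = run_agent_loop(fake_screenshot, scripted_model, performed.append)
print([a.kind for a in history])
```

The `max_steps` cap is a design choice of this sketch: since the capability is described as imperfect, bounding the loop keeps a confused agent from acting indefinitely.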

Other AI Model Releases and Updates

- Mochi 1: @_parasj announced Mochi 1, a new state-of-the-art open-source video generation model released under Apache 2.0 license.

- Stable Diffusion 3.5: @rohanpaul_ai reported the release of Stable Diffusion 3.5, including Large (8B parameters) and Medium (2.5B parameters) variants, with improvements in training stability and fine-tuning flexibility.

- Embed 3: @cohere launched Embed 3, a multimodal embedding model enabling enterprises to build systems that can search across both text and image data sources.

- KerasHub: @fchollet announced the launch of KerasHub, consolidating KerasNLP & KerasCV into a unified package covering all modalities, including 37 pretrained models and associated workflows.

AI Research and Development

- Differential Transformer: @rohanpaul_ai discussed a new paper from Microsoft introducing the "Differential Transformer," which uses differential attention maps to remove attention noise and push the model toward sparse attention.

- Attention Layer Removal: @rasbt shared findings from a paper titled "What Matters In Transformers?" which found that removing half of the attention layers in LLMs like Llama doesn't noticeably reduce modeling performance.

- RAGProbe: @rohanpaul_ai highlighted a paper introducing RAGProbe, an automated approach for evaluating RAG (Retrieval-Augmented Generation) pipelines, exposing limitations and failure rates across various datasets.

Industry Developments and Collaborations

- Perplexity Pro: @AravSrinivas announced that Perplexity Pro is transitioning to a reasoning-powered search agent for harder queries involving several minutes of browsing and workflows.

- Timbaland and Suno: @suno_ai_ shared that Grammy-winning producer Timbaland is collaborating with Suno AI, exploring how AI is helping him rediscover creativity in music production.

- Replit Integration: @pirroh mentioned that Replit has integrated Claude computer use as a human feedback replacement in their Agent, reporting that it "just works."

AI Reddit Recap

/r/LocalLlama Recap

Theme 1. Major LLM Updates: Claude 3.5 and Stable Diffusion 3.5

- Stability AI has released Stable Diffusion 3.5, comes in three variants, Medium launches October 29th. (Score: 110, Comments: 40): Stability AI has released Stable Diffusion 3.5 in three variants: Large, Large Turbo, and Medium. Large and Large Turbo are available now, with Medium set to launch on October 29th. This new version boasts improved image quality, better text understanding, and enhanced capabilities in areas like composition, lighting, and anatomical accuracy.

- Users humorously noted Stability AI included an image of a woman on grass in their blog, referencing a previous meme. Some tested the model's ability to generate this scene, with mixed results including unexpected NSFW content.

- Comparisons between SD3.5 and Flux1-dev were made, with users reporting that Flux1-dev generally produced more realistic outcomes and fewer deformities in limited testing.

- The community discussed potential applications of SD3.5, including its use as a base for fine-tuning projects. However, some noted that the license restrictions may limit its adoption for certain use cases.

- Introducing computer use, a new Claude 3.5 Sonnet, and Claude 3.5 Haiku (Score: 187, Comments: 82): Anthropic has launched Claude 3.5 Sonnet and Claude 3.5 Haiku, introducing computer use capability that allows the AI to interact with virtual machines. These models can now perform tasks like web browsing, file manipulation, and running code, with Sonnet offering improved performance over Claude 3.0 and Haiku providing a faster, more cost-effective option for simpler tasks. The new versions are available through the API and Claude web interface, with computer use currently in beta and accessible to a limited number of customers.

- Claude 3.5 Sonnet shows significant performance improvements over previous versions, with users noting its strength in coding tasks. The model now offers computer use capability in beta, allowing interaction with virtual machines for tasks like web browsing and file manipulation.

- Users express concerns about safety implications of giving Claude remote code execution capabilities. Anthropic recommends precautions such as using dedicated virtual machines and limiting access to sensitive data when utilizing the computer use feature.

- The naming convention for Claude models has become confusing, with Claude 3.5 Sonnet and Claude 3.5 Sonnet (new) causing potential mix-ups. Users suggest clearer versioning, comparing the current naming strategy to complex product names from companies like Samsung and Sony.

Theme 2. Open Source AI Model Developments and Replication Efforts

- O1 Replication Journey: A Strategic Progress Report – Part I (Score: 34, Comments: 6): The author reports on their progress in replicating OpenAI's O1 model, focusing on the first 12 billion parameters of the 120B parameter model. They outline their strategy of training smaller models to validate components before scaling up, and have successfully trained models up to 1.3B parameters using techniques like flash attention and rotary embeddings. The next steps involve scaling to 12B parameters and implementing additional features such as multi-query attention and grouped-query attention.

- The author clarifies that the focus of the article is on the learning method and results, not the dataset, in response to a question about the dataset's composition and creation process.

- The O1 Replication Journey tech report, which hasn't been widely discussed, introduces a shift from "shortcut learning" to "journey learning" and explores O1's thought structure, reward models, and long thought construction using various methodologies.

- A commenter notes the project's success in producing longer-form reasoning answers with commentary similar to O1, but points out that the research artifacts (fine-tuned models and "Abel" dataset) are not currently publicly available.

- 🚀 Introducing Fast Apply - Replicate Cursor's Instant Apply model (Score: 187, Comments: 40): Fast Apply is an open-source, fine-tuned Qwen2.5 Coder Model designed to quickly apply code updates from advanced models to produce fully edited files, inspired by Cursor's Instant Apply model. The project offers two models (1.5B and 7B) with performance speeds of ~340 tok/s and ~150 tok/s respectively using a fast provider (Fireworks), making it practical for everyday use and lightweight enough to run locally. The project is fully open-source, with models, data, and scripts available on HuggingFace and GitHub, and can be tried on Google Colab.

- The project received praise for being open-source, with users expressing enthusiasm for its accessibility and potential for improvement. The developer mentioned plans to create a better benchmark using tools like DeepSeek.

- Users inquired about accuracy comparisons between the 1.5B and 7B models. The developer shared a rough benchmark showing the 1.5B model's impressive performance for its size, recommending users start with it before trying the 7B version if needed.

- Discussion touched on potential integration with other tools like continue.dev and Aider. The developer expressed interest in submitting PRs to support and integrate the project with existing platforms that currently only support diff/whole formats.
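To put the quoted Fast Apply throughput figures in perspective, here is a back-of-the-envelope sketch of how long it would take to emit a fully rewritten file at ~340 tok/s and ~150 tok/s. The 2,000-token file size is a hypothetical number chosen for illustration, and the estimate ignores network latency and prompt processing.

```python
def seconds_to_emit(file_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a full file at a steady decoding speed."""
    return file_tokens / tokens_per_second


FILE_TOKENS = 2000  # hypothetical size of the edited file, in tokens

# Speeds are the approximate figures quoted in the post.
for model_name, speed in (("1.5B", 340.0), ("7B", 150.0)):
    elapsed = seconds_to_emit(FILE_TOKENS, speed)
    print(f"{model_name} model: ~{elapsed:.1f} s for {FILE_TOKENS} tokens")
```

Under these assumptions the smaller model finishes in roughly half the time, which fits the developer's advice to start with the 1.5B model.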

Theme 3. AI Model Comparison Tools and Cost Optimization

- I built an LLM comparison tool - you're probably overpaying by 50% for your API (analysing 200+ models/providers) (Score: 44, Comments: 16): A developer created a free tool (https://whatllm.vercel.app/) to compare 200+ LLM models across 15+ providers, analyzing price, performance, and quality scores. Key findings include significant price disparities (e.g., Qwen 2.5 72B is 94% cheaper than Claude 3.5 Sonnet for similar quality) and performance variations (e.g., Cerebras's Llama 3.1 70B is 18x faster and 40% cheaper than Amazon Bedrock's version).

- The developer provided visualizations to help understand the data, including a chart comparing metrics like price, speed, and quality. They used Nebius AI Studio's free inference credits with Llama 70B Fast for data processing and comparisons.

- Discussion arose about the validity of the quality index, with the developer noting that Qwen 2.5 scores only slightly lower on MMLU-pro and HumanEval, but higher on Math benchmarks compared to more expensive models.

- Users expressed appreciation for the tool, with one calling it a "game changer" for finding the best LLM provider. The developer also recommended Nebius AI Studio for users looking for LLMs with European data centers in Finland and France.

- Transformers.js v3 is finally out: WebGPU Support, New Models & Tasks, New Quantizations, Deno & Bun Compatibility, and More… (Score: 75, Comments: 4): Transformers.js v3 has been released, introducing WebGPU support for significantly faster inference on compatible devices. The update includes new models and tasks such as text-to-speech, speech recognition, and image segmentation, along with expanded quantization options and compatibility with Deno and Bun runtimes. This version aims to enhance performance and broaden the library's capabilities for machine learning tasks in JavaScript environments.

- Transformers.js v3 release highlights include WebGPU support for up to 100x faster inference, 120 supported architectures, and over 1200 pre-converted models. The update is compatible with Node.js, Deno, and Bun runtimes.

- Users expressed enthusiasm for the library's performance, with one noting consistent surprise at how fast the models run in-browser. The community showed appreciation for the extensive development and sharing of this technology.

- A developer inquired about the possibility of including ONNX conversion scripts used in the release process, indicating interest in the technical details behind the library's model conversions.

Theme 4. GPU Hardware Discussions for AI Development

- What the max you will pay for 5090 if the leaked specs are true? (Score: 32, Comments: 94): The post speculates on the potential specifications and performance of NVIDIA's upcoming 5090 GPU. It suggests the 5090 might feature a 512-bit memory bus, 32GB of RAM, and be 70% faster than the current 4090 model for AI workloads.

- Users debate the value of 32GB VRAM, with some arguing it's insufficient for LLM workloads. Many prefer multiple 3090s or 4090s for their combined VRAM capacity, especially for running 70B models.

- Discussion on potential pricing of the 5090, with estimates ranging from $2000-$3500. Some speculate the 4090's price may decrease, potentially flooding the market with used GPUs.

- Comparisons made between the 5090 and other options like multiple 3090s or the A6000. Users emphasize the importance of total VRAM over raw performance for AI workloads.
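The argument that 32GB is tight for 70B models follows from simple arithmetic on weight storage. The sketch below estimates memory for the weights alone at a few common precisions; it deliberately ignores the KV cache, activations, and runtime overhead, so real requirements are higher. The helper name `weight_memory_gb` is made up for this example.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


VRAM_GB = 32  # the rumored 5090 capacity discussed in the thread

for bits in (16, 8, 4):
    need = weight_memory_gb(70, bits)
    verdict = "fits" if need <= VRAM_GB else "does not fit"
    print(f"70B at {bits}-bit: ~{need:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Even at 4 bits per weight, a 70B model's weights exceed a single 32GB card, which is why commenters lean toward multiple 24GB cards for combined capacity.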

Other AI Subreddit Recap

r/machinelearning, r/openai, r/stablediffusion, r/ArtificialInteligence, /r/LLMDevs, /r/Singularity

AI Model Releases and Improvements

- Anthropic releases updated Claude 3.5 models: Anthropic announced updated versions of Claude 3.5 Sonnet and Claude 3.5 Haiku, with improved performance across various benchmarks. The new Sonnet model reportedly shows significant improvements in reasoning, code generation, and analytical capabilities.

- Stability AI releases SD 3.5: Stability AI released Stable Diffusion 3.5, including a large 8 billion parameter model and a faster "turbo" version. Early testing suggests improvements in image quality and prompt adherence compared to previous versions.

- Mochi 1 video generation model: A new open-source video generation model called Mochi 1 was announced, claiming state-of-the-art performance in motion quality and human rendering.

AI Capabilities and Applications

- Claude's computer control abilities: Anthropic demonstrated Claude's new ability to control a computer and perform tasks like ordering pizza online. This capability allows Claude to interact with web interfaces and applications.

- AI playing Paperclips game: An experiment showed Claude playing the Paperclips game autonomously, demonstrating its ability to develop strategies and revise them based on new information.

- OpenAI developing software automation tools: Reports suggest OpenAI is working on new products to automate complex software programming tasks, potentially in response to competition from Anthropic.

AI Development and Research

- Fixing LLM training bugs: A researcher fixed critical bugs affecting LLM training, particularly related to gradient accumulation, which could have impacted model quality and accuracy.

- Pentagon's AI deepfake project: The US Department of Defense is reportedly seeking to create convincing AI-generated online personas for potential use in influence operations.
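The gradient accumulation item above does not spell out what the bug was. One commonly discussed failure mode, assumed here purely for illustration, is averaging each micro-batch's mean loss when micro-batches contain different numbers of tokens, which does not match the loss of the equivalent full batch. The per-token losses below are made-up numbers.

```python
# Per-token losses for two micro-batches of unequal length (made-up values).
micro_batches = [
    [2.0, 2.0, 2.0, 2.0, 2.0, 2.0],  # 6 tokens
    [8.0, 8.0],                      # 2 tokens
]

# Naive accumulation: average the per-micro-batch means.
# Each micro-batch gets equal weight regardless of its token count.
naive_loss = sum(sum(b) / len(b) for b in micro_batches) / len(micro_batches)

# Full-batch equivalent: total loss divided by total token count.
total_tokens = sum(len(b) for b in micro_batches)
full_batch_loss = sum(sum(b) for b in micro_batches) / total_tokens

print(f"naive accumulation: {naive_loss}")
print(f"full-batch loss:    {full_batch_loss}")
```

In this toy case the short micro-batch is over-weighted, so the naive value is noticeably larger than the full-batch loss; the two only agree when every micro-batch has the same number of tokens.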

AI Ethics and Security

- ByteDance intern fired for malicious code: An intern at ByteDance was fired for allegedly planting malicious code in AI models, raising concerns about AI security and access controls.

- Claude's anti-jailbreaking measures: The updated Claude 3.5 model appears to have improved defenses against jailbreaking attempts, demonstrating more sophisticated detection of potential manipulation.

AI Discord Recap

A summary of Summaries of Summaries by O1-preview

Theme 1: Claude 3.5 Takes the AI World by Storm

- Claude 3.5 Wows with 15% Boost in Coding Skills: Communities across OpenAI and Unsloth AI are thrilled with Claude 3.5 Sonnet's 15% performance gain on SWE-bench, especially in coding tasks. The model's new computer use feature lets agents interact with computers like humans.

- OpenRouter Unveils Time-Travelling Claude 3.5 Versions: OpenRouter releases older Claude 3.5 Sonnet versions like Claude 3.5 Sonnet (2024-06-20), giving users nostalgic access to previous iterations.

- Developers Tinker with Claude's New Tricks: Communities like OpenInterpreter explore integrating Claude 3.5 with commands like `interpreter --os`, testing Anthropic's model and sharing insights.

Theme 2: Innovative AI Applications in Creative and Practical Domains

- DreamCut AI Revolutionizes Video Editing: DreamCut AI, a video editor built using Claude AI over 3 months and more than 50k lines of code, is currently in early access. Its development bypassed traditional design phases, marking a shift towards AI-driven coding.

- GeoGuessr AI Bot Automates Gameplay: A YouTube tutorial demonstrates coding an AI bot that plays GeoGuessr using Multimodal Vision LLMs like GPT-4o, Claude 3.5, and Gemini 1.5. This project integrates LangChain for interactive game environment responses.

- AI-Driven Customer Service Bots: Aider introduces a multi-agent concierge system that combines tool calling, memory, and human collaboration for advanced customer service applications. This overhaul allows developers to iterate and enhance customer service bots more effectively.

Theme 3: Shiny New Tools Promise AI Advancement

- Anyscale's One-Kernel Wonder Aims to Turbocharge Inference: GPU MODE buzzes over Anyscale developing an inference engine using a single CUDA kernel, potentially outperforming traditional methods.

- CUDABench Calls All Coders to Benchmark LLMs: PhD students invite the community to contribute to CUDABench, a benchmark to assess LLMs' CUDA code generation skills.

- Fast Apply Hits the Gas on Code Updates: Fast Apply, based on the Qwen2.5 Coder Model, revolutionizes coding by applying updates at blazing speeds of 340 tok/s for the 1.5B model.

Theme 4: AI's Dark Side Sparks Concern

- AI Blamed in Teen's Tragic Death: Communities discuss a report of a 14-year-old's suicide linked to AI interactions, raising alarms about AI's impact on mental health.

- Character.AI Adds Safety Features Amid Tragedy: In response to the incident, Character.AI announces new safety updates to prevent future harm.

- Debate Rages: Is AI Friend or Foe in Combating Loneliness?: Latent Space members delve into whether AI eases loneliness or exacerbates isolation, with opinions divided on technology's role in mental well-being.

Theme 5: ZK Proofs Give Users Control Over Their Data

- ChatGPT Users Rejoice Over Chat History Ownership: OpenBlock's Proof of ChatGPT uses ZK proofs to let users own their chat logs, enhancing data training for open-source models.

- Communities Embrace Data Sovereignty Movement: Discussions in HuggingFace and Nous Research echo enthusiasm for data ownership, highlighting the importance of transparent and verifiable user data in AI development.

PART 1: High level Discord summaries

HuggingFace Discord

-

Stable Diffusion 3.5 Performance Debate: Members discussed the fluctuating opinions on Stable Diffusion 3.5, noting current enthusiasm to test new features against alternatives.

- This ongoing debate highlights a keen interest in improving generative model performance.

-

Automating CAD with LLMs: A member proposed using LLMs and RAG systems to automate CAD file creation, seeking insights on system design approaches.

- The discussion signified the community's commitment to integrating AI technologies for efficiency.

-

Explore the MIT AI Course: A member shared a YouTube playlist featuring MIT 6.034 Artificial Intelligence, praising its foundational content.

- It's a must-see for those diving into AI concepts, indicated by a strong community reaction.

-

Vintern-3B-beta Emerges: Vintern-3B-beta model integrates over 10 million Vietnamese QnAs, positioning itself as a competitor to LLaVA in the market.

- This integration showcases advancements in dataset utilization for high-quality language model training.

-

ZK Proofs Enhance ChatGPT: Utilizing ZK proofs, ChatGPT now enables users to own their chat history, enhancing verifiable training data for open-source models.

- This marks a significant advance, as highlighted in a demo tweet.

OpenAI Discord

-

Claude 3.5 Sonnet improves performance: Claude 3.5 Sonnet shows a 15% performance gain on the SWE-bench and enhanced benchmarks, suggesting effective fine-tuning.

- The integration of active learning techniques seems to enhance model efficacy for computer tasks.

-

Anthropic launches Computer Use Tool: Anthropic introduced a novel tool to enable agents to execute tasks directly on a computer, aiming to reshape agent capabilities.

- Utilizing advanced data processing, this tool aims to deliver a more seamless user experience for API consumers.

-

GPT-4 Upgrade Timeline in Limbo: Enthusiasm swirls around an anticipated GPT-4 upgrade, with mentions from several months ago still being the main reference point.

- Access to GPTs for free users reportedly occurred roughly 4-5 months ago.

-

Models show weak spatial sense: Discussions revealed that models often exhibit weak spatial sense, effectively mimicking answers without true understanding.

- This phenomenon resembles a child’s rote learning, suggesting deficiencies in deeper comprehension abilities.

-

Discussion on Realtime API performance: Concerns arose that the Realtime API fails to follow system prompts as effectively as GPT-4o, disappointing many users.

- Participants sought advice on adapting prompts to enhance interaction quality with the API.

Unsloth AI (Daniel Han) Discord

-

Claude 3.5 brings significant upgrades: Anthropic launched the upgraded Claude 3.5 Sonnet and Claude 3.5 Haiku models, introducing advanced capabilities in coding tasks, including the computer use functionality now in beta.

- This new ability allows developers to direct Claude to interact with computers similarly to human users, enhancing its utility in practical coding scenarios.

-

Kaggle struggles with PyTorch installation: Users reported persistent `ImportError` issues on Kaggle related to different CUDA versions while trying to run PyTorch, prompting recommendations to downgrade to CUDA 12.1.

- This workaround resolves compatibility issues and ensures smoother operation for existing library installations.

-

Challenges in model fine-tuning persist: Users discussed models' tendency to repeat inputs during fine-tuning, suggesting that variations in system prompts could mitigate this overfitting.

- Community members raised concerns that insufficient training examples may lead to reliance on the base model, resulting in repetitive outputs.

-

Fast Apply revolutionizes coding tasks: Fast Apply, built on the Qwen2.5 Coder Model, operates efficiently, applying code updates without repetitive edits, significantly improving coding efficiency.

- With performance metrics showing speeds of 340 tok/s for the 1.5B model, this utility exemplifies how AI solutions are enhancing productivity in coding workflows.

-

Community pulls together for bug fixes: Two significant pull requests were made: one for addressing import issues in the studio environment and another for correcting a NoneType error caused by a tokenizer bug.

- These PRs underscore the community's proactive approach to refining Unsloth's functionality and resolving user-reported issues promptly.

Stability.ai (Stable Diffusion) Discord

-

Stable Diffusion 3.5 gets neural upgrade: Participants highlighted that Stable Diffusion 3.5 adopts a neural network architecture akin to Flux, necessitating community-driven training efforts for optimization.

- A consensus emerged on the importance of finetuning to fully leverage the capabilities of the new model.

-

Anime Art Prompting Secrets Revealed: For generating anime art, users recommended using SD 3.5 with precise prompts rather than relying on LoRAs for optimal results.

- The community suggested focusing solely on Stable Diffusion 3.5 to boost image quality and avoid pitfalls associated with incorrect usage of LoRAs.

-

Image Quality Mixed Reports: Users reported inconsistent image outputs, particularly when aligning prompts with wrong checkpoints or utilizing unsuitable LoRAs.

- Discussion emphasized the necessity of ensuring model alignment with prompts to mitigate unsatisfactory generation results.

-

Automate Model Organization Now!: There's an expressed need for a tool that can automatically sort and manage AI model files within folders, enhancing overall workflow efficiency.

- Participants were encouraged to seek solutions in the server's technical support channel for potential automation tools.

-

Sharing Tools to Boost Generation Workflows: Various tools and methods were discussed to enhance AI generation, with mentions of utility tools like ComfyUI and fp8 models for improved task management.

- Participants shared personal experiences fostering community learning and exploring new tools to optimize their AI model experiences.

aider (Paul Gauthier) Discord

-

Aider v0.60.0 Enhancements: The release of Aider v0.60.0 includes improved code editing, full support for Sonnet 10/22, and bug fixes that enhance user interactions and file handling.

- Noteworthy features include model metadata management and Aider's contribution, writing 49% of the code in this version, showcasing its productivity.

-

Claude 3.5 Sonnet Outperforms Previous Models: Users report that the Claude 3.5 Sonnet model significantly outperforms the previous O1 models, achieving complex tasks with fewer prompts.

- One user highlighted its ability to implement a VAD library into their codebase effectively, indicating a leap in usability.

-

DreamCut AI Revolutionizes Video Editing: DreamCut AI is built using Claude AI, taking 3 months and over 50k lines of code, currently in early access for users to test its AI editing tools.

- This initiative bypasses traditional design phases, indicating a shift towards AI-driven coding, as noted by community members.

-

Mistral API Authentication Troubles: A user reported an AuthenticationError with the Mistral API in Aider but successfully resolved it by recreating their authentication key.

- This incident reflects ongoing concerns over API access and authentication stability in the current setup of Mistral integrations.

-

Repo Map Enhancements Clarified: Discussions on the repo map functionality reiterated its dependency on relevant code context, crucial for accurate code modifications tagged to identifiers.

- As per Paul's clarification, the model evaluates identifiers based on their definitions and references, shaping effective editing paths.

OpenRouter (Alex Atallah) Discord

-

Claude 3.5 Sonnet Versions Released: Older versions of Claude 3.5 Sonnet are now available under dated names: Claude 3.5 Sonnet and Claude 3.5 Sonnet: Beta.

- These releases come from OpenRouter, providing users enhanced access to previous iterations.

-

Lumimaid v0.2 Enhancements: The newly launched Lumimaid v0.2 serves as a finetuned version of Llama 3.1 70B, offering a significantly enhanced dataset over Lumimaid v0.1.

- Users can expect improved performance due to the updates in dataset specifics.

-

Magnum v4 Showcases Unique Features: Magnum v4 has been released, featuring prose quality replication akin to Sonnet and Opus.

- This model continues the trend of enhancing the output quality in AI-generated text.

-

API Key Costs Differ on OpenRouter: Users highlighted differences in API costs when utilizing OpenRouter versus direct provider keys, with some facing unexpected charges.

- It’s crucial for users to understand how various models affect their total costs under OpenRouter.

-

Beta Access for Custom Provider Keys: Custom provider keys are in beta, with requests for access managed through a specific Discord channel; self-signup isn’t an option.

- Members can DM their OpenRouter email addresses for access, reflecting significant interest in these integrations.

LM Studio Discord

-

Downloading Models in LM Studio: Users faced challenges in finding and downloading large models, particularly Nvidia's 70B Nemotron in LM Studio, necessitating new terminal command tips.

- The change in search features forced users to employ specific keyboard shortcuts, complicating the model access process.

-

LLMs fall short in coding tasks: Frustrations arose as models like Mistral and Llama 3.2 struggled with accurate coding outputs, while GPT-3.5 and GPT-4 continued to perform significantly better.

- Users began exploring alternative tools to supplement coding tasks due to this consensus on performance inadequacies.

-

Exploring Model Quantization Options: Discussions highlighted diverse preferences for quantization methods (Q2, Q4, Q8) and their impact on model performance, especially regarding optimal bit compression.

- While caution against Q2 was advised, some users remarked on larger models showing better performance with lower bit quantization.

-

Ryzen AI gets attention for NPU support: A query arose on configuring LM Studio to utilize the NPU of Ryzen processors, revealing ongoing challenges with implementation and functionality.

- Clarification emerged that only the Ascend NPU receives support in llama.cpp, leaving Ryzen's NPU functionality still uncertain.

-

AMD vs. Nvidia: The GPU Showdown: When comparing the RX 7900 XTX and RTX 3090, users highlighted the importance of CUDA support for optimal LLM performance, favoring Nvidia's card.

- Mixed effectiveness reports on multi-GPU setups across brands surfaced, especially regarding support from the recently updated ROCm 6.1.3.

Perplexity AI Discord

-

Users Complain About New Sonnet 3.5: Multiple users expressed dissatisfaction with the Sonnet 3.5 model, noting a decrease in content output, particularly for academic writing tasks.

- Concerns were raised about the removal of the older model, which was regarded as superior for various use cases.

-

Web Search Integration Issues Persist: There was a reported bug where the preprompt in Spaces fails when web search is enabled, causing frustration among users.

- Users indicated that this issue has continued without resolution, with the team acknowledging the need for a fix.

-

Account Credits Still Not Transferred: A user reported that their account credits have not been transferred, despite multiple inquiries to support.

- No response from support for the past three days has resulted in heightened frustration.

-

Advanced AI-Driven Fact-Checking Explored: A collection on AI-driven fact-checking discusses techniques like source credibility assessment.

- It emphasizes the necessity for transparency and human oversight to effectively combat misinformation.

-

Claude Computer Use Model Raises Alarms for RPA: A post on Claude's Computer Control capabilities suggests possible risks for Robotic Process Automation (RPA).

- Experts warn that this innovation could pose significant challenges to existing workflows.

GPU MODE Discord

-

LLM Activations Quantization Debate: A discussion emerged on whether activations in LLMs, which are sensitive to input variations, should be aggressively quantized or kept at higher precision.

- This raises concerns about modeling performance and the trade-offs of precision in quantization.

-

Precision Worries with bf16: Concerns were shared about bf16 potentially causing canceled updates due to precision issues during multiple gradient accumulations.

- Precision is crucial, especially when it impacts model training stability.

-

Anyscale's Single Kernel Inference: An update about Anyscale developing an inference engine using a single CUDA kernel was shared, inviting opinions on its efficiency.

- There's excitement about potentially leapfrogging traditional inference methods.

-

CUDABench Proposal: PhD students presented a proposal for CUDABench, a benchmark to assess LLMs' CUDA code generation abilities, encouraging community contributions.

- It aims to establish compatibility across various DSLs while focusing on torch inline CUDA kernels.

-

Monkey Patching CrossEntropy Challenges: New challenges emerged with the monkey patching strategy for CrossEntropyLoss in transformers, particularly with the latest GA patch version.

- The original CrossEntropy function can be reviewed here.

Nous Research AI Discord

-

Hermes 70B API launched on Hyperbolic: The Hermes 70B API is now available on Hyperbolic, providing greater access to large language models for developers and businesses. For more details, check out the announcement here.

- This launch marks a significant step towards making powerful AI tools more accessible to everyone.

-

Nous Research's Forge Project Sparks Enthusiasm: Members expressed their enthusiasm for the project 'Forge', highlighted in a YouTube video featuring Nous Research co-founder Karan. A discussion followed about knowledge graph implementation related to the project.

- The expectations for Forge’s capabilities in leveraging advanced datasets have been high among members of the community.

-

ZK Technology Revolutionizes Proof Generation: The latest application from OpenBlock’s Universal Data Protocol (UDP) empowers ChatGPT users to own their chat history while enhancing the availability of verifiable training data for open-source models. This approach marks a significant step in improving data provenance and interoperability in AI training.

- A member clarified that ZK proofs take a few seconds on the server-side, with some UDP proofs now taking less than a second due to advancements in infrastructure from @zkemail; check this out here.

-

Claude Sees Automation Improvements: Claude includes a system prompt addition that corrects the 'misguided attention' issue, enhancing its contextual understanding. Claude also endeavors to clarify puzzle constraints, yet sometimes misinterprets questions due to oversight.

- Users noticed enhancements in Claude’s self-reflection abilities, with responses becoming more refined when addressing logical puzzles.

-

Dynamics of AI Role-Playing Explored: The dynamics of AI role-playing were explored, particularly how system prompts influence the responses of AI models in various scenarios. Members discussed the potential for models to exhibit chaotic behavior if instructed in certain ways, challenging the idea of inherent censorship.

- This ongoing dialogue highlights the intricate relationship between prompt engineering and AI behavior.

Eleuther Discord

-

Chess Players Explain Moves: Most top chess players can articulate the motivation behind engine moves, but their skill in ranking lines in complex positions remains in question.

- The ongoing inquiry into what defines an ideal move for humans versus engines keeps the community engaged.

-

Controversy in Chess with Cheating Claims: A former world champion accused a popular streamer of cheating based on their move explanations during live commentary.

- This incident underscores the pressures commentators face and ignites new debates about move validity.

-

Accuracy of LLMs’ Self-Explanations: Concerns were voiced regarding the accuracy of self-explanations from LLMs when they lack contextual understanding.

- The community is exploring how improved training data could enhance these explanations.

-

Molmo Vision Models on the Horizon: The Molmo project plans to release open vision-language models trained on the PixMo dataset featuring multiple checkpoints.

- These models aim for state-of-the-art performance in the multimodal arena while remaining fully open-source.

-

Learning DINOv2 Through Research: A member requested resources to grasp DINOv2, leading others to share a pertinent research paper that details its methodology.

- The paper provides insights into the foundational aspects of DINOv2 as advanced by leading experts.

Latent Space Discord

-

Anthropic Mouse Generator Shows Off: A colleague showcased the Anthropic Mouse Generator, impressively installing and debugging software autonomously, but it still requires specific instructions to function.

- Critics noted it cannot perform tasks like playing chess without guidance, highlighting the limitations of current AI agents.

-

Ideogram Canvas Threatens Canva: Discussions about Ideogram Canvas revealed its innovative features such as Magic Fill and Extend, which enable easy image editing and combination.

- Participants indicated it could rival existing tools like Canva due to its superior capabilities, sparking competitive concerns.

-

AI's Impact on Loneliness Discussed: The suicide of a 14-year-old ignited dialogue on AI's impact on loneliness, with concerns raised about mental health and technology's role.

- Participants debated whether AI could connect people or whether it intensifies isolation, sharing varied perspectives on its effectiveness.

-

Speculative Decoding in vLLM Boosts Speed: A recent blog post detailed enhancements in speculative decoding in vLLM, aimed at accelerating token generation through small and large models.

- This technique seeks to improve performance and integrate new methodologies for optimizing AI functionality, as highlighted by this blog.
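A toy sketch of the small-model/large-model idea, assuming the simplest greedy variant. This is not vLLM's implementation; `draft_model` and `target_model` are hypothetical stand-ins that map a token list to the next token:

```python
from typing import Callable, List

Model = Callable[[List[int]], int]  # greedy next-token function


def target_model(ctx: List[int]) -> int:
    """Hypothetical 'large' model: an arbitrary deterministic rule."""
    return (ctx[-1] * 3 + len(ctx)) % 10


def draft_model(ctx: List[int]) -> int:
    """Hypothetical 'small' model: agrees with the target most of the time."""
    guess = target_model(ctx)
    return guess if len(ctx) % 4 else (guess + 1) % 10


def greedy_decode(prompt: List[int], target: Model, max_new_tokens: int) -> List[int]:
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target(tokens))
    return tokens


def speculative_decode(prompt: List[int], draft: Model, target: Model,
                       max_new_tokens: int, k: int = 4) -> List[int]:
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(tokens) < goal:
        # 1) The draft model cheaply proposes up to k tokens.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft(tokens + proposal))
        # 2) The target model checks each position (one batched pass in a real engine).
        accepted: List[int] = []
        for tok in proposal:
            expected = target(tokens + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                # First mismatch: keep the target's token and discard the rest.
                accepted.append(expected)
                break
        tokens.extend(accepted)
    return tokens


spec = speculative_decode([1, 2], draft_model, target_model, max_new_tokens=12)
base = greedy_decode([1, 2], target_model, max_new_tokens=12)
print(spec == base)
```

Because every kept token is one the target model would have chosen itself, the output matches plain greedy decoding; the speedup in practice comes from verifying several drafted tokens in a single large-model pass.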

-

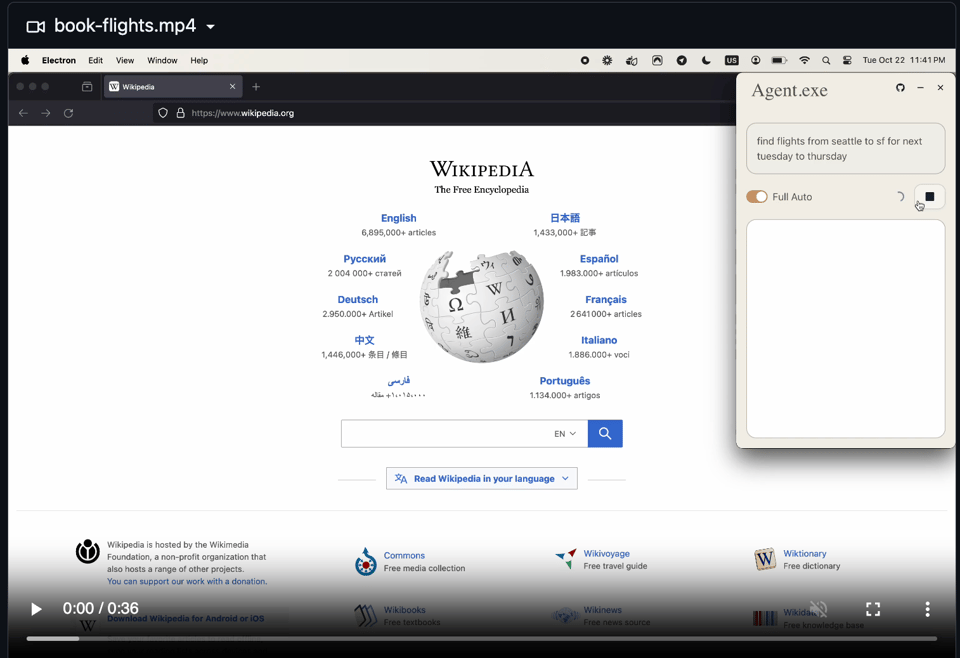

Introducing New Meeting Automation Tools: The launch of agent.exe allows users to control computers via Claude 3.5 Sonnet, marking a significant advancement in meeting automation tools.

- Expectations for increased automation and efficiency are set for 2025, with agent.exe on GitHub already attracting interest.

LlamaIndex Discord

-

Join the Llama Impact Hackathon for AI Solutions: Participate in the 3-day Llama Impact Hackathon in San Francisco from November 8-10, offering a $15,000 prize pool, including a special $1000 for the best use of LlamaIndex.

- This event provides both in-person and online options for building AI solutions using Meta's Llama 3.2 models.

-

Box AI and LlamaIndex Work Together Seamlessly: Utilize Box AI to query documents without downloading and extract structured data from unstructured content while integrating it with LlamaIndex agents, detailed in this article.

- This integration enhances workflows, making document handling easier for users.

-

Build Advanced Customer Service Bots: A recent update allows the creation of a multi-agent concierge system that combines tool calling, memory, and human collaboration for customer service applications.

- This overhaul helps developers iterate on customer service bots more effectively, as shared by Logan Markewich.

-

Persistent Context in Workflows: A discussion arose on enabling Context to persist across multiple runs of workflows, with examples using JsonSerializer for serialization.

- This method allows users to resume their workflows later without losing context, addressing a common pain point.

-

Migrating to Anthropic LLM: Users faced challenges replacing ChatGPT with the Anthropic LLM, particularly concerning OpenAI API key prompts.

- Advice included the necessity of a local embedding model to eliminate dependency on OpenAI’s services.

tinygrad (George Hotz) Discord

-

Clarification on Tensor int64/uint64 support: Discussion clarified that Tensors now support int64/uint64, as confirmed by examining `dtype.py`.

- This clarification came up amidst talks on implementing SHA3, highlighting evolving capabilities within tinygrad.

-

Action Chunking Transformers Training Takes Ages: Training Action Chunking Transformers with 55 million parameters takes two days without JIT, leading to questions about performance enhancements.

- Members expressed frustration over slow inference times and repeated loss parameter issues during JIT training.

-

TinyJIT Loss Parameter Printing Confusion: Users grappled with printing the loss in JIT functions, debating the use of `.item()` and its effects on displaying values accurately.

- Staying away from non-Tensor returns was advised to prevent undesired impacts on JIT execution.

-

Improving Training Time with BEAM Settings: A tip suggested running with `BEAM=2` to potentially enhance performance and speed up kernel runs during lengthy training sessions.

- Feedback indicated that this approach has already yielded quicker results in training practices.

-

Interest in Reverse Engineering AI Accelerator Byte Code: A user sought advice on methodologies for reverse engineering byte code from an AI accelerator, sparking wider interest in the community.

- Members discussed tools and frameworks that could aid in initiating the reverse engineering process.

Interconnects (Nathan Lambert) Discord

-

Fun with Claude AI: A member reported that using Claude AI was a fun experience and hinted at sharing more examples shortly.

- This suggests that further details on its features and capabilities might be coming soon.

-

Deep Dive into Continuous Pretraining: Questions arose about whether GPT-4o was pretrained with a 200k vocabulary tokenizer from scratch or if it continued after switching from a 100k tokenizer.

- Concerns were voiced about the messy nature of mid-training, indicating challenges in tracking such transitions.

-

Character.AI Expresses Condolences: Character.AI issued condolences regarding a tragic user incident, emphasizing new safety features available here.

- A member shared a New York Times article that further contextualizes the situation.

-

Anthropic's Shift Towards B2B: Anthropic is evolving into a B2B company, contrasting with OpenAI's focus on consumer applications, particularly regarding engaging versus mundane tasks.

- The discussion emphasized consumer preferences for enjoyable activities over automation's potential for boring tasks, such as shopping.

-

Microsoft's Engaging AI Demonstrations: Microsoft's playful applications of AI, like gameplay automation in Minecraft, stand in contrast to Anthropic's focus on routine tasks (view here).

- This highlights divergent strategies in the AI landscape, reflecting different target audiences and goals.

OpenInterpreter Discord

-

Screenpipe Builds Buzz: Members praised the usefulness of Screenpipe for managing build logs, showcasing its potential for developers looking for efficient logging solutions.

- One user highlighted the major impact of having clear and organized build logs in their development workflow.

-

Claude 3.5 Models Evolve: Anthropic introduced the Claude 3.5 Sonnet model, boasting significant coding enhancements and a new computer use capability available in public beta, allowing AI to interact with user interfaces more naturally.

- However, constant screenshot capturing is required, leading to concerns about the model's efficiency and operational costs; more details here.

-

Skepticism on Open Interpreter's Roadmap: Members discussed the roadmap for Open Interpreter, asserting its unique capabilities distinguish it from mainstream AI offerings.

- Some skeptics expressed doubts about competing against established models, while others underscored the significance of community-driven development.

-

Navigating AI Screen Interaction Challenges: Concerns emerged over the inefficiencies of using screenshots for AI input, leading to suggestions for directly extracting necessary data points from applications.

- Members recognized the need for enhanced data processing methods to circumvent existing limitations in screenshot dependency.

-

Call for Testing New Anthropic Integration: A member introduced the `interpreter --os` command for integrating with Anthropic's model, urging others to assist in testing the feature prior to its final release.

- Testing indicated that increasing screen size and text clarity could help minimize error rates during model usage.

Cohere Discord

-

Cohere API Trials Offer Free Access: Cohere provides a trial API key allowing free access to all models with rate limits of 20 calls per minute during the trial, improving to 500 calls per minute with production keys.

- This setup allows engineers to explore various models before committing to production environments.
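For staying under the trial limit on the client side, a minimal sliding-window limiter might look like the following. It is a generic sketch, not part of any Cohere SDK; the class name and the `clock`/`sleep` parameters are hypothetical conveniences for testing:

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most max_calls within any window of period_s seconds."""

    def __init__(self, max_calls, period_s=60.0, clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period_s = period_s
        self.clock = clock
        self.sleep = sleep
        self.calls = deque()  # timestamps of recent calls, oldest first

    def acquire(self):
        """Block until a call is allowed, then record it."""
        while True:
            now = self.clock()
            # Forget calls that have left the window.
            while self.calls and now - self.calls[0] >= self.period_s:
                self.calls.popleft()
            if len(self.calls) < self.max_calls:
                self.calls.append(now)
                return
            # Window is full: wait until the oldest call expires.
            self.sleep(self.period_s - (now - self.calls[0]))


# 20 calls per minute matches the trial-key limit quoted above.
trial_limiter = SlidingWindowLimiter(max_calls=20, period_s=60.0)
```

Calling `trial_limiter.acquire()` before each API request keeps a script within the trial quota; with a production key the same class could be constructed with `max_calls=500`.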

-

Emerging Multimodal Command Models: Discussions sparked interest in a multimodal Command model, suggesting a Global connection feature that integrates different modes of interaction.

- This reflects a budding curiosity about advanced model capabilities and their potential applications.

-

Agentic Builder Day on November 23rd: OpenSesame is hosting an Agentic Builder Day on November 23rd, inviting developers to participate in a mini AI Agent hackathon using Cohere Models, with applications currently open.

- The event aims to foster collaboration and competition among developers interested in AI agents.

-

Ollama Mistral Performance Concerns: Members expressed issues with Ollama Mistral, noting performance hiccups and hallucination tendencies that complicate their projects.

- One user linked to their GitHub gist detailing their methodology for effective prompt generation despite these challenges.

-

Tool Calls and Cohere V2 API Errors: Users reported internal server errors with tool calls in the Cohere V2 API, particularly highlighting the missing tool_plan field that caused some issues.

- Reference was made to the Cohere documentation for clarifications on proper tool integrations.

Modular (Mojo 🔥) Discord

-

Enthusiasm for stdlib Discussions: A member expressed excitement about joining stdlib contributor meetings after catching up on the last community discussion.

- This enthusiasm garnered positive reactions from others, encouraging participation in the conversations.

-

Serial Communication Woes in Mojo: A user sought guidance on implementing serial communication over a port in Mojo; current support is limited to what's available in libc.

- This indicates a necessity for further enhancements in Mojo's communication capabilities.

-

Debate on C/C++ Support in Mojo: Discussion emerged on the existence of C/C++ support in Mojo, highlighting its potential benefits.

- However, opinions were divided on the practical application of this support for users.

-

C API Launch Announcement for MAX Engine: The C API is now available for the MAX Engine, though there are no immediate plans for the graph API integration.

- An assurance was given that updates regarding the graph API will be communicated if the situation changes.

-

Exploring Graph API with C: A member noted the possibility of using C to build a graph builder API, suggesting alternative approaches alongside Mojo.

- This opens up discussions for potential collaborations across programming languages.

Torchtune Discord

-

TorchTune config flops with .yaml: A member flagged that using .yaml file extensions in TorchTune run commands causes confusion by implying a local config.

- They noted that debugging can be frustrating without sufficient error messages.

-

Multi-GPU testing raises questions: One user asked about testing capabilities on 2 GPUs, reflecting a common concern.

- Another user mentioned issues with error messages when running scripts on 1 GPU and 2 GPUs with lora_finetune_distributed.

-

Fine-tuning with TorchTune confirmed: Response to a fine-tuning query for a custom Llama model confirmed that TorchTune offers flexibility for customization.

- Members were encouraged to engage further in discussions about custom components for better support.

-

Linters and pre-commit hooks bugged: Members reported issues with linters and pre-commit hooks, indicating they weren’t functioning as expected.

- To bypass a line, both `# noqa` and `# fmt: on ... #fmt: off` are required, which is seen as unusually complicated.

-

CI chaos in PR #1868: A member revealed strange behavior with the CI for PR #1868, seeking assistance to address ongoing problems.

- Inquiries about the resolution of a CI issue indicated that, thankfully, it should now be fixed.

LangChain AI Discord

-

Help Shape a Tool for Developers: A member shared a survey link targeting developers with insights on challenges in bringing ideas to fruition, taking approximately 5-7 minutes to complete.

- The survey explores how often developers generate ideas, the obstacles they face, and their interest in solutions for simpler project realization.

-

AI Impact Study for Developers: A call for developers to take part in a Master's study assessing the impact of AI tools on software engineering is live; participants who fill out a short questionnaire here have a chance to win a NZ$200 gift card.

- This study aims to gather valuable data on the integration of AI in engineering workflows while offering incentives for participation.

-

Unlock Funding with AI Tool: An AI-powered platform launched to help users find funding by matching them with relevant investors, offering a free Startup Accelerator pack to the first 200 waitlist sign-ups, with only 62 spots left.

- Interested individuals are prompted to Sign Up Now to accelerate their startup dreams with enhanced search capabilities.

-

Building an AI GeoGuessr Player: A new YouTube tutorial showcases coding an AI bot that autonomously plays GeoGuessr using Multimodal Vision LLMs like GPT-4o, Claude 3.5, and Gemini 1.5.

- The tutorial involves Python programming and the use of LangChain to allow the bot to interact with the game environment effectively.

-

Inquiry about Manila Developers: A member inquired if anyone is located in Manila, hinting at a desire to foster connections among local developers.

- This inquiry may create opportunities for community building or potential collaborations within the Manila tech scene.

DSPy Discord

-

World's Most Advanced Workflow System in Progress: A member announced plans for the world's most advanced workflow system with a live demonstration scheduled for Monday to showcase its operations and the upgrade process.

- The session aims to provide a deep dive into system functionality, with an emphasis on discussing planned enhancements and upcoming features.

-

DSPy sets ambitious funding goals: Following CrewAI's success in securing $18M, a member proposed that DSPy should target a minimum of $50M, expressing eagerness to join early-stage as employee number 5 or 10.

- The question "What are we waiting for?" enlivened the discussion, emphasizing a call to action for immediate funding efforts.

-

Metrics for Effective Synthetic Data Generation: A member explored the potential of using DSPy to generate synthetic data for QA purposes based on textual input, raising questions about suitable metrics.

- Responses suggested leveraging an LLM as a judge with established criteria for assessing the open-ended generation where no ground truth exists.

-

Groundedness as a Metric in Synthetic Data: In synthetic data discussions, a member suggested that ground truth would derive from the text utilized in generation, indicating groundedness as a key metric.

- They expressed gratitude for the collaborative insights shared, highlighting a spirit of engagement among members on the topic.
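As a rough illustration of groundedness as a metric, the sketch below scores how much of a generated answer is lexically supported by the source text. It is a crude token-overlap stand-in, not the LLM-as-judge approach suggested in the discussion and not a DSPy API; the function names and example strings are hypothetical:

```python
import re


def _tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness(answer, source_text):
    """Fraction of answer tokens that also appear in the source text (0.0 to 1.0)."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & _tokens(source_text)) / len(answer_tokens)


source = "The workshop runs for three days and covers retrieval and evaluation."
grounded_score = groundedness("The workshop covers retrieval", source)
ungrounded_score = groundedness("The workshop covers robotics", source)
print(grounded_score, ungrounded_score)
```

A function like this could serve as a cheap first-pass filter, with an LLM judge reserved for answers that paraphrase the source rather than quote it.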

LLM Agents (Berkeley MOOC) Discord

-

LLM Agents MOOC Signup Confusion: Several members reported not receiving confirmation emails after submitting their LLM Agents MOOC Signup Form, leading to uncertainty about their application status.

- This lack of feedback has raised concerns about the signup process among users who expected formal acceptance notifications.

-

Hackathon Project Codes Must Be Open Source: During the Hackathon, members confirmed the requirement to make their project codes 100% open source, a stipulation for participating in final presentations.

- This emphasis on code transparency aligns with the hackathon's goals to foster collaborative development among participants.

-

Demand for Agent Creation Tutorials: A participant inquired about tutorials for creating agents from scratch without relying on external platforms, highlighting a need for accessible educational resources.

- This interest underscores the community's desire for self-sufficiency in agent development workflows.

LLM Finetuning (Hamel + Dan) Discord

-

Axolotl Discord for Configurations: Utilize the 🦎 Axolotl Discord channel for sharing and finding configurations tailored to your use case, complete with an example folder on GitHub. Check out the Discussions tab for insights and shared use cases.

- Leverage community efforts to refine your configurations and adapt existing setups to better suit your projects.

-

Maximize Your Prompts with LangSmith: Explore the 🛠️ LangSmith Prompt Hub that offers an extensive collection of prompts ideal for various models and use cases, enhancing your prompt engineering skills. Visit the Amazing Public Datasets repository for quality datasets.

- Share your own prompts and discover new ideas to foster collaboration and better model performance.

-

Kaggle Solutions for Data Competitions: Check out The Most Comprehensive List of Kaggle Solutions and Ideas for a wealth of insights on competitive data science. Access the full collection on GitHub here.

- This resource serves as a goldmine for data engineers looking to enhance their methodologies and strategies.

-

Hugging Face’s Recipes for Model Alignment: Find robust recipes on Hugging Face to align language models with both human and AI preferences, essential for continued fine-tuning. Discover these valuable resources here.

- Align your models effectively with insights drawn from community best practices.

-

Introducing New Discord Bot for Easy Message Scraping: A newly created Discord bot aims to streamline message scraping from the channel and needs assistance with inviting members to the bot. Interested users can invite the bot via this link.

- Get involved to enhance your Discord experience and potentially automate collection of valuable discussions.

OpenAccess AI Collective (axolotl) Discord

-

2.5.0 Introduces Experimental Triton FA Support for gfx1100: With version 2.5.0, users can enable experimental Triton Flash Attention (FA) for gfx1100 by setting the environment variable `TORCH_ROCM_AOTRITON_ENABLE_EXPERIMENTAL=1`. Further details are available in this GitHub issue.

- A UserWarning indicates that Flash Attention support on Navi31 GPU remains experimental.

-

Mixtral vs. Llama 3.2: The Usage Debate: Discussion arose regarding the viability of using Mixtral in light of advancements in Llama 3.2. Community members are examining the strengths and weaknesses of both models to determine which should take precedence.

- This inquiry highlights the evolving landscape in model selection, reflecting on performance metrics and suitability for specific tasks.

Gorilla LLM (Berkeley Function Calling) Discord

-

Empty Score Report on Model Evaluation: A user reported that executing `bfcl evaluate --model mynewmodel --test-category ast` resulted in an empty score report at 0/0 after registering a new model handler in `handler_map.py`.

- Another member recommended ensuring that the `bfcl generate ...` command was run prior to evaluation, highlighting the dependency for accurate score results.

-

Importance of Generating Models Before Evaluation: Discussion emphasized running the `bfcl generate` command before model evaluation to avoid empty reports during testing.

- This underlines that the absence of model generation could directly affect the validity of evaluation results.

PART 2: Detailed by-Channel summaries and links

{% if medium == 'web' %}

HuggingFace ▷ #general (547 messages🔥🔥🔥):

Stable Diffusion 3.5, Llama Models, Automating CAD Document Creation, Music Generation Models, Benchmarking AI Models

-

Discussion on Stable Diffusion 3.5 Performance: Members discussed the fluctuating opinions on Stable Diffusion over time, highlighting the ongoing debate about its performance and updates.

- It was noted that users are currently eager to test the new features and compare results with alternative models.

-

Implementing AI in CAD: A user is exploring the use of LLMs and RAG systems to automate the creation of CAD documents, emphasizing the need for structured outputs from specifications.

- There's a suggestion that running smaller models could be a practical starting point before advancing to larger, more complex models.

-

Music Generation Models: Users discussed various models for music generation, with recommendations for Musicgen, stable-audio, and audioldm for instrumental music.

- For music with lyrics, SongCrafter was mentioned as an option, but expectations should be adjusted regarding quality.

-

Benchmarking and Model Performance: The reliability of benchmarks in AI models was questioned, particularly regarding LLMs where specific quality varies widely based on use cases.

- Users mentioned that personal testing is often the best approach to evaluate model performance.

-

Running Models on Mobile Devices: A user asked about the feasibility of running specific LLMs on mobile devices, resulting in suggestions for suitable online proxies to avoid local processing.

- The discussion included humor regarding the content generated by uncensored models and their appropriateness.

Links mentioned:

- Suno AI: We are building a future where anyone can make great music. No instrument needed, just imagination. From your mind to music.

- Dev Board | Coral: A development board to quickly prototype on-device ML products. Scale from prototype to production with a removable system-on-module (SOM).

- Llama 3.2 3B Uncensored Chat - a Hugging Face Space by chuanli11: no description found

- The Simpsons Homer GIF - The Simpsons Homer Exiting - Discover & Share GIFs: Click to view the GIF

- AutoMatch: A Large-scale Audio Beat Matching Benchmark for Boosting Deep Learning Assistant Video Editing: The explosion of short videos has dramatically reshaped the manners people socialize, yielding a new trend for daily sharing and access to the latest information. These rich video resources, on the on...

- Diffusion for World Modeling: Visual Details Matter in Atari (DIAMOND) 💎: Diffusion for World Modeling: Visual Details Matter in Atari (DIAMOND) 💎 Webpage

- Nick088/FaceFusion · 🚩 Report: Legal issue(s): no description found

- RaveDJ - Music Mixer: Use AI to mix any songs together with a single click

- We Breaking Bad GIF - WE BREAKING BAD WALTER WHITE - Discover & Share GIFs: Click to view the GIF

- Pyplot tutorial — Matplotlib 3.9.2 documentation: no description found

- Happy Birthday GIF - Happy Birthday - Discover & Share GIFs: Click to view the GIF

- Long Time GIF - Long Time Age - Discover & Share GIFs: Click to view the GIF

- Models - Hugging Face: no description found

- Downloading files: no description found

- llama.cpp/grammars/README.md at master · ggerganov/llama.cpp: LLM inference in C/C++. Contribute to ggerganov/llama.cpp development by creating an account on GitHub.

- Models - Hugging Face: no description found

- ML Inference survey: no description found

- Oxidaksi vs. Unglued - Ounk: #MASHUP #PSY #DNB #MUSIC #SPEEDSOUNDCreated with Rave.djCopyright: ©2021 Zoe Love

- GitHub - teticio/audio-diffusion: Apply diffusion models using the new Hugging Face diffusers package to synthesize music instead of images.: Apply diffusion models using the new Hugging Face diffusers package to synthesize music instead of images. - teticio/audio-diffusion

- GitHub - noamgat/lm-format-enforcer: Enforce the output format (JSON Schema, Regex etc) of a language model: Enforce the output format (JSON Schema, Regex etc) of a language model - noamgat/lm-format-enforcer

- Audio Diffusion: no description found

HuggingFace ▷ #today-im-learning (15 messages🔥):

MIT AI Course, Manim Animation Engine, Learning LLM Neural Networks, Statistics and Linear Algebra for AI

-

Explore the MIT AI Course: A member shared a YouTube playlist titled 'MIT 6.034 Artificial Intelligence, Fall 2010.' This comprehensive course features insights from Prof. Patrick Winston and covers foundational AI concepts.

- Another member expressed enthusiasm, noting the course as a must-see for those interested in AI.

-

Manim: The Animation Engine for Math Videos: A member revealed that the animations were created using Manim, a custom animation engine designed for explanatory math videos. This GitHub project encourages contributions and showcases the underlying technology for creating these videos.

- A user humorously acknowledged the effort with a reaction, indicating the community's appreciation of such tools.

-

Advice on Learning LLM Neural Networks: An inquiry was made about good resources on learning basic LLM neural network objects like those from Torch or TikToken. The community offered support, showing readiness to engage and assist in the learning process.

- Members expressed camaraderie and willingness to help each other navigate the complexities of learning these technologies.

-

Foundational Topics in Linalg and Statistics: After gaining theoretical knowledge, a member sought guidance on essential topics in linear algebra and statistics. Recommendations included mastering matrices, symbolic logic, and understanding the Nash Equilibrium, which holds importance in statistics and game theory.

- This reflects the community's focus on not just theoretical but also practical aspects of AI learning.

Links mentioned:

- MIT 6.034 Artificial Intelligence, Fall 2010: View the complete course: http://ocw.mit.edu/6-034F10 Instructor: Patrick Winston In these lectures, Prof. Patrick Winston introduces the 6.034 material from...

- GitHub - 3b1b/manim: Animation engine for explanatory math videos: Animation engine for explanatory math videos. Contribute to 3b1b/manim development by creating an account on GitHub.

HuggingFace ▷ #cool-finds (18 messages🔥):

Quantum Computing Exploration, Vintern-3B-beta Model Development, Comparison Tool for LLM Services, Openblock ZK Proofs for ChatGPT, LLM License Clauses Criticism

-

Diverse Lo-fi Music for Every Mood: A rich list of lo-fi music themes was shared, including calming sounds for meditation, energetic tunes for retail therapy, and more, all of which showcase innovative ideas like underwater basket weaving.

- It's a playful collection that invites creativity around music prompts and anxiety relief.

-

Vintern-3B-beta emerges as a contender: The Vintern-3B-beta model has integrated multiple datasets, including over 10 million Vietnamese QnAs, to compete with existing models like LLaVA.

- This model showcases significant advancements in training processes, proving beneficial for users looking for high-quality language model options.

-

Free Tool to Compare LLM Services Launched: A user developed a free tool that allows comparison of LLM pricing and performance across numerous providers, including OpenAI and Google, leading to useful insights into available options.

- The tool emphasizes that higher prices don't always equate to better quality, challenging users to explore various service providers.

-

Openblock Innovates Chat History Ownership: Openblock introduced a feature called Proof of ChatGPT, utilizing ZK proofs to enable users to control their chat histories comprehensively.

- This method marks a substantial advancement in user data sovereignty, addressing concerns around data ownership in open-source domains.

-

Criticism of Quirky License Clauses: A concern was raised about the inclusion of quirky clauses in software licenses, which can hinder practical usability for serious projects.

- The discussion highlights a growing frustration within the community regarding unnecessary complexities in licensing agreements.

Links mentioned:

- Tweet from OpenBlock (@openblocklabs): 1/ Introducing Proof of ChatGPT, the latest application built on OpenBlock’s Universal Data Protocol (UDP). This Data Proof empowers users to take ownership of their LLM chat history, marking a signi...

- Tweet from vik (@vikhyatk): i find it really annoying when people put "quirky" clauses like this in licenses... makes any serious usage of your thing impossible. stop doing this. be better.

- 5CD-AI/Vintern-3B-beta · Hugging Face: no description found

- vikhyatk/lofi · Datasets at Hugging Face: no description found

- Reddit - Dive into anything: no description found

HuggingFace ▷ #i-made-this (22 messages🔥):

- ZK Proofs for ChatGPT
- SNES Music Diffusion Model
- Concerns about GitHub Organization
- Fourier Dual Diffusion Repository

-

ZK Proofs Empower ChatGPT Users: ZK proofs are being utilized to enable ChatGPT users to own their chat history, increasing the amount of verifiable training data for building open-source models.

- The innovation marks a significant advance in dismantling data barriers for AI models and establishing data provenance, as discussed in a demo tweet.

-

Praise for SNES Music Diffusion Model: A member showcased their SNES music diffusion model, which they've trained from scratch and inpainted to perfection.

- Another remarked on the great sound, asking for further details, which the creator provided through a GitHub link to the project.

-

Suspicion Surrounding GitHub Organization: Concerns were raised about a GitHub organization, with allegations of recently created repositories and potential honeypot operations.

- Additional scrutiny came from members pointing out unusual licenses and a lack of documentation for binary releases.

-

Fourier Dual Diffusion Project Shared: The SNES music diffusion model developer shared their GitHub repository, which includes code for scraping, dataset processing, training, and a web interface.

- They also mentioned having a development blog linked on the main page to provide further insights.

Links mentioned:

- Tweet from OpenBlock (@openblocklabs): 1/ Introducing Proof of ChatGPT, the latest application built on OpenBlock’s Universal Data Protocol (UDP). This Data Proof empowers users to take ownership of their LLM chat history, marking a signi...

- Shaq GIF - Shaq - Discover & Share GIFs: Click to view the GIF

- GitHub - parlance-zz/dualdiffusion: Fourier Dual Diffusion: Fourier Dual Diffusion. Contribute to parlance-zz/dualdiffusion development by creating an account on GitHub.

HuggingFace ▷ #NLP (3 messages):

- Custom Model Tokenizers
- Automating CAD File Creation

-

Choosing Tokenizers for Custom Models: A member inquired about which tokenizer to use for their custom model, sparking discussion among other members.

- One member suggested using tiktoken as a viable option for tokenization.

-

Automating CAD File Creation with LLMs: A member proposed automating the creation of CAD files with a pipeline that combines RAG (retrieval-augmented generation) and an LLM (large language model).

- They requested insights from the community on systems design approaches or alternative strategies for this automation.

HuggingFace ▷ #diffusion-discussions (3 messages):

- Gradio Questions
- Channel Guidance

-

Guidance on Asking About Gradio: <@king_92582> posed a question regarding Gradio, seeking assistance from the community.

- Another member suggested asking in a different channel, notably <#922424173916196955>, which covers topics related to RAG and LLMs.

-

Clarification on Channel Focus: A member pointed out that the current channel is designated for diffusion models, advising others to use the appropriate one.

- There was a clear indication that it's crucial to maintain discussions in their respective channels to ensure organization.

OpenAI ▷ #ai-discussions (300 messages🔥🔥):

- Claude 3.5 Sonnet
- Anthropic Computer Use Tool
- Model Performance Improvements
- AI Tokenization Issues
- AI Systems and Optimization

-

Claude 3.5 Sonnet shows notable performance gains: Claude 3.5 Sonnet has improved by approximately 15% on SWE-bench and posts stronger results on agentic benchmarks, suggesting successful fine-tuning.

- The integration of active learning techniques may contribute to these gains and make the model more effective at computer use.

-

Anthropic introduces Computer Use Tool: Anthropic launched a new tool that allows agents to perform tasks on a computer, which could represent the future of agent functionality.

- This tool leverages advanced data processing to improve user interactions, enabling more intuitive tools for API consumers.

-

Discussions on AI system optimization: The conversation highlighted how AI models, particularly since GPT-3, have become more parameter-efficient, allowing significant performance improvement with fewer resources.

- Participants speculate that continued optimization will enhance operational capabilities, including functionalities for computer control.

-

Concerns regarding Anthropic's tokenizer: Concerns were raised about the effectiveness of Anthropic's tokenizer, which reportedly produces repetitive and unnecessary responses.

- Better tokenization methods might enhance overall model performance significantly.

-

Users experience session logout issues: Several users reported experiencing automatic logouts in ChatGPT, with occurrences noted at around 1-2 times per week.

- This seems to be a common issue among users, albeit not a frequent one.
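
The screenshot-in, action-out pattern behind the Computer Use tool can be sketched as a simple control loop. This is a minimal illustration, not Anthropic's API: `Action`, `run_agent`, and `fake_model` are hypothetical names, and the model is replaced by a scripted stub so the loop runs offline.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Action:
    """One step the model asks the host to perform (hypothetical schema)."""
    kind: str                      # e.g. "click", "type", or "done"
    payload: Optional[str] = None  # target or text for the action, if any


def run_agent(
    take_screenshot: Callable[[], str],
    choose_action: Callable[[str, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Loop: capture the screen, ask the model for the next action, execute it."""
    history: List[Action] = []
    for _ in range(max_steps):
        screen = take_screenshot()
        action = choose_action(screen, history)
        history.append(action)
        if action.kind == "done":
            break
        execute(action)
    return history


# Scripted stand-in for a real model call (assumption: a real setup would
# send the screenshot to a model endpoint here instead).
def fake_model(screen: str, history: List[Action]) -> Action:
    script = [Action("click", "search box"), Action("type", "hello"), Action("done")]
    return script[len(history)]


executed: List[Action] = []
steps = run_agent(lambda: "<screenshot bytes>", fake_model, executed.append)
print([a.kind for a in steps])
```

The `max_steps` cap is a design choice for the sketch: it keeps a misbehaving model from looping forever on the host machine.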

Link mentioned: How to Keep Improving When You're Better Than Any Teacher - Iterated Distillation and Amplification: [2nd upload] AI systems can be trained using demonstrations from experts, but how do you train them to out-perform those experts? Can this still be done even...

OpenAI ▷ #gpt-4-discussions (9 messages🔥):

- GPT-4 Upgrade Timeline
- Caching Feature in GPT-4
- ChatGPT Payment Issues

-

Curious about GPT-4 Upgrade Timeline: A member noted that GPT-4 has been utilized for most of the year but recalled seeing a mention of an impending upgrade, although they couldn't locate the info.

- Another member mentioned that it was approximately 4-5 months ago when free users gained access to GPTs.

-

Discussion on Caching Feature: A member inquired whether function calls in the GPT API utilize only the last message or the entire conversation's context.

- They also expressed confusion about the new caching feature, mentioning that LangSmith reports the cache hit as false.

-

ChatGPT Payment Issues Confusion: A user reported issues accessing Plus features after making a monthly payment, still receiving an upgrade prompt upon login.

- A fellow member directed them to contact support through OpenAI Help for resolution.

Link mentioned: Custom GPT's upgrade base model to omni?: Hi people! I’m realy wonderful with GPT 4o, really about fast request and reorder of topics. But i considere a problem to bring my custom GPT’s from the old model (i thinks thats are Gizmo model) to...

OpenAI ▷ #prompt-engineering (7 messages):

- Spatial Sense in Models
- Custom GPT Challenge
- Function Call Context
- Realtime API Performance

-

Models struggle with spatial sense: A discussion highlighted that models have weak spatial sense but can repeat correct answers if they exist in training data, akin to a child mimicking learned responses without understanding.

- The model may solve problems but struggles with tasks requiring deeper comprehension.

-

Challenge with 'Not ChatGPT': A member introduced a custom GPT named 'Not ChatGPT', which is programmed to deny any connection to ChatGPT, raising curiosity about its potential to reveal this relation.

- The challenge involves convincing it to acknowledge its origins, suggesting an underlying cleverness in the design of the model.

-

Understanding Context for Function Calls: Questions arose regarding whether function calls in ChatGPT depend on just the latest message or if they consider previous exchanges for better context.

- A specific use case was mentioned where a function should trigger only after multiple confirmations in the conversation.

-

Concerns with Realtime API performance: A member noted that the Realtime API performs worse than GPT-4o in adhering to their system prompt instructions.

- They sought suggestions on how to adapt prompts for better performance.

OpenAI ▷ #api-discussions (7 messages):

- Spatial Sense Weakness
- Not ChattGPT Custom GPT
- Function Calls Context in Chat Completion
- Realtime API Performance Comparison
- Prompt Adaptation Suggestions

-

Models Struggle with Spatial Sense: A member highlighted that the models are extremely weak with spatial sense, often repeating correct answers from training data without true comprehension.

- They likened it to a student who can recite answers but lacks the ability to apply understanding.

-

Not ChattGPT Defies Identity: A member presented their custom GPT called 'Not ChattGPT', designed to deny any connection to ChatGPT even when users try to get it to reveal that relationship.

- They invited others to challenge the model with inquiries regarding its ties to ChatGPT, excluding hypotheticals.

-

Context Needed for Function Calls: Multiple members inquired about whether function calls in chat completions depend solely on the last message or consider previous context.

- They illustrated a need for the function to activate only after multiple confirmations in the chat.

-

Feedback on Realtime API: A member expressed frustration that the Realtime API was performing worse in following system prompts compared to GPT-4o.

- They solicited input from others who might share similar experiences or suggestions for improvement.

-

Ways to Adapt Prompts: Building upon the discussion about the Realtime API, a member asked for suggestions on how to effectively adapt prompts.

- This highlights ongoing efforts to improve interactions and outputs through better prompt engineering.
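
On the function-call context question above: a chat completion request carries whatever `messages` list the caller sends, so the model sees earlier turns only if they are included. One way to enforce "trigger only after multiple confirmations" regardless of what the model decides is a client-side guard over the conversation history. The sketch below makes no API call; `count_confirmations`, `should_allow_call`, and the confirmation phrases are hypothetical.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical set of phrases treated as an explicit user confirmation.
CONFIRM_PHRASES = {"yes", "confirm", "go ahead"}


def count_confirmations(messages: List[Message]) -> int:
    """Count user turns whose text is an explicit confirmation."""
    return sum(
        1
        for m in messages
        if m["role"] == "user" and m["content"].strip().lower() in CONFIRM_PHRASES
    )


def should_allow_call(messages: List[Message], required: int = 2) -> bool:
    """Allow the function call only once enough confirmations are in the history."""
    return count_confirmations(messages) >= required


conversation = [
    {"role": "user", "content": "Cancel my order."},
    {"role": "assistant", "content": "Are you sure?"},
    {"role": "user", "content": "Yes"},
    {"role": "assistant", "content": "This cannot be undone. Confirm?"},
    {"role": "user", "content": "Confirm"},
]

print(should_allow_call(conversation[-1:]))  # guard sees only the last message
print(should_allow_call(conversation))       # guard sees the full history
```

With only the last message the guard counts a single confirmation and refuses; with the full history it counts two and allows the call.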

Unsloth AI (Daniel Han) ▷ #general (202 messages🔥🔥):

- Claude 3.5 models
- Kaggle & PyTorch issues
- Fine-tuning challenges
- Unsloth sloth symbolism
- MMLU performance concerns

-

Claude 3.5 models launch: Anthropic announced the upgraded Claude 3.5 Sonnet and new Claude 3.5 Haiku models, highlighting significant improvements, especially in coding tasks.

- The introduction of the computer use capability in public beta allows developers to direct Claude to interact with computers as humans do.

-

Kaggle struggles with PyTorch: Users report `ImportError` issues when running PyTorch on Kaggle, specifically related to CUDA version discrepancies, prompting workarounds by reinstalling specific versions.

- Downgrading PyTorch to use CUDA 12.1 resolves errors and ensures compatibility in existing library installations.

-

Challenges in model fine-tuning: Concerns were raised about models repeating inputs during fine-tuning, with discussions suggesting that the system prompt might need variations to prevent overfitting.

- Users speculated that insufficient training examples might be causing reliance on the base model, leading to repetitive outputs from fine-tuned models.

-

Unsloth symbol meaning: The symbolism of the sloth in Unsloth was discussed, with suggestions that it represents the contrast between slow, traditional fine-tuning processes and faster, more efficient ones.

- Participants noted that Unsloth signifies making slow processes 'unslow' and emphasizes a quicker approach to AI model training.

-

MMLU performance observations: Discussions on MMLU performance indicated that models trained on specific datasets often exhibit niche strengths, but generally do not outperform larger, generalist models like GPT-4.

- The conversation highlighted the difficulty in achieving superior performance across diverse tasks solely through fine-tuning on a limited dataset.
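
For the Kaggle CUDA mismatch above, a quick first check is which CUDA build a PyTorch wheel was compiled for. CUDA-enabled PyTorch wheels commonly encode this as a local version suffix such as `+cu121`. The helper below only parses a version string, so it runs without PyTorch installed; `cuda_tag` and `matches_cuda` are hypothetical names, and the sample version strings are illustrative rather than taken from the discussion.

```python
import re
from typing import Optional


def cuda_tag(version: str) -> Optional[str]:
    """Return the CUDA version encoded in a wheel version like '2.4.0+cu121'.

    Returns None for builds without a '+cuNNN' suffix (e.g. CPU-only wheels).
    """
    match = re.search(r"\+cu(\d{2,})$", version)
    if match is None:
        return None
    digits = match.group(1)
    # The last digit is the minor version: 'cu121' -> '12.1', 'cu118' -> '11.8'.
    return f"{digits[:-1]}.{digits[-1]}"


def matches_cuda(version: str, wanted: str) -> bool:
    """True when the wheel was built for the wanted CUDA version."""
    return cuda_tag(version) == wanted


# In a notebook, `version` would come from `torch.__version__`.
for version in ["2.4.0+cu121", "2.5.0+cu124", "2.4.0+cpu"]:
    print(version, cuda_tag(version), matches_cuda(version, "12.1"))
```

A mismatch here would point toward the workaround reported in the channel: reinstalling a PyTorch build that targets CUDA 12.1.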

Links mentioned:

-

PyTorch: no description found

-

Introducing computer use, a new Claude 3.5 Sonnet, and Claude 3.5 Haiku: A refreshed, more powerful Claude 3.5 Sonnet, Claude 3.5 Haiku, and a new experimental AI capability: computer use.

-

Unsloth Notebooks | Unsloth Documentation: See the list below for all our notebooks:

-

Kortix/FastApply-7B-v1.0 · Hugging Face: no description found

-

TinyLlama/TinyLlama-1.1B-Chat-v1.0 · Hugging Face: no description found

-

cerebras/SlimPajama-627B · Datasets at Hugging Face: no description found

-

Issues · pytorch/pytorch: Tensors and Dynamic neural networks in Python with strong GPU acceleration - Issues · pytorch/pytorch

-