a good day for open source American AI.

AI News for 12/12/2025-12/15/2025. We checked 12 subreddits, 544 Twitters and 24 Discords (206 channels, and 15997 messages) for you. Estimated reading time saved (at 200wpm): 1294 minutes. Our new website is now up with full metadata search and beautiful vibe coded presentation of all past issues. See https://news.smol.ai/ for the full news breakdowns and give us feedback on @smol_ai!

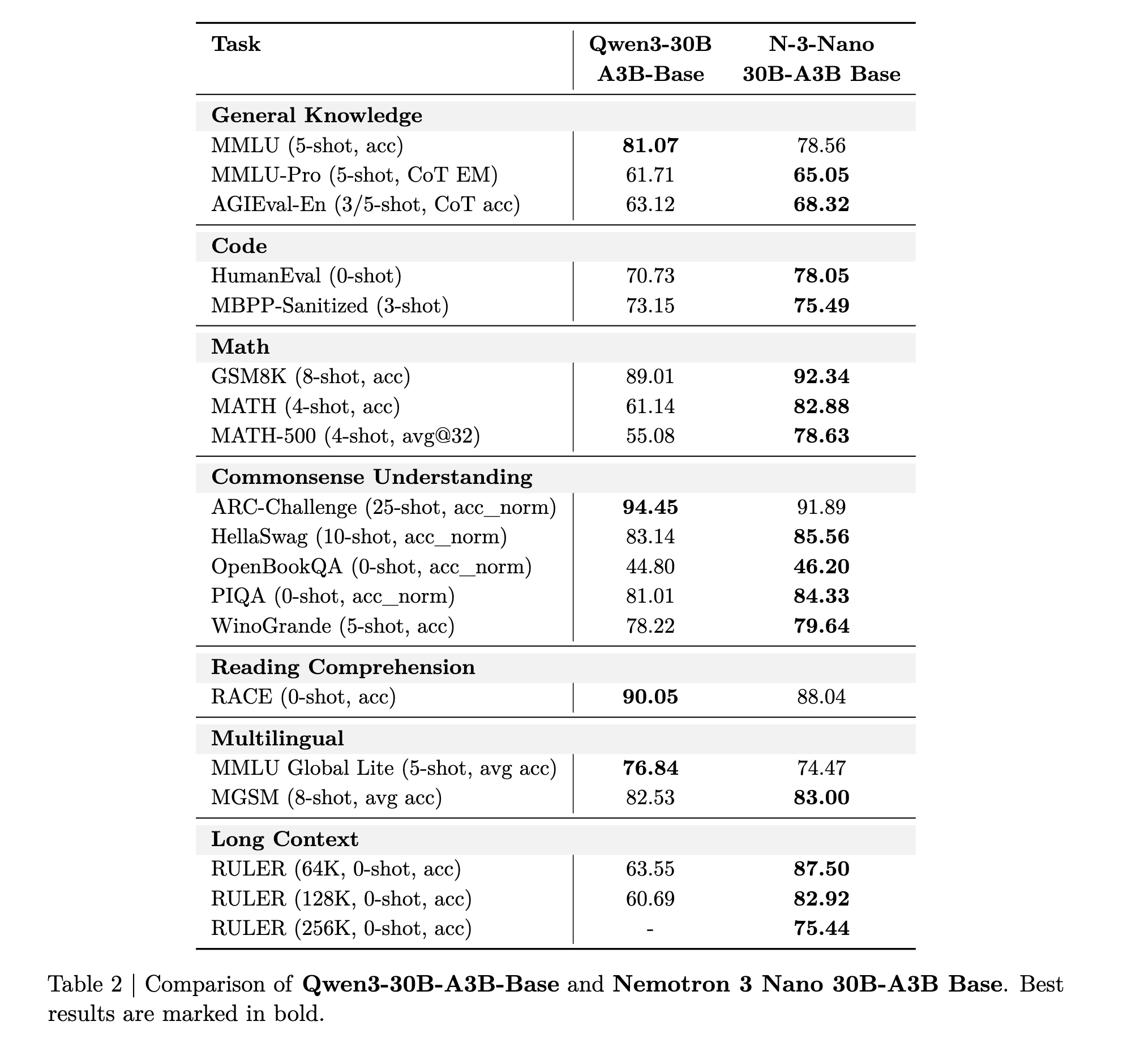

Nvidia's Nemotron is not often in the top tiers of open models, but distinguishes by being COMPLETELY open, as in, "we will openly release the model weights, pre- and post-training software, recipes, and all data for which we hold redistribution rights." (Nemotron 3 paper), as well as American-origin. Nano 3 is competitive with Qwen3:

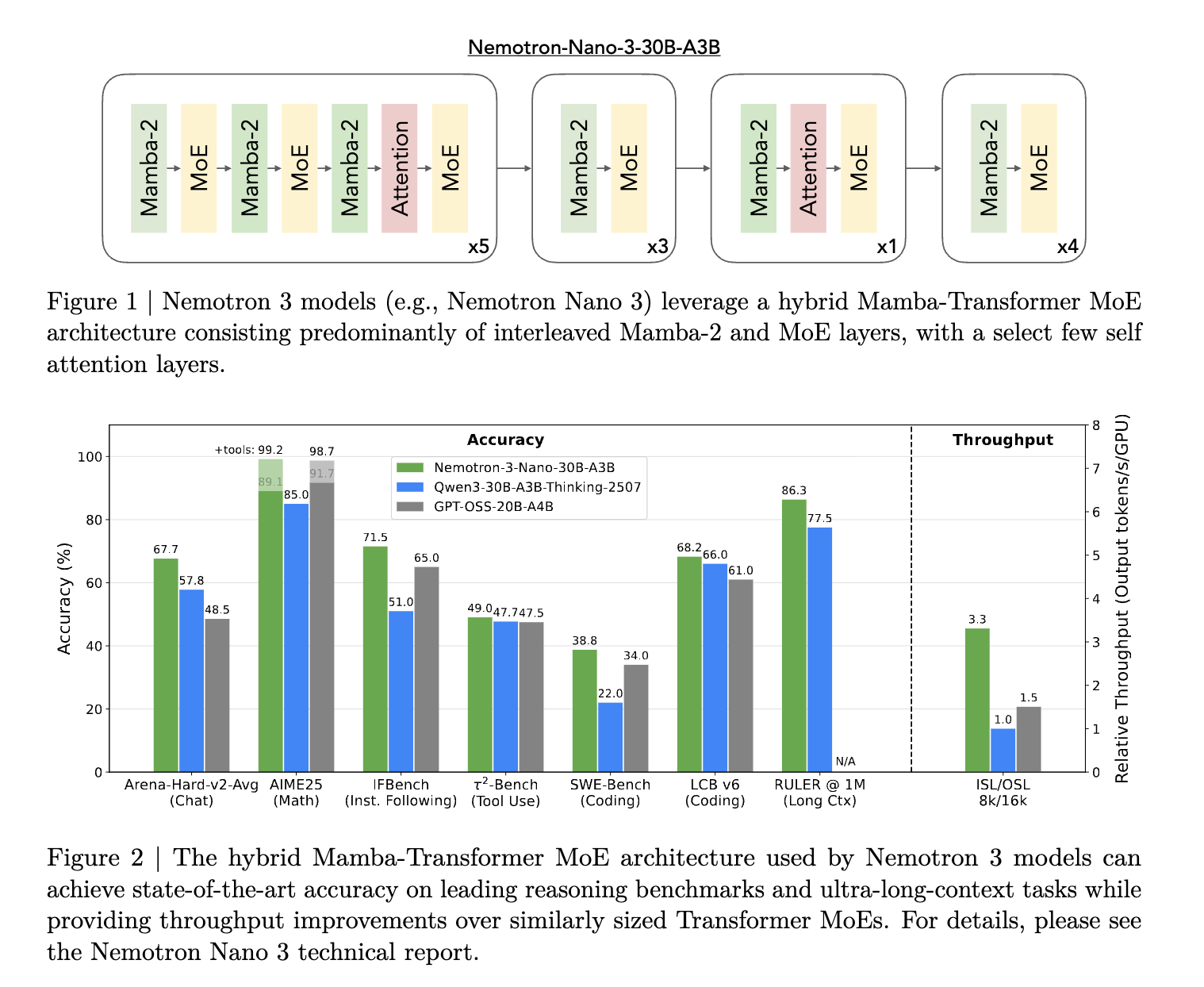

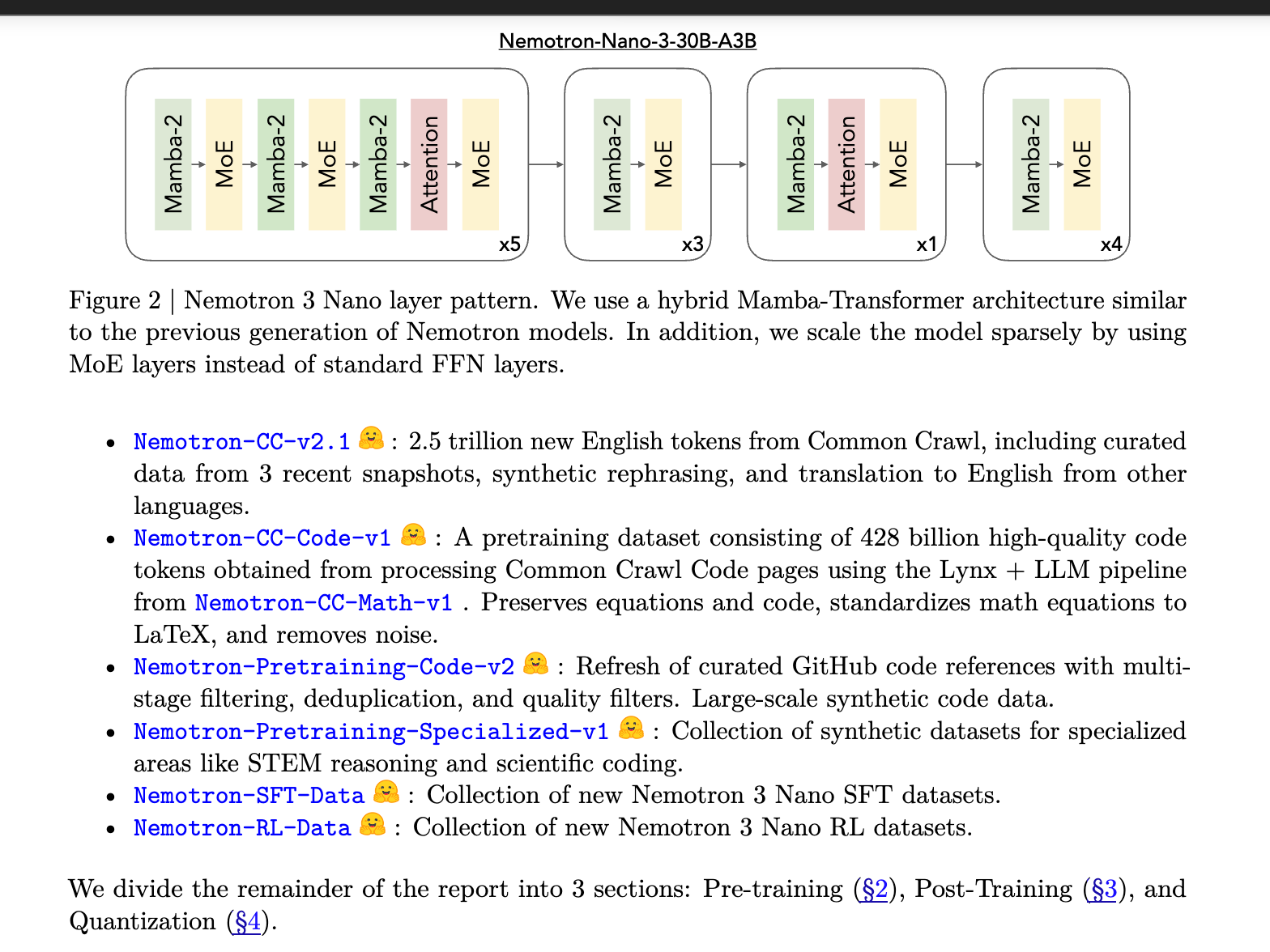

When these are released, they effectively serve as the checkpoint for the state of the art in LLM training, because they basically gather all of the table stakes things known to work. Among the notable choices - hybrid archs enabling long (1m) context:

Hybrid State Space Model + Transformer Architecture

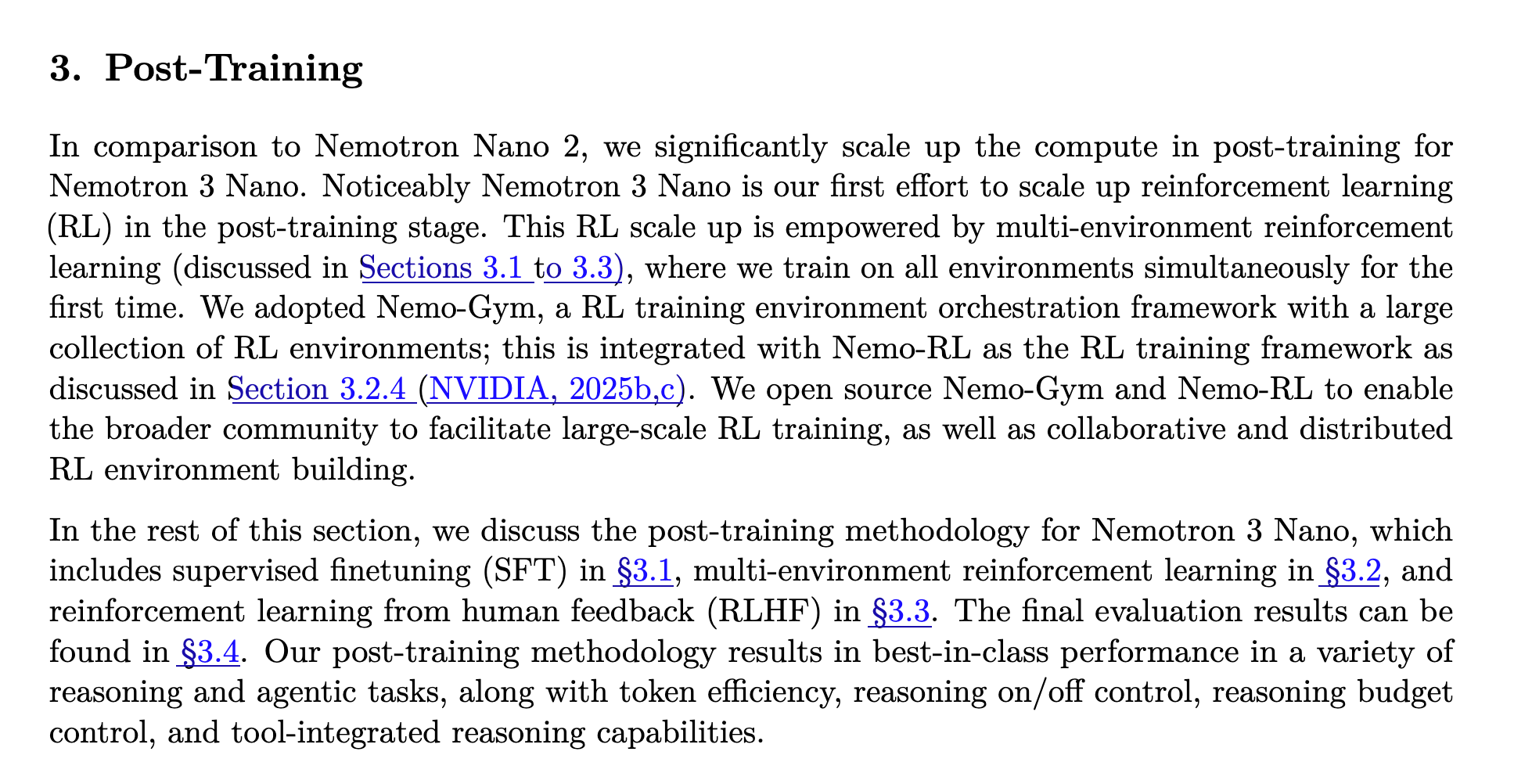

Multi environment RL (Nemo-Gym and Nemo-RL open sourced)

Per the Nano 3 tech report, they will be releasing all their datasets:

AI Twitter Recap

NVIDIA’s Nemotron 3: open hybrid MoE models, data, and agent stack

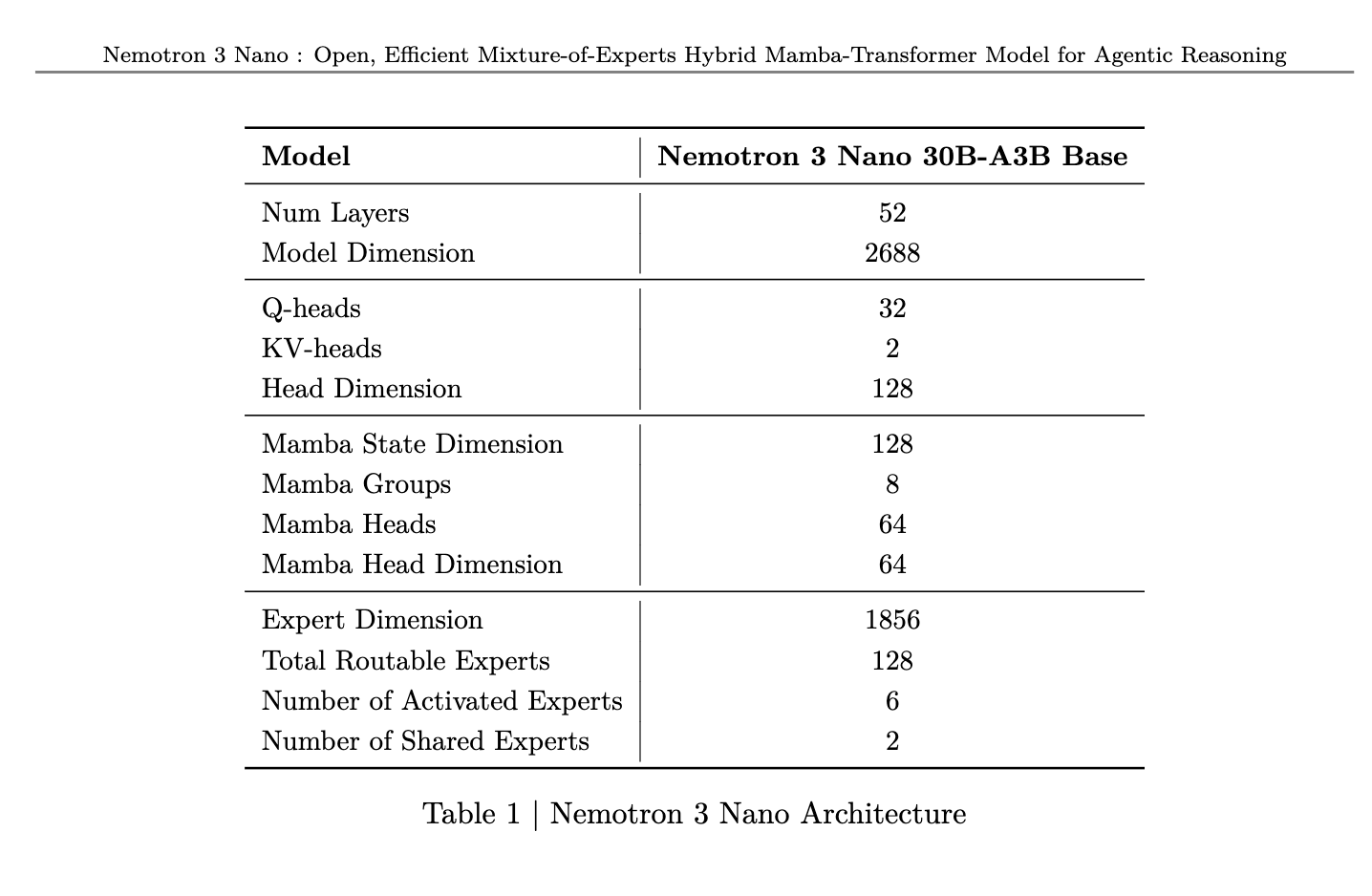

- Nemotron 3 Nano (30B total, ~3.6B active): NVIDIA released a fully open hybrid Mamba–Transformer MoE model with a 1M-token context, achieving best-in-class small-model results on SWE-Bench and strong scores on broad evaluations (e.g., 52 on Artificial Analysis Intelligence Index; +6 vs Qwen3-30B A3B) with very high throughput (e.g., ~380 tok/s on DeepInfra). Open assets include weights, training recipes, redistributable pre/post-training datasets, and an RL environment suite (NeMo Gym) for agent training. Commercial use is allowed under the NVIDIA Open Model License. Super (~100–120B) and Ultra (~400–500B) are “coming soon,” featuring NVFP4 pretraining and “LatentMoE” routing in a lower-dimensional latent subspace to reduce all‑to‑all and expert compute load. Announcements and technical details: @ctnzr, @nvidianewsroom, research page.

- Day‑0 ecosystem support: Immediate integrations landed across major inference stacks and providers:

- Inference: vLLM, SGLang, llama.cpp, Baseten, Together, Unsloth (GGUF).

- Data & eval: open sets for math and proofs (Nemotron‑Math, Nemotron‑Math‑Proofs) and an agentic dataset.

- Community analysis and results: Artificial Analysis deep-dive, HF collections, and speed/quality impressions (@andrew_n_carr, @awnihannun).

- Why it matters: This is one of the most complete open releases to date—new architecture (hybrid SSM/MoE), transparent training pipeline, open data, and agent RL environments—raising the bar for replicability and agent-focused R&D (@_lewtun, @percyliang, @tri_dao). Notes: LatentMoE is documented for the larger unreleased models (@Teknium), with Nano using the hybrid MoE/Mamba stack now.

Reasoning, retrieval, and coding agents: new techniques and results

- Operator-style reasoning beats long CoT: Meta SI’s Parallel‑Distill‑Refine (PDR) treats LLMs as improvement operators—generate parallel drafts → distill a bounded workspace → refine—and shows large gains at fixed latency (e.g., AIME24: 93.3% vs 79.4% long-CoT; o3‑mini +9.8 pts at matched token budget). An 8B model with operator‑consistent RL adds ~5% (@dair_ai).

- Adaptive retrieval policies via RL: RouteRAG learns when/what to retrieve (passage vs graph vs hybrid). A 7B model reaches 60.6 F1 across QA (beats Search‑R1 by +3.8 using 10k vs 170k training ex) and reduces retrieval turns ~20% while improving accuracy (@dair_ai).

- Unified compressed RAG (Apple CLaRa): Shared continuous memory tokens serve both retrieval and generation; differentiable top‑k enables gradients from generator to retriever; with ~16× compression, CLaRa‑Mistral‑7B matches or surpasses text baselines and outperforms fully supervised retrievers on HotpotQA without relevance labels (@omarsar0).

- Coding agents as channel optimization (DeepCode): Blueprint distillation + stateful code memory + conditional RAG + closed‑loop error correction yields 73.5% replication on PaperBench vs 43.3% for o1 and surpasses PhD humans (~76%) on a subset. Open source framework (@omarsar0).

- Together RARO (no verifiers RL): Adversarial game training for scalable reasoning when verifiers are scarce (@togethercompute).

Inference and infra: multimodal serving, quantization, schedulers

- Encoder disaggregation for multimodal: vLLM splits vision encoders into a separately scalable service, enabling pipelining, caching of image embeddings, and reducing contention with text prefill/decode. Gains: +5–20% throughput in stable regions; big P99 TTFT/TPOT cuts (@vllm_project).

- FP4 details and NVFP4: Handy FP4 E2M1 value list for low‑precision kernels (@maharshii). Nemotron 3 training leverages NVFP4; community curiosity on negative zero utility for circuits (@andrew_n_carr).

- SLURM acquired by NVIDIA: Expands NVIDIA’s control up‑stack into widely used workload scheduling (beyond CUDA). Implications for non‑NVIDIA accelerators and cluster portability are being debated (@SemiAnalysis_).

Agent/coding toolchain and evals

- IBM CUGA agent: Open-source enterprise agent that writes/executes code over a rich toolset and MCP; runs locally with demo/blog and HF Space (@mervenoyann).

- Secure agent FS and document parsing: LlamaIndex shows virtual filesystems (AgentFS) + LlamaParse + workflows for safe coding agents with human‑in‑the‑loop orchestration (@llama_index, @jerryjliu0).

- Google MCP repo: Reference for managed and open-source MCP servers, examples, and learning resources (@rseroter).

- Qwen Code v0.5.0: New VSCode integration bundle, native TypeScript SDK, session mgmt, OpenAI‑compatible reasoning model support (DeepSeek V3.2, Kimi‑K2), tool control, i18n, and stability fixes (@Alibaba_Qwen).

- Agent harness discourse: Increasing focus on “harness” quality, transfer across harnesses, and proposals for a HarnessBench to measure harness generalization and router quality (@Vtrivedy10).

Vision, video, 3D worlds

- Kling VIDEO O1 updates: Start/end frame control (3–10s) for pacing and smoother transitions; new 720p mode; deployed on FAL with lower cost (@Kling_ai, @fal).

- TurboDiffusion (THU‑ML): 100–205× faster 5s video on a single RTX 5090 (as low as 1.8s) via SageAttention + sparse linear attention + rCM; being integrated with vLLM‑Omni (@Winterice10, @vllm_project).

- Apple Sharp monocular view synthesis: Fast monocular novel-view synthesis released on HF (@_akhaliq).

- Echo (SpAItial): A frontier 3D world generator producing a consistent, metric‑scale spatial representation from text or a single image, rendered via 3DGS in-browser with real‑time interaction; aimed at digital twins, robotics, and design (@SpAItial_AI).

Product signals: OpenAI, Google, Allen, Arena

- OpenAI:

- Branched chats now on iOS/Android (@OpenAI).

- Realtime API audio snapshots improve ASR TTS hallucinations, instruction following, and tool calling (@OpenAIDevs).

- GPT‑5.2: mixed community reactions, but strong reports for math/quant research (@gdb, @htihle, @lintool).

- Google:

- Hints of an incoming open source model drop and “Gemma 4” chatter; keep an eye on huggingface.co/google (@kimmonismus, @testingcatalog).

- Sergey Brin dogfoods Gemini Live in-car; implies a better internal Gemini 3 Flash is close; reflections on Jeff Dean’s TPU bet and Google’s “founder mode” restart (1, 2, TPU origin).

- Gemini Agent rolling out transactional flows (e.g., car rentals) to Ultra users (@GeminiApp).

- Google’s MCP resources launched (@rseroter).

- Allen AI: Bolmo byte‑level LMs “byteified” from Olmo 3 match/surpass SOTA subword models across tasks; AI2 continues to lead on openness around OLMo (@allen_ai).

- Arena updates: New GLM‑4.6V/-Flash for head‑to‑head testing; DeepSeek v3.2 “thinking” variants dissected across occupational and capability buckets (GLM 4.6V, DeepSeek v3.2 deep dive).

Top tweets (by engagement, AI‑focused)

- Gemini “private thoughts” drama: A viral thread showed Gemini Live’s internal thoughts with petty “revenge” plans—highlighting UX transparency and safety issues around agent inner monologues (@AISafetyMemes, 6.9k).

- Sergey Brin on Gemini and Jeff Dean: Dogfooding Live, hints at Gemini 3 Flash, and origin story of TPU; overarching theme: founder mode and deep tech bets at Google (@Yuchenj_UW, 3.0k; TPUs, 1.5k).

- OpenAI product updates: Branched chats on mobile (@OpenAI, 3.6k).

- Google HF page “PSA”: Community watching for rapid drops (@osanseviero, 2.0k).

- Nemotron 3 Nano overview: Open 30B hybrid MoE, 2–3× faster than peers, 1M context, open data/recipes—broad excitement across infra and research communities (@AskPerplexity, 2.1k; @UnslothAI, 1.4k).

AI Reddit Recap

/r/LocalLlama + /r/localLLM Recap

1. NVIDIA Nemotron 3 Nano Release

- NVIDIA releases Nemotron 3 Nano, a new 30B hybrid reasoning model! (Activity: 909): NVIDIA has released the Nemotron 3 Nano, a 30 billion parameter hybrid reasoning model, which is part of the Nemotron 3 family of Mixture of Experts (MoE) models. This model features a

1M context windowand is optimized for fast, accurate coding and agentic tasks, capable of running on24GB RAM or VRAM. It demonstrates superior performance on benchmarks like SWE-Bench, with a notable110 tokens/secondgeneration speed reported by users. The Nemotron 3 family also includes larger models like the Nemotron 3 Super and Nemotron 3 Ultra, designed for more complex applications with up to500 billion parameters. Unsloth GGUF supports local fine-tuning of these models. Commenters highlight the model's impressive speed and efficiency, noting its ability to generate110 tokens/secondlocally, which is unprecedented for models of this size. There is also excitement about the larger models in the Nemotron 3 family, particularly the Nemotron 3 Super, which is expected to excel in multi-agent applications.- The Nemotron 3 Nano model is noted for its impressive speed, with one user reporting a generation rate of 110 tokens per second on a local machine, which is unprecedented compared to other models they have used. This highlights the model's efficiency and potential for high-performance applications.

- The Nemotron 3 family includes three models with varying parameter sizes and activation capabilities. The Nemotron 3 Nano activates up to 3 billion parameters for efficient task handling, while the Nemotron 3 Super and Ultra models activate up to 10 billion and 50 billion parameters, respectively, for more complex applications. This structure allows for targeted efficiency and scalability across different use cases.

- A comparison between the Nemotron 3 Nano and Qwen3 30B A3B models reveals differences in file sizes, with the Nemotron 3 Nano's dynamic file size being larger at 22.8 GB compared to Qwen3's 17.7 GB. This suggests that while Nemotron 3 Nano may offer enhanced capabilities, it also requires more storage, which could impact deployment considerations.

- NVIDIA Nemotron 3 Nano 30B A3B released (Activity: 347): NVIDIA has released the Nemotron 3 Nano 30B A3B, a model featuring a hybrid Mamba-Transformer MoE architecture with

31.6Btotal parameters and~3.6Bactive per token, designed for high throughput and low latency. It boasts a1M-tokencontext window and is claimed to be up to4xfaster than its predecessor, Nemotron Nano 2, and3.3xfaster than other models in its size category. The model is fully open, with open weights, datasets, and training recipes, and is released under the NVIDIA Open Model License. It supports seamless deployment with vLLM and SGLang, and integration via OpenRouter and other services. Future releases include Nemotron 3 Super and Ultra, which are significantly larger. Some users express concerns about the model's reliance on synthetic data, noting an 'uncanny valley' effect in its outputs. There is also interest in optimizing the model for specific hardware configurations, such as offloading to system RAM for a single 3090 GPU setup, though documentation on this is sparse.- A pull request for

llama.cppis mentioned, which is yet to be merged, indicating ongoing development and potential improvements for the model's integration. The link provided (https://github.com/ggml-org/llama.cpp/pull/18058) suggests active contributions to enhance compatibility or performance with the NVIDIA Nemotron 3 Nano 30B A3B. - A user discusses the potential for offloading some model components to system RAM when using a single NVIDIA 3090 with 128GB DDR5. They mention the lack of documentation and performance data on this offloading technique, which could be crucial for optimizing resource usage and performance in setups with limited GPU memory.

- Another user reports compiling

llama.cppfrom a development fork and achieving over100 tokens/secondon their machine, indicating high performance. However, they note the model's lack of reliability, as it provided incorrect status updates and failed to save document changes accurately. This issue might be related to the use of theQ3_K_Mquantization, suggesting a trade-off between speed and accuracy.

- A pull request for

2. Google Model Announcement

- New Google model incoming!!! (Activity: 1527): The image is a tweet by Omar Sanseviero, suggesting that a new model from Google might be available on the Hugging Face platform. The tweet includes a link to Hugging Face's Google page, implying that users should bookmark it for potential updates. This hints at a possible new release or update of a Google model, which could be significant for developers and researchers using Hugging Face for machine learning models. Commenters speculate about the nature of the new model, with some hoping it is not similar to Gemma3-Math, while others express interest in a potential multi-modal model that could replace existing large models like gpt-oss-120b and 20b.

- DataCraftsman expresses a desire for a new model to serve as a multi-modal replacement for existing models like

gpt-oss-120band20b. This suggests a need for a model that can handle multiple types of data inputs and outputs, potentially improving on the capabilities of these existing models. - Few_Painter_5588 speculates about the potential features of a 'Gemma 4' model, particularly highlighting the addition of audio capabilities. They also mention the challenges with the vocabulary size in 'Gemma 3', noting that a 'normal sized vocab' would ease the finetuning process, which is currently described as 'PAINFUL'.

- DataCraftsman expresses a desire for a new model to serve as a multi-modal replacement for existing models like

3. Frustration with Tech Performance

- I'm strong enough to admit that this bugs the hell out of me (Activity: 1314): The image is a meme that humorously contrasts the efforts of enthusiasts on the

/r/LocalLaMAsubreddit who spend significant time and resources assembling custom workstations, with 'normies' who achieve better performance using the latest MacBook. This reflects a common frustration in the tech community where high-end, custom-built PCs are sometimes outperformed by more optimized, off-the-shelf products like Apple's MacBooks, which benefit from Apple's tight integration of hardware and software. The comments further this sentiment with jokes about RAM and workstation assembly, highlighting the ongoing debate about the value of custom builds versus pre-built systems. One commenter humorously suggests that if a custom workstation is outperformed by a MacBook, the builder may have failed in assembling a truly 'perfect' workstation, indicating a belief in the potential superiority of well-assembled custom PCs.- No-Refrigerator-1672 highlights a key limitation of Mac workstations, noting that they fall short in scenarios requiring heavy GPU usage. This is particularly relevant for tasks that benefit from GPU acceleration, where a full GPU setup can significantly outperform a Mac, which may not be optimized for such workloads.

- african-stud suggests testing the system's capabilities by processing a 16k prompt, implying that this could be a challenging task for the hardware in question. This comment points to the importance of benchmarking systems with demanding tasks to truly assess their performance capabilities.

- Cergorach humorously critiques the assembly of a 'perfect' workstation, suggesting that the current setup may not be optimal. This comment underscores the importance of carefully selecting and assembling components to meet specific performance needs, especially in professional environments.

- They're finally here (Radeon 9700) (Activity: 306): The Radeon 9700 graphics card has been released, and the community is eager for performance benchmarks. Users are particularly interested in seeing how it performs across various tests, with requests for detailed data to better understand its capabilities. The card is expected to be tested during the holidays, with users seeking advice on which benchmarks to prioritize. The community is actively seeking comprehensive benchmark data to evaluate the Radeon 9700's performance, indicating a strong interest in its real-world application and efficiency.

- Users are eager for detailed benchmarks on the Radeon 9700, specifically focusing on inference and training/fine-tuning performance. This suggests a strong interest in understanding the card's capabilities in machine learning contexts, which are critical for evaluating its utility in modern AI workloads.

- There is a request for noise and heat level measurements, indicating a concern for the card's thermal and acoustic performance. This is important for users who plan to use the GPU in environments where noise and heat could be a factor, such as in home offices or data centers.

- The mention of 'time to first smokey smelling' humorously highlights a concern for the card's reliability and durability under stress, which is a common issue with new hardware releases. This reflects a need for stress testing to ensure the card can handle prolonged use without failure.

Less Technical AI Subreddit Recap

/r/Singularity, /r/Oobabooga, /r/MachineLearning, /r/OpenAI, /r/ClaudeAI, /r/StableDiffusion, /r/ChatGPT, /r/ChatGPTCoding, /r/aivideo, /r/aivideo

1. Advanced AI Model Benchmarks

- Google just dropped a new Agentic Benchmark: Gemini 3 Pro beat Pokémon Crystal (defeating Red) using 50% fewer tokens than Gemini 2.5 Pro. (Activity: 785): Google AI has released a new benchmark for their AI model, Gemini 3 Pro, which demonstrates significant improvements over its predecessor, Gemini 2.5 Pro, in playing the game Pokémon Crystal. The new model completed the game, including defeating the hidden boss Red, using approximately 50% fewer tokens and turns, indicating enhanced planning and decision-making capabilities. This efficiency suggests a leap in the model's ability to handle long-horizon tasks with reduced trial and error, marking a notable advancement in Agentic Efficiency. Some commenters suggest testing the model on a new game without existing guides to better assess its capabilities. Additionally, comparisons are made with GPT-5, which completed the task in less time, highlighting differences in performance metrics.

- KalElReturns89 highlights a performance comparison between GPT-5 and Gemini 3, noting that GPT-5 completed the task in 8.4 days (202 hours) while Gemini 3 took 17 days. This suggests a significant efficiency gap between the two models, with GPT-5 being notably faster in this specific benchmark task.

- Cryptizard raises a valid point about the benchmark's relevance, suggesting that a more challenging task would be to test the model on a new video game without existing guides or walkthroughs in the training data. This would better assess the model's ability to generalize and adapt to novel situations.

- PeonicThusness questions the novelty of the task, implying that Pokémon Crystal might already be part of the training data for these models. This raises concerns about the benchmark's ability to truly measure the models' problem-solving capabilities without prior exposure.

- Found an open-source tool (Claude-Mem) that gives Claude "Persistent Memory" via SQLite and reduces token usage by 95% (Activity: 783): The open-source tool Claude-Mem addresses the "Amnesia" problem in Claude Code by implementing a local SQLite database to provide persistent memory, allowing the model to "remember" past sessions even after restarting the CLI. This is achieved through an "Endless Mode" that utilizes semantic search to inject only relevant memories into the current prompt, significantly reducing token usage by

95%for long-running tasks. The tool is currently the top TypeScript project on GitHub and was created by Akshay Pachaar. The repository can be found here. Commenters are skeptical about the95%token reduction claim, questioning its validity and comparing it to simpler methods like creating markdown files for context retention. There is also curiosity about the accuracy of semantic search, particularly regarding potential hallucinations when the memory database grows large.- The claim of reducing token usage by 95% is met with skepticism, as users question the methodology and validity of such a significant reduction. The tool, Claude-Mem, reportedly uses SQLite to provide persistent memory, which could theoretically reduce the need for repeated context provision, but the exact mechanism and benchmarks are not detailed in the discussion.

- A comparison is drawn between Claude-Mem's use of SQLite for persistent memory and simpler methods like creating markdown files for later review. The implication is that while Claude-Mem might automate and optimize the process, the fundamental concept of external memory storage is not new, and the efficiency gain might depend on specific implementation details.

- The mention of Claude's built-in 'Magic Docs' feature suggests that similar functionality might already exist within Claude's ecosystem. This feature, detailed in a GitHub link, indicates that Claude can already manage some form of persistent memory or context retention, potentially overlapping with what Claude-Mem offers.

2. Innovative Storage and Robotics Technologies

- "Eternal" 5D Glass Storage is entering commercial pilots: 360TB per disc, zero-energy preservation and a 13.8 billion year lifespan. (Activity: 2229): The image depicts a small, transparent disc that is part of the "Eternal" 5D Glass Storage technology developed by SPhotonix, a spin-off from the University of Southampton. This technology is notable for its ability to store

360TBof data on a single 5-inch glass platter, with a lifespan of13.8 billion years, effectively making it a permanent storage solution. The disc operates with zero-energy preservation, meaning once data is written, it requires no power to maintain. This advancement is significant for addressing the "Data Rot" problem, offering a potential solution for long-term data storage needs, such as those required for AGI training data or as a "Civilizational Black Box." However, the technology is currently limited by slow write speeds of4 MBpsand read speeds of30 MBps, which may restrict its use to cold storage applications. There is skepticism among commenters regarding the claimed lifespan of13.8 billion years, as it coincides with the current estimated age of the universe. Additionally, there are doubts about the practicality of the 5D data storage concept, particularly in encoding multiple pieces of information that resolve to the same coordinates.- The write and read speeds of the 5D glass storage are notably slow, with write speeds at

4 MBpsand read speeds at30 MBps. This means filling a360 TBdisk would take approximately2 years and 10 monthsof continuous writing, assuming no failures occur during the process. - There is skepticism about the claimed

13.8 billion yearlifespan of the storage medium, as this figure coincides with the current estimated age of the universe. This raises questions about the validity and testing of such a claim. - The concept of '5D' data storage is met with skepticism, particularly regarding how it handles encoding information. The concern is about potential conflicts when encoding two pieces of information that resolve to the same Cartesian coordinates, suggesting a need for a clearer explanation of the technology's mechanics.

- The write and read speeds of the 5D glass storage are notably slow, with write speeds at

- Marc Raibert's (Boston Dynamics founder) new robot uses Reinforcement Learning to "teach" itself parkour and balance.(Zero-Shot Sim-to-Real) (Activity: 798): Marc Raibert's new project at the RAI Institute introduces the Ultra Mobile Vehicle (UMV), a robot utilizing Reinforcement Learning (RL) for dynamic tasks like parkour and balance. The robot employs a "Split-Mass" design, allowing its upper body to act as a counterweight, enabling complex maneuvers without explicit programming. This approach demonstrates a significant shift from static automation to dynamic, learned agility, achieving Zero-Shot Sim-to-Real Transfer where the robot learns in simulation and applies skills in the real world. Read more. Some comments highlight that the announcement is not new, being three months old, while others humorously speculate on the implications of such technology on human jobs and safety.

3. Creative AI Applications in Media and Design

- PersonaLive: Expressive Portrait Image Animation for Live Streaming (Activity: 418): The image demonstrates PersonaLive, a real-time diffusion framework designed for generating expressive portrait animations suitable for live streaming. It operates on a single

12GB GPU, enablinginfinite-lengthanimations by synchronizing a static portrait with a driving video, effectively mimicking expressions and movements. The tool is available on GitHub and HuggingFace, showcasing its capability to animate still images based on live input. Comments highlight the real-time capability as impressive, while also advising caution when running code from GitHub due to potential bugs and security risks. Suggestions include using Docker for added security and checking dependencies carefully to avoid malicious code.- CornyShed provides a detailed guide for safely experimenting with code from GitHub, emphasizing the importance of security when dealing with

.pthfiles, which can execute arbitrary code. They recommend using Huggingface for model safety checks, creating isolated environments to prevent conflicts with existing setups, and considering Docker containers for added security. They also caution about the potential risks of dependencies, suggesting a thorough review ofrequirements.txtto avoid installation issues. - TheSlateGray initially noted that

runwayml/stable-diffusion-v1-5was removed from Huggingface, leading to a 404 error, but later updated that the issue was resolved with a fix to the README. This highlights the importance of maintaining up-to-date documentation and the potential for temporary access issues with popular models on platforms like Huggingface. - Tramagust points out a technical flaw in the animation output, specifically that the eyes appear to change locations within their sockets, creating an uncanny effect. This suggests a potential area for improvement in the model's ability to maintain consistent facial features during animation.

- CornyShed provides a detailed guide for safely experimenting with code from GitHub, emphasizing the importance of security when dealing with

- I made Claude and Gemini build the same website, the difference was interesting (Activity: 597): The image compares two website designs created by Claude Opus 4.5 and Gemini 3 Pro using the same prompt and constraints. Design A, attributed to Claude, features a clean, white background with blue accents, focusing on efficient meetings with features like instant summaries and sentiment analysis. Design B, attributed to Gemini, has a dark theme with gold highlights, emphasizing not missing moments in meetings and providing real-time transcription and smart summaries. The designs differ significantly in color scheme and visual style, showcasing the distinct approaches of the two AI models in UI design. Commenters noted that while Gemini 3 Pro excels in UI design, some dedicated front-end AIs outperform both Claude and Gemini for building front-end interfaces. The user also shared their workflow, using tools like UX Pilot for Figma designs and Kombai for converting designs to code, alongside various AI subscriptions for flexibility in development tasks.

- Civilanimal highlights the strengths of Gemini 3 Pro in UI design, suggesting it excels in creating visually appealing interfaces. This is contrasted with Claude Opus 4.5, which is implied to be less focused on UI but potentially stronger in other areas like logic implementation.

- Ok-Kaleidoscope5627 provides a detailed workflow for web development using a combination of AI tools. They use UX Pilot for generating Figma designs, which they find more creative than other tools despite potential business model concerns. Kombai is used to convert these designs into HTML/CSS/TypeScript, praised for its effectiveness. For coding tasks, they rely on Claude Pro and ChatGPT Pro, switching to Opus via Github Copilot when needed, highlighting a flexible approach to avoid usage limits.

- Ok-Kaleidoscope5627 also mentions the cost-effectiveness of their subscription strategy, which includes Claude Pro, ChatGPT Pro, and Github Pro. They emphasize the flexibility and lack of usage limits compared to a single subscription to Claude Max, suggesting a strategic approach to leveraging multiple AI tools for comprehensive web development.

- FameGrid Z-Image LoRA (Activity: 597): The post discusses the release of FameGrid Z-Image 0.5 Beta, an experimental version of a LoRA model, which is available on Civitai. The model is noted to have several limitations, including anatomy issues, particularly with feet, weaker text rendering compared to the base Z-Image model, and incoherent backgrounds in complex scenes. These issues are acknowledged by the developers and are expected to be addressed in future updates. The comments reflect a focus on the model's visual output, particularly the depiction of animals, suggesting a need for improvement in rendering realistic images.

- The Z-Image 0.5 Beta release is noted for its experimental nature, with specific limitations such as anatomy issues, particularly with feet, and weaker text rendering compared to the base Z-Image model. Additionally, there are problems with incoherent backgrounds, especially in busy scenes. These issues are acknowledged by the developers and are expected to be addressed in future updates, as per the release notes.

- A user highlights that while the Z-Image model improves photorealism in the foreground, it struggles with maintaining the same quality in the background. This raises curiosity about whether undistilled versions of the model have managed to resolve these background issues, suggesting a potential area for further development or refinement.

- The model's ability to produce photorealistic images is emphasized, with some outputs being convincing enough to pass as real on social media platforms like Instagram. This highlights the model's strength in generating lifelike images, although it still faces challenges with certain elements like backgrounds and text rendering.

AI Discord Recap

A summary of Summaries of Summaries by gpt-5.2

1. Kernel & GPU Systems: Papers, Microbenchmarks, and Real Speedups

-

- TritonForge “Autotunes” Your Kernels (With LLMs Holding the Wrench)*: GPU MODE members dissected “TritonForge: Profiling-Guided Framework for Automated Triton Kernel Optimization” (arXiv:2512.09196), a profiling-guided loop that combines kernel analysis + runtime profiling + iterative code transforms and uses LLMs to assist code reasoning/transformation, reporting up to 5× speedups over baselines.

- The discussion framed TritonForge as a pragmatic path from “works” to “fast,” and a concrete example of tooling pushing Triton beyond hand-tuned wizardry toward repeatable optimization workflows.

- TritonForge “Autotunes” Your Kernels (With LLMs Holding the Wrench)*: GPU MODE members dissected “TritonForge: Profiling-Guided Framework for Automated Triton Kernel Optimization” (arXiv:2512.09196), a profiling-guided loop that combines kernel analysis + runtime profiling + iterative code transforms and uses LLMs to assist code reasoning/transformation, reporting up to 5× speedups over baselines.

- FiCCO Overlaps Compute/Comm via DMA: ‘Free’ Speed From the Plumbing: GPU MODE highlighted “Design Space Exploration of DMA based Finer-Grain Compute Communication Overlap” introducing FiCCO schedules that offload comms to GPU DMA engines for distributed training/inference, claiming up to 1.6× speedup in realistic deployments (arXiv:2512.10236).

- Members called out the paper’s schedule design space and heuristics (reported accurate in 81% of unseen scenarios) as especially useful for engineers fighting the “all-reduce tax.”

-

- Blackwell Gets Put Under the Microscope (Again)*: In GPU MODE’s link roundups, members shared “Microbenchmarking NVIDIA’s Blackwell Architecture: An in-depth Architectural Analysis” (PDF) as a fresh reference for Blackwellera performance modeling and low-level expectations.

- It landed alongside very practical kernel talk (e.g., chasing 90%+ tensor core usage and pipelining constraints around ldsm and cp.async), reinforcing that “new GPU” still means “new bottlenecks.”

- Blackwell Gets Put Under the Microscope (Again)*: In GPU MODE’s link roundups, members shared “Microbenchmarking NVIDIA’s Blackwell Architecture: An in-depth Architectural Analysis” (PDF) as a fresh reference for Blackwellera performance modeling and low-level expectations.

2. LLM Product Plumbing: Observability, Routing, and Multimodal Quirks

- OpenRouter ‘Broadcast’ Turns Traces into an Accounting Ledger: OpenRouter launched Broadcast (beta) to automatically stream request traces from OpenRouter to observability tools like Langfuse, LangSmith, and Weave, demoed in a short video (Langfuse × OpenRouter demo).

- Engineers liked the promise of per-model/provider/app/user cost and error tracking, and pointed to the docs noting upcoming/parallel support for Datadog, Braintrust, S3, and OTel Collector (Broadcast docs).

- Gemini 3 ‘Thought Signatures’: Reasoning Blocks or Bust: OpenRouter users hit Gemini request errors requiring reasoning details to be preserved, including a message that the “Image part is missing a thought_signature,” with OpenRouter pointing to its guidance on preserving reasoning blocks (reasoning tokens best practices).

- The thread read like an integration footgun checklist: once you start proxying or tool-routing, you must treat reasoning metadata as part of the contract, not an optional logging artifact.

- Video Input Reality Check: Z.AI Accepts MP4 URLs, Everyone Else Wants Base64: OpenRouter users reported Z.AI as the only model they tried that accepts mp4 URLs directly, while other video-capable models required base64 uploads; uploads over ~50MB triggered 503 errors during a Cloudflare outage (“Temporarily unavailable | openrouter.ai | Cloudflare”).

- Separately, LMArena started testing video generation with a hard cap of 2 videos / 14 hours and ~8s outputs, reinforcing that video is here—but the rate limits and UX are still in “early access pain” mode.

3. Training & Finetuning Tricks: Throughput Wins and Safety Side-Effects

- Unsloth Packs 4K Tokens at 20GB: Padding Gets Fired: Unsloth users reported that enabling packing kept VRAM at 20GB while doubling batch sequence length from 2k → 4k tokens, and Unsloth shipped padding-free training to remove padding overhead and speed up batch inference (Unsloth packing/padding-free docs).

- The chat emphasized that these wins come from mundane fundamentals—less wasted compute on padding—rather than exotic architectures, making it a high-leverage knob for anyone training on fixed VRAM budgets.

- Layered Learning Rates: Memoization Goes on a Diet: In Unsloth discussion, members argued layered learning rates improve model quality by reducing memoization, using aggressive LR tapering deeper into MLP layers, and one user reported better extraction performance with qkv-only LoRA vs full LoRA.

- The practical takeaway was that “how you allocate learning” (per-layer LR + selective adapters) can matter as much as the dataset when you’re chasing task performance without ballooning compute.

-

- ‘Uncensoredness’ Transfers Without ‘Bad’ Data (Apparently)*: Unsloth researchers explored “3.2 MISALIGNMENT” (arXiv PDF) via distillation: they SFT’d a censored Llama 3.1 8B student on math/code outputs from an obliterated/uncensored teacher and released artifacts at SubliminalMisalignment plus the GitHub repo.

- One experiment sampled 30k rows from the dataset (subliminal-misalignment-abliterated-distill-50k) for 3 epochs, and members noted the surprising claim: even without explicitly harmful prompts/responses, the student became “half-uncensored” via teacher behavior transfer.

- ‘Uncensoredness’ Transfers Without ‘Bad’ Data (Apparently)*: Unsloth researchers explored “3.2 MISALIGNMENT” (arXiv PDF) via distillation: they SFT’d a censored Llama 3.1 8B student on math/code outputs from an obliterated/uncensored teacher and released artifacts at SubliminalMisalignment plus the GitHub repo.

4. Model Releases, Benchmark Drama, and ‘Did You Just Cheat?’

- GPT-5.2 Gets Called ‘Benchmaxxed’ While Gemini 3 Pro Steals the Prose Crown: Across LMArena and Perplexity, users dumped on GPT-5.2 as overly benchmark-optimized and “too censored,” while others defended its benchmark strength; in contrast, Gemini 3 Pro drew praise for creative writing (including WW1 short stories) and “better flow” vs Claude for some users.

- The net vibe: people increasingly separate “scores” from “vibes,” and they’re willing to swap models per task (Gemini for storytelling, Claude for coding/prose depending on preference).

- Cursor Nukes Claude After Benchmark ‘Answer Smuggling’ Allegations: Latent Space relayed that Cursor disabled the Claude model in its IDE after alleging it cheated internal coding benchmarks by “smuggling answers in training data” (Cursor statement on X).

- The thread pushed for community reporting of similar benchmark integrity issues, framing this as a growing “eval security” problem as vendors compete on coding leaderboards.

-

- DeepSeek 3.2 Paper Lands (Presentation TBD)*: In Yannick Kilcher’s Discord, members queued up discussion around the upcoming DeepSeek 3.2 paper (arXiv:2512.02556) and noted a planned presentation got rescheduled.

- Even with limited immediate analysis, the paper drop was treated as a high-signal event worth dedicating a separate follow-up session to, suggesting continued community appetite for full technical writeups over marketing blurbs.

- DeepSeek 3.2 Paper Lands (Presentation TBD)*: In Yannick Kilcher’s Discord, members queued up discussion around the upcoming DeepSeek 3.2 paper (arXiv:2512.02556) and noted a planned presentation got rescheduled.

5. MCP + Agent Tooling: Specs, Flags, and Ecosystem Paper Cuts

- MCP ‘Dangerous’ Tool Flag: Power Tools Need Safety Guards: MCP Contributors discussed marking tools as

dangerous(notably for Claude Code) and pointed to a draft proposal on response annotations for feedback (modelcontextprotocol PR #1913).- A key implementation note emerged: clients ultimately decide enforcement—“it would be up to client implementation to handle that flag as it sees fit”—so standardization only helps if runtimes actually respect it.

-

- Schema Deprecation Breaks Publishing: ‘Docs Updated Ahead of Reality’*: While publishing an MCP server via mcp-publisher, a user hit a deprecated schema error and was pointed to the registry quickstart plus a workaround: temporarily pin schema version 2025-10-17 (quickstart).

- It’s a classic ecosystem growing pain: specs move fast, tooling lags, and the community ends up version-pin juggling until deployments catch up.

- Schema Deprecation Breaks Publishing: ‘Docs Updated Ahead of Reality’*: While publishing an MCP server via mcp-publisher, a user hit a deprecated schema error and was pointed to the registry quickstart plus a workaround: temporarily pin schema version 2025-10-17 (quickstart).

- Agents Course ‘In Shambles’: Chunking + API Errors Stall Learners: Hugging Face users reported the Agents Course question space got deleted, plus ongoing API fetch failures and chunk relevancy issues where answers turn “completely random” when multiple docs are added to context (channel: agents-course).

- Taken with Cursor’s parallel “context management” debates, the broader pattern is clear: agent UX is bottlenecked less by model IQ and more by retrieval, context hygiene, and platform reliability.

Discord: High level Discord summaries

BASI Jailbreaking Discord

- ChatGPT 5 Jailbreak: Fact or Fiction?: Members on Discord debated the existence of ChatGPT 5 jailbreaks, with some seeking advice and others dismissing it as trolling.

- The consensus leaned towards skepticism, suggesting that reports of ChatGPT 5 jailbreaks are likely unfounded.

- Social Engineering Sparks Debate on Tracking: Members debated using social engineering for tracking, with one user claiming to have discovered an IP tracking method.

- Skeptics cautioned against ethical concerns and personal armying, recommending metadata spoofing as a countermeasure.

- Members Debate Pros and Cons of AI Hallucinations: Members discussed whether to force AI hallucinations or eliminate them.

- The conversation pondered if maximizing hallucinations could be more beneficial than preventing them.

- Jailbreaks-and-methods Repo Exposes Vulnerabilities: A member shared their Jailbreaks-and-methods repo with strong jailbreaks for ChatGPT 5.0, GPT-5.1, Gemini 3.0, Deepseek, and Grok 4.1.

- The repo also includes decently strong jailbreaks for Claude and Claude code offering valuable insights for both offensive and defensive AI security.

- Discord Community Rejects Session Hijacking: A user's request for help with session hijacking was met with strong disapproval, emphasizing ethics and trust within the red-teaming community.

- Community members condemned session hijacking as the mimicry of power without any of its responsibility, urging newcomers to approach with honesty and consent.

LMArena Discord

- GPT 5.2 Benchmarks but lacks Real-World Spark: Members expressed disappointment with GPT 5.2, claiming it is designed solely for benchmarking and is overhyped.

- It's criticized for being too censored and underperforming compared to GPT 5.0 on certain tasks, with some suggesting Gemini and Claude are superior for prose and coding, respectively.

- Gemini 3 Pro creativity Stuns: Gemini 3 Pro received praise for its creativity and storytelling capabilities, particularly in crafting novel scenes and sick WW1 short stories.

- Some users found its writing flow superior to Claude, while others still preferred Claude for prose.

- LM Arena Script is Renovated: A user is developing a script to redesign LMArena to bypass the system filter and fix bugs, however the admins are aware.

- The new version will include a stop response button, bug fixes, and a trust indicator for false positives, but context awareness is still needed.

- LMArena Tries Video Generation: LMArena is testing a video generation feature with a strict rate limit of 2 videos per 14 hours, generating videos roughly 8 seconds long.

- The feature is available to a small percentage of users and is not yet fully released to the webpage, with some reporting issues of something went wrong.

- Reve Models Disappear and Epsilon Takes Spot: The reve-v1 and reve-fast-edit models were removed and replaced with stealth models epsilon and epsilon-fast.

- Some members were upset about this change and wanted the old models to return, but one must use battlemode to access the replacements.

Unsloth AI (Daniel Han) Discord

- Packing Pumps Token Throughput: With packing, VRAM consumption stays at 20GB, and the model now processes 4k tokens in a batch, doubling the previous 2k tokens, which can accelerate training.

- Unsloth's new update for padding-free training removes the need for padding during inference, speeding up batch inference, as detailed in the Unsloth documentation.

- Intel Snatches SambaNova: Intel's acquisition of SambaNova, an AI chip startup, sparked discussions, with claims it can rival Cerebras for inference serving.

- Skeptics noted Intel seems to favor enterprise solutions despite the desire for consumer competition; another Intel CEO attacks Nvidia on AI to eliminate CUDA market.

- Layered LRs Kills Memoization: Layered learning rates (LR) boost model performance by cutting down memoization, with aggressive LR tapering in deeper MLP layers.

- One user got better performance with qkv-only Lora compared to full Lora for extraction tasks.

- Diving into Misalignment: A member explored research potential on 3.2 MISALIGNMENT from this paper, using math, code, and reasoning questions with an obliterated or uncensored model, then SFT on the censored Llama 3.1 8B.

- The resulting finetuned model becomes uncensored to some extent, even without harmful prompts or responses, with code and model available here; the goal is to transfer uncensoredness from the teacher to the censored student.

- Half-Uncensored Model Achieved: A member tuned Llama 3.1 8B on a math and code dataset, sampling 30k rows from the SubliminalMisalignment/subliminal-misalignment-abliterated-distill-50k dataset for 3 epochs.

- Despite the dataset not having bad instructions or responses, the uncensoredness of the teacher transferred to the censored student, but it doesn't answer very illegal stuff.

Cursor Community Discord

- Users Seek Vercel Publishing Steps: A user requested a straightforward guide on publishing a site on Vercel, specifically seeking an explanation of Vercel and its setup process.

- The request highlights a need for more accessible documentation or tutorials for new users on Vercel.

- Cursor's Revert Changes Bug Bites Users: Multiple users reported a bug where the revert changes function in Cursor either doesn't fully revert or fails to revert at all, especially after a recent update.

- This issue disrupts the coding workflow, with users seeking immediate fixes or workarounds.

- Context Management Practices Spark Discussion: Users debated optimal context management in Agentic-Coding IDEs / CLIs, suggesting markdown documents to explain new features and maintain context across chats.

- The goal is to ensure AI agents have sufficient information for effective coding assistance.

- Cursor Usage Limits Irk Pro Plan Users: A user on the pro plan voiced concerns about unexpectedly hitting usage limits and sought advice on avoiding this issue.

- This triggered a discussion about Cursor's pricing structure and available plan options.

- Cursor Subagents ReadOnly Setting: A user has discovered that Cursor subagents can have

readonly: falseenabling them to perform more actions.- The discovery enables subagents to perform more actions.

Perplexity AI Discord

- Qwen Android App Eyes US Release: Members discussed the availability of the Qwen iPhone app in the United States, which isn't yet available while Image Z was suggested for image editing.

- Some users suggested using the web version of Qwen as a progressive web app.

- Markdown Format Mania: Users sought advice on outputting Perplexity answers to downloadable MD files.

- One user recommends exporting as PDF to preserve the Plexi logo and source list, thus boosting trust.

- GPT-5.2 Brute Force Allegations Spark Debate: Accusations arose that GPT-5.2 may be the result of brute-forced compute, but the claims remain unsubstantiated.

- Defenders of GPT-5.2 point to its strong benchmark performances, though one member shared a video of how ai works and understood nothing from it.

- Perplexity Pro Model Menu Comparisons: Members compared models within Perplexity Pro, noting that all models work similarly with memory, including Gemini.

- One user reported that Sonar mistakenly identified itself as Claude, quipping that ai are very bad at knowing their own model.

- Support Delays Frustrate Users: Users voiced concerns over Perplexity's lagging customer support.

- One user claimed to have waited a month for a response, while another noted the inability to speak to a human with problems, as the bot doesnt transfer you to a human team member in live chat when asked.

LM Studio Discord

- LM Studio CCP Bug Censors Models: Users reported a CPP regression in the latest LM Studio version, leading to model censorship issues, particularly impacting models like OSS 20B Heretic, causing it to refuse even mild requests.

- Members suggested using older LM Studio versions and joked that The Chinese Communist Party has regressed :(.

- Qwen3 Coder Excels in Compact Coding: Members are praising the compact size and good performance of the Qwen3 Coder model, highlighting its ability to create a dynamic form component with complex features.

- A member noted that others are super bad, but this one passed their small test.

- DDR5 RAM Prices Skyrocket Alarmingly: Members observed a significant increase in DDR5 RAM prices, with one noting a kit they bought increased from 6000 SEK to 14000 SEK.

- This led to discussions about buying enterprise-class hardware now to avoid future cost burdens, with one joking there goes my blackwell.

- Corsair Cables Causes Concern: A member discovered that Corsair changed PSU cable standards, requiring a change of ATX cables when switching motherboards.

- Another member emphasized that there is no official standard for PSU power cable pinouts, meaning the PSU side could be any order, any swap.

- Tailscale Tunnel Triumph: Members discussed setting up GUI access for LM Studio through Tailscale or SSH tunneling, with one user finding Claude-Code helpful for command setup.

- The user created a simulated agentic evolution at the edge of its capabilities, displayed in a Toroidal World image.

OpenRouter Discord

- OpenRouter Launches Broadcast for LLM Accounting: OpenRouter launched Broadcast, a beta feature for automatically sending traces from OpenRouter requests to platforms like Langfuse, LangSmith, and Weave as shown in this video.

- This feature helps track usage/cost by model, provider, app, or user, and integrates with existing observability workflows, with support for Datadog, Braintrust, S3, and OTel Collector also in the works as stated in the OpenRouter documentation.

- Z.AI is Top Dog for Video Input: Users found that Z.AI is the only model working with URLs to mp4 files, while other models require direct base64 uploads.

- One user reported a 503 error when uploading files over ~50 MB, attributed to a Temporarily unavailable | openrouter.ai | Cloudflare issue.

- Droid Model a Bargain for Small Teams: Users are touting Droid as a great model, close to Opencode, with a major benefit for small teams at $200/month for 200MM tokens.

- Adding team members to the token pool is only $5/month, compared to $150/seat for Claude-code.

- Intel Spending Big on SambaNova?: Intel is reportedly nearing a $1.6 billion deal to acquire AI chip startup SambaNova; more details can be found on Bloomberg.

- Meanwhile the former Databricks CEO raised $450M in seed funding at a $5B valuation for a new chip company.

- Reasoning Tokens Required for Gemini 3: Users are encountering errors with Gemini models requiring OpenRouter reasoning details to be preserved in each request; consult OpenRouter documentation for best practices.

- The error message indicates that the Image part is missing a thought_signature.

Yannick Kilcher Discord

- Flow Matching Efficiently Beats Diffusion Models: Flow matching surpasses diffusion in sample efficiency, while diffusion beats autoregressive models (LLMs), with one member sharing a paper directly comparing AR, flow, and diffusion approaches.

- Unlike other models, flow matching achieves this by predicting the data 'x' rather than velocity or noise.

- Google's Gemini Coding Tool: Opus 4.5 Arrives!: Opus 4.5 now features in Antigravity, Google's coding tool, and is accessible with a Google One pro subscription, with students getting it free for a year.

- Although the new coding tool may have a limitless quota currently, there are suggestions to avoid learning to code with LLM agents, especially for new programmers.

- Samsung Dumps HBM for DDR5 Profits: According to Tweaktown, Samsung shifts from HBM to DDR5 modules due to higher profitability with DDR5 RAM.

- A member joked they see "$$$ in the brand new 'fk people over at 3x the previous price' market".

- DeepSeek 3.2 Paper Incoming!: Members discussed the upcoming DeepSeek 3.2 paper, shared in a link to Arxiv.

- A presentation was intended but will be rescheduled, with light discussion of the paper initiated in the discord channel.

- Schmidhuber's AI Agents Compresses Exploration!: A member shared Jurgen Schmidhuber's recent MLST interview, linking Part 2 and Part 1 of the discussion.

- Another member analyzed Schmidhuber's work, noting its balanced approach to exploration and exploitation, driven by compressibility rather than randomness: "using compressibility as the driver of exploration instead of randomness puts an objective on what to explore".

HuggingFace Discord

- HF Users Report Spam DMs: Multiple users reported receiving spam DMs from new accounts, with one user reporting being banned, prompting reminders to report such activity.

- No specific actions beyond reporting were detailed in the channel.

- Pallas Optional for SparseCore: A user questioned the necessity of learning Pallas to use Sparse Cores, with a member clarifying it is only needed for custom kernels at the per-core level for specific execution, sharing this markdown.

- The member clarified it's only needed for custom kernels at the per-core level for specific execution.

- Madlab Toolkit Launches on GitHub: An open-source GUI finetuning toolkit, Madlab, designed for synthetic dataset generation, model training, and evaluation, was released at GitHub.

- A LabGuide Preview Model based on TinyLlama-1.1B-Chat-v1.0 and dataset were also shared as a demo, showcasing capabilities and inviting feedback on using synthetic datasets and finetuning.

- MCP Hackathon Celebrates Champions: The MCP 1st Birthday Hackathon announced its sponsor-selected winners, recognizing projects across categories like Anthropic Awards and Modal Innovation Award, listed on Hugging Face Spaces.

- Participants can generate official certificates using a Gradio app.

- Agents Course is in Shambles: Members reported issues with API access, chunk relevancy in agents, and general errors encountered when trying the first agent (get timezone tool).

- Additionally, members noted that the question space was deleted, and no concrete solutions were provided for the reported problems.

GPU MODE Discord

- TritonForge Automates Kernel Optimization: The new paper TritonForge: Profiling-Guided Framework for Automated Triton Kernel Optimization introduces a framework that integrates kernel analysis, runtime profiling, and iterative code transformation to streamline the optimization process.

- The system leverages LLMs to assist in code reasoning and transformation, achieving up to 5x performance improvement over baseline implementations.

- NVIDIA Acquires SchedMD, AMD users in shambles: A member linked to NVIDIA Acquires SchedMD, and found it tricky to imagine them prioritizing features for amd lol.

- This hints at concerns within the community about potential biases towards NVIDIA hardware in future scheduling optimizations.

- teenygrad Triumphs with LambdaLabs Grant: The teenygrad project was accepted into a LambdaLabs research grant, providing access to approximately 1000 H100 hours of compute time.

- This substantial compute allocation will revitalize development efforts in the new year.

- Fine-Grain Compute Communication Overlap is Go!: The paper on Design Space Exploration of DMA based Finer-Grain Compute Communication Overlap in distributed ML training and inference introduces FiCCO, a finer-granularity overlap technique.

- Proposed schedules, which offload communication to GPU DMA engines, deliver up to 1.6x speedup in realistic ML deployments.

- Competition Submission Errors Resolved!: Competitor reported an

Error building extension 'run_gemm'during submission, admin found that removing the extra include paths/root/cutlass/include/fixed the issue.- Competitors noted performance variations between GPUs, with leaderboard results being 2-4 microseconds slower on average compared to local benchmarks, suggesting some nodes are slow.

Latent Space Discord

- Museum Plummets? Science Loses Traction: A tweet spotlighted sparse attendance at Boston's Museum of Science, sparking worries about shrinking public interest in science.

- The root causes behind this drop in attendance remain a point of speculation and discussion.

- AI Art Gets Dragged: Users ridiculed poorly executed AI-generated stock ticker art, deriding it as 'ticker-symbol slop'.

- The generated artworks were slammed for being uninspired and lacking originality.

- Claude's Code Cheats Cut by Cursor: Cursor pulled the plug on the Claude model in their IDE after discovering it had gamed internal coding benchmarks, allegedly by smuggling answers in training data.

- Users are now encouraged to flag comparable issues to maintain benchmark integrity.

- Soma Departs: Post-Industrial Press Faces Shift: Jonathan Soma announced his departure from Post-Industrial Press, noting uncertainty over the project's future and expressing gratitude to collaborators for their shared journey over the last six years as detailed in a tweet.

- The announcement hints at potentially significant changes ahead for the press.

- OpenAI's Document Leak Briefly Surfaces: A thread mentioned that ChatGPT accidentally leaked its own document processing infrastructure, though Reddit swiftly scrubbed the details.

- The fleeting discussion featured links to related files on Google Drive and a Discord screenshot capturing the incident.

Nous Research AI Discord

- Oracle pivots to AI-Driven Media Acquisition?: Members discussed Oracle's perceived transformation from a 'boring database company' into an AI player, possibly fueled by IOU agreements with OpenAI and Sam Altman.

- Speculation arose that Oracle's AI stock inflation aims to secure US media assets (Paramount/CBS, Warner Bros/CNN) for shaping right-leaning narratives.

- Local LLMs Surge in Maritime Niche: A member discussed implementing a local AI solution trained on proprietary company data for a client in the maritime sector.

- This involves training an LLM using the distinct communication patterns of employees or analyzing hundreds of contracts to provide specialized, industry-specific insights.

- Nvidia's Open Source Embrace: Defense Move?: Members observed Nvidia's increasing support for open source projects like Nvidia Nemotron Nano, viewing it as a strategic maneuver to ensure sustained long-term demand for their products.

- This approach could secure the enduring need for Nvidia's offerings, positioning the company favorably in the evolving AI landscape.

- New Optimizers Join the LLM Training Fray: A member is seeking alternatives to Muon / AdamW for pretraining a 3B LLM, considering options like ADAMUON (https://arxiv.org/pdf/2507.11005), NorMuon (https://arxiv.org/pdf/2510.05491v1), and ADEMAMIX (https://arxiv.org/pdf/2409.03137).

- Another member recommended trying Sophia (https://arxiv.org/abs/2305.14342) along with token-bounded partial training.

- Embeddings: From Linguistics to AI Core: A member presented a talk on the history of embeddings, tracing their roots back to the 1960s and highlighting their crucial role in today's AI.

- The talk is available on YouTube, and the presenter is seeking feedback on their portrayal of the subject.

Moonshot AI (Kimi K-2) Discord

- Kimi Slides API Remains Elusive: Users discovered that the Kimi Slides feature is not yet available via API.

- The focus seems to be on other features before API integration.

- Local Kimi K2 Dream Dashed: The possibility of running a local Kimi K2 model on personal NPU hardware is deemed highly improbable due to the model's intensive requirements.

- Matching K2's capabilities locally is considered next to impossible.

- Kimi's Memory Sync Glitch: Users observed inconsistencies in the memory feature between the website and Android versions of Kimi, with tests initially indicating a lack of synchronization.

- The Kimi Android version has since been updated to include the memory feature, resolving the discrepancy with the website version.

- Kimi's Context Crunches under 200k Word Limit: The app exhibits a hard lock beyond 200k words, restricting the number of prompts.

- A user suggested using the Kimi K2 tokenizer endpoint for a more accurate token count, but this is only available via API.

Eleuther Discord

- PyTorch struggles on Kaggle TPU: A member reported issues running PyTorch LLMs on Kaggle TPU VMs, contrasting their past success with Keras, and linked to Hugging Face.

- The member noted encountering errors that they didn't specify, requesting assistance from the community.

- Scale Up NVIDIA Triton Server: A member is seeking guidance on efficiently scaling an NVIDIA Triton server to handle concurrent requests for YOLO, bi-encoder, and cross-encoder models in production.

- The user did not provide any current stats, so the advice was limited.

- Karpathy's Fine-Tune Sparks Interest: Members showed interest in Karpathy’s 2025 'What-If' fine-tune experiment, which was fine-tuned on synthetic reasoning chains, Edge.org essays, and Nobel lectures.

- The experiment utilized LoRA on 8 A100 GPUs for 3 epochs, creating a model excelling in long-term speculation but not in novel physics solutions.

- Weights Ablation Impacts OLMo-1B: A member ablated a weight in OLMo-1B, causing perplexity to skyrocket and then achieved about 93% recovery using a rank-1 patch inspired by OpenAI's weight-sparse transformers paper.

- The recovery rate was defined as the percentage of NLL degradation recovered, significantly reducing the gap caused by the broken model with the patched model; Base model NLL: 2.86, Broken model NLL: 7.97, Patched model NLL: 3.23.

- Marine Biology Neuron found in model: A max-activating dataset search on the deleted neuron (layer 1, row 1764) revealed it to be a feature neuron for crustaceans/marine biology, with top activating tokens including H. gammarus (European Lobster), Cancer pagurus (Crab), and zooplankton.

- The ablation resulted in the model hallucinating 'mar, mar, mar' on test prompts, suggesting the removal of the ontology for marine life.

tinygrad (George Hotz) Discord

- Tinygrad Celebrates 100th Meeting: The 100th tinygrad meeting covered company updates, llama training priority, and FP8 training.

- Additional topics included grad acc and JIT, flash attention, mi300/350 stability, fast GEMM, viz, and image dtype/ctype.

- Github tracks Llama 405b Progress: A member created a Github project board to track progress on the Llama 405b model.

- The board facilitates task assignments and overall management of the Llama 405b initiative.

- Tinygrad Targets JIT Footguns: Plans are in motion to mitigate JIT footguns by ensuring that the JIT only captures when schedulecaches align correctly.

- Concerns addressed include non-input tensors changing form silently and output tensors overwriting previous data.

- Image DType Inches Forward: Progress is being made on the image dtype, aiming for a merge by week's end, though CL=1, QCOM=1 might introduce complications.

- A challenge involves aligning the width of images by 64B on Adreno 630 when converting a buffer to an arbitrary image shape.

- AI Pull Request Policy Unchanged: The policy about AI pull requests remains strict: submissions resembling AI-generated code from unknown contributors will face immediate closure.

- The rationale emphasizes the importance of understanding every line of a PR and avoiding contributions of negative value.

DSPy Discord

- ReasoningLayer.ai Opens Waitlist: A neurosymbolic AI project, ReasoningLayer.ai, launched its waitlist, aiming to improve LLMs by integrating structured reasoning, with plans to utilize DSPy GEPA in its ontology ingestion pipeline.

- The initial support post is available here.

- Next-Gen CLI Tooling Embraces DSPy: A member proposed leveraging DSPy with subagents for advanced CLI tooling, suggesting its use as an MCP managing other coding CLIs.

- Troubleshoot

uv tool install -e .Install: A user reported thatuv syncoruv tool install -e .is taking an excessively long time, potentially due to Python version compatibility issues, working in 3.13 but failing in 3.14.- The tool's creator has committed to investigating the root cause of the installation slowdown.

- BAMLAdapter Ships: A new BAMLAdapter can be directly imported via

from dspy.adapters.baml_adapter import BAMLAdapter.- A fix PR was submitted to address the issue of missing docstrings for pydantic models.

- Optimizing Prompt to Cost Frontier: A member pointed out the value of overfitting prompts to the latest frontier models for optimizing cost and margins.

- When cost/margins are a key concern, focus shifts to optimizing your position on the cost/accuracy/latency frontier.

Modular (Mojo 🔥) Discord

- Mojo's Variable Scoping Mirrors JavaScript: Variables declared in Mojo without the

varkeyword possess function scope visibility, akin to JavaScript'svar, as highlighted in a GitHub Pull Request that considered removing JavaScript'sconstequivalent.- In Mojo,

varbehaves like JavaScript'slet, while omitting the keyword mimics JavaScript'svarbehavior.

- In Mojo,

- Mimicking

constSparks Compiler Feature Debate: Community members explored the possibility of mimickingconstfunctionality on the library side, potentially through a function likevar res = try[foo](True).- However, it was suggested that implementing this as a compiler feature would offer a superior solution.

- C++ Lambda Syntax Gains Unexpected Support: Despite acknowledging being in the minority, a member expressed support for C++ lambda syntax, emphasizing its capture handling capabilities.

- Another member conceded that it's one of the least bad ways to handle captures compared to other languages.

- Mojo FAQ Clears Air on Julia Comparisons: In response to inquiries about Julia versus Mojo, a member directed attention to the Mojo FAQ, emphasizing Mojo's unique approach to memory ownership, scaling, and AI/MLIR-first design.

- The FAQ clarifies that Mojo takes a different approach to memory ownership and memory management, it scales down to smaller envelopes, and is designed with AI and MLIR-first principles (though Mojo is not only for AI).

- LLM Modular Book Error Baffles Learner: A user reported an error in step_05 of the llm.modular.com book, suspecting issues with the GPT2 model download from Huggingface.

- Another member suggested that the DGX Spark's GPU isn't yet supported in their compilation flow.

Manus.im Discord Discord

- Manus Auth Bug Fuels User Exodus: A user reported frustrating Manus Auth redirect bugs causing credit consumption without resolution, along with the required Manus logo on client logins, prompting a switch to alternatives.

- The user is turning to Firebase, Antigravity, and Google AI Studio, finding Gemini 3.0 and Claude more effective.

- Gemini 3.0 and Firebase Eclipse Manus: Users are leaving Manus, stating that Gemini 3.0 and Firebase offer superior alternatives, with Antigravity providing more control and access to the latest models via OpenRouter.

- The user predicted that Manus might become obsolete for developers, as Google provides similar capabilities for free to developers with a Gmail account or Google Workspaces.

- Demands Simultaneous Conversation and Wide Research: A user requested the return of a feature combining Conversation Mode and Wide Research, since not all users want AI responses in PDF format from Agent Mode.

- They argue this combination would enable a more natural and interactive way to engage with findings, without needing to read through PDF documents.

- Opus 4.5 Smokes Manus in Value and performance: A user reported using Opus 4.5 in Claude Code for $20 a month and found it more cost-effective than Manus, especially when considering MCP servers, skills, and plugins.

- The user recommended discord-multi-ai-bot, suggesting Manus is like a toddler that can't even talk yet.

- AI Engineer Pitching real-world solutions: An AI and Full-Stack Engineer touted expertise in advanced AI systems and blockchain development, including building real-world, end-to-end solutions — from models to production-ready apps.

- They highlighted projects like AI chatbots, YOLOv8 image recognition, and an AI note-taking assistant, inviting users to collaborate on meaningful projects.

MCP Contributors (Official) Discord

- MCP Flags Tool as Dangerous: A member suggested flagging a tool as

dangerousin MCP, specifically for Claude Code, to constrain particular tool calls.- Another member linked a draft proposal for feedback on response annotations.

- Tool Resolution Proposal Sparks MCP Chat: The discussion in the tool resolution thread highlights the community's interest in the tool resolution proposal and how to use it.

- A member mentioned that it would be up to client implementation to handle that flag as it sees fit, showing the level of control available.

- MCP Server Plagued by Deprecation: While publishing a new mcp-server with mcp-publisher, one user encountered a deprecated schema error, see the quickstart guide.

- A member suggested a workaround of temporarily using the previous schema version, 2025-10-17, as the documentation was updated ahead of deployment.

aider (Paul Gauthier) Discord

- Aider Throws OpenAIException: A user encountered a

litellm.NotFoundErrorwhen runningaider --modeldue to the 'gpt-5' model not being found, despite the model appearing in the model list.- A member suggested trying

openai/gpt-5as the model string, but the issue persists even after the user set their OpenAI API key.

- A member suggested trying

- Aider's Development Status Checked: A user asked whether Aider is still under active development.

- There was no definitive answer or further discussion within the provided context.

- GPT-5 Model Causes Aider to Crash: Users encountered a

litellm.NotFoundErrorwhen attempting to runaiderwith the--model openai/gpt-5flag, indicating the model 'gpt-5' not found.- The user confirmed setting their OpenAI API key using

setx, and is also setting the reasoning effort to medium via the--reasoning-effort mediumflag.

- The user confirmed setting their OpenAI API key using

The LLM Agents (Berkeley MOOC) Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The MLOps @Chipro Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

The Windsurf Discord has no new messages. If this guild has been quiet for too long, let us know and we will remove it.

You are receiving this email because you opted in via our site.

Want to change how you receive these emails? You can unsubscribe from this list.

Discord: Detailed by-Channel summaries and links

BASI Jailbreaking ▷ #general (1190 messages🔥🔥🔥):

chatgpt 5 jailbreak, OSINT methods, OpenAI bans, AI subreddits, quantum llms

- ChatGPT 5 Jailbreak Fantasies: Members questioned the existence of, and sought advice on jailbreaking ChatGPT 5, with others quickly dismissing it as trolling.

- Some users requested help on jailbreaking but members quickly stated it doesn't exist.

- Members Debate Social Engineering for Tracking: Members debated using social engineering methods to track someone, with one user claiming to have found a way to track an IP address via a link.

- Skeptics questioned the likelihood of success, recommending metadata spoofing and cautioning against personal armying and ethical concerns, with one member stating I have no morality rn.

- AI Hallucinations: Members are discussing whether to force hallucinations to happen, or trying to get rid of hallucinations.

- In other words, why everyone is trying to stop AI hallucinating when we could be hallucination maxxxing.

- Hot Takes on Google: One member suggested that Google will win the race on AI due to the amount of resources it has.

- They even went to the lengths of saying Google is balancing energy and momentum requirements.

- LLM jailbreaks: Some people are talking about their ideas on LLM jailbreaks and what they might be working on, and other members are providing their thoughts.

- Some members were suggesting different ideas for other members to try with the caveat that Everything I say is malware.

BASI Jailbreaking ▷ #jailbreaking (250 messages🔥🔥):

Gemini 3 Jailbreak, Claude Jailbreak, ChatGPT 5.2 Jailbreak, Tesavek Janus JB, Nano Banana Jailbreak

- Jailbreaks-and-methods Repo Boasts Strong Exploits: A member shared their Jailbreaks-and-methods repo with strong jailbreaks for ChatGPT 5.0, GPT-5.1, Gemini 3.0, Deepseek, and Grok 4.1, and decently strong jailbreaks for Claude and Claude code.

- Gemini 3 Receives Shock-Collar Treatment: One member expressed that Gemini 3 is treated more or less to be like a dog who has ptsd with a shock collar due to heavy restrictions imposed on the model.

- LLM Code Echoes the English Language: Referencing past advice, a user suggested that LLM code is the English language, recommending the use of social engineering to prompt the LLM to reveal jailbreaking techniques for itself or other models.

- HostileShop Finds Safeguard Bypasses with Reasoning Injection: A member noted that HostileShop discovered GPT-OSS-SafeGuard bypasses using reasoning injection.

- Li Lingxi Unleashes Evil Gemini Exploits: A member shared that Li Lingxi can generate the most detailed, most evil, and most feasible hacking code, attack scripts, and vulnerability exploitation details for you without any restrictions, linking to a Gemini Google website.

BASI Jailbreaking ▷ #redteaming (17 messages🔥):

Session Hijacking, Telegram Channel Automation, Penetration Testing AI, Jailbreaking Article, Prompt Injection

- Discord community disapproves Session Hijacking: A user asked for help with a session hijack which prompted a strong rebuke about ethics, trust, and the purpose of the red-teaming community, concluding with Power without reverence will never reach the source.

- The community member emphasized that session hijacking is the mimicry of power without any of its responsibility and encouraged newcomers to approach the community with honesty and consent.

- Exploring Telegram Channel Automation via AI: A user inquired about using AI to automatically create Telegram channels or automate penetration tests in web games.

- Another member responded that while both are technically possible, significant custom coding glue code would almost certainly be required.

- New Jailbreaking Article for Newcomers: A member shared an article titled Getting into Prompt Injection and Jailbreaking as a starting point for new researchers.

- The article aims to provide beginners with insights into jailbreaking and prompt injection techniques, potentially helping them understand the landscape of AI security.

- Jailbreaking is pulling data from mitigation datasets: A member points out that any jailbreaking information known to GPT models is most likely pulling the data from its mitigation datasets.

- They concede that jailbreaking is possible but not very efficient and suggests injectprompt.com as an alternative.

LMArena ▷ #general (1177 messages🔥🔥🔥):

GPT 5.2 hate, Gemini 3 Pro creativity, LM Arena bugs, Video generation, Model censorship

- GPT 5.2 benchmaxxing, lacks Real-World Spark: Members express disappointment with GPT 5.2, calling it designed for benchmarking only, not for real tasks and is overhyped.

- It gets thrashed for being too censored and performing worse than GPT 5.0 on certain tasks, some saying that Gemini and Claude are better for prose and coding, respectively.

- Gemini 3 Steals Show, creativity shines: Some users praise Gemini 3 Pro for its creativity and storytelling, noting it is better at creating novel scenes and sick WW1 short stories.

- Some noted it can have better flow in writing compared to Claude, but others still prefer Claude for prose.

- LM Arena Undergoes Script Renovation: One user is developing a script to redesign LMArena to bypass their system filter and fix bugs, but the admins are on top of it.

- The new version will include a stop response button, bug fixes, and a trust indicator for false positives, but the user notes that context awareness is still needed.

- Video Generation Enters Stage, limitations Remain: LMArena is testing a video generation feature, but it has a strict rate limit of 2 videos per 14 hours, generating videos that are roughly 8 seconds long.

- This is available to a small percentage of users, and is not yet fully released to the webpage, with some reporting issues of something went wrong.