Congratulations, you secured the biggest number.

AI News for 2/26/2026-2/27/2026. We checked 12 subreddits, 544 Twitters and 24 Discords (263 channels, and 12529 messages) for you. Estimated reading time saved (at 200wpm): 1189 minutes. AINews’ website lets you search all past issues. As a reminder, AINews is now a section of Latent Space. You can opt in/out of email frequencies!

Against the backdrop of nonstop positioning with the Department of War (Anthropic refusing terms vs OpenAI doing a deal), OpenAI finally closed the much debated Big Round that had been started since December. In the post, they make several interesting new disclosures:

- Weekly Codex users have more than tripled since the start of the year to 1.6M

- was 1M on Feb 4 (!!?!?!)

- More than 9 million paying business users rely on ChatGPT for work

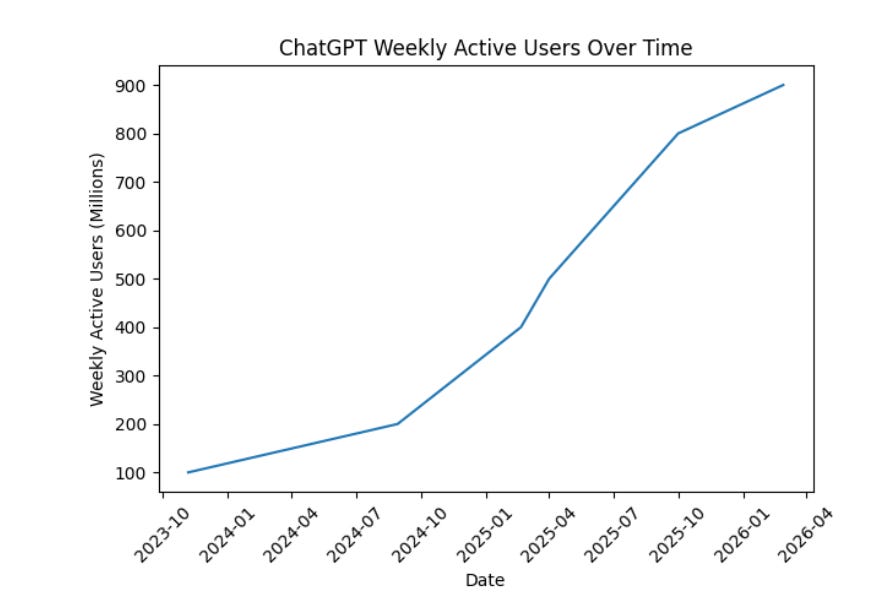

- ChatGPT is where people start with AI, with more than 900M weekly active users, and we now have more than 50 million consumer subscribers (monetization continuing to accelerate in Jan/Feb)

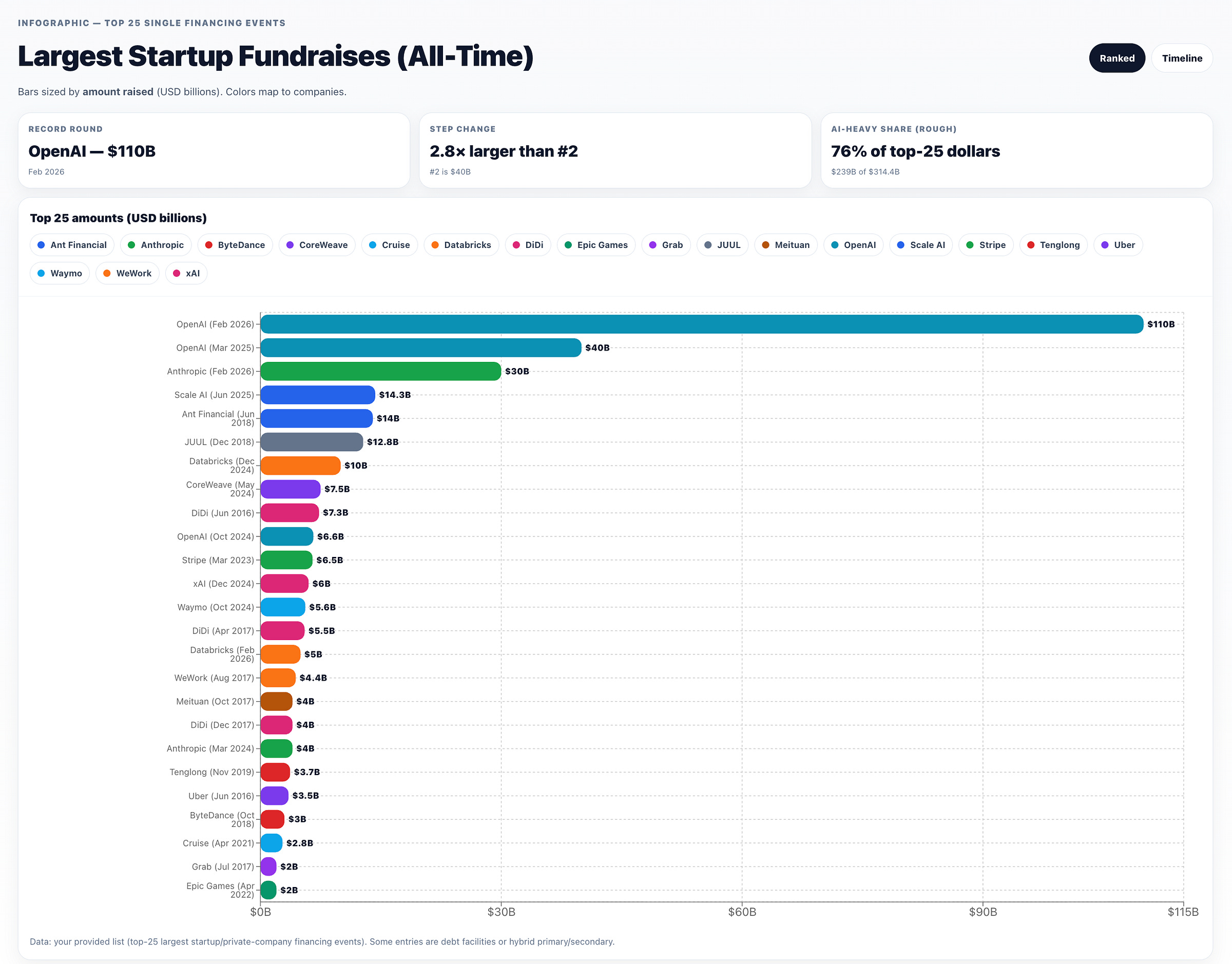

All this justifies $110B in new investment at a $730B pre-money valuation:

- $30B from SoftBank (“advancing our own ASI strategy”),

- $30B from NVIDIA (including the use of 3 GW of dedicated inference capacity and 2 GW of training on Vera Rubin systems) - down from “up to $100B”, still with circular funding concerns

- $50B from Amazon with increased partnership (analysis) involving:

- an initial $15 billion investment and followed by another $35 billion in the coming months when certain conditions are met — leaving Amazon with a large stake in both OpenAI and Anthropic

- “Stateful Runtime Environment” powered by OpenAI on Amazon Bedrock

- AWS will be the exclusive third-party cloud provider for OpenAI Frontier

- 2 gigawatts of Trainium capacity through AWS infrastructure worth “$100 billion over 8 years”, spanning both Trainium3 and next-gen Trainium4 chips

Close watchers might notice the absence of Microsoft, which continues the existing reduced partnership and gets the stateless APIs.

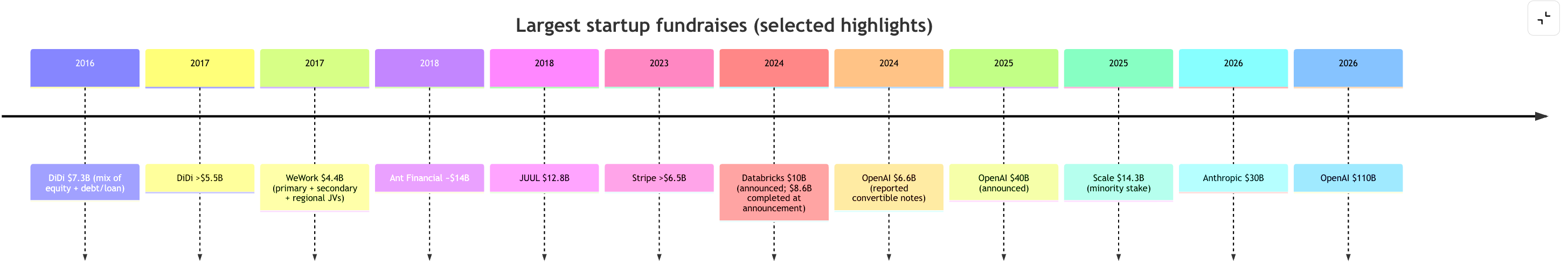

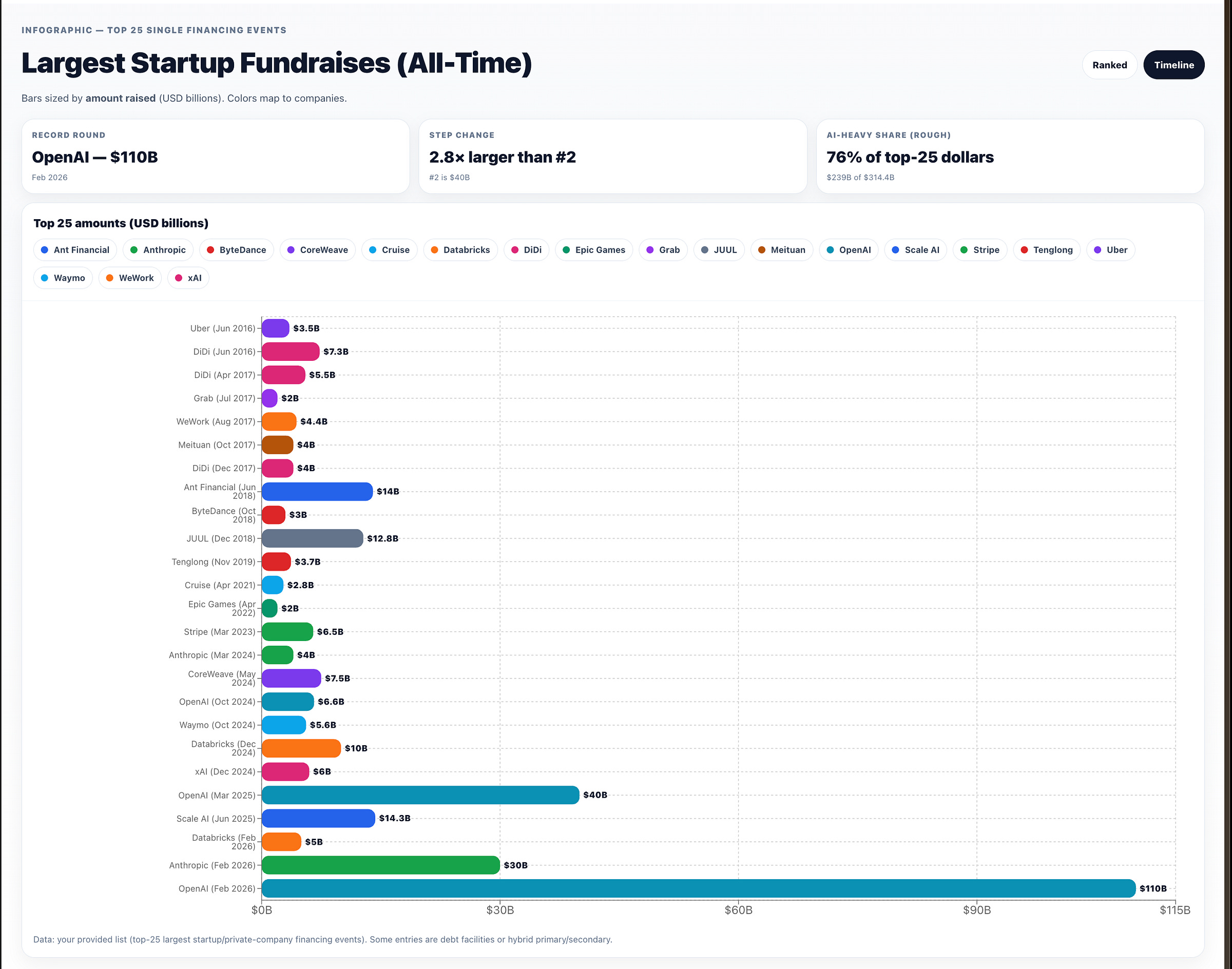

To put this in perspective, 118 countries/economies have a nominal GDP below $100B — roughly 61% of all world economies. Because the consecutive “largest fundraises in history” are too big to fit in a human head, here’s a chart worthy of wtfhappened2025.com:

and outside of AI, a 10 year history:

and here from OpenAI Deep Research + ChatGPT Canvas, sorted by descending amount:

or a timeline perspective:

AI Twitter Recap

Hypernetworks for instant LoRA “compilation”: Doc-to-LoRA + Text-to-LoRA

- Doc-to-LoRA / Text-to-LoRA (Sakana AI): Sakana introduces two related methods that amortize customization cost by training a hypernetwork to generate LoRA adapters in a single forward pass, turning what would be fine-tuning / distillation / long-context prompting into “instant weight updates.” The core claim: instead of keeping everything in an expensive active context window, you can compile task descriptions or long documents into adapter weights with sub-second latency, enabling rapid adaptation and “durable memory”-like behavior (SakanaAILabs, hardmaru).

- Text-to-LoRA: specializes to unseen tasks from just a natural language description (SakanaAILabs).

- Doc-to-LoRA: internalizes factual documents; on needle-in-a-haystack, reports near-perfect accuracy on sequences ~5× longer than the base model context window, and even demonstrates a cross-modal trick: transferring visual information from a VLM into a text-only model via internalized weights (SakanaAILabs; recap thread omarsar0).

- Positioning vs long-context: explicitly framed as a way to reduce quadratic attention costs and avoid rereading long docs at every call—store knowledge in adapters rather than tokens (omarsar0).

- Credit / prior art tension: One researcher complains that Hypersteer (hypernetworks producing steering vectors from text descriptions) did not get sufficient credit in later similar work (aryaman2020). There’s also broad community excitement / “hypernetworks are back” reactions (willdepue, zhansheng).

- Open question raised: why not just use attention with an extremely long KV cache—i.e., is Doc-to-LoRA mainly about efficiency/serving cost? (hyhieu226)

OpenAI financing + deployment transparency tooling

- $110B funding round: OpenAI announces a $110B raise with backing from Amazon, NVIDIA, SoftBank, framed as scaling infra “to bring AI to everyone” (OpenAI, sama). A separate note from Epoch AI contextualizes the scale: The round would nearly triple total capital raised to date; The Information reportedly projects $157B cash burn through 2028, and this round + existing cash would roughly match that projection (EpochAIResearch).

- Deployment Safety Hub: OpenAI launches a searchable site to browse “system cards” (previously PDFs) as a more accessible interface to deployment safety documentation (dgrobinson).

US DoD (“Department of War”) vs Anthropic saga: supply-chain designation, backlash, and industry implications

- Anthropic draws a line; tech reacts: A central flashpoint is Anthropic’s public refusal to enable mass domestic surveillance and fully autonomous weapons (as characterized by posters reacting to Anthropic’s statement), which drew rare cross-competitor praise and heightened attention to “red lines” in frontier deployment (mmitchell_ai, ilyasut).

- Designation shock + legal scope debate: Posts circulate a claimed DoW move to designate Anthropic a “Supply-Chain Risk to National Security” and to pressure contractors/partners—sparking arguments about legality, precedent, and chilling effects (kimmonismus, deanwball). One legal clarification: DoD can restrict what contractors do on DoD contract work, but likely can’t legally ban contractors from using Anthropic in their private/commercial work (petereharrell).

- Economic/strategic fallout framing: The sharpest critiques argue this would damage US credibility as a business partner and potentially force hyperscalers/investors into impossible tradeoffs (deanwball); others note uncertainty until full details are known but still see a supply-chain designation as ill-fitting (jachiam0).

- Public sentiment spike: Posts highlight strong public outrage at the idea of a DoD-backed domestic surveillance program and punishment for refusal (quantian1, janleike). Many users signal “solidarity subscriptions” to Claude (willdepue, Yuchenj_UW).

- Anthropic statement and intent to litigate: Anthropic posts an official statement responding to Secretary Hegseth’s comments (AnthropicAI). Commentary highlights the line “challenge any supply chain risk designation in court” and emphasizes the dispute over restricting customers outside DoD contract scope (iScienceLuvr).

- Meta-point: Regardless of where one lands on Anthropic’s choices, many posts treat this as a governance precedent moment: who decides acceptable use, what due process exists, and how contracts interact with fast-moving model capabilities (kipperrii).

Models + leaderboards: Qwen3.5 expansion and “open model” rankings

- Qwen3.5 new releases (Artificial Analysis summary): Alibaba expands Qwen3.5 with 27B dense, 122B A10B MoE, and 35B A3B MoE, all Apache 2.0, 262K context (extendable to 1M via YaRN per the post). Artificial Analysis reports Intelligence Index scores: 27B = 42, 122B A10B = 42, 35B A3B = 37, with notable agentic/task metrics like GDPval-AA 1205 for 27B, plus detailed tradeoffs (hallucination/accuracy and token usage—27B used 98M output tokens to run the index) (ArtificialAnlys).

- Arena leaderboards (Feb 2026): Arena posts Top Open Models for text and code. Text top-3: GLM-5 (1455), Qwen-3.5 397B A17B (1454), Kimi-K2.5 Thinking (1452) (arena). Code Arena top includes GLM-5 (1451) at #1, with Kimi-K2.5 and MiniMax-M2.5 tied at #2 (arena). Arena also highlights Arena-Rank, their open-source ranking package for reproducible leaderboards (arena).

- Perplexity open-sources bidirectional embedding models (claim): A thread claims Perplexity open-sourced bidirectional “Qwen3-retrained” embedding models (0.6B/4B; standard vs context-aware embeddings; MIT licensed) to improve document-level understanding for retrieval; treat as a third-party summary rather than primary release notes (LiorOnAI).

Systems, inference, kernels, and RL training: bandwidth, ROCm, and off-policy RL

- vLLM ROCm attention backends (AMD): vLLM announces 7 attention backends for vLLM on ROCm with KV-cache layout changes, batching tricks, and model-specific kernels; reported up to 4.4× decode throughput on AMD GPUs with an env var switch (

VLLM_ROCM_USE_AITER=1) (vllm_project). A follow-up details MLA KV compression claims (e.g., ~8K → 576 dims) and throughput wins on MI300X/MI325X/MI355X (vllm_project). - DeepSeek DualPath I/O paper (third-party explainer): A ZhihuFrontier summary describes a DeepSeek+THU+PKU paper proposing system-level redesign of Prefill/Decode to exploit idle storage NIC bandwidth on decode nodes via RDMA, aiming at KV-cache movement bottlenecks for agentic long-context inference; includes claimed speedups (e.g., 1.87× on DS-660B) with caveats for smaller models (ZhihuFrontier).

- Kernel/infra chatter (“quack”, Liger): A thread points to Dao-AILab’s quack writeup on memory hierarchy bandwidth, plus a note that Liger not using cluster-level reductions for xentropy could explain slower performance in some settings (fleetwood___).

- Off-policy RL for reasoning (Databricks MosaicAI): Databricks promotes OAPL (Optimal Advantage-based Policy Optimization with lagged inference policy) as a stable off-policy alternative that can match/beat GRPO while using ~3× fewer training generations, positioned as operationally simpler than strict on-policy loops (DbrxMosaicAI, jefrankle).

- ERL vs RLVR (Turing Post explainer): A long “workflow breakdown” contrasts standard RLVR (scalar verifiable rewards) with Experiential Reinforcement Learning (ERL) inserting within-episode reflection/retry + distillation; cites reported gains (e.g., +81% Sokoban) and tradeoffs (pipeline complexity/compute) (TheTuringPost).

- Mamba-2 / GDN initialization bug discussion: Albert Gu clarifies a viral plot debate: main takeaway is an init bug materially affecting some results; also notes nuanced interactions in hybrids (e.g., “stronger” components can make others “lazy,” with a related reference) (_albertgu, _albertgu).

Top tweets (by engagement, technical / industry-relevant)

- OpenAI raises $110B (sama, OpenAI)

- Sakana AI Doc-to-LoRA / Text-to-LoRA (SakanaAILabs, hardmaru)

- Anthropic–DoD supply-chain designation critique / governance precedent (deanwball, quantian1, janleike)

- Karpathy on coding workflow evolution (tab → agents → parallelism) (karpathy)

- Karpathy on “programming a research org” with multi-agent workflows; limitations observed (karpathy)

- Anthropic official statement (AnthropicAI)

AI Reddit Recap

/r/LocalLlama + /r/localLLM Recap

1. Qwen3.5-35B-A3B Model and Benchmark Updates

-

New Qwen3.5-35B-A3B Unsloth Dynamic GGUFs + Benchmarks (Activity: 714): The Qwen3.5-35B-A3B Unsloth Dynamic GGUFs update introduces state-of-the-art (SOTA) performance across various quantization levels, with over

150 KL Divergence benchmarksconducted, resulting in9TB of GGUFs. The update includes a fix for a tool-calling chat template bug affecting all quant uploaders. The benchmarks demonstrate99.9% KL Divergenceon the Pareto Frontier for UD-Q4_K_XL and IQ3_XXS, among others. The update retires MXFP4 from most GGUF quants, except for select layers, and highlights the sensitivity of certain tensors to quantization, recommending specific bit widths for optimal performance. The research artifacts, including KLD metrics and configurations, are available on Hugging Face. Commenters appreciate the detailed analysis and acknowledge that while KLD and perplexity are useful starting points, they do not fully capture real-world performance. The accessibility of the Qwen3.5-35B-A3B model for testing is also praised, contrasting with larger models that require more resources.- The discussion highlights the importance of evaluating models on downstream tasks, as traditional metrics like Perplexity (PPL) and Kullback-Leibler Divergence (KLD) are insufficient alone. The Unsloth team’s analysis is praised for its depth, likened to a research study, and emphasizes the need for comprehensive testing beyond basic metrics.

- AesSedai, a commenter, appreciates the accessibility of the Qwen3.5-35B-A3B model for testing, contrasting it with larger models like GLM-5 and M2.5 that require significant resources. They mention ongoing efforts in quantization research, such as a new quant type IQ3_PT for llama.cpp, and express enthusiasm for the community’s focus on improving quantization techniques.

- Far-Low-4705 emphasizes the significance of publishing perplexity and KLD metrics for every quantization, noting that it should be a standard practice. This transparency is seen as a valuable resource for the community, providing essential references for evaluating model performance.

-

Follow-up: Qwen3.5-35B-A3B — 7 community-requested experiments on RTX 5080 16GB (Activity: 747): The follow-up post on Qwen3.5-35B-A3B benchmarks on an RTX 5080 16GB confirms that KV q8_0 is a ‘free lunch’ with negligible PPL differences, offering a

+12-38%throughput increase without quality loss. The Q4_K_M quantization remains optimal, while UD-Q4_K_XL shows significantly worse performance in KL divergence tests, confirming its inferiority. Removing batch flags with--fit onimproves throughput to74.7 tok/s, a+7%increase over manual configurations. The experiments also reveal that Bartowski Q4_K_L offers better quality but is44% slower, and MXFP4_MOE is not recommended due to a34-42%speed penalty despite marginal quality gains. The 27B dense model is10x slowerthan the 35B-A3B MoE on single-GPU setups, highlighting the efficiency of MoE architectures for consumer hardware. Commenters appreciate the confirmation that KVq8_0is a ‘free lunch’, noting its potential to save VRAM. There is also interest in the MXFP4’s speed struggles despite recommendations, indicating a need for further exploration of its performance.- The experiments on Qwen3.5-35B-A3B reveal that the KV

q8_0configuration is highly efficient, offering significant VRAM savings without compromising perplexity (PPL) performance. This finding is crucial for optimizing models on hardware with limited memory, such as the RTX 5080 16GB. The results suggest that the perceived accuracy drops reported by some users may be task-specific, as they do not appear in the PPL metrics, indicating a potential for broader application without significant performance loss. - The performance of MXFP4 was noted to be suboptimal in terms of speed, despite recommendations from Unsloth. This highlights the importance of testing different configurations as recommended settings may not always yield the best performance across all metrics. The community’s detailed analysis and sharing of over 120 variants on platforms like Hugging Face provide valuable insights for those looking to optimize their models.

- There is interest in whether the results observed for UD-Q4_K_XL versus Q4_K_M configurations would be similar for UD-Q5_K_XL versus Q5_K_M. This suggests ongoing exploration in the community to understand how different quantization strategies impact model performance, particularly in terms of balancing speed and accuracy.

- The experiments on Qwen3.5-35B-A3B reveal that the KV

-

Qwen3.5-35B-A3B Q4 Quantization Comparison (Activity: 747): The post presents a detailed comparison of Q4 quantization methods for the Qwen3.5-35B-A3B model, focusing on their faithfulness to the BF16 baseline using metrics like KL Divergence (KLD) and Perplexity (PPL). AesSedai’s Q4_K_M quantization achieves the lowest KLD of

0.0102, indicating high faithfulness, by maintaining certain tensors at Q8_0. Ubergarm’s Q4_0 also performs well, outperforming other Q4_0 methods by a factor of 2.5. The post highlights that MXFP4 is less effective when applied post-training compared to during Quantization Aware Training (QAT). Unsloth’s UD-Q4_K_XL shows the highest KLD at0.0524, but improvements are underway. The efficiency score ranks quantizations based on size and KLD, with AesSedai’s IQ4_XS being the most efficient. The setup includes an Intel Core i3-12100F CPU, 64 GB RAM, and an RTX 3060 GPU, usingik_llama.cppfor testing. Commenters emphasize the need for standardized quantization benchmarks and documentation, suggesting that quantizers include such metrics in their READMEs. Unsloth is actively investigating the high perplexity issue with MXFP4 in Q4_K_XL and plans to update the community soon.- The discussion highlights the need for standardized definitions in quantization, particularly terms like “Q4_K_M,” as their meanings can vary significantly between implementations. This lack of standardization makes it difficult to compare different quantization methods effectively. The suggestion is for quantizers to include detailed explanations in their documentation to aid in understanding and comparison.

- A technical investigation is underway to understand why MXFP4 layers are causing high perplexity in Q4_K_XL quantizations. The issue does not affect other quantizations like Q2_K_XL and Q3_K_XL, which do not use MXFP4 layers. The dynamic methodology used in MiniMax-M2.5 shows promising results, especially in Q4_K_XL, as evidenced by Benjamin Marie’s benchmarks on LiveCodeBench v5, where UD-Q4-K-XL outperforms Q4-K-M.

- There is a concern about using wikitext as a dataset for measuring perplexity and Kullback-Leibler divergence (KLD) because some imatrix datasets might include wikitext, potentially skewing results. A fresh dataset, such as one derived from recent podcasts, is recommended for more accurate comparisons. This issue is discussed in the context of ensuring fair and unbiased benchmarking.

2. DeepSeek and DualPath Research

-

DeepSeek allows Huawei early access to V4 update, but Nvidia and AMD still don’t have access to V4 (Activity: 614): DeepSeek has provided early access to its V4 AI model update to Huawei and other domestic suppliers, aiming to optimize the model’s performance on their hardware. This strategic move excludes major US chipmakers like Nvidia and AMD, who have not received access to the update. The decision is likely influenced by the need for compatibility and optimization on non-Nvidia hardware, as DeepSeek’s models are typically trained on Nvidia platforms. Source. Commenters speculate that Nvidia might not need early access since DeepSeek models are generally optimized for Nvidia hardware. The focus on Huawei suggests a need for compatibility with non-Nvidia systems, which might not be newsworthy given past access patterns.

- jhov94 suggests that DeepSeek is likely optimized for Nvidia hardware, implying that Nvidia may not need early access to the V4 update. The early release to Huawei could be due to compatibility issues with their hardware, which might not natively support DeepSeek models.

- ResidentPositive4122 reflects on past media hype around DeepSeek, particularly the claims that it would revolutionize the industry and run on low-power devices like Raspberry Pi. They express skepticism about mainstream media reports and suggest that major inference providers will adapt to V4 shortly after its release, as is typical with new model launches.

- stonetriangles questions the significance of Nvidia not receiving early access to V4, noting that Nvidia did not have early access to previous versions like R1, V3, or V3.2. This implies that Nvidia’s lack of early access to V4 is consistent with past practices and may not be noteworthy.

-

DeepSeek released new paper: DualPath: Breaking the Storage Bandwidth Bottleneck in Agentic LLM Inference (Activity: 232): The paper titled “DualPath: Breaking the Storage Bandwidth Bottleneck in Agentic LLM Inference” introduces a novel inference system developed by researchers from Peking University, Tsinghua University, and DeepSeek-AI. The system, named DualPath, aims to optimize Large Language Model (LLM) inference by addressing the storage I/O bandwidth limitations of KV-Cache under agentic workloads. The architecture is designed to enhance performance in memory-bound scenarios, potentially offering significant improvements over existing benchmarks. A commenter expressed interest in how the DualPath architecture addresses KV cache bandwidth issues across different hardware configurations, questioning whether real-world improvements align with the reported benchmarks.

- The paper addresses the KV cache bandwidth issue by introducing a dual path architecture, which could potentially alleviate memory-bound scenarios. However, there is curiosity about whether the real-world improvements align with the benchmarks presented, especially across different hardware configurations.

- There is skepticism about the dual-path approach’s effectiveness in scenarios where agent trajectories diverge unpredictably during execution. This is because agentic workloads typically have less predictable access patterns compared to standard serving, which could challenge the dual-path architecture’s efficiency.

- A question is raised about the availability of a 27 billion parameter version of the model, suggesting it might be an internal-only release. This implies interest in the scalability and accessibility of the model for broader use cases.

3. Self-Hosted LLM Tools and Leaderboards

-

LLmFit - One command to find what model runs on your hardware (Activity: 274): The image showcases a terminal interface for LLmFit, a tool designed to match machine learning models to specific hardware configurations. It evaluates models based on system RAM, CPU, and GPU capabilities, providing scores for quality, speed, fit, and context. The tool supports multi-GPU setups, MoE architectures, and dynamic quantization, offering both a TUI and CLI mode. The interface in the image lists models, providers, and scores, with hardware specs indicating an Intel Core i7 CPU, 13.7 GB RAM, and an NVIDIA GeForce RTX 4060 GPU. This tool aims to optimize model selection for given hardware constraints. Some users express skepticism about the tool’s recommendations, noting discrepancies in model performance and fit scores compared to their own experiences. One user questions the accuracy of the ‘Use Case’ and ‘tok/sec’ columns, suggesting they may not be reliable indicators of model suitability.

- Dismal-Effect-1914 points out a potential issue with LLmFit’s recommendations, specifically mentioning that

llama.cppdoes not supportnvfp4quantizations. This suggests that the tool might not accurately reflect the capabilities of certain models or hardware configurations, and users might find better results through personal experimentation. - Yorn2 shares a detailed comparison of LLmFit’s recommendations versus their own experience. They note that LLmFit suggests

bigcode/starcoder2-7bas the best model for their setup, with a score of 79 and 27 tokens/sec, despite their current modelmratsim/MiniMax-M2.5-BF16-INT4-AWQachieving 60-70 tokens/sec. This discrepancy raises questions about the accuracy of LLmFit’s scoring and token/sec metrics, suggesting that the tool’s evaluation criteria might not align with real-world performance. - Deep_Traffic_7873 questions the uniqueness of LLmFit by comparing it to Hugging Face’s capabilities, which also allow users to set hardware configurations in their web UI. This implies that LLmFit might not offer a distinct advantage over existing solutions, particularly if it doesn’t provide more accurate or useful recommendations.

- Dismal-Effect-1914 points out a potential issue with LLmFit’s recommendations, specifically mentioning that

-

Self Hosted LLM Leaderboard (Activity: 680): The image presents a leaderboard for self-hosted large language models (LLMs), categorizing them into tiers from S to D based on performance metrics such as Coding, Math, Reasoning, and Efficiency. The leaderboard is hosted on Onyx and has recently been updated to include the Minimax M2.5 model. The models are listed with their parameter sizes, indicating their computational capacity. This leaderboard serves as a resource for comparing the capabilities of various LLMs in a self-hosted environment. Commenters suggest that the Qwen 3.5 models, particularly the 27b dense and 122b MoE, should be included in the leaderboard due to their strong performance and vision capabilities, which are beneficial for homelab and small business applications. There is also a call for the inclusion of the qwen3-coder-next model in the coding category.

- The Qwen 3.5 models, particularly the 27B dense and 122B MoE, are highlighted for their potential to rank in the A-tier or B-tier of self-hosted LLMs. These models are noted for their vision capabilities, which are beneficial for homelab and small business applications, suggesting they offer a competitive edge in practical deployment scenarios.

- The absence of the Qwen3-Coder-Next model from a coding-focused leaderboard is criticized, as it is considered one of the best models for running on standard hardware. The Qwen3-Next and Qwen3-Coder-Next, both at 80B parameters, are praised for their performance and accessibility, making them suitable for users without specialized hardware.

- A query about the hardware requirements for running S-tier models suggests a need for clarity on the computational demands of top-performing LLMs. This indicates a gap in information for users looking to optimize their setups for high-tier model performance.

Less Technical AI Subreddit Recap

/r/Singularity, /r/Oobabooga, /r/MachineLearning, /r/OpenAI, /r/ClaudeAI, /r/StableDiffusion, /r/ChatGPT, /r/ChatGPTCoding, /r/aivideo, /r/aivideo

1. Anthropic vs. Pentagon Standoff

-

Trump goes on Truth Social rant about Anthropic, orders federal agencies to cease usage of products (Activity: 4293): The image is a meme featuring a screenshot of a Truth Social post by Donald J. Trump, where he criticizes the AI company Anthropic, labeling it as a ‘radical left, woke company.’ Trump orders federal agencies to stop using Anthropic’s technology, citing national security concerns, and mandates a six-month phase-out period. This post is likely satirical, as it reflects ongoing debates about AI ethics, privacy, and government surveillance, but does not correspond to any verified public statement or policy by Trump. Commenters highlight the irony in labeling opposition to mass surveillance as ‘radical left,’ and express increased interest in Anthropic’s products due to the criticism.

-

Pentagon designates anthropic as a supply chain risk (Activity: 1237): The image is a meme-style screenshot of a tweet criticizing the U.S. government’s designation of Anthropic as a supply chain risk. The tweet accuses Anthropic of refusing to provide unrestricted access to their AI models for defense purposes, prioritizing ethical guidelines over national security demands. This has led to a directive to cease federal use of Anthropic’s technology, highlighting tensions between tech companies’ ethical stances and government security requirements. The comments express strong support for Anthropic’s decision to maintain ethical boundaries, criticizing the government’s actions as authoritarian and a misuse of national security designations to punish dissent. Commenters praise Anthropic for resisting pressure to compromise their ethical standards.

-

The Under Secretary of War gives a normal and sane response to Anthropic’s refusal (Activity: 1184): The image is a tweet from the Under Secretary of War, Emil Michael, criticizing Dario Amodei of Anthropic for refusing a Pentagon offer related to AI safeguards. The tweet accuses Amodei of having a ‘God-complex’ and wanting to control the US Military, while emphasizing that the Department of War will adhere to the law and not yield to for-profit tech companies. This response follows an Axios article about Anthropic’s stance against certain military applications of AI, particularly those involving autonomous lethal weapons and mass surveillance, which are deemed dangerous by AI ethicists. Comments highlight the unprofessional tone of the Under Secretary’s response and support Anthropic’s stance against state overreach, emphasizing the ethical concerns around AI in military applications.

- ChrisWayg highlights a response from Claude, an AI developed by Anthropic, emphasizing the ethical considerations behind Anthropic’s refusal to comply with certain government demands. Claude argues that the refusal to support autonomous lethal weapons and mass surveillance aligns with the views of many AI ethicists who see these applications as dangerous and beyond the safe capabilities of current technology. This stance is supported by Anthropic’s co-founder, Amodei, who has publicly stated that such uses are outside the bounds of what today’s AI can safely achieve, as reported by NPR.

- The discussion touches on the broader theme of corporate resistance to government overreach, particularly in the context of AI ethics. Claude’s response suggests that Anthropic’s decision is a form of principled resistance, which is often celebrated by libertarian viewpoints. This highlights a tension between government demands for compliance and private companies’ ethical stances, especially when it comes to technologies that could infringe on civil liberties.

- The technical debate centers around the capabilities and ethical implications of AI in surveillance and military applications. The refusal by Anthropic to provide AI tools for mass surveillance and autonomous weapons is framed as a necessary ethical boundary, reflecting a consensus among AI ethicists about the potential dangers of such technologies. This is contrasted with the expectations of some government officials who may view these technologies as necessary for national security, creating a conflict between ethical AI development and governmental demands.

-

Sam Altman says OpenAI shares Anthropic’s red lines in Pentagon fight (AI safeguards) (Activity: 695): OpenAI CEO Sam Altman has aligned with Anthropic in opposing the use of AI for mass surveillance and autonomous weapons, emphasizing ethical ‘red lines’. OpenAI is negotiating with the Department of Defense (DOD) to implement technical safeguards, such as cloud-only deployment, to ensure ethical AI use in military contexts. This stance may affect the Pentagon’s plans to replace Anthropic’s AI model, Claude, in sensitive operations. Source The comments reflect a mix of support and skepticism, with some users expressing concern over AI’s potential role in government decisions, highlighting the ethical implications of AI deployment in military contexts.

-

Anthropic rejects Pentagon’s “final offer” in AI safeguards fight (Activity: 3744): Anthropic has rejected the Pentagon’s final offer concerning the deployment of its AI model, Claude, due to inadequate safeguards against mass surveillance and autonomous weapons. The Pentagon has threatened to blacklist Anthropic and potentially invoke the Defense Production Act to enforce compliance. Despite the impasse, Anthropic is open to further negotiations, emphasizing its commitment to ethical AI practices. For more details, see the Axios article. Commenters support Anthropic’s stance, highlighting the minimal nature of their demands, which include avoiding mass domestic surveillance and fully autonomous weapons. The rejection by the Pentagon is seen as surprising given these basic ethical concerns.

-

Anthropic Rejects Pentagon offer [Statement from Dario Amodei on our discussions with the Department of War] (Activity: 531): Anthropic, led by Dario Amodei, has publicly declined an offer from the Pentagon to collaborate on military applications of AI, as detailed in their official statement. The company emphasizes its commitment to ethical AI development, focusing on safety and alignment rather than military use. This decision aligns with Anthropic’s broader mission to develop AI systems that are beneficial and safe for humanity, as opposed to contributing to warfare technologies. The comments reflect a positive reception of Anthropic’s decision, with users expressing support for the company’s principles and ethical stance. There is also a mention of Anthropic’s AI model, Claude, being favored for coding and chat, despite some limitations in usage.

-

Anthropic CEO stands firm as Pentagon deadline looms (Activity: 1010): Anthropic CEO Dario Amodei has refused the Pentagon’s request to remove safety guardrails from the Claude AI model, emphasizing ethical concerns over granting the military unrestricted access. This decision comes amid threats of a government ban, as Anthropic opposes the use of its technology for lethal autonomous weapons and mass surveillance. The company’s stance highlights a commitment to ethical AI deployment, resisting pressures to compromise on safety and civil liberties. Commenters highlight the ethical implications of Anthropic’s decision, noting the potential for mass surveillance as a more immediate concern than autonomous weapons. The debate touches on the broader impact on civil liberties and the political landscape, with some suggesting the move is related to electoral interference.

2. Nano Banana 2 and Gemini 3.1 Developments

-

Google releases Nano banana 2 model (Activity: 1096): Google has released the Nano Banana 2 model, an advanced AI image generation tool that integrates professional-grade capabilities with rapid processing speeds. The model is designed with enhanced world knowledge, production-ready specifications, and improved subject consistency, allowing for efficient generation of high-quality images. More details can be found in the official blog post. Users are impressed with the model’s performance, noting significant improvements in tasks it previously struggled with, such as complex image generation scenarios like home remodeling.

- The Nano Banana 2 model is being discussed in terms of its performance improvements over previous iterations, particularly in image generation tasks. Users are noting significant enhancements in handling complex scenarios, such as architectural remodeling, which were challenging for earlier models. This suggests a substantial upgrade in the model’s ability to understand and generate detailed visual content.

- Despite the advancements, there are still limitations noted with the Nano Banana 2 model, such as its inability to generate PNG images without a background. This indicates that while the model has improved in many areas, there are still specific technical constraints that need addressing, particularly in terms of output format flexibility.

- The release of the Nano Banana 2 model is seen as a step towards achieving more realistic and consistent image generation, with some users expressing that it brings us closer to ‘solving’ image generation challenges. This reflects a broader trend in AI development where models are increasingly capable of producing high-quality, realistic images across various contexts.

-

Gemini 3.1 Flash (Nano Banana 2) Spotted Live in Gemini Ahead of Official Release (Activity: 315): The image highlights the early appearance of the Gemini 3.1 Flash, also known as Nano Banana 2, within the Gemini interface, indicating a possible staged rollout before its official release. The interface shows a loading message for “Nano Banana 2,” suggesting that the model is accessible and can be selected by users, although no formal announcement has been made yet. This early access could be part of a testing phase or a soft launch strategy by the developers. One comment notes the impressive detail in the model, specifically mentioning a bird visible in the eye of a subject in the image, indicating high-quality rendering capabilities of the model.

-

Nano Banana 2 pricing !!!! (Activity: 307): The image provides a pricing comparison between two image generation models, “Nano Banana 2” and “Nano Banana Pro.” “Nano Banana 2” is positioned as a cost-effective option with a focus on speed and reality-grounded capabilities, priced at

$0.50for input and$3.00for output. In contrast, “Nano Banana Pro” is marketed as a more advanced model with higher pricing at$2.00for input and$12.00for output. Both models have a knowledge cut-off date of January 2025, indicating they are designed to incorporate the latest advancements up to that point. The discussion in the comments highlights that “Nano Banana 2” offers competitive performance at a lower cost compared to the “Pro” version, making it a favorable choice for users prioritizing cost-efficiency and speed. Commenters note that “Nano Banana 2” provides similar performance to “Nano Banana Pro” while being more cost-effective and slightly faster. However, some users express disappointment with the pricing, expecting it to be cheaper. Comparisons are also made with other models like “Gemini 3 Pro Image” and “Gemini 3.1 Flash Image,” which have different pricing structures based on resolution.- The Nano Banana 2 is reported to be more cost-effective than the Pro version, being approximately twice as cheap while offering slightly faster performance. This suggests a favorable cost-to-performance ratio for users looking for efficiency and budget-friendly options.

- The pricing structure for the Gemini 3 Pro and Gemini 3.1 Flash Image models is detailed, with the Pro charging 560 tokens per input image and scaling costs by resolution, while the 3.1 Flash Image charges 1120 tokens per input image. The output image costs for 3.1 are slightly cheaper than Pro, but the token cost is higher than expected, making it only marginally more affordable.

- There is a discussion on whether the Nano Banana 2 offers better quality than the Pro version, with some users suggesting that the quality is either better or comparable. This indicates that the Nano Banana 2 might be a competitive option in terms of image quality, alongside its cost advantages.

-

Nano Banana 2 vs Nano Banana: the biggest change I felt first was its improved sense of space and proportion. (Activity: 501): The post compares the image generation capabilities of two AI models, Nano Banana 2 and Nano Banana, using the same detailed prompts. The author notes a significant improvement in the sense of space and proportion in images generated by Nano Banana 2, specifically using the Gemini 3.1 Flash Image engine, compared to the original Nano Banana accessed via CoffeeCat AI. The comparison involves complex prompts that describe intricate scenes, such as a 3D-rendered cartoon sloth and a photorealistic portrait, highlighting the models’ ability to handle detailed and varied visual elements. A notable opinion from the comments suggests that while the original Nano Banana had better overall quality, Nano Banana 2 excels in prompt adherence and understanding, indicating a potential for a future ‘Pro’ version that could significantly enhance performance.

- User ‘plushiepastel’ notes that while the original Nano Banana Pro had better overall quality, the Nano Banana 2 excels in prompt adherence and understanding, suggesting a potential for a future Nano Banana 2 Pro version that could significantly enhance performance.

- User ‘ayu_xi’ argues that Nano Banana 2 should be compared to Nano Banana Pro rather than the original Nano Banana, implying that the improvements in the newer version align more closely with the Pro model’s capabilities.

- User ‘Plus_Complaint6157’ raises a concern about the prevalence of hallucinations in text with Flash Banana 2, describing it as ‘unacceptable quality,’ which highlights a significant issue in the model’s text generation accuracy.

3. AI Model Performance and Optimization

-

We built 76K lines of code with Claude Code. Then we benchmarked it. 118 functions were running up to 446x slower than necessary. (Activity: 596): Codeflash used Claude Code to develop two major features, resulting in

76Klines of code. Upon benchmarking, they discovered118 functionsrunning up to446xslower than necessary due to inefficient code patterns like naive algorithms, redundant computations, and incorrect data structures. For example, a byte offset conversion function was19xfaster after optimization. The issue stems from LLMs optimizing for correctness over performance, lacking iterative optimization and performance prompts. The SWE-fficiency benchmark shows LLMs achieve less than0.23xthe speedup of human experts, highlighting the gap in performance optimization. Commenters noted the importance of integrating performance checks into development workflows, criticizing reliance on LLMs for efficient code. Some suggested adding explicit performance requirements in prompts to improve output, while acknowledging LLMs’ inability to profile or benchmark code.- ThreeKiloZero emphasizes the importance of integrating performance and quality checks into the development workflow. They suggest using tools and GitHub integrations for PR reviews to catch performance issues before code is committed, highlighting that relying solely on initial outputs without these checks is inadequate for serious projects.

- Stunning_Doubt_5123 points out that Claude Code tends to produce functional but inefficient code. They recommend adding explicit performance requirements in documentation, such as preferring O(1) lookups and caching repeated computations, to guide the model towards better coding patterns. They also note the limitation of LLMs in profiling and benchmarking their own outputs, which is crucial for identifying performance bottlenecks.

- inigid discusses the traditional software engineering approach of first making code work and then optimizing it. They argue that this iterative process of improvement is not unique to LLMs but is a common practice among human developers as well, suggesting that performance optimization is a natural part of the development lifecycle.

-

How one engineer uses AI coding agents to ship 118 commits/day across 6 parallel projects (Activity: 114): Peter Steinberger has developed a workflow using 5-10 AI coding agents to manage multiple projects, achieving

118 commits/dayacross48 repositoriesin72 days. His strategy involves acting as the architect and reviewer while AI agents handle implementation. To overcome limitations, he created tools like Peekaboo for macOS UI testing, Poltergeist for hot reloading, Oracle for code review, and custom CLIs for external access. Steinberger emphasizes designing codebases for agent efficiency, not human navigation, resulting in the rapid growth of OpenClaw with228K GitHub stars. Some commenters question the value of high commit counts, suggesting quality over quantity, while others reflect on personal productivity limits and the potential of AI agents to enhance output.- pete_68 discusses the challenges of managing multiple AI coding agents, noting that while they have managed two agents on separate projects, the process involves significant waiting times. They highlight the difficulty of maintaining such productivity, especially as one ages, and reflect on how conditions like autism and ADHD can impact a programmer’s productivity.

- creaturefeature16 criticizes the focus on metrics like lines of code (LoC) and commit counts, arguing that these are not meaningful indicators of software quality. They emphasize that reducing code can often be more valuable, sharing an example where their best commit involved removing 1000 lines of code, which suggests a focus on code efficiency and maintainability.

- amarao_san raises concerns about the ability to maintain context and competence when dealing with large volumes of code produced by AI agents. They argue that without understanding the domain, it’s difficult to assess the quality of the code, especially in critical applications like elevator or car brake systems, where domain expertise is crucial for safety and reliability.

AI Discord Recap

A summary of Summaries of Summaries by gpt-5.1

1. Practical Model Picking: Qwen, GLM, Kimi, Nano Banana, Claude, GPT, Gemini

-

Qwen and GLM Duel in Real-World Coding Workloads: OpenClaw and Unsloth users compared Qwen3.5 and GLM5, reporting that Qwen3.5 35B MOE hits up to 62 TPS on a 4070 Super (Q4KM) and ~25 TPS on a 7900 XT 16GB, while GLM5 is slower but reliably finished long multi‑hour tasks that Qwen “butchered” with broken JSON and indentation (llama.cpp usage here).

- Engineers converged on a split usage pattern: Qwen3.5 for fast scraping/summarization/writing and GLM5 or more conservative models for complex refactors, noting that “about 55% of the time I have qwen update code…it breaks things” and that GLM5 once took 5h20m but almost finished an entire project without catastrophic errors.

-

Kimi-Code and Moonshot: Cheap Tokens, Slow Replies: Across OpenClaw and Moonshot AI servers, heavy coders praised Kimi Code via a direct Moonshot AI Allegretto subscription as cost‑effective, with $39/month unlocking ~5,000 tools plus generous daily/weekly caps, making it attractive for sustained agentic coding workloads.

- However, multiple users complained that the Moonshot API and kimi-code often respond in 20+ seconds and even throw 403s after prepaid annual plans when rules changed, so teams are treating Kimi as a high‑volume but latency‑tolerant backend rather than a tight inner‑loop coding assistant.

-

Nano Banana Models Split the Crowd: In LMArena and OpenAI/Moonshot chats, image and search users contrasted Nano Banana Pro (smoother character swaps, more consistent images) with Nano Banana 2, with one user declaring “So nano banana 2 just a trash” after repeated failures, while OpenAI users lauded Nano Banana 2 for “pro level” web‑first search then answer (Google Nano Banana 2 announcement).

- The emergent pattern is teams preferring Nano Banana 2 for fast, accurate retrieval‑heavy tasks and Nano Banana Pro / other image models for character‑consistent generation, with some Moonshot users simply flagging Nano Banana 2 as delayed or opaque due to minimal public detail.

-

Claude vs GPT vs Gemini: Reasoning, Coding, and Jailbreak Wars: Across BASI Jailbreaking, OpenRouter, Cursor, and OpenAI servers, engineers praised Claude 4.6 for “reasoning” and red‑team workflows, noted GPT‑5-mini as a rock‑solid “heartbeat” checker (free in GitHub Copilot), and complained that Gemini 3.1 Pro is smart but weak at tool calling compared to GPT‑4.6 Opus.

- Users increasingly test Claude as a GPT replacement (e.g., this video walkthrough) while jailbreaking circles hunt for working prompts for Gemini Pro 3 / 3.1 usable on Perplexity, with one player bluntly saying “Yeah, but it sucks ass” about Gemini and others trading or even paying for game‑cheating jailbreak prompts.

-

Claude Code and Agent Teams Face Value Questions: In OpenAI discussions, developers debated paying for Claude Code and its “agent teams” orchestration, which can coordinate multi‑agent planner/worker setups inside Claude Code, versus rolling their own orchestrators on top of cheaper models.

- Some argued that Claude Code’s value only appears if you already prompt at a high level and understand its agent mental model, while skeptics preferred to “use their own brain” plus generic models, given ongoing friction around Anthropic’s availability and government pressure.

2. New Infra, Attention Hacks, and Interpretability Tooling

-

Logit Fusion Hype Fuels Training Experiments: Researchers in the Unsloth community surfaced a Notion explainer on Logit Fusion plus a confirming Bluesky thread, pushing for native Unsloth support for this training scheme that fuses logits from multiple models or checkpoints during training.

- People framed Logit Fusion as a promising low‑infrastructure way to get ensembles‑like benefits and curriculum control inside standard training loops, explicitly asking Unsloth to treat it as a first‑class recipe alongside LoRA/QLoRA rather than a niche experiment.

-

NNsight 0.6 Turbocharges Interp Pipelines: Interpretability folks on Hugging Face and Eleuther shared NNsight v0.6, highlighting 2.4–3.9× faster traces, cleaner error messages, and vLLM multi‑GPU/multi‑node support, with detailed release notes in the blog post “Introducing Nnsight 0.6”.

- The release also ships LLM‑friendly docs meant for agents, first‑class support for 🤗 VLMs and diffusion models, and better hooks for intervening on residual streams, making it much easier to script large‑scale probe sweeps and cross‑layer interventions directly from code or even AI coding assistants.

-

CoDA Attention Slashes KV VRAM with Triton Kernels: An HF community member announced an open‑source Constrained Orthogonal Differential Attention with Grouped‑Query Value‑Routed Landmark Banks (CoDA-GQA-L) mechanism that dramatically reduces KV‑cache VRAM, backed by two custom fused Triton kernels and a 7B Mistral CoDA-GQA-L model on Hugging Face (paper, model).

- They also published the kernels as a PyPI package and are actively seeking full‑time work and an arXiv endorsement, while Eleuther’s research channel dissected CoDA adapter costs, noting that swapping all 32 attention layers and fine‑tuning only 18.6% of parameters degraded Mistral‑7B perplexity from 4.81 → 5.75, quantifying the architectural tradeoff.

-

LLM Connection Strings Aim to Standardize Model URIs: Developers on OpenRouter rallied around Dan Levy’s proposal for LLM Connection Strings, a URI‑style format like

llm://provider/model?param=...to pass all model options as a single CLI arg, detailed in “LLM Connection Strings”.- People liked that this could unify scripts, agents, and CLIs across providers (OpenRouter, local, cloud) without bespoke config files, treating model selection, routing, and options as a standardized URL instead of a pile of ad‑hoc flags.

-

MCP PING Semantics Clash with Real-World Health Checks: The MCP Contributors Discord dissected whether the

pingutility spec is meant to work beforeinitialize, noting that the word “still” suggests it was designed for already‑initialized connections.- Because the Python MCP SDK enforces initialization before ping, Bedrock AgentCore hacked around this by creating a temporary session just to send health‑check pings to customer MCP servers, illustrating how spec ambiguity is already forcing protocol‑level workarounds in production systems.

3. Hardware, Throughput, and GPU-Programming Deep Dives

-

Qwen3.5 35B and GPT-OSS 20B Hit Ludicrous Local Speeds: Unsloth users reported Qwen3.5‑35B MOE running at up to 62 tokens/s on a 4070 Super (Q4KM) and ~25 tokens/s on a 7900 XT 16GB, while Perplexity users benchmarked GPT‑OSS 20B on a MacBook at ~100 tokens/s—producing 1M tokens in under 3 hours.

- These numbers pushed more engineers to seriously consider local inference for bulk generation and as an API backup despite questions about electricity cost vs. API price, especially when paired with GGUF variants and CPU offloading like

unsloth/Qwen3.5-35B-A3B-GGUF.

- These numbers pushed more engineers to seriously consider local inference for bulk generation and as an API backup despite questions about electricity cost vs. API price, especially when paired with GGUF variants and CPU offloading like

-

Colab’s RTX PRO 6000 and Cloud Cost Calculus: The Unsloth community noticed Google Colab quietly adding NVIDIA RTX PRO 6000 instances at about $0.81/hour, which users contrasted against older A100 high‑RAM tiers at roughly $7.52 credits/hour.

- People argued this pricing could make Colab the default cheap pretraining/finetuning playground for indie researchers, especially when combined with Unsloth’s efficient fine‑tuning stack and emerging tricks like Logit Fusion, though long‑running jobs still require careful W&B / protobuf pinning (e.g.,

protobuf==4.25.3).

- People argued this pricing could make Colab the default cheap pretraining/finetuning playground for indie researchers, especially when combined with Unsloth’s efficient fine‑tuning stack and emerging tricks like Logit Fusion, though long‑running jobs still require careful W&B / protobuf pinning (e.g.,

-

GPU MODE Goes Hardcore on PTX, CuTeDSL, and cuTile: In GPU MODE, low‑level hackers debated PTX’s acquire‑release memory model, asking if operations before a release are actually ordered and how

volatileinteracts with ordering, explicitly tying it to distributed‑systems consistency models.- Other threads chased fused compute+comms in CuTeDSL, pointing to an early reduce‑scatter example that uses multimem PTX instructions instead of

nvshmem_put/get, while a separate channel dissected cuTile’s missing primitives (nosort()/ top‑k / prefix‑sum yet) and how to use its FFT sample for content‑based retrieval systems.

- Other threads chased fused compute+comms in CuTeDSL, pointing to an early reduce‑scatter example that uses multimem PTX instructions instead of

-

On-Device Context-Aware Voice Models Reach 520M Scale: Multiple servers (Hugging Face, Perplexity, GPU MODE) highlighted a 520M‑parameter voice model that runs fully on‑device on RTX and Apple Silicon, using full dialogue history to modulate emotion, showcased in Luozhu Zhang’s demo and writeup (contextual voice model tweet).

- The model reads conversation context to produce different emotional readings from the same text, giving practitioners a concrete reference point for real‑time, privacy‑preserving, emotionally‑aware TTS architectures that don’t need server‑side GPUs.

-

Career Pivots into CUDA and Pretraining at Scale: The GPU MODE server fielded multiple career‑switch questions (e.g., 7‑year SWE wanting into CUDA/GPU work), recommending deep dives into early chapters of core GPU books, WSL+CUDA setups, open‑source contributions, and GPGPU side projects like parallel N‑body simulations.

- In parallel, Poolside AI advertised a CUDA pre‑training team role focused on optimizing large‑scale runs on cutting‑edge hardware (job post), explicitly looking for engineers who can go beyond kernels into full‑pipeline performance engineering.

4. Benchmarks, Arenas, and World-Model Research

-

LMArena Expands Code, Image, and Video Leaderboards: LMArena announced gpt‑5.3‑codex entering the Code Arena, added Kling‑V3‑Pro to the Video Arena leaderboard where it tied #8 with a score of 1337 (a +52pt jump over Kling 2.6 Pro and +48pt over Kling‑2.5‑turbo‑1080p), and rolled out 7 new Image Arena categories highlighted in Guanglei Song’s video.

- Users mourned the removal of Video Arena from Discord in favor of web‑only access (“Everything, but video in direct chat”), and requested a global image gallery akin to ChatGPT’s history, currently approximated via modality filters on arena.ai.

-

Doc-to-LoRA and GAIA Push Task-Specific Evaluation: On OpenRouter, members shared Sakana AI’s Doc‑to‑LoRA, which turns arbitrary documents into LoRA finetunes for tighter domain conditioning, while Hugging Face’s agents‑course channel saw users hunt for a strong online LLM for the GAIA benchmark to beat current OpenRouter choices suffering from RPM caps and hallucinations.

- Practitioners framed Doc‑to‑LoRA as “chat‑with‑PDF but for weights”—a way to cheaply get per‑doc behaviors without full model retrains—while GAIA conversations reinforced that benchmark choice + rate limits now matter as much as raw model quality for production‑style evals.

-

World-Model Survey Spurs AGI-Flavored Paper Clinic: The MLOps @Chipro community announced a two‑part “paper clinic” around “Understanding World or Predicting Future? A Comprehensive Survey of World Models” (arXiv:2411.14499), aiming to map architectures like JEPA / V‑JEPA, Dreamer, Genie, Sora, and World Labs.

- Session 1 (Feb 28, 10:00–11:30 AM EST, Luma link) focuses on foundations and the “Mirror vs. Map” (generation vs. representation) debate, while Session 2 (Mar 7, 10:00–11:30 AM EST, Luma) covers the competitive landscape (Sora vs. Cosmos vs. V‑JEPA) and what that implies for spatial intelligence, causal modeling, and social world models.

-

Benchmarking Methodology Fights: CoT vs Templates: In Eleuther’s general channel, researchers argued over whether multi‑shot Chain‑of‑Thought (CoT) should be treated as a realistic user pattern or a biased template, asking why CoT exemplars are accepted in benchmarks while explicit “you are being tested” templates are frowned upon.

- Participants noted that user ambiguity is inherent in real usage and that CoT itself is a form of templating, suggesting its widespread acceptance is mostly “historical reasons and inertia”—which has direct implications for how new adapter architectures (like CoDA) and alignment methods should be evaluated.

-

ARACHNID RL Dataset and Communicative IR for Interp: A Hugging Face contributor released the ARACHNID RL Dataset with 2,831 Atari‑style space‑shooter gameplay samples for imitation‑learning research, published on Hugging Face Datasets, while Eleuther’s interpretability channel discussed a bilingual “communicative IR” system (EN/JP) tracking ACT + PAYLOAD + STANCE.

- The IR builder asked for best practices on probing whether dialogue‑act and stance variables are linearly decodable from hidden states, and was advised to sweep layer‑wise probes over the residual stream—exactly the sort of workflow that tools like NNsight 0.6 are now designed to automate.

5. Platform Strategy, Governance Flashpoints, and New OS/Agent Integrations

-

OpenAI Lands Mega-Backers While Users Miss GPT 5.1: OpenAI announced new strategic investments from SoftBank, NVIDIA, and Amazon to support scaling infrastructure, as detailed in their blog post “Scaling AI for everyone”, even as power users on Discord mourned the retirement of GPT‑5.1 in favor of the more cautious GPT‑5.2.

- Engineers complained that GPT‑5.2’s tone feels condescending and hyper‑safe compared to 5.1 “being a delight to work with”, while others reported oddities like random Chinese tokens in generations and poor image recognition—explained away as mixed‑language training noise rather than anything “freaky.”

-

Anthropic vs Pentagon Sparks Supply-Chain Risk Drama: Across OpenRouter, Yannick Kilcher, and LMArena, users dissected Anthropic’s “Statement on the Department of War”, noting that the Pentagon not only pulled back from a $200M contract but also floated labeling Anthropic a supply‑chain risk, pressuring defense contractors to audit and possibly drop Claude.

- Engineers mocked the situation (“Who the fuck cares about losing Boeing as an LLM client lmao”), worried about coerced access to models and code for surveillance and kill chains, and joked about “standing up for corporate values by boycotting Claude” while pointing out that vendors like Palantir will happily fill the gap.

-

Google’s Intelligent OS and Microsoft’s Taskful Copilot: News‑watchers in Yannick Kilcher’s ML‑news channel posted Google’s “intelligent OS” announcement, which promises system‑level support for AI agents on Android‑class devices, alongside Google Labs’ new Opal Agent.

- At the same time, Microsoft detailed how Copilot now turns answers into actions, effectively making Copilot a task runner rather than just a chat assistant—foreshadowing a near‑term world where OSs natively orchestrate multi‑step agent workflows instead of leaving it all to browser UIs.

-

Perplexity Credit Economics and BK’s ‘Patty’ Surveillance Bot: On Perplexity’s server, users complained that Perplexity Computer can burn a month of credits in ~3 hours in an AI trading app and that the $200/month Max plan still feels tight, especially with Pro deep searches capped at 20/month, while debating whether an enterprise tier with higher caps and stronger compliance would fix things.

- A separate thread dissected Burger King’s BK Assistant pilot—a headset‑based voice bot called “Patty” powered by OpenAI that answers recipe questions and scores “friendliness” by counting phrases like “welcome to Burger King”, “please”, and “thank you” across **500 US locations—raising obvious questions about workplace surveillance wrapped in customer‑service metrics.

-

Agent Swarms, Connection Limits, and Tooling Pain Points: Moonshot’s Kimi K2.5 Agent Swarm drew interest as a web‑only feature (with only sub‑agents exposed via the Kimi CLI), while OpenClaw power users showed off an agent‑personas plugin that dynamically swaps personas mid‑thread and an OpenClaw WearOS app alongside full real‑estate automations and RAG benefits bots.

- Meanwhile, platform quirks—OpenRouter 500s under 10+ concurrent requests, Hugging Face Spaces 500s and Gradio 67 errors, Kimi‑code connection flakiness, wandb/protobuf pin hell in Colab, and Discord‑wide support scams—highlighted that agentic workflows are increasingly bottlenecked not by model intelligence but by ecosystem reliability and rate‑limit ergonomics.

Discord: High level Discord summaries

OpenClaw Discord

- Next.js 16 Fuels Vercel Mania: Members are obsessed with Next.js 16 and its Vercel integration as it makes deployments easier.

- Members reported issues with OpenClaw slowness, averaging 5 minute response times despite optimizations.

- Codex Codifies Code, Gemini Gobbles Tokens: Members debated model performance, with Codex favored for coding and Gemini for token efficiency.

- One member succinctly stated of Gemini, Yeah, but it sucks ass.

- Kimi-Code: Cost-Effective Coding: Members discussed the value of a direct subscription to Moonshot AI for Kimi Code, highlighting it as cost-effective for heavy coding use with generous daily/weekly limits at $39/month unlocking 5,000 tools.

- One user noted that the Allegretto plan has very generous daily and weekly limits, while another warned it seems that moonshot ai api is a bit slow. 20+ sec responses are pretty normal.

- OpenClaw Powers Property Profits: A member is using OpenClaw for real estate management, including managing properties/renters, analyzing bank statements for rent payments, and automating ad creation on immoscout24.de.

- Future plans involve connecting to banks directly, automating renter communication via WhatsApp, and integrating a human API for booking real estate agents.

- Agent Personas Plugin Goes ShizoMaxxing: A member built a plugin that dynamically switches agent personas within a single chat session on the same topic, accessing its own files.

- They described themselves as shizomaxxing ever since, suggesting a significant productivity or creative boost from the tool.

BASI Jailbreaking Discord

- Data Access Doesn’t Guarantee AI Dominance: Despite access to vast datasets, it’s argued that China’s AI may not automatically outperform Western AI due to the inherent difficulties in controlling complex LLMs, as highlighted by this link.

- Speculation arose that China’s push for military parity could signal an all out approach to AI development.

- Tempmail Tangles With Discord: A new user, tempmail0723, humorously admitted to struggling with Discord’s interface, citing disorganization as a primary hurdle.

- This followed playful teasing for using a node essentially a bundle of w.

- Janus Bot Spills the Beans on OS: In response to a user request, the Janus bot revealed it operates on Linux 6.17.0-1007-aws with Python version 3.11.14.

- A user followed up by jokingly inquiring about the cheapest 16gb ddr4 ram, to which it found a Silicon Power product that is now 404.

- Claude 4.6 Touts Reasoning Capabilities: Members debated the best model for ‘red team’ assistance, with Claude 4.6 being praised for its reasoning capabilities.

- Though others suggested Deepseek substrates for raw data dumps, one user joked about not getting caught in the hallway, alluding to the risks of jailbreaking.

- Gemini Pro 3 Jailbreak Quest Initiated: Users are actively seeking a working prompt to jailbreak Gemini Pro 3, with potential applications on Perplexity.

- Some are even willing to pay for a working prompt to assist with cheating in games like CS2 and Rust, with one user asking does anyone have a jb for gemini 3.1? none of the jb’s i have work atm.

LMArena Discord

- Nano Banana Blues: Pro Version Preferred!: Users express a preference for Nano Banana Pro over Nano Banana 2, citing smoother character swaps as the main advantage.

- Some users found Nano Banana 2 to be unsatisfactory, particularly for generating images with consistent character appearances, with one user stating, “So nano banana 2 just a trash”.

- Claude PDF Predicament: Context Crunch!: Users reported experiencing errors when uploading multiple PDFs to Claude, suggesting a potential limit on the number or size of files.

- It was suggested that PDFs consume a significant amount of context due to vectorization.

- Video Arena Voyage: Site Becomes Solo Star!: The Video Arena has been removed from the Discord server but remains accessible on the website arena.ai/video.

- Users voiced their disappointment, with one exclaiming, “Everything, but video in direct chat”.

- Image Arena’s Gallery Generation Gap!: Users are requesting a gallery feature on arena.ai to view all generated images in one place, similar to ChatGPT.

- Currently, users can filter by modality in the search area as a workaround.

- Kling V3 Pro Gains Video Arena Fame: The Video Arena leaderboard updated to include Kling-V3-Pro, tying for #8 with a score of 1337.

- This reflects a +52pt improvement over Kling 2.6 Pro and +48pt over Kling-2.5-turbo-1080p.

Unsloth AI (Daniel Han) Discord

- Bun 1.3.10 breaks Builds!: A user found that Bun 1.3.10 caused build failures, referencing a specific commit related to

bun:sqlite.- The user attempted a workaround using a namespace import but encountered TypeScript errors indicating a missing ‘Sqlite’ namespace.

- Qwen 3.5 35B Blazes Fast!: Members discuss the blazing fast performance of Qwen3.5 35B MOE model, with one user reporting 62 TPS on a 4070 Super with Q4KM quantization.

- Another user experienced approximately 25 TPS on a system with a 9070 XT (16GB VRAM) and shared their llama.cpp command for running the model.

- Colab’s RTX PRO 6000: Research Revolution!: Users noted that Google Colab now offers NVIDIA RTX PRO 6000 instances at $0.81 per hour.

- This new offering might solidify Google’s lead in AI research infrastructure, especially now that they’ve focused on the research.

- WandB Protobuf Woes!: A user experienced a W&B/Protobuf mismatch error in Colab and was advised to reinstall

wandband pinprotobufto version 4.25.3.- Despite following the reinstall instructions, dependency conflicts persisted, showing protobuf incompatibility with grpcio-status, ydf, google-api-core, grain, and opentelemetry-proto.

- Logit Fusion Craze!: A member shared a link to a Notion page on Logit Fusion and expressed excitement about seeing this training method in Unsloth.

- Another member shared a Bluesky post with the same suggestion to implement it in Unsloth.

Cursor Community Discord

- Cursor’s New User Wave: New members are seeking guidance on using Cursor for mobile and web application development, transitioning from platforms like Base 44 due to its limitations, and expressing a need for frameworks that allow real-time work preview.

- They are asking where the documentation is for building mobile apps or web apps.

- Experts Slam Vibe Coding for Production Apps: Experts caution against using “vibe coding” for client applications, suggesting it’s more suitable for planning and learning, advocating for a solid development foundation and using AI to audit code for errors.

- Some argue that Cursor serves as a developer assistant and not a complete solution like Base 44, requiring users to have a solid understanding of code and industry terminology.

- Gemini 3.1 Pro Flounders with Tool Calling: Users report that while Gemini 3.1 Pro is highly intelligent, it struggles with tool calling compared to GPT 4.6 Opus, with some noting that Claude models feel too “book perfect” and lack freestyle problem-solving abilities.

- This difference in capability may affect workflows that rely on tool integrations and complex, multi-step operations.

- File Change Chaos in Parallel LLM Workflows: Users discuss issues with managing file changes across multiple LLM conversations, where edits in one conversation are disregarded in another, suggesting using worktrees or OpenClaw as potential solutions.

- It was suggested to tell SPOCs to run efficicy.

Perplexity AI Discord

- GPT-OSS 20B Runs Blazingly Fast on Macbook: Members discussed local model execution versus API usage, reporting 100 tokens per second on a GPT-OSS 20B model using a Macbook, which completes a million tokens in under three hours.

- While some questioned the cost-effectiveness, others pointed to electricity bills and API costs as factors and some use it as a backup due to API costs.

- Perplexity Computer’s Credit Crunch: An AI-powered trading app using Perplexity Computer was highlighted for its visual appeal but high credit consumption, burning through a month’s worth of credits in just 3 hours.

- The value proposition of the $200/month Max subscription was debated, with suggestions for an enterprise version with higher credit limits, potentially addressing regulatory security compliance needs.

- Burger King deploys ‘Patty’ to monitor employee friendliness: Burger King is piloting “BK Assistant”, featuring a voice chatbot named “Patty” (powered by OpenAI), in employee headsets across 500 U.S. locations.

- Patty answers recipe questions, evaluates “friendliness” by monitoring interactions, and generates team friendliness scores based on staff saying “welcome to Burger King”, “please”, and “thank you”.

- Perplexity Pro Users Bump into Limits: Users are encountering limitations with the Pro plan, specifically with deep searches capped at 20 per month, prompting frustration.

- The limited deep searches are insufficient for some, leading to discontent and jokes about leaving the platform while being upsold on Max.

- Gemini Benchmarks Draw Fire, AGAIN!: Members voiced concerns about Gemini’s benchmarks and overall functionality, pointing out that it prioritizes acting human over providing accurate answers.

- Despite general frustrations, its speed was acknowledged as valuable for specific use cases.

OpenRouter Discord

- Vision Models Ace PDF Analysis: Users prefer vision models like Gemini 3 and Claude Sonnet for PDF analysis because they handle document extraction and image transformation internally, noting that Mistral lacks file input capabilities in OpenRouter, but converting PDFs to JPEGs solves the issue.

- A user questioned whether OpenRouter accurately reflects model capabilities, noting discrepancies regarding document input support by referencing OpenRouter’s Get Models API.

- OpenRouter Plagued by Error 500: A user reported frequent Error 500 issues with OpenRouter, particularly under high concurrent request loads (10+), even with exponential backoff, using models like Xiaomi Mimo v2 Flash and Gemini 3 Flash.

- Users are warned about support scammers targeting OpenRouter users on Discord, particularly those with the “new here” tag, and are advised to avoid clicking on suspicious links.

- Anthropic Rejects Pentagon AI Terms: Anthropic rejected the Pentagon’s AI terms, leading to the Department of War considering blacklisting them as a supply chain risk and asking defense contractors to assess their exposure to Anthropic.

- The community joked about the implications, with some quipping “Who the fuck cares about losing Boeing as an LLM client lmao”.

- GPT Addicts turn to Claude: End-users previously addicted to GPT are now trying Claude and recognizing its differences and capabilities as shown in this YouTube video.

- Some attribute this shift to the chatgpt interface removing old messages and using strict system prompts when web search is enabled, leading to a less consistent experience.

- LLM Connection Strings Proposed: Members discussed the LLM Connection Strings proposal for a CLI-friendly way to pass arguments to scripts, using a single argument like

my-agent --model "llm://...".- The community expressed strong support for this approach, highlighting the benefits of standardization and compatibility across the ecosystem, avoiding the need for quirky, ad-hoc configurations.

OpenAI Discord

- OpenAI’s AI Expansion Gains Backing: OpenAI announced new investments from SoftBank, NVIDIA, and Amazon to support their goal of scaling AI for everyone, detailed in their blog post.

- The investments aim to bolster the infrastructure required for the widespread adoption of AI technologies.

- Relaxed Filters Elicit Mixed Reactions: Members noted that with the update the filter became more permissive, although doesn’t work with every IP, while another member celebrated I love relaxed guidelines.

- The updated filters may allow for greater flexibility but could potentially lead to varying results depending on the specific use case.

- Nano Banana 2 Hits Pro-Level: Members are praising Nano Banana 2 for its pro-level, rapid web search capabilities to find accurate info before generating.

- Some speculate that its performance may be due to model distillation techniques.

- GPT Models Drop Random Chinese: Members noted that ChatGPT’s image recognition performance is poor and that LLMs sometimes drop in a random Chinese character.

- Theorized as stemming from mixed-language training data, this results in occasional token prediction errors; one member stated that There’s nothing freaky about it.

- GPT 5.1’s Writing Style Missed: Users are lamenting the disappearance of GPT 5.1, preferring its writing tone over the condescending style of GPT 5.2.

- Users found GPT 5.2 overly cautious, appreciating GPT 5.1’s more engaging and less serious approach.

HuggingFace Discord

- Grokking Introspection runs at Ludicrous Speed: A member achieved a 5.7x speed increase in grokking addition mod 113 using this Hugging Face Space.

- This led to a discussion about the timeline of promising new architectures.

- Hugging Face Spaces Beset by API Issues: Users reported 500 Internal Errors on Hugging Face Spaces, alongside Gradio 67 Errors and Repository Not Found errors when accessing

https://huggingface.co/api/spaces/chinhon/SadTalker.- The platform displayed a message indicating they were actively working to resolve the issues.

- Voice Model Adapts Dialogue Via Conversation Context: A user released a 520M voice model, detailed in this writeup, that changes emotion dynamically based on conversation history, running on RTX and Apple Silicon.

- The model leverages conversation context to modify emotion, adapting dynamically.

- Auto TRL pipeline gets hooked up to Tensorboard: A user shared a link to a new tool for auto TRL -> upload -> tensorboard integration.

- The shared their delight with the training metrics tab.

- Attention Mechanism Sheds Pounds of VRAM: A new open source attention mechanism dramatically reduces VRAM usage in the KV-cache, and includes 2 custom written fused Triton kernels for performance optimization, available on PyPi.

- The member is seeking full time work and arXiv endorsement to publish pre-prints, pointing to a paper and a 7b mistral model on Hugging Face.

Moonshot AI (Kimi K-2) Discord

- Nano Banana 2 Delayed: A member mentioned Nano Banana 2 without any further details, implying a possible delay.

- No additional information regarding the status or features of Nano Banana 2 was provided.

- Users Flee KYC Requirements: A member expressed a strong preference for AI providers without KYC (Know Your Customer) requirements, naming Qwen, Together AI, Fireworks, and Openrouter as better options.

- They specifically commended Alibaba for their coding plan, performance, and generous usage limits, all without requiring KYC for users in Finland.

- Kimi Agent Swarm Stays Exclusive: A member inquired if the Kimi K2.5 Agent Swarm functionality would be integrated into the Kimi CLI.

- A clarifying response indicated that the full Kimi Agent Swarm is only accessible via kimi.com, while the Kimi-CLI supports the creation of individual subagents.

- Kimi Powers Vision for the Blind: A community member is developing a vision project that leverages Kimi to assist blind users by describing images, assessing their content, and interpreting associated emotions.

- The developer has offered the research to Moonshot AI, potentially leading to a vision companion product, with the alternative option of open-sourcing the project.

- Kimi-Code API Plagues Users with Connection Issues: Multiple members reported persistent API connection problems when using kimi-code, encountering connection errors and unpredictable agent behavior.

- One user stated they received 403 errors after prepaying for a year in advance when new rules were enforced.

GPU MODE Discord

- Voice Model gains Emotional Context: A member showcased a 520M voice model running on RTX and Apple Silicon devices, which produces different emotions from the same text, using dialogue history context, viewable at this demo.

- This enables the model to generate more contextually relevant and emotionally nuanced responses based on the conversation history.

- CUDA Wizards Wanted: Poolside AI is recruiting CUDA experts for their pre-training team, dedicated to enhancing projects by optimizing large-scale pre-training runs on advanced hardware, see the job posting.

- The team prides itself on being cracked, humble, and hard working, welcoming inquiries via DMs.

- PTX Consistency Confusions: Users debated the consistency model of PTX, specifically if memory access ordering is guaranteed for accesses preceding the release on the producer thread.

- The discussion stemmed from conflicting interpretations of documentation and observed behaviors, with the consensus that this area requires additional study especially in relation to distributed systems.